Notes on building a k8s cluster on VMs

Installing the private image registry Harbor: https://blog.csdn.net/a595077052/article/details/119893070

Configuring the k8s dashboard: https://blog.csdn.net/a595077052/article/details/119894730

Building images from IDEA with the Maven plugin: https://blog.csdn.net/a595077052/article/details/119895152

Installing Kuboard v3, a multi-cluster management tool for Kubernetes: https://blog.csdn.net/a595077052/article/details/119895591

Deploying microservices on k8s: https://blog.csdn.net/a595077052/article/details/119896910

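I. Install and configure the VMs

1. Prerequisites

Three VMs on a single PC stand in for physical machines. The host has a 4-core/8-thread CPU and 8 GB of RAM (barely enough; 16 GB is recommended). Each VM gets 2 processors with 2 cores each, a 50 GB disk and 2 GB of RAM (with only 8 GB on the host, I had to cut the master down to 1.4 GB). An earlier attempt on a laptop (2 cores/4 threads) went badly: the VMs were often unreachable after boot and the k8s deployment reported many errors.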
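Since kubeadm init (used later in these notes) needs more than one CPU core, it can save time to check the VM's CPU count before going any further. The helper below is a minimal sketch; the function name has_enough_cpus is made up for illustration, and the threshold of 2 is simply the figure these notes rely on.

```shell
#!/bin/bash
# Succeed when the machine has at least the required number of logical CPUs.
# $1 = CPU count to check (defaults to what nproc reports)
# $2 = minimum required (defaults to 2, the figure used in these notes)
# has_enough_cpus is a hypothetical helper name, not part of any tool.
has_enough_cpus() {
    local count="${1:-$(nproc)}" min="${2:-2}"
    [ "$count" -ge "$min" ]
}

# Usage on a VM:
#   has_enough_cpus || echo "fewer than 2 CPUs: kubeadm init is likely to fail" >&2
```

Passing the count as an argument keeps the check easy to try out without changing the VM settings.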

=======================================================================

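Everything below, up to the point where the k8s master node is initialized, can be done on a single VM first. Then clone that VM twice, change the MAC address in each clone's CentOS 7 64 位.vmx file, boot the clones, and change each one's hostname and (static) IP.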

=======================================================================

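2. Install VMware and CentOS

Installation steps omitted. OS version: CentOS 7.5 (image CentOS-7-x86_64-DVD-1804); VMware-workstation-15.5.0.

3. Grant sudo to a regular user

As root, give the regular user (k8s) sudo rights: run vi /etc/sudoers and add the line k8s ALL=(ALL) ALL, taking care not to add extra spaces or line breaks, then save with :wq!. (Most of the operations below were not actually run as the regular user; where permissions matter, working as root is simpler.) /etc/sudoers has no write permission even for root, so either force the save with :wq! or add write permission first with chmod u+w /etc/sudoers and remove it afterwards with chmod u-w /etc/sudoers. For passwordless sudo, use xxx ALL=(ALL) NOPASSWD: ALL instead.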
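A typo in /etc/sudoers can lock sudo out entirely, so one cautious alternative is to put the rule in its own file and let visudo check it before it goes live. This is only a sketch: sudoers_rule is a hypothetical helper, the drop-in file name k8s-user is an assumption, and it relies on /etc/sudoers including the /etc/sudoers.d directory, which I believe is the CentOS 7 default.

```shell
#!/bin/bash
# Print a sudoers rule for one user.
# $1 = user name
# $2 = "nopasswd" to skip the password prompt (optional)
# sudoers_rule is a hypothetical helper name used for illustration.
sudoers_rule() {
    local user="$1" mode="${2:-}"
    if [ "$mode" = "nopasswd" ]; then
        printf '%s ALL=(ALL) NOPASSWD: ALL\n' "$user"
    else
        printf '%s ALL=(ALL) ALL\n' "$user"
    fi
}

# Usage as root (the file name k8s-user is an assumption):
#   sudoers_rule k8s > /tmp/k8s-user
#   visudo -cf /tmp/k8s-user && install -m 0440 /tmp/k8s-user /etc/sudoers.d/k8s-user
```

visudo -cf only checks the syntax of the given file, so a broken rule never reaches the live configuration.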

4. Reboot

shutdown -r now

5.设置网络

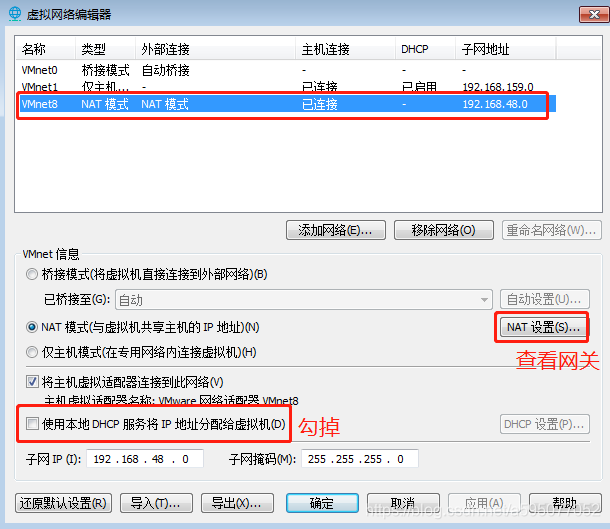

1)设置VM网络,编辑-》虚拟网络编辑器,并记下网关

2)k8s使用sudo修改只读文件,sudo vi /etc/sysconfig/network添加网关,添加的是在1)步查看的网关IP

GATEWAY=192.168.48.2

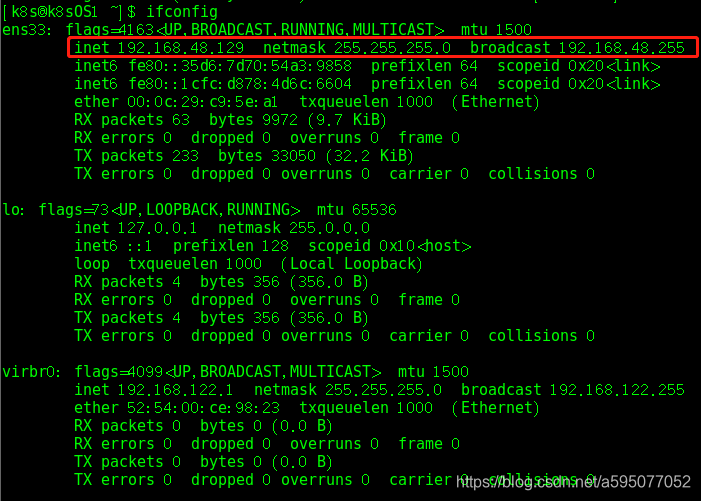

3)配置本机的网络(是虚拟网卡名ens33):sudo vi /etc/sysconfig/network-scripts/ifcfg-ens33

4)重启网络服务service network restart 或/etc/init.d/network restart

Ifconfig 或者 ip addr查看

5)ping www.baidu.com 或者宿主机是否畅通。宿主机也可以ping虚拟机的ip,查看是否畅通

6)设置主机名

hostname查看当前主机名

可以使用如下命令来修改主机名hostnamectl set-hostname k8s-master

也可以修改其配置文件/etc/hostname里边的内容

三台VM机的网络配置如下(以下是本本上的IP,台式机上的子网为192.168.126.*)

| Node | IP | Hostname |
| --- | --- | --- |
| master | 192.168.48.128 | k8s-master |
| node01 | 192.168.48.129 | k8s-node01 |
| node02 | 192.168.48.130 | k8s-node02 |

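7) Add hostname mappings to the hosts file on every VM and on the host machine, so the nodes can be reached by name

On each VM, add these mappings to /etc/hosts:

192.168.48.128 k8s-master

192.168.48.129 k8s-node01

192.168.48.130 k8s-node02

On the host machine, add the same entries to C:\Windows\System32\drivers\etc\hosts.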
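The interface file from step 3) and the hosts entries from step 7) have to be repeated on all three VMs, so they can be sketched as two small helpers. Both function names are made up for illustration. The NETMASK and DNS1 values are assumptions (a /24 subnet, with the VMware NAT gateway also answering DNS), so compare the output with your own Virtual Network Editor settings before using it.

```shell
#!/bin/bash
# Print a static-IP ifcfg file for one interface.
# $1 = device (e.g. ens33), $2 = IP address, $3 = gateway
# NETMASK and DNS1 are assumptions: a /24 subnet, and the gateway
# doubling as DNS server. render_ifcfg is a hypothetical helper name.
render_ifcfg() {
    local dev="$1" ip="$2" gw="$3"
    cat <<EOF
TYPE=Ethernet
BOOTPROTO=static
NAME=$dev
DEVICE=$dev
ONBOOT=yes
IPADDR=$ip
NETMASK=255.255.255.0
GATEWAY=$gw
DNS1=$gw
EOF
}

# Append "IP NAME" to a hosts file unless that name is already mapped.
# $1 = hosts file, $2 = IP address, $3 = host name
# ensure_host_entry is a hypothetical helper name.
ensure_host_entry() {
    local file="$1" ip="$2" name="$3"
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Usage as root on the master:
#   render_ifcfg ens33 192.168.48.128 192.168.48.2 > /etc/sysconfig/network-scripts/ifcfg-ens33
#   ensure_host_entry /etc/hosts 192.168.48.128 k8s-master
```

Because ensure_host_entry skips names that are already present, running it again after cloning a VM does not create duplicate lines.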

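Install the dependency packages. Note: every machine needs them. The command below must be entered as a single line (if it is split across lines, the shell treats each line as a separate command and reports errors).

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git iproute lrzsz bash-completion tree bridge-utils unzip bind-utils gcc

If the editor reports that a .swp file already exists when you open a file, delete that .swp file with rm -rf.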

6. Install and start iptables

# Stop and disable firewalld first

systemctl stop firewalld && systemctl disable firewalld

7. Flush iptables

# Install iptables-services, start it, enable it at boot, flush all rules, and save the now-empty rule set as the default

yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

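8. Disable swap and SELinux

# Turn off the swap partition (virtual memory) now and keep it off after reboot

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

# Put SELinux in permissive mode now and disable it permanently in the config file

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config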
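The sed expression for /etc/fstab matches every line containing " swap ", including lines that are already commented, so running it a second time adds another #. A slightly more careful variant can be sketched as two filters that read from stdin and write to stdout, which also makes them easy to try on a copy before touching the real files. The function names are hypothetical.

```shell
#!/bin/bash
# Comment out active swap entries in fstab-style text read from stdin.
# Lines that are already commented are left alone, so re-running is harmless.
# comment_swap_entries is a hypothetical helper name.
comment_swap_entries() {
    sed '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/'
}

# Set SELINUX=disabled in selinux-config-style text read from stdin.
# Only the SELINUX= line is changed; SELINUXTYPE= and comments are kept.
# disable_selinux_config is a hypothetical helper name.
disable_selinux_config() {
    sed 's/^SELINUX=.*/SELINUX=disabled/'
}

# Usage as root: write to a temporary file, review it, then copy it back.
#   comment_swap_entries < /etc/fstab > /tmp/fstab.new
#   disable_selinux_config < /etc/selinux/config > /tmp/selinux-config.new
```

Writing to a temporary file first leaves a chance to diff the result against the original, which is worth the extra step for a file like /etc/fstab.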

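9. Upgrade the Linux kernel to 4.4

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

# Install the kernel

yum --enablerepo=elrepo-kernel install -y kernel-lt

# Boot from the new kernel by default

grub2-set-default 'CentOS Linux (4.4.189-1.el7.elrepo.x86_64) 7 (Core)'

The installed version may differ (for example grub2-set-default 'CentOS Linux (5.4.114-1.el7.elrepo.x86_64) 7 (Core)'). To find the right entry title:

Step 1: cat /boot/grub2/grub.cfg | grep menuentry lists every kernel entry on the system.

Step 2: grub2-editenv list shows the current default entry; uname -r shows the kernel that is running now.

Step 3: run grub2-set-default 'entry title listed in step 1'.

Step 4: reboot for the change to take effect.

# Generate the grub2 configuration file

grub2-mkconfig -o /boot/grub2/grub.cfg

# Note: the new kernel only takes effect after the server is rebooted.

reboot

# Check the running kernel

uname -r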
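Copying the entry title by hand is easy to get wrong, because grub2-set-default needs the exact string. The lookup can be sketched with awk, assuming the usual CentOS 7 layout in which each entry starts with menuentry 'title' at the beginning of a line. Both function names are hypothetical.

```shell
#!/bin/bash
# Print the title of every top-level menuentry in a grub.cfg, one per line.
# $1 = path to grub.cfg (e.g. /boot/grub2/grub.cfg)
# list_grub_entries is a hypothetical helper name.
list_grub_entries() {
    awk -F"'" '/^menuentry /{print $2}' "$1"
}

# Print the first entry whose title contains the given text.
# $1 = path to grub.cfg, $2 = text to look for (e.g. elrepo)
# find_grub_entry is a hypothetical helper name.
find_grub_entry() {
    list_grub_entries "$1" | grep -F -m1 -- "$2"
}

# Usage as root:
#   grub2-set-default "$(find_grub_entry /boot/grub2/grub.cfg elrepo)"
```

Matching on elrepo picks the first kernel installed from the ELRepo repository, whatever its exact version turned out to be.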

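Errors encountered and how they were resolved

Running rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm ended with "已杀死" (Killed), and running it again on its own failed with "错误:无法从 /var/lib/rpm 打开软件包数据库" (cannot open the package database in /var/lib/rpm). The fix was to delete the rpm database files and rebuild the database by running these three commands in order:

cd /var/lib/rpm

rm -rf __db.*

rpm --rebuilddb

After that, the original command reported that the package was already installed. On node01 the install first failed for lack of memory, and the retry failed with permission errors; it only worked after switching to root. The error was: can't create 事务 lock on /var/lib/rpm/.rpm.lock (权限不够), that is, permission denied on the transaction lock.

The output was: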

[k8s@k8s-node01 ~]$ rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm --force --nodeps

获取http://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

警告:/var/tmp/rpm-tmp.PXtAt7: 头V4 DSA/SHA1 Signature, 密钥 ID baadae52: NOKEY

错误:can't create 事务 lock on /var/lib/rpm/.rpm.lock (权限不够)

[k8s@k8s-node01 ~]$ yum --enablerepo=elrepo-kernel install -y kernel-lt

已加载插件:fastestmirror, langpacks

您需要 root 权限执行此命令。

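yum stores the rpm packages it downloads under /var/cache/yum/. To clear them, delete the files in that directory with rm, then run the rpm command again.

If the install fails because memory cannot be allocated, or the kernel was only partially installed, remove the broken kernel with rpm -e and try the install again. (yum remove kernel-3.10.0-862.el7.x86_64, which is meant to remove the old kernel, may fail; use rpm -e in that case.)

10. Tune kernel parameters

For k8s: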

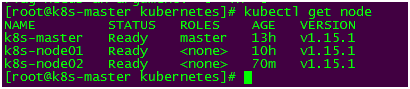

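cat > kubernetes.conf <<EOF
net.bridge.bridge-nf-call-iptables=1
net.bridge.bridge-nf-call-ip6tables=1
net.ipv4.ip_forward=1
net.ipv4.tcp_tw_recycle=0
vm.swappiness=0
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_instances=8192
fs.inotify.max_user_watches=1048576
fs.file-max=52706963
fs.nr_open=52706963
net.ipv6.conf.all.disable_ipv6=1
net.netfilter.nf_conntrack_max=2310720
EOF

# Copy the tuning file to /etc/sysctl.d/ so that it is applied at every boot

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

# Apply it immediately

sysctl -p /etc/sysctl.d/kubernetes.conf

Note: the net.bridge.* keys only exist once the br_netfilter module is loaded (modprobe br_netfilter, see step 15), and net.ipv4.tcp_tw_recycle was removed in kernel 4.12, so on a newer kernel such as 5.4.114 sysctl rejects that line and it can be dropped.

11. Set the system time zone

Skip this step if the time zone is already set.

# Set the system time zone to Asia/Shanghai

timedatectl set-timezone Asia/Shanghai

# Keep the hardware clock in UTC

timedatectl set-local-rtc 0

# Restart the services that depend on the system time

systemctl restart rsyslog

systemctl restart crond

12. Stop services the system does not need

systemctl stop postfix && systemctl disable postfix

13. Configure how logs are stored

# 1) Create the directory that holds the logs

mkdir /var/log/journal

# 2) Create the directory for the configuration file

mkdir /etc/systemd/journald.conf.d

# 3) Create the configuration file

cat > /etc/systemd/journald.conf.d/99-prophet.conf <<EOF
[Journal]
Storage=persistent
Compress=yes
SyncIntervalSec=5m
RateLimitInterval=30s
RateLimitBurst=1000
SystemMaxUse=10G
SystemMaxFileSize=200M
MaxRetentionSec=2week
ForwardToSyslog=no
EOF

# 4) Restart systemd-journald so that it picks up the configuration

systemctl restart systemd-journald

14. Raise the open-file limit (optional; can be skipped)

echo "* soft nofile 65536" >> /etc/security/limits.conf

echo "* hard nofile 65536" >> /etc/security/limits.conf

15. Prerequisites for running kube-proxy in ipvs mode

modprobe br_netfilter

# On newer kernels such as 5.4.114, nf_conntrack_ipv4 is no longer available; use nf_conntrack instead, both in the file and in the lsmod check

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
modprobe -- nf_conntrack_ipv4
EOF

# Load the modules, then use lsmod to confirm that they are loaded

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack_ipv4

II. Docker deployment

1. Install Docker

yum install -y yum-utils device-mapper-persistent-data lvm2

# Add the stable repository; its configuration is saved to /etc/yum.repos.d/docker-ce.repo

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

# Update the installed packages and install Docker CE (this takes a while)

yum update -y && yum install docker-ce

If yum fails with the error from the screenshot, run mv /var/lib/rpm/__db.00* /tmp/ && yum clean all and then repeat yum update -y && yum install docker-ce.

III. Install kubeadm

1. Add the Kubernetes yum repository

kubelet, kubeadm and related packages have to be installed, but the yum repository given on the official k8s site is packages.cloud.google.com, which cannot be reached from mainland China. The Aliyun mirror of that repository can be used instead.

# The target file name is an assumption; any .repo file under /etc/yum.repos.d/ works

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
       http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

2. Install kubeadm, kubelet and kubectl

yum install -y kubeadm-1.15.1 kubelet-1.15.1 kubectl-1.15.1

# Enable and start kubelet

systemctl enable kubelet && systemctl start kubelet

IV. Install the k8s cluster

1. Upload the base image tarball with rz

The file is kubeadm-basic.images.tar.gz (it is rather large, so it is not uploaded with this post for now).

2. Extract the tarball

tar -zxvf kubeadm-basic.images.tar.gz

kubeadm-basic.images/
kubeadm-basic.images/coredns.tar
kubeadm-basic.images/etcd.tar
kubeadm-basic.images/pause.tar
kubeadm-basic.images/apiserver.tar
kubeadm-basic.images/proxy.tar
kubeadm-basic.images/kubec-con-man.tar
kubeadm-basic.images/scheduler.tar

3. Import the extracted image tar files into the local image store

Import the files with a script:

1) touch image-load.sh

2) chmod 755 image-load.sh

3) vi image-load.sh

4) Script contents (image-load.sh can be created in any directory):

#!/bin/bash
# Note: adjust the path to wherever the images were extracted
ls /root/kubeadm-basic.images > /tmp/images-list.txt
cd /root/kubeadm-basic.images
for i in $(cat /tmp/images-list.txt)
do
    docker load -i "$i"
done
rm -rf /tmp/images-list.txt

5) Run the script to import the images

./image-load.sh

6) Copy the script and the images to the other nodes

# Copy to node01

scp -r image-load.sh kubeadm-basic.images root@k8s-node01:/root/

# Copy to node02

scp -r image-load.sh kubeadm-basic.images root@k8s-node02:/root/

# Run the script on each of the other nodes to import the images there

Use docker images to list all images, and kubectl get node to check whether k8s is usable.

=======================================================================

Everything above can be done on a single VM first. Then clone that VM twice, change the MAC address in each clone's CentOS 7 64 位.vmx file, boot the clones, and change each one's hostname and (static) IP.

=======================================================================

4. Deploy k8s

# Initialize the master node --- run on the master node only

# 1. Generate the yaml configuration file

kubeadm config print init-defaults > kubeadm-config.yaml

# 2. Edit the yaml file: change the fields below and append the kube-proxy section

localAPIEndpoint:
  advertiseAddress: 192.168.48.128 # Note: change to this machine's IP
kubernetesVersion: v1.15.1 # Note: must match the installed kubectl version
networking:
  # Pod subnet for flannel; it must match the network in the flannel manifest, so keep this value
  podSubnet: "10.244.0.0/16"
  serviceSubnet: "10.96.0.0/12"
# Use ipvs for kube-proxy
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
featureGates:
  SupportIPVSProxyMode: true
mode: ipvs

# 3. Initialize the master node and start the deployment

kubeadm init --config=kubeadm-config.yaml --experimental-upload-certs | tee kubeadm-init.log

# Note: this command only succeeds on a machine with more than 1 CPU core (I used 2 processors with 2 cores each; weaker settings produced many errors). Oddly, no kubeadm-init.log file was left behind after my run. The screenshot of the init output in the original post is not from my own run.

# 4. After a successful init, run the commands that kubeadm prints at the end of its output

# Create the directory that holds the connection configuration, cache and credentials

mkdir -p $HOME/.kube

# Copy the cluster admin configuration file

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

# Give the current user ownership of the configuration file

chown $(id -u):$(id -g) $HOME/.kube/config

The original post shows the node query before and after these commands (both screenshots are borrowed, not from my own run). After them, kubectl get node returns the node, but its status is NotReady rather than Ready. The reason is that the cluster is set up to use ipvs plus flannel for networking, and the flannel network plugin has not been deployed yet.

5. flannel plugin

# Deploy the flannel network plugin --- run on the master node only

# 1. Download the flannel manifest

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

If the download fails or is very slow, create kube-flannel.yml by hand from the manifest reproduced at the end of this post. If the images under the quay.io paths in that manifest cannot be pulled either, replace every quay.io with quay-mirror.qiniu.com and try again.

# 2. Deploy flannel

kubectl create -f kube-flannel.yml

# Deploying straight from the network also works

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

If kubectl get pod -n kube-system shows problems after the deployment (the screenshots in the original post are not from my own cluster), typically the pods created by flannel are stuck in Pending or ImagePullBackOff. Check the pods and their logs for errors:

# List the pods in kube-system

kubectl get pod -n kube-system

# Apply the manifest again; after this the service started normally

kubectl apply -f kube-flannel.yml

Pay attention to network connectivity while deploying the flannel plugin.

# 3. Join the nodes

If a node already has a kubeadm whose version (check with kubeadm version) differs from the master's, uninstall it first:

yum remove -y kubelet kubeadm kubectl

Install the pinned version:

yum install -y kubelet-1.15.1 kubeadm-1.15.1 kubectl-1.15.1

On the master node, run kubeadm token create --print-join-command. It prints a join command containing the token (this output is from the desktop, where the subnet is 192.168.126.*):

kubeadm join 192.168.126.128:6443 --token ag2z1s.hw3foj8gd39pb96r --discovery-token-ca-cert-hash sha256:e4868a0800c958d4066f1f1558b758a4155bad4ba4fd9afae956047d3fc6c3d9

Run the printed command on every node that should join the cluster. If it reports errors, append --ignore-preflight-errors=all (or run kubeadm reset on the node first to start clean; note that I have not tried this).

On the master, check the joined nodes with kubectl get node.

That is the whole process of building the k8s cluster. Hardware was the main headache; a well-specced machine saves a lot of trouble. Next up is deploying microservices on k8s.

Contents of kube-flannel.yml (flannel v0.11.0), referenced in step 5: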
---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'

---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system

---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-amd64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-amd64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm64
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-arm64
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-arm64
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-arm
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - arm
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-arm
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-arm
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-ppc64le
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - ppc64le
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-ppc64le
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-ppc64le
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-s390x
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - s390x
      hostNetwork: true
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.11.0-s390x
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.11.0-s390x
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
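The flannel notes say to swap quay.io for quay-mirror.qiniu.com when the images cannot be pulled. Over a manifest like the one above, that substitution can be sketched as a small filter. The function name use_quay_mirror is made up for illustration, and whether the mirror host is still reachable is not something this sketch checks.

```shell
#!/bin/bash
# Rewrite flannel image references from quay.io to the mirror registry
# named in these notes. Reads a manifest on stdin and writes the result
# to stdout; lines without a quay.io image are passed through unchanged.
# use_quay_mirror is a hypothetical helper name.
use_quay_mirror() {
    sed 's#image: quay\.io/#image: quay-mirror.qiniu.com/#'
}

# Usage:
#   use_quay_mirror < kube-flannel.yml > kube-flannel-mirror.yml
#   kubectl apply -f kube-flannel-mirror.yml
```

Writing to a second file keeps the original manifest around in case the mirror does not have the images either.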