hadoop集群搭建

hadoop有三种部署方式

1、Local (Standalone) Mode(单机模式)

数据存储在本地

2、Pseudo-Distributed Mode(伪集群模式)

数据存储在HDFS

3、Fully-Distributed Mode(集群模式)

集群部署,数据存储在HDFS

一、安装JDK

因为hadoop是Java语言开发的,所以依赖jdk环境,需要先安装jdk

JDK安装教程

二、安装hadoop

2.1、下载hadoop

下载地址

2.2、解压缩

tar -zxvf hadoop-3.1.3.tar.gz -C /opt/module/

2.3、配置环境变量

vim /etc/profile.d/my_env.sh

#HADOOP_HOME

export HADOOP_HOME=/opt/module/hadoop-3.1.3

export PATH=$PATH:$HADOOP_HOME/bin

export PATH=$PATH:$HADOOP_HOME/sbin

2.4、刷新环境变量

source /etc/profile

2.5、验证是否安装成功

hadoop version

2.6、集群分发

2.6.1、编写集群分发脚本

vim xsync

#!/bin/bash

#1. 判断参数个数

if [ $# -lt 1 ]

then

echo Not Enough Arguement!

exit;

fi

#2. 遍历集群所有机器

for host in hadoop102 hadoop103 hadoop104

do

echo ==================== $host ====================

#3. 遍历所有目录,挨个发送

for file in $@

do

#4. 判断文件是否存在

if [ -e $file ]

then

#5. 获取父目录

pdir=$(cd -P $(dirname $file); pwd)

#6. 获取当前文件的名称

fname=$(basename $file)

ssh $host "mkdir -p $pdir"

rsync -av $pdir/$fname $host:$pdir

else

echo $file does not exists!

fi

done

done

2.6.2、修改权限

chmod 777 xsync

2.6.3、免密登录

这步可以省略

往其他服务器分发文件每次都需要输入服务器密码,设置免密登录则可以不用每次都输入密码

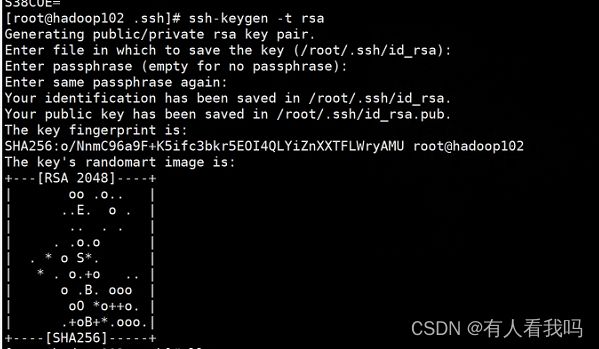

2.6.3.1、生产公钥

ssh-keygen -t rsa

2.6.3.2、将公钥分发到其他机器

ssh-copy-id hadoop103

2.6.3.3、效果

2.6.4、集群同步

将hadoop102中的jdk和hadoop同步到hadoop103和hadoop104,同步之后需要刷新profile

# 同步软件

xsync /opt/module/*

# 同步环境变量

xsync /etc/profile.d/my_env.sh

三、修改配置

3.1、修改hadoop核心配置

vim /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml

<property>

<name>fs.defaultFSname>

<value>hdfs://hadoop102:8020value>

property>

<property>

<name>hadoop.tmp.dirname>

<value>/opt/module/hadoop-3.1.3/datavalue>

property>

3.2、修改hdfs配置

vim /opt/module/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

<property>

<name>dfs.namenode.http-addressname>

<value>hadoop102:9870value>

property>

<property>

<name>dfs.namenode.secondary.http-addressname>

<value>hadoop104:9868value>

property>

3.3、修改yarn配置

vim /opt/module/hadoop-3.1.3/etc/hadoop/yarn-site.xml

<property>

<name>yarn.nodemanager.aux-servicesname>

<value>mapreduce_shufflevalue>

property>

<property>

<name>yarn.resourcemanager.hostnamename>

<value>hadoop103value>

property>

<property>

<name>yarn.nodemanager.env-whitelistname>

<value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CO

NF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAP

RED_HOMEvalue>

property>

3.4、修改MapReduce配置

vim /opt/module/hadoop-3.1.3/etc/hadoop/mapred-site.xml

<property>

<name>mapreduce.framework.namename>

<value>yarnvalue>

property>

3.5、将修改好的配置分发到其他服务

xsync /opt/module/hadoop-3.1.3/etc/hadoop/

四、启动集群

4.1、设置集群节点

vim /opt/module/hadoop-3.1.3/etc/hadoop/workers

hadoop102

hadoop103

hadoop104

xsync /opt/module/hadoop-3.1.3/etc/hadoop/workers

4.2、初始化 NameNode

hdfs namenode -format

4.3、修改启停脚本

在#!/usr/bin/env bash下面添加如下配置,如果非root用户则不需要添加

vim /opt/module/hadoop-3.1.3/sbin/start-dfs.sh

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

vim /opt/module/hadoop-3.1.3/sbin/stop-dfs.sh

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

vim /opt/module/hadoop-3.1.3/sbin/start-yarn.sh

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

vim /opt/module/hadoop-3.1.3/sbin/stop-yarn.sh

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root

4.4、启动集群

在hadoop102服务器上启动hdfs

/opt/module/hadoop-3.1.3/sbin/start-dfs.sh

在hadoop103服务器上启动yarn

/opt/module/hadoop-3.1.3/sbin/start-yarn.sh

4.5、访问yarn

hadoop103:8088

4.6、访问hdfs

hadoop102:9870