Flink Installation and Programming Practice (Flink 1.9.1)

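1. Installing Flink

Flink needs a Java runtime, so install Java (for example, Java 8) before installing Flink. Then download the binary package from the Flink website; the commands below use flink-1.9.1-bin-scala_2.11.tgz.

Unpack the downloaded archive with the following commands:

# Unpack the package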

hadoop@hadoop-master:~$ sudo tar xf flink-1.9.1-bin-scala_2.11.tgz -C /usr/local/

hadoop@hadoop-master:~$ cd /usr/local

hadoop@hadoop-master:/usr/local$ sudo mv flink-1.9.1 flink

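# Give the hadoop user ownership of the flink directory

hadoop@hadoop-master:/usr/local$ sudo chown -R hadoop:hadoop flink/

Flink works out of the box in local mode. To change the Java runtime it uses, set the env.java.home parameter in /usr/local/flink/conf/flink-conf.yaml to the absolute path of the local Java installation.

# Add the environment variables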

hadoop@hadoop-master:/usr/local$ vim ~/.bashrc

hadoop@hadoop-master:/usr/local$ tail -2 ~/.bashrc

export FLINK_HOME=/usr/local/flink

export PATH=$FLINK_HOME/bin:$PATH

# Apply the environment variables

hadoop@hadoop-master:/usr/local$ source ~/.bashrc

# Start Flink

hadoop@hadoop-master:/usr/local$ cd /usr/local/flink/

hadoop@hadoop-master:/usr/local/flink$ ./bin/start-cluster.sh

# Use the jps command to check the processes:

hadoop@hadoop-master:/usr/local/flink$ jps

....

49170 StandaloneSessionClusterEntrypoint

....

49614 TaskManagerRunner

If both the TaskManagerRunner and StandaloneSessionClusterEntrypoint processes are listed, Flink started successfully.

The Flink JobManager also starts a web frontend on port 8081, which you can open in a browser at http://localhost:8081.
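The web frontend can also be probed from code rather than from a browser. The following is a minimal sketch, not part of the original tutorial: the class name FlinkWebCheck, the 2-second timeouts, and the use of the /overview path of Flink's monitoring REST API are assumptions made for illustration. It only reports whether something answers on port 8081.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URL;

public class FlinkWebCheck {
    // Builds the URL of the (assumed) cluster overview endpoint for a host and port.
    static String overviewUrl(String host, int port) {
        return "http://" + host + ":" + port + "/overview";
    }

    // Returns the HTTP status code, or -1 if no connection could be made.
    static int probe(String url) {
        try {
            HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
            conn.setRequestMethod("GET");
            conn.setConnectTimeout(2000); // hypothetical timeout values
            conn.setReadTimeout(2000);
            int code = conn.getResponseCode();
            conn.disconnect();
            return code;
        } catch (IOException e) {
            return -1;
        }
    }

    public static void main(String[] args) {
        String url = overviewUrl("localhost", 8081);
        int status = probe(url);
        if (status == 200) {
            System.out.println("Flink web frontend is up: " + url);
        } else {
            System.out.println("No usable response from " + url + " (status " + status + ")");
        }
    }
}
```

If the cluster has not been started, the probe returns -1 instead of throwing, so the check is safe to run at any time.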

The Flink package ships with sample programs. Run the bundled WordCount example to test the installation:

hadoop@hadoop-master:/usr/local/flink$ ./bin/flink run /usr/local/flink/examples/batch/WordCount.jar

Starting execution of program

Executing WordCount example with default input data set.

Use --input to specify file input.

Printing result to stdout. Use --output to specify output path.

(a,5)

(action,1)

(after,1)

(against,1)

(all,2)

......

2. Implementing the WordCount Program

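Writing the WordCount program involves the following steps:

- Install Maven

- Write the code

- Package the Java program with Maven

- Run the program with the flink run command

2.1 Installing Maven

Ubuntu does not ship with Maven, so it has to be installed manually. Download the binary archive from the official Maven website; the commands below use apache-maven-3.6.3-bin.zip.

# Unpack the package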

hadoop@hadoop-master:~$ sudo unzip apache-maven-3.6.3-bin.zip -d /usr/local/

hadoop@hadoop-master:~$ cd /usr/local

hadoop@hadoop-master:/usr/local$ sudo mv apache-maven-3.6.3 maven

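# Give the hadoop user ownership of the maven directory

hadoop@hadoop-master:/usr/local$ sudo chown -R hadoop:hadoop ./maven/

2.2 Writing the Code

Run the following commands in a Linux terminal to create a flinkapp folder in the user's home directory as the application root:

# Create the application root directory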

hadoop@hadoop-master:~$ mkdir flinkapp

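# Create the required directory structure

hadoop@hadoop-master:~$ mkdir -p flinkapp/src/main/java

Then use a text editor (nano in the commands below) to create three source files in ./flinkapp/src/main/java: WordCountData.java, WordCountTokenizer.java, and WordCount.java.

# WordCountData.java provides the raw input data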

hadoop@hadoop-master:~$ nano flinkapp/src/main/java/WordCountData.java

hadoop@hadoop-master:~$ cat flinkapp/src/main/java/WordCountData.java

package cn.edu.xmu;

import org.apache.flink.api.java.DataSet;

import org.apache.flink.api.java.ExecutionEnvironment;

public class WordCountData {

public static final String[] WORDS=new String[]{"To be, or not to be,--that is the question:--", "Whether \'tis nobler in the mind to suffer", "The slings and arrows of outrageous fortune", "Or to take arms against a sea of troubles,", "And by opposing end them?--To die,--to sleep,--", "No more; and by a sleep to say we end", "The heartache, and the thousand natural shocks", "That flesh is heir to,--\'tis a consummation", "Devoutly to be wish\'d. To die,--to sleep;--", "To sleep! perchance to dream:--ay, there\'s the rub;", "For in that sleep of death what dreams may come,", "When we have shuffled off this mortal coil,", "Must give us pause: there\'s the respect", "That makes calamity of so long life;", "For who would bear the whips and scorns of time,", "The oppressor\'s wrong, the proud man\'s contumely,", "The pangs of despis\'d love, the law\'s delay,", "The insolence of office, and the spurns", "That patient merit of the unworthy takes,", "When he himself might his quietus make", "With a bare bodkin? who would these fardels bear,", "To grunt and sweat under a weary life,", "But that the dread of something after death,--", "The undiscover\'d country, from whose bourn", "No traveller returns,--puzzles the will,", "And makes us rather bear those ills we have", "Than fly to others that we know not of?", "Thus conscience does make cowards of us all;", "And thus the native hue of resolution", "Is sicklied o\'er with the pale cast of thought;", "And enterprises of great pith and moment,", "With this regard, their currents turn awry,", "And lose the name of action.--Soft you now!", "The fair Ophelia!--Nymph, in thy orisons", "Be all my sins remember\'d."};

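    public WordCountData() {
    }

    // Wraps the built-in lines in a DataSet so the job can run without an input file
    public static DataSet<String> getDefaultTextLineDataset(ExecutionEnvironment env){
        return env.fromElements(WORDS);
    }
}

# WordCountTokenizer.java splits sentences into words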

hadoop@hadoop-master:~$ nano flinkapp/src/main/java/WordCountTokenizer.java

hadoop@hadoop-master:~$ cat flinkapp/src/main/java/WordCountTokenizer.java

package cn.edu.xmu;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.util.Collector;

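public class WordCountTokenizer implements FlatMapFunction<String, Tuple2<String, Integer>>{
    public WordCountTokenizer(){}

    public void flatMap(String value, Collector<Tuple2<String, Integer>> out) throws Exception {
        // Lowercase the line and split it on runs of non-word characters
        String[] tokens = value.toLowerCase().split("\\W+");
        int len = tokens.length;
        for(int i = 0; i<len; i++){
            String tmp = tokens[i];
            // Skip the empty token produced when a line starts with punctuation
            if(tmp.length()>0){
                out.collect(new Tuple2<String, Integer>(tmp,Integer.valueOf(1)));
            }
        }
    }
}

# WordCount.java provides the main function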

hadoop@hadoop-master:~$ nano flinkapp/src/main/java/WordCount.java

hadoop@hadoop-master:~$ cat flinkapp/src/main/java/WordCount.java

package cn.edu.xmu;

import org.apache.flink.api.java.DataSet;
import org.apache.flink.api.java.ExecutionEnvironment;
import org.apache.flink.api.java.operators.AggregateOperator;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.api.java.utils.ParameterTool;

public class WordCount {
    public WordCount(){}

    public static void main(String[] args) throws Exception {
        ParameterTool params = ParameterTool.fromArgs(args);
        ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();
        // Register the command-line parameters as global job parameters
        env.getConfig().setGlobalJobParameters(params);
        DataSet<String> text;
        // If no input path is given, fall back to the data provided by WordCountData
        if(params.has("input")){
            text = env.readTextFile(params.get("input"));
        }else{
            System.out.println("Executing WordCount example with default input data set.");
            System.out.println("Use -- input to specify file input.");
            text = WordCountData.getDefaultTextLineDataset(env);
        }
        // Emit (word, 1) pairs, group by the word (field 0), and sum the counts (field 1)
        AggregateOperator<Tuple2<String, Integer>> counts = text.flatMap(new WordCountTokenizer()).groupBy(0).sum(1);
        // If no output path is given, print the result to the console
        if(params.has("output")){
            counts.writeAsCsv(params.get("output"),"\n", " ");
            env.execute();
        }else{
            System.out.println("Printing result to stdout. Use --output to specify output path.");
            // print() triggers execution itself, so no env.execute() call is needed here
            counts.print();
        }
    }
}

This program depends on the Flink Java API, so it has to be compiled and packaged with Maven. Create a pom.xml file in the application root with the following content, which declares the application's metadata and its Flink dependencies:

hadoop@hadoop-master:~$ cd flinkapp/

hadoop@hadoop-master:~/flinkapp$ nano pom.xml

hadoop@hadoop-master:~/flinkapp$ cat pom.xml

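<project>
    <groupId>cn.edu.xmu</groupId>
    <artifactId>simple-project</artifactId>
    <modelVersion>4.0.0</modelVersion>
    <name>Simple Project</name>
    <packaging>jar</packaging>
    <version>1.0</version>
    <repositories>
        <repository>
            <id>jboss</id>
            <name>JBoss Repository</name>
            <url>http://repository.jboss.com/maven2/</url>
        </repository>
    </repositories>
    <dependencies>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>1.9.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>1.9.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_2.11</artifactId>
            <version>1.9.1</version>
        </dependency>
    </dependencies>
</project>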

2.3 Packaging the Java Program with Maven

Before running Maven, check the application's file structure with the following command:

hadoop@hadoop-master:~/flinkapp$ find .

.

./src

./src/main

./src/main/java

./src/main/java/WordCountTokenizer.java

./src/main/java/WordCountData.java

./src/main/java/WordCount.java

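./pom.xml

Next, package the whole application into a JAR with the following command. The machine must stay connected to the network, and the first run takes a few minutes because Maven downloads the dependencies automatically: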

hadoop@hadoop-master:~/flinkapp$ /usr/local/maven/bin/mvn package

....

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 01:29 min

[INFO] Finished at: 2022-04-26T17:10:17+08:00

[INFO] ------------------------------------------------------------------------

If the output contains "BUILD SUCCESS", the JAR was generated successfully.
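Beyond reading the Maven log, you can confirm that the main class actually ended up in the JAR. The sketch below is an illustration rather than part of the original tutorial: the class name JarCheck and its helper methods are hypothetical, and the default path target/simple-project-1.0.jar is assumed from the artifactId and version in pom.xml.

```java
import java.io.File;
import java.io.IOException;
import java.util.jar.JarFile;

public class JarCheck {
    // Converts a fully qualified class name into its entry path inside a JAR.
    static String entryName(String className) {
        return className.replace('.', '/') + ".class";
    }

    // Returns true if the JAR contains a compiled class with the given name.
    static boolean containsClass(File jar, String className) throws IOException {
        try (JarFile jarFile = new JarFile(jar)) {
            return jarFile.getEntry(entryName(className)) != null;
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumed default location; pass another path as the first argument if needed.
        File jar = new File(args.length > 0 ? args[0] : "target/simple-project-1.0.jar");
        if (!jar.isFile()) {
            System.out.println("JAR not found: " + jar.getPath());
            return;
        }
        String mainClass = "cn.edu.xmu.WordCount";
        System.out.println(mainClass + (containsClass(jar, mainClass) ? " is" : " is NOT") + " in " + jar.getPath());
    }
}
```

Run it from the flinkapp directory after mvn package; a missing class usually means the package declaration and the --class argument passed to flink run do not agree.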

2.4 Running the Program with the flink run Command

Finally, submit the generated JAR to Flink with the flink run command (make sure Flink is already running):

hadoop@hadoop-master:~/flinkapp$ /usr/local/flink/bin/flink run --class cn.edu.xmu.WordCount ~/flinkapp/target/simple-project-1.0.jar

Starting execution of program

Executing WordCount example with default input data set.

Use -- input to specify file input.

Printing result to stdout. Use --output to specify output path.

(a,5)

(action,1)

(after,1)

(against,1)

(all,2)

......

After the job finishes, the word-frequency results appear on the screen.
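To see what the job computes without a cluster, the tokenize, group, and sum steps can be mirrored in plain Java. This is a rough sketch under stated assumptions, not the Flink implementation: the class PlainWordCount is hypothetical, a TreeMap stands in for groupBy(0).sum(1), and sorted keys are used only to make the output easy to read.

```java
import java.util.Map;
import java.util.TreeMap;

public class PlainWordCount {
    // Applies the same tokenization rule as WordCountTokenizer:
    // lowercase the line, then split on runs of non-word characters.
    static Map<String, Integer> count(String[] lines) {
        Map<String, Integer> counts = new TreeMap<>(); // sorted keys, for readable output only
        for (String line : lines) {
            for (String token : line.toLowerCase().split("\\W+")) {
                // A line that starts with punctuation yields one leading empty token; skip it.
                if (token.length() > 0) {
                    counts.merge(token, 1, Integer::sum); // stands in for groupBy(0).sum(1)
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        String[] lines = {"To be, or not to be,--that is the question:--"};
        // Print in the same (word,count) form as the Flink job
        for (Map.Entry<String, Integer> entry : count(lines).entrySet()) {
            System.out.println("(" + entry.getKey() + "," + entry.getValue() + ")");
        }
    }
}
```

The length check matters because split keeps a leading empty string when the input begins with a delimiter, which is why the tokenizer filters tokens before emitting them.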

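3. Developing and Debugging the WordCount Program with IntelliJ IDEA

Install IntelliJ IDEA first, following its installation documentation.

Start Flink before beginning this exercise.

The following steps show how to develop the WordCount program in IntelliJ IDEA.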

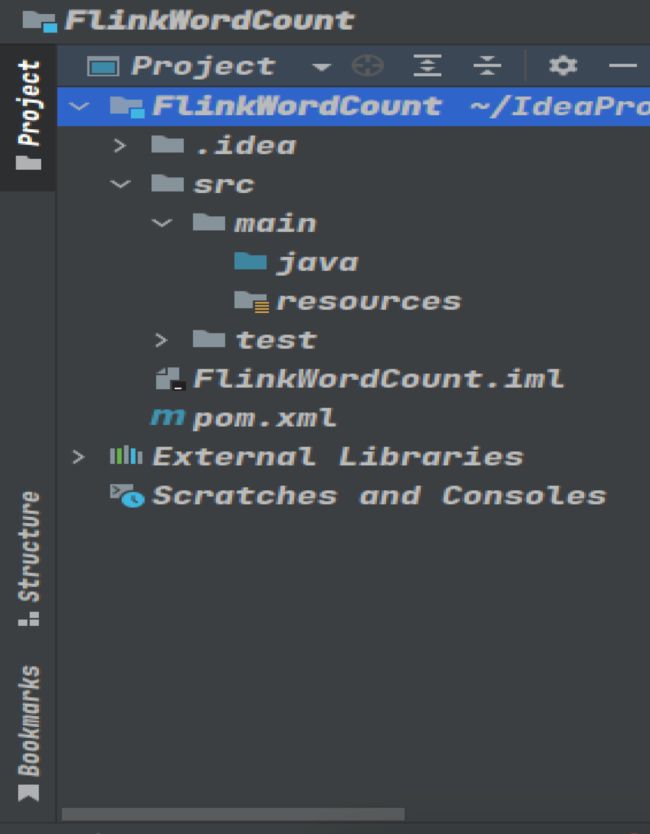

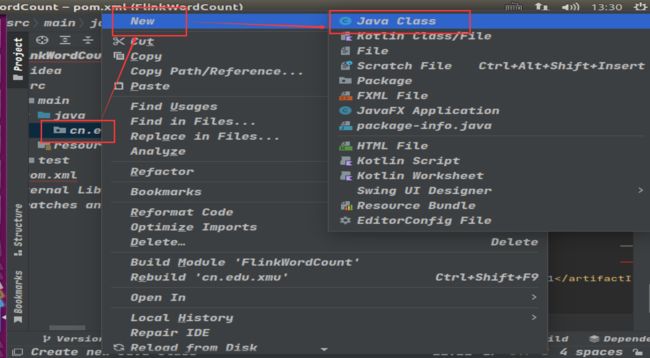

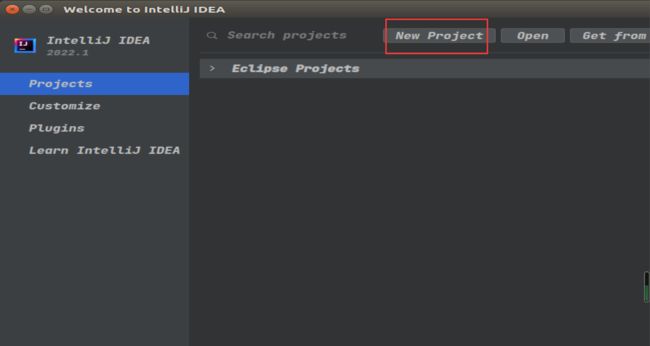

Start IDEA and create a new project, as shown in the figure below.

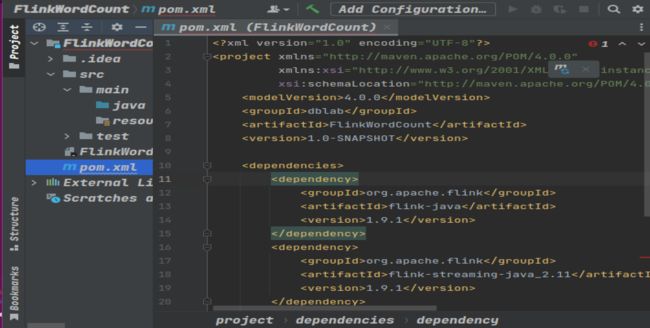

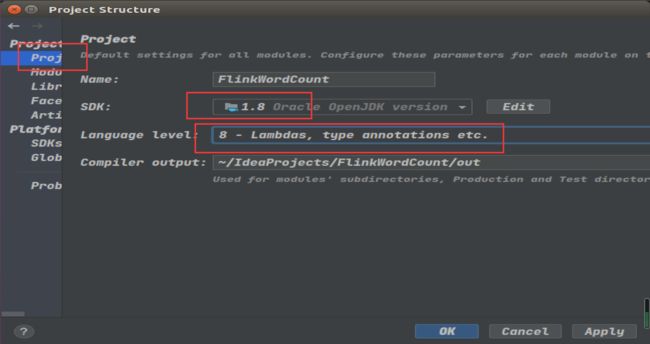

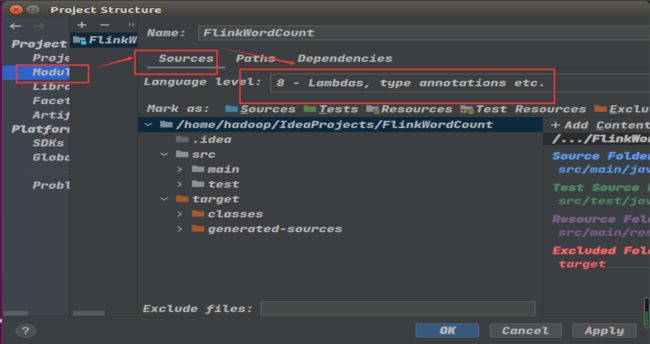

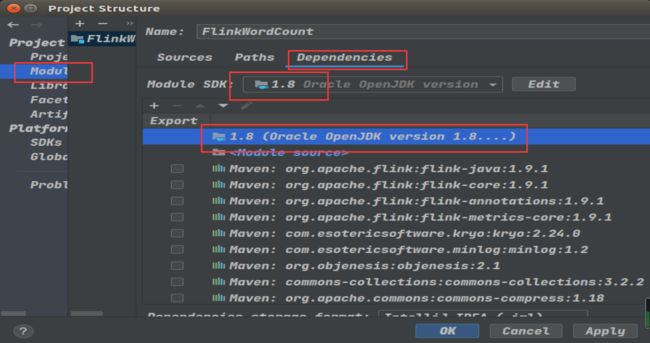

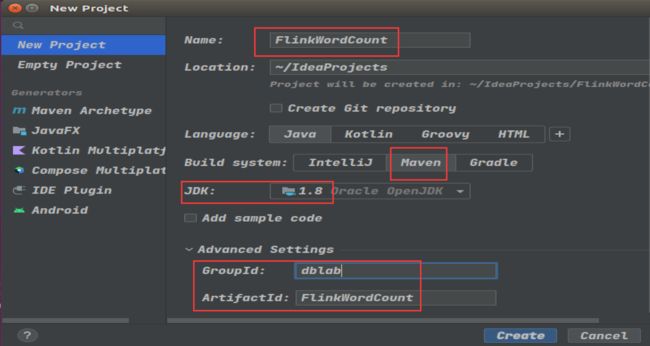

As shown in the figure below, set the project name to FlinkWordCount, the GroupId to dblab, and the ArtifactId to FlinkWordCount.

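The project's pom.xml should contain the following:

<project>
    <modelVersion>4.0.0</modelVersion>
    <groupId>dblab</groupId>
    <artifactId>FlinkWordCount</artifactId>
    <version>1.0-SNAPSHOT</version>
    <dependencies>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>1.9.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
            <version>1.9.1</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_2.11</artifactId>
            <version>1.9.1</version>
        </dependency>
    </dependencies>
</project>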

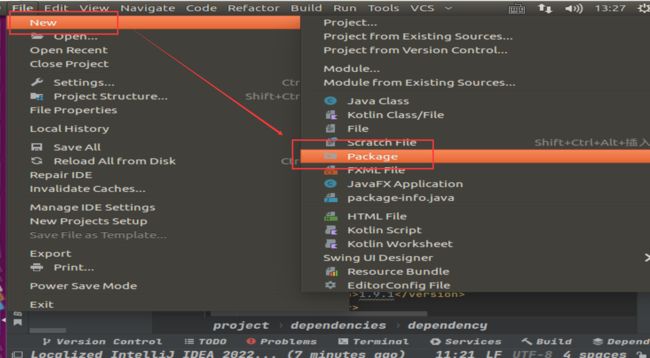

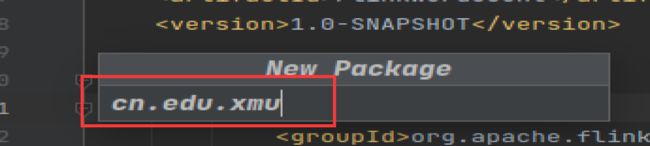

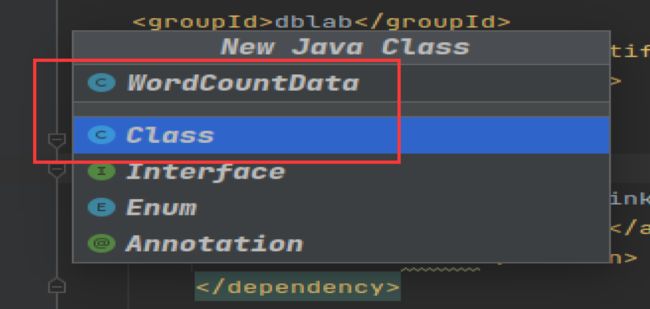

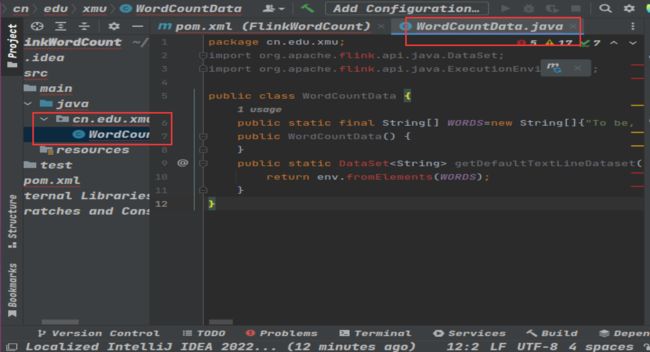

WordCountData.java provides the raw input data. Its content is as follows:

package cn.edu.xmu;

import org.apache.flink.api.java.DataSet;

import org.apache.flink.api.java.ExecutionEnvironment;

public class WordCountData {

public static final String[] WORDS=new String[]{"To be, or not to be,--that is the question:--", "Whether \'tis nobler in the mind to suffer", "The slings and arrows of outrageous fortune", "Or to take arms against a sea of troubles,", "And by opposing end them?--To die,--to sleep,--", "No more; and by a sleep to say we end", "The heartache, and the thousand natural shocks", "That flesh is heir to,--\'tis a consummation", "Devoutly to be wish\'d. To die,--to sleep;--", "To sleep! perchance to dream:--ay, there\'s the rub;", "For in that sleep of death what dreams may come,", "When we have shuffled off this mortal coil,", "Must give us pause: there\'s the respect", "That makes calamity of so long life;", "For who would bear the whips and scorns of time,", "The oppressor\'s wrong, the proud man\'s contumely,", "The pangs of despis\'d love, the law\'s delay,", "The insolence of office, and the spurns", "That patient merit of the unworthy takes,", "When he himself might his quietus make", "With a bare bodkin? who would these fardels bear,", "To grunt and sweat under a weary life,", "But that the dread of something after death,--", "The undiscover\'d country, from whose bourn", "No traveller returns,--puzzles the will,", "And makes us rather bear those ills we have", "Than fly to others that we know not of?", "Thus conscience does make cowards of us all;", "And thus the native hue of resolution", "Is sicklied o\'er with the pale cast of thought;", "And enterprises of great pith and moment,", "With this regard, their currents turn awry,", "And lose the name of action.--Soft you now!", "The fair Ophelia!--Nymph, in thy orisons", "Be all my sins remember\'d."};

public WordCountData() {

}

public static DataSet getDefaultTextLineDataset(ExecutionEnvironment env){

return env.fromElements(WORDS);

}

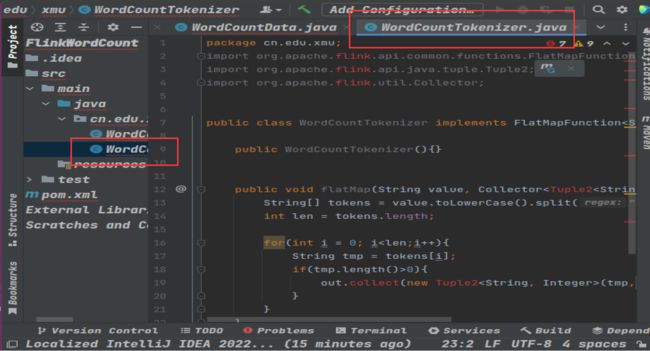

} 按照刚才同样的操作,创建第2个文件WordCountTokenizer.java用于切分句子,其内容如下:

package cn.edu.xmu;

import org.apache.flink.api.common.functions.FlatMapFunction;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.util.Collector;

public class WordCountTokenizer implements FlatMapFunction>{

public WordCountTokenizer(){}

public void flatMap(String value, Collector> out) throws Exception {

String[] tokens = value.toLowerCase().split("\\W+");

int len = tokens.length;

for(int i = 0; i0){

out.collect(new Tuple2(tmp,Integer.valueOf(1)));

}

}

}

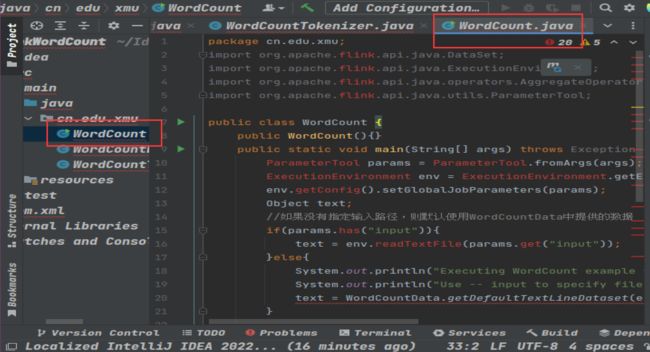

} 按照刚才同样的操作,创建第3个文件WordCount.java提供主函数,其内容如下:

package cn.edu.xmu;

import org.apache.flink.api.java.DataSet;

import org.apache.flink.api.java.ExecutionEnvironment;

import org.apache.flink.api.java.operators.AggregateOperator;

import org.apache.flink.api.java.utils.ParameterTool;

public class WordCount {

public WordCount(){}

public static void main(String[] args) throws Exception {

ParameterTool params = ParameterTool.fromArgs(args);

ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

env.getConfig().setGlobalJobParameters(params);

Object text;

//如果没有指定输入路径,则默认使用WordCountData中提供的数据

if(params.has("input")){

text = env.readTextFile(params.get("input"));

}else{

System.out.println("Executing WordCount example with default input data set.");

System.out.println("Use -- input to specify file input.");

text = WordCountData.getDefaultTextLineDataset(env);

}

AggregateOperator counts = ((DataSet)text).flatMap(new WordCountTokenizer()).groupBy(new int[]{0}).sum(1);

//如果没有指定输出,则默认打印到控制台

if(params.has("output")){

counts.writeAsCsv(params.get("output"),"\n", " ");

env.execute();

}else{

System.out.println("Printing result to stdout. Use --output to specify output path.");

counts.print();

}

}

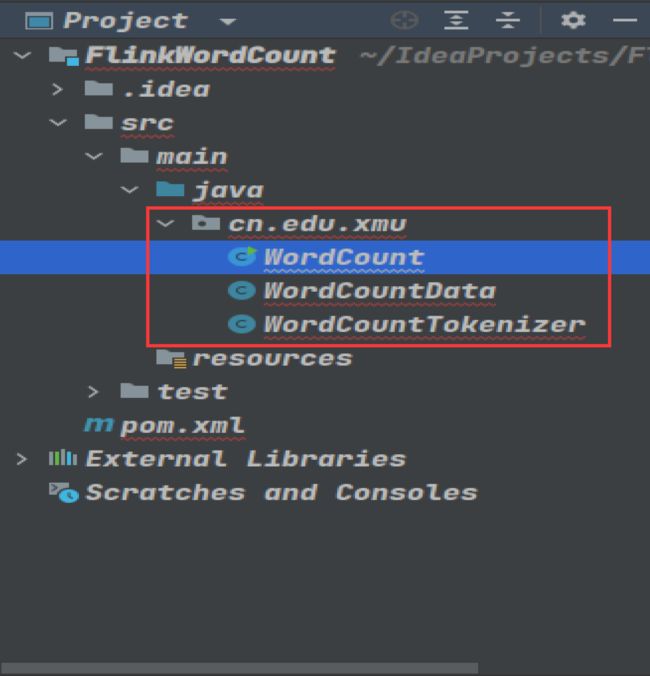

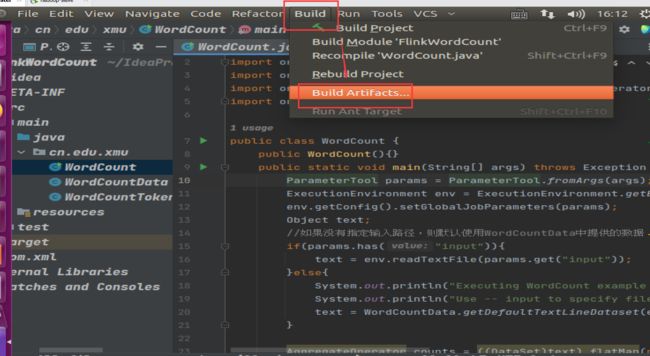

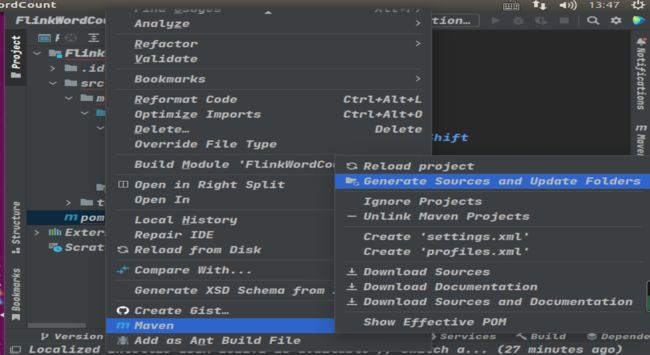

}如下图所示,在左侧目录树的pom.xml文件上单击鼠标右键,在弹出的菜单中选择Maven,再在弹出的菜单中选择Generate Sources and Update Folders。

如下图所示,在左侧目录树的pom.xml文件上单击鼠标右键,在弹出的菜单中选择Maven,再在弹出的菜单中选择Reimport。

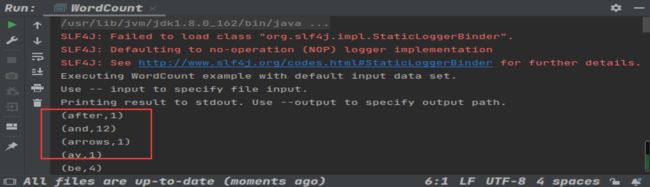

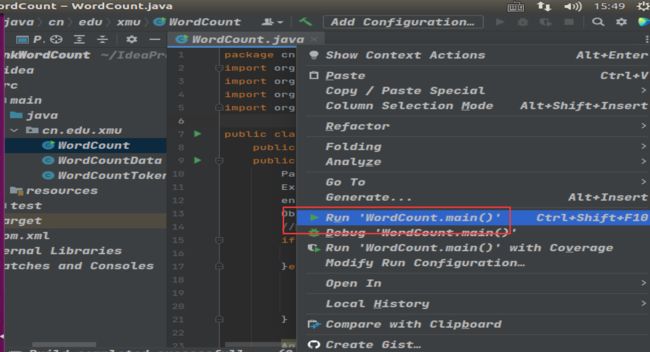

As shown in the figure below, open WordCount.java, right-click inside the editor area, and choose Run WordCount.main() from the context menu.

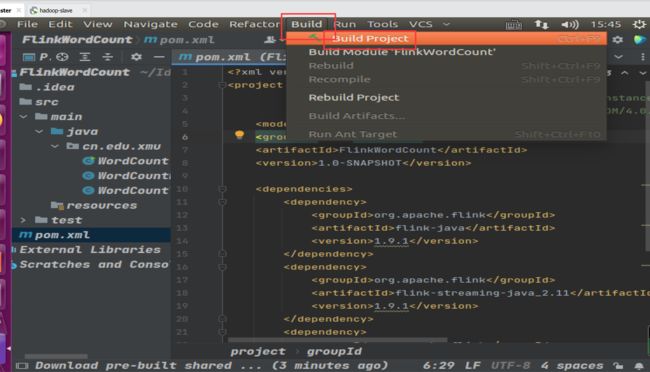

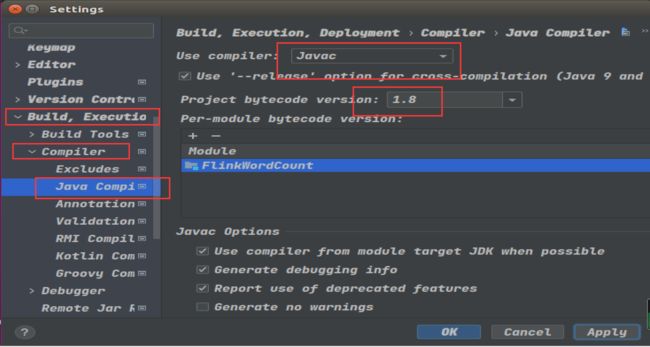

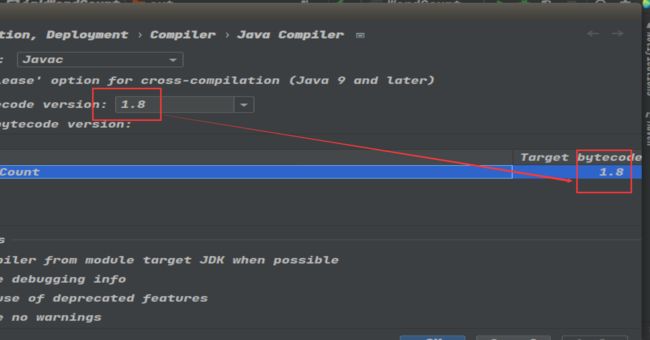

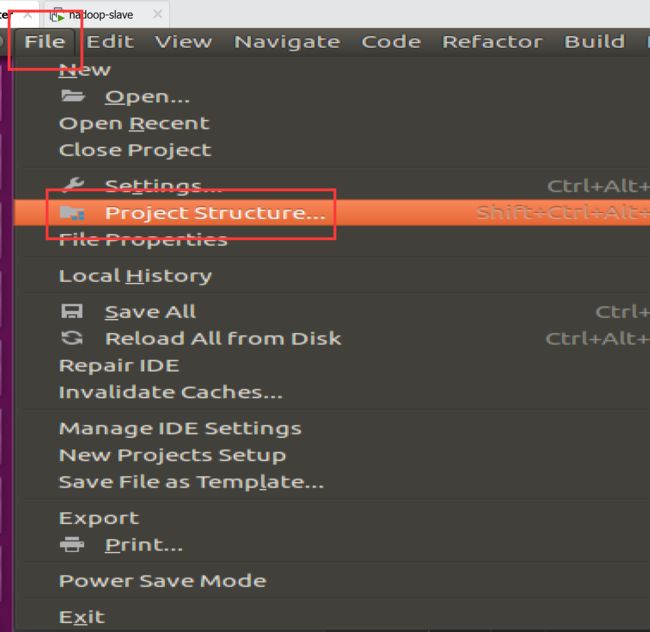

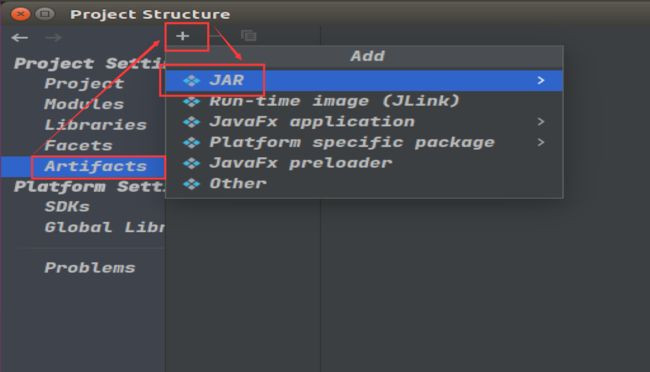

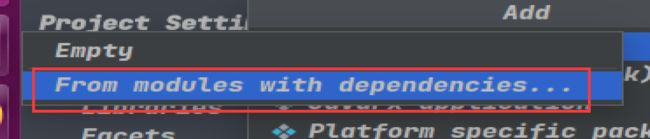

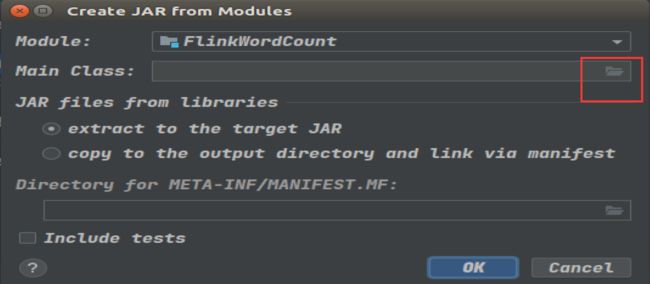

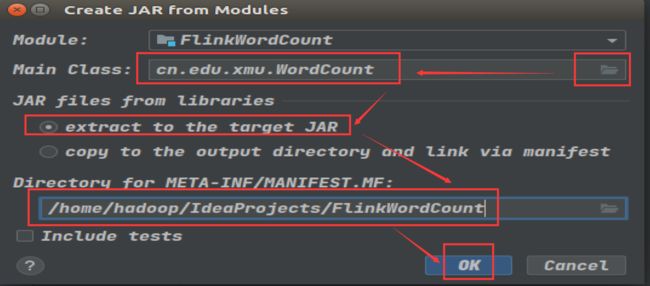

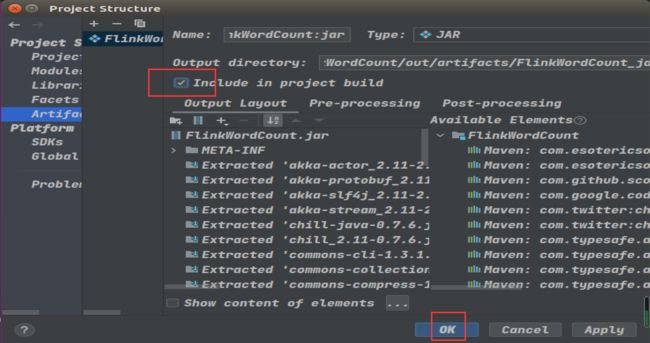

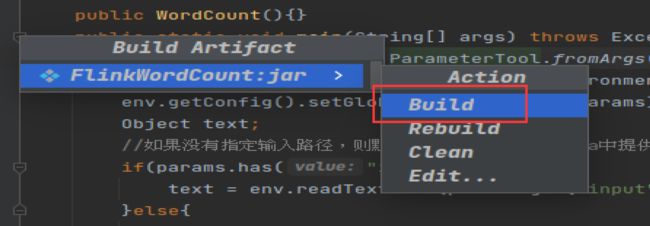

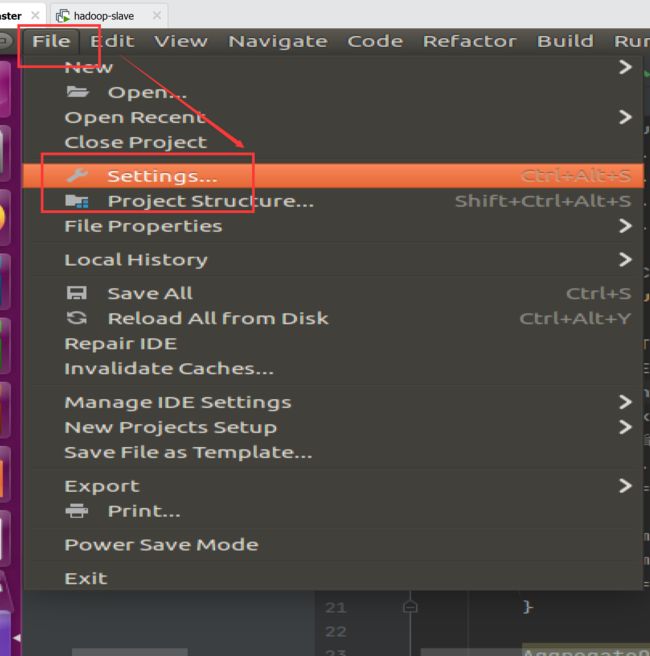

Next, compile the code and package it into a JAR. This requires some preparation.

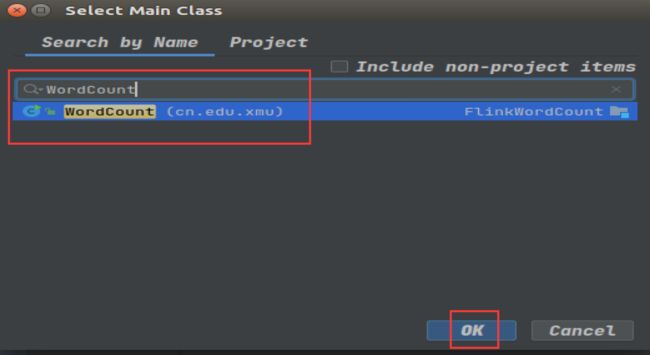

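Configure the settings as shown in the figure below. Typing WordCount in the search box finds the main class automatically; double-click the matching result.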

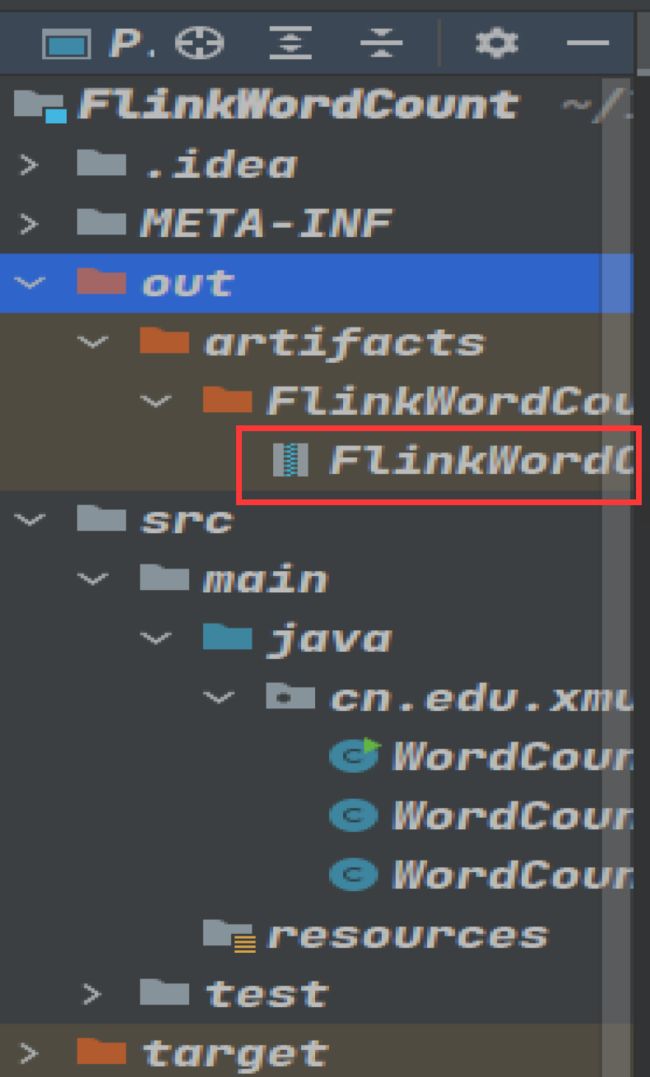

As shown in the figure below, once the build succeeds, the generated FlinkWordCount.jar file appears.

Finally, run FlinkWordCount.jar in Flink:

hadoop@hadoop-master:~$ cd /usr/local/flink/bin/

hadoop@hadoop-master:/usr/local/flink/bin$ ./flink run --class cn.edu.xmu.WordCount ~/IdeaProjects/FlinkWordCount/out/artifacts/FlinkWordCount_jar/FlinkWordCount.jar

Starting execution of program

Executing WordCount example with default input data set.

Use -- input to specify file input.

Printing result to stdout. Use --output to specify output path.

(a,5)

(action,1)

(after,1)

(against,1)

(all,2)

(and,12)

(arms,1)

(arrows,1)

(awry,1)

(ay,1)

(bare,1)

(be,4)

.......

Reference: http://dblab.xmu.edu.cn/blog/2507-2/