A Beginner's Guide to Large Language Models (LLMs, e.g. ChatGPT)

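Contents

- I. Basic Concepts

- 1.1 LangChain video course notes

- 1.2 LLM fine-tuning video course notes

- II. Hands-on Practice

- 2.1 Downloading the models in advance

- 2.2 LangChain

- 2.3 Colab demo

- 2.4 text-generation-webui

- III. A Chinese Project in Practice: Langchain-Chatchat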

I. Basic Concepts

1.1 LangChain video course notes

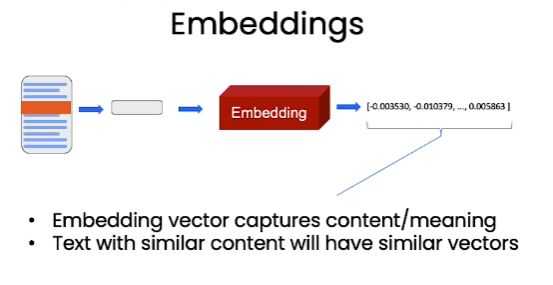

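LangChain is a framework for building applications on top of LLMs; its features include question answering over text documents.

Reference video (from Andrew Ng's team): https://www.bilibili.com/video/BV1pz4y1e7T9/?p=1&vd_source=82b50e78f6d8c4b40bd90af87f9a980b

- Overall workflow

The question and the reference knowledge (taken from the text) are packed into a prompt and passed to the LLM. The LLM then returns an answer, which completes question answering over the text.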
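The workflow above can be sketched in a few lines of plain Python. This is only an illustration, not LangChain's own implementation: the prompt wording, the function names `build_prompt` and `answer`, and the `fake_llm` stand-in are all assumptions made for this example.

```python
from typing import Callable, List


def build_prompt(question: str, context_chunks: List[str]) -> str:
    """Pack the reference text and the question into one prompt string."""
    context = "\n\n".join(chunk.strip() for chunk in context_chunks)
    return (
        "Answer the question using only the reference text below.\n\n"
        f"Reference text:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


def answer(question: str, context_chunks: List[str], llm: Callable[[str], str]) -> str:
    """Send the packed prompt to `llm`, which is any callable mapping str to str."""
    return llm(build_prompt(question, context_chunks))


if __name__ == "__main__":
    # Hypothetical stand-in for a real model: it only reports the prompt size.
    fake_llm = lambda prompt: f"(model received {len(prompt)} characters)"
    chunks = ["LangChain is a framework for building applications on top of LLMs."]
    print(answer("What is LangChain?", chunks, fake_llm))
```

In a real deployment the `llm` callable would wrap an actual model, and the context chunks would come from a retrieval step rather than a hard-coded list.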

1.2 LLM fine-tuning video course notes

Reference: https://www.bilibili.com/video/BV1Rz4y1T7wz?p=8&spm_id_from=pageDriver&vd_source=82b50e78f6d8c4b40bd90af87f9a980b

II. Hands-on Practice

Deployment entry point: https://github.com/ymcui/Chinese-LLaMA-Alpaca-2

2.1 Downloading the models in advance

2.2 LangChain

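Reference: https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/langchain_zh

Interactive chat from a bash shell, deployed by following the langchain_zh wiki page.

Download the text2vec-large-chinese embedding model in advance: https://huggingface.co/GanymedeNil/text2vec-large-chinese/tree/main

Explanation from the linked page: in retrieval-based question answering, LangChain matches the question against the document content by similarity, selects the parts of the document most relevant to the question as context, and combines them with the question to form the LLM input. A suitable embedding model is therefore needed to vectorize the text and the question during matching.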
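The similarity matching described above can be illustrated with a toy example. This sketch assumes a deliberately crude "embedding" (lowercase word counts) in place of text2vec-large-chinese, and a plain sort in place of a vector index such as FAISS; the function names are hypothetical and only the ranking idea carries over.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real embedding model)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k(question: str, chunks: List[str], k: int = 1) -> List[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


if __name__ == "__main__":
    docs = [
        "faiss stores vectors and searches them by similarity",
        "gradio builds a web page for a model demo",
    ]
    # The selected chunk would then be combined with the question as LLM input.
    print(top_k("how does faiss search vectors", docs))
```

A real embedding model maps text to dense vectors that capture meaning rather than exact word overlap, which is why the pipeline downloads a dedicated model instead of counting words.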

- Deployment:

conda create -n langchain3 python=3.8

conda activate langchain3

git clone https://github.com/ymcui/Chinese-LLaMA-Alpaca-2.git

pip install langchain

pip install sentence_transformers==2.2.2

pip install pydantic==1.10.8

pip install faiss-gpu==1.7.1

pip install protobuf

pip install accelerate

python langchain_qa.py --embedding_path /path/to/text2vec-large-chinese --model_path /path/to/chinese-alpaca-2-7b --file_path doc.txt --chain_type refine

2.3 Colab demo

Reference: https://colab.research.google.com/drive/1yu0eZ3a66by8Zqm883LLtRQrguBAb9MR?usp=sharing

- Deployment:

conda create -n colab python=3.8

conda activate colab

# Then simply follow the steps in the linked notebook

git clone https://github.com/ymcui/Chinese-LLaMA-Alpaca-2.git

pip install -r Chinese-LLaMA-Alpaca-2/requirements.txt

pip install gradio

# Download the model

git clone https://huggingface.co/ziqingyang/chinese-alpaca-2-7b

python Chinese-LLaMA-Alpaca-2/scripts/inference/gradio_demo.py --base_model /content/chinese-alpaca-2-7b --load_in_8bit

- Error: "Could not create share link"

Please check your internet connection. This can happen if your antivirus software blocks the download of this file. You can install manually by following these steps:

1. Download this file: https://cdn-media.huggingface.co/frpc-gradio-0.2/frpc_linux_amd64

2. Rename the downloaded file to: frpc_linux_amd64_v0.2

3. Move the file to this location: /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio

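- Solution

If this error appears, download the file from https://cdn-media.huggingface.co/frpc-gradio-0.2/frpc_linux_amd64, rename it to frpc_linux_amd64_v0.2, and place it in /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio (the gradio package directory on my machine; use the location printed in your own error message).

Then, importantly, make the file executable: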

chmod +x /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio/frpc_linux_amd64_v0.2
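The manual fix can also be scripted. The sketch below assumes the binary has already been downloaded to the current directory as `frpc_linux_amd64`; the helper name `install_frpc` is made up for this example. It copies the file under the name the error message asks for and adds execute permission, which is what `chmod +x` does.

```python
import shutil
import stat
from pathlib import Path

TARGET_NAME = "frpc_linux_amd64_v0.2"  # file name requested by the error message


def install_frpc(downloaded_file: Path, gradio_dir: Path) -> Path:
    """Copy the downloaded binary into gradio_dir under the expected name
    and add execute permission (the equivalent of `chmod +x`)."""
    target = Path(gradio_dir) / TARGET_NAME
    shutil.copyfile(downloaded_file, target)
    mode = target.stat().st_mode
    target.chmod(mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
    return target


if __name__ == "__main__":
    import importlib.util

    # Locate the gradio package of the active environment instead of hard-coding it.
    spec = importlib.util.find_spec("gradio")
    if spec is None or spec.origin is None:
        raise SystemExit("gradio is not installed in this environment")
    print(install_frpc(Path("frpc_linux_amd64"), Path(spec.origin).parent))
```

Looking up the package directory at run time avoids copying the file into the wrong conda environment, which is easy to do when several environments exist side by side.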

2.4 text-generation-webui

Reference: https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/text-generation-webui_zh

- Install text-generation-webui

Reference: https://github.com/oobabooga/text-generation-webui#installation

git clone https://github.com/oobabooga/text-generation-webui.git

cd text-generation-webui

conda create -n textgen python=3.11

conda activate textgen

# I used CUDA 11.8 on an NVIDIA TITAN GPU

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

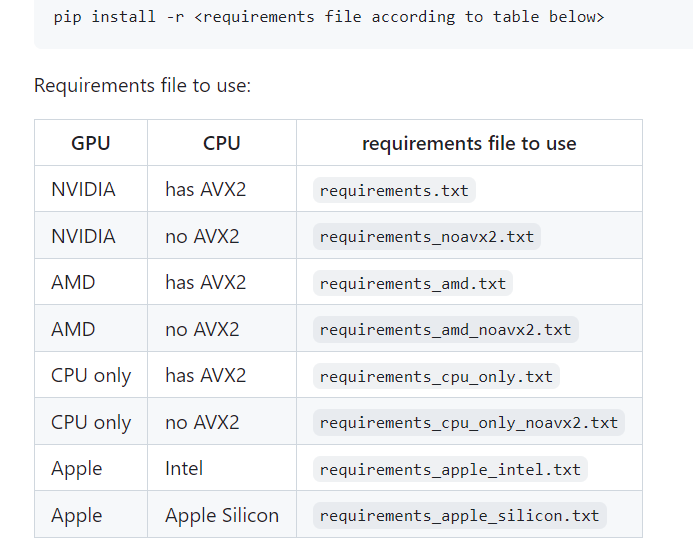

- Check whether the CPU supports AVX2

apt install cpuid

cpuid | grep AVX2
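If the `cpuid` tool is not available, the same check can be made on Linux by reading `/proc/cpuinfo`. This is a minimal sketch for x86 machines, where CPU features are listed on lines starting with `flags`; the function name `has_avx2` is an assumption for this example.

```python
from pathlib import Path


def has_avx2(cpuinfo_text: str) -> bool:
    """Return True if any 'flags' line of /proc/cpuinfo-style text lists avx2."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags" and "avx2" in value.split():
            return True
    return False


if __name__ == "__main__":
    # /proc/cpuinfo exists on Linux only.
    print(has_avx2(Path("/proc/cpuinfo").read_text()))
```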

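Next, install the matching requirements file. (This step failed with an error on my machine, so I skipped it. Installing whatever turns out to be missing in the next step also works, since there are not many packages.)

Then run

python server.py

and pip install whichever package is reported missing.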

- Run

Reference: https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/text-generation-webui_zh

- Prepare the model weights

>>> ls models/chinese-alpaca-2-7b

config.json

generation_config.json

pytorch_model-00001-of-00002.bin

pytorch_model-00002-of-00002.bin

pytorch_model.bin.index.json

special_tokens_map.json

tokenizer_config.json

tokenizer.json

tokenizer.model
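Before launching, it can help to confirm that the model directory is complete. The sketch below checks for the file names shown in the listing above; the helper `missing_files` is hypothetical, and other checkpoints (for example, ones with a different number of shards) will have a different file list.

```python
from pathlib import Path
from typing import List, Sequence

# File names copied from the directory listing of chinese-alpaca-2-7b.
EXPECTED_FILES = [
    "config.json",
    "generation_config.json",
    "pytorch_model-00001-of-00002.bin",
    "pytorch_model-00002-of-00002.bin",
    "pytorch_model.bin.index.json",
    "special_tokens_map.json",
    "tokenizer_config.json",
    "tokenizer.json",
    "tokenizer.model",
]


def missing_files(model_dir, expected: Sequence[str] = EXPECTED_FILES) -> List[str]:
    """Return the expected file names that are absent from model_dir."""
    model_dir = Path(model_dir)
    return [name for name in expected if not (model_dir / name).is_file()]


if __name__ == "__main__":
    missing = missing_files("models/chinese-alpaca-2-7b")
    print("all files present" if not missing else f"missing: {missing}")
```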

- Launch command:

python server.py --model chinese-alpaca-2-7b --chat --share

Alternatively:

python server.py --model /home/gykj/thomascai/models/chinese-alpaca-2-13b --chat --share

- Error

Please check your internet connection. This can happen if your antivirus software blocks the download of this file. You can install manually by following these steps:

1. Download this file: https://cdn-media.huggingface.co/frpc-gradio-0.2/frpc_linux_amd64

2. Rename the downloaded file to: frpc_linux_amd64_v0.2

3. Move the file to this location: /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio

- Solution

If this error appears, download the file from https://cdn-media.huggingface.co/frpc-gradio-0.2/frpc_linux_amd64, rename it to frpc_linux_amd64_v0.2, and place it in /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio (the gradio package directory on my machine; use the location printed in your own error message).

Then, importantly, make the file executable:

sudo chmod +x /home/gykj/miniconda3/envs/textgen/lib/python3.11/site-packages/gradio/frpc_linux_amd64_v0.2

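III. A Chinese Project in Practice: Langchain-Chatchat

Langchain-Chatchat is a fairly easy-to-use open-source project from China.

Follow the local deployment instructions at

https://github.com/chatchat-space/Langchain-Chatchat/wiki/%E5%BC%80%E5%8F%91%E7%8E%AF%E5%A2%83%E9%83%A8%E7%BD%B2

to set up the environment. Remember to download the models in advance and put them in the expected locations.

The project has a dedicated and fairly detailed wiki. If you run into problems, feel free to discuss them; the project also has a chat group you can join.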


~ One person go faster, a group of people can go further ~