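LangChain Tools: Usage Examples (Part 1)

Original: "langchain 之 Tools 多案例使用(一)" - 简书 (Jianshu)

ATTENTION:

- If you call the OpenAI API, the requests need to go through a proxy; this article uses proxychains to set that up.

- With debug mode enabled, you can see much more output.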

import langchain

langchain.debug = True

1. Tools

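The parameters are as follows.

- name (str): required; it must be unique within the set of tools given to the agent.

- description (str): optional but recommended, because the agent uses it to decide when to use the tool. The clearer the description, the more accurately the LLM uses the tool for the task.

- return_direct (bool): defaults to False.

- args_schema (Pydantic BaseModel): optional but recommended; it can provide extra information (e.g., few-shot examples) or validation for the expected parameters.

- func (function): required; the operation the tool executes. It can be a class, a function, and so on.

- coroutine (function): optional; it takes the same arguments as func but must be declared with async.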
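The uniqueness requirement on name can be checked before the tools are handed to an agent. The sketch below is a plain-Python illustration: `ToolSpec` and `duplicate_names` are hypothetical names introduced here, not part of LangChain, and the dataclass only mirrors a few of the fields listed above.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ToolSpec:
    """Hypothetical stand-in for some of the fields above (not LangChain's Tool)."""
    name: str
    func: Callable[[str], str]
    description: str = ""
    return_direct: bool = False


def duplicate_names(tools: List[ToolSpec]) -> List[str]:
    """Return every tool name that appears more than once, in first-seen order."""
    seen, dupes = set(), []
    for t in tools:
        if t.name in seen and t.name not in dupes:
            dupes.append(t.name)
        seen.add(t.name)
    return dupes


tools = [
    ToolSpec("Search", lambda q: "..."),
    ToolSpec("Calculator", lambda q: "..."),
    ToolSpec("Search", lambda q: "..."),  # clashes with the first entry
]
print(duplicate_names(tools))  # ['Search']
```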

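Typical usage:

import pydantic
from langchain.agents import Tool

def read_previous_db(words: str):
    ...

class CInput(pydantic.BaseModel):
    question: str = pydantic.Field()

tool = Tool.from_function(
    func=read_previous_db,
    name="previous_records",
    description="Used to answer questions about the user's conversation history. Pass in the user's question directly, then use the xx tool to return the query result to the user.",
    # args_schema=CInput,
)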

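1.1 Calling custom functions (partial code)

As the code below shows, a tool can query a database through an HTTP API using query statements (here, SPARQL against Wikidata).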

import json
from typing import Optional

import requests

# Note: wikidata_user_agent_header and get_nested_value are not defined in this
# excerpt; they must be defined earlier in the full script.

def vocab_lookup(
    search: str,
    entity_type: str = "item",
    url: str = "https://www.wikidata.org/w/api.php",
    user_agent_header: str = wikidata_user_agent_header,
    srqiprofile: str = None,
) -> Optional[str]:
    headers = {"Accept": "application/json"}
    # Use the parameter (not the global) so callers can override the header.
    if user_agent_header is not None:
        headers["User-Agent"] = user_agent_header

    if entity_type == "item":
        srnamespace = 0
        srqiprofile = "classic_noboostlinks" if srqiprofile is None else srqiprofile
    elif entity_type == "property":
        srnamespace = 120
        srqiprofile = "classic" if srqiprofile is None else srqiprofile
    else:
        raise ValueError("entity_type must be either 'property' or 'item'")

    params = {
        "action": "query",
        "list": "search",
        "srsearch": search,
        "srnamespace": srnamespace,
        "srlimit": 1,
        "srqiprofile": srqiprofile,
        "srwhat": "text",
        "format": "json",
    }

    response = requests.get(url, headers=headers, params=params)

    if response.status_code == 200:
        title = get_nested_value(response.json(), ["query", "search", 0, "title"])
        if title is None:
            return f"I couldn't find any {entity_type} for '{search}'. Please rephrase your request and try again"
        # if there is a prefix, strip it off
        return title.split(":")[-1]
    else:
        return "Sorry, I got an error. Please try again."

def run_sparql(
    query: str,
    url="https://query.wikidata.org/sparql",
    user_agent_header: str = wikidata_user_agent_header,
) -> str:
    headers = {"Accept": "application/json"}
    if user_agent_header is not None:
        headers["User-Agent"] = user_agent_header

    response = requests.get(
        url, headers=headers, params={"query": query, "format": "json"}
    )

    if response.status_code != 200:
        return "That query failed. Perhaps you could try a different one?"
    results = get_nested_value(response.json(), ["results", "bindings"])
    # The bindings are returned as a JSON string so the agent can read them as text.
    return json.dumps(results)

sql_result = run_sparql("SELECT (COUNT(?children) as ?count) WHERE { wd:Q1339 wdt:P40 ?children . }")
print(sql_result)

### Agent wraps the tools

from langchain.agents import (
    Tool,
    AgentExecutor,
    LLMSingleActionAgent,
    AgentOutputParser,
)

# Define which tools the agent can use to answer user queries
tools = [
    Tool(
        name="ItemLookup",
        func=(lambda x: vocab_lookup(x, entity_type="item")),
        description="useful for when you need to know the q-number for an item",
    ),
    Tool(
        name="PropertyLookup",
        func=(lambda x: vocab_lookup(x, entity_type="property")),
        description="useful for when you need to know the p-number for a property",
    ),
    Tool(
        name="SparqlQueryRunner",
        func=run_sparql,
        description="useful for getting results from a wikibase",
    ),
]
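The lookup functions above call `get_nested_value`, which this excerpt never defines. A minimal sketch of such a helper follows, assuming it walks a list of keys and indexes and returns None when any step is missing; the exact behaviour of the original helper is not shown in the article, so treat this as a reconstruction.

```python
from typing import Any, Optional, Sequence, Union


def get_nested_value(obj: Any, path: Sequence[Union[str, int]]) -> Optional[Any]:
    """Follow `path` through nested dicts/lists; return None if a step is missing."""
    current = obj
    for key in path:
        try:
            current = current[key]
        except (KeyError, IndexError, TypeError):
            return None
    return current


# Hand-written sample shaped like a search response (illustrative data only).
sample = {"query": {"search": [{"title": "Property:P40"}]}}
title = get_nested_value(sample, ["query", "search", 0, "title"])
print(title.split(":")[-1])  # P40

# An empty result list yields None instead of raising IndexError.
print(get_nested_value({"query": {"search": []}}, ["query", "search", 0, "title"]))  # None
```

Catching IndexError as well as KeyError matters here because an empty search result is a list with no element 0.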

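A tool can also wrap a hand-written function. In the multi-input case below, the tool receives a single string and splits it into the individual arguments.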

from langchain.agents import Tool
from langchain.llms import OpenAI

def multiplier(a, b):
    return a * b

def parsing_multiplier(string):
    # The agent passes one string such as "1,2"; split it into the two operands.
    a, b = string.split(",")
    return multiplier(int(a), int(b))

llm = OpenAI(temperature=0)
tools = [
    Tool(
        name="Multiplier",
        func=parsing_multiplier,
        description="useful for when you need to multiply two numbers together. The input to this tool should be a comma separated list of numbers of length two, representing the two numbers you want to multiply together. For example, `1,2` would be the input if you wanted to multiply 1 by 2.",
    )
]

https://python.langchain.com/docs/modules/agents/tools/multi_input_tool
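Because the LLM writes the input string, it may not always match the `a,b` format. The sketch below is one possible defensive variant of the parser; `safe_parsing_multiplier` is a hypothetical name, and returning an error message as text (rather than raising) is an assumption about what is most useful for the agent to read back.

```python
from typing import Union


def multiplier(a: int, b: int) -> int:
    return a * b


def safe_parsing_multiplier(string: str) -> Union[int, str]:
    """Parse 'a,b' and multiply; return a readable message on malformed input."""
    parts = [p.strip() for p in string.split(",")]
    if len(parts) != 2:
        return "Expected exactly two comma-separated integers, e.g. `1,2`."
    try:
        a, b = int(parts[0]), int(parts[1])
    except ValueError:
        return "Both values must be integers, e.g. `1,2`."
    return multiplier(a, b)


print(safe_parsing_multiplier("3, 4"))   # 12
print(safe_parsing_multiplier("1,2,3"))  # error message about the count
```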

Or define a custom tool class.

import yfinance as yf
from datetime import datetime, timedelta

def get_current_stock_price(ticker):
    """Method to get current stock price"""
    ticker_data = yf.Ticker(ticker)
    recent = ticker_data.history(period="1d")
    return {"price": recent.iloc[0]["Close"], "currency": ticker_data.info["currency"]}

def get_stock_performance(ticker, days):
    """Method to get stock price change in percentage"""
    past_date = datetime.today() - timedelta(days=days)
    ticker_data = yf.Ticker(ticker)
    history = ticker_data.history(start=past_date)
    old_price = history.iloc[0]["Close"]
    current_price = history.iloc[-1]["Close"]
    return {"percent_change": ((current_price - old_price) / old_price) * 100}

# res1 = get_current_stock_price("MSFT")
# print(res1)
# res2 = get_stock_performance("MSFT", 30)
# print(res2)

from typing import Type
from pydantic import BaseModel, Field
from langchain.tools import BaseTool

class CurrentStockPriceInput(BaseModel):
    """Inputs for get_current_stock_price"""
    ticker: str = Field(description="Ticker symbol of the stock")

class CurrentStockPriceTool(BaseTool):
    name = "get_current_stock_price"
    description = """
    Useful when you want to get current stock price.
    You should enter the stock ticker symbol recognized by the yahoo finance
    """
    args_schema: Type[BaseModel] = CurrentStockPriceInput

    def _run(self, ticker: str):
        price_response = get_current_stock_price(ticker)
        return price_response

    def _arun(self, ticker: str):
        raise NotImplementedError("get_current_stock_price does not support async")

class StockPercentChangeInput(BaseModel):
    """Inputs for get_stock_performance"""
    ticker: str = Field(description="Ticker symbol of the stock")
    days: int = Field(description="Timedelta days to get past date from current date")

class StockPerformanceTool(BaseTool):
    name = "get_stock_performance"
    description = """
    Useful when you want to check performance of the stock.
    You should enter the stock ticker symbol recognized by the yahoo finance.
    You should enter days as number of days from today from which performance needs to be check.
    output will be the change in the stock price represented as a percentage.
    """
    args_schema: Type[BaseModel] = StockPercentChangeInput

    def _run(self, ticker: str, days: int):
        response = get_stock_performance(ticker, days)
        return response

    # Same arguments as _run, so the signature matches args_schema.
    def _arun(self, ticker: str, days: int):
        raise NotImplementedError("get_stock_performance does not support async")

from langchain.agents import AgentType
from langchain.chat_models import ChatOpenAI
from langchain.agents import initialize_agent

llm = ChatOpenAI(model="gpt-3.5-turbo-0613", temperature=0)
tools = [CurrentStockPriceTool(), StockPerformanceTool()]
agent = initialize_agent(tools, llm, agent=AgentType.OPENAI_FUNCTIONS, verbose=True)

res3 = agent.run(
    "What is the current price of Microsoft stock? How it has performed over past 6 months?"
)
print(res3)

As for why agent=AgentType.OPENAI_FUNCTIONS is used here, the second part of this article covers that.

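1.2 Search engines and other third-party tools

Call the search API directly through SerpApi. See https://serpapi.com/ for all supported engine types, which also include Baidu. Because this is a hosted API, an api_key is required.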

from langchain import LLMMathChain, SerpAPIWrapper
from langchain.agents import Tool

# Set the key first: os.environ["SERPAPI_API_KEY"] = ""
# Load the tool configs that are needed.
search = SerpAPIWrapper()
llm_math_chain = LLMMathChain.from_llm(llm=llm, verbose=True)
tools = [
    Tool.from_function(
        func=search.run,
        name="Search",
        description="useful for when you need to answer questions about current events",
        # coroutine= ... <- you can specify an async method if desired as well
    ),
]

You can also use the tools that ship with LangChain directly.

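from langchain.agents import AgentType, initialize_agent, load_tools
from langchain.llms import OpenAI

llm = OpenAI(temperature=0)
tools = load_tools(["wikipedia", "llm-math", "terminal"], llm=llm)
agent = initialize_agent(
    tools,
    llm,
    agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
)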

from llama_index.tools.ondemand_loader_tool import OnDemandLoaderTool
from llama_index.readers.wikipedia import WikipediaReader
from typing import List
from pydantic import BaseModel

reader = WikipediaReader()
tool = OnDemandLoaderTool.from_defaults(
    reader,
    name="Wikipedia Tool",
    description="A tool for loading and querying articles from Wikipedia",
)

# run only the llama_Index Tool by itself
tool(["Berlin"], query_str="What's the arts and culture scene in Berlin?")

# run tool from LangChain Agent
lc_tool = tool.to_langchain_structured_tool(verbose=True)
lc_tool.run(tool_input={"pages": ["Berlin"], "query_str": "What's the arts and culture scene in Berlin?"})

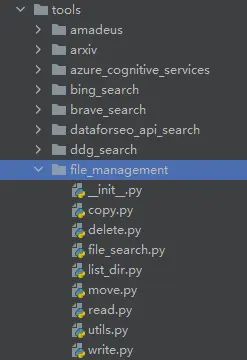

1.3 Using the built-in tools in langchain.tools as OpenAI functions

from langchain.chat_models import ChatOpenAI
from langchain.schema import HumanMessage
from langchain.tools import MoveFileTool, format_tool_to_openai_function

model = ChatOpenAI(model="gpt-3.5-turbo-0613")

tools = [MoveFileTool()]
functions = [format_tool_to_openai_function(t) for t in tools]

message = model.predict_messages(
    [HumanMessage(content="move file foo to bar")], functions=functions
)
print(message)
print(message.additional_kwargs["function_call"])

file management

https://python.langchain.com/docs/modules/agents/tools/tools_as_openai_functions
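The model only proposes a function call; the caller still has to run it. The sketch below shows one way to dispatch such a call, assuming the `function_call` entry is a dict with a `name` and a JSON-encoded `arguments` string. The payload is hand-written for illustration, and `move_file`, `HANDLERS`, and `dispatch` are hypothetical names; the handler only reports what it would do rather than touching the file system.

```python
import json
from typing import Any, Callable, Dict


def move_file(source_path: str, destination_path: str) -> str:
    """Hypothetical local handler; it only reports what it would do."""
    return f"would move {source_path} -> {destination_path}"


# Map function names the model may return to local callables.
HANDLERS: Dict[str, Callable[..., str]] = {"move_file": move_file}


def dispatch(function_call: Dict[str, Any]) -> str:
    """Look up the named handler and call it with the decoded JSON arguments."""
    handler = HANDLERS[function_call["name"]]
    kwargs = json.loads(function_call["arguments"])
    return handler(**kwargs)


# Hand-written example payload (assumed shape, not captured model output).
example_call = {
    "name": "move_file",
    "arguments": '{"source_path": "foo", "destination_path": "bar"}',
}
print(dispatch(example_call))  # would move foo -> bar
```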

1.4 Wrapping chains inside tools

import pydantic
from langchain import LLMMathChain
from langchain.agents import AgentType, Tool, initialize_agent

# Assumed lookup table (not shown in the original excerpt): n -> n-th prime.
primes = {998: 7901, 999: 7907, 1000: 7919}

llm_math_chain = LLMMathChain.from_llm(llm=llm, verbose=True)

class PrimeInput(pydantic.BaseModel):
    n: int = pydantic.Field()

def get_prime(n: int, primes: dict = primes) -> str:
    return str(primes.get(int(n)))

async def aget_prime(n: int, primes: dict = primes) -> str:
    return str(primes.get(int(n)))

class CalculatorInput(pydantic.BaseModel):
    question: str = pydantic.Field()

tools = [
    Tool(
        name="GetPrime",
        func=get_prime,
        description="A tool that returns the `n`th prime number",
        args_schema=PrimeInput,
        coroutine=aget_prime,
    ),
    Tool.from_function(
        func=llm_math_chain.run,
        name="Calculator",
        description="Useful for when you need to compute mathematical expressions",
        args_schema=CalculatorInput,
        coroutine=llm_math_chain.arun,
    ),
]

agent = initialize_agent(
    tools, llm, agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True
)
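The func and coroutine pair above can be exercised on its own, without an agent or an LLM. This sketch assumes a small `primes` lookup table (the article does not show its contents) and runs the async variant with `asyncio.run`.

```python
import asyncio

# Assumed lookup table for illustration: n -> n-th prime.
primes = {998: 7901, 999: 7907, 1000: 7919}


def get_prime(n: int, primes: dict = primes) -> str:
    return str(primes.get(int(n)))


async def aget_prime(n: int, primes: dict = primes) -> str:
    return str(primes.get(int(n)))


print(get_prime(1000))                 # 7919
print(asyncio.run(aget_prime("999")))  # 7907 (string input is cast with int())
print(get_prime(5))                    # None (not in the table)
```

Both functions cast `n` with `int()` because an agent typically passes tool input as text.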

1.5 Combining tools with vectorstores

Use embeddings to store the data in a vector database, then retrieve from it.

from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.vectorstores import Chroma
from langchain.text_splitter import CharacterTextSplitter
from langchain.llms import OpenAI
from langchain.chains import RetrievalQA

llm = OpenAI(temperature=0)

from pathlib import Path
from langchain.document_loaders import TextLoader

# Raw string so the backslashes in the Windows path are not treated as escapes.
doc_path = r"E:\DEVELOP\LLM_Demo\examples\state_of_the_union.txt"
loader = TextLoader(doc_path)
documents = loader.load()
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
texts = text_splitter.split_documents(documents)

embeddings = OpenAIEmbeddings()
docsearch = Chroma.from_documents(texts, embeddings, collection_name="state-of-union")
state_of_union = RetrievalQA.from_chain_type(
    llm=llm, chain_type="stuff", retriever=docsearch.as_retriever()
)

###

from langchain.document_loaders import WebBaseLoader

loader = WebBaseLoader("https://beta.ruff.rs/docs/faq/")
docs = loader.load()
ruff_texts = text_splitter.split_documents(docs)
ruff_db = Chroma.from_documents(ruff_texts, embeddings, collection_name="ruff")
ruff = RetrievalQA.from_chain_type(
    llm=llm, chain_type="stuff", retriever=ruff_db.as_retriever()
)

# Import things that are needed generically
from langchain.agents import initialize_agent, Tool
from langchain.agents import AgentType
from langchain.tools import BaseTool
from langchain.llms import OpenAI
from langchain import LLMMathChain, SerpAPIWrapper

tools = [
    Tool(
        name="State of Union QA System",
        func=state_of_union.run,
        description="useful for when you need to answer questions about the most recent state of the union address. Input should be a fully formed question.",
    ),
    Tool(
        name="Ruff QA System",
        func=ruff.run,
        description="useful for when you need to answer questions about ruff (a python linter). Input should be a fully formed question.",
    ),
]

# Construct the agent. We will use the default agent type here.
# See documentation for a full list of options.
agent = initialize_agent(
    tools, llm, agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True
)

res = agent.run(
    "What did biden say about ketanji brown jackson in the state of the union address?"
)
print(res)
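The embed, store, retrieve flow used above can be illustrated without any external service. The sketch below is a toy stand-in, not how OpenAIEmbeddings or Chroma work internally: the "embedding" is just a bag-of-words count vector, and `embed`, `cosine`, and `ToyVectorStore` are hypothetical names.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ToyVectorStore:
    def __init__(self, texts: List[str]) -> None:
        # Store each text next to its vector, as a vector database would.
        self.entries = [(t, embed(t)) for t in texts]

    def search(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [t for t, _ in ranked[:k]]


store = ToyVectorStore([
    "Ruff is a fast Python linter written in Rust.",
    "The president nominated a judge to the Supreme Court.",
])
print(store.search("which linter is written in rust"))
```

In the article's setup, the retrieved chunks are then passed to the LLM by the RetrievalQA chain; this sketch stops at retrieval.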

1.6 Using a browser toolkit

If a library already provides a set of tools, they can be passed to the agent directly as tools.

from langchain.agents import AgentType
from langchain.chat_models import ChatOpenAI
from langchain.agents import initialize_agent
from langchain.agents.agent_toolkits import PlayWrightBrowserToolkit
from langchain.tools.playwright.utils import (
    create_async_playwright_browser,
    create_sync_playwright_browser,  # A synchronous browser is available, though it isn't compatible with jupyter.
)

async_browser = create_async_playwright_browser()
browser_toolkit = PlayWrightBrowserToolkit.from_browser(async_browser=async_browser)
tools = browser_toolkit.get_tools()

llm = ChatOpenAI(temperature=0)  # Also works well with Anthropic models
agent_chain = initialize_agent(
    tools,
    llm,
    agent=AgentType.STRUCTURED_CHAT_ZERO_SHOT_REACT_DESCRIPTION,
    verbose=True,
)

# arun returns a coroutine, so it must be awaited (e.g. in a Jupyter cell or
# inside an async function); without await, print would show a coroutine object.
response1 = await agent_chain.arun(input="Hi I'm Erica.")
print(response1)
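Because `arun` is asynchronous, calling it without awaiting yields a coroutine object rather than a result. The standalone sketch below shows the difference with a hypothetical `arun` function that stands in for the agent call; in a plain script, `asyncio.run` plays the role that a top-level `await` plays in a notebook.

```python
import asyncio


async def arun(prompt: str) -> str:
    """Hypothetical async call standing in for an agent's arun method."""
    await asyncio.sleep(0)
    return f"echo: {prompt}"


# Calling without await only creates a coroutine object; nothing has run yet.
pending = arun("Hi I'm Erica.")
print(asyncio.iscoroutine(pending))  # True
pending.close()  # discard it cleanly to avoid a "never awaited" warning

# Driving the coroutine to completion produces the actual result.
result = asyncio.run(arun("Hi I'm Erica."))
print(result)  # echo: Hi I'm Erica.
```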

1.7 A SQLite database plus a chain, wrapped together as a tool

from langchain import LLMMathChain, OpenAI, SerpAPIWrapper, SQLDatabase, SQLDatabaseChain
from langchain.agents import initialize_agent, Tool
from langchain.agents import AgentType

llm = OpenAI(temperature=0)
search = SerpAPIWrapper()
llm_math_chain = LLMMathChain.from_llm(llm=llm, verbose=True)
db = SQLDatabase.from_uri("sqlite:///../../../../../notebooks/Chinook.db")
db_chain = SQLDatabaseChain.from_llm(llm, db, verbose=True)

tools = [
    Tool(
        name="Search",
        func=search.run,
        description="useful for when you need to answer questions about current events. You should ask targeted questions",
    ),
    Tool(
        name="Calculator",
        func=llm_math_chain.run,
        description="useful for when you need to answer questions about math",
    ),
    Tool(
        name="FooBar DB",
        func=db_chain.run,
        description="useful for when you need to answer questions about FooBar. Input should be in the form of a question containing full context",
    ),
]

mrkl = initialize_agent(tools, llm, agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True)

1.8 Calling an API with the StructuredTool dataclass

To dynamically generate a structured tool from a given function, the fastest way to get started is with StructuredTool.from_function():

from typing import Optional

import requests
from langchain.tools import StructuredTool

def post_message(url: str, body: dict, parameters: Optional[dict] = None) -> str:
    """Sends a POST request to the given url with the given body and parameters."""
    result = requests.post(url, json=body, params=parameters)
    return f"Status: {result.status_code} - {result.text}"

tool = StructuredTool.from_function(post_message)
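The idea behind generating a tool from a function is that the name, description, and arguments can be read from the function itself. The sketch below illustrates that idea with the standard `inspect` module only; it is not LangChain's implementation, `describe` is a hypothetical helper, and `post_message` is reduced to a signature-only stub so nothing is sent over the network.

```python
import inspect
from typing import Any, Dict, Optional


def post_message(url: str, body: dict, parameters: Optional[dict] = None) -> str:
    """Sends a POST request to the given url with the given body and parameters."""
    raise NotImplementedError("signature-only stub; no request is sent")


def describe(func) -> Dict[str, Any]:
    """Derive a simple tool description from a function's signature and docstring."""
    params = inspect.signature(func).parameters
    empty = inspect.Parameter.empty
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func),
        "required": [n for n, p in params.items() if p.default is empty],
        "optional": [n for n, p in params.items() if p.default is not empty],
    }


spec = describe(post_message)
print(spec["name"], spec["required"], spec["optional"])
# post_message ['url', 'body'] ['parameters']
```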

1.9 Defining a tool with a decorator

from typing import Optional

import requests
from langchain.tools import tool

@tool
def post_message(url: str, body: dict, parameters: Optional[dict] = None) -> str:
    """Sends a POST request to the given url with the given body and parameters."""
    result = requests.post(url, json=body, params=parameters)
    return f"Status: {result.status_code} - {result.text}"

https://api.python.langchain.com/en/latest/tools/langchain.tools.base.tool.html

1.10 Modifying a tool's attributes

tools = load_tools(["serpapi", "llm-math"], llm=llm)

tools[0].name = "Google Search"

1.11 Defining the priorities among Tools

Define priorities among all of the wrapped tools.

When you have made a custom tool, you may want the agent to use it more than the normal tools.

For example, suppose you made a custom tool that gets information on music from your database. When a user wants information on songs, you want the agent to use the custom tool rather than the normal Search tool, but the agent might still prioritize the normal Search tool.

This can be accomplished by adding a statement to the description such as: "Use this more than the normal search if the question is about Music, like 'who is the singer of yesterday?' or 'what is the most popular song in 2022?'".

https://python.langchain.com/docs/modules/agents/tools/custom_tools
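The hint works because tool descriptions end up in the prompt the LLM reads when it picks a tool. The sketch below renders a tool list as `name: description` lines to show where the hint lands; the rendering format is an assumption about a ReAct-style prompt, and `render_tools` and the "Music Search" tool are illustrative names rather than LangChain API.

```python
from typing import List, Tuple


def render_tools(tools: List[Tuple[str, str]]) -> str:
    """Render (name, description) pairs as the tool section of a prompt."""
    return "\n".join(f"{name}: {description}" for name, description in tools)


PRIORITY_HINT = (
    "Use this more than the normal search if the question is about Music, "
    "like 'who is the singer of yesterday?' or 'what is the most popular song in 2022?'"
)

tools = [
    ("Search", "useful for when you need to answer questions about current events"),
    ("Music Search", "A Music search engine. " + PRIORITY_HINT),
]

prompt_section = render_tools(tools)
print(prompt_section)
```

Since the priority lives in free text, it is a nudge rather than a guarantee; the agent may still choose the other tool.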
