Python Web Scraping Tutorial 2

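Several readers have asked me (Xiaoxuxu) in private messages to cover more practical applications of Python web scraping, so this installment is devoted entirely to hands-on practice.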

Python in Practice

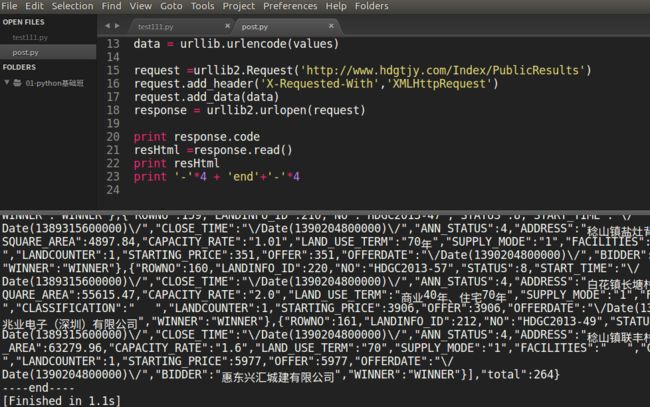

Case 1: Huizhou Online Listing Transaction System

We use the Huizhou online listing transaction system (惠州市网上挂牌交易系统) as the example:

http://www.hdgtjy.com/index/Index4/

Goal: collect all of the listed transaction records.

Source code

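```python
# Python 3 port: urllib2 was split into urllib.request and urllib.error
import json
import urllib.parse
import urllib.request

URL = 'http://www.hdgtjy.com/Index/PublicResults'
# The listing is loaded by AJAX, so send the same headers as the browser's XHR
send_headers = {
    'X-Requested-With': 'XMLHttpRequest',
    'Content-Type': 'application/x-www-form-urlencoded',
}

with open('hdgtjy.json', 'w', encoding='utf-8') as fp:
    for page in range(1, 28):              # pages 1 to 27
        data = None
        for i in range(5):                 # retry each page up to 5 times
            try:
                body = urllib.parse.urlencode({'page': page, 'size': 10}).encode('ascii')
                request = urllib.request.Request(URL, data=body, headers=send_headers)
                with urllib.request.urlopen(request, timeout=10) as response:
                    data = response.read().decode('utf-8')
                obj = json.loads(data)
                print(obj['data'][0]['ADDRESS'])
                break                      # success: stop retrying this page
            except Exception as e:
                print(e)
                data = None
        if data is not None:
            fp.write(data + '\n')          # one JSON response per line

print('end')
```

Run it and take a look at the output.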
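The inner for i in range(5) loop is a retry. The sketch below factors that idea into a reusable helper and adds a growing pause between attempts (exponential backoff), which is gentler on the server than retrying immediately. It is a stdlib-only illustration, not part of the original script: fetch_with_retry, form_body, and the flaky stand-in fetch are hypothetical names, and the stand-in replaces the real network call so the code runs offline.

```python
import time
import urllib.parse


def form_body(page, size=10):
    """Build the form-encoded POST body, e.g. 'page=3&size=10'."""
    return urllib.parse.urlencode({'page': page, 'size': size})


def fetch_with_retry(fetch, attempts=5, delay=0.5):
    """Call fetch() until it succeeds, at most `attempts` times.

    The pause doubles after each failure (simple exponential backoff);
    if every attempt fails, the last exception is re-raised.
    """
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch()
        except Exception as e:  # broad on purpose: network errors vary
            last_error = e
            print('attempt %d failed: %s' % (attempt + 1, e))
            if attempt < attempts - 1:
                time.sleep(delay * (2 ** attempt))
    raise last_error


# Hypothetical stand-in for the network call: fails twice, then succeeds
calls = {'n': 0}


def flaky_fetch():
    calls['n'] += 1
    if calls['n'] < 3:
        raise IOError('temporary failure')
    return '{"data": [{"ADDRESS": "example"}]}'


print(form_body(3))  # page=3&size=10
result = fetch_with_retry(flaky_fetch, delay=0.01)
print(result)
```

In the scraper itself, fetch would be something like lambda: urllib.request.urlopen(request, timeout=10).read().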

Case 2: Basic Requests usage, and the drug administration site

Requests

Requests bills itself as "the only Non-GMO HTTP library for Python, safe for human consumption."

urllib2

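urllib2 is a module in Python 2's standard library (in Python 3 its functionality lives in urllib.request and urllib.error).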

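A common suggestion is to set a custom 'Connection': 'keep-alive' header, which asks the server to keep the connection open after the exchange (a so-called persistent connection). Be aware, though, that urllib2 overrides this header with Connection: close and opens a fresh connection for every request, so the header alone does not give you keep-alive. The dependable fix is Requests, which pools and reuses connections.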
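To make "persistent connection" concrete, here is a small stdlib-only sketch. It uses http.client rather than urllib2 or Requests, because http.client keeps one socket open across requests. A throwaway local HTTP/1.1 server (PortRecorder and demo_keep_alive are hypothetical names) records the client port of each request; if every request arrives from the same port, they all shared one TCP connection.

```python
import http.client
import http.server
import threading


class PortRecorder(http.server.BaseHTTPRequestHandler):
    """Answers every GET with 'ok' and records the client's source port."""
    protocol_version = 'HTTP/1.1'  # HTTP/1.1: connections stay open by default
    seen_ports = []

    def do_GET(self):
        PortRecorder.seen_ports.append(self.client_address[1])
        body = b'ok'
        self.send_response(200)
        # Content-Length tells the client where the body ends, so the
        # server does not have to close the connection to signal it
        self.send_header('Content-Length', str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # keep the console quiet
        pass


def demo_keep_alive(n_requests=3):
    PortRecorder.seen_ports = []
    server = http.server.ThreadingHTTPServer(('127.0.0.1', 0), PortRecorder)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection('127.0.0.1', server.server_address[1], timeout=5)
        for _ in range(n_requests):
            conn.request('GET', '/', headers={'Connection': 'keep-alive'})
            conn.getresponse().read()  # drain the body before reusing the connection
        conn.close()
    finally:
        server.shutdown()
        server.server_close()
    return PortRecorder.seen_ports


ports = demo_keep_alive(3)
print(ports)  # three requests from a single client port: one reused connection
```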

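Installing Requests

Pros:

Requests can do everything urllib2 does, and adds HTTP keep-alive with connection pooling, cookie-based session persistence, file uploads, automatic detection of the response encoding, and internationalized URLs with automatic encoding of POST data.

Cons:

Requests is not part of the Python standard library and must be installed separately, with pip install or easy_install.

Its calls are blocking, so used directly it cannot make asynchronous requests, and it can be slow (partly because automatically detecting the response encoding takes time).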
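Because each Requests call blocks until the response arrives, one common way to overlap many downloads without an async library is a thread pool. This is a sketch under assumptions: slow_fetch is a hypothetical stand-in that sleeps instead of calling requests.get, so the example runs offline and the speed-up is easy to see.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def slow_fetch(page):
    """Hypothetical stand-in for a blocking call such as requests.get()."""
    time.sleep(0.2)
    return 'page %d' % page


pages = range(1, 6)

start = time.perf_counter()
with ThreadPoolExecutor(max_workers=5) as pool:
    # map() runs the calls concurrently but returns results in input order
    results = list(pool.map(slow_fetch, pages))
elapsed = time.perf_counter() - start

print(results)
print('5 blocking calls took %.2f s (about 1.0 s if run one after another)' % elapsed)
```

Threads suit this case because the time is spent waiting on I/O; keep max_workers modest so the target server is not flooded.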

```
pip install requests
```

Documentation:

Requests: HTTP for Humans (Requests 2.18.1 documentation)

http://www.python-requests.org/en/master/#

Usage:

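```python
import requests

url = 'http://example.com/'  # placeholder URL
requests.get(url, params={'key1': 'value1'}, headers={'User-Agent': 'Mozilla/5.0'})
requests.post(url, data={'key1': 'value1'})  # sent as a form (x-www-form-urlencoded)
requests.post(url, json={'key1': 'value1'})  # sent as JSON; Content-Type is set for you
```

For a GET, query parameters go in params (not data), and headers must be a dict, not a set. For a POST, pass data for a form or json for a JSON body; putting a JSON Content-Type header on form-encoded data would mislabel the body.

We'll use the drug administration (药品监督管理局) site as the example: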

http://app1.sfda.gov.cn/

Goal: collect every product record under the category 国产药品商品名 (domestic drug trade names, 6994 entries).

Product list page: http://app1.sfda.gov.cn/datasearch/face3/base.jsp?tableId=32&tableName=TABLE32&title=%B9%FA%B2%FA%D2%A9%C6%B7%C9%CC%C6%B7%C3%FB&bcId=124356639813072873644420336632

Product detail page: http://app1.sfda.gov.cn/datasearch/face3/content.jsp?tableId=32&tableName=TABLE32&tableView=%B9%FA%B2%FA%D2%A9%C6%B7%C9%CC%C6%B7%C3%FB&Id=211315
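The odd-looking %B9%FA%B2%FA... in both URLs is the category name 国产药品商品名 percent-encoded from GB2312 bytes (a legacy Chinese charset), not from UTF-8, which is Python's default. That mismatch is the pitfall flagged in the source code. A quick stdlib check, assuming GB2312 is the charset in play (the URLs above are consistent with it):

```python
from urllib.parse import quote, unquote

name = '国产药品商品名'

# Percent-encode the GB2312 bytes, as in the title/tableView parameters
gb = quote(name, encoding='gb2312')
# Python's default is UTF-8, which yields a different, longer string
utf8 = quote(name)

print(gb)    # %B9%FA%B2%FA%D2%A9%C6%B7%C9%CC%C6%B7%C3%FB
print(utf8)
# Decoding only works with the same charset that was used to encode
print(unquote(gb, encoding='gb2312'))  # 国产药品商品名
```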

Source code

```python
# -*- coding: utf-8 -*-
# Python 3 port of the original Python 2 script
import re
import urllib.parse

import requests

curstart = 2  # which page of the result list to request

# Form fields captured from the browser's POST to search.jsp.
# The original repeated 'State': '1' after every field, but a dict
# keeps only one value per key, so it appears once here.
values = {
    'tableId': '32',
    'State': '1',
    'bcId': '124356639813072873644420336632',
    'tableName': 'TABLE32',
    'viewtitleName': 'COLUMN302',
    'viewsubTitleName': 'COLUMN299,COLUMN303',
    'curstart': str(curstart),
    'tableView': urllib.parse.quote("国产药品商品名"),
}

post_headers = {
    'Content-Type': 'application/x-www-form-urlencoded',
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36',
}

url = "http://app1.sfda.gov.cn/datasearch/face3/search.jsp"
response = requests.post(url, data=values, headers=post_headers)
resHtml = response.text
print(response.status_code)
# print(resHtml)

# Detail-page links are embedded in JavaScript calls: callbackC,'<link>',null
Urls = re.findall(r"callbackC,'(.*?)',null", resHtml)

for url in Urls:
    # Pitfall: each link contains Chinese text (the tableView parameter).
    # Python 2 needed url.encode('gb2312') here; a Python 3 str prints as-is.
    print(url)
```

Run it and take a look at the output.
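The regular expression pulls the detail links out of JavaScript calls in the response. Since the live site may no longer answer the same way, here is an offline check against a hypothetical fragment: the function name f and the surrounding HTML are made up, and only the callbackC,'...',null shape comes from the code. It also applies the tableView rewrite that the complete project uses before requesting each link.

```python
import re
import urllib.parse

# Hypothetical fragment: two result links passed to a made-up JavaScript
# function f(...). Only the callbackC,'<link>',null shape matches the real page.
sample = (
    "<a href=\"javascript:f(callbackC,'content.jsp?tableId=32&tableView=国产药品商品名&Id=211315',null)\">A</a>"
    "<a href=\"javascript:f(callbackC,'content.jsp?tableId=32&tableView=国产药品商品名&Id=211316',null)\">B</a>"
)

# Non-greedy .*? stops at the first "',null"; a greedy .* would run on to
# the last one and swallow both links into a single match
links = re.findall(r"callbackC,'(.*?)',null", sample)

# Replace the raw Chinese tableView value with its percent-encoded form
table_view = urllib.parse.quote('国产药品商品名')
fixed = [re.sub('tableView=.*?&', 'tableView=' + table_view + '&', u) for u in links]

for u in fixed:
    print(u)
```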


Summary

- Set the User-Agent to impersonate Chrome, so the web server takes the scraper for a browser

- urlencode turns a dict or a sequence of tuples into a URL query string
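Both points in the summary can be checked without touching the network. This stdlib-only sketch (example.com is a placeholder host and nothing is sent) shows that a dict keeps a single value per key, which is why writing 'State': '1' several times in one dict literal has no effect, whereas a list of (key, value) tuples preserves repeats and order. It then builds a POST request that carries a Chrome User-Agent.

```python
import urllib.parse
import urllib.request

CHROME_UA = ('Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 '
             '(KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36')

# A dict keeps one value per key...
from_dict = urllib.parse.urlencode({'tableId': '32', 'State': '1', 'curstart': '2'})
# ...while a list of tuples can repeat a key if a form really sends it twice
from_pairs = urllib.parse.urlencode([('State', '1'), ('tableId', '32'), ('State', '1')])

print(from_dict)   # tableId=32&State=1&curstart=2
print(from_pairs)  # State=1&tableId=32&State=1

# Build (but do not send) a request that presents itself as Chrome
req = urllib.request.Request(
    'http://example.com/search',
    data=from_dict.encode('ascii'),
    headers={'User-Agent': CHROME_UA},
)
# urllib normalizes header names with str.capitalize(), hence 'User-agent'
print(req.get_header('User-agent') == CHROME_UA)  # True
print(req.get_method())                           # POST, because data is set
```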

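Since we've made it this far, and the drug administration happens to be offering a vulnerability bounty, let's keep going (honestly, by this point I felt like I had burned off all my hair):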

- Scrape the product detail page

```python
# -*- coding: utf-8 -*-
# Python 3 port
import json

import requests
from lxml import etree

url = 'http://app1.sfda.gov.cn/datasearch/face3/content.jsp?tableId=32&tableName=TABLE32&tableView=%B9%FA%B2%FA%D2%A9%C6%B7%C9%CC%C6%B7%C3%FB&Id=211315'

get_headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36',
    'Connection': 'keep-alive',
}

item = {}
response = requests.get(url, headers=get_headers)
# Without a charset in Content-Type, requests assumes ISO-8859-1 for text
# responses, which garbles Chinese; fall back to the detected encoding.
if response.encoding == 'ISO-8859-1':
    response.encoding = response.apparent_encoding
print(response.encoding)

html = etree.HTML(response.text)
for site in html.xpath('//tr')[1:]:       # skip the header row
    tds = site.xpath('./td')
    if len(tds) != 2:                      # data rows are: name | value
        continue
    name = (tds[0].text or '').strip()
    if not name:
        continue
    # itertext() joins all text in the cell, dropping nested tags
    item[name] = ''.join(tds[1].itertext()).strip()

with open('sfda.json', 'w', encoding='utf-8') as fp:
    json.dump(item, fp, ensure_ascii=False)
```

- Scrape the entire project
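Before wiring everything together, the row filter used on the detail page can be exercised offline. Real pages are rarely well-formed XML, which is why the scraper uses lxml's etree.HTML; this sketch instead swaps in the standard library's xml.etree.ElementTree (it supports only a subset of XPath) on a hypothetical, well-formed table, and parse_rows is a hypothetical name. The logic mirrors the scraper: skip the header row, keep rows with exactly two cells and a non-empty name, and join all text in the value cell.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed stand-in for a detail-page table
SAMPLE = """
<table>
  <tr><td colspan="2">Header</td></tr>
  <tr><td>Name</td><td><span>Example</span> tablets</td></tr>
  <tr><td>Strength</td><td> 10mg </td></tr>
  <tr><td></td><td>no label</td></tr>
  <tr><td>only one cell</td></tr>
</table>
"""


def parse_rows(root):
    """Collect name -> value from rows that have exactly two <td> cells."""
    item = {}
    for tr in list(root.iter('tr'))[1:]:  # skip the header row
        tds = tr.findall('td')
        if len(tds) != 2:
            continue
        name = (tds[0].text or '').strip()
        if not name:
            continue
        # itertext() also yields text from nested tags, so markup is dropped
        item[name] = ''.join(tds[1].itertext()).strip()
    return item


result = parse_rows(ET.fromstring(SAMPLE.strip()))
print(result)
```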

Here is the complete project:

```python
# -*- coding: utf-8 -*-
# Python 3 port: urllib.quote -> urllib.parse.quote, print is a function
import json
import re
import urllib.parse

import requests
from lxml import etree

BASE = 'http://app1.sfda.gov.cn/datasearch/face3/'
USER_AGENT = ('Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 '
              '(KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36')
TABLE_VIEW = urllib.parse.quote("国产药品商品名")


def ParseDetail(url):
    """Scrape one product detail page and append it to sfda.json as one JSON line."""
    get_headers = {'User-Agent': USER_AGENT, 'Connection': 'keep-alive'}
    item = {}
    response = requests.get(url, headers=get_headers)
    # No charset in Content-Type makes requests assume ISO-8859-1; use the detected one
    if response.encoding == 'ISO-8859-1':
        response.encoding = response.apparent_encoding
    print(response.encoding)
    html = etree.HTML(response.text)
    for site in html.xpath('//tr')[1:]:       # skip the header row
        tds = site.xpath('./td')
        if len(tds) != 2:                      # data rows are: name | value
            continue
        name = (tds[0].text or '').strip()
        if not name:
            continue
        item[name] = ''.join(tds[1].itertext()).strip()  # cell text, tags dropped
    # Append mode: one JSON object per line (JSON Lines)
    with open('sfda.json', 'a', encoding='utf-8') as fp:
        fp.write(json.dumps(item, ensure_ascii=False) + '\n')


def main(curstart=2):
    # Form fields captured from the browser's POST to search.jsp
    # ('State': '1' was repeated in the original dict; a dict keeps one copy)
    values = {
        'tableId': '32',
        'State': '1',
        'bcId': '124356639813072873644420336632',
        'tableName': 'TABLE32',
        'viewtitleName': 'COLUMN302',
        'viewsubTitleName': 'COLUMN299,COLUMN303',
        'curstart': str(curstart),             # which result page to fetch
        'tableView': TABLE_VIEW,
    }
    post_headers = {
        'Content-Type': 'application/x-www-form-urlencoded',
        'User-Agent': USER_AGENT,
    }
    response = requests.post(BASE + 'search.jsp', data=values, headers=post_headers)
    print(response.status_code)
    # Detail links sit inside JavaScript calls: callbackC,'<link>',null
    Urls = re.findall(r"callbackC,'(.*?)',null", response.text)
    for url in Urls:
        # Pitfall: the links carry the raw Chinese tableView value; swap in the
        # percent-encoded form before requesting (Python 2 also needed .encode('gb2312'))
        url = re.sub('tableView=.*?&', 'tableView=' + TABLE_VIEW + '&', url)
        ParseDetail(BASE + url)


if __name__ == '__main__':
    main()
```

Case 3: Scraping jokes from Qiushibaike (糗事百科)

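First, determine the URL and fetch the page source. The page URL is http://www.qiushibaike.com/8hr/page/4, where the trailing number is the page number; passing different values fetches the jokes on a given page.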

Start with the most basic fetch, printing the page source, to see whether it succeeds.

Then try replaying the request by hand in the Composer (Raw tab) of an HTTP debugging proxy such as Fiddler:

```
GET http://www.qiushibaike.com/8hr/page/2/ HTTP/1.1
Host: www.qiushibaike.com
User-Agent: Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36
Accept-Language: zh-CN,zh;q=0.8
```

With the User-Agent and Accept-Language lines removed, the request returns an error.
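Why would deleting two headers produce an error? The sketch below imitates that behaviour with a hypothetical local server. Qiushibaike's real checks are unknown, so the rule used here (a User-Agent starting with Mozilla/ plus an Accept-Language header) is an assumption, and PickyHandler and status_for are hypothetical names. The same URL is requested once with urllib's default headers and once with browser-like ones.

```python
import http.server
import threading
import urllib.error
import urllib.request

BROWSER_HEADERS = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 '
                  '(KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36',
    'Accept-Language': 'zh-CN,zh;q=0.8',
}


class PickyHandler(http.server.BaseHTTPRequestHandler):
    """Hypothetical server that only answers requests that look like a browser."""

    def do_GET(self):
        ua = self.headers.get('User-Agent', '')
        ok = ua.startswith('Mozilla/') and 'Accept-Language' in self.headers
        body = b'jokes' if ok else b'blocked'
        self.send_response(200 if ok else 403)
        self.send_header('Content-Length', str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # keep the console quiet
        pass


# Ignore any system proxy so requests reach the local test server directly
opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def status_for(url, headers):
    req = urllib.request.Request(url, headers=headers)
    try:
        with opener.open(req, timeout=5) as resp:
            return resp.status
    except urllib.error.HTTPError as e:  # 4xx/5xx responses are raised
        return e.code


server = http.server.ThreadingHTTPServer(('127.0.0.1', 0), PickyHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
url = 'http://127.0.0.1:%d/8hr/page/2/' % server.server_address[1]
try:
    without = status_for(url, {})  # urllib sends 'Python-urllib/3.x' by default
    with_headers = status_for(url, BROWSER_HEADERS)
finally:
    server.shutdown()
    server.server_close()

print(without, with_headers)  # 403 200
```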

So the server is most likely validating the headers; let's add them to our request and try again:

```python
# -*- coding: utf-8 -*-
# Python 3 port
import requests
from lxml import etree

page = 2
url = 'http://www.qiushibaike.com/8hr/page/' + str(page) + '/'
# The request fails without these two browser headers
headers = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36',
    'Accept-Language': 'zh-CN,zh;q=0.8',
}

try:
    response = requests.get(url, headers=headers)
    html = etree.HTML(response.text)
    # Every joke is wrapped in <div id="qiushi_tag_...">
    result = html.xpath('//div[contains(@id,"qiushi_tag")]')
    for site in result:
        # print(etree.tostring(site, encoding='unicode'))
        imgUrl = site.xpath('./div/a/img/@src')[0]       # avatar
        username = site.xpath('./div/a/@title')[0]
        # username = site.xpath('.//h2')[0].text
        # The text may sit in nested tags, so join all of it instead of using .text
        content = ''.join(site.xpath('.//div[@class="content"]')[0].itertext()).strip()
        vote = site.xpath('.//i')[0].text                  # first <i>: votes
        comments = site.xpath('.//i')[1].text              # second <i>: comments
        print(imgUrl, username, content, vote, comments)
except Exception as e:
    print(e)
```

That's it; give it a try. For each joke on the page it prints the avatar URL, the poster's username, the joke text, the vote count, and the comment count. Pretty satisfying, right?
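In the code above a single try wraps the whole loop, so one joke that lacks an avatar (an IndexError on [0]) ends the run for the entire page. Here is a sketch of per-item handling with a hypothetical first() helper that returns a default instead of indexing blindly. To run offline it uses the standard library's ElementTree (a subset of XPath, with no contains()) on hypothetical, simplified markup; the real page structure may differ.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified markup for two jokes; the second has no avatar
SAMPLE = """<div>
  <div id="qiushi_tag_1">
    <div><a title="alice"><img src="//pic.example/a.jpg"/></a></div>
    <div class="content"><span>First joke</span></div>
    <i>120</i><i>8</i>
  </div>
  <div id="qiushi_tag_2">
    <div><a title="bob"></a></div>
    <div class="content">Second joke</div>
    <i>45</i><i>3</i>
  </div>
</div>"""


def first(values, default=None):
    """Return the first match, or `default` when the query found nothing."""
    return values[0] if values else default


def parse_jokes(root):
    items = []
    for site in root.iter('div'):
        if not site.get('id', '').startswith('qiushi_tag'):
            continue
        try:
            img = first(site.findall('./div/a/img'))
            content_div = first(site.findall('.//div[@class="content"]'))
            counts = [i.text for i in site.iter('i')]
            items.append({
                'imgUrl': img.get('src') if img is not None else None,
                'username': first([a.get('title') for a in site.findall('./div/a')]),
                'content': ''.join(content_div.itertext()).strip() if content_div is not None else '',
                'vote': first(counts),
                'comments': counts[1] if len(counts) > 1 else None,
            })
        except Exception as e:  # one malformed joke should not stop the rest
            print('skipped', site.get('id'), e)
    return items


jokes = parse_jokes(ET.fromstring(SAMPLE))
for joke in jokes:
    print(joke)
```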

Please, please give me (Xiaoxuxu) a like! Comments from all of you are very welcome; they are my biggest source of support.

Have a great weekend, everyone!