Pseudo-Distributed Series - Part 1 - Setting Up a hadoop-3.2.0 Environment

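Contents

- Hadoop's three run modes

  - Standalone mode

  - Pseudo-distributed mode

  - Fully distributed cluster mode

- Environment preparation

  - System environment

  - Passwordless SSH

  - Disabling the firewall

  - JDK installation and configuration

  - Related environment configuration

  - Downloading the release package

- Hadoop configuration

  - Extracting the archive

  - Hadoop configuration files

  - Linux environment configuration

- Starting the services

  - Formatting the NameNode

  - Starting

  - Web pages

- Basic usage

  - hdfs

  - yarn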

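Hadoop's three run modes

Standalone mode

- Used for development and debugging

- No configuration files are modified

- Uses the local file system

- There are no NameNode, DataNode, ResourceManager, or NodeManager daemons; a single process runs all the logic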

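Pseudo-distributed mode

- The NameNode, DataNode, ResourceManager, and NodeManager daemons all exist, and they all run on the same server

- The daemons talk to each other over RPC, the same as in fully distributed mode

- Storage is HDFS here too, with a replication factor of 1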

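Fully distributed cluster mode

- The NameNode, DataNode, ResourceManager, and NodeManager daemons all exist, and they run on different servers

- The daemons talk to each other over RPC

- HDFS is fully distributed, and the default replication factor is 3

- HDFS can be configured for high availability (hot standby), NameNode federation, and so on

- YARN can also be configured for high availability and for YARN federation

- Computation on YARN is fully distributed, spread across the machines in the cluster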

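Environment preparation

System environment

Passwordless SSH

Run: ssh-keygen -t rsa and press Enter at every prompt

Append the public key to the local authorized_keys file: cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

Verify: ssh to the local machine's IP should log in without asking for a password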
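The key setup above is not safe to repeat as written, because running the cat command a second time appends the same key again. A minimal sketch of a repeatable version follows. The function name append_key_once is made up for illustration, and the 700/600 permissions are the usual sshd expectations rather than something this article states.

```shell
# Hypothetical helper: append a public key to authorized_keys only once.
append_key_once() {
  pub_file="$1"
  auth_file="$2"
  ssh_dir=$(dirname "$auth_file")

  # sshd usually ignores keys when these permissions are too open.
  mkdir -p "$ssh_dir"
  chmod 700 "$ssh_dir"
  touch "$auth_file"
  chmod 600 "$auth_file"

  key=$(cat "$pub_file")
  # -q quiet, -x whole-line match, -F fixed string (no regex)
  if ! grep -qxF -e "$key" "$auth_file"; then
    printf '%s\n' "$key" >> "$auth_file"
  fi
}
```

Typical use would be append_key_once ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys, followed by the ssh check described above.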

Disabling the firewall

systemctl stop firewalld.service # stop firewalld

systemctl disable firewalld.service # keep firewalld from starting at boot

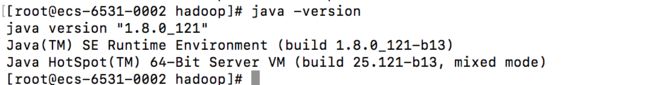

JDK installation and configuration

Installation steps are not covered here; look them up for your system

Set JAVA_HOME in /etc/profile (vim /etc/profile):

export JAVA_HOME=/usr/local/java_1.8.0_121

export JAVA_BIN=$JAVA_HOME/bin

export JAVA_LIB=$JAVA_HOME/lib

export CLASSPATH=.:$JAVA_LIB/tools.jar:$JAVA_LIB/dt.jar

export PATH=$JAVA_BIN:$PATH

Related environment configuration

hosts configuration

vim /etc/hosts

192.168.1.42 ecs-6531-0002.novalocal

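Downloading the release package

Download page: https://hadoop.apache.org/releases.html

Pick whichever version you like; we use 3.2.0, the newest release at the time of writing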

Hadoop configuration

Extracting the archive

- Command: tar zxvf ~/soft/hadoop-3.2.0.tar.gz -C ./
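Later sections point HADOOP_HOME at /opt/bigdata/hadoop/default, while the tarball unpacks into a directory named hadoop-3.2.0. One way to reconcile the two, sketched here as an assumption about the layout rather than the author's confirmed method, is to extract into a parent directory and point a default symlink at the versioned directory. The function name install_hadoop is hypothetical.

```shell
# Hypothetical helper: extract a release tarball and expose it as <dest>/default.
install_hadoop() {
  tarball="$1"      # e.g. ~/soft/hadoop-3.2.0.tar.gz
  dest="$2"         # e.g. /opt/bigdata/hadoop
  version_dir="$3"  # top-level directory inside the tarball, e.g. hadoop-3.2.0

  mkdir -p "$dest"
  # x = extract, z = gunzip, f = archive file; -C switches to the target directory
  tar -xzf "$tarball" -C "$dest"
  # -s symbolic, -f replace an existing link, -n do not follow an existing link
  ln -sfn "$version_dir" "$dest/default"
}
```

With this layout, upgrading later would only mean extracting the new version next to the old one and re-pointing the symlink.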

Hadoop configuration files

- core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- URI scheme and address of the file system Hadoop uses, i.e. the address of the HDFS master (NameNode) -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://ecs-6531-0002.novalocal:9000</value>

</property>

<!-- Directory where Hadoop stores the files it generates at runtime -->

<property>

<name>hadoop.tmp.dir</name>

<value>/data/hadoop/hdfs/meta</value>

</property>

</configuration>

- hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- Number of HDFS replicas -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

</configuration>

- yarn-site.xml

<?xml version="1.0"?>

<configuration>

<!-- Host of the YARN ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>ecs-6531-0002.novalocal</value>

</property>

<!-- How reducers fetch data. Both mapreduce_shuffle and spark_shuffle are configured here; if you do not have Spark, leave out the spark_shuffle configuration for now -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle,spark_shuffle</value>

</property>

<!-- Class that implements the Spark shuffle service -->

<property>

<name>yarn.nodemanager.aux-services.spark_shuffle.class</name>

<value>org.apache.spark.network.yarn.YarnShuffleService</value>

</property>

<!-- Total memory available to the NodeManager. Our server has 64 GB, so roughly 60 GB is configured here -->

<property>

<description>Amount of physical memory, in MB, that can be allocated for containers.</description>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>61440</value>

</property>

<!-- Minimum memory YARN allocates per container -->

<property>

<description>The minimum allocation for every container request at the RM,

in MBs. Memory requests lower than this won't take effect,

and the specified value will get allocated at minimum.</description>

<name>yarn.scheduler.minimum-allocation-mb</name>

<value>512</value>

</property>

<!-- Maximum memory YARN allocates per container -->

<property>

<description>The maximum allocation for every container request at the RM,

in MBs. Memory requests higher than this won't take effect,

and will get capped to this value.</description>

<name>yarn.scheduler.maximum-allocation-mb</name>

<value>8192</value>

</property>

<!-- Aggregate logs, which makes it easier to enable the history service later -->

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.log-aggregation.retain-seconds</name>

<value>2592000</value>

</property>

<!-- Web address of the history server -->

<property>

<name>yarn.log.server.url</name>

<value>http://ecs-6531-0002.novalocal:8988/jobhistory/logs</value>

</property>

<!-- HDFS location where the log data is stored -->

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>hdfs://ecs-6531-0002.novalocal:9000/user/root/yarn-logs/</value>

</property>

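<!-- Number of CPU cores. Our machine has 16 cores; do not configure more than the machine has, and a value slightly below the system core count is recommended -->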

<property>

<description>Number of vcores that can be allocated

for containers. This is used by the RM scheduler when allocating

resources for containers. This is not used to limit the number of

CPUs used by YARN containers. If it is set to -1 and

yarn.nodemanager.resource.detect-hardware-capabilities is true, it is

automatically determined from the hardware in case of Windows and Linux.

In other cases, number of vcores is 8 by default.</description>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>16</value>

</property>

<!-- Minimum CPU allocation per container -->

<property>

<description>The minimum allocation for every container request at the RM

in terms of virtual CPU cores. Requests lower than this will be set to the

value of this property. Additionally, a node manager that is configured to

have fewer virtual cores than this value will be shut down by the resource

manager.</description>

<name>yarn.scheduler.minimum-allocation-vcores</name>

<value>1</value>

</property>

<!-- Maximum CPU allocation per container -->

<property>

<description>The maximum allocation for every container request at the RM

in terms of virtual CPU cores. Requests higher than this will throw an

InvalidResourceRequestException.</description>

<name>yarn.scheduler.maximum-allocation-vcores</name>

<value>3</value>

</property>

<!-- classpath configuration -->

<property>

<name>yarn.application.classpath</name>

<value>/opt/bigdata/hadoop/default/etc/hadoop:/opt/bigdata/hadoop/default/share/hadoop/common/lib/*:/opt/bigdata/hadoop/default/share/hadoop/common/*:/opt/bigdata/hadoop/default/share/hadoop/hdfs:/opt/bigdata/hadoop/default/share/hadoop/hdfs/lib/*:/opt/bigdata/hadoop/default/share/hadoop/hdfs/*:/opt/bigdata/hadoop/default/share/hadoop/mapreduce/lib/*:/opt/bigdata/hadoop/default/share/hadoop/mapreduce/*:/opt/bigdata/hadoop/default/share/hadoop/yarn:/opt/bigdata/hadoop/default/share/hadoop/yarn/lib/*:/opt/bigdata/hadoop/default/share/hadoop/yarn/*</value>

</property>

</configuration>
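The numbers in yarn-site.xml are easier to sanity-check with a little arithmetic. The sketch below only redoes the unit conversions and simple divisions for the values shown above. The per-node container counts are rough figures from integer division, not a statement of what the scheduler will actually grant.

```shell
# Values copied from the yarn-site.xml above.
node_mem_mb=$((60 * 1024))   # yarn.nodemanager.resource.memory-mb  -> 61440
min_alloc_mb=512             # yarn.scheduler.minimum-allocation-mb
max_alloc_mb=8192            # yarn.scheduler.maximum-allocation-mb
node_vcores=16               # yarn.nodemanager.resource.cpu-vcores
max_alloc_vcores=3           # yarn.scheduler.maximum-allocation-vcores
retain_seconds=2592000       # yarn.log-aggregation.retain-seconds

echo "NodeManager memory in MB:       $node_mem_mb"
echo "containers at minimum memory:   $((node_mem_mb / min_alloc_mb))"       # 120
echo "containers at maximum memory:   $((node_mem_mb / max_alloc_mb))"       # 7
echo "containers at maximum vcores:   $((node_vcores / max_alloc_vcores))"   # 5
echo "log retention in days:          $((retain_seconds / 86400))"           # 30
```

So the 60 GB comment matches the 61440 value, and the aggregated logs are kept for 30 days.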

- mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- Run MapReduce on YARN -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- History server configuration, so that MapReduce jobs are synced to the history server -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>ecs-6531-0002.novalocal:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>ecs-6531-0002.novalocal:8988</value>

</property>

<!-- Number of jobs the history server keeps cached -->

<property>

<name>mapreduce.jobhistory.joblist.cache.size</name>

<value>5000</value>

</property>

</configuration>

- workers (only the local machine is listed here)

localhost

- hadoop-env.sh: add your JAVA_HOME at the end

export JAVA_HOME=/usr/local/java_1.8.0_121

Linux environment configuration

- /etc/profile settings

# Hadoop settings

export HADOOP_HOME=/opt/bigdata/hadoop/default

#export HADOOP_OPTS="-Djava.library.path=$HADOOP_PREFIX/lib:$HADOOP_PREFIX/lib/native"

export LD_LIBRARY_PATH=$HADOOP_HOME/lib/native

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

#export HADOOP_ROOT_LOGGER=DEBUG,console

export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/sbin:$HADOOP_HOME/bin

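# hadoop-3.1.0 needs the following 5 variables (the daemons are started as root here), otherwise startup reports an error; hadoop-2.x does not seem to need them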

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

- Apply the environment: source /etc/profile
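A missing user variable only shows up as an error once the start scripts run, so it can be handy to check all five right after sourcing /etc/profile. A minimal sketch, assuming the variables are exported as shown above; the function name missing_hadoop_users is made up for illustration.

```shell
# Hypothetical helper: print each required *_USER variable that is not exported.
missing_hadoop_users() {
  for name in HDFS_NAMENODE_USER HDFS_DATANODE_USER HDFS_SECONDARYNAMENODE_USER \
              YARN_RESOURCEMANAGER_USER YARN_NODEMANAGER_USER; do
    # printenv exits non-zero when the variable is absent from the environment
    printenv "$name" > /dev/null || echo "$name"
  done
}
```

Calling missing_hadoop_users with no arguments prints nothing when all five variables are in place.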

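Starting the services

Formatting the NameNode

Command: hdfs namenode -format

If no errors appear along the way and the output ends with the following, the format succeeded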

...

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at ecs-6531-0002

************************************************************/

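If you have already run Hadoop after formatting the NameNode and then want to format it again, stop the services and first delete the VERSION files that the first run created (in this setup they sit under hadoop.tmp.dir, /data/hadoop/hdfs/meta). Otherwise startup fails, typically because the cluster ID recorded by the DataNode no longer matches the newly formatted NameNode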
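To see which VERSION files exist before deleting anything, a read-only listing is a reasonable first step. The sketch assumes the storage directories sit under hadoop.tmp.dir, which is where they land when dfs.namenode.name.dir and dfs.datanode.data.dir are left at their defaults, as in the hdfs-site.xml above. The function name list_version_files is hypothetical.

```shell
# Hypothetical helper: list VERSION files below a Hadoop storage root.
# It only prints paths; deleting them is left as a deliberate manual step.
list_version_files() {
  find "$1" -type f -name VERSION
}
```

For this article's layout the call would be list_version_files /data/hadoop/hdfs/meta, run after the daemons have been stopped.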

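Starting

- start-all.sh starts all services; the startup logs are in the logs directory under the Hadoop installation directory

- Use jps to check the service processes

6662 Jps

9273 DataNode # HDFS worker node

5465 SecondaryNameNode # HDFS checkpoint node (merges the edit log into the fsimage; it is not a hot standby)

9144 NameNode # HDFS master node

9900 NodeManager # YARN worker node

9575 ResourceManager # YARN master node

- Start the job history server

Command: mapred --daemon start historyserver

jps then shows

12710 JobHistoryServer

- Stop the cluster with the stop-all.sh command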
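Reading the jps listing by eye works, but a small filter makes it obvious when one of the five daemons did not come up. This is a sketch only: the function name missing_daemons is made up, and it assumes jps prints a PID followed by the class name, as in the listing above.

```shell
# Hypothetical helper: read jps output on stdin, print expected daemons that are absent.
missing_daemons() {
  jps_output=$(cat)
  for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # Compare the second column exactly, so NameNode does not match SecondaryNameNode.
    printf '%s\n' "$jps_output" |
      awk -v want="$daemon" '$2 == want { found = 1 } END { exit !found }' ||
      echo "$daemon"
  done
}
```

It would be used as jps | missing_daemons; empty output means every expected daemon is running, and any name printed points at the log file to open first.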

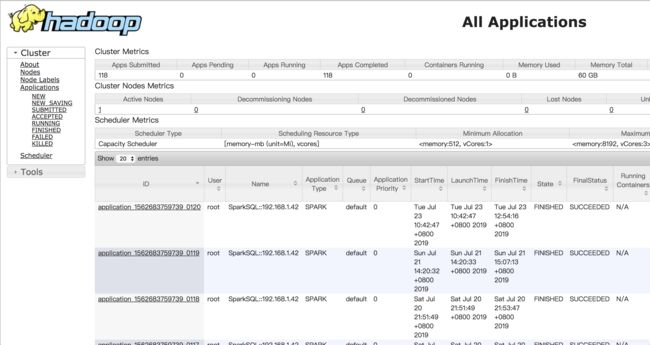

Web pages

- HDFS address: http://xxx:9870/dfshealth.html#tab-overview

- YARN address: http://xxx:8088/cluster


- History server address: http://xxx:8988/jobhistory

Basic usage

hdfs

yarn

You can look these up yourself, or wait for the follow-up articles, where each will be covered separately. Stay tuned.