人工智能学习9(LightGBM)

编译工具:PyCharm

文章目录

-

-

- 编译工具:PyCharm

-

- lightGBM原理

- lightGBM的基础使用

- 案例1:鸢尾花

- 案例2:绝对求生玩家排名预测

-

- 一、数据处理部分

-

- 1.数据获取及分析

- 2.缺失数据处理

- 3.数据规范化

- 4.规范化输出部分数据

- 5.异常数据处理

-

- 5.1删除开挂数据没有移动却kill了的

- 5.2删除驾车杀敌数异常的数据

- 5.3 删除一局中杀敌数超过30的

- 5.4删除爆头率异常数据:认为杀敌人数大于9且爆头率为100%为异常数据

- 5.5 最大杀敌距离大于1km的玩家

- 5.6运动距离异常

- 5.7武器收集异常

- 5.8使用治疗药品数量异常

- 6.类别型数据处理one-hot处理

- 7.数据截取

- 二、模型训练部分

-

- 1.使用随机森林进行模型训练

- 2.使用lightgbm进行模型训练

lightGBM原理

lightGBM主要基于以下几个方面进行优化,提升整体特性

1.基于Histogram(直方图)的决策树算法

2.Lightgbm的Histogram(直方图)做差加速

3.带深度限制的Leaf-wise的叶子生长策略

4.直接支持类别特征

5.直接支持高效并行

直方图算法降低内存消耗,不仅不需要额外存储与排序的结果,而且可以只保存特征离散化后的值。

一个叶子的直方图可以由他的父亲节点的直方图与它兄弟节点的直方图做差得到,效率会高很多。

lightGBM的基础使用

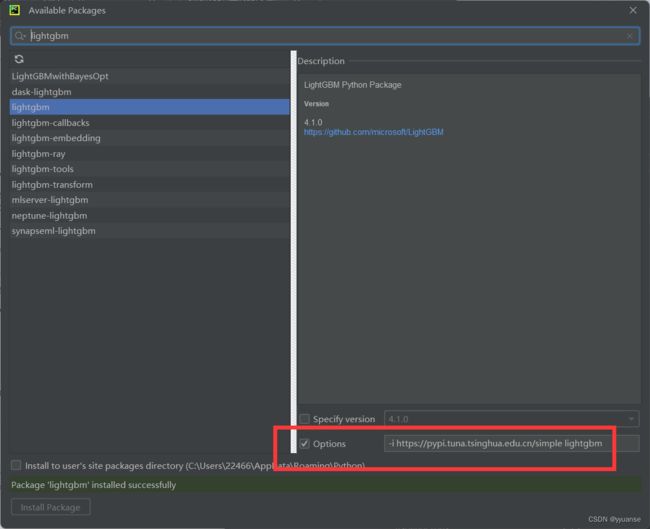

先安装包,直接安装可能会出现问题,建议改成清华大学提供的网站进行安装,安装速度快不会出错,命令行模式安装的话:pip install -i https://pypi.tuna.tsinghua.edu.cn/simple 包名称

pycharm安装的话-i https://pypi.tuna.tsinghua.edu.cn/simple 包名称

案例1:鸢尾花

# 使用lightgbm模型训练

import pandas as pd

# sklearn提供的数据集

from sklearn.datasets import load_iris

# 数据分割

from sklearn.model_selection import train_test_split

#

from sklearn.model_selection import GridSearchCV

#

from sklearn.metrics import mean_squared_error

# lightGBM

import lightgbm as lgb

# 获取数据

iris = load_iris()

data = iris.data

target = iris.target

# 数据基本处理

# 数据分割

x_train,x_test,y_train,y_test = train_test_split(data,target,test_size=0.2)

# 模型训练

gbm = lgb.LGBMRegressor(objective="regression",learning_rate=0.05,n_estimators=200)

gbm.fit(x_train, y_train, eval_set=[(x_test,y_test)], eval_metric="l1",callbacks=[lgb.early_stopping(stopping_rounds=3)]) #看到训练过程

print("----------基础模型训练----------")

print(gbm.score(x_test, y_test))

# 网格搜索进行调优训练

estimators = lgb.LGBMRegressor(num_leaves=31)

param_grid = {

"learning_rate":[0.01,0.1,1],

"n_estmators":[20,40,60,80]

}

gbm = GridSearchCV(estimators,param_grid,cv=5)

gbm.fit(x_train,y_train,callbacks=[lgb.log_evaluation(show_stdv=False)])

print("----------最优参数----------")

print(gbm.best_params_)

# 获取到最优参数后,将参数值带入到里面

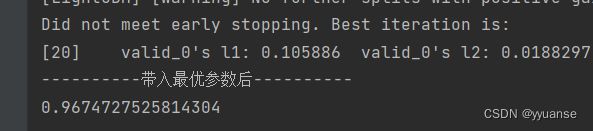

gbm = lgb.LGBMRegressor(objective="regression",learning_rate=0.1,n_estimators=20)

gbm.fit(x_train, y_train, eval_set=[(x_test,y_test)], eval_metric="l1",callbacks=[lgb.early_stopping(stopping_rounds=3)]) #看到训练过程

print("----------带入最优参数后----------")

print(gbm.score(x_test, y_test))

这里看到基础模型训练的结果比带入最优参数后的结果更好,原因在于基础训练里面,我们设置了200步,而最优参数才只需要20步。

案例2:绝对求生玩家排名预测

数据集:https://download.csdn.net/download/m0_51607165/84819953

一、数据处理部分

1.数据获取及分析

# 绝对求生玩家排名预测

import numpy as np

import numpy as py

import matplotlib.pyplot as plt

import pandas as pd

import seaborn as sns

# 获取数据以及数据查看分析

train = pd.read_csv("./data/train_V2.csv")

# print(train.head())

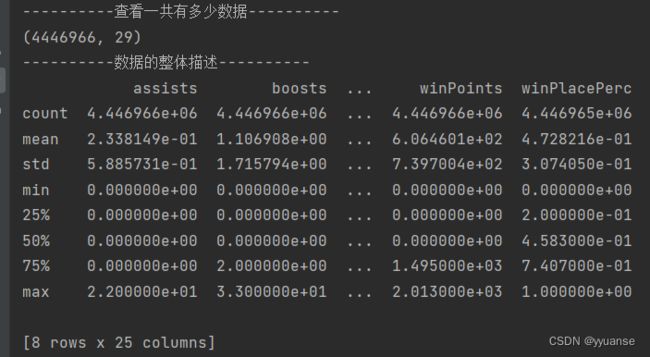

print("----------查看一共有多少数据----------")

print(train.shape)

print("----------数据的整体描述----------")

print(train.describe()) # 数据的整体描述

print("----------数据的字段基本描述----------")

print(train.info()) # 数据的字段基本描述

print("----------一共有多少场比赛----------")

# print(train["matchId"])

# 去重

print(np.unique(train["matchId"]).shape)

print("----------有多少队伍----------")

print(np.unique(train["groupId"]).shape)

# 数据处理

# 查看缺失值

# print(np.any(train.isnull()))

2.缺失数据处理

# 查看缺失值

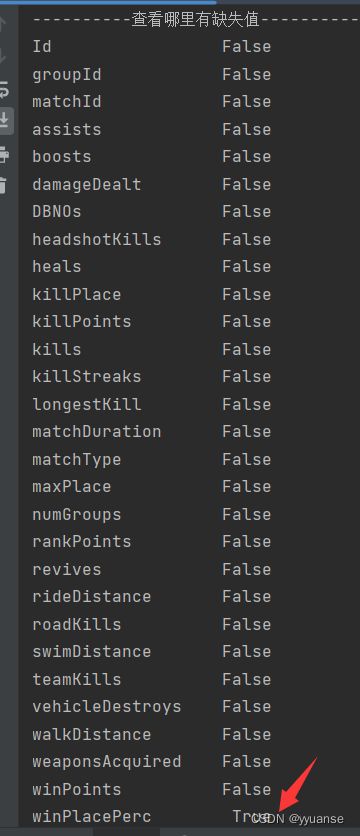

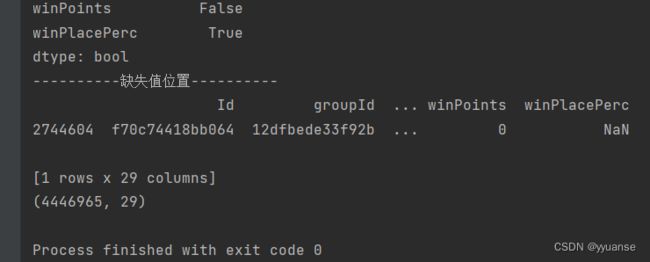

print("----------查看哪里有缺失值----------")

# print(np.any(train.isnull())) # 这个只能打印出True说明存在缺失值

print(train.isnull().any()) # 打印缺失值的情况

# 查看发现只有winPlacePerc有缺失值

# 寻找缺失值,打印出来即可获取行号为 2744604

print("----------缺失值位置----------")

print(train[train["winPlacePerc"].isnull()])

# 删除缺失值

train = train.drop(2744604)

print(train.shape)

3.数据规范化

我的pycharm跑不出来了。。。我用转用线上的 jupyter notebook来写剩下的部分。

感觉jupyter notebook比pycharm方便

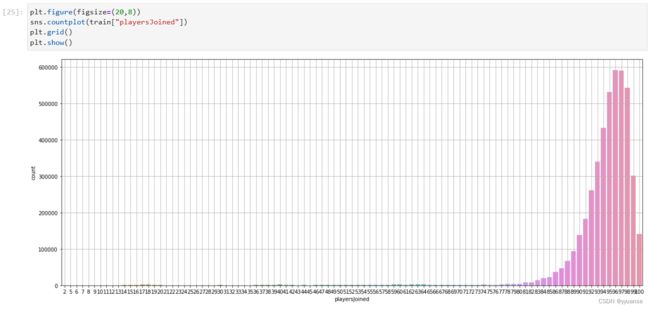

# 数据规范化

# 对每场比赛参加的人数进行统计

count = train.groupby("matchId")["matchId"].transform("count")

# 在train数据中添加一列,为当前该场比赛总参加人数

train["playersJoined"]=count

# print(train.head())

# 参赛人数排序小到大并显示

# print(train["playersJoined"].sort_values().head())

# 可视化显示

plt.figure(figsize=(20,8))

sns.countplot(train["playersJoined"])

plt.grid()

plt.show()

# 这里显示有些比赛人数差距很大,可以添加筛选条件

plt.figure(figsize=(20,8))

sns.countplot(train[train["playersJoined"]>=75]["playersJoined"])

plt.grid()

plt.show()

4.规范化输出部分数据

每局玩家数不一样,不能仅靠杀敌数等来进行比较

# kills(杀敌数),damageDealt(总伤害数)、maxPlace(本局最差名次)、matchDuration(比赛时长)

train["killsNorm"]=train["kills"]*((100-train["playersJoined"])/100+1)

train["damageDealtNorm"]=train["damageDealt"]*((100-train["playersJoined"])/100+1)

train["maxPlaceNorm"]=train["maxPlace"]*((100-train["playersJoined"])/100+1)

train["matchDurationNorm"]=train["matchDuration"]*((100-train["playersJoined"])/100+1)

train.head()

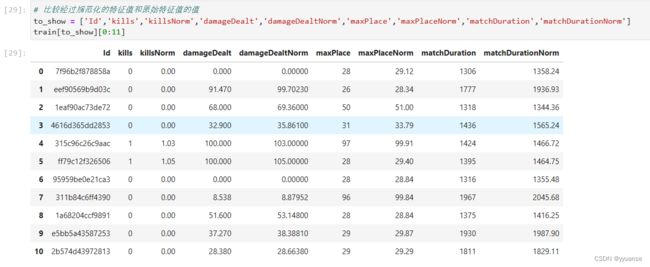

# 比较经过规范化的特征值和原始特征值的值

to_show = ['Id','kills','killsNorm','damageDealt','damageDealtNorm','maxPlace','maxPlaceNorm','matchDuration','matchDurationNorm']

train[to_show][0:11]

train['healsandboosts']=train['heals']+train['boosts']

train[["heals","boosts","healsandboosts"]].tail()

5.异常数据处理

jupyter

我们认为以下数据为异常数据:

5.1没有移动有杀敌数的

5.2驾车杀敌大于10的

5.3一局中杀敌超过30的

5.4爆头率异常的数据:认为杀敌人数大于9且爆头率为100%为异常数据

5.5最大杀敌距离大于1km的玩家

5.6运动距离异常

5.7武器收集异常

5.8shiyong治疗药品数量异常

5.1删除开挂数据没有移动却kill了的

# 删除有击杀但是完全没有移动的玩家(开挂)

train["totalDistance"]=train["rideDistance"]+train["walkDistance"]+train["swimDistance"]

train.head()

train["killwithoutMoving"]=(train["kills"]>0)&(train["totalDistance"]==0)

# 查看开挂的数据

train[train["killwithoutMoving"] == True]

# 统计以下开挂的数据

train[train["killwithoutMoving"] == True].shape

# 删除开挂数据

train.drop(train[train["killwithoutMoving"] == True].index,inplace=True)

# 删除后的整体train数据

train.shape

5.2删除驾车杀敌数异常的数据

# 删除驾车杀敌大于10数据

train.drop(train[train["roadKills"] >10].index,inplace=True)

# 删除后整体train数据情况

train.shape

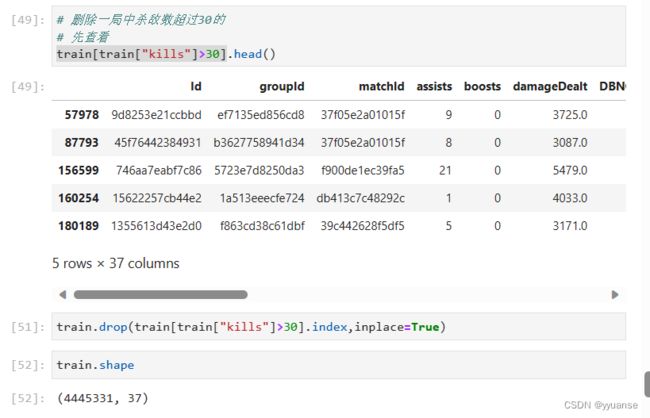

5.3 删除一局中杀敌数超过30的

# 删除一局中杀敌数超过30的

# 先查看

train[train["kills"]>30].head()

train.drop(train[train["kills"]>30].index,inplace=True)

train.shape

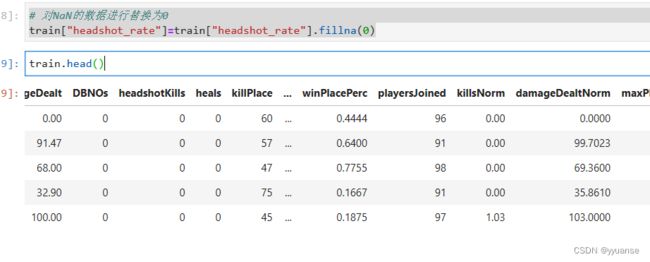

5.4删除爆头率异常数据:认为杀敌人数大于9且爆头率为100%为异常数据

# 删除爆头率异常的数据

# 计算爆头率

train["headshot_rate"]=train["headshotKills"]/train["kills"]

train["headshot_rate"].head()

# 对NaN的数据进行替换为0

train["headshot_rate"]=train["headshot_rate"].fillna(0)

train.head()

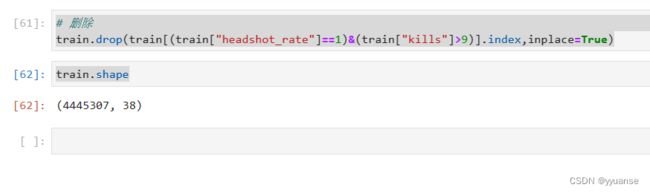

# 先检索有没有爆头率有问题的数据:认为杀敌人数大于9且爆头率为100%为异常数据

train [(train["headshot_rate"]==1)&(train["kills"]>9)].head()

# 删除

train.drop(train[(train["headshot_rate"]==1)&(train["kills"]>9)].index,inplace=True)

train.shape

5.5 最大杀敌距离大于1km的玩家

# 最大杀敌距离大于1km的玩家

train[train["longestKill"]>=1000].shape

train[train["longestKill"]>=1000]["longestKill"].head()

train.drop(train[train["longestKill"]>=1000].index,inplace=True)

train.shape

5.6运动距离异常

train[train['walkDistance']>=10000].shape

train.drop(train[train['walkDistance']>=10000].index,inplace=True)

train.shape

# 载具

train[train['rideDistance']>=20000].shape

train.drop(train[train['rideDistance']>=20000].index,inplace=True)

train.shape

# 游泳距离

train[train['swimDistance']>=2000].shape

train.drop(train[train['swimDistance']>=2000].index,inplace=True)

train.shape

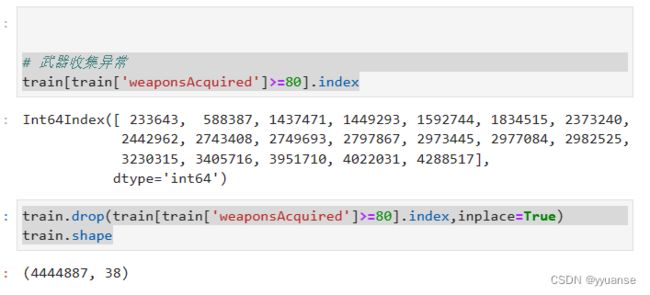

5.7武器收集异常

# 武器收集异常

train[train['weaponsAcquired']>=80].index

train.drop(train[train['weaponsAcquired']>=80].index,inplace=True)

train.shape

5.8使用治疗药品数量异常

# 使用治疗药品数量异常

train[train['heals']>=80].index

train.drop(train[train['heals']>=80].index,inplace=True)

train.shape

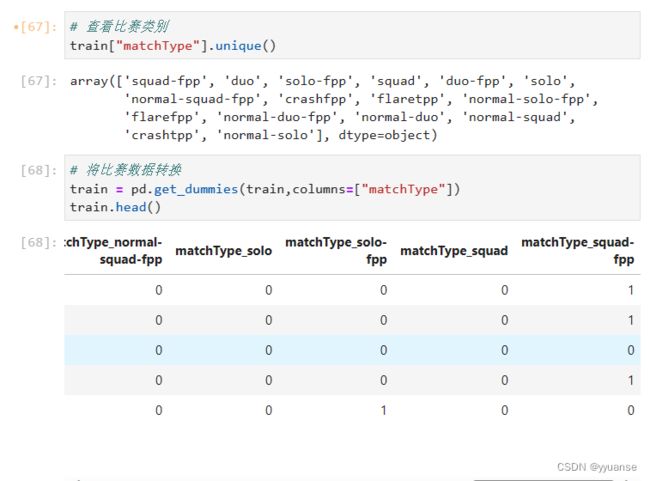

6.类别型数据处理one-hot处理

# 查看比赛类别

train["matchType"].unique()

# 将比赛数据转换

train = pd.get_dummies(train,columns=["matchType"])

train.head()

# 正则化

matchType_encoding = train.filter(regex="matchType")

matchType_encoding.head()

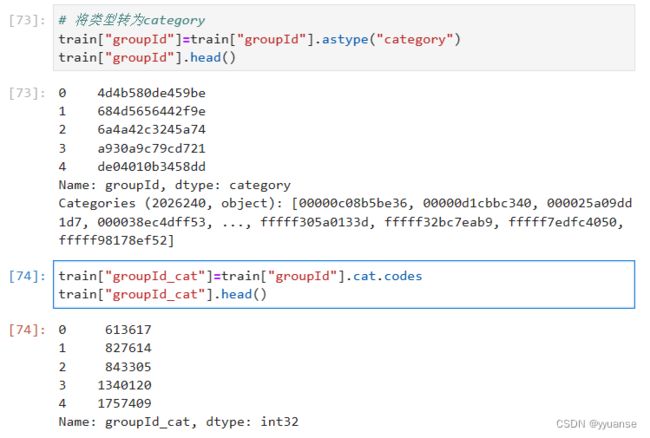

# 对groupId数据转换

train["groupId"].head()

# 将类型转为category

train["groupId"]=train["groupId"].astype("category")

train["groupId"].head()

train["groupId_cat"]=train["groupId"].cat.codes

train["groupId_cat"].head()

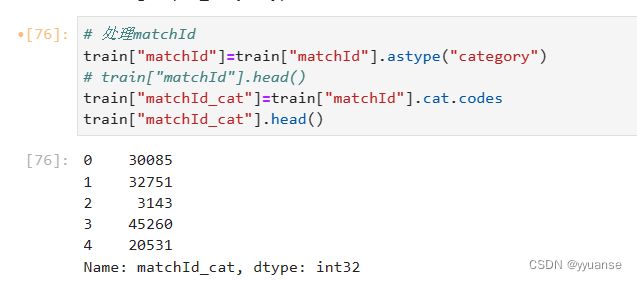

# 处理matchId

train["matchId"]=train["matchId"].astype("category")

# train["matchId"].head()

train["matchId_cat"]=train["matchId"].cat.codes

train["matchId_cat"].head()

# 将原来的groupId和matchId删除,axis=1按照列删除

train.drop(["groupId","matchId"],axis=1,inplace=True)

train.head()

7.数据截取

df_sample = train.sample(100000)

df_sample.shape

# 确定特征值和目标值

df = df_sample.drop(["winPlacePerc","Id"],axis=1)

y=df_sample["winPlacePerc"]

df.shape

# 分割测试集和测试集

from sklearn.model_selection import train_test_split

x_train,x_valid,y_train,y_valid = train_test_split(df,y,test_size=0.2)

二、模型训练部分

两种模型训练结果进行对比:

1.使用随机森林进行模型训练

2.使用lightgbm进行模型训练

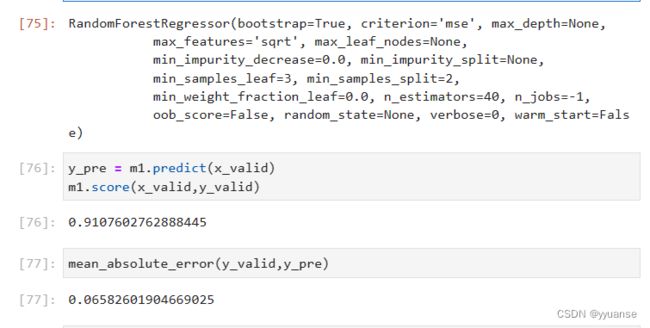

1.使用随机森林进行模型训练

from sklearn.ensemble import RandomForestRegressor

from sklearn.metrics import mean_absolute_error

# 初步训练

# n_jobs = -1表示训练的时候,并行数和cpu的核数一样,如果传入具体的值,表示用几个核去泡

m1 = RandomForestRegressor(n_estimators=40,min_samples_leaf=3,max_features='sqrt',n_jobs=-1)

m1.fit(x_train,y_train)

y_pre = m1.predict(x_valid)

m1.score(x_valid,y_valid)

mean_absolute_error(y_valid,y_pre)

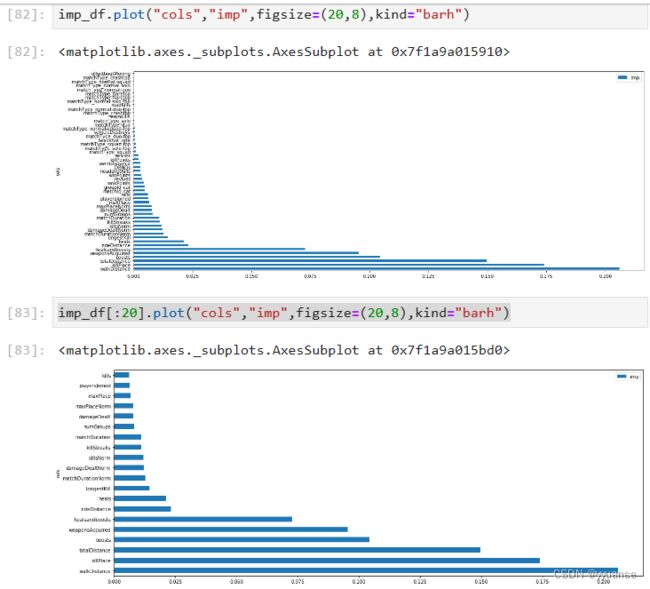

# 再次使用随机森林进行模型训练

# 去除冗余特征

m1.feature_importances_

imp_df = pd.DataFrame({"cols":df.columns,"imp":m1.feature_importances_})

imp_df.head()

imp_df = imp_df.sort_values("imp",ascending=False)

imp_df.head()

imp_df.plot("cols","imp",figsize=(20,8),kind="barh")

imp_df[:20].plot("cols","imp",figsize=(20,8),kind="barh")

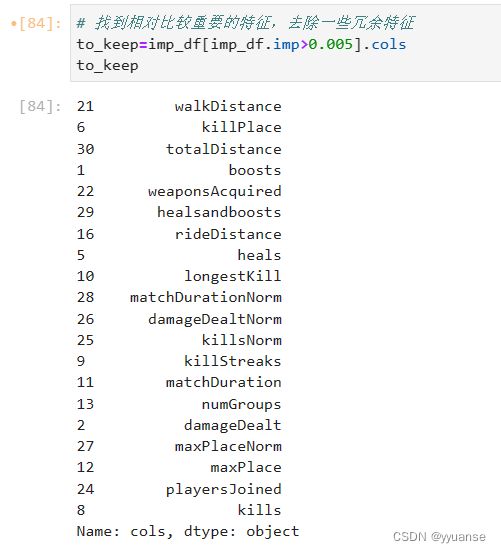

# 找到相对比较重要的特征,去除一些冗余特征

to_keep=imp_df[imp_df.imp>0.005].cols

to_keep

df_keep = df[to_keep]

x_train,x_valid,y_train,y_valid=train_test_split(df_keep,y,test_size=0.2)

x_train.shape

m2 = RandomForestRegressor(n_estimators=40,min_samples_leaf=3,max_features='sqrt',n_jobs=-1)

m2.fit(x_train,y_train)

# 模型评估

y_pre = m2.predict(x_valid)

m2.score(x_valid,y_valid)

mean_absolute_error(y_valid,y_pre)

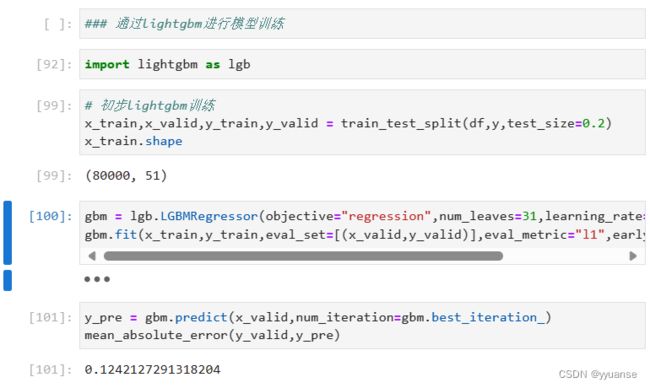

2.使用lightgbm进行模型训练

import lightgbm as lgb

# 初步lightgbm训练

x_train,x_valid,y_train,y_valid = train_test_split(df,y,test_size=0.2)

x_train.shape

gbm = lgb.LGBMRegressor(objective="regression",num_leaves=31,learning_rate=0.05,n_estimators=20)

gbm.fit(x_train,y_train,eval_set=[(x_valid,y_valid)],eval_metric="l1",early_stopping_rounds=5)

y_pre = gbm.predict(x_valid,num_iteration=gbm.best_iteration_)

mean_absolute_error(y_valid,y_pre)

# 第二次调优:网格搜索进行参数调优

from sklearn.model_selection import GridSearchCV

estimator = lgb.LGBMRegressor(num_leaves=31)

param_grid={"learning_rate":[0.01,0.1,1],00

"n_estimators":[40,60,80,100,200,300]}

gbm = GridSearchCV(estimator,param_grid,cv=5,n_jobs=-1)

gbm.fit(x_train,y_train,eval_set=[(x_valid,y_valid)],eval_metric="l1",early_stopping_rounds=5)

y_pred = gbm.predict(x_valid)

mean_absolute_error(y_valid,y_pred)

gbm.best_params_

# 第三次调优

# n_estimators

scores = []

n_estimators = [100,500,1000]

for nes in n_estimators:

lgbm = lgb.LGBMRegressor(boosting_type='gbdt',

num_leaves=31,

max_depth=5,

learning_rate=0.1,

n_estimators=nes,

min_child_samples=20,

n_jobs=-1)

lgbm.fit(x_train,y_train,eval_set=[(x_valid,y_valid)],eval_metric='l1',early_stopping_rounds=5)

y_pre = lgbm.predict(x_valid)

mae = mean_absolute_error(y_valid,y_pre)

scores.append(mae)

mae

# 可视化

import numpy as np

plt.plot(n_estimators,scores,'o-')

plt.ylabel("mae")

plt.xlabel("n_estimator")

print("best n_estimator {}".format(n_estimators[np.argmin(scores)]))

# 通过这种方式,可以继续对其他的参数调优,找到最优的参数

# ......