MobileNetV2 code

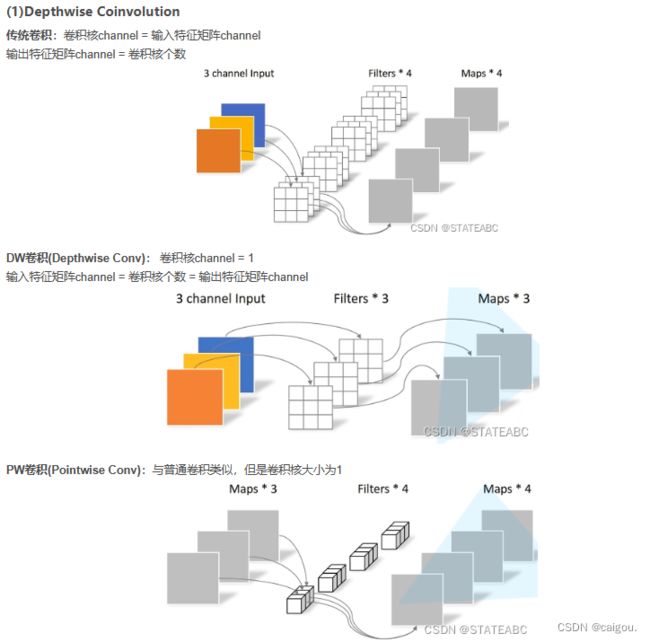

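The PW (pointwise) convolution here plays the same role as the 1×1 convolutions in a ResNet bottleneck, but the order is reversed: MobileNetV2 first expands the channel count and then reduces it, whereas ResNet first reduces and then expands. Most of a bottleneck's parameters sit in its 3×3 convolution. ResNeXt cuts them with grouped convolution, and when the number of groups equals the number of channels, a grouped convolution becomes a depthwise (DW) convolution. MobileNet likewise concentrates its parameter savings on the 3×3 layer by making it depthwise. Because a DW convolution filters each channel separately and never mixes channels, the channels are first expanded with a PW convolution so that enough channel information is preserved.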
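One rough way to see why the 3×3 layer is where the savings come from is to count weights. The sketch below uses arbitrary illustration sizes (32 input channels, 64 output channels); `conv_params` is a hypothetical helper, not part of the code in this post, and it ignores biases (the convolutions in model.py use `bias=False`).

```python
def conv_params(c_in, c_out, k, groups=1):
    """Weight count of a k x k Conv2d without bias (hypothetical helper).

    Each of the c_out filters only sees c_in // groups input channels.
    """
    assert c_in % groups == 0 and c_out % groups == 0
    return k * k * (c_in // groups) * c_out


c_in, c_out = 32, 64  # illustrative channel sizes, not taken from the network

standard = conv_params(c_in, c_out, k=3)             # ordinary 3x3 conv
grouped = conv_params(c_in, c_out, k=3, groups=4)    # ResNeXt-style grouped conv
dw = conv_params(c_in, c_in, k=3, groups=c_in)       # depthwise: groups == channels
pw = conv_params(c_in, c_out, k=1)                   # 1x1 pointwise conv
separable = dw + pw                                  # DW followed by PW

print("standard 3x3:", standard)
print("grouped 3x3 (g=4):", grouped)
print("DW 3x3 + PW 1x1:", separable, f"({separable / standard:.1%} of standard)")
```

For a DW + PW pair the ratio to a standard convolution is 1/c_out + 1/k², here 1/64 + 1/9, so with 3×3 kernels the separable version needs only about an eighth of the weights.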

PW+DW+PW

MobileNetV1 parameter table:

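MobileNetV1 has no shortcut connections; V2 adds them. Because each V2 block first expands and then reduces the channels (the opposite of a ResNet bottleneck), it is called an inverted residual block.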
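The narrow → wide → narrow channel flow of an inverted residual block can be traced with a little bookkeeping. `inverted_residual_plan` is a hypothetical helper written for illustration: it follows the layer order of `InvertedResidual` in model.py (1×1 expansion only when t ≠ 1, 3×3 DW, linear 1×1 projection, shortcut only for stride 1 with matching channels), counts conv weights only, and ignores BatchNorm. The 24-channel, t = 6 example corresponds to a repeated block of the 24-channel stage in the V2 table.

```python
def inverted_residual_plan(c_in, c_out, t, stride):
    """Channel widths and conv weight counts of one MobileNetV2 block (bias-free, BN ignored)."""
    hidden = c_in * t
    stages = []
    if t != 1:
        stages.append(("PW expand 1x1 + ReLU6", c_in, hidden, c_in * hidden))
    stages.append(("DW 3x3 + ReLU6", hidden, hidden, 9 * hidden))  # one 3x3 filter per channel
    stages.append(("PW project 1x1, linear", hidden, c_out, hidden * c_out))
    use_shortcut = stride == 1 and c_in == c_out
    return stages, use_shortcut


stages, shortcut = inverted_residual_plan(24, 24, t=6, stride=1)
for name, cin, cout, w in stages:
    print(f"{name:24s} {cin:4d} -> {cout:4d}  weights={w}")
print("shortcut:", shortcut)
```

Even though the DW layer runs on six times as many channels as the block's input, it is the cheapest of the three stages; the two 1×1 convolutions carry most of the weights.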

MobileNetV2 parameter table:

model.py:

```python
from torch import nn
import torch


def _make_divisible(ch, divisor=8, min_ch=None):
    """
    This function is taken from the original tf repo.
    It ensures that all layers have a channel number that is divisible by 8
    It can be seen here:
    https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet/mobilenet.py
    """
    # Rounds the channel count to a multiple of 8, so that when the width multiplier alpha
    # is used to shrink MobileNet, every layer still gets an integer channel count
    if min_ch is None:
        min_ch = divisor
    new_ch = max(min_ch, int(ch + divisor / 2) // divisor * divisor)
    # Make sure that round down does not go down by more than 10%.
    if new_ch < 0.9 * ch:
        new_ch += divisor
    # Return the adjusted channel count
    return new_ch
```
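To see the rounding in action, the sketch below applies the same formula with a width multiplier of alpha = 0.35 (an example value; this post only trains with alpha = 1.0). `make_divisible` is just a copy of `_make_divisible` so the snippet runs without PyTorch. Note the 32-channel stem: 32 × 0.35 = 11.2 would round down to 8, a loss of more than 10%, so the guard bumps it up to 16.

```python
def make_divisible(ch, divisor=8, min_ch=None):
    # Same rounding rule as _make_divisible above, repeated so this snippet runs on its own
    if min_ch is None:
        min_ch = divisor
    new_ch = max(min_ch, int(ch + divisor / 2) // divisor * divisor)
    if new_ch < 0.9 * ch:  # never round down by more than 10%
        new_ch += divisor
    return new_ch


alpha = 0.35
for c in [32, 16, 24, 96, 160, 1280]:
    print(f"{c:5d} * {alpha} = {c * alpha:7.2f} -> {make_divisible(c * alpha)}")
```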

```python
class ConvBNReLU(nn.Sequential):
    # This module defines no forward(): nn.Sequential runs the layers passed to __init__ in order
    def __init__(self, in_channel, out_channel, kernel_size=3, stride=1, groups=1):
        # For odd kernel sizes this keeps the spatial size unchanged at stride 1
        padding = (kernel_size - 1) // 2
        super(ConvBNReLU, self).__init__(
            # A DW convolution is an nn.Conv2d with groups == in_channel
            nn.Conv2d(in_channel, out_channel, kernel_size, stride, padding, groups=groups, bias=False),
            nn.BatchNorm2d(out_channel),
            nn.ReLU6(inplace=True)
        )
```
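The padding rule `(kernel_size - 1) // 2` is what keeps the feature map size unchanged at stride 1 and halves it (rounding up) at stride 2. The sketch below plugs it into the standard convolution output-size formula floor((H + 2p − k) / s) + 1, assuming dilation 1 as in the code above; `conv_out_size` is a hypothetical helper for illustration.

```python
def conv_out_size(size, kernel_size, stride):
    """Spatial output size of a Conv2d using ConvBNReLU's padding rule (dilation 1)."""
    padding = (kernel_size - 1) // 2
    return (size + 2 * padding - kernel_size) // stride + 1


print(conv_out_size(224, 3, 2))  # stem conv, stride 2 -> 112
print(conv_out_size(112, 3, 1))  # 3x3 DW conv, stride 1 -> 112
print(conv_out_size(112, 1, 1))  # 1x1 PW conv -> 112
print(conv_out_size(7, 3, 2))    # odd size, stride 2 rounds up -> 4
```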

```python
class InvertedResidual(nn.Module):  # the "bottleneck" operator in the parameter table
    def __init__(self, in_channel, out_channel, stride, expand_ratio):
        super(InvertedResidual, self).__init__()
        # expand_ratio: how much the first PW conv widens the channels; the DW conv then runs on the widened features
        hidden_channel = in_channel * expand_ratio
        # Residual connection only when input and output shapes match; the first block of a stage
        # changes the stride and/or the channel count, so it has no shortcut
        self.use_shortcut = stride == 1 and in_channel == out_channel

        # ConvBNReLU is a generic Conv + BN + ReLU6 block; with groups == channels it becomes a DW conv.
        # `layers` collects the PW -> DW -> PW sequence. The last (projection) PW conv is a plain
        # Conv2d + BN rather than ConvBNReLU because it uses a linear activation (no ReLU6);
        # ConvBNReLU could also do a 1x1 conv, but it would append an unwanted ReLU6.
        layers = []
        if expand_ratio != 1:
            # The PW expansion layer exists only when t != 1. The first bottleneck has t = 1,
            # so its DW conv works directly on the input channels without expansion.
            # 1x1 pointwise conv
            layers.append(ConvBNReLU(in_channel, hidden_channel, kernel_size=1))
        layers.extend([
            # 3x3 depthwise conv
            ConvBNReLU(hidden_channel, hidden_channel, stride=stride, groups=hidden_channel),
            # 1x1 pointwise conv (linear)
            nn.Conv2d(hidden_channel, out_channel, kernel_size=1, bias=False),
            nn.BatchNorm2d(out_channel),
        ])
        # Wrap the layers above into a single module
        self.conv = nn.Sequential(*layers)

    def forward(self, x):
        if self.use_shortcut:
            return x + self.conv(x)
        else:
            return self.conv(x)
```
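As a sanity check on the block definition, the sketch below counts the trainable parameters of one `InvertedResidual` by hand: conv weights (all `bias=False`) plus the BatchNorm weight and bias, two per channel. BatchNorm's running mean and variance are buffers, not parameters, so they are left out. `inverted_residual_params` is a hypothetical helper; if PyTorch is installed, `sum(p.numel() for p in InvertedResidual(16, 24, 2, 6).parameters())` should give the same number as the second case, though that cross-check is a suggestion and was not part of the original post.

```python
def inverted_residual_params(c_in, c_out, t):
    """Trainable parameters of one InvertedResidual block as defined above."""
    hidden = c_in * t
    total = 0
    if t != 1:
        total += c_in * hidden + 2 * hidden   # 1x1 PW expand + BN(hidden)
    total += 9 * hidden + 2 * hidden          # 3x3 DW (one input channel per group) + BN(hidden)
    total += hidden * c_out + 2 * c_out       # 1x1 PW project + BN(c_out)
    return total


print(inverted_residual_params(32, 16, t=1))  # first block: no expansion layer
print(inverted_residual_params(16, 24, t=6))  # first block of the 24-channel stage
```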

```python
class MobileNetV2(nn.Module):
    def __init__(self, num_classes=1000, alpha=1.0, round_nearest=8):
        super(MobileNetV2, self).__init__()
        block = InvertedResidual
        # The stem conv outputs 32 channels (the input of the first inverted residual block);
        # the final 1x1 conv outputs 1280 channels
        input_channel = _make_divisible(32 * alpha, round_nearest)
        last_channel = _make_divisible(1280 * alpha, round_nearest)

        # Settings for the stages of inverted residual blocks
        inverted_residual_setting = [
            # t, c, n, s
            [1, 16, 1, 1],
            [6, 24, 2, 2],
            [6, 32, 3, 2],
            [6, 64, 4, 2],
            [6, 96, 3, 1],
            [6, 160, 3, 2],
            [6, 320, 1, 1],
        ]
        # t: expansion factor, i.e. how much the first PW conv widens the input channels
        # c: output channels of the stage (the channel count after the projection PW conv)
        # n: number of times the block is repeated in the stage
        # s: stride of the first block in the stage (applied by its DW conv); all other blocks use
        #    stride 1, which is implemented by `stride = s if i == 0 else 1` below

        features = []
        # conv1 layer: 3 RGB input channels -> input_channel (32 * alpha, rounded by _make_divisible)
        features.append(ConvBNReLU(3, input_channel, stride=2))
        # building inverted residual blocks
        for t, c, n, s in inverted_residual_setting:
            # Each stage's output channels are scaled by alpha; shrinking the channel count is how
            # alpha cuts parameters. The MobileNet family as a whole aims to reduce parameters
            # while keeping accuracy and speed as high as possible.
            output_channel = _make_divisible(c * alpha, round_nearest)
            for i in range(n):
                # Only the first block of each stage uses the stride s from the table; the rest use 1
                stride = s if i == 0 else 1
                # On the very first iteration input_channel is the 32-channel output of the stem conv,
                # and the first block has t = 1, so it has no PW expansion layer
                features.append(block(input_channel, output_channel, stride, expand_ratio=t))
                input_channel = output_channel
        # building last several layers
        # (input_channel, last_channel, 1) = (320, 1280, 1) for alpha = 1.0
        features.append(ConvBNReLU(input_channel, last_channel, 1))
        # combine feature layers
        self.features = nn.Sequential(*features)

        # building classifier
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.classifier = nn.Sequential(
            nn.Dropout(0.2),
            nn.Linear(last_channel, num_classes)
        )

        # weight initialization
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out')
                if m.bias is not None:
                    nn.init.zeros_(m.bias)
            elif isinstance(m, nn.BatchNorm2d):
                nn.init.ones_(m.weight)
                nn.init.zeros_(m.bias)
            elif isinstance(m, nn.Linear):
                nn.init.normal_(m.weight, 0, 0.01)
                nn.init.zeros_(m.bias)

    def forward(self, x):
        x = self.features(x)
        x = self.avgpool(x)
        # avgpool turns the 7x7x1280 feature map (for a 224x224 input) into 1x1x1280;
        # torch.flatten(x, 1) then flattens it to shape [N, 1280]
        x = torch.flatten(x, 1)
        x = self.classifier(x)
        return x
```
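To check the stage layout, this sketch replays the loop in `MobileNetV2.__init__` without building any layers. It tracks only the spatial size (assuming a 224×224 input) and the channel count, and counts how many blocks get a shortcut. It assumes alpha = 1.0, for which `_make_divisible` leaves every channel count in the table unchanged.

```python
# (t, c, n, s) rows copied from inverted_residual_setting above (alpha = 1.0)
setting = [
    [1, 16, 1, 1], [6, 24, 2, 2], [6, 32, 3, 2], [6, 64, 4, 2],
    [6, 96, 3, 1], [6, 160, 3, 2], [6, 320, 1, 1],
]

size = (224 - 1) // 2 + 1   # after the stride-2, padded 3x3 stem conv
channels = 32
blocks, shortcuts = 0, 0
for t, c, n, s in setting:
    for i in range(n):
        stride = s if i == 0 else 1
        use_shortcut = stride == 1 and channels == c
        size = (size - 1) // stride + 1   # ceil(size / stride), as for a padded 3x3 DW conv
        blocks += 1
        shortcuts += use_shortcut
        channels = c
    print(f"t={t} c={c:3d} n={n} s={s} -> {size}x{size}x{channels}")

print("blocks:", blocks, "with shortcut:", shortcuts)
print("before avgpool:", f"{size}x{size}x1280")
```

The final 7×7 size is the one mentioned in the comment in `forward()`; the last 1×1 `ConvBNReLU` lifts 320 channels to 1280 without changing the spatial size.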

train.py

The pretrained weights of the feature extractor are frozen, so training runs quite fast, even on my laptop!
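A quick count shows why freezing helps so much: once `net.features` is frozen, the only trainable parameters left are those of `nn.Linear(last_channel, num_classes)` in the classifier (Dropout has none). The values below are the ones train.py uses (1280 channels at alpha = 1.0, 5 flower classes). The forward pass still runs through the whole network every step, but the backward pass and the optimizer only touch the classifier.

```python
last_channel, num_classes = 1280, 5  # values used in train.py (alpha = 1.0)

# nn.Linear(in_features, out_features) holds a weight matrix and a bias vector
trainable = last_channel * num_classes + num_classes
print("trainable parameters:", trainable)
```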

```python
import os
import sys
import json

import torch
import torch.nn as nn
import torch.optim as optim
from torchvision import transforms, datasets
from tqdm import tqdm

from model_v2 import MobileNetV2


def main():
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print("using {} device.".format(device))

    batch_size = 16
    epochs = 5

    data_transform = {
        "train": transforms.Compose([transforms.RandomResizedCrop(224),
                                     transforms.RandomHorizontalFlip(),
                                     transforms.ToTensor(),
                                     transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])]),
        "val": transforms.Compose([transforms.Resize(256),
                                   transforms.CenterCrop(224),
                                   transforms.ToTensor(),
                                   transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])])}

    data_root = os.path.abspath(os.path.join(os.getcwd(), "../.."))  # get data root path
    image_path = os.path.join(data_root, "data_set", "flower_data")  # flower data set path
    assert os.path.exists(image_path), "{} path does not exist.".format(image_path)
    train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"),
                                         transform=data_transform["train"])
    train_num = len(train_dataset)

    # {'daisy':0, 'dandelion':1, 'roses':2, 'sunflowers':3, 'tulips':4}
    flower_list = train_dataset.class_to_idx
    cla_dict = dict((val, key) for key, val in flower_list.items())
    # write dict into json file
    json_str = json.dumps(cla_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

    nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8])  # number of workers
    print('Using {} dataloader workers every process'.format(nw))

    train_loader = torch.utils.data.DataLoader(train_dataset,
                                               batch_size=batch_size, shuffle=True,
                                               num_workers=nw)

    validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"),
                                            transform=data_transform["val"])
    val_num = len(validate_dataset)
    validate_loader = torch.utils.data.DataLoader(validate_dataset,
                                                  batch_size=batch_size, shuffle=False,
                                                  num_workers=nw)

    print("using {} images for training, {} images for validation.".format(train_num,
                                                                           val_num))

    # create model
    net = MobileNetV2(num_classes=5)

    # load pretrain weights
    # download url: https://download.pytorch.org/models/mobilenet_v2-b0353104.pth
    model_weight_path = "./mobilenet_v2-pre.pth"
    assert os.path.exists(model_weight_path), "file {} does not exist.".format(model_weight_path)
    pre_weights = torch.load(model_weight_path, map_location='cpu')

    # delete classifier weights: keep only pretrained tensors whose element count matches the model's
    net_dict = net.state_dict()
    pre_dict = {k: v for k, v in pre_weights.items()
                if k in net_dict and net_dict[k].numel() == v.numel()}
    missing_keys, unexpected_keys = net.load_state_dict(pre_dict, strict=False)

    # freeze features weights
    for param in net.features.parameters():
        param.requires_grad = False

    net.to(device)

    # define loss function
    loss_function = nn.CrossEntropyLoss()

    # construct an optimizer over the parameters that still require gradients
    params = [p for p in net.parameters() if p.requires_grad]
    optimizer = optim.Adam(params, lr=0.0001)

    best_acc = 0.0
    save_path = './MobileNetV2.pth'
    train_steps = len(train_loader)
    for epoch in range(epochs):
        # train
        net.train()
        running_loss = 0.0
        train_bar = tqdm(train_loader, file=sys.stdout)
        for step, data in enumerate(train_bar):
            images, labels = data
            optimizer.zero_grad()
            logits = net(images.to(device))
            loss = loss_function(logits, labels.to(device))
            loss.backward()
            optimizer.step()

            # print statistics
            running_loss += loss.item()
            train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch + 1,
                                                                     epochs,
                                                                     loss)

        # validate
        net.eval()
        acc = 0.0  # accumulate accurate number / epoch
        with torch.no_grad():
            val_bar = tqdm(validate_loader, file=sys.stdout)
            for val_data in val_bar:
                val_images, val_labels = val_data
                outputs = net(val_images.to(device))
                # loss = loss_function(outputs, test_labels)
                predict_y = torch.max(outputs, dim=1)[1]
                acc += torch.eq(predict_y, val_labels.to(device)).sum().item()
                val_bar.desc = "valid epoch[{}/{}]".format(epoch + 1,
                                                           epochs)

        val_accurate = acc / val_num
        print('[epoch %d] train_loss: %.3f val_accuracy: %.3f' %
              (epoch + 1, running_loss / train_steps, val_accurate))

        if val_accurate > best_acc:
            best_acc = val_accurate
            torch.save(net.state_dict(), save_path)

    print('Finished Training')


if __name__ == '__main__':
    main()
```
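The `pre_dict` filtering step in train.py keeps a pretrained tensor only when the model has a tensor of the same size under the same key, which is how the 1000-class ImageNet classifier gets dropped. The sketch below mimics that logic with plain shape tuples instead of tensors; the two dictionaries are hypothetical, heavily truncated stand-ins for the real `state_dict()` contents (the key names follow the module layout of model.py).

```python
from math import prod

# Hypothetical, truncated shape tables standing in for state_dict() contents
pretrained_shapes = {
    "features.0.0.weight": (32, 3, 3, 3),
    "classifier.1.weight": (1000, 1280),
    "classifier.1.bias": (1000,),
}
model_shapes = {
    "features.0.0.weight": (32, 3, 3, 3),
    "classifier.1.weight": (5, 1280),
    "classifier.1.bias": (5,),
}

# Keep an entry only if the model has the key and the element counts agree (like numel() in train.py)
kept = {k: s for k, s in pretrained_shapes.items()
        if k in model_shapes and prod(model_shapes[k]) == prod(s)}
# These keys keep their freshly initialized values; load_state_dict(strict=False) reports them as missing
missing = [k for k in model_shapes if k not in kept]
print("loaded:", sorted(kept))
print("left at their initial values:", missing)
```

Comparing full shapes instead of element counts would be a slightly stricter variant, since two tensors of different shapes can happen to have the same number of elements.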

Training results:

```
using cuda:0 device.
Using 8 dataloader workers every process
using 3306 images for training, 364 images for validation.
train epoch[1/5] loss:0.888: 100%|██████████| 207/207 [00:24<00:00, 8.40it/s]
valid epoch[1/5]: 100%|██████████| 23/23 [00:14<00:00, 1.63it/s]
[epoch 1] train_loss: 1.281 val_accuracy: 0.805
train epoch[2/5] loss:0.614: 100%|██████████| 207/207 [00:20<00:00, 10.22it/s]
valid epoch[2/5]: 100%|██████████| 23/23 [00:13<00:00, 1.66it/s]
[epoch 2] train_loss: 0.885 val_accuracy: 0.838
train epoch[3/5] loss:0.811: 100%|██████████| 207/207 [00:19<00:00, 10.40it/s]
valid epoch[3/5]: 100%|██████████| 23/23 [00:14<00:00, 1.62it/s]
[epoch 3] train_loss: 0.720 val_accuracy: 0.830
train epoch[4/5] loss:0.620: 100%|██████████| 207/207 [00:19<00:00, 10.65it/s]
valid epoch[4/5]: 100%|██████████| 23/23 [00:13<00:00, 1.70it/s]
[epoch 4] train_loss: 0.636 val_accuracy: 0.838
train epoch[5/5] loss:0.689: 100%|██████████| 207/207 [00:19<00:00, 10.46it/s]
valid epoch[5/5]: 100%|██████████| 23/23 [00:14<00:00, 1.60it/s]
[epoch 5] train_loss: 0.583 val_accuracy: 0.852
Finished Training
```

predict.py

```python
import os
import json

import torch
from PIL import Image
from torchvision import transforms
import matplotlib.pyplot as plt

from model_v2 import MobileNetV2


def main():
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    data_transform = transforms.Compose(
        [transforms.Resize(256),
         transforms.CenterCrop(224),
         transforms.ToTensor(),
         transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])])

    # load image
    img_path = "./test.jpg"
    assert os.path.exists(img_path), "file: '{}' does not exist.".format(img_path)
    img = Image.open(img_path)
    plt.imshow(img)
    # [C, H, W]
    img = data_transform(img)
    # expand batch dimension: [C, H, W] -> [N, C, H, W]
    img = torch.unsqueeze(img, dim=0)

    # read class_indict
    json_path = './class_indices.json'
    assert os.path.exists(json_path), "file: '{}' does not exist.".format(json_path)
    with open(json_path, "r") as f:
        class_indict = json.load(f)

    # create model
    model = MobileNetV2(num_classes=5).to(device)
    # load model weights
    model_weight_path = "./MobileNetV2.pth"
    model.load_state_dict(torch.load(model_weight_path, map_location=device))
    model.eval()
    with torch.no_grad():
        # predict class
        output = torch.squeeze(model(img.to(device))).cpu()
        predict = torch.softmax(output, dim=0)
        predict_cla = torch.argmax(predict).numpy()

    print_res = "class: {} prob: {:.3}".format(class_indict[str(predict_cla)],
                                               predict[predict_cla].numpy())
    plt.title(print_res)
    for i in range(len(predict)):
        print("class: {:10} prob: {:.3}".format(class_indict[str(i)],
                                                predict[i].numpy()))
    plt.show()


if __name__ == '__main__':
    main()
```
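Two details of predict.py are easy to miss: the class lookup uses `str(predict_cla)` because JSON object keys are always strings, so the integer keys written by train.py come back as `"0"`, `"1"`, ...; and `torch.softmax(output, dim=0)` turns the 5 logits into probabilities that sum to 1. The pure-Python sketch below reproduces both steps without PyTorch; the logits are made-up values for illustration, and `softmax` is a hand-written stand-in for `torch.softmax` on a 1-D input.

```python
import json
import math

# train.py writes {index: class_name}; JSON turns the integer keys into strings
cla_dict = {0: "daisy", 1: "dandelion", 2: "roses", 3: "sunflowers", 4: "tulips"}
class_indict = json.loads(json.dumps(cla_dict, indent=4))
print(list(class_indict)[:2])


def softmax(logits):
    m = max(logits)                          # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


logits = [-1.2, 0.1, 0.4, 0.8, 5.0]          # hypothetical logits for one image
probs = softmax(logits)
best = max(range(len(probs)), key=probs.__getitem__)  # plays the role of torch.argmax
print("class: {} prob: {:.3}".format(class_indict[str(best)], probs[best]))
```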

Prediction results:

```
class: daisy      prob: 0.00213
class: dandelion  prob: 0.0071
class: roses      prob: 0.0101
class: sunflowers prob: 0.0144
class: tulips     prob: 0.966

Process finished with exit code 0
```