Big Data 15. MapReduce example: total upstream, downstream, and overall traffic per phone number


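1. Requirement:

For each phone number, compute the total upstream traffic, the total downstream traffic, and the overall total traffic it consumed.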

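2. Data preparation:

2.1 Input data format:

Timestamp, phone number, base station physical (MAC) address, IP of the visited site, site domain name, data packets, packets received, upstream (upload) traffic, downstream (download) traffic, response code

Each record therefore has 10 fields. The mapper in section 4.2 splits on tabs, so the fields are tab-separated. You can generate test data yourself.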
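To make the input format concrete, here is a minimal sketch of a test-data generator. It is not part of the original post: the class name `SampleDataGenerator`, the MAC address, the IP range, and the domain are all made-up placeholders, and phone numbers are drawn from a small pool so that several records share a number.

```java
import java.util.Random;

// Hypothetical helper (not from the original post): emits tab-separated
// records with the 10 fields listed above. All values are made up.
class SampleDataGenerator {

    static String randomRecord(Random rnd) {
        String timestamp = String.valueOf(1363157985066L + rnd.nextInt(100_000));
        // Small pool of 11-digit numbers so that phone numbers repeat
        String phone = "1380000000" + rnd.nextInt(5);
        String mac = "00-FD-07-A4-72-B8";
        String ip = "120.196.100." + rnd.nextInt(256);
        String domain = "example.com";
        String packets = String.valueOf(rnd.nextInt(50));
        String packetsReceived = String.valueOf(rnd.nextInt(50));
        String upFlow = String.valueOf(rnd.nextInt(10_000));
        String downFlow = String.valueOf(rnd.nextInt(100_000));
        String responseCode = "200";
        return String.join("\t", timestamp, phone, mac, ip, domain,
                packets, packetsReceived, upFlow, downFlow, responseCode);
    }

    public static void main(String[] args) {
        Random rnd = new Random(42); // fixed seed so runs are repeatable
        for (int i = 0; i < 5; i++) {
            System.out.println(randomRecord(rnd));
        }
    }
}
```

Redirecting the output to a file gives an input that matches the layout the mapper expects: phone number in the second column, upstream and downstream traffic in the third- and second-to-last columns.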

2.2 Final output format:

Phone number, upstream traffic, downstream traffic, total traffic

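3. Basic approach:

3.1 Map stage:

(1) Read one line of input and convert it to a String

(2) Split the line into fields

(3) Extract the phone number, upstream traffic, and downstream traffic

(4) Package the result with the phone number as the key and a bean object (upstream traffic, downstream traffic, total traffic) as the value

(5) Emit the pair, i.e. context.write(phone number, bean)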
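The five map steps above can be sketched in plain Java without any Hadoop dependency. This is only an illustration: `MapStepSketch` and `mapLine` are hypothetical names, the sample line is invented, and a `long[]` stands in for the bean.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.Map;

// Hypothetical stand-in for the map step; not the actual Hadoop Mapper.
class MapStepSketch {

    // Returns (phone number, {upstream, downstream, total}) for one input line.
    static Map.Entry<String, long[]> mapLine(String line) {
        String[] fields = line.split("\t");                    // step 2
        String phone = fields[1];                              // step 3
        long up = Long.parseLong(fields[fields.length - 3]);
        long down = Long.parseLong(fields[fields.length - 2]);
        return new SimpleEntry<>(phone, new long[] {up, down, up + down}); // step 4
    }

    public static void main(String[] args) {
        // Made-up record in the 10-field, tab-separated layout
        String line = "1363157985066\t13726230503\t00-FD-07-A4-72-B8\t120.196.100.82"
                + "\texample.com\t24\t27\t2481\t24681\t200";
        Map.Entry<String, long[]> kv = mapLine(line);
        long[] v = kv.getValue();
        // Step 5: in the real job this would be context.write(key, value)
        System.out.println(kv.getKey() + "\t" + v[0] + "\t" + v[1] + "\t" + v[2]);
    }
}
```

Indexing the traffic fields from the end of the array rather than from the start keeps the extraction working if an optional middle field is missing from a line.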

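3.2 Reduce stage

(1) Iterate over the beans for each phone number, accumulate the upstream and downstream traffic, and add the two sums to get the total traffic

(2) A custom bean encapsulates the traffic information; in this example it travels as the map output value, with the phone number as the key

(3) An MR program sorts the data while processing it (the map output kv pairs are sorted before they reach reduce). The sort is based on the map output key, so here the records arrive at the reducer grouped and ordered by phone number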

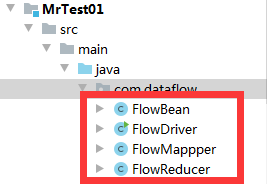
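To see what grouping by key and summing looks like, here is a small plain-Java simulation of the shuffle and reduce steps. It is a sketch under simplifying assumptions, not how Hadoop is implemented: a `TreeMap` imitates the sorted-by-key grouping, and `ReduceStepSketch` and `sumByPhone` are hypothetical names.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical simulation of shuffle + reduce; not the Hadoop Reducer itself.
class ReduceStepSketch {

    // Each input row is {phone, upstream, downstream}, as a mapper might emit it.
    // The result maps phone -> {total upstream, total downstream, overall total}.
    static Map<String, long[]> sumByPhone(String[][] mapOutput) {
        Map<String, long[]> totals = new TreeMap<>(); // keys kept in sorted order
        for (String[] kv : mapOutput) {
            long[] t = totals.computeIfAbsent(kv[0], k -> new long[3]);
            t[0] += Long.parseLong(kv[1]);
            t[1] += Long.parseLong(kv[2]);
            t[2] = t[0] + t[1];
        }
        return totals;
    }

    public static void main(String[] args) {
        String[][] mapOutput = {
            {"13900000001", "10", "20"},
            {"13800000001", "1", "2"},
            {"13900000001", "5", "5"},
        };
        for (Map.Entry<String, long[]> e : sumByPhone(mapOutput).entrySet()) {
            long[] t = e.getValue();
            System.out.println(e.getKey() + "\t" + t[0] + "\t" + t[1] + "\t" + t[2]);
        }
    }
}
```

In the real job the framework does the grouping, and the reducer only sees one key together with an iterable of its values.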

4. Implementation

4.1 The traffic-statistics bean: FlowBean.java

package com.dataflow;

import org.apache.hadoop.io.Writable;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

// Implements the Writable interface so Hadoop can serialize the bean between map and reduce
public class FlowBean implements Writable {

    private long upflow;    // upstream traffic
    private long downflow;  // downstream traffic
    private long sumflow;   // upflow + downflow

    // A no-arg constructor is required: Hadoop creates instances by reflection when deserializing
    public FlowBean() {
        super();
    }

    public FlowBean(long upflow, long downflow) {
        super();
        this.upflow = upflow;
        this.downflow = downflow;
        this.sumflow = upflow + downflow;
    }

    // Sets both traffic values and recomputes the total; lets one instance be reused
    public void set(long upflow, long downflow) {
        this.upflow = upflow;
        this.downflow = downflow;
        this.sumflow = upflow + downflow;
    }

    // Serialization: writes the fields to the output stream
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeLong(upflow);
        out.writeLong(downflow);
        out.writeLong(sumflow);
    }

    // Deserialization: must read the fields in exactly the order write() wrote them
    @Override
    public void readFields(DataInput in) throws IOException {
        this.upflow = in.readLong();
        this.downflow = in.readLong();
        this.sumflow = in.readLong();
    }

    // Defines the text format of the value in the final output file
    @Override
    public String toString() {
        return upflow + "\t" + downflow + "\t" + sumflow;
    }

    public long getUpflow() {
        return upflow;
    }

    public void setUpflow(long upflow) {
        this.upflow = upflow;
    }

    public long getDownflow() {
        return downflow;
    }

    public void setDownflow(long downflow) {
        this.downflow = downflow;
    }

    public long getSumflow() {
        return sumflow;
    }

    public void setSumflow(long sumflow) {
        this.sumflow = sumflow;
    }

}
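The requirement that the read order match the write order can be checked without Hadoop, because `DataOutput` and `DataInput` are plain JDK interfaces. The sketch below uses a hypothetical `FlowRecord` class that mirrors the bean's `write`/`readFields` pair and round-trips it through a byte array; it is an illustration, not the post's `FlowBean`.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical Hadoop-free mirror of the bean's serialization logic.
class FlowRecord {
    long upflow;
    long downflow;
    long sumflow;

    FlowRecord() {}

    FlowRecord(long upflow, long downflow) {
        this.upflow = upflow;
        this.downflow = downflow;
        this.sumflow = upflow + downflow;
    }

    void write(DataOutput out) throws IOException {
        out.writeLong(upflow);
        out.writeLong(downflow);
        out.writeLong(sumflow);
    }

    // Reads the fields in the same order write() emitted them
    void readFields(DataInput in) throws IOException {
        upflow = in.readLong();
        downflow = in.readLong();
        sumflow = in.readLong();
    }
}

class RoundTripDemo {
    // Serializes to a byte array and deserializes into a fresh instance.
    static FlowRecord roundTrip(FlowRecord src) {
        try {
            ByteArrayOutputStream buf = new ByteArrayOutputStream();
            src.write(new DataOutputStream(buf));
            FlowRecord copy = new FlowRecord();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(buf.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FlowRecord copy = roundTrip(new FlowRecord(2481, 24681));
        System.out.println(copy.upflow + "\t" + copy.downflow + "\t" + copy.sumflow);
    }
}
```

If `readFields` read `downflow` before `upflow`, the round trip would still succeed but the two values would come back swapped, which is why the order matters.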

4.2 Mapper stage: FlowMappper.java

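package com.dataflow;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

// Input: (byte offset, line of text); output: (phone number, FlowBean)
public class FlowMappper extends Mapper<LongWritable, Text, Text, FlowBean> {

    // Output key and value objects, reused across map() calls
    private final Text k = new Text();
    private final FlowBean v = new FlowBean();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // Read one line and split it into tab-separated fields
        String line = value.toString();
        String[] fields = line.split("\t");

        // Phone number is the second field; traffic fields are counted from the end
        String phNum = fields[1];
        long upFlow = Long.parseLong(fields[fields.length - 3]);
        long downFlow = Long.parseLong(fields[fields.length - 2]);

        // Fill the reusable key/value objects and emit the pair
        k.set(phNum);
        v.set(upFlow, downFlow);
        context.write(k, v);
    }

}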
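The mapper above assumes every line is well formed; a short or non-numeric line would throw and fail the task. One possible way to guard against that, sketched here in plain Java with hypothetical names (`SafeLineParser`, `parseFlows`), is to validate before parsing and skip lines that do not fit. The minimum of 5 fields is an assumption derived from the indexes the mapper uses, not something the original post specifies.

```java
import java.util.Optional;

// Hypothetical validation helper; the original mapper does no such checking.
class SafeLineParser {

    // Returns {upstream, downstream}, or empty if the line cannot be parsed.
    static Optional<long[]> parseFlows(String line) {
        String[] fields = line.split("\t");
        // Need at least: timestamp, phone, upstream, downstream, response code
        if (fields.length < 5) {
            return Optional.empty();
        }
        try {
            long up = Long.parseLong(fields[fields.length - 3].trim());
            long down = Long.parseLong(fields[fields.length - 2].trim());
            return Optional.of(new long[] {up, down});
        } catch (NumberFormatException e) {
            return Optional.empty(); // non-numeric traffic field
        }
    }

    public static void main(String[] args) {
        System.out.println(parseFlows("not a record").isPresent());
        System.out.println(parseFlows("ts\t13800000001\t10\t20\t200").isPresent());
    }
}
```

Inside a real `map()` method the empty case would simply `return` without calling `context.write`.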

4.3 Reduce stage: FlowReducer.java

package com.dataflow;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

// Input: (phone number, all FlowBeans for that number); output: (phone number, summed FlowBean)
public class FlowReducer extends Reducer<Text, FlowBean, Text, FlowBean> {

    @Override
    protected void reduce(Text key, Iterable<FlowBean> values, Context context)
            throws IOException, InterruptedException {
        long sumUpFlow = 0;
        long sumDownFlow = 0;

        // Accumulate upstream and downstream traffic for this phone number
        for (FlowBean flowBean : values) {
            sumUpFlow += flowBean.getUpflow();
            sumDownFlow += flowBean.getDownflow();
        }

        // The constructor computes the total as upstream + downstream
        FlowBean v = new FlowBean(sumUpFlow, sumDownFlow);
        context.write(key, v);
    }

}

4.4 Driver stage: FlowDriver.java (launches the job)

package com.dataflow;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FlowDriver {

    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        // Create the job from a default configuration
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // Tell Hadoop which jar, mapper, and reducer to use
        job.setJarByClass(FlowDriver.class);
        job.setMapperClass(FlowMappper.class);
        job.setReducerClass(FlowReducer.class);

        // Key/value types of the map output and of the final output
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(FlowBean.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(FlowBean.class);

        // Hard-coded local paths for testing; the output directory must not already exist
        FileInputFormat.setInputPaths(job, new Path("E:/dataflow.txt"));
        FileOutputFormat.setOutputPath(job, new Path("E:/BigData"));

        // Use these instead to take the paths from the command line
        // FileInputFormat.setInputPaths(job, new Path(args[0]));
        // FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Submit the job and wait for it to finish
        boolean result = job.waitForCompletion(true);
        System.out.println(result ? "Job finished successfully; the results are ready." : "Job failed; check the logs and fix the bug.");
        System.exit(result ? 0 : 1);
    }

}