【机器学习】【线性回归】梯度下降

文章目录

-

- @[toc]

-

- 数据集

- 实际值

- 估计值

- 估计误差

- 代价函数

- 学习率

- 参数更新

- `Python`实现

- 线性拟合结果

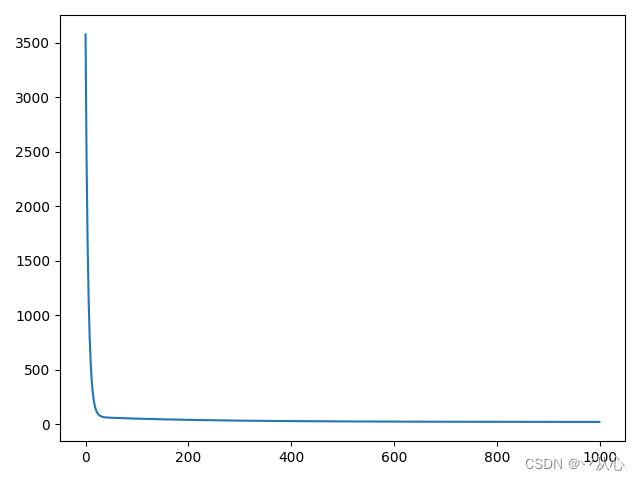

- 代价结果

文章目录

-

- @[toc]

-

- 数据集

- 实际值

- 估计值

- 估计误差

- 代价函数

- 学习率

- 参数更新

- `Python`实现

- 线性拟合结果

- 代价结果

数据集

( x ( i ) , y ( i ) ) , i = 1 , 2 , ⋯ , m \left(x^{(i)} , y^{(i)}\right) , i = 1 , 2 , \cdots , m (x(i),y(i)),i=1,2,⋯,m

实际值

y ( i ) y^{(i)} y(i)

估计值

h θ ( x ( i ) ) = θ 0 + θ 1 x ( i ) h_{\theta}{\left(x^{(i)}\right)} = \theta_{0} + \theta_{1}{x^{(i)}} hθ(x(i))=θ0+θ1x(i)

估计误差

h θ ( x ( i ) ) − y ( i ) h_{\theta}{\left(x^{(i)}\right)} - y^{(i)} hθ(x(i))−y(i)

代价函数

J ( θ ) = J ( θ 0 , θ 1 ) = 1 2 m ∑ i = 1 m ( h θ ( x ( i ) ) − y ( i ) ) 2 = 1 2 m ∑ i = 1 m ( θ 0 + θ 1 x ( i ) − y ( i ) ) 2 J(\theta) = J(\theta_{0} , \theta_{1}) = \cfrac{1}{2m} \displaystyle\sum\limits_{i = 1}^{m}{\left(h_{\theta}{\left(x^{(i)}\right)} - y^{(i)}\right)^{2}} = \cfrac{1}{2m} \displaystyle\sum\limits_{i = 1}^{m}{\left(\theta_{0} + \theta_{1}{x^{(i)}} - y^{(i)}\right)^{2}} J(θ)=J(θ0,θ1)=2m1i=1∑m(hθ(x(i))−y(i))2=2m1i=1∑m(θ0+θ1x(i)−y(i))2

学习率

- α \alpha α是学习率,一个大于 0 0 0的很小的经验值,决定代价函数下降的程度

参数更新

Δ θ j = ∂ ∂ θ j J ( θ 0 , θ 1 ) \Delta{\theta_{j}} = \cfrac{\partial}{\partial{\theta_{j}}} J(\theta_{0} , \theta_{1}) Δθj=∂θj∂J(θ0,θ1)

θ j : = θ j − α Δ θ j = θ j − α ∂ ∂ θ j J ( θ 0 , θ 1 ) \theta_{j} := \theta_{j} - \alpha \Delta{\theta_{j}} = \theta_{j} - \alpha \cfrac{\partial}{\partial{\theta_{j}}} J(\theta_{0} , \theta_{1}) θj:=θj−αΔθj=θj−α∂θj∂J(θ0,θ1)

[ θ 0 θ 1 ] : = [ θ 0 θ 1 ] − α [ ∂ J ( θ 0 , θ 1 ) ∂ θ 0 ∂ J ( θ 0 , θ 1 ) ∂ θ 1 ] \left[ \begin{matrix} \theta_{0} \\ \theta_{1} \end{matrix} \right] := \left[ \begin{matrix} \theta_{0} \\ \theta_{1} \end{matrix} \right] - \alpha \left[ \begin{matrix} \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{0}}} \\ \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{1}}} \end{matrix} \right] [θ0θ1]:=[θ0θ1]−α ∂θ0∂J(θ0,θ1)∂θ1∂J(θ0,θ1)

[ ∂ J ( θ 0 , θ 1 ) ∂ θ 0 ∂ J ( θ 0 , θ 1 ) ∂ θ 1 ] = [ 1 m ∑ i = 1 m ( h θ ( x ( i ) ) − y ( i ) ) 1 m ∑ i = 1 m ( h θ ( x ( i ) ) − y ( i ) ) x ( i ) ] = [ 1 m ∑ i = 1 m e ( i ) 1 m ∑ i = 1 m e ( i ) x ( i ) ] e ( i ) = h θ ( x ( i ) ) − y ( i ) \left[ \begin{matrix} \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{0}}} \\ \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{1}}} \end{matrix} \right] = \left[ \begin{matrix} \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{\left(h_{\theta}{\left(x^{(i)}\right)} - y^{(i)}\right)} \\ \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{\left(h_{\theta}{\left(x^{(i)}\right)} - y^{(i)}\right) x^{(i)}} \end{matrix} \right] = \left[ \begin{matrix} \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{e^{(i)}} \\ \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{e^{(i)} x^{(i)}} \end{matrix} \right] \kern{2em} e^{(i)} = h_{\theta}{\left(x^{(i)}\right)} - y^{(i)} ∂θ0∂J(θ0,θ1)∂θ1∂J(θ0,θ1) = m1i=1∑m(hθ(x(i))−y(i))m1i=1∑m(hθ(x(i))−y(i))x(i) = m1i=1∑me(i)m1i=1∑me(i)x(i) e(i)=hθ(x(i))−y(i)

[ ∂ J ( θ 0 , θ 1 ) ∂ θ 0 ∂ J ( θ 0 , θ 1 ) ∂ θ 1 ] = [ 1 m ∑ i = 1 m e ( i ) 1 m ∑ i = 1 m e ( i ) x ( i ) ] = [ 1 m ( e ( 1 ) + e ( 2 ) + ⋯ + e ( m ) ) 1 m ( e ( 1 ) + e ( 2 ) + ⋯ + e ( m ) ) x ( i ) ] = 1 m [ 1 1 ⋯ 1 x ( 1 ) x ( 2 ) ⋯ x ( m ) ] [ e ( 1 ) e ( 2 ) ⋮ e ( m ) ] = 1 m X T e = 1 m X T ( X θ − y ) \begin{aligned} \left[ \begin{matrix} \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{0}}} \\ \cfrac{\partial{J(\theta_{0} , \theta_{1})}}{\partial{\theta_{1}}} \end{matrix} \right] &= \left[ \begin{matrix} \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{e^{(i)}} \\ \cfrac{1}{m} \displaystyle\sum\limits_{i = 1}^{m}{e^{(i)} x^{(i)}} \end{matrix} \right] = \left[ \begin{matrix} \cfrac{1}{m} \left(e^{(1)} + e^{(2)} + \cdots + e^{(m)}\right) \\ \cfrac{1}{m} \left(e^{(1)} + e^{(2)} + \cdots + e^{(m)}\right) x^{(i)} \end{matrix} \right] \\ &= \cfrac{1}{m} \left[ \begin{matrix} 1 & 1 & \cdots & 1 \\ x^{(1)} & x^{(2)} & \cdots & x^{(m)} \end{matrix} \right] \left[ \begin{matrix} e^{(1)} \\ e^{(2)} \\ \vdots \\ e^{(m)} \end{matrix} \right] = \cfrac{1}{m} X^{T} e = \cfrac{1}{m} X^{T} (X \theta - y) \end{aligned} ∂θ0∂J(θ0,θ1)∂θ1∂J(θ0,θ1) = m1i=1∑me(i)m1i=1∑me(i)x(i) = m1(e(1)+e(2)+⋯+e(m))m1(e(1)+e(2)+⋯+e(m))x(i) =m1[1x(1)1x(2)⋯⋯1x(m)] e(1)e(2)⋮e(m) =m1XTe=m1XT(Xθ−y)

- 由上述推导得

Δ θ = 1 m X T e \Delta{\theta} = \cfrac{1}{m} X^{T} e Δθ=m1XTe

θ : = θ − α Δ θ = θ − α 1 m X T e \theta := \theta - \alpha \Delta{\theta} = \theta - \alpha \cfrac{1}{m} X^{T} e θ:=θ−αΔθ=θ−αm1XTe

Python实现

import numpy as np

import matplotlib.pyplot as plt

x = np.array([4, 3, 3, 4, 2, 2, 0, 1, 2, 5, 1, 2, 5, 1, 3])

y = np.array([8, 6, 6, 7, 4, 4, 2, 4, 5, 9, 3, 4, 8, 3, 6])

m = len(x)

x = np.c_[np.ones([m, 1]), x]

y = y.reshape(m, 1) # 转成列向量

theta = np.zeros([2, 1])

alpha = 0.01

iter_cnt = 1000 # 迭代次数

cost = np.zeros([iter_cnt]) # 代价数据

for i in range(iter_cnt):

h = x.dot(theta) # 估计值

error = h - y # 误差值

cost[i] = 1 / 2 * m * error.T.dot(error) # 代价值

# 更新参数

delta_theta = 1 / m * x.T.dot(error)

theta -= alpha * delta_theta

# 线性拟合结果

plt.scatter(x[:, 1], y, c='blue')

plt.plot(x[:, 1], h, 'r-')

plt.show()

# 代价结果

plt.plot(cost)

plt.show()