k8s的二进制部署(一)

k8s的二进制部署:源码包部署

环境:

k8smaster01: 20.0.0.71 kube-apiserver kube-controller-manager kube-schedule ETCD

k8smaster02: 20.0.0.72 kube-apiserver kube-controller-manager kube-schedule

Node节点01: 20.0.0.73 kubelet kube-proxy ETCD

Node节点01: 20.0.0.74 kubelet kube-proxy ETCD

负载均衡:nginx+keepalived:

master:20.0.0.75 backup:20.0.0.0.76

ETCD:20.0.0.71

20.0.0.73

20.0.0.74

开始实验:

所有 :master01 node 1,2

systemctl stop firewalld

setenforce 0

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

swapoff -a

###

swapoff -a

k8s在设计时,为了提升性能,默认是不使用swap分区,kubenetes在初始化时,会检测swap是否关闭

##

master1:

hostnamectl set-hostname master01

node1:

hostnamectl set-hostname node01

node2:

hostnamectl set-hostname node02

所有

vim /etc/hosts

20.0.0.71 master01

20.0.0.72 node01

20.0.0.73 node02

vim /etc/sysctl.d/k8s.conf

#开启网桥模式:

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

#网桥的流量传给iptables链,实现地址映射

#关闭ipv6的流量(可选项)

net.ipv6.conf.all.disable_ipv6=1

#根据工作中的实际情况,自定

net.ipv4.ip_forward=1

wq!

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

##

sysctl --system

yum install ntpdate -y

ntpdate ntp.aliyun.com

date

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce docker-ce-cli containerd.io

systemctl start docker.service

systemctl enable docker.service

mkdir -p /etc/docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://t7pjr1xu.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload

systemctl restart docker

部署第一个组件:

存储k8s的集群信息和用户配置组件:etcd

etcd是一个高可用---分布式的键值对存储数据库

采用raft算法保证节点的信息一致性,etcd是用go语言写的

etcd的端口:2379:api接口 对外为客户端提供通讯

2380:内存服务的通信端口

etcd一般都是集群部署,etcd也有选举leader的机制,至少要3台,或者奇数台

k8s的内部通信依靠证书认证,密钥认证:证书的签发环境

关闭全部操作

master01:

cd /opt

投进来三个证书

mv cfssl cfssl-certinfo cfssljson /usr/local/bin

chmod 777 /usr/local/bin/cfssl*

##

cfssl:证书签发的命令工具

cdssl-certinfo:查看证书信息的工具

cfssljson:把证书的格式转化为json格式,变成文件的承载式证书

##

cd /opt

mkdir k8s

cd k8s

把两个证书投进去:

vim etcd-cert.sh

vim etcd.sh

q!

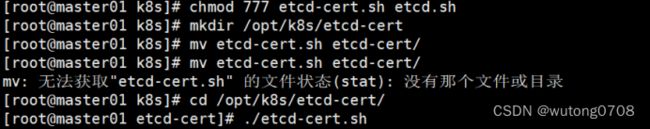

chmod 777 etcd-cert.sh etcd.sh

mkdir /opt/k8s/etcd-cert

mv etcd-cert.sh etcd-cert/

cd /opt/k8s/etcd-cert/

./etcd-cert.sh

Ls

##

ca-config.json ca-csr.json ca.pem server.csr server-key.pem

ca.csr ca-key.pem etcd-cert.sh server-csr.json server.pem

解析:

ca-config.json: 证书颁发的配置文件,定义了证书生成的策略,默认的过期时间和模版

ca-csr.json: 签名的请求文件,包括一些组织信息和加密方式

ca.pem 根证书文件,用于给其他组件签发证书

server.csr etcd的服务器签发证书的请求文件

server-key.pem etcd服务器的私钥文件

ca.csr 根证书签发请求文件

ca-key.pem 根证书私钥文件

server-csr.json 用于生成etcd的服务器证书和私钥签名文件

server.pem etcd服务器证书文件,用于加密和认证 etcd 节点之间的通信。

###

cd /opt/k8s/

把 etcd-v3.4.9-linux-amd64.tar.gz 拖进去

tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

ls etcd-v3.4.9-linux-amd64

mkdir -p /opt/etcd/{cfg,bin,ssl}

cd etcd-v3.4.9-linux-amd64

mv etcd etcdctl /opt/etcd/bin/

cp /opt/k8s/etcd-cert/*.pem /opt/etcd/ssl/

cd /opt/k8s/

./etcd.sh etcd01 20.0.0.71 etcd02=https://20.0.0.72:2380,etcd03=https://20.0.0.73:2380

开一台master1终端

scp -r /opt/etcd/ [email protected]:/opt/

scp -r /opt/etcd/ [email protected]:/opt/

scp /usr/lib/systemd/system/etcd.service [email protected]:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service [email protected]:/usr/lib/systemd/system/

node1:

vim /opt/etcd/cfg/etcd

node2:

vim /opt/etcd/cfg/etcd

一个一个起(谁先启谁就是主节点)

从master01开始

systemctl start etcd

systemctl enable etcd

systemctl status etcd

master:

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://20.0.0.71:2379,https://20.0.0.72:2379,https://20.0.0.73:2379" endpoint health --write-out=table

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://20.0.0.71:2379,https://20.0.0.72:2379,https://20.0.0.73:2379" --write-out=table member list

master01:

#上传 master.zip 和 k8s-cert.sh 到 /opt/k8s 目录中,解压 master.zip 压缩包

cd /opt/k8s/

vim k8s-cert.sh

unzip master.zip

chmod 777 *.sh

vim controller-manager.sh

vim scheduler.sh

vim admin.sh

##

#创建kubernetes工作目录

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

mkdir /opt/k8s/k8s-cert

mv /opt/k8s/k8s-cert.sh /opt/k8s/k8s-cert

cd /opt/k8s/k8s-cert/

./k8s-cert.sh

Ls

cp ca*pem apiserver*pem /opt/kubernetes/ssl/

cd /opt/kubernetes/ssl/

Ls

cd /opt/k8s/

把 kubernetes-server-linux-amd64.tar.gz 拖进去

tar zxvf kubernetes-server-linux-amd64.tar.gz

cd /opt/k8s/kubernetes/server/bin

cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

ln -s /opt/kubernetes/bin/* /usr/local/bin/

kubectl get node

kubectl get cs

cd /opt/k8s/

vim token.sh

#!/bin/bash

#获取随机数前16个字节内容,以十六进制格式输出,并删除其中空格

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

#生成 token.csv 文件,按照 Token序列号,用户名,UID,用户组 的格式生成

cat > /opt/kubernetes/cfg/token.csv < ${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap" EOF chmod 777 token.sh ./token.sh cat /opt/kubernetes/cfg/token.csv cd /opt/k8s/ ./apiserver.sh 20.0.0.71 https://20.0.0.71:2379,https://20.0.0.72:2379,https://20.0.0.73:2379 netstat -natp | grep 6443 cd /opt/k8s/ ./scheduler.sh ps aux | grep kube-scheduler ./controller-manager.sh ps aux | grep kube-controller-manager ./admin.sh kubectl get cs kubectl version kubectl api-resources vim /etc/profile G o source < (kubectl completion bash) source /etc/profile Master1操作完毕,接下来操作node1,2 在所有 node 节点上操作 node1,2: mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs} cd /opt node.zip 到 /opt 目录中 unzip node.zip chmod 777 kubelet.sh proxy.sh 关闭同步操作: master01: cd /opt/k8s/kubernetes/server/bin scp kubelet kube-proxy [email protected]:/opt/kubernetes/bin/ scp kubelet kube-proxy [email protected]:/opt/kubernetes/bin/ master 01: mkdir -p /opt/k8s/kubeconfig cd /opt/k8s/kubeconfig 把kubeconfig.sh 拖进去 chmod 777 kubeconfig.sh ./kubeconfig.sh 20.0.0.71 /opt/k8s/k8s-cert/ scp bootstrap.kubeconfig kube-proxy.kubeconfig [email protected]:/opt/kubernetes/cfg/ scp bootstrap.kubeconfig kube-proxy.kubeconfig [email protected]:/opt/kubernetes/cfg/ node1,2: cd /opt/kubernetes/cfg/ ls master01: kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap #RBAC授权,使用户 kubelet-bootstrap 能够有权限发起 CSR 请求证书,通过CSR加密认证实现INIDE节点加入到集群当中,kubelet获取master的验证信息和获取API的验证 kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous node1: cd /opt/ chmod 777 kubelet.sh ./kubelet.sh 20.0.0.72 master01: kubectl get cs kubectl get csr NAME AGE SIGNERNAME REQUESTOR CONDITION node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 12s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending kubectl certificate approve node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE ##上面的密钥对 kubectl get csr NAME AGE SIGNERNAME REQUESTOR CONDITION node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 2m5s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued ##必须是Approved,Issued 才成功 node2: cd /opt/ chmod 777 kubelet.sh ./kubelet.sh 20.0.0.73 master01: kubectl get cs kubectl get csr NAME AGE SIGNERNAME REQUESTOR CONDITION node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 12s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending kubectl certificate approve node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE ##上面的密钥对 kubectl get csr NAME AGE SIGNERNAME REQUESTOR CONDITION node-csr-duiobEzQ0R93HsULoS9NT9JaQylMmid_nBF3Ei3NtFE 2m5s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued ##必须是Approved,Issued 才成功 master01: kubectl get node