Introduction to K8s Resource Management

Use the kube-flannel.yml below, downloaded from the official site; with it the nodes no longer get stuck in NotReady.

---

kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged

---

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
- apiGroups:
  - networking.k8s.io
  resources:
  - clustercidrs
  verbs:
  - list
  - watch

---

kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel

---

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel

---

kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: docker.io/flannel/flannel-cni-plugin:v1.2.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: docker.io/flannel/flannel:v0.24.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: docker.io/flannel/flannel:v0.24.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

The cluster setup process is omitted here.
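Applying the manifest and checking the result can be sketched as follows. This is a minimal sketch, assuming the manifest above is saved as kube-flannel.yml in the current directory and that kubectl already points at the cluster. The function name `install_flannel` and the 120-second timeouts are illustrative choices, not part of the original notes.

```shell
#!/bin/sh
# Hypothetical helper (name and timeouts are assumptions for illustration).
# Applies the flannel manifest, waits for the DaemonSet to roll out,
# then waits for every node to report the Ready condition.
install_flannel() {
  manifest="${1:-kube-flannel.yml}"

  # Create or update all objects defined in the manifest.
  kubectl apply -f "$manifest" || return 1

  # Block until the flannel pods are running on every eligible node.
  kubectl -n kube-flannel rollout status daemonset/kube-flannel-ds --timeout=120s || return 1

  # Nodes can only become Ready once a CNI plugin is in place.
  kubectl wait --for=condition=Ready node --all --timeout=120s
}
```

Calling `install_flannel` with no argument uses kube-flannel.yml; a non-zero exit status means one of the steps failed or timed out.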

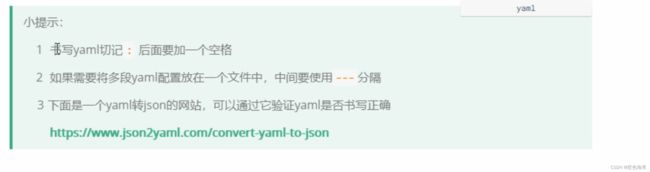

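1. Introduction to resource management

2. Imperative object management

The kubectl command

kubectl is the command-line tool for a Kubernetes cluster. It manages the cluster itself and installs and deploys containerized applications onto it. The syntax of a kubectl command is:

kubectl [command] [type] [name] [flags]

command: the operation to perform on the resource, for example create, get, delete

type: the resource type, for example deployment, pod, service

name: the name of the resource; names are case-sensitive

flags: additional optional parameters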
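To make the four slots concrete, the sketch below assembles a command line from them and prints it instead of running it. The helper name `kubectl_cmdline` is hypothetical and exists only for illustration.

```shell
#!/bin/sh
# Hypothetical helper: builds "kubectl [command] [type] [name] [flags]"
# from its parts and prints the resulting command line without running it.
kubectl_cmdline() {
  op="$1"      # operation, e.g. get, create, delete
  kind="$2"    # resource type, e.g. pod, deployment, service
  name="$3"    # resource name (case-sensitive); may be empty
  shift 3      # everything left over is treated as flags
  flags="$*"

  # ${var:+ $var} adds the part only when it is non-empty.
  echo "kubectl $op $kind${name:+ $name}${flags:+ $flags}"
}

kubectl_cmdline get pod "" -n dev        # prints: kubectl get pod -n dev
kubectl_cmdline get pod nginx -o yaml    # prints: kubectl get pod nginx -o yaml
```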

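# List all pods
kubectl get pod

# Show a single pod
kubectl get pod pod_name

# Show a single pod, with the result in YAML format
kubectl get pod pod_name -o yaml

Resource types

Everything in Kubernetes is abstracted as a resource. The available resource types can be listed with the command below: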

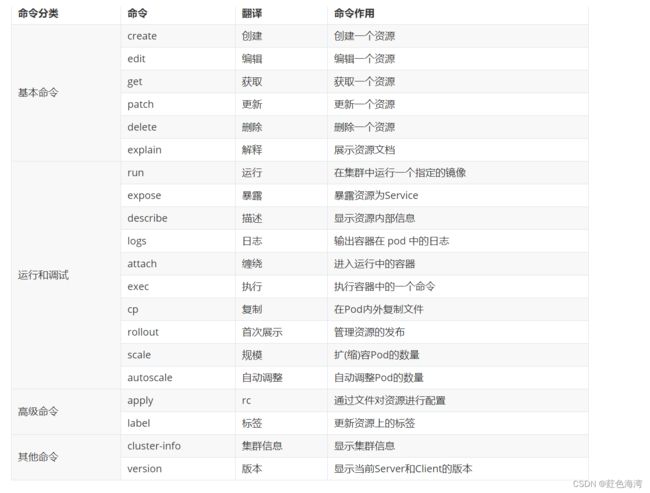

kubectl api-resources

kubectl --help

The most frequently used operations are the following:
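As a rough guide to the operations that come up most often, the sketch below prints a small cheat sheet. The selection of subcommands is an assumption made for illustration (every entry is a real kubectl subcommand, but this is not the author's original list), and the helper names `kubectl_cheatsheet` and `kubectl_usage` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: prints an illustrative (not exhaustive) list of
# frequently used kubectl subcommands with a one-line description each.
kubectl_cheatsheet() {
  cat <<'EOF'
create    create a resource from a file or from command-line flags
get       display one or more resources
describe  show detailed information about a resource
edit      edit a resource in an editor
patch     update selected fields of a resource
delete    delete resources
apply     apply a configuration file to a resource
explain   show documentation for a resource type
run       run an image on the cluster
logs      print the logs of a container in a pod
exec      execute a command in a container
EOF
}

# Hypothetical helper: detailed usage for a subcommand comes from kubectl itself.
kubectl_usage() {
  kubectl "$1" --help
}

kubectl_cheatsheet
```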

The creation and deletion of a namespace and a pod below give a quick demonstration of these commands:

# Create a namespace
[root@master ~]# kubectl create namespace dev
namespace/dev created

# List namespaces
[root@master ~]# kubectl get ns
NAME              STATUS   AGE
default           Active   21h
dev               Active   21s
kube-node-lease   Active   21h
kube-public       Active   21h
kube-system       Active   21h

# Create and run an nginx Pod in this namespace (this older kubectl wraps it in a Deployment)
[root@master ~]# kubectl run pod --image=nginx -n dev
kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.
deployment.apps/pod created

# Show the newly created pod
[root@master ~]# kubectl get pod -n dev
NAME                   READY   STATUS    RESTARTS   AGE
pod-864f9875b9-pcw7x   1/1     Running   0          21s

# Delete the specified pod (it lives in the dev namespace, so -n dev is required)
[root@master ~]# kubectl delete pod pod-864f9875b9-pcw7x -n dev
pod "pod-864f9875b9-pcw7x" deleted

# Delete the specified namespace
[root@master ~]# kubectl delete ns dev
namespace "dev" deleted

3. Imperative object configuration

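Imperative object configuration means operating on Kubernetes resources with commands combined with configuration files.

1) Create a file named nginxpod.yaml with the following content:

apiVersion: v1
kind: Namespace
metadata:
  name: dev
---
apiVersion: v1
kind: Pod
metadata:
  name: nginxpod
  namespace: dev
spec:
  containers:
  - name: nginx-containers
    image: nginx:1.17.1

2) Run the create command to create the resources: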

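[root@master ~]# kubectl create -f nginxpod.yaml
namespace/dev created
pod/nginxpod created

Two resource objects have now been created: a namespace and a pod.

3) Run the get command to view the resources:

[root@master ~]# kubectl get -f nginxpod.yaml
NAME            STATUS   AGE
namespace/dev   Active   18s

NAME           READY   STATUS    RESTARTS   AGE
pod/nginxpod   1/1     Running   0          17s

This shows the information for both resource objects.

4) Run the delete command to delete the resources:

[root@master ~]# kubectl delete -f nginxpod.yaml
namespace "dev" deleted
pod "nginxpod" deleted

Both resource objects have now been deleted.

Summary:

Operating on resources through imperative object configuration can be thought of simply as: a command + a YAML configuration file (which holds the parameters the command needs).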
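The steps above can be sketched as a small script. It writes the same nginxpod.yaml with a here-document and checks it on the client side before creating anything. The function names are hypothetical, and the `--dry-run=client` flag assumes a reasonably recent kubectl (older releases spell it `--dry-run`).

```shell
#!/bin/sh
# Hypothetical helper: writes the manifest from step 1 to the given path.
write_nginxpod_manifest() {
  cat > "$1" <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: dev
---
apiVersion: v1
kind: Pod
metadata:
  name: nginxpod
  namespace: dev
spec:
  containers:
  - name: nginx-containers
    image: nginx:1.17.1
EOF
}

# Hypothetical helper: runs a client-side dry run first and creates the
# resources only if that dry run succeeded.
create_from_file() {
  kubectl create -f "$1" --dry-run=client -o name && kubectl create -f "$1"
}
```

For example, `write_nginxpod_manifest nginxpod.yaml && create_from_file nginxpod.yaml` would reproduce steps 1 and 2.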

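4. Declarative object configuration

Declarative object configuration is very similar to imperative object configuration, but it has only one command: apply.

# Run kubectl apply -f on the YAML file once; the resources are created
[root@master ~]# kubectl apply -f nginxpod.yaml
namespace/dev created
pod/nginxpod created

# Run kubectl apply -f on the same file again; the resources are reported as unchanged
[root@master ~]# kubectl apply -f nginxpod.yaml
namespace/dev unchanged
pod/nginxpod unchanged

5. Summary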

In short:

Declarative object configuration amounts to using apply to describe the final state of a resource (the state is defined in YAML).
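One way to see the "final state" idea in action is to edit the desired state in the file and let apply work out the change. The sketch below is only an illustration: the helper names are hypothetical, the new tag is whatever the caller passes, and it relies on `kubectl diff`, which is available in current kubectl releases.

```shell
#!/bin/sh
# Hypothetical helper: rewrites the nginx image tag inside a manifest.
# Prints the edited manifest to stdout and leaves the input file untouched.
set_nginx_tag() {
  sed "s|^\( *image: nginx\):.*|\1:$2|" "$1"
}

# Hypothetical helper: previews, then applies, the desired state in a file.
preview_and_apply() {
  # kubectl diff exits with 1 when differences exist, so its status is ignored.
  kubectl diff -f "$1" || true
  kubectl apply -f "$1"
}
```

For example, `set_nginx_tag nginxpod.yaml 1.17.2 > nginxpod-new.yaml` followed by `preview_and_apply nginxpod-new.yaml` would be expected to report the pod as configured rather than unchanged, because the desired state now differs from the live one.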

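Using apply to operate on a resource:

If the resource does not exist, it is created, which is equivalent to kubectl create

If the resource already exists, it is updated, which is equivalent to kubectl patch

Extension: can kubectl run on a worker node?

kubectl needs configuration to run, and that configuration lives in the $HOME/.kube directory. To run kubectl on a worker node, copy the .kube directory from the master to that node, that is, run the following on the master node:

scp -r $HOME/.kube node1:$HOME/

Recommended usage: how should the three approaches be used?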

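Create/update resources: use declarative object configuration, kubectl apply -f XXX.yaml

Delete resources: use imperative object configuration, kubectl delete -f XXX.yaml

Query resources: use imperative object management, kubectl get (or describe) <resource name>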
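These recommendations can be folded into one small wrapper. The sketch below is illustrative only; the function name `kres` and its three verbs are hypothetical, chosen to mirror the three recommendations above.

```shell
#!/bin/sh
# Hypothetical wrapper mapping the three recommendations to kubectl calls.
#   kres save   FILE         -> declarative: kubectl apply -f FILE
#   kres remove FILE         -> imperative configuration: kubectl delete -f FILE
#   kres show   TYPE [NAME]  -> imperative management: kubectl get / describe
kres() {
  action="${1:-}"
  [ "$#" -gt 0 ] && shift
  case "$action" in
    save)   kubectl apply -f "$1" ;;
    remove) kubectl delete -f "$1" ;;
    show)
      if [ "$#" -ge 2 ]; then
        kubectl describe "$1" "$2"   # one named object: full detail
      else
        kubectl get "$1"             # a whole type: summary listing
      fi
      ;;
    *)
      echo "usage: kres save|remove FILE | kres show TYPE [NAME]" >&2
      return 2
      ;;
  esac
}
```

The wrapper passes no -n flag, so namespaced objects are looked up in the namespace of the current kubectl context.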