GStreamer Study Notes (3): Creating a Pipeline Dynamically

Contents

- Example
  - Sample code
  - Output
- Concepts
  - Signals
  - GStreamer States
- Code walkthrough
  - Pipeline creation
  - Callback
- Exercise
  - Code
  - Result

These notes summarize what I learned from the official GStreamer site. For the details, see the official GStreamer tutorial on dynamic pipelines.

Example

As usual, let's start with the sample code from the official site:

Sample code

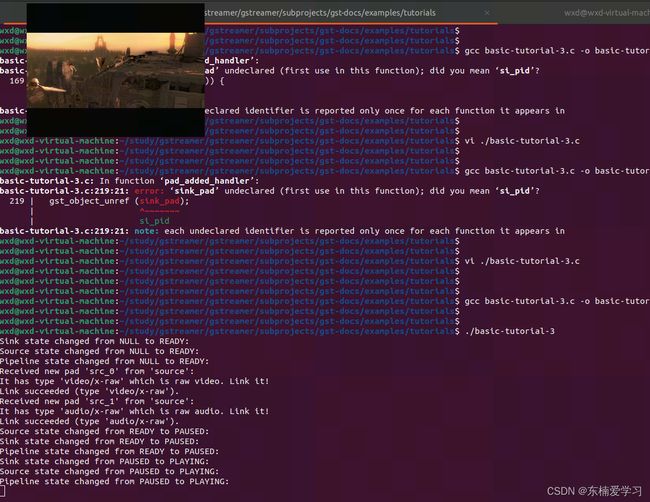

#include

Output

While the program runs, audio plays through the headphones (this tutorial only plays the audio part of the stream).

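Concepts

Signals

Signals let you run a specified action when an event of interest occurs. Each signal is identified by its name, and every GObject has its own set of signals.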
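To make the idea of "callbacks looked up by signal name" concrete, here is a toy model in plain C. It is not how GObject implements signals and it makes no GStreamer calls; `ToyObject`, `toy_signal_connect()`, `toy_signal_emit()` and `count_calls()` are hypothetical names invented for this sketch.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define TOY_MAX_HANDLERS 8

/* Hypothetical callback type: receives the user data given at connect time. */
typedef void (*ToyCallback) (void *user_data);

typedef struct {
  const char *signal_name;   /* signals are told apart by name */
  ToyCallback callback;
  void *user_data;
} ToyHandler;

/* Each object keeps its own list of handlers. */
typedef struct {
  ToyHandler handlers[TOY_MAX_HANDLERS];
  size_t count;
} ToyObject;

/* Register a callback for a named signal; returns 0 on success, -1 if full. */
int toy_signal_connect (ToyObject *obj, const char *signal_name,
    ToyCallback callback, void *user_data)
{
  assert (obj != NULL && signal_name != NULL && callback != NULL);
  if (obj->count >= TOY_MAX_HANDLERS)
    return -1;
  obj->handlers[obj->count].signal_name = signal_name;
  obj->handlers[obj->count].callback = callback;
  obj->handlers[obj->count].user_data = user_data;
  obj->count++;
  return 0;
}

/* Call every handler registered under signal_name; returns how many ran. */
int toy_signal_emit (ToyObject *obj, const char *signal_name)
{
  int invoked = 0;
  size_t i;

  assert (obj != NULL && signal_name != NULL);
  for (i = 0; i < obj->count; i++) {
    if (strcmp (obj->handlers[i].signal_name, signal_name) == 0) {
      obj->handlers[i].callback (obj->handlers[i].user_data);
      invoked++;
    }
  }
  return invoked;
}

/* Example callback: treats user_data as an int counter and increments it. */
void count_calls (void *user_data)
{
  int *counter = user_data;
  (*counter)++;
}
```

Connecting `count_calls` to the name "pad-added" and then emitting "pad-added" runs the callback once with the pointer supplied at connect time, which is the same pattern the tutorial uses when it passes `&data` to `g_signal_connect()`.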

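GStreamer States

GStreamer has four states:

| State | Description |
|---|---|
| NULL | The initial state of an element. |
| READY | The element is ready to go to PAUSED. |
| PAUSED | The element is paused; it is ready to accept and process data. Sink elements, however, accept only one buffer and then block. |
| PLAYING | The element is playing and data is flowing. |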

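Note that you cannot jump straight from NULL to PLAYING. The states must be traversed in the order NULL --> READY --> PAUSED --> PLAYING. If you set the pipeline to PLAYING, GStreamer performs the intermediate transitions for you.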
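The stepping rule can be pictured with a small toy model in plain C. It makes no GStreamer calls; the `ToyState` enum and `toy_set_state()` are hypothetical names invented for this sketch, and real state changes can also be asynchronous or fail, which the model ignores.

```c
#include <assert.h>
#include <stddef.h>

/* Toy copy of the four states, ordered from lowest to highest. */
typedef enum {
  TOY_NULL = 0,
  TOY_READY,
  TOY_PAUSED,
  TOY_PLAYING
} ToyState;

/*
 * Walk from `current` to `target` one state at a time and record every
 * state visited in `path`. Returns the number of entries written.
 */
size_t toy_set_state (ToyState current, ToyState target,
    ToyState *path, size_t path_len)
{
  size_t n = 0;

  assert (path != NULL);
  while (current != target && n < path_len) {
    /* Never skip a state: move exactly one step toward the target. */
    if (current < target)
      current = (ToyState) (current + 1);
    else
      current = (ToyState) (current - 1);
    path[n++] = current;
  }
  return n;
}
```

Asking this model to go from `TOY_NULL` to `TOY_PLAYING` records READY, PAUSED and PLAYING in that order, which mirrors the intermediate transitions described above.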

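Every element puts messages about its current state on the pipeline's bus. (I had doubts about this at first: the code appears to read the pipeline's state from the bus, while the tutorial clearly says that elements report their state to it.) The original text reads:

Every element puts messages on the bus regarding its current state, so we filter them out and only listen to messages coming from the pipeline.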

Code walkthrough

Pipeline creation

/* Structure to contain all our information, so we can pass it to callbacks */
typedef struct _CustomData {
  GstElement *pipeline;
  GstElement *source;
  GstElement *convert;
  GstElement *resample;
  GstElement *sink;
} CustomData;

This tutorial needs a callback, so all the data is gathered into one structure that is easy to pass to it.

/* Create the elements */
data.source = gst_element_factory_make ("uridecodebin", "source");
data.convert = gst_element_factory_make ("audioconvert", "convert");
data.resample = gst_element_factory_make ("audioresample", "resample");
data.sink = gst_element_factory_make ("autoaudiosink", "sink");

Four elements are created here to build the pipeline.

The uridecodebin element internally contains everything needed (sources, demuxers, decoders) to turn a URI into raw audio and/or video streams.

if (!gst_element_link_many (data.convert, data.resample, data.sink, NULL)) {
  g_printerr ("Elements could not be linked.\n");
  gst_object_unref (data.pipeline);
  return -1;
}

The three elements other than the source are linked here. The source is linked later, which is the whole point of a dynamic pipeline.

/* Set the URI to play */
g_object_set (data.source, "uri", "https://gstreamer.freedesktop.org/data/media/sintel_trailer-480p.webm", NULL);

This sets the URI that the source will play.

/* Connect to the pad-added signal */
g_signal_connect (data.source, "pad-added", G_CALLBACK (pad_added_handler), &data);

This registers a callback for the source element's "pad-added" signal, together with the argument that will be passed to it.

Callback

Once uridecodebin has enough data, it creates its source pads. Each time it does so it emits the "pad-added" signal, which invokes the callback registered earlier.

GstPad *sink_pad = gst_element_get_static_pad (data->convert, "sink");

gst_element_get_static_pad() retrieves the named pad of an element. Linking this sink pad to the new source pad of uridecodebin completes the pipeline.

/* If our converter is already linked, we have nothing to do here */
if (gst_pad_is_linked (sink_pad)) {
  g_print ("We are already linked. Ignoring.\n");
  goto exit;
}

Before linking the sink pad, of course, we first check whether it has already been linked.

/* Check the new pad's type */
/* gst_pad_get_current_caps() takes only the pad as its argument */
new_pad_caps = gst_pad_get_current_caps (new_pad);
new_pad_struct = gst_caps_get_structure (new_pad_caps, 0);
new_pad_type = gst_structure_get_name (new_pad_struct);
if (!g_str_has_prefix (new_pad_type, "audio/x-raw")) {
  g_print ("It has type '%s' which is not raw audio. Ignoring.\n", new_pad_type);
  goto exit;
}

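This reads the type of the new pad. The pipeline we built only handles audio, so any pad that does not produce raw audio is ignored.

gst_pad_get_current_caps() returns the caps (capabilities) currently set on the pad, that is, the kind of data it outputs right now.

gst_pad_query_caps() returns all the caps a pad can support. A caps object can therefore hold several structures, one per capability; gst_caps_get_size() gives their number and gst_caps_get_structure() returns the one at a given index.

The current caps of the audio source pad of uridecodebin hold only one structure, so we simply take the first one.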
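The two checks the callback makes before linking (is the converter's sink pad already linked, and does the new pad carry raw audio) can be summarized as a small decision function. This is a plain C sketch with no GStreamer calls; `ToyPadAction`, `toy_has_prefix()` and `toy_decide()` are hypothetical names, and `toy_has_prefix()` only imitates the prefix test that `g_str_has_prefix()` performs in the real code.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* What the handler should do with a newly added pad. */
typedef enum {
  TOY_IGNORE_ALREADY_LINKED,
  TOY_IGNORE_NOT_RAW_AUDIO,
  TOY_TRY_LINK
} ToyPadAction;

/* True when `str` begins with `prefix`. */
bool toy_has_prefix (const char *str, const char *prefix)
{
  assert (str != NULL && prefix != NULL);
  return strncmp (str, prefix, strlen (prefix)) == 0;
}

/*
 * Mirror the order of checks in the tutorial's handler:
 * first the "already linked" test, then the media type test.
 */
ToyPadAction toy_decide (bool sink_already_linked, const char *media_type)
{
  if (sink_already_linked)
    return TOY_IGNORE_ALREADY_LINKED;
  if (!toy_has_prefix (media_type, "audio/x-raw"))
    return TOY_IGNORE_NOT_RAW_AUDIO;
  return TOY_TRY_LINK;
}
```

With a media type of "video/x-raw" the function returns `TOY_IGNORE_NOT_RAW_AUDIO`, which corresponds to the "not raw audio. Ignoring." branch in the real handler.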

/* Attempt the link */
ret = gst_pad_link (new_pad, sink_pad);
if (GST_PAD_LINK_FAILED (ret)) {
  g_print ("Type is '%s' but link failed.\n", new_pad_type);
} else {
  g_print ("Link succeeded (type '%s').\n", new_pad_type);
}

gst_pad_link() links two pads together. As with gst_element_link(), the link must go from a source pad to a sink pad, and the elements that own the two pads must be in the same bin.

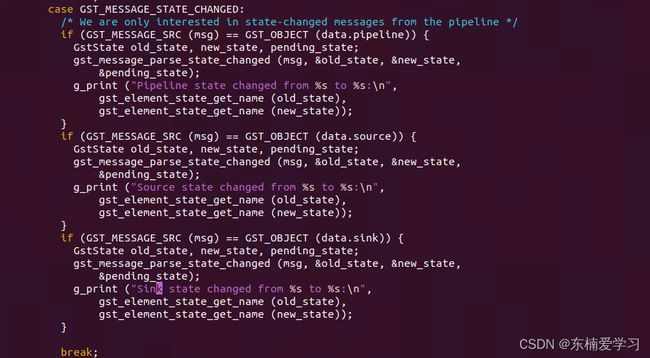

case GST_MESSAGE_STATE_CHANGED:
  /* We are only interested in state-changed messages from the pipeline */
  if (GST_MESSAGE_SRC (msg) == GST_OBJECT (data.pipeline)) {
    GstState old_state, new_state, pending_state;
    gst_message_parse_state_changed (msg, &old_state, &new_state, &pending_state);
    g_print ("Pipeline state changed from %s to %s:\n",
        gst_element_state_get_name (old_state), gst_element_state_get_name (new_state));
  }
  break;

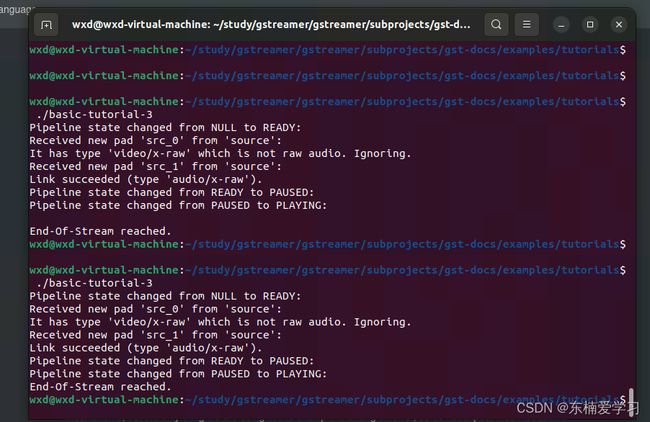

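These statements print the pipeline's current state. This is also a chance to settle the doubt raised in the Concepts section: does the bus carry only the pipeline's state, or the state of each element as well? I checked by adding the following code:

The output was:

The output shows that the bus does carry state messages from both the pipeline and its elements, and that the pipeline's state changes only after all of its elements (or the last one) have reached that state. A pipeline is itself a special kind of element, so in other words every element reports its own state to the bus.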
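The source check in the handler (`GST_MESSAGE_SRC (msg) == GST_OBJECT (data.pipeline)`) is what separates the pipeline's messages from those of its elements. The idea can be shown with a toy model in plain C that compares source pointers; it makes no GStreamer calls, and `ToyStateMsg` and `toy_count_from()` are hypothetical names invented for this sketch.

```c
#include <assert.h>
#include <stddef.h>

/* Toy state-changed message: who sent it, plus old and new state numbers. */
typedef struct {
  const void *src;
  int old_state;
  int new_state;
} ToyStateMsg;

/*
 * Count the messages whose source is `src`. Comparing the source pointer
 * is the same kind of test the tutorial applies to each bus message.
 */
size_t toy_count_from (const ToyStateMsg *msgs, size_t n, const void *src)
{
  size_t i, count = 0;

  assert (msgs != NULL || n == 0);
  for (i = 0; i < n; i++) {
    if (msgs[i].src == src)
      count++;
  }
  return count;
}
```

Given a list that mixes messages from a sink, a converter and the pipeline, counting with the pipeline's pointer keeps only the pipeline's messages, while dropping the comparison would show every element's state change.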

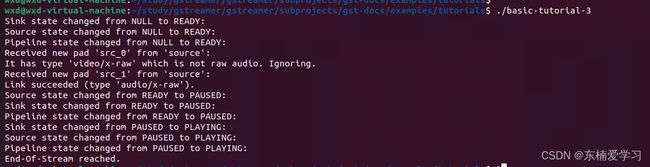

Exercise

We can add an autovideosink element to play the video stream of this example as well. The code is as follows:

Code

#include