YOLOv5改进 | 主干篇 | 12月份最新成果TransNeXt特征提取网络(全网首发)

一、本文介绍

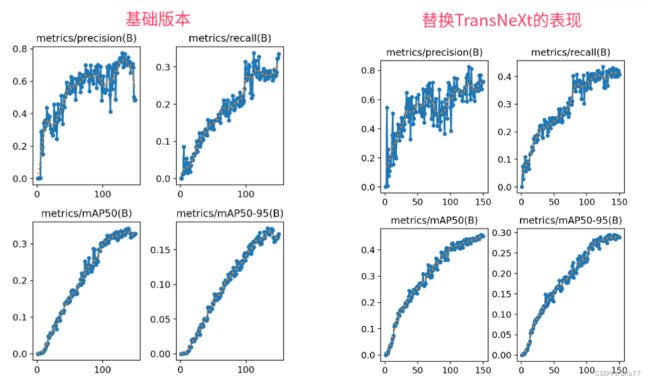

本文给大家带来的改进机制是TransNeXt特征提取网络,其发表于2023年的12月份是一个最新最前沿的网络模型,将其应用在我们的特征提取网络来提取特征,同时本文给大家解决其自带的一个报错,通过结合聚合的像素聚焦注意力和卷积GLU,模拟生物视觉系统,特别是对于中心凹的视觉感知。这种方法使得每个像素都能实现全局感知,并强化了模型的信息混合和自然视觉感知能力。TransNeXt在各种视觉任务中,包括图像分类、目标检测和语义分割,都显示出优异的性能(该模型的训练时间很长这是需要大家注意的)。


目录

一、本文介绍

二、TransNeXt的框架原理

2.1 聚合注意力机制

2.2 卷积GLU

2.3 TransNeXt的架构示意图

三、TransNeXt的核心代码

四、手把手教你添加TransNeXt机制

修改一

修改二

修改三

修改四

修改五

修改六

修改七

注意!!! 额外的修改!

修改八

注意事项!!!

五、TransNeXt的yaml文件

5.1 训练文件的代码

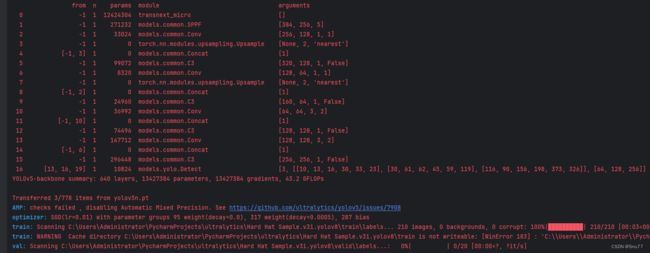

六、成功运行记录

七、本文总结

二、TransNeXt的框架原理

官方论文地址:官方论文地址

官方代码地址:官方代码地址

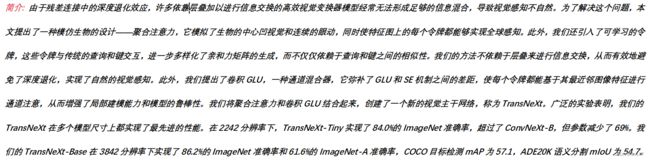

TransNeXt: Robust Foveal Visual Perception for Vision Transformers介绍了一种新的视觉模型,旨在改进现有视觉变换器的性能。这个模型,被称为 TransNeXt,通过结合聚合的像素聚焦注意力和卷积GLU,模拟生物视觉系统,特别是对于中心凹的视觉感知。这种方法使得每个像素都能实现全局感知,并强化了模型的信息混合和自然视觉感知能力。TransNeXt在各种视觉任务中,包括图像分类、目标检测和语义分割,都显示出优异的性能。

TransNeXt的主要创新点包括:

1. 聚合注意力机制:模仿生物中心凹视觉和连续眼动,使每个令牌在特征图上都能实现全球感知。

2. 卷积GLU(Gated Linear Unit):弥补了GLU和SE(Squeeze-and-Excitation)机制之间的差距,增强局部建模能力和模型鲁棒性。

这些创新点共同使TransNeXt在图像分类、目标检测和语义分割等多种视觉任务中表现卓越。

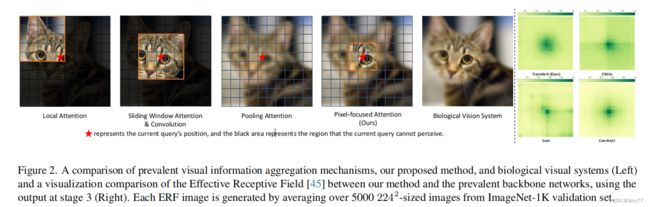

This figure compares different visual information aggregation mechanisms, including the proposed method and the biological visual system. It visualizes the effective receptive field (ERF) of the proposed method against popular backbone networks, averaged over more than 5,000 ImageNet-1K validation images at 224² resolution. Four attention mechanisms are shown (local attention, sliding-window attention and convolution, pooling attention, and the proposed pixel-focused attention) alongside the biological visual system. In each panel, the red star marks the position of the current query, and black regions are areas that the current query cannot perceive. The plots on the right compare how TransNeXt and several other popular models process visual information.

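2.1 Aggregated Attention

Aggregated Attention (AA) is a core innovation of TransNeXt. It fuses several attention mechanisms and improves extrapolation to multi-scale inputs. Its components are:

1. Pixel-focused attention:

- Inspired by the biological visual system, it gives each query fine-grained perception of its neighborhood while keeping a coarse-grained view of global information.

- It uses a dual-path design that combines query-centered sliding-window attention with pooling attention. This achieves pixel-level translation equivariance and mimics eye movement.

- The design makes fine-grained and coarse-grained features compete, which turns pixel-focused attention into a multi-scale attention mechanism.

2. Integrating diverse attention mechanisms:

- Research shows that integrating learnable query prefixes into attention and optimizing them directly is effective and efficient for well-defined tasks such as image classification, object detection, and semantic segmentation.

- Adding a learnable query embedding to the query tokens of every conventional query-key-value (QKV) attention achieves a similar information aggregation effect at negligible extra cost.

3. Positional attention:

- A set of learnable keys interacts with the queries from the input to produce attention weights. This is Query-Learnable-Value (QLV) attention.

- Compared with conventional QKV attention, this breaks the one-to-one correspondence between keys and values, so it can learn more implicit relative position information for the current query.

4. Extrapolation to multi-scale inputs:

- To handle multi-scale inputs, the paper proposes length-scaled cosine attention. It uses cosine similarity and adds an extra learnable coefficient, which improves the stability of training large vision models.

- The design addresses the drop in attention confidence as the input sequence grows longer. Length scaling keeps the entropy invariant, so the model generalizes better to unseen lengths.

5. Aggregated Attention:

- Applying the aggregation methods and techniques above to pixel-focused attention yields its enhanced version, aggregated pixel-focused attention.

- Besides aggregating several attention mechanisms, it brings length-scaled cosine attention, learnable query embeddings, and a dedicated positional encoding into the computation, which further improves the handling of multi-scale inputs.

Summary: by mimicking the biological visual system, aggregated attention provides a more natural form of visual perception. It handles information from different levels and scales, and its combination of attention paths and learnable components improves extrapolation to multi-scale inputs.

Drawback: the design keeps channel counts small, which limits the parameter count, but stacking this many attention mechanisms makes the computation complex and the model slow.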
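To make length-scaled cosine attention more concrete, here is a minimal pure-Python sketch for a single query. It follows the pattern visible in the code in Section 3 (L2-normalize the query and keys, multiply the logits by a temperature and by the log of the number of keys, then apply softmax). The function names and the toy vectors are illustrative assumptions, and the query embedding and positional biases are left out for brevity.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit L2 norm (guarding against the zero vector)."""
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cosine_attention(query, keys, temperature=1 / 0.24, length_scale=True):
    """Attention weights of one query over `keys`.

    logits = temperature * log(N) * cos(query, key), where N = len(keys).
    With length_scale=False the log(N) factor is dropped for comparison.
    The default temperature is the initial value noted in the Section 3 code.
    """
    q = l2_normalize(query)
    scale = temperature * (math.log(len(keys)) if length_scale else 1.0)
    logits = [scale * sum(a * b for a, b in zip(q, l2_normalize(k))) for k in keys]
    return softmax(logits)


# One matching key plus a growing number of keys orthogonal to the query.
query = [1.0, 0.0]
for n_distractors in (3, 15, 63):
    keys = [[2.0, 0.0]] + [[0.0, 1.0]] * n_distractors
    scaled = cosine_attention(query, keys)[0]
    plain = cosine_attention(query, keys, length_scale=False)[0]
    print(f"N={len(keys):3d}  with log(N): {scaled:.4f}  without: {plain:.4f}")
```

In this toy setting the weight on the matching key stays high as the sequence grows when the log(N) factor is applied, and is diluted when it is not, which is the behavior the length scaling is meant to preserve.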

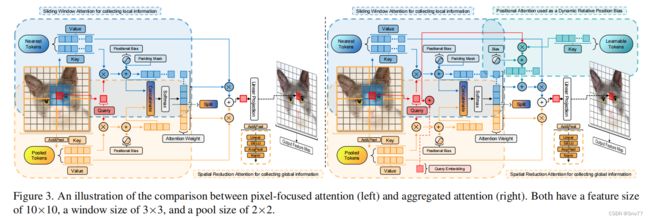

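Figure 3 compares pixel-focused attention (left) with aggregated attention (right). Both use a 10x10 feature size, a 3x3 window size, and a 2x2 pool size.

Left (pixel-focused attention):

- A sliding-window attention path collects local information: each query is compared with the keys of its nearest tokens, and a positional bias is applied.

- A pooling path collects information from a wider area in order to capture global context (this description labels the operation AxialPool; the Section 3 code uses `nn.AdaptiveAvgPool2d`).

- After the attention weights are computed, the two kinds of information are merged, and operations such as pooling and LayerNorm are applied along the way to produce the output feature map.

Right (aggregated attention):

- Several key components are added on top of pixel-focused attention. Positional attention is introduced and used as a dynamic relative position bias; combined with learnable tokens, it strengthens the model's awareness of position.

- A query embedding is added: each query is combined with an extra learnable vector, which further refines the computation of the attention weights.

- As before, the output feature map is produced after the various layer operations.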
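As a rough illustration of the Figure 3 setting, the sketch below counts how many keys a query can attend to on a 10x10 feature map with a 3x3 window. It assumes that the "2x2 pool size" means a 2x2 pooled feature map (4 pooled tokens), which is how `pool_H` and `pool_W` are used in the Section 3 code. The helper names are hypothetical; the counting mirrors what `get_seqlen_and_mask` computes with `F.unfold`, and the log term mirrors `seq_length_scale`.

```python
import math


def local_key_count(row, col, height, width, window=3):
    """Number of in-bounds positions in the window centred on (row, col)."""
    half = window // 2
    rows = range(max(0, row - half), min(height, row + half + 1))
    cols = range(max(0, col - half), min(width, col + half + 1))
    return len(rows) * len(cols)


def key_budget(height=10, width=10, window=3, pool_h=2, pool_w=2):
    """Per-query tuple: (local keys, pooled keys, log of total key count)."""
    pool_len = pool_h * pool_w
    budget = {}
    for r in range(height):
        for c in range(width):
            local = local_key_count(r, c, height, width, window)
            budget[(r, c)] = (local, pool_len, math.log(local + pool_len))
    return budget


budget = key_budget()
for name, pos in (("corner", (0, 0)), ("edge", (0, 5)), ("interior", (5, 5))):
    local, pooled, scale = budget[pos]
    print(f"{name}: {local} local + {pooled} pooled keys, log-length scale {scale:.3f}")
```

Queries near the border see fewer valid local keys because part of their window falls on padding, which is why the code keeps a per-position length scale instead of a single constant.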

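2.2 Convolutional GLU

The convolutional GLU is another key innovation of TransNeXt. It is designed to bridge the gap between GLU and the SE mechanism (which should look familiar, since we covered it earlier in this series). In detail:

1. Channel attention based on nearest-neighbor image features: the convolutional GLU derives its channel attention from each token's nearest-neighbor image features. This avoids the overly coarse global average pooling of the SE mechanism, and it also serves ViT (vision transformer) models that have no positional encoding and rely on depthwise convolution for position information.

2. Stronger local modeling and robustness: compared with a conventional convolutional feed-forward network, the convolutional GLU turns the channel mixer into an attention-like operation with fewer floating-point operations (FLOPs), which effectively improves model robustness.

3. A new vision backbone, TransNeXt: combining aggregated attention with the convolutional GLU yields a new vision backbone named TransNeXt. In extensive experiments, TransNeXt achieves state-of-the-art performance across multiple model sizes.

Summary: with the convolutional GLU, each token receives its own gating signal based on its nearest fine-grained features. This improves the modeling of local features and makes the model more robust on visual data of varying scale and complexity.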

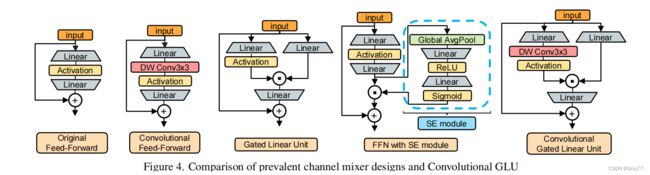

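Figure 4 compares popular channel mixer designs with the convolutional GLU (Convolutional Gated Linear Unit). The five designs are:

1. Original feed-forward network (Original Feed-Forward):

- The input passes through a linear layer, then an activation function, then another linear layer.

- Finally, the input is added to the output of the last linear layer to form the final output.

2. Convolutional feed-forward network (Convolutional Feed-Forward):

- The input passes through a linear layer, then a depthwise convolution (DW Conv 3x3), then an activation function, then another linear layer.

- Finally, the input is added to the output of the last linear layer to form the final output.

3. Gated Linear Unit (GLU):

- The input passes through two parallel linear layers. One branch is output directly; the other goes through an activation function first.

- The two outputs are multiplied element-wise and then passed through another linear layer.

- Finally, the input is added to the output of this linear layer to form the final output.

4. Feed-forward network with an SE module (FFN with SE module):

- The input passes through a linear layer, then an activation function, then another linear layer.

- In parallel, the input goes through global average pooling, a linear layer, a ReLU activation, another linear layer, and a Sigmoid, which forms the output of the SE module.

- The SE module output is multiplied element-wise with the intermediate output of the feed-forward network.

- Finally, the input is added to the product to form the final output.

5. Convolutional Gated Linear Unit (Convolutional GLU):

- The input passes through a linear layer, then a depthwise convolution (DW Conv 3x3), then an activation function.

- In parallel, the input is also processed by a second linear layer.

- The outputs of the two branches are multiplied element-wise.

- Finally, the input is added to the product to form the final output.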
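To get a feel for the cost of the gated design, the following sketch counts parameters for a plain feed-forward block and for a convolutional GLU of the same nominal expansion ratio. The layer layout (one linear layer producing two branches, a 3x3 depthwise convolution, an output linear layer, and the 2/3 reduction of the hidden width) is read from the `ConvolutionalGLU` class in Section 3. The helper names and the choice of `dim=48`, `mlp_ratio=8` (the first stage of `transnext_micro`) are only for illustration.

```python
def linear_params(n_in, n_out):
    """Weights plus bias of a fully connected layer."""
    return n_in * n_out + n_out


def depthwise_conv3x3_params(channels):
    """Weights plus bias of a 3x3 depthwise convolution."""
    return channels * 9 + channels


def plain_ffn_params(dim, mlp_ratio):
    """Linear -> activation -> Linear with hidden width dim * mlp_ratio."""
    hidden = int(dim * mlp_ratio)
    return linear_params(dim, hidden) + linear_params(hidden, dim)


def conv_glu_params(dim, mlp_ratio):
    """Convolutional GLU with the hidden width shrunk to 2/3, as in Section 3."""
    hidden = int(2 * int(dim * mlp_ratio) / 3)
    fc1 = linear_params(dim, hidden * 2)          # gate branch and value branch
    dwconv = depthwise_conv3x3_params(hidden)     # applied to the gate branch only
    fc2 = linear_params(hidden, dim)
    return fc1 + dwconv + fc2


dim, ratio = 48, 8
print("plain FFN params:", plain_ffn_params(dim, ratio))
print("ConvGLU params:  ", conv_glu_params(dim, ratio))
```

The 2/3 factor keeps the gated block close to the size of the plain block even though `fc1` feeds two branches; the depthwise convolution adds only a small number of parameters on top.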

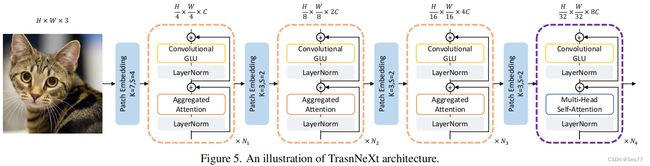

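2.3 TransNeXt Architecture

Figure 5 is a schematic of the TransNeXt architecture, showing its components and data flow. The input image is processed in four stages, each built from layers that contain an attention mechanism and a convolutional GLU. The stages are:

1. Image input:

- The input image has dimensions $H \times W \times 3$, where $H$ and $W$ are the image height and width and 3 is the number of RGB color channels.

2. Stage 1:

- The image first passes through a patch embedding layer, which splits it into smaller patches and maps each patch to a vector. The output size is $\frac{H}{4} \times \frac{W}{4} \times C_1$, where $C_1$ is the embedding dimension.

- The data then flows through layers of aggregated attention and convolutional GLU, each paired with a layer normalization.

- This block is repeated $N_1$ times. Downsampling happens in the patch embedding that opens each stage, which reduces the spatial size and increases the channel count (for example to $\frac{H}{8} \times \frac{W}{8} \times C_2$ at the start of stage 2).

3. Stages 2 and 3:

- These stages are similar to stage 1, but each one further reduces the spatial size of the feature map and increases the channel count: stage 2 produces $\frac{H}{8} \times \frac{W}{8} \times C_2$ and stage 3 produces $\frac{H}{16} \times \frac{W}{16} \times C_3$.

- In these stages the model keeps using the convolutional GLU and aggregated attention to process and refine features, which correspond to increasingly abstract image representations.

- Stages 2 and 3 are repeated $N_2$ and $N_3$ times respectively.

4. Stage 4:

- In the last stage, the model uses multi-head self-attention (Multi-Head Self-Attention), a key part of the standard Transformer architecture, in which different heads can capture different representations.

- This stage also uses the convolutional GLU and layer normalization, and is repeated $N_4$ times.

These sizes are consistent with the patch embedding strides in the Section 3 code (4 for the first stage, 2 for each later stage); $C_i$ and $N_i$ correspond to the `embed_dims` and `depths` arguments there.

Summary: across these stages, TransNeXt extracts features step by step, from local pixel-level features to higher-level abstract representations. The output of each stage feeds the next, and the final features can be used for image classification, object detection, or semantic segmentation. The architecture combines convolution and attention while gradually increasing the channel count and lowering the spatial resolution, which improves computational efficiency and model performance.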
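The stage layout can be checked with a few lines of arithmetic. The sketch below assumes a 640x640 input and the `embed_dims` of the `transnext_micro` variant from Section 3, and reuses the `img_size // (2 ** (i + 2))` expression that the code uses for each stage's resolution. The function name is hypothetical.

```python
def stage_shapes(img_size=640, embed_dims=(48, 96, 192, 384)):
    """Return (channels, height, width, stride) for the output of each stage."""
    shapes = []
    for i, channels in enumerate(embed_dims):
        stride = 2 ** (i + 2)            # 4, 8, 16, 32
        side = img_size // stride        # same expression as in the Section 3 code
        shapes.append((channels, side, side, stride))
    return shapes


for stage, (c, h, w, stride) in enumerate(stage_shapes(), start=1):
    print(f"stage {stage}: {c} channels, {h}x{w} feature map (stride {stride})")
```

The last three outputs (strides 8, 16, and 32) are the feature maps a YOLOv5 head usually consumes as P3, P4, and P5.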

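3. TransNeXt Core Code

The official repository provides two implementations. One must be compiled, has very demanding requirements, and runs somewhat faster. The other needs no compilation. The version below is the one without compilation, so that it is easy to run. If you want the compiled version, you can find it through the official code link and adapt it by comparing it against the code below.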

```python
import math
from functools import partial

import numpy as np
import torch
import torch.nn as nn
import torch.nn.functional as F
from timm.models.layers import DropPath, to_2tuple, trunc_normal_


class DWConv(nn.Module):
    """3x3 depthwise convolution applied to a (B, N, C) token sequence."""

    def __init__(self, dim=768):
        super(DWConv, self).__init__()
        self.dwconv = nn.Conv2d(dim, dim, kernel_size=3, stride=1, padding=1, bias=True, groups=dim)

    def forward(self, x, H, W):
        B, N, C = x.shape
        x = x.transpose(1, 2).view(B, C, H, W).contiguous()
        x = self.dwconv(x)
        x = x.flatten(2).transpose(1, 2)
        return x


class ConvolutionalGLU(nn.Module):
    """Gated channel mixer: act(DWConv(x)) * v, followed by a linear projection."""

    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        hidden_features = int(2 * hidden_features / 3)
        self.fc1 = nn.Linear(in_features, hidden_features * 2)
        self.dwconv = DWConv(hidden_features)
        self.act = act_layer()
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop = nn.Dropout(drop)

    def forward(self, x, H, W):
        x, v = self.fc1(x).chunk(2, dim=-1)
        x = self.act(self.dwconv(x, H, W)) * v
        x = self.drop(x)
        x = self.fc2(x)
        x = self.drop(x)
        return x


@torch.no_grad()
def get_relative_position_cpb(query_size, key_size, pretrain_size=None):
    # device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    pretrain_size = pretrain_size or query_size
    axis_qh = torch.arange(query_size[0], dtype=torch.float32)
    axis_kh = F.adaptive_avg_pool1d(axis_qh.unsqueeze(0), key_size[0]).squeeze(0)
    axis_qw = torch.arange(query_size[1], dtype=torch.float32)
    axis_kw = F.adaptive_avg_pool1d(axis_qw.unsqueeze(0), key_size[1]).squeeze(0)
    axis_kh, axis_kw = torch.meshgrid(axis_kh, axis_kw)
    axis_qh, axis_qw = torch.meshgrid(axis_qh, axis_qw)

    axis_kh = torch.reshape(axis_kh, [-1])
    axis_kw = torch.reshape(axis_kw, [-1])
    axis_qh = torch.reshape(axis_qh, [-1])
    axis_qw = torch.reshape(axis_qw, [-1])

    relative_h = (axis_qh[:, None] - axis_kh[None, :]) / (pretrain_size[0] - 1) * 8
    relative_w = (axis_qw[:, None] - axis_kw[None, :]) / (pretrain_size[1] - 1) * 8
    relative_hw = torch.stack([relative_h, relative_w], dim=-1).view(-1, 2)

    relative_coords_table, idx_map = torch.unique(relative_hw, return_inverse=True, dim=0)

    # Log-spaced coordinates: sign(x) * log2(|x| + 1) / log2(8)
    relative_coords_table = torch.sign(relative_coords_table) * torch.log2(
        torch.abs(relative_coords_table) + 1.0) / torch.log2(torch.tensor(8, dtype=torch.float32))

    return idx_map, relative_coords_table


@torch.no_grad()
def get_seqlen_and_mask(input_resolution, window_size):
    attn_map = F.unfold(torch.ones([1, 1, input_resolution[0], input_resolution[1]]), window_size,
                        dilation=1, padding=(window_size // 2, window_size // 2), stride=1)
    attn_local_length = attn_map.sum(-2).squeeze().unsqueeze(-1)
    attn_mask = (attn_map.squeeze(0).permute(1, 0)) == 0
    return attn_local_length, attn_mask


class AggregatedAttention(nn.Module):
    def __init__(self, dim, input_resolution, num_heads=8, window_size=3, qkv_bias=True,
                 attn_drop=0., proj_drop=0., sr_ratio=1):
        super().__init__()
        assert dim % num_heads == 0, f"dim {dim} should be divided by num_heads {num_heads}."

        self.dim = dim
        self.num_heads = num_heads
        self.head_dim = dim // num_heads

        self.sr_ratio = sr_ratio

        assert window_size % 2 == 1, "window size must be odd"
        self.window_size = window_size
        self.local_len = window_size ** 2

        self.pool_H, self.pool_W = input_resolution[0] // self.sr_ratio, input_resolution[1] // self.sr_ratio
        self.pool_len = self.pool_H * self.pool_W

        self.unfold = nn.Unfold(kernel_size=window_size, padding=window_size // 2, stride=1)
        self.temperature = nn.Parameter(
            torch.log((torch.ones(num_heads, 1, 1) / 0.24).exp() - 1))  # Initialize softplus(temperature) to 1/0.24.

        self.q = nn.Linear(dim, dim, bias=qkv_bias)
        self.query_embedding = nn.Parameter(
            nn.init.trunc_normal_(torch.empty(self.num_heads, 1, self.head_dim), mean=0, std=0.02))
        self.kv = nn.Linear(dim, dim * 2, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        # Components to generate pooled features.
        self.pool = nn.AdaptiveAvgPool2d((self.pool_H, self.pool_W))
        self.sr = nn.Conv2d(dim, dim, kernel_size=1, stride=1, padding=0)
        self.norm = nn.LayerNorm(dim)
        self.act = nn.GELU()

        # mlp to generate continuous relative position bias
        self.cpb_fc1 = nn.Linear(2, 512, bias=True)
        self.cpb_act = nn.ReLU(inplace=True)
        self.cpb_fc2 = nn.Linear(512, num_heads, bias=True)

        # relative bias for local features
        self.relative_pos_bias_local = nn.Parameter(
            nn.init.trunc_normal_(torch.empty(num_heads, self.local_len), mean=0,
                                  std=0.0004))

        # Generate padding_mask && sequence length scale
        local_seq_length, padding_mask = get_seqlen_and_mask(input_resolution, window_size)
        self.register_buffer("seq_length_scale", torch.as_tensor(np.log(local_seq_length.numpy() + self.pool_len)),
                             persistent=False)
        self.register_buffer("padding_mask", padding_mask, persistent=False)

        # dynamic_local_bias:
        self.learnable_tokens = nn.Parameter(
            nn.init.trunc_normal_(torch.empty(num_heads, self.head_dim, self.local_len), mean=0, std=0.02))
        self.learnable_bias = nn.Parameter(torch.zeros(num_heads, 1, self.local_len))

    def forward(self, x, H, W, relative_pos_index, relative_coords_table):
        B, N, C = x.shape

        # Generate queries, normalize them with L2, add query embedding,
        # and then magnify with sequence length scale and temperature.
        # Use softplus function ensuring that the temperature is not lower than 0.
        q_norm = F.normalize(self.q(x).reshape(B, N, self.num_heads, self.head_dim).permute(0, 2, 1, 3), dim=-1)
        q_norm_scaled = (q_norm + self.query_embedding) * F.softplus(self.temperature) * self.seq_length_scale

        # Generate unfolded keys and values and l2-normalize them
        k_local, v_local = self.kv(x).chunk(2, dim=-1)
        k_local = F.normalize(k_local.reshape(B, N, self.num_heads, self.head_dim), dim=-1).reshape(B, N, -1)
        kv_local = torch.cat([k_local, v_local], dim=-1).permute(0, 2, 1).reshape(B, -1, H, W)
        k_local, v_local = self.unfold(kv_local).reshape(
            B, 2 * self.num_heads, self.head_dim, self.local_len, N).permute(0, 1, 4, 2, 3).chunk(2, dim=1)

        # Compute local similarity
        attn_local = ((q_norm_scaled.unsqueeze(-2) @ k_local).squeeze(-2)
                      + self.relative_pos_bias_local.unsqueeze(1)).masked_fill(self.padding_mask, float('-inf'))

        # Generate pooled features
        x_ = x.permute(0, 2, 1).reshape(B, -1, H, W).contiguous()
        x_ = self.pool(self.act(self.sr(x_))).reshape(B, -1, self.pool_len).permute(0, 2, 1)
        x_ = self.norm(x_)

        # Generate pooled keys and values
        kv_pool = self.kv(x_).reshape(B, self.pool_len, 2 * self.num_heads, self.head_dim).permute(0, 2, 1, 3)
        k_pool, v_pool = kv_pool.chunk(2, dim=1)

        # Use MLP to generate continuous relative positional bias for pooled features.
        pool_bias = self.cpb_fc2(self.cpb_act(self.cpb_fc1(relative_coords_table))).transpose(0, 1)[:,
                    relative_pos_index.view(-1)].view(-1, N, self.pool_len)

        # Compute pooled similarity
        attn_pool = q_norm_scaled @ F.normalize(k_pool, dim=-1).transpose(-2, -1) + pool_bias

        # Concatenate local & pooled similarity matrices and calculate attention weights through the same Softmax
        attn = torch.cat([attn_local, attn_pool], dim=-1).softmax(dim=-1)
        attn = self.attn_drop(attn)

        # Split the attention weights and separately aggregate the values of local & pooled features
        attn_local, attn_pool = torch.split(attn, [self.local_len, self.pool_len], dim=-1)
        x_local = (((q_norm @ self.learnable_tokens) + self.learnable_bias + attn_local).unsqueeze(
            -2) @ v_local.transpose(-2, -1)).squeeze(-2)
        x_pool = attn_pool @ v_pool
        x = (x_local + x_pool).transpose(1, 2).reshape(B, N, C)

        # Linear projection and output
        x = self.proj(x)
        x = self.proj_drop(x)

        return x


class Attention(nn.Module):
    def __init__(self, dim, input_resolution, num_heads=8, qkv_bias=True, attn_drop=0., proj_drop=0.):
        super().__init__()
        assert dim % num_heads == 0, f"dim {dim} should be divided by num_heads {num_heads}."

        self.dim = dim
        self.num_heads = num_heads
        self.head_dim = dim // num_heads

        self.temperature = nn.Parameter(
            torch.log((torch.ones(num_heads, 1, 1) / 0.24).exp() - 1))  # Initialize softplus(temperature) to 1/0.24.
        # Generate sequence length scale
        self.register_buffer("seq_length_scale", torch.as_tensor(np.log(input_resolution[0] * input_resolution[1])),
                             persistent=False)

        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.query_embedding = nn.Parameter(
            nn.init.trunc_normal_(torch.empty(self.num_heads, 1, self.head_dim), mean=0, std=0.02))
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        # mlp to generate continuous relative position bias
        self.cpb_fc1 = nn.Linear(2, 512, bias=True)
        self.cpb_act = nn.ReLU(inplace=True)
        self.cpb_fc2 = nn.Linear(512, num_heads, bias=True)

    def forward(self, x, H, W, relative_pos_index, relative_coords_table):
        B, N, C = x.shape
        qkv = self.qkv(x).reshape(B, -1, 3 * self.num_heads, self.head_dim).permute(0, 2, 1, 3)
        q, k, v = qkv.chunk(3, dim=1)

        # Use MLP to generate continuous relative positional bias
        rel_bias = self.cpb_fc2(self.cpb_act(self.cpb_fc1(relative_coords_table))).transpose(0, 1)[:,
                   relative_pos_index.view(-1)].view(-1, N, N)

        # Calculate attention map using sequence length scaled cosine attention and query embedding
        attn = ((F.normalize(q, dim=-1) + self.query_embedding) * F.softplus(
            self.temperature) * self.seq_length_scale) @ F.normalize(k, dim=-1).transpose(-2, -1) + rel_bias
        attn = attn.softmax(dim=-1)
        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(B, N, C)
        x = self.proj(x)
        x = self.proj_drop(x)

        return x


class Block(nn.Module):
    def __init__(self, dim, num_heads, input_resolution, window_size=3, mlp_ratio=4.,
                 qkv_bias=False, drop=0., attn_drop=0.,
                 drop_path=0., act_layer=nn.GELU, norm_layer=nn.LayerNorm, sr_ratio=1):
        super().__init__()
        self.norm1 = norm_layer(dim)
        # sr_ratio == 1 (last stage) uses plain multi-head attention; other stages use aggregated attention.
        if sr_ratio == 1:
            self.attn = Attention(
                dim,
                input_resolution,
                num_heads=num_heads,
                qkv_bias=qkv_bias,
                attn_drop=attn_drop,
                proj_drop=drop)
        else:
            self.attn = AggregatedAttention(
                dim,
                input_resolution,
                window_size=window_size,
                num_heads=num_heads,
                qkv_bias=qkv_bias,
                attn_drop=attn_drop,
                proj_drop=drop,
                sr_ratio=sr_ratio)
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = ConvolutionalGLU(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

        # NOTE: drop path for stochastic depth, we shall see if this is better than dropout here
        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()

    def forward(self, x, H, W, relative_pos_index, relative_coords_table):
        x = x + self.drop_path(self.attn(self.norm1(x), H, W, relative_pos_index, relative_coords_table))
        x = x + self.drop_path(self.mlp(self.norm2(x), H, W))
        return x


class OverlapPatchEmbed(nn.Module):
    """ Image to Patch Embedding
    """

    def __init__(self, patch_size=7, stride=4, in_chans=3, embed_dim=768):
        super().__init__()

        patch_size = to_2tuple(patch_size)

        assert max(patch_size) > stride, "Set larger patch_size than stride"
        self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=stride,
                              padding=(patch_size[0] // 2, patch_size[1] // 2))
        self.norm = nn.LayerNorm(embed_dim)

    def forward(self, x):
        x = self.proj(x)
        _, _, H, W = x.shape
        x = x.flatten(2).transpose(1, 2)
        x = self.norm(x)

        return x, H, W


class TransNeXt(nn.Module):
    '''
    The parameter "img size" is primarily utilized for generating relative spatial coordinates,
    which are used to compute continuous relative positional biases. As this TransNeXt implementation does not support multi-scale inputs,
    it is recommended to set the "img size" parameter to a value that is exactly the same as the resolution of the inference images.
    It is not advisable to set the "img size" parameter to a value exceeding 800x800.
    The "pretrain size" refers to the "img size" used during the initial pre-training phase,
    which is used to scale the relative spatial coordinates for better extrapolation by the MLP.
    For models trained on ImageNet-1K at a resolution of 224x224,
    as well as downstream task models fine-tuned based on these pre-trained weights,
    the "pretrain size" parameter should be set to 224x224.
    '''

    def __init__(self, img_size=640, pretrain_size=None, window_size=[3, 3, 3, None],
                 patch_size=16, in_chans=3, num_classes=1000, embed_dims=[64, 128, 256, 512],
                 num_heads=[1, 2, 4, 8], mlp_ratios=[4, 4, 4, 4], qkv_bias=False, drop_rate=0.,
                 attn_drop_rate=0., drop_path_rate=0., norm_layer=nn.LayerNorm,
                 depths=[3, 4, 6, 3], sr_ratios=[8, 4, 2, 1], num_stages=4):
        super().__init__()
        self.num_classes = num_classes
        self.depths = depths
        self.num_stages = num_stages
        pretrain_size = pretrain_size or img_size

        dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule
        cur = 0

        for i in range(num_stages):
            # Generate relative positional coordinate table and index for each stage
            # to compute continuous relative positional bias.
            relative_pos_index, relative_coords_table = get_relative_position_cpb(
                query_size=to_2tuple(img_size // (2 ** (i + 2))),
                key_size=to_2tuple(img_size // (2 ** (num_stages + 1))),
                pretrain_size=to_2tuple(pretrain_size // (2 ** (i + 2))))

            self.register_buffer(f"relative_pos_index{i + 1}", relative_pos_index, persistent=False)
            self.register_buffer(f"relative_coords_table{i + 1}", relative_coords_table, persistent=False)

            patch_embed = OverlapPatchEmbed(patch_size=patch_size * 2 - 1 if i == 0 else 3,
                                            stride=patch_size if i == 0 else 2,
                                            in_chans=in_chans if i == 0 else embed_dims[i - 1],
                                            embed_dim=embed_dims[i])

            block = nn.ModuleList([Block(
                dim=embed_dims[i], input_resolution=to_2tuple(img_size // (2 ** (i + 2))), window_size=window_size[i],
                num_heads=num_heads[i], mlp_ratio=mlp_ratios[i], qkv_bias=qkv_bias,
                drop=drop_rate, attn_drop=attn_drop_rate, drop_path=dpr[cur + j], norm_layer=norm_layer,
                sr_ratio=sr_ratios[i])
                for j in range(depths[i])])
            norm = norm_layer(embed_dims[i])
            cur += depths[i]

            setattr(self, f"patch_embed{i + 1}", patch_embed)
            setattr(self, f"block{i + 1}", block)
            setattr(self, f"norm{i + 1}", norm)

        for n, m in self.named_modules():
            self._init_weights(m, n)

        # Probe once with a dummy input to record each stage's channel count (read by parse_model).
        # The probe uses img_size and in_chans instead of a hard-coded 1x3x640x640 tensor,
        # because the position buffers above are built for img_size.
        self.width_list = [i.size(1) for i in self.forward(torch.randn(1, in_chans, img_size, img_size))]

    def _init_weights(self, m: nn.Module, name: str = ''):
        if isinstance(m, nn.Linear):
            trunc_normal_(m.weight, std=.02)
            if m.bias is not None:
                nn.init.zeros_(m.bias)
        elif isinstance(m, nn.Conv2d):
            fan_out = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
            fan_out //= m.groups
            m.weight.data.normal_(0, math.sqrt(2.0 / fan_out))
            if m.bias is not None:
                m.bias.data.zero_()
        elif isinstance(m, (nn.LayerNorm, nn.GroupNorm, nn.BatchNorm2d)):
            nn.init.zeros_(m.bias)
            nn.init.ones_(m.weight)

    def forward(self, x):
        B = x.shape[0]
        feature = []
        for i in range(self.num_stages):
            patch_embed = getattr(self, f"patch_embed{i + 1}")
            block = getattr(self, f"block{i + 1}")
            norm = getattr(self, f"norm{i + 1}")
            x, H, W = patch_embed(x)
            relative_pos_index = getattr(self, f"relative_pos_index{i + 1}")
            relative_coords_table = getattr(self, f"relative_coords_table{i + 1}")
            for blk in block:
                x = blk(x, H, W, relative_pos_index.to(x.device), relative_coords_table.to(x.device))
            x = norm(x)
            x = x.reshape(B, H, W, -1).permute(0, 3, 1, 2).contiguous()
            feature.append(x)  # one (B, C, H, W) feature map per stage
        return feature


# The variants below forward **kwargs to TransNeXt so that options such as img_size can be overridden.
class transnext_micro(TransNeXt):
    def __init__(self, **kwargs):
        super().__init__(window_size=[3, 3, 3, None],
                         patch_size=4, embed_dims=[48, 96, 192, 384], num_heads=[2, 4, 8, 16],
                         mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
                         norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[2, 2, 15, 2], sr_ratios=[8, 4, 2, 1],
                         **kwargs)


class transnext_tiny(TransNeXt):
    def __init__(self, **kwargs):
        super().__init__(window_size=[3, 3, 3, None],
                         patch_size=4, embed_dims=[72, 144, 288, 576], num_heads=[3, 6, 12, 24],
                         mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
                         norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[2, 2, 15, 2], sr_ratios=[8, 4, 2, 1],
                         drop_rate=0.0, drop_path_rate=0.3, **kwargs)


class transnext_small(TransNeXt):
    def __init__(self, **kwargs):
        super().__init__(window_size=[3, 3, 3, None],
                         patch_size=4, embed_dims=[72, 144, 288, 576], num_heads=[3, 6, 12, 24],
                         mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
                         norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[5, 5, 22, 5], sr_ratios=[8, 4, 2, 1],
                         drop_rate=0.0, drop_path_rate=0.5, **kwargs)


class transnext_base(TransNeXt):
    def __init__(self, **kwargs):
        super().__init__(window_size=[3, 3, 3, None],
                         patch_size=4, embed_dims=[96, 192, 384, 768], num_heads=[4, 8, 16, 32],
                         mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
                         norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[5, 5, 23, 5], sr_ratios=[8, 4, 2, 1],
                         drop_rate=0.0, drop_path_rate=0.6, **kwargs)
```
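The class docstring advises against an `img_size` above 800x800. One plausible reason, which is my reading of the code and not a statement from the TransNeXt authors, is the size of the relative position index that `get_relative_position_cpb` builds for every stage: it holds one entry per (query position, pooled key position) pair. The sketch below only does that arithmetic; the function name is hypothetical.

```python
def relative_index_sizes(img_size=640, num_stages=4):
    """Entries in each stage's relative position index (queries x pooled keys)."""
    key_side = img_size // (2 ** (num_stages + 1))      # pooled map side, img_size / 32
    sizes = []
    for i in range(num_stages):
        query_side = img_size // (2 ** (i + 2))         # feature map side of stage i + 1
        sizes.append((query_side ** 2) * (key_side ** 2))
    return sizes


for size in (224, 640, 800):
    per_stage = relative_index_sizes(size)
    print(f"img_size={size}: per-stage entries {per_stage}, total {sum(per_stage):,}")
```

The count grows with the fourth power of the image side, so the buffers and the `torch.unique` call that produces them become expensive quickly as the resolution increases.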

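4. Adding TransNeXt Step by Step

Adding this backbone is about the most involved of all the improvements in this series. Some network structures can be assembled from a yaml file, but others contain details that a yaml file cannot express, and building them from yaml would lose those details. (A single network also involves many structural details, each modified in a different way, and changing them one by one invites mistakes.) So here the backbone class returns the whole network directly, and we modify part of the YOLO code to accept it. Once these changes are in place, later backbones can be added the same way. Most of the models I publish from now on will use this approach, along with some refinements to model details, so you can use the resulting structures directly. The tutorial starts below.

(Each step is followed by code that you can copy and paste as a replacement. Take care not to replace more than the step calls for.)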

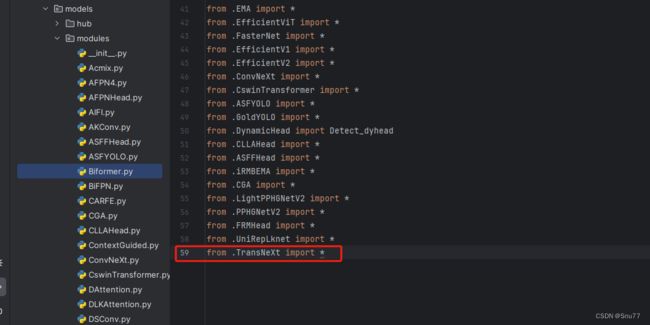

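Modification 1

Under `yolov5-master/models`, create a new directory. I named mine `modules` (placing files directly under `models` becomes hard to manage as improvements accumulate, so all of them go into one directory). Then create a py file in it and paste the network code from Section 3 into it. I named mine `TransNeXt`.

Modification 2

In the directory we just created, add an initialization file `__init__.py`, and in it import every improvement module from the same directory.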

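Modification 3

Open `models/yolo.py` and import our modules at the top of the file. Note that this import must be placed above the `common` import. Otherwise, wherever a name exists in both places, the version from our `modules` directory would be used, and the name clash could cause an error. If you use several of my improvements, add this import once; it does not need to be repeated.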
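The ordering rule in Modification 3 follows from how Python star imports behave: when two modules export the same name, the import that runs later wins. The sketch below demonstrates this with two throwaway modules written to a temporary directory. The module names `demo_modules` and `demo_common` and their contents are stand-ins invented for this demonstration, not files from YOLOv5, and the assumption that the real import is a star import (something like `from models.modules import *`) comes from the usual layout of such projects, since the exact line is only shown in a screenshot.

```python
import sys
import tempfile
from pathlib import Path


def later_star_import_wins():
    """Star-import two throwaway modules that both define `Conv`."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        # Stand-in for the custom modules package: defines Conv and a backbone stub.
        (root / "demo_modules.py").write_text(
            "Conv = 'from demo_modules'\nTransNeXtStub = 'backbone'\n")
        # Stand-in for models/common.py: also defines Conv.
        (root / "demo_common.py").write_text("Conv = 'from demo_common'\n")

        sys.path.insert(0, tmp)
        try:
            namespace = {}
            # Custom modules first, common second, as Modification 3 asks.
            exec("from demo_modules import *\nfrom demo_common import *", namespace)
        finally:
            sys.path.remove(tmp)
            for name in ("demo_modules", "demo_common"):
                sys.modules.pop(name, None)
        return namespace["Conv"], namespace["TransNeXtStub"]


conv_source, backbone = later_star_import_wins()
print("Conv resolves to:", conv_source)
print("Backbone stub still available:", backbone)
```

With the custom import placed first, any shared name keeps the definition from the module imported last, while names that exist only in the custom package remain available.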

修改四

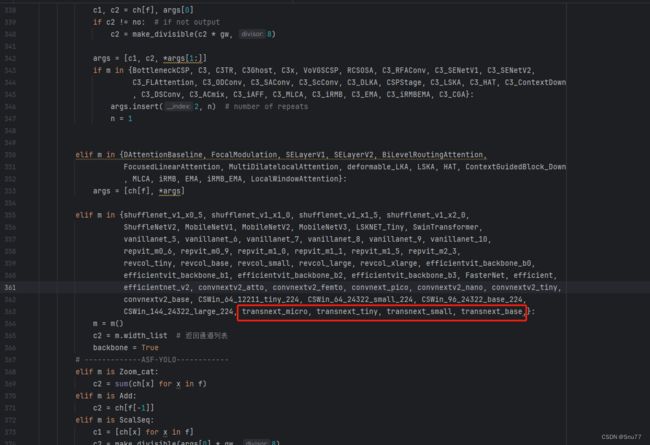

添加如下两行代码,根据行数找相似的代码进行添加

![]()

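Modification 5

Go to around line 700 of the file (see the screenshot for the exact place; the line number depends on your copy of `models/yolo.py`) and add the part in the red box to the `elif` chain inside `parse_model`. Note that the entries are bare class names without parentheses. I only registered some of the variants here; the backbone has more variants that you can add, and their class names are listed at the end of the code in Section 3.

```python
elif m in {transnext_micro}:  # add the other variants you use; the branches below stay the same
    m = m()
    c2 = m.width_list  # list of output channels, one entry per stage
    backbone = True
```

Modification 5 (continued)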

Both red-boxed parts in the screenshot need to be changed. The resulting code is:

```python
if isinstance(c2, list):
    m_ = m
    m_.backbone = True
else:
    m_ = nn.Sequential(*(m(*args) for _ in range(n))) if n > 1 else m(*args)  # module
    t = str(m)[8:-2].replace('__main__.', '')  # module type (only reassigned for non-backbone modules)
np = sum(x.numel() for x in m_.parameters())  # number params
m_.i, m_.f, m_.type, m_.np = i + 4 if backbone else i, f, t, np  # attach index, 'from' index, type
```

Modification 6

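The following part also needs to be changed. Follow my version exactly.

Replace the original code with the code below.

```python
save.extend(x % (i + 4 if backbone else i) for x in ([f] if isinstance(f, int) else f) if x != -1)  # append to savelist
layers.append(m_)
if i == 0:
    ch = []
if isinstance(c2, list):
    ch.extend(c2)
    if len(c2) != 5:
        ch.insert(0, 0)  # pad so the backbone occupies channel slots 0-4
else:
    ch.append(c2)
```

Modification 7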

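Modification 7 differs from the previous ones: it changes part of the forward pass, so we are no longer inside the `parse_model` function.

You can read the line numbers from the screenshot. We have not left the file; this is still `models/yolo.py`. Several forward methods in this file look very similar, so make sure you edit the right one, `_forward_once`, which sits at around line 70 in my copy. The full code is provided below, so you can copy and paste it directly. I may record a video for this step when I have time.

Find the original `_forward_once` method. It is not easy to spot, so the screenshot shows what it looks like before the change.

Then replace it with the code below.

```python
def _forward_once(self, x, profile=False, visualize=False):
    y, dt = [], []  # outputs
    for m in self.model:
        if m.f != -1:  # if not from previous layer
            x = y[m.f] if isinstance(m.f, int) else [x if j == -1 else y[j] for j in m.f]  # from earlier layers
        if profile:
            self._profile_one_layer(m, x, dt)
        if hasattr(m, 'backbone'):
            x = m(x)
            if len(x) != 5:  # pad to 5 entries (indices 0-4)
                x.insert(0, None)
            for index, i in enumerate(x):
                if index in self.save:
                    y.append(i)
                else:
                    y.append(None)
            x = x[-1]  # pass the last output to the next layer
        else:
            x = m(x)  # run
            y.append(x if m.i in self.save else None)  # save output
        if visualize:
            feature_visualization(x, m.type, m.i, save_dir=visualize)
    return x
```

That completes the modifications. There are many details here, so take great care: do not replace more code than each step calls for, and do not skip any step. Either mistake makes the run fail, and the resulting errors are very hard to track down.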
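The index bookkeeping in Modifications 5 to 7 is easy to get wrong, so here is a small stand-alone sketch of what it does for a backbone that returns four feature maps. It reproduces only the list handling from the snippets above; the function names, the string labels standing in for tensors, and the example `save` set are illustrative assumptions.

```python
def backbone_channels(width_list):
    """Mimic the `ch` update in parse_model for a multi-output backbone."""
    ch = list(width_list)
    if len(ch) != 5:
        ch.insert(0, 0)  # placeholder so the backbone fills channel slots 0-4
    return ch


def backbone_outputs(features, save):
    """Mimic the backbone branch of `_forward_once`.

    Returns the entries appended to `y` and the tensor passed to the next layer.
    """
    outputs = list(features)
    if len(outputs) != 5:
        outputs.insert(0, None)  # same padding as for the channel list
    y = [feat if index in save else None for index, feat in enumerate(outputs)]
    return y, outputs[-1]


# transnext_micro reports four stage widths; labels stand in for the feature maps.
ch = backbone_channels([48, 96, 192, 384])
y, x = backbone_outputs(["stride4", "stride8", "stride16", "stride32"], save={2, 3, 6, 10})

print("ch:", ch)
print("y: ", y)
print("x passed to layer 5:", x)
```

Because the backbone fills slots 0 to 4 in both lists, the next module in the yaml file becomes layer 5, and `from` indices 2 and 3 refer to the stride-8 and stride-16 feature maps.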

5. The TransNeXt yaml File

Copy the following yaml file and run it.

```yaml
# YOLOv5 by Ultralytics, AGPL-3.0 license

# Parameters
nc: 80  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.25  # layer channel multiple
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, transnext_micro, []],  # 0-4
   [-1, 1, SPPF, [1024, 5]],  # 5
  ]

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 3], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, C3, [512, False]],  # 9

   [-1, 1, Conv, [256, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 2], 1, Concat, [1]],  # cat backbone P3
   [-1, 3, C3, [256, False]],  # 13 (P3/8-small)

   [-1, 1, Conv, [256, 3, 2]],
   [[-1, 10], 1, Concat, [1]],  # cat head P4
   [-1, 3, C3, [512, False]],  # 16 (P4/16-medium)

   [-1, 1, Conv, [512, 3, 2]],
   [[-1, 6], 1, Concat, [1]],  # cat head P5
   [-1, 3, C3, [1024, False]],  # 19 (P5/32-large)

   [[13, 16, 19], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
```
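Since the backbone occupies layer indices 0 to 4 and SPPF is layer 5, the head starts at index 6, and every `from` index in the head has to be read with that offset in mind. The sketch below copies the `from` and module columns of the head above into a Python list and resolves each multi-input layer to the modules it reads from. The helper names and the `transnext[i]` labels are made up for this check.

```python
# (from, module) pairs copied from the head section of the yaml file above.
HEAD = [
    (-1, "Conv"), (-1, "nn.Upsample"), ([-1, 3], "Concat"), (-1, "C3"),
    (-1, "Conv"), (-1, "nn.Upsample"), ([-1, 2], "Concat"), (-1, "C3"),
    (-1, "Conv"), ([-1, 10], "Concat"), (-1, "C3"),
    (-1, "Conv"), ([-1, 6], "Concat"), (-1, "C3"),
    ([13, 16, 19], "Detect"),
]


def layer_table(head, first_head_index=6):
    """Map absolute layer index to module name (backbone is 0-4, SPPF is 5)."""
    table = {i: f"transnext[{i}]" for i in range(5)}
    table[5] = "SPPF"
    for offset, (_, name) in enumerate(head):
        table[first_head_index + offset] = name
    return table


def resolve_sources(head, first_head_index=6):
    """For each multi-input head layer, list the modules it reads from."""
    table = layer_table(head, first_head_index)
    resolved = {}
    for offset, (source, _) in enumerate(head):
        if isinstance(source, list):
            index = first_head_index + offset
            resolved[index] = [table[index - 1] if s == -1 else table[s] for s in source]
    return resolved


for index, sources in resolve_sources(HEAD).items():
    print(f"layer {index:2d} ({layer_table(HEAD)[index]}) reads from {sources}")
```

If you swap in a backbone that returns a different number of feature maps, or insert layers between the backbone and the head, rerun a check like this before training, because every absolute index in the head shifts.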

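6. Record of a Successful Run

Below is a screenshot of a successful run. One epoch of training had completed; the image was too large to capture the second epoch.

7. Summary

That concludes the main content of this article. I recommend my YOLOv5 improvement column, which was opened recently and has an average quality score of 97. I will keep reproducing papers from the latest top conferences and will also supplement some of the older improvement mechanisms. If this article helped you, subscribe to the column and follow along for further updates.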