ClickHouse/Doris vs Elasticsearch谁更胜一筹?

前言

我之前在ClickHouse vs Doris 读写性能比较 一文中,初步做了一下ClickHouse和Doris的读写性能比较,但由于数据样本比较小,且未发挥出所有硬件资源的性能,因此进行了第二轮压测。

本轮压测与上一轮的区别在于:

- 新加入了Elasticsearch搜索引擎

- ClickHouse和Doris均采用多并发写入,发挥最大性能

- 本轮测试得到了飞轮科技多位技术专家的指导,对Doris进行了一定的参数调优

环境准备(硬件机器配置同上一篇文章)

clickhouse集群

| 节点 | IP | 分片编号 | 副本编号 |

|---|---|---|---|

| ck93 | 192.168.101.93 | 1 | 1 |

| ck94 | 192.168.101.94 | 1 | 2 |

| ck96 | 192.168.101.96 | 2 | 1 |

| ck97 | 192.168.101.97 | 2 | 2 |

doris集群

| 角色 | 节点 | IP |

|---|---|---|

| FE | ck94 | 192.168.101.94 |

| BE | ck93 | 192.168.101.93 |

| BE | ck94 | 192.168.101.94 |

| BE | ck96 | 192.168.101.96 |

| BE | ck97 | 192.168.101.97 |

| BE | ck98 | 192.168.101.98 |

FE 配置:

meta_dir = /data01/doris/fe

http_port = 58030

rpc_port = 59020

query_port = 59030

edit_log_port = 59010

priority_networks = 192.168.101.0/24

enable_single_replica_load = true

max_routine_load_task_concurrent_num = 50

max_routine_load_task_num_per_be = 10BE配置

be_port = 59060

webserver_port = 58040

heartbeat_service_port = 59050

brpc_port = 58060

priority_networks = 192.168.101.0/24

storage_root_path = /data01/doris/be

enable_single_replica_load = true

inverted_index_compaction_enable = true

scan_thread_nice_value = 5ES集群

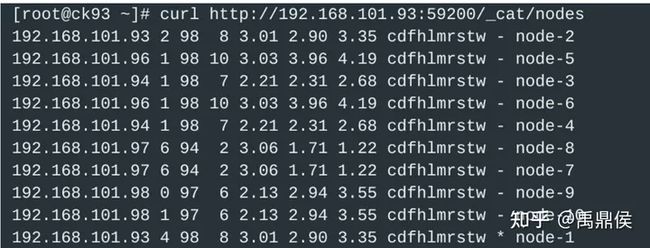

计10个节点。

| 节点 | 节点 | 服务 |

|---|---|---|

| node1 | ck93 | 192.168.101.93:59200 |

| node2 | ck93 | 192.168.101.93:59201 |

| node3 | ck94 | 192.168.101.94:59200 |

| node4 | ck94 | 192.168.101.94:59201 |

| node5 | ck96 | 192.168.101.96:59200 |

| node6 | ck96 | 192.168.101.96:59201 |

| node7 | ck97 | 192.168.101.97:59200 |

| node8 | ck97 | 192.168.101.97:59201 |

| node9 | ck98 | 192.168.101.98:59200 |

| node10 | ck98 | 192.168.101.98:59201 |

数据准备

数据源

4个节点clickhouse-server日志,每个节点约2.5亿数据量,共计10亿数据,原始数据kafka压缩后为155GB。

建表语句

clickhouse

--- 本地表

create table log_test on cluster abc (

`@@id` String NOT NULL CODEC(ZSTD(1)),

`@message` String NOT NULL CODEC(ZSTD(1)) ,

`@filehashkey` String NOT NULL CODEC(ZSTD(1)) ,

`@collectiontime` DateTime64(3) CODEC(DoubleDelta, LZ4),

`@hostname` LowCardinality(String) NOT NULL CODEC(ZSTD(1)) ,

`@path` String NOT NULL CODEC(ZSTD(1)) ,

`@rownumber` Int64 NOT NULL ,

`@seq` Int64 NOT NULL ,

`@ip` LowCardinality(String) NOT NULL CODEC(ZSTD(1)) ,

`@topic` LowCardinality(String) NOT NULL CODEC(ZSTD(1)) ,

`@timestamp` DateTime64(3) CODEC(DoubleDelta, LZ4),

INDEX message_idx `@message` TYPE ngrambf_v1(5, 65535, 1, 0) GRANULARITY 1,

PROJECTION p_cnt (

SELECT `@ip`, `@path`, count() GROUP BY `@ip`, `@path`

)

)ENGINE = ReplicatedMergeTree

PARTITION BY toYYYYMMDD(`@timestamp`)

ORDER BY (`@timestamp`, `@ip`, `@path`);

--- 分布式表

create table dist_log_test on cluster abc as log_test engine = Distributed('abc', 'default', 'log_test')Doris

CREATE TABLE demo.log_test (

`@@id` CHAR(34) NOT NULL ,

`@message` STRING NOT NULL ,

`@filehashkey` CHAR(34) NOT NULL,

`@collectiontime` DATETIME(3) ,

`@hostname` VARCHAR(20) NOT NULL ,

`@path` VARCHAR(256) NOT NULL ,

`@rownumber` BIGINT NOT NULL ,

`@seq` BIGINT NOT NULL,

`@ip` CHAR(16) NOT NULL ,

`@topic` CHAR(16) NOT NULL,

`@timestamp` DATETIME(3),

INDEX idx_message_inv(`@message`) USING INVERTED PROPERTIES(

"parser" = "unicode",

"parser_mode" = "fine_grained",

"support_phrase" = "true"

)

)

DUPLICATE KEY(`@@id`)

PARTITION BY RANGE(`@timestamp`) ()

DISTRIBUTED BY HASH(`@@id`) BUCKETS AUTO

ROLLUP (

r1 (`@ip`, `@path`)

)

PROPERTIES (

"replication_allocation" = "tag.location.default: 2",

"dynamic_partition.enable" = "true",

"dynamic_partition.time_unit" = "MONTH",

"dynamic_partition.start" = "-12",

"dynamic_partition.create_history_partition" = "true",

"dynamic_partition.history_partition_num" = "12",

"dynamic_partition.end" = "3",

"dynamic_partition.prefix" = "p",

"compression"="zstd",

"compaction_policy"="time_series",

"enable_single_replica_compaction"="true"

);写入配置

clickhouse_sinker

开启5个sinker并发,向clickhouse写入数据。配置如下:

{

"clickhouse": {

"cluster": "abc",

"db": "default",

"hosts": [

["192.168.101.93", "192.168.101.94"],

["192.168.101.96", "192.168.101.97"]

],

"port": 19000,

"username": "default",

"password": "123456",

"maxOpenConns": 5,

"retryTimes": 0

},

"kafka": {

"brokers": "192.168.101.94:29092,192.168.101.96:29092,192.168.101.98:29092"

},

"tasks": [{

"name": "log_test",

"topic": "log_test",

"earliest": true,

"consumerGroup": "test_2024001",

"parser": "fastjson",

"tableName": "log_test",

"autoSchema": true,

"dynamicSchema":{

"enable": false

},

"prometheusSchema": false,

"bufferSize": 1000000,

"flushInterval": 10

}],

"logLevel": "info"

}routine_load

CREATE ROUTINE LOAD demo.log_test_10 ON log_test

COLUMNS(`@message`,`@@id`,`@filehashkey`,`@collectiontime`,`@hostname`,`@path`,`@rownumber`,`@seq`,`@ip`,`@topic`,`@timestamp`)

PROPERTIES

(

"desired_concurrent_number"="10",

"max_error_number" = "500",

"max_batch_interval" = "20",

"max_batch_rows" = "1000000",

"max_batch_size" = "536870912",

"strict_mode" = "false",

"format" = "json"

)

FROM KAFKA

(

"kafka_broker_list" = "192.168.101.94:29092,192.168.101.96:29092,192.168.101.98:29092",

"kafka_topic" = "log_test",

"kafka_partitions" = "0,1,2,3,4,5",

"kafka_offsets" = "0,0,0,0,0,0"

);ElasticSearch settings and mapping

{

"order": 200,

"index_patterns": [

"estest_chenyc_log_*"

],

"settings": {

"index": {

"codec": "best_compression",

"refresh_interval": "10s",

"number_of_shards": "10",

"translog": {

"sync_interval": "60s",

"durability": "async"

},

"merge": {

"scheduler": {

"max_thread_count": "10"

},

"policy": {

"max_merged_segment": "5g"

}

},

"unassigned": {

"node_left": {

"delayed_timeout": "15m"

}

},

"number_of_replicas": "1"

}

},

"mappings": {

"dynamic": true,

"dynamic_templates": [

{

"message_field": {

"path_match": "@message",

"mapping": {

"norms": false,

"type": "text"

},

"match_mapping_type": "string"

}

},

{

"string_fields": {

"mapping": {

"type": "keyword"

},

"match_mapping_type": "string",

"match": "*"

}

}

],

"properties": {

"@message": {

"norms": false,

"type": "text"

},

"@seq": {

"type": "long"

},

"@timestamp": {

"type": "date"

},

"@topic": {

"type": "keyword"

},

"@filehashkey": {

"type": "keyword"

},

"@@id": {

"type": "keyword"

},

"@rownumber": {

"type": "long"

},

"@ip": {

"type": "keyword"

},

"@collectiontime": {

"type": "date"

},

"@hostname": {

"type": "keyword"

},

"@path": {

"type": "keyword"

}

}

},

"aliases": {}

}资源配置:

(为了最大发挥es的写入性能,重新生成了一份数据,设置kafka的topic partition数为 30)

中台配置:

写入性能

clickhouse

| 并发数 | 资源占用(sinker) | 资源占用(clickhouse) | 数据总量 | 写入速度 | 数据大小 | 压缩后大小(含副本) |

|---|---|---|---|---|---|---|

| 5 | 15vcpu|25G | 5vcpu|6G | 10亿 | 1205k/s | 155GB | 95GB |

| 5(有大查询) | 10vcpu|16G | 37vcpu|8G | 10亿 | 890k/s | 155GB | 95GB |

Doris

| 并发数 | 资源占用(be) | 数据总量 | 写入速度 | 数据大小 | 压缩后大小(含副本) |

|---|---|---|---|---|---|

| 5 | 2~8vcpu|9GB | 10亿 | 532k/s | 155GB | 161GB |

| 10 | 2~11vcpu|10GB | 10亿 | 559k/s | 155GB | 161GB |

| 15 | 5-15vcpu|10GB | 10亿 | 675k/s | 155GB | 161GB |

| 20 | 3~12vcpu|9GB | 10亿 | 609k/s | 155GB | 161GB |

| 15并发,有大查询 | 16~29vcpu|12GB | 10亿 | 490k/s | 155GB | 161GB |

cpu负载各个节点有所区别,部分节点cpu负载比较高(应该是被写入数据的节点),剩余节点CPU负载都维持在一个相对低的水平,和ClickHouse相当。

ElasticSearch

使用擎创科技内部的日志精析产品启动flink存储任务写入数据。

| 并发数 | ES节点资源占用 | 数据总量 | 写入速度 | 数据大小 | 压缩后大小(含副本) |

|---|---|---|---|---|---|

| 30 | 7vcpu|38GB | 10亿 | 106k/s | 155GB | 281GB |

| 30(有大查询) | 11vcpu|39GB | 10亿 | 75k/s | 155GB | 281GB |

写入结论

ES:CK:Doris写入速度比为 1:6: 12。Doris写入性能是ES的6倍,ck是ES的12倍。

在本次测试中,数据均保留两副本,存储相同的数据,得出压缩比为ES:CK:Doris 为 1: 0.35: 0.57。即存储相同的数据量,Doris只需要ES近一半的存储资源,ClickHouse仅需ES三分之一的存储资源即可满足要求。

查询性能

依然使用上次的查询场景:

| 场景 | 说明 |

|---|---|

| 场景1 | 根据ip和path维度统计每个ip下path的个数 |

| 场景2 | 统计每个ip下的Error日志的数量 |

| 场景3 | 统计日志中出现Debug 和 query_id 为 cdb56920-2d39-4e6d-be99-dd6ef24cc66a 的条数 |

| 场景4 | 统计出现Trace和gauge.apm_service_span出现的次数 |

| 场景5 | 查询Error中出现READ_ONLY的日志明细 |

| 场景6 | 查询日志中出现“上海”关键字的明细 |

查询语句:

| 场景 | 数据库 | SQL语句 |

|---|---|---|

| 场景1 | clickhouse | SELECT @ip, @path, count() FROM dist_log_test GROUP BY @ip,@path |

| 场景1 | Doris | SELECT @ip, @path, count() FROM log_test GROUP BY @ip,@path |

| 场景1 | ElasticSearch | |stats count by @ip,@path |

| 场景2 | clickhouse | SELECT @ip, count() FROM dist_log_test WHERE @message LIKE '%Error%' GROUP BY @ip |

| 场景2 | Doris | SELECT @ip, count() FROM log_test WHERE @message MATCH_ANY 'Error' GROUP BY @ip |

| 场景2 | ElasticSearch | Error | stats count by @ip |

| 场景3 | clickhouse | SELECT count() FROM dist_log_test WHERE @message LIKE '%Debug%' AND @message LIKE '%cdb56920-2d39-4e6d-be99-dd6ef24cc66a%' |

| 场景3 | Doris | SELECT count() FROM log_test WHERE @message MATCH_ALL 'Debug cdb56920-2d39-4e6d-be99-dd6ef24cc66a' |

| 场景3 | ElasticSearch | Debug AND cdb56920-2d39-4e6d-be99-dd6ef24cc66a | stats by count |

| 场景4 | clickhouse | SELECT count() FROM dist_log_test WHERE @message LIKE '%Trace%' AND @message LIKE '%gauge.apm_service_span%' |

| 场景4 | Doris | SELECT count() FROM log_test WHERE @message MATCH_ALL 'Trace gauge.apm_service_span' |

| 场景4 | ElasticSearch | Trace AND gauge.apm_service_span |stats by count |

| 场景5 | clickhouse | SELECT * FROM dist_log_test WHERE @message LIKE '%Error%' AND @message LIKE '%READ_ONLY%' |

| 场景5 | Doris | SELECT * FROM log_test WHERE @message MATCH_ALL 'Error READ_ONLY' |

| 场景5 | ElasticSearch | Error AND READ_ONLY |

| 场景6 | clickhouse | SELECT * FROM dist_log_test WHERE @message LIKE '%上海%' |

| 场景6 | Doris | SELECT * FROM log_test WHERE @message MATCH_ANY '上海' |

| 场景6 | ElasticSearch | 上海 |

查询结果如下所示:

| 数据库 | 场景1 | 场景2 | 场景3 | 场景4 | 场景5 | 场景6 | |

|---|---|---|---|---|---|---|---|

| clickhouse | 无干扰查询 有写入时查询 |

0.064 0.181 |

16.284 22.156 |

1.688 3.994 |

12.879 35.167 |

16.065 33.792 |

14.694 31.244 |

| Doris | 无干扰查询 有写入时查询 |

7.08 9.78 |

2.56 3.28 |

0.09 0.37 |

0.48 0.75 |

0.33 0.37 |

0.48 0.56 |

| elasticsearch | 无干扰查询 有写入时查询 |

9.09 9.25 |

1.54 6.22 |

0.556 1.68 |

0.296 3.36 |

0.248 0.828 |

0.49 1.09 |

结论

在给出的6个场景中,除了场景1,ClickHouse凭借projection带来的预聚合加速明显更快之外,其余场景ClickHouse均慢于doris和ES,在全⽂检索的查询场景,落后近10倍以上。

得益于全⽂检索的加持,Doris与ES在模糊查询的场景下不分轩轾,各有千秋,但均比clickhouse快很多。

在数据库有⾼速写⼊时,三者都出现了⼀定的查询性能下降。但从实际效果来看,doris和ES因为本身就比较快,因此影响不是很大。ClickHouse的影响⽐较明显,在原本就⽐较慢的基础上,⼜有了近乎3倍的查询时间消耗。

整体结论

- 写入性能: clickhouse > doris >> es

- 数据压缩: clickhouse > doris > es

- 查询性能:

- 全文检索:Doris ≈ es > clickhouse

- 聚合查询: clickhouse > doris ≈ es

在并发足够的情况下,clickhouse能轻松满足每秒100w+数据的写入。Doris写入性能相比之下要减半,并且不会随着并发数的增加而增加(并发数过多,反而写入更慢了)。但总体可以达到60w+每秒左右。三者之中,ES写入速度最慢,峰值仅能达到10w每秒左右。

在有大查询时,三者均对写入有一定影响,会造成写入性能下降25%左右。

压缩比方面,clickhouse和Doris均有比较优秀的压缩表现,而ES不仅没有压缩,反而数据有所膨胀。Doris相比与ES,有着近2倍的压缩比,而clickhouse更是达到了3倍之多。

查询方面,在聚合查询场景,clickhouse明显要更优秀,Doris和ES相对弱一些。

模糊查询场景,Doris与ES性能相当,都明显优于clickhouse。

本专栏知识点是通过<零声教育>的系统学习,进行梳理总结写下文章,对C/C++课程感兴趣的读者,可以点击链接,查看详细的服务:C/C++Linux服务器开发/高级架构师