basic CNN

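Contents

- Review
- Convolutional neural networks
  - Convolution
  - Convolution kernel
  - Convolution process
  - Formula for the image size after convolution
    - Code
  - padding
    - Code
  - Stride
    - Code
  - MaxPooling
    - Code
- A simple convolutional neural network
  - Classifying the MNIST dataset with a convolutional neural network
  - How to use the GPU
  - Code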

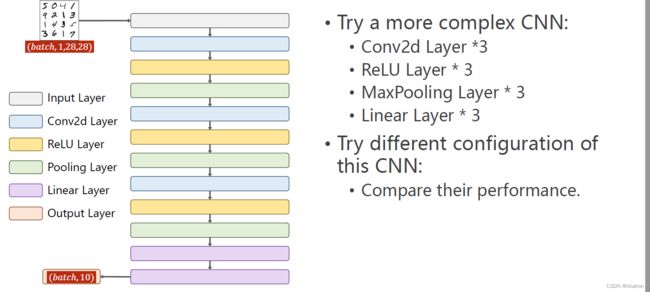

- Exercise

Review

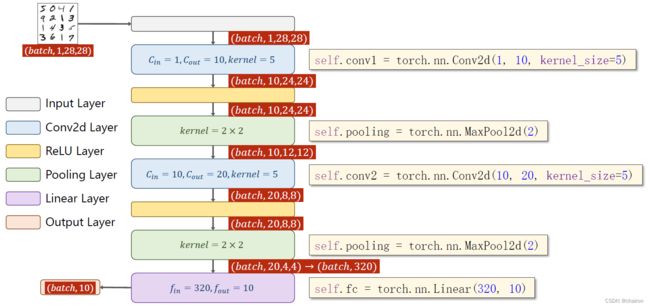

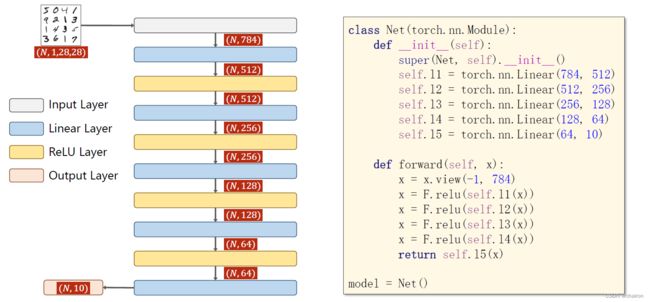

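A network built entirely from linear layers, like the one shown here, is a fully connected network.

For image data, convolutional neural networks are the more common choice.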

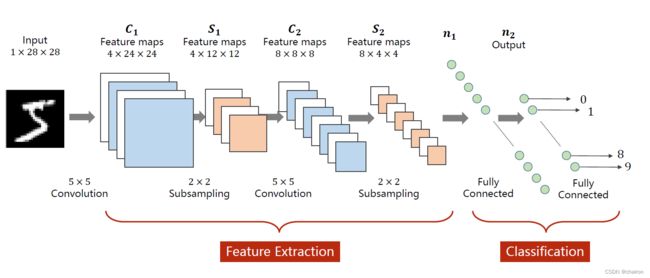

Convolutional neural networks

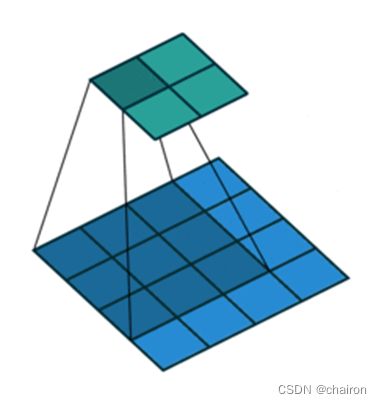

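2D convolution extracts image features automatically; the output of a convolution is called a feature map. After feature extraction, fully connected layers form a classifier that performs the classification.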

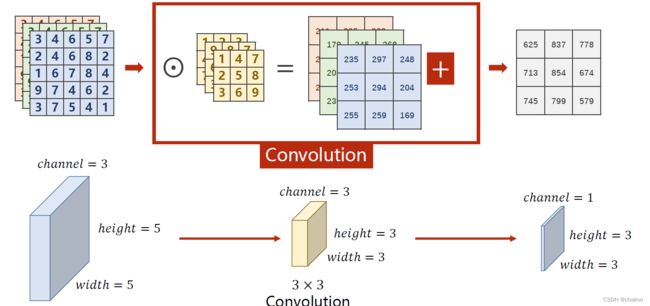

Convolution

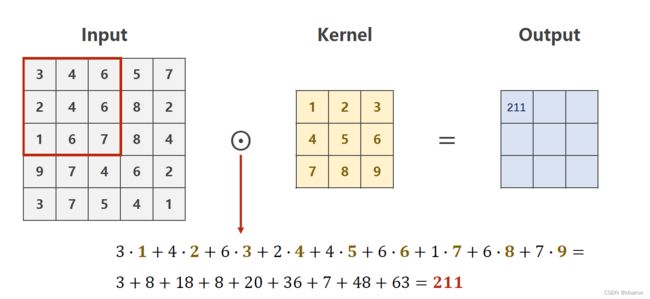

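Convolution is the operation of taking the inner product between the convolution kernel and each data window of the image. In essence it extracts features from different frequency bands of the image, on the same principle as the Gaussian blur kernel in image processing.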
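The window-by-window inner product described above can be sketched as follows. This is a minimal illustration, not the article's code: the helper name `conv2d_naive`, the 5x5 test image and the vertical-edge kernel are assumptions chosen for the example. Note that PyTorch's `conv2d` does not flip the kernel, so it computes exactly this sliding inner product.

```python
import torch
import torch.nn.functional as F

def conv2d_naive(image, kernel):
    """Slide `kernel` over `image` (both 2D tensors) and take inner products."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = torch.zeros(out_h, out_w)
    for i in range(out_h):
        for j in range(out_w):
            window = image[i:i + kh, j:j + kw]   # current data window
            out[i, j] = (window * kernel).sum()  # inner product with the kernel
    return out

# Hypothetical inputs: a 5x5 ramp image and a simple vertical-edge kernel
image = torch.arange(25, dtype=torch.float32).view(5, 5)
kernel = torch.tensor([[1., 0., -1.],
                       [1., 0., -1.],
                       [1., 0., -1.]])

naive = conv2d_naive(image, kernel)
builtin = F.conv2d(image.view(1, 1, 5, 5), kernel.view(1, 1, 3, 3)).view(3, 3)

print(naive)
print(torch.allclose(naive, builtin))
```

The loop version is far slower than the built-in operator; it is only meant to make the definition concrete.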

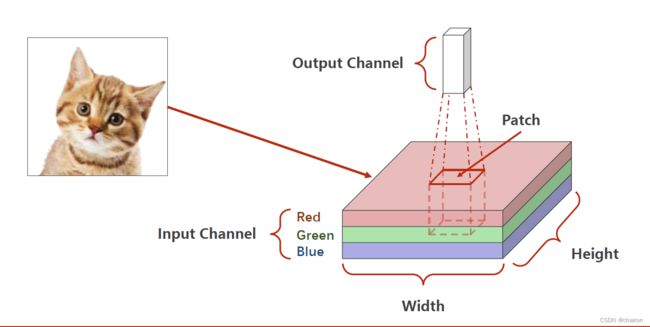

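Convolution kernel

- A neuron carrying a fixed set of weights, which can extract a specific feature (for example object contours or color intensity)

- Typical kernel sizes: 3x3, 5x5, 7x7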

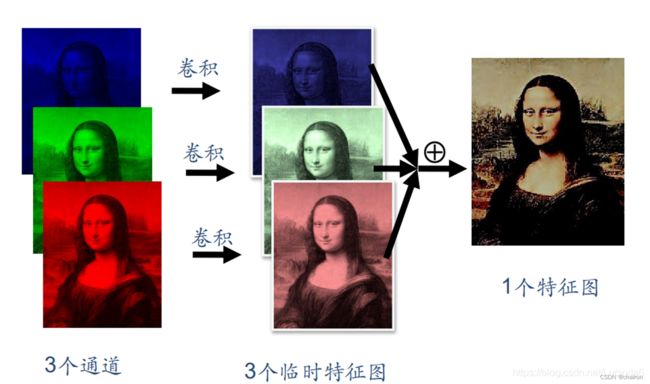

- A kernel has the same number of channels as the image being convolved

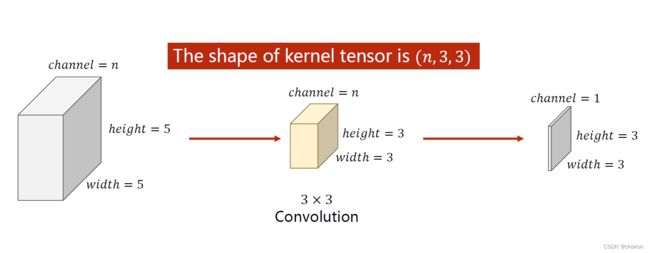

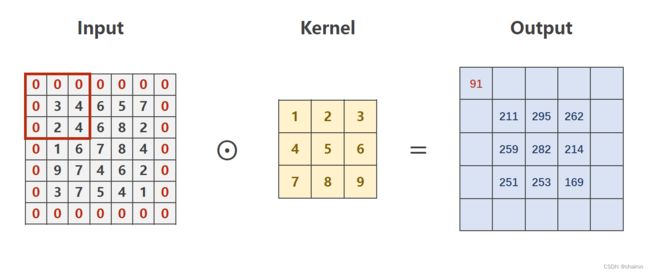

Convolution process

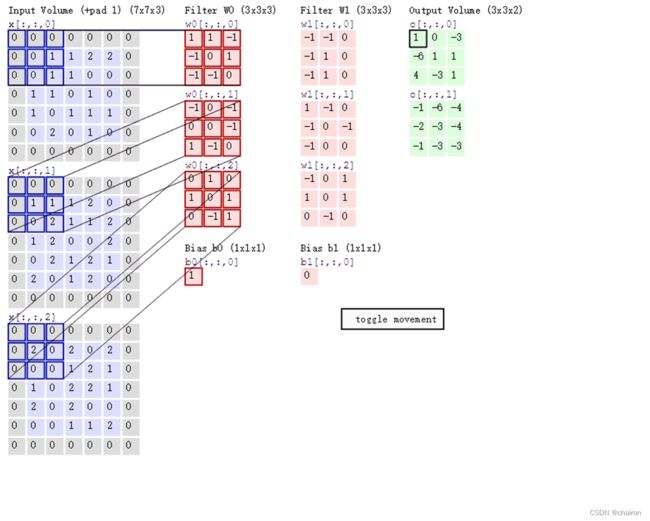

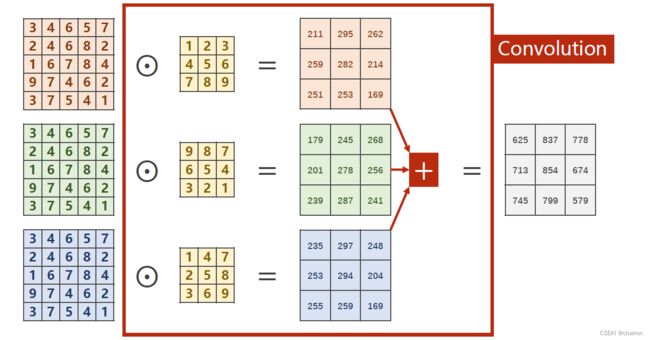

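Convolution with an n-channel input:

To get M output channels, M kernels are needed:

Note: each kernel must have as many channels as the input, and the number of kernels must equal the number of output channels.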
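The channel rule above can be checked with a small sketch. The sizes (`n = 3`, `M = 4`, an 8x8 input) are arbitrary values assumed for illustration. The weight tensor holds M kernels with n channels each, and one output channel is the sum of the n single-channel convolutions.

```python
import torch
import torch.nn.functional as F

n, M, k = 3, 4, 3                  # input channels, output channels, kernel size (assumed values)
x = torch.randn(1, n, 8, 8)        # one n-channel input
weight = torch.randn(M, n, k, k)   # M kernels, each with n channels

out = F.conv2d(x, weight)          # shape (1, M, 6, 6)

# Rebuild output channel 0 by hand: convolve each input channel with the
# matching channel of kernel 0, then add the n single-channel results.
manual = sum(
    F.conv2d(x[:, c:c + 1], weight[0:1, c:c + 1]) for c in range(n)
)

print(out.shape)
print(torch.allclose(out[:, 0:1], manual, atol=1e-4))
```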

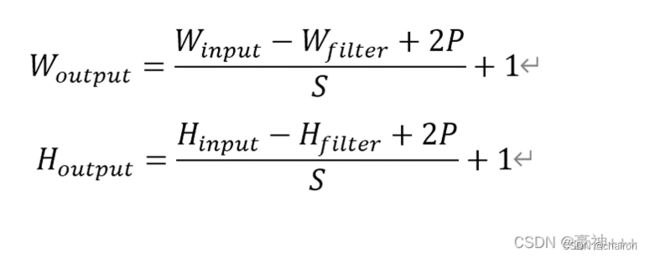

Formula for the image size after convolution:
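For reference, the standard output-size relation for a convolution with dilation 1 is written out here. The symbols are chosen for this sketch and are not taken from the original: K is the kernel size, P the padding on each side, and S the stride.

```latex
W_{\text{out}} = \left\lfloor \frac{W_{\text{in}} + 2P - K}{S} \right\rfloor + 1,
\qquad
H_{\text{out}} = \left\lfloor \frac{H_{\text{in}} + 2P - K}{S} \right\rfloor + 1
```

With a 100x100 input, K = 3, P = 0 and S = 1, this gives 100 - 3 + 1 = 98 in each dimension.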

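Code

import torch

in_channels, out_channels = 5, 10
height, width = 100, 100
kernel_size = 3
batch_size = 1

# Random input of shape (N, C, H, W), drawn from the standard normal distribution (mean 0, std 1)
input = torch.randn(batch_size, in_channels, height, width)
conv_layer = torch.nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size)
output = conv_layer(input)

print(input.shape)              # (batch, in_channels, H, W)
print(output.shape)             # (batch, out_channels, H - 2, W - 2)
print(conv_layer.weight.shape)  # (out_channels, in_channels, kernel_size, kernel_size)

Output:

torch.Size([1, 5, 100, 100])
torch.Size([1, 10, 98, 98])
torch.Size([10, 5, 3, 3])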

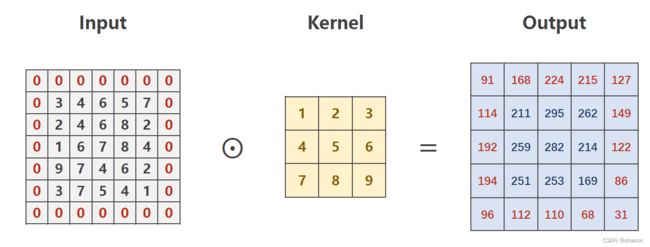

padding

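After a convolution the image size (W, H) may change, and the resulting feature map may not be the size we want; for example, we may want the feature map to keep the size of the input. In that case padding can be used to fill in values around the border of the input image; padding=1 adds one ring of zeros.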
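One way to see what `padding=1` does is to add the ring of zeros by hand and compare. The sketch below uses random data and a random 3x3 kernel as assumed inputs. `F.pad` takes the padding as (left, right, top, bottom) for the last two dimensions.

```python
import torch
import torch.nn.functional as F

x = torch.randn(1, 1, 5, 5)        # assumed 5x5 single-channel input
weight = torch.randn(1, 1, 3, 3)   # assumed 3x3 kernel

# Let conv2d pad implicitly ...
implicit = F.conv2d(x, weight, padding=1)

# ... or add the ring of zeros first and convolve without padding.
x_padded = F.pad(x, (1, 1, 1, 1), mode="constant", value=0.0)
explicit = F.conv2d(x_padded, weight)

print(x_padded.shape)   # the 5x5 input becomes 7x7
print(implicit.shape)   # the output is 5x5 again
print(torch.allclose(implicit, explicit, atol=1e-5))
```

For an odd kernel size k with stride 1, choosing padding = k // 2 keeps the feature map the same size as the input.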

Code

import torch

input = [3, 4, 6, 5, 7,
         2, 4, 6, 8, 2,
         1, 6, 7, 8, 4,
         9, 7, 4, 6, 2,
         3, 7, 5, 4, 1]
input = torch.Tensor(input).view(1, 1, 5, 5)  # (batch, channel, H, W)

# padding=1 adds one ring of zeros: the 5x5 input is treated as 7x7,
# so the 3x3 convolution returns a 5x5 output
conv_layer = torch.nn.Conv2d(1, 1, kernel_size=3, padding=1, bias=False)

# Overwrite the randomly initialized weights with a fixed 3x3 kernel
kernel = torch.Tensor([1, 2, 3, 4, 5, 6, 7, 8, 9]).view(1, 1, 3, 3)
conv_layer.weight.data = kernel.data

output = conv_layer(input)
print(output)

Output:

tensor([[[[ 91., 168., 224., 215., 127.],
          [114., 211., 295., 262., 149.],
          [192., 259., 282., 214., 122.],
          [194., 251., 253., 169.,  86.],
          [ 96., 112., 110.,  68.,  31.]]]], grad_fn=<ConvolutionBackward0>)

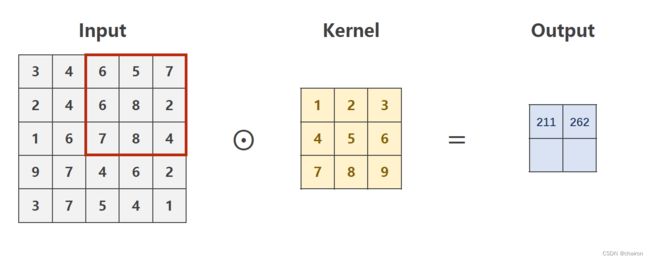

Stride

Stride: the step size, i.e. the number of cells the kernel moves at each step.
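A quick way to build intuition for stride is to compare it with subsampling. In the sketch below (using the 5x5 values and the 1..9 kernel from this section's examples), a stride-2 convolution returns the same values as a stride-1 convolution with every second row and column kept.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[3., 4., 6., 5., 7.],
                  [2., 4., 6., 8., 2.],
                  [1., 6., 7., 8., 4.],
                  [9., 7., 4., 6., 2.],
                  [3., 7., 5., 4., 1.]]).view(1, 1, 5, 5)
kernel = torch.arange(1., 10.).view(1, 1, 3, 3)   # 1..9 laid out as a 3x3 kernel

dense = F.conv2d(x, kernel)              # stride=1 -> 3x3 output
strided = F.conv2d(x, kernel, stride=2)  # stride=2 -> 2x2 output

# Keeping every second row and column of the stride-1 result gives the same values.
subsampled = dense[:, :, ::2, ::2]

print(strided)
print(torch.allclose(strided, subsampled))
```

The strided version computes only the windows it needs, so it does less work than convolving densely and discarding results.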

Code

import torch

input = [3, 4, 6, 5, 7,
         2, 4, 6, 8, 2,
         1, 6, 7, 8, 4,
         9, 7, 4, 6, 2,
         3, 7, 5, 4, 1]
input = torch.Tensor(input).view(1, 1, 5, 5)  # (batch, channel, H, W)

# stride=2: the kernel moves two cells at a time, so the 5x5 input gives a 2x2 output
conv_layer = torch.nn.Conv2d(1, 1, kernel_size=3, stride=2, bias=False)

# Overwrite the randomly initialized weights with a fixed 3x3 kernel
kernel = torch.Tensor([1, 2, 3, 4, 5, 6, 7, 8, 9]).view(1, 1, 3, 3)
conv_layer.weight.data = kernel.data

output = conv_layer(input)
print(output)

Output:

tensor([[[[211., 262.],
          [251., 169.]]]], grad_fn=<ConvolutionBackward0>)

MaxPooling

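MaxPooling: downsampling. With kernel_size=2 the W and H of the image are halved. (kernel_size must be specified; stride defaults to kernel_size, so here stride=2.)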
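For a 2x2 window with stride 2, max pooling simply takes the largest value in each non-overlapping 2x2 block. The sketch below does this by hand with a reshape and compares it with `F.max_pool2d`; it uses the same 4x4 values as the example in this section.

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[3., 4., 6., 5.],
                  [2., 4., 6., 8.],
                  [1., 6., 7., 8.],
                  [9., 7., 4., 6.]])

# View the 4x4 grid as 2x2 blocks:
# axes are (block_row, row_in_block, block_col, col_in_block)
blocks = x.view(2, 2, 2, 2)
manual = blocks.amax(dim=(1, 3))   # max over the two within-block axes

builtin = F.max_pool2d(x.view(1, 1, 4, 4), kernel_size=2).view(2, 2)

print(manual)
print(torch.equal(manual, builtin))
```

The reshape trick only works when the height and width are exact multiples of the window size; the built-in layer handles the general case.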

Code

import torch

input1 = [3, 4, 6, 5,
          2, 4, 6, 8,
          1, 6, 7, 8,
          9, 7, 4, 6]
input1 = torch.Tensor(input1).view(1, 1, 4, 4)  # (batch, channel, H, W)

# kernel_size=2; stride defaults to kernel_size, so the 4x4 input becomes 2x2
maxpooling_layer = torch.nn.MaxPool2d(kernel_size=2)
output1 = maxpooling_layer(input1)
print(output1)

Output:

tensor([[[[4., 8.],
          [9., 8.]]]])

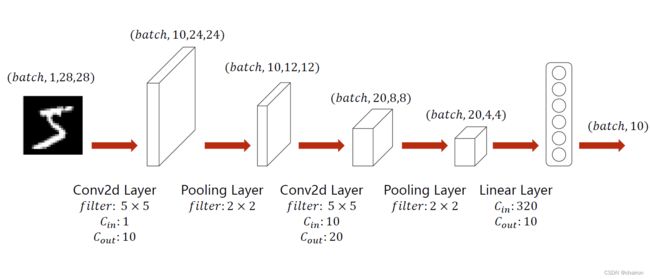

A simple convolutional neural network

Classifying the MNIST dataset with a convolutional neural network

import torch
import torch.nn.functional as F

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
        self.pooling = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(320, 10)

    def forward(self, x):
        # x has shape (n, 1, 28, 28)
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))  # -> (n, 10, 12, 12)
        x = F.relu(self.pooling(self.conv2(x)))  # -> (n, 20, 4, 4)
        x = x.view(batch_size, -1)               # flatten to (n, 320)
        x = self.fc(x)                           # -> (n, 10) class scores
        return x

model = Net()

Only the model part of the earlier code needs to change.

Try making the change yourself, and plot the loss curve!
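To see where the 320 input features of the fc layer come from, it can help to push a dummy image through the layers one at a time and look at the shapes. The sketch below rebuilds the same layers outside the class; the random 28x28 input is an assumption standing in for one MNIST image.

```python
import torch
import torch.nn.functional as F

conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
pooling = torch.nn.MaxPool2d(2)

x = torch.randn(1, 1, 28, 28)   # dummy input with the shape of one MNIST image

a = conv1(x)                    # 28 - 5 + 1 = 24  -> (1, 10, 24, 24)
b = F.relu(pooling(a))          # 24 / 2 = 12      -> (1, 10, 12, 12)
c = conv2(b)                    # 12 - 5 + 1 = 8   -> (1, 20, 8, 8)
d = F.relu(pooling(c))          # 8 / 2 = 4        -> (1, 20, 4, 4)
flat = d.view(1, -1)            # 20 * 4 * 4 = 320 -> (1, 320)

for name, t in [("conv1", a), ("pool1", b), ("conv2", c), ("pool2", d), ("flat", flat)]:
    print(name, tuple(t.shape))
```

The same trick is useful when changing kernel sizes or adding layers: run one dummy batch and read off the flattened size instead of computing it by hand.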

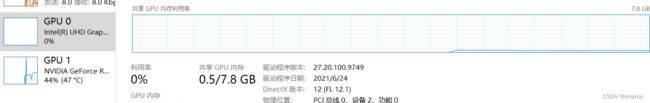

How to use the GPU

- Define the device

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

- Convert all of the model's parameters and buffers to CUDA tensors

model.to(device)

- Send the data to the GPU

inputs, target = inputs.to(device), target.to(device)
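The three steps above can be put together in a minimal sketch. The tiny `Linear` model and the random batch are stand-ins assumed for illustration, not the article's CNN; the code falls back to the CPU when no GPU is available.

```python
import torch
import torch.nn.functional as F

# 1. Define the device (falls back to the CPU when CUDA is unavailable)
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# 2. Move the model: Module.to() moves parameters and buffers in place
model = torch.nn.Linear(4, 2)   # hypothetical stand-in for the CNN
model.to(device)

# 3. Move the data: Tensor.to() returns a new tensor, so reassign the result
inputs = torch.randn(8, 4)
target = torch.randint(0, 2, (8,))
inputs, target = inputs.to(device), target.to(device)

outputs = model(inputs)
loss = F.cross_entropy(outputs, target)
print(outputs.device, loss.item())
```

The model and every tensor passed to it must be on the same device; forgetting to reassign the result of `inputs.to(device)` is a common cause of device-mismatch errors.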

Code

import numpy as np
import torch
import matplotlib.pyplot as plt
from torch.utils.data import DataLoader  # for constructing the DataLoader
from torchvision import transforms       # for preprocessing the images
from torchvision import datasets         # for loading MNIST
import torch.nn.functional as F          # for the relu() function

batch_size = 64
transform = transforms.Compose([
    transforms.ToTensor(),                      # convert the PIL Image to a Tensor
    transforms.Normalize((0.1307,), (0.3081,))  # the parameters are mean and std respectively
])

train_dataset = datasets.MNIST(root='dataset', train=True, transform=transform, download=True)
test_dataset = datasets.MNIST(root='dataset', train=False, transform=transform, download=True)
train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(10, 20, kernel_size=5)
        self.pooling = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(320, 10)

    def forward(self, x):
        # x has shape (n, 1, 28, 28)
        batch_size = x.size(0)
        x = F.relu(self.pooling(self.conv1(x)))  # -> (n, 10, 12, 12)
        x = F.relu(self.pooling(self.conv2(x)))  # -> (n, 20, 4, 4)
        x = x.view(batch_size, -1)               # flatten to (n, 320)
        x = self.fc(x)
        return x

model = Net()

# To train on the GPU, uncomment the device-related lines here and in train() and test().
# Define the device: use the GPU if one is available, otherwise the CPU.
# device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
# Convert all of the model's parameters and buffers to CUDA tensors.
# model.to(device)

criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):
    running_loss = 0.0
    for batch_id, data in enumerate(train_loader, 0):
        inputs, target = data
        # Send the data to the GPU
        # inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_id % 300 == 299:
            # Report the mean loss over the last 300 batches
            print('[%d,%5d] loss: %.3f' % (epoch + 1, batch_id + 1, running_loss / 300))
            running_loss = 0.0

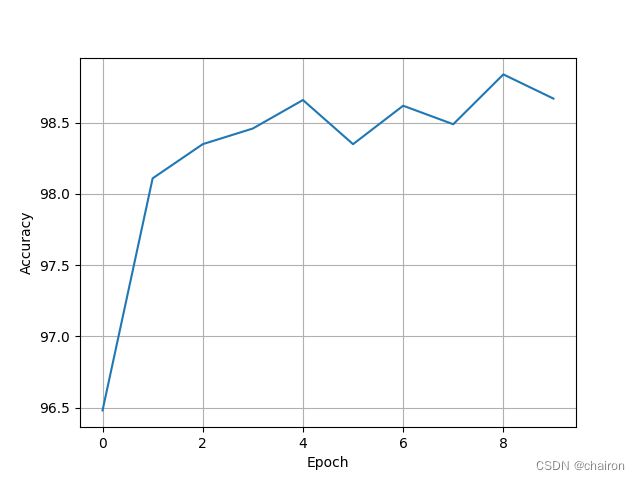

accuracy = []  # test accuracy (in percent) after each epoch

def test():
    correct = 0
    total = 0
    with torch.no_grad():  # no gradients are needed for evaluation
        for data in test_loader:
            inputs, target = data
            # Send the data to the GPU
            # inputs, target = inputs.to(device), target.to(device)
            outputs = model(inputs)
            predicted = torch.argmax(outputs, dim=1)  # index of the highest class score
            total += target.size(0)
            correct += (predicted == target).sum().item()
    print('Accuracy on test set : %d %% [%d/%d]' % (100 * correct / total, correct, total))
    accuracy.append(100 * correct / total)

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()

    # Plot the test accuracy against the epoch index
    x = np.arange(10)
    plt.plot(x, accuracy)
    plt.xlabel("Epoch")
    plt.ylabel("Accuracy")
    plt.grid()
    plt.show()

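Training results:

If you are training on the GPU, check the GPU utilization; if the GPU is in use, the training is running on it.

Exercise

Using the MNIST dataset, build a more complex convolutional neural network for classification. Use three each of the conv, relu, maxpooling and linear layers, tune the parameters yourself, and compare the training results.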