【RL】Bellman Optimality Equation(贝尔曼最优等式)

Lecture3: Optimal Policy and Bellman Optimality Equation

Definition of optimal policy

state value可以被用来去评估policy的好坏,如果:

v π 1 ( s ) ≥ v π 2 ( s ) for all s ∈ S v_{\pi_1}(s) \ge v_{\pi_2}(s) \;\;\;\;\; \text{for all } s \in S vπ1(s)≥vπ2(s)for all s∈S

那么, π 1 \pi_1 π1比 π 2 \pi_2 π2更优。

定义:

如果 v π ∗ ( s ) > v π v_{\pi^*}(s) > v_{\pi} vπ∗(s)>vπ对所有的 s s s都成立,那么policy π \pi π就是最优的,标记为 π ∗ \pi^* π∗。

BOE: Introduction

回顾元素形式的Bellman等式:

v ( s ) = ∑ a π ( a ∣ s ) ( ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v ( s ′ ) ) ∀ s ∈ S v(s)=\sum_a \pi(a | s) \left( \sum_rp(r | s, a) + \gamma \sum_{s'}p(s' | s, a)v(s') \right)\;\;\;\;\; \forall s \in S v(s)=a∑π(a∣s)(r∑p(r∣s,a)+γs′∑p(s′∣s,a)v(s′))∀s∈S

则,元素形式的Bellman最优等式(Bellman Optimiality Equation)为:

v ( s ) = m a x π ∑ a π ( a ∣ s ) ( ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v ( s ′ ) ) ∀ s ∈ S = m a x π ∑ a π ( a ∣ s ) q ( s ∣ a ) s ∈ S \begin{align*} v(s)&=max_{\pi} \sum_a \pi(a | s) \left( \sum_rp(r | s, a) + \gamma \sum_{s'}p(s' | s,a) v(s') \right)\;\;\;\;\; \forall s \in S \\ &=max_{\pi}\sum_a \pi(a | s)q(s | a) \;\;\;\;\; s \in S \end{align*} v(s)=maxπa∑π(a∣s)(r∑p(r∣s,a)+γs′∑p(s′∣s,a)v(s′))∀s∈S=maxπa∑π(a∣s)q(s∣a)s∈S

其中:

- p ( r ∣ s , a ) p(r | s, a) p(r∣s,a)、 p ( s ′ ∣ s , a ) p(s'| s, a) p(s′∣s,a)是已知的

- v ( s ) v(s) v(s)、 v ( s ′ ) v(s') v(s′)是未知需要计算的

矩阵形式的Bellman最优等式:

v = m a x π ( r π + γ P π v ) \mathbf{v} = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi} \mathbf{v}) v=maxπ(rπ+γPπv)

其中:

[ r π ] s : = ∑ a π ( a ∣ s ) ∑ r p ( r ∣ s , a ) r [ P π ] s , s ′ = p ( s ′ ∣ s ) : = ∑ a π ( a ∣ s ) ∑ s ′ p ( s ′ ∣ s , a ) [\mathbf{r}_{\pi}]_s := \sum_a \pi(a | s)\sum_rp(r | s, a)r \\ [\mathbf{P}_{\pi}]_{s, s'} = p(s' | s) := \sum_a \pi(a | s) \sum_{s'} p(s' | s, a) [rπ]s:=a∑π(a∣s)r∑p(r∣s,a)r[Pπ]s,s′=p(s′∣s):=a∑π(a∣s)s′∑p(s′∣s,a)

BOE: Maximization on the right-hand side

考虑元素形式Bellman最优等式:

v ( s ) = m a x π ∑ a π ( a ∣ s ) ( ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v ( s ′ ) ) ∀ s ∈ S = m a x π ∑ a π ( a ∣ s ) q ( s ∣ a ) s ∈ S \begin{align*} v(s)&=max_{\pi} \sum_a \pi(a | s) \left( \sum_rp(r | s, a) + \gamma \sum_{s'}p(s' | s,a) v(s') \right)\;\;\;\;\; \forall s \in S \\ &=max_{\pi}\sum_a \pi(a | s)q(s | a) \;\;\;\;\; s \in S \end{align*} v(s)=maxπa∑π(a∣s)(r∑p(r∣s,a)+γs′∑p(s′∣s,a)v(s′))∀s∈S=maxπa∑π(a∣s)q(s∣a)s∈S

因为 ∑ a π ( a ∣ s ) = 1 \sum_a \pi(a | s) = 1 ∑aπ(a∣s)=1,可得:

m a x π ∑ a π ( a ∣ s ) q ( s ∣ a ) = m a x a ∈ A ( s ) q ( s , a ) max_{\pi} \sum_a \pi(a | s)q(s | a) =max_{a \in \mathcal{A}(s)} q(s, a) maxπa∑π(a∣s)q(s∣a)=maxa∈A(s)q(s,a)

当策略最优时:

π ( a ∣ s ) = { 1 a = a ∗ 0 a ≠ a ∗ \pi(a | s) = \left\{\begin{matrix} 1 & a = a^*\\ 0 & a \ne a^* \end{matrix}\right. π(a∣s)={10a=a∗a=a∗

其中: a ∗ = argmax a q ( s , a ) a^* = \text{argmax}_a q(s, a) a∗=argmaxaq(s,a)

BOE: Rewtite as v = f ( v ) v=f(v) v=f(v)

考虑矩阵形式Bellman最优等式:

v = m a x π ( r π + γ P π v ) \mathbf{v} = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi} \mathbf{v}) v=maxπ(rπ+γPπv)

使:

f ( v ) : = m a x π ( r π + γ P π v ) f(\mathbf{v}) := max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi} \mathbf{v}) f(v):=maxπ(rπ+γPπv)

那么,Bellman最优等式就变为:

v = f ( v ) \mathbf{v} = f(\mathbf{v}) v=f(v)

其中:

[ f ( v ) ] s = m a x π ∑ a π ( a ∣ s ) q ( s , a ) s ∈ S [f(v)]_s = max_{\pi} \sum_a \pi(a | s)q(s, a) \;\;\;\;\; s\in S [f(v)]s=maxπa∑π(a∣s)q(s,a)s∈S

Contraction mapping theorem

Fixed Point:对于 x ∈ X x \in X x∈X,如果其为 f : X → X f: X \rightarrow X f:X→X的fixed point(不动点),那么其满足:

f ( x ) = x f(x)=x f(x)=x

Contraction Mapping(or Contraction Function): f f f是contraction mapping,如果:

∥ f ( x 1 ) − f ( x 2 ) ∥ ≤ γ ∥ x 1 − x 2 ∥ \| f(x_1) - f(x_2) \| \le \gamma \| x_1 - x_2 \| ∥f(x1)−f(x2)∥≤γ∥x1−x2∥

其中, γ ∈ ( 0 , 1 ) \gamma \in (0, 1) γ∈(0,1)

- γ \gamma γ必须严格小于1

- ∥ ⋅ ∥ \| \cdot\| ∥⋅∥可以是任何向量形式

对于任何满足 x = f ( x ) x = f(x) x=f(x)的等式形式,如果 f f f是contractnon mapping,那么:

- Existence:存在一个fixed point,满足 f ( x ∗ ) = x ∗ f(x^*) = x^* f(x∗)=x∗

- Uniquencess:fixed point x ∗ x^* x∗是唯一的

- Algorithm:考虑序列 { x k } \{ x_k \} {xk},其中 x k + 1 = f ( x k ) x_{k+1}=f(x_k) xk+1=f(xk),那么当 k → ∞ k \rightarrow \infty k→∞时 x k → x ∗ x_k \rightarrow x^* xk→x∗。而且,收敛速度时指数级。

例:

-

x = 0.5 x x=0.5x x=0.5x,其中 f ( x ) = 0.5 x f(x)=0.5x f(x)=0.5x而且 x ∈ R x \in \mathbb{R} x∈R。

x ∗ = 0 x^*=0 x∗=0是唯一的fixed point。其可以被迭代求解为:

x k + 1 = 0.5 x k x_{k+1}=0.5x_k xk+1=0.5xk -

x = A x x=Ax x=Ax,其中 f ( x ) = A x f(x)=Ax f(x)=Ax并且 x ∈ R n x \in \mathbb{R}^n x∈Rn, ∥ A ∥ < 1 \|A\| <1 ∥A∥<1。

x ∗ = 0 x^*=0 x∗=0是唯一的fixed point。其可以被迭代求解为:

x k + 1 = A x k x_{k+1} = Ax_k xk+1=Axk

BOE: Solution

考虑Bellman最优等式:

v = f ( v ) = m a x π ( r + γ P π v ) \mathbf{v} = f(\mathbf{v}) = max_{\pi}(r + \gamma \mathbf{P}_{\pi}\mathbf{v}) v=f(v)=maxπ(r+γPπv)

Contraction Property: f ( v ) f(v) f(v)是contraction mapping 满足:

∥ f ( v 1 ) − f ( v 2 ) ∥ ≤ γ ∥ v 1 − v 2 ∥ \|f(v_1) - f(v_2)\| \le \gamma \|v_1 - v_2\| ∥f(v1)−f(v2)∥≤γ∥v1−v2∥

其中, γ \gamma γ是discount rate。

对于BOE v = f ( v ) = m a x π ( r π + γ P π v ) \mathbf{v} = f(\mathbf{v}) = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi} \mathbf{v}) v=f(v)=maxπ(rπ+γPπv),其总存在一个最优解 v ∗ \mathbf{v}^* v∗,而且 v ∗ \mathbf{v}^* v∗是唯一的。最优解可以被迭代求解为:

v k + 1 = f ( v k ) = m a x π ( r π + γ P π v k ) \mathbf{v}_{k+1}=f(\mathbf{v}_k) = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi}\mathbf{v}_k) vk+1=f(vk)=maxπ(rπ+γPπvk)

给定任何初始猜测 v 0 v^0 v0,该序列 v k {v_k} vk都会以指数速度快速收敛到 v ∗ v^* v∗。 收敛速度由 γ \gamma γ决定。

迭代算法:

对于矩阵形式的Bellman最优等式:

v k + 1 = f ( v k ) = m a x π ( r π + γ P π v k ) \mathbf{v}_{k+1}=f(\mathbf{v}_k) = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi}\mathbf{v}_k) vk+1=f(vk)=maxπ(rπ+γPπvk)

其元素形式为:

v k + 1 ( s ) = m a x π π ( a ∣ s ) ( ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v k ( s ′ ) ) = m a x π ∑ a π ( a ∣ s ) q k ( s , a ) = m a x a q k ( s , a ) \begin{align*} v_{k+1}(s)&=max_{\pi} \pi(a|s) \left( \sum_r p(r | s, a) + \gamma \sum_{s'} p(s' | s, a)v_k(s') \right)\\ &=max_{\pi} \sum_a \pi(a|s)q_k(s, a)\\ &=max_a q_k(s, a) \end{align*} vk+1(s)=maxππ(a∣s)(r∑p(r∣s,a)+γs′∑p(s′∣s,a)vk(s′))=maxπa∑π(a∣s)qk(s,a)=maxaqk(s,a)

Procedure Summary:

-

对于任何 s s s,其最近估计值为 v k ( s ) v_k(s) vk(s)

-

对于任何 a ∈ A ( s ) a \in \mathcal{A}(s) a∈A(s),计算 q k ( s , a ) = ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v k ( s ′ ) q_k(s, a) = \sum_r p(r | s, a) + \gamma \sum_{s'} p(s' | s, a)v_k(s') qk(s,a)=∑rp(r∣s,a)+γ∑s′p(s′∣s,a)vk(s′)

-

对 s s s计算policy π k + 1 \pi_{k+1} πk+1:

π k + 1 ( a ∣ s ) = { 1 a = a k ∗ ( s ) 0 a ≠ a k ∗ ( s ) \pi_{k+1}(a|s)=\left\{\begin{matrix} 1 & a = a^*_k(s)\\ 0 & a \ne a^*_k(s) \end{matrix}\right. πk+1(a∣s)={10a=ak∗(s)a=ak∗(s)

其中, a k ∗ ( s ) = argmax a q k ( s , a ) a^*_k(s) = \text{argmax}_a q_k(s, a) ak∗(s)=argmaxaqk(s,a) -

计算 v k + 1 ( s ) = max a q k ( s , a ) v_{k+1}(s) = \text{max}_a q_k(s, a) vk+1(s)=maxaqk(s,a)

例:

对于下图:

action: a ℓ , a 0 , a r a_{\ell},a_0,a_r aℓ,a0,ar分别代表向左、保持不变,向右。

reward:进入target area:+1,试图突破边界:-1。

q ( s , a ) q(s, a) q(s,a)值表:

考虑 γ = 0.9 \gamma=0.9 γ=0.9

算法目标是发现 v ∗ ( s i ) v^*(s_i) v∗(si)和 π ∗ \pi^* π∗。

k=0:

v-value: v 0 ( s 1 ) = v 0 ( s 2 ) = v 0 ( s 3 ) = 0 v_0(s_1)=v_0(s_2)=v_0(s_3)=0 v0(s1)=v0(s2)=v0(s3)=0

q-value:

greedy policy:

π ( a r ∣ s 1 ) = 1 π ( a 0 ∣ s 2 ) = 1 π ( a ℓ ∣ s 3 ) = 1 \pi(a_r | s_1) = 1\\ \pi(a_0 | s_2) = 1\\ \pi(a_{\ell} | s_3) = 1 π(ar∣s1)=1π(a0∣s2)=1π(aℓ∣s3)=1

BOE: Optimality

假设 v ∗ v^* v∗是Bellman最优等式的解,其满足:

v ∗ = m a x π ( r π + γ P π v ∗ ) \mathbf{v}^* = max_{\pi}(\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi} \mathbf{v}^*) v∗=maxπ(rπ+γPπv∗)

假设:

π ∗ = argmax π ( r π + γ P π v ∗ ) \mathbf{\pi}^* = \text{argmax}_{\pi} (\mathbf{r}_{\pi} + \gamma \mathbf{P}_{\pi}\mathbf{v}^*) π∗=argmaxπ(rπ+γPπv∗)

那么:

v ∗ = r π − γ P π ∗ v ∗ \mathbf{v}^* = \mathbf{r}_{\pi} - \gamma \mathbf{P}_{\pi^*}\mathbf{v}^* v∗=rπ−γPπ∗v∗

因此, π ∗ \pi^* π∗是策略, v ∗ = v π ∗ v^*=v_{\pi}^* v∗=vπ∗是对应的state value。

Theorem (Policy Optimality):

假设 $v^* $是 v = m a x π ( r π + γ P π v ) v = max\pi(r_\pi + \gamma P_\pi v) v=maxπ(rπ+γPπv)的唯一解, v π v_\pi vπ 是对于任何给定policy π \pi π 满足 v π = r π + γ P π v π v_\pi = r_\pi + \gamma P_\pi v_\pi vπ=rπ+γPπvπ 的state value函数,则:

v ∗ ≥ v π ∀ π v^* \ge v_{\pi} \;\;\;\;\; \forall \pi v∗≥vπ∀π

Theorem (Greedy Optimal Policy):

对于任何 s ∈ S s \in S s∈S,确定的greedy policy是:

π ∗ ( a ∣ s ) = { 1 a = a ∗ ( s ) 0 a ≠ a ∗ ( s ) ( 1 ) \pi^*(a| s)= \left\{\begin{matrix} 1 & a = a^*(s) \\ 0 & a \ne a^*(s) \end{matrix}\right. \;\;\;\;\; (1) π∗(a∣s)={10a=a∗(s)a=a∗(s)(1)

是BOE求得的最优policy。其中:

a ∗ ( s ) = argmax a q ∗ ( a , s ) a^*(s) = \text{argmax}_a q^*(a, s) a∗(s)=argmaxaq∗(a,s)

其中 q ∗ ( s , a ) : = ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v ∗ ( s ′ ) q^*(s,a) := \sum_r p(r | s, a) + \gamma \sum_{s'} p(s' | s, a) v^*(s') q∗(s,a):=∑rp(r∣s,a)+γ∑s′p(s′∣s,a)v∗(s′)

Analyzing optimal policies

考虑BOE等式:

v ( s ) = m a x π π ( a ∣ s ) ( ∑ r p ( r ∣ s , a ) + γ ∑ s ′ p ( s ′ ∣ s , a ) v k ( s ′ ) ) v(s)=max_{\pi} \pi(a|s) \left( \sum_r p(r | s, a) + \gamma \sum_{s'} p(s' | s, a)v_k(s') \right) v(s)=maxππ(a∣s)(r∑p(r∣s,a)+γs′∑p(s′∣s,a)vk(s′))

有三个要素:

- Reward: r r r

- System model: p ( s ′ ∣ s , a ) p(s' | s, a) p(s′∣s,a), p ( r ∣ s , a ) p(r | s, a) p(r∣s,a)

- Discount rate: γ \gamma γ

- v ( s ) , v ( s ′ ) , π ( a ∣ s ) v(s),v(s'),\pi(a|s) v(s),v(s′),π(a∣s)是未知需要计算的。

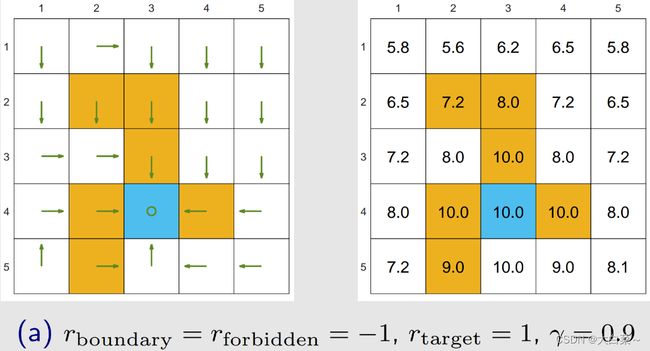

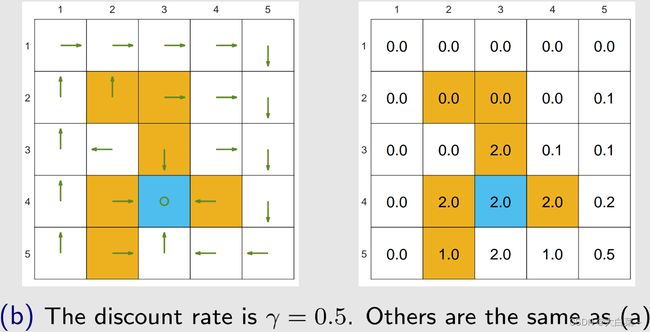

接下来,通过改变 r r r和 γ \gamma γ来讨论optimal policy的变化。

通过求解BOE得到最优policy和对应的最优state value。

敢于冒险的最优策略:进入forbidden area!

改变 γ = 0.9 \gamma = 0.9 γ=0.9 到 γ = 0.5 \gamma = 0.5 γ=0.5

最优policy变得短视! 避开所有forbidden area!

改变 γ = 0 \gamma=0 γ=0

最优policy变得极其短视! 另外,选择立即reward最大的行动! 达不到目标!

如果加大进入forbidden area的处罚力度(改变 r f o r b i d d e n = − 1 r_{forbidden=-1} rforbidden=−1到 r f o r b i d d e n = − 10 r_{forbidden}=-10 rforbidden=−10)

最优的政策也会避开forbidden area。

Theorem (Optimal Policy Invariance):

考虑一个马尔可夫决策过程,其中 v ∗ ∈ R ∣ S ∣ v^* \in \mathbb{R}^{|S|} v∗∈R∣S∣作为满足 v ∗ = m a x π ( r π + γ P π v ∗ ) v* = max_\pi(r_\pi + γP_\pi v^*) v∗=maxπ(rπ+γPπv∗)的最优状态值。如果每个奖励 r r r通过仿射变换变为 a r + b ar + b ar+b,其中 a , b ∈ R a,b \in \mathbb{R} a,b∈R且 a ≠ 0 a \ne 0 a=0,则对应的最优状态值 v ′ v' v′也是 v ∗ v^* v∗的仿射变换。

v ′ = a v ∗ + b 1 − γ 1 v' = a v^* + \frac{b}{1 - \gamma} \mathbf{1} v′=av∗+1−γb1

其中, γ ∈ ( 0 , 1 ) \gamma \in (0, 1) γ∈(0,1)是discount rate, 1 = [ 1 , . . . , 1 ] T \mathbb{1} = [1, ..., 1]^T 1=[1,...,1]T。因此,最优policy对于reward信号的仿射变换是不变的。

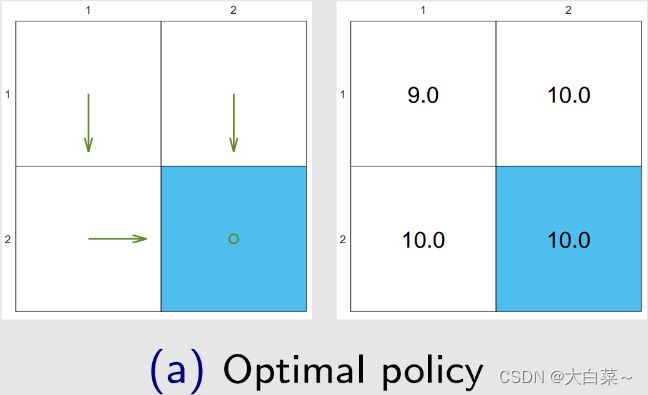

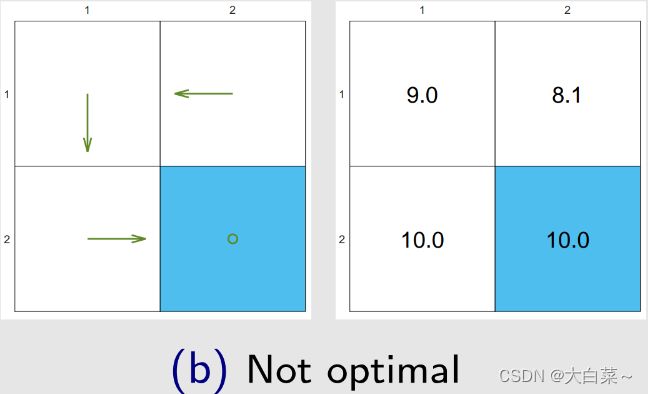

关于无意义绕路:

走弯路不施加惩罚也可以避免优化过程中走弯路。

discount rate!

Policy ( a ) : return = 1 + γ 1 + γ 2 1 + ⋯ = 1 / ( 1 − γ ) = 10 \text{Policy}(a): \text{return}=1 + \gamma 1 + \gamma^21 + \cdots = 1 / (1 - \gamma ) = 10 Policy(a):return=1+γ1+γ21+⋯=1/(1−γ)=10

Policy(b) : return = 0 + γ 0 + γ 2 0 + ⋯ = γ 2 / ( 1 − γ ) = 8.1 \text{Policy(b)}: \text{return} = 0 + \gamma0 + \gamma^2 0 + \cdots = \gamma^2 / (1 - \gamma) = 8.1 Policy(b):return=0+γ0+γ20+⋯=γ2/(1−γ)=8.1

以上内容为B站西湖大学智能无人系统 强化学习的数学原理 公开课笔记。