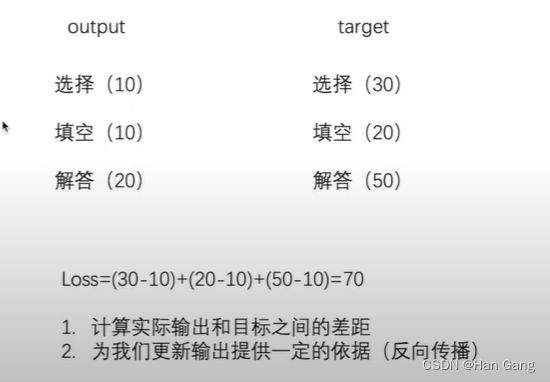

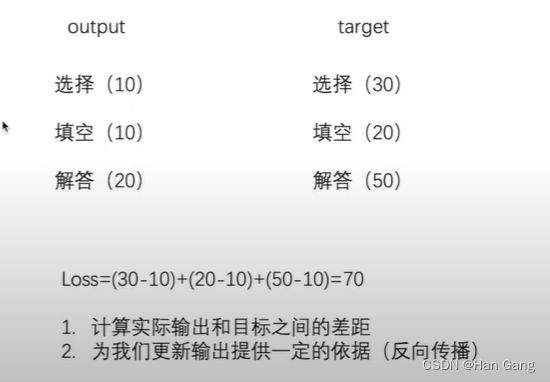

Backpropagation

Loss Function
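
As a small warm-up for the `loss(...)` and `backward()` calls in this section, the sketch below computes `nn.CrossEntropyLoss` on hand-written logits, reproduces the value manually, and checks the gradient that `backward()` produces. The logits and targets are made-up values for illustration only, not taken from the CIFAR10 model.

```python
import torch
from torch import nn

# Hypothetical logits for a batch of 2 samples and 3 classes.
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]], requires_grad=True)
targets = torch.tensor([0, 2])  # class indices, not one-hot vectors

loss_fn = nn.CrossEntropyLoss()  # default reduction is "mean"
loss = loss_fn(logits, targets)

# Same value by hand: mean over the batch of -log_softmax(logits)[i, targets[i]].
log_probs = torch.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(2), targets].mean()

# backward() fills logits.grad with d(loss)/d(logits).
loss.backward()

# For mean-reduced cross-entropy the gradient is (softmax - one_hot) / batch_size.
one_hot = nn.functional.one_hot(targets, num_classes=3).float()
expected_grad = (torch.softmax(logits.detach(), dim=1) - one_hot) / 2

print(loss.item(), manual.item())
print(logits.grad)
```

The two printed loss values should agree, and `logits.grad` should match `expected_grad` up to floating-point error.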

import torchvision
from torch import nn
from torch.nn import Flatten
from torch.utils.data import DataLoader

# CIFAR10 test split, with each image converted to a tensor
dataset = torchvision.datasets.CIFAR10("./data", train=False, transform=torchvision.transforms.ToTensor(), download=True)
data_loader = DataLoader(dataset, batch_size=1)

class My_mod(nn.Module):
    def __init__(self):
        super().__init__()
        # Three conv + max-pool stages, then flatten and classify into 10 classes
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            Flatten(),
            nn.Linear(1024, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x

my_mod = My_mod()
loss = nn.CrossEntropyLoss()

for data in data_loader:
    img, target = data
    output = my_mod(img)
    print(output, target)
    ls = loss(output, target)
    # backward() computes a gradient for every parameter; nothing is updated until an optimizer is added
    ls.backward()
    print(ls)
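
The `nn.Linear(1024, 10)` input size in the model above follows from the layer shapes: each 5x5 convolution with padding 2 keeps the spatial size, each `MaxPool2d(2)` halves it, so a 32x32 image ends up as 64 channels of 4x4, which flattens to 1024. A minimal sketch that traces this with a dummy input (the zero tensor is a placeholder, not real CIFAR10 data):

```python
import torch
from torch import nn

# Same layer stack as the Sequential in My_mod.
model = nn.Sequential(
    nn.Conv2d(3, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Flatten(),
    nn.Linear(1024, 10),
)

x = torch.zeros(1, 3, 32, 32)  # one dummy CIFAR10-sized image
shapes = []
for layer in model:
    x = layer(x)  # feed the output of each layer into the next
    shapes.append((type(layer).__name__, tuple(x.shape)))

for name, shape in shapes:
    print(name, shape)
```

Running a dummy batch through the layers one at a time like this is a quick way to find the right `in_features` for the first linear layer when the architecture changes.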

Add an optimizer and the model basically starts training?

import torch
import torchvision
from torch import nn
from torch.nn import Flatten
from torch.utils.data import DataLoader

# CIFAR10 test split, with each image converted to a tensor
dataset = torchvision.datasets.CIFAR10("./data", train=False, transform=torchvision.transforms.ToTensor(), download=True)
data_loader = DataLoader(dataset, batch_size=64)

class My_mod(nn.Module):
    def __init__(self):
        super().__init__()
        # Three conv + max-pool stages, then flatten and classify into 10 classes
        self.model = nn.Sequential(
            nn.Conv2d(3, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 32, 5, 1, 2),
            nn.MaxPool2d(2),
            nn.Conv2d(32, 64, 5, 1, 2),
            nn.MaxPool2d(2),
            Flatten(),
            nn.Linear(1024, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x

my_mod = My_mod()
loss = nn.CrossEntropyLoss()
optim = torch.optim.SGD(my_mod.parameters(), lr=0.01)

for i in range(100):
    # Running total of the loss over one epoch
    Lo = 0.0
    for data in data_loader:
        img, target = data
        output = my_mod(img)
        ls = loss(output, target)
        # .item() stores a plain float, so the autograd graph of each batch can be freed
        Lo = Lo + ls.item()
        optim.zero_grad()  # clear gradients left over from the previous step
        ls.backward()      # compute new gradients
        optim.step()       # update the parameters
    print(Lo)
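
The `zero_grad()` / `backward()` / `step()` cycle above can be tried on a much smaller problem that runs in well under a second. The sketch below uses made-up toy data and a single linear layer (both are assumptions for illustration, not part of the CIFAR10 example) and records the loss at every step so the downward trend is visible.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical toy data: 64 samples, 8 features, label decided by the sign of feature 0.
x = torch.randn(64, 8)
y = (x[:, 0] > 0).long()

model = nn.Linear(8, 2)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

history = []
for step in range(50):
    optimizer.zero_grad()        # clear gradients from the previous step
    loss = loss_fn(model(x), y)  # forward pass on the full toy batch
    loss.backward()              # compute gradients
    optimizer.step()             # apply the SGD update
    history.append(loss.item())  # store a float, not the tensor

print(f"first loss {history[0]:.4f}, last loss {history[-1]:.4f}")
```

If the last loss is not lower than the first, the usual suspects are a missing `zero_grad()` call or a learning rate that is too large.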

Using and modifying existing models

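import torchvision
from torch import nn

# pretrained=True downloads the ImageNet weights; torchvision 0.13+ prefers the weights= argument
vgg16_T = torchvision.models.vgg16(pretrained=True)
vgg16_F = torchvision.models.vgg16(pretrained=False)
print(vgg16_T)

# Registers the layer at the top level of the model; VGG.forward() does not call it
vgg16_T.add_module("add_linear", nn.Linear(1000, 10))
# Appends the layer to the classifier Sequential, so it runs after the 1000-way output
vgg16_F.classifier.add_module("add_linear", nn.Linear(1000, 10))
print(vgg16_T, vgg16_F)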
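
The difference between the two `add_module` calls is easier to see on a small model than on VGG16. The sketch below uses `TinyNet`, a hypothetical stand-in with a `classifier` head (it is not a torchvision model), and compares three options: appending to the head, replacing the head's last layer by index, and adding a module at the top level.

```python
import torch
from torch import nn

class TinyNet(nn.Module):
    """Hypothetical stand-in for a pretrained model with a `classifier` head."""

    def __init__(self):
        super().__init__()
        self.features = nn.Sequential(nn.Flatten(), nn.Linear(12, 16), nn.ReLU())
        self.classifier = nn.Sequential(nn.Linear(16, 100))

    def forward(self, x):
        return self.classifier(self.features(x))

x = torch.randn(4, 3, 2, 2)

# Option 1: append a layer to the Sequential head; it runs after the old output layer.
appended = TinyNet()
appended.classifier.add_module("add_linear", nn.Linear(100, 10))
out_appended = appended(x)

# Option 2: replace the last layer of the head by index.
replaced = TinyNet()
replaced.classifier[0] = nn.Linear(16, 10)
out_replaced = replaced(x)

# A module added at the top level is registered but never called by forward().
top_level = TinyNet()
top_level.add_module("add_linear", nn.Linear(100, 10))
out_top_level = top_level(x)

print(out_appended.shape, out_replaced.shape, out_top_level.shape)
```

Only the first two options change the output size. The top-level module shows up in `print(model)` and in `parameters()`, but the output keeps its original width because `forward()` never uses it.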

Saving and loading models

Saving a model

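import torch
import torchvision

vgg16 = torchvision.models.vgg16(pretrained=False)

# Method 1: save the whole model (structure and parameters)
torch.save(vgg16, "./method1.pth")
# Method 2: save only the parameters as a state dict
torch.save(vgg16.state_dict(), "./method2.pth")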

Loading a model

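import torch
import torchvision

# Method 1: load the whole pickled model
# PyTorch 2.6+ defaults to weights_only=True, so this call needs weights_only=False there
model = torch.load("./method1.pth")
print(model)

# Method 2: rebuild the architecture, then load the saved parameters into it
vgg16 = torchvision.models.vgg16(pretrained=False)
vgg16.load_state_dict(torch.load("./method2.pth"))
print(vgg16)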
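
The state-dict approach (method 2) can be checked end to end with a small model, without downloading or writing VGG16 weights. The sketch below is a minimal round trip under assumed names: the three-layer `nn.Sequential` and the `weights.pth` file in a temporary directory are placeholders for illustration.

```python
import os
import tempfile

import torch
from torch import nn

# Small placeholder model standing in for VGG16.
model = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "weights.pth")

    # Save only the parameters.
    torch.save(model.state_dict(), path)

    # Rebuild the same architecture, then load the parameters into it.
    restored = nn.Sequential(nn.Linear(4, 3), nn.ReLU(), nn.Linear(3, 2))
    restored.load_state_dict(torch.load(path))

# Both models should now produce identical outputs for the same input.
x = torch.randn(5, 4)
same_output = torch.equal(model(x), restored(x))
print(same_output)
```

The architecture has to be rebuilt in code before `load_state_dict` is called, which is the main practical difference from saving the whole model.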