Local multimedia playback flow (video playback), based on Jelly Bean: Part 4

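The previous part covered the first step of MediaPlayer playback, setDataSource. This part walks through the prepareAsync flow. Before prepare there is one more step, setDisplay, which obtains the SurfaceTexture used to display the picture.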

setVideoSurface(JNIEnv *env, jobject thiz, jobject jsurface, jboolean mediaPlayerMustBeAlive)

{

sp<MediaPlayer> mp = getMediaPlayer(env, thiz);

………

sp<ISurfaceTexture> new_st;

if (jsurface) {

sp<Surface> surface(Surface_getSurface(env, jsurface));

if (surface != NULL) {

new_st = surface->getSurfaceTexture();

// obtain the SurfaceTexture through the Surface

new_st->incStrong(thiz);

……….

}………….

mp->setVideoSurfaceTexture(new_st);

}

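Why display through a SurfaceTexture rather than a Surface? Before ICS, video and OpenGL content was shown with SurfaceView. SurfaceView renders onto a Surface, while TextureView renders onto a SurfaceTexture. What is the difference between the two?

A SurfaceView does not live in the application's window: it creates a separate window of its own to show OpenGL or video content. The advantage is that it can keep updating on its own without redrawing the application window. The drawback is that, because it is not attached to the application window, it cannot be moved, scaled, or rotated like a normal view, and using it inside a ListView or ScrollView is awkward. TextureView solves these problems: on top of what SurfaceView offers, it behaves like any other View and can be used as one.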

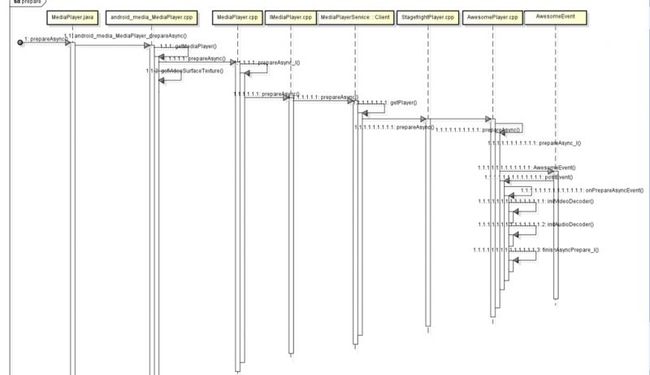

获取完surfaceTexture,我们就可以prepare/prepareAsync了,先给大伙看个大体时序图吧:

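We skip the JNI part and go straight to the prepareAsync_l method in mediaplayer.cpp under libmedia. The state change has to be protected by a lock. The _l suffix is the AOSP naming convention for a method that must be called with the lock already held: the public prepareAsync() acquires mLock and then calls prepareAsync_l().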

status_t MediaPlayer::prepareAsync_l()

{

if ( (mPlayer != 0) && ( mCurrentState & ( MEDIA_PLAYER_INITIALIZED | MEDIA_PLAYER_STOPPED) ) ) {

mPlayer->setAudioStreamType(mStreamType);

mCurrentState = MEDIA_PLAYER_PREPARING;

return mPlayer->prepareAsync();

}

ALOGE("prepareAsync called in state %d", mCurrentState);

return INVALID_OPERATION;

}
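The _l naming convention can be illustrated with a minimal sketch. TinyPlayer and its two states are hypothetical names invented for this example, and std::mutex stands in for the framework's Mutex class; this is not the real MediaPlayer code.

```cpp
#include <cassert>
#include <mutex>

// Hypothetical miniature of the locking convention; not the real MediaPlayer.
class TinyPlayer {
public:
    enum State { INITIALIZED, PREPARING };

    // Public entry point: takes the lock, then delegates to the _l variant.
    bool prepareAsync() {
        std::lock_guard<std::mutex> guard(mLock);
        return prepareAsync_l();
    }

    State state() {
        std::lock_guard<std::mutex> guard(mLock);
        return mState;
    }

private:
    // "_l" suffix: the caller must already hold mLock.
    bool prepareAsync_l() {
        if (mState != INITIALIZED) {
            return false;  // plays the role of INVALID_OPERATION
        }
        mState = PREPARING;
        return true;
    }

    std::mutex mLock;
    State mState = INITIALIZED;
};
```

Keeping the lock acquisition in the public method lets several public entry points share one _l helper without locking the same mutex twice.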

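The code above uses mPlayer. Readers of the previous part will remember that this is the BpMediaPlayer we obtained from MediaPlayerService. Through BpMediaPlayer we can drive straight on to AwesomePlayer, which does the real work. MediaPlayerService::Client and StagefrightPlayer on the way are merely messengers, thin interfaces that are not worth digging into. Once prepareAsync_l has run, the player is in the MEDIA_PLAYER_PREPARING state. Now let's see what AwesomePlayer actually does.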

The code is in: frameworks/av/media/libstagefright/AwesomePlayer.cpp

Let's look at prepareAsync_l first:

status_t AwesomePlayer::prepareAsync_l() {

if (mFlags & PREPARING) {

return UNKNOWN_ERROR; // async prepare already pending

}

if (!mQueueStarted) {

mQueue.start();

mQueueStarted = true;

}

modifyFlags(PREPARING, SET);

mAsyncPrepareEvent = new AwesomeEvent(

this, &AwesomePlayer::onPrepareAsyncEvent);

mQueue.postEvent(mAsyncPrepareEvent);

return OK;

}

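Here we meet TimedEventQueue, a timed event queue that plays a role in AwesomePlayer similar to Handler. It wraps an event together with the time at which it should fire into a QueueItem and inserts it into the queue through postEvent. When the time arrives, the event is looked up by its event id, removed from the queue, and fired.

First, let's see what TimedEventQueue's start method (mQueue.start();) does: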

frameworks/av/media/libstagefright/TimedEventQueue.cpp

void TimedEventQueue::start() {

if (mRunning) {

return;

}

……..

pthread_create(&mThread, &attr, ThreadWrapper, this);

………

}

The purpose is clear: start a worker thread from the calling thread. Readers who have not written C/C++ may be unfamiliar with pthread_create, so here is its signature:

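int pthread_create(pthread_t *thread, const pthread_attr_t *attr,

void *(*start_routine)(void *), void *arg);

thread: receives the ID of the created thread

attr: specifies the attributes of the thread to be created (NULL for the defaults)

start_routine: a function pointer to the function the new thread runs

arg: the argument passed to start_routine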
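As a small illustration of the four parameters, here is a sketch that starts one thread with default attributes and waits for it. doubleValue and runWorker are hypothetical names made up for this example; only pthread_create and pthread_join are real POSIX calls.

```cpp
#include <cassert>
#include <pthread.h>

// Hypothetical start routine: receives a pointer to an int and doubles it.
static void *doubleValue(void *arg) {
    int *value = static_cast<int *>(arg);
    *value *= 2;
    return nullptr;
}

// Starts one thread with default attributes, waits for it to finish,
// and returns the value the thread produced (or -1 if creation failed).
int runWorker(int input) {
    pthread_t thread;
    int value = input;
    // attr == nullptr requests the default thread attributes.
    int rc = pthread_create(&thread, nullptr, doubleValue, &value);
    if (rc != 0) {
        return -1;
    }
    pthread_join(thread, nullptr);
    return value;
}
```

TimedEventQueue passes `this` as arg in the same way, which is how the static ThreadWrapper gets back to the queue object.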

With that covered, let's look at ThreadWrapper, the function the new thread runs:

// static

void *TimedEventQueue::ThreadWrapper(void *me) {

……

static_cast<TimedEventQueue *>(me)->threadEntry();

return NULL;

}

Following the call into threadEntry:

frameworks/av/media/libstagefright/TimedEventQueue.cpp

void TimedEventQueue::threadEntry() {

prctl(PR_SET_NAME, (unsigned long)"TimedEventQueue", 0, 0, 0);

for (;;) {

int64_t now_us = 0;

sp<Event> event;

{

Mutex::Autolock autoLock(mLock);

if (mStopped) {

break;

}

while (mQueue.empty()) {

mQueueNotEmptyCondition.wait(mLock);

}

event_id eventID = 0;

for (;;) {

if (mQueue.empty()) {

// The only event in the queue could have been cancelled

// while we were waiting for its scheduled time.

break;

}

List<QueueItem>::iterator it = mQueue.begin();

eventID = (*it).event->eventID();

……………………………

static int64_t kMaxTimeoutUs = 10000000ll; // 10 secs

……………..

status_t err = mQueueHeadChangedCondition.waitRelative(

mLock, delay_us * 1000ll);

if (!timeoutCapped && err == -ETIMEDOUT) {

// We finally hit the time this event is supposed to

// trigger.

now_us = getRealTimeUs();

break;

}

}

……………………….

event = removeEventFromQueue_l(eventID);

}

if (event != NULL) {

// Fire event with the lock NOT held.

event->fire(this, now_us);

}

}

}

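From the code we can see that the thread first checks whether the queue is empty. At startup it certainly is, so the thread waits on the queue-not-empty condition until a QueueItem is inserted. In our case that item comes from: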

mQueue.postEvent(mAsyncPrepareEvent);

Before looking at postEvent, let's look at mAsyncPrepareEvent, an Event wrapped as an AwesomeEvent.

frameworks/av/media/libstagefright/AwesomePlayer.cpp

    struct AwesomeEvent : public TimedEventQueue::Event {
        AwesomeEvent(
                AwesomePlayer *player,
                void (AwesomePlayer::*method)())
            : mPlayer(player),
              mMethod(method) {
        }

    protected:
        virtual ~AwesomeEvent() {}

        virtual void fire(TimedEventQueue *queue, int64_t /* now_us */) {
            (mPlayer->*mMethod)();
        }

    private:
        AwesomePlayer *mPlayer;
        void (AwesomePlayer::*mMethod)();

        ……
    };

From this struct we can see that when the event fires, it calls a method of AwesomePlayer. Now look at mAsyncPrepareEvent:

mAsyncPrepareEvent = new AwesomeEvent(

this, &AwesomePlayer::onPrepareAsyncEvent);

When mAsyncPrepareEvent fires, onPrepareAsyncEvent is called.
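The idea of binding an event to a member function can be sketched in isolation. Player and MethodEvent below are hypothetical stand-ins for AwesomePlayer and AwesomeEvent; the sketch only shows the pointer-to-member call, not the queue.

```cpp
#include <cassert>

// Hypothetical stand-in for AwesomePlayer.
class Player {
public:
    void onPrepareAsyncEvent() { ++prepareCalls; }
    int prepareCalls = 0;
};

// Hypothetical stand-in for AwesomeEvent: stores an object and a
// pointer-to-member, and invokes that member when fired.
class MethodEvent {
public:
    MethodEvent(Player *player, void (Player::*method)())
        : mPlayer(player), mMethod(method) {}

    void fire() { (mPlayer->*mMethod)(); }

private:
    Player *mPlayer;
    void (Player::*mMethod)();
};
```

A usage example under the same assumptions: `MethodEvent event(&player, &Player::onPrepareAsyncEvent); event.fire();` runs onPrepareAsyncEvent on player once.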

Back to postEvent. We called TimedEventQueue a timed event queue and have now seen the Event, but where is the time? Is it added in postEvent? Let's follow the code:

TimedEventQueue::event_id TimedEventQueue::postEvent(const sp<Event> &event) {

// Reserve an earlier timeslot an INT64_MIN to be able to post

// the StopEvent to the absolute head of the queue.

return postTimedEvent(event, INT64_MIN + 1);

}

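Here is the time at last: INT64_MIN + 1. It is not really a delay but the event's scheduled time (realtime_us), chosen earlier than any real timestamp so that the event sorts to the front of the queue and is due immediately. The real work is in postTimedEvent: it wraps the posted event and its time into a QueueItem, inserts it into the queue, and signals that the queue is no longer empty. The waiting thread then wakes up and, once the scheduled time has been reached, removes the event matching the event id from the queue and fires it.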

frameworks/av/media/libstagefright/TimedEventQueue.cpp

TimedEventQueue::event_id TimedEventQueue::postTimedEvent(

const sp<Event> &event, int64_t realtime_us) {

Mutex::Autolock autoLock(mLock);

event->setEventID(mNextEventID++);

………………….

QueueItem item;

item.event = event;

item.realtime_us = realtime_us;

if (it == mQueue.begin()) {

mQueueHeadChangedCondition.signal();

}

mQueue.insert(it, item);

mQueueNotEmptyCondition.signal();

return event->eventID();

}

That covers TimedEventQueue, the timed event queue. Its mechanism is similar to the native (C/C++) part of Handler.
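To make the queue mechanics concrete, here is a minimal sketch of the same pattern in standard C++. MiniTimedQueue, Task, and runDemo are hypothetical names; it uses one condition variable instead of the two in TimedEventQueue and has no event ids or cancellation, so treat it as an illustration of the idea rather than a port.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <functional>
#include <map>
#include <mutex>
#include <thread>
#include <utility>
#include <vector>

// Hypothetical miniature of a timed event queue; not the AOSP class.
class MiniTimedQueue {
public:
    using Clock = std::chrono::steady_clock;
    using Task = std::function<void()>;

    ~MiniTimedQueue() {
        {
            std::lock_guard<std::mutex> guard(mLock);
            mStopped = true;
        }
        mCondition.notify_one();
        if (mThread.joinable()) mThread.join();
    }

    // Starts the worker thread (the counterpart of mQueue.start()).
    void start() {
        if (!mThread.joinable()) {
            mThread = std::thread([this] { threadEntry(); });
        }
    }

    // Inserts the task sorted by its scheduled time and wakes the worker.
    void postTimedEvent(Task task, Clock::time_point when) {
        {
            std::lock_guard<std::mutex> guard(mLock);
            mQueue.emplace(when, std::move(task));
        }
        mCondition.notify_one();
    }

private:
    void threadEntry() {
        std::unique_lock<std::mutex> lock(mLock);
        while (!mStopped) {
            if (mQueue.empty()) {
                mCondition.wait(lock);  // wait until something is posted
                continue;
            }
            const Clock::time_point when = mQueue.begin()->first;
            if (when > Clock::now()) {
                mCondition.wait_until(lock, when);  // head is not due yet
                continue;  // re-check: the head may have changed meanwhile
            }
            Task task = std::move(mQueue.begin()->second);
            mQueue.erase(mQueue.begin());
            lock.unlock();  // fire with the lock NOT held
            task();
            lock.lock();
        }
    }

    std::mutex mLock;
    std::condition_variable mCondition;
    std::multimap<Clock::time_point, Task> mQueue;  // ordered by time
    bool mStopped = false;
    std::thread mThread;
};

// Posts two events out of order and returns the order in which they fired.
std::vector<int> runDemo() {
    std::mutex resultLock;
    std::condition_variable resultReady;
    std::vector<int> order;
    auto record = [&](int id) {
        std::lock_guard<std::mutex> guard(resultLock);
        order.push_back(id);
        resultReady.notify_one();
    };

    MiniTimedQueue queue;
    const auto base = MiniTimedQueue::Clock::now();
    queue.postTimedEvent([&] { record(2); }, base + std::chrono::milliseconds(20));
    queue.postTimedEvent([&] { record(1); }, base);
    queue.start();

    std::unique_lock<std::mutex> lock(resultLock);
    resultReady.wait(lock, [&] { return order.size() == 2; });
    return order;
}
```

In runDemo the event scheduled for `base` fires before the one scheduled 20 ms later, even though it was posted second, because the queue is ordered by scheduled time rather than by posting order.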

When AwesomePlayer was instantiated during setDataSource, it also created the following events:

sp<TimedEventQueue::Event> mVideoEvent;

sp<TimedEventQueue::Event> mStreamDoneEvent;

sp<TimedEventQueue::Event> mBufferingEvent;

sp<TimedEventQueue::Event> mCheckAudioStatusEvent;

sp<TimedEventQueue::Event> mVideoLagEvent;

What does each of them do? We will look at them in detail where they are actually used.

Next, the onPrepareAsyncEvent method.

frameworks/av/media/libstagefright/AwesomePlayer.cpp

void AwesomePlayer::onPrepareAsyncEvent() {

Mutex::Autolock autoLock(mLock);

…………………………

if (mUri.size() > 0) {

status_t err = finishSetDataSource_l(); // not reached for a local file

…………………………

if (mVideoTrack != NULL && mVideoSource == NULL) {

status_t err = initVideoDecoder(); // if there is a video track, initialize the video decoder

…………………………

if (mAudioTrack != NULL && mAudioSource == NULL) {

status_t err = initAudioDecoder(); // if there is an audio track, initialize the audio decoder

……………………..

modifyFlags(PREPARING_CONNECTED, SET);

if (isStreamingHTTP()) {

postBufferingEvent_l(); // normally not reached for local playback

} else {

finishAsyncPrepare_l(); // announce that prepare is complete and reset mAsyncPrepareEvent to NULL

}

}

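Now the main purpose of prepare is clear: find the decoder that matches each track type and initialize it. Let's start with how the decoder is found and initialized for a media file that has a video track.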

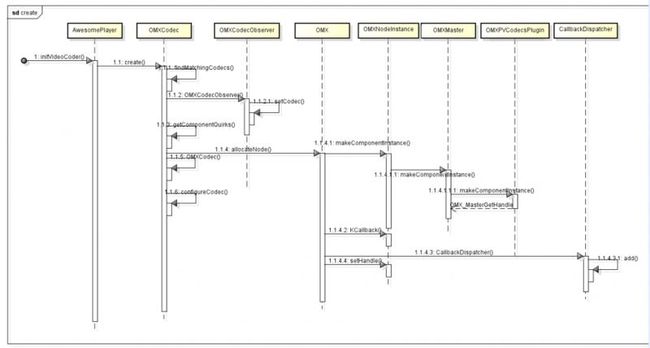

先看图吧,了解大概步骤:

看完图就开讲了:

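The purpose of initVideoDecoder is to initialize the decoder: establish the connection to the decoder, obtain the output format of the decoded data, and so on.

frameworks/av/media/libstagefright/AwesomePlayer.cpp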

status_t AwesomePlayer::initVideoDecoder(uint32_t flags) {

…………

mVideoSource = OMXCodec::Create(

mClient.interface(), mVideoTrack->getFormat(),

false, // createEncoder

mVideoTrack,

NULL, flags, USE_SURFACE_ALLOC ? mNativeWindow : NULL);

…………..

status_t err = mVideoSource->start();

}

Let's first see what the Create function does:

frameworks/av/media/libstagefright/OMXCodec.cpp

sp<MediaSource> OMXCodec::Create(

const sp<IOMX> &omx,

const sp<MetaData> &meta, bool createEncoder,

const sp<MediaSource> &source,

const char *matchComponentName,

uint32_t flags,

const sp<ANativeWindow> &nativeWindow) {

…………..

bool success = meta->findCString(kKeyMIMEType, &mime);

……………

(1) findMatchingCodecs(

mime, createEncoder, matchComponentName, flags,

&matchingCodecs, &matchingCodecQuirks);

……….

(2) sp<OMXCodecObserver> observer = new OMXCodecObserver;

(3) status_t err = omx->allocateNode(componentName, observer, &node);

……….

(4) sp<OMXCodec> codec = new OMXCodec(

omx, node, quirks, flags,

createEncoder, mime, componentName,

source, nativeWindow);

(5) observer->setCodec(codec);

(6)err = codec->configureCodec(meta);

…………

}

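First, findMatchingCodecs: it finds the codec components that match the MIME type. Component lookup changed significantly in Android 4.1. Previously the codec info was hard-coded in the source; now it lives in media_codecs.xml, which ends up at "/etc/media_codecs.xml" after a full build. The XML file is provided by each chip vendor, so adding a codec no longer requires a code change. The stock AOSP configuration generally lists only software codecs. Matching is by rank: a vendor configuration usually lists several codecs of the same type, and whichever appears first is used.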

frameworks/av/media/libstagefright/OMXCodec.cpp

void OMXCodec::findMatchingCodecs(

const char *mime,

bool createEncoder, const char *matchComponentName,

uint32_t flags,

Vector<String8> *matchingCodecs,

Vector<uint32_t> *matchingCodecQuirks) {

…………

const MediaCodecList *list = MediaCodecList::getInstance();

………

for (;;) {

ssize_t matchIndex =

list->findCodecByType(mime, createEncoder, index);

………………..

matchingCodecs->push(String8(componentName));

…………….

}

frameworks/av/media/libstagefright/MediaCodecList.cpp

const MediaCodecList *MediaCodecList::getInstance() {

..

if (sCodecList == NULL) {

sCodecList = new MediaCodecList;

}

return sCodecList->initCheck() == OK ? sCodecList : NULL;

}

MediaCodecList::MediaCodecList()

: mInitCheck(NO_INIT) {

FILE *file = fopen("/etc/media_codecs.xml", "r");

if (file == NULL) {

ALOGW("unable to open media codecs configuration xml file.");

return;

}

parseXMLFile(file);

}
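The rank-based matching can be sketched as follows, assuming the XML has already been parsed into an ordered list. CodecEntry and findMatching are hypothetical names for this example, and the real MediaCodecList does more (quirks, multiple types per codec).

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical in-memory form of one parsed codec entry.
struct CodecEntry {
    std::string name;
    std::string mime;
    bool isEncoder;
};

// Returns the names of all codecs that match the MIME type and the
// encoder/decoder flag, in list order, so the first element is preferred.
std::vector<std::string> findMatching(const std::vector<CodecEntry> &list,
                                      const std::string &mime,
                                      bool wantEncoder) {
    std::vector<std::string> matches;
    for (const CodecEntry &entry : list) {
        if (entry.mime == mime && entry.isEncoder == wantEncoder) {
            matches.push_back(entry.name);
        }
    }
    return matches;
}
```

With a list whose first "video/avc" decoder is a vendor entry and whose second is a software entry, the vendor name comes back first, which is why the order of entries in the XML decides which codec is tried first.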

With a matching componentName we can create the component instance, which is what the allocateNode method does.

frameworks/av/media/libstagefright/omx/OMX.cpp

status_t OMX::allocateNode(

const char *name, const sp<IOMXObserver> &observer, node_id *node) {

……………………

OMXNodeInstance *instance = new OMXNodeInstance(this, observer);

OMX_COMPONENTTYPE *handle;

OMX_ERRORTYPE err = mMaster->makeComponentInstance(

name, &OMXNodeInstance::kCallbacks,

instance, &handle);

……………………………

*node = makeNodeID(instance);

mDispatchers.add(*node, new CallbackDispatcher(instance));

instance->setHandle(*node, handle);

mLiveNodes.add(observer->asBinder(), instance);

observer->asBinder()->linkToDeath(this);

return OK;

}

allocateNode uses mMaster to create the component, but when was mMaster initialized? Look at the OMX constructor:

OMX::OMX()

: mMaster(new OMXMaster), // here it is

mNodeCounter(0) {

}

But we never saw where OMX itself is constructed. Going back, it turns out we skipped over it when AwesomePlayer was initialized:

AwesomePlayer::AwesomePlayer()

: mQueueStarted(false),

mUIDValid(false),

mTimeSource(NULL),

mVideoRendererIsPreview(false),

mAudioPlayer(NULL),

mDisplayWidth(0),

mDisplayHeight(0),

mVideoScalingMode(NATIVE_WINDOW_SCALING_MODE_SCALE_TO_WINDOW),

mFlags(0),

mExtractorFlags(0),

mVideoBuffer(NULL),

mDecryptHandle(NULL),

mLastVideoTimeUs(-1),

mTextDriver(NULL) {

CHECK_EQ(mClient.connect(), (status_t)OK); // this is where it gets created

mClient is an OMXClient:

status_t OMXClient::connect() {

sp<IServiceManager> sm = defaultServiceManager();

sp<IBinder> binder = sm->getService(String16("media.player"));

sp<IMediaPlayerService> service = interface_cast<IMediaPlayerService>(binder); // familiar by now: obtains the BpMediaPlayerService

CHECK(service.get() != NULL);

mOMX = service->getOMX();

CHECK(mOMX.get() != NULL);

if (!mOMX->livesLocally(NULL /* node */, getpid())) {

ALOGI("Using client-side OMX mux.");

mOMX = new MuxOMX(mOMX);

}

return OK;

}

Now let's go into MediaPlayerService.cpp to see what happens:

sp<IOMX> MediaPlayerService::getOMX() {

Mutex::Autolock autoLock(mLock);

if (mOMX.get() == NULL) {

mOMX = new OMX;

}

return mOMX;

}

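At last we see where OMX is created. A reminder to read the code more carefully next time!

Why did we go to such lengths to trace where OMXMaster comes from?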

OMXMaster::OMXMaster()

: mVendorLibHandle(NULL) {

addVendorPlugin();

addPlugin(new SoftOMXPlugin);

}

void OMXMaster::addVendorPlugin() {

addPlugin("libstagefrighthw.so");

}

So it loads each vendor's codecs (libstagefrighthw.so) and also Google's own software codecs (SoftOMXPlugin). Where does libstagefrighthw.so come from? After some searching I found that each chip vendor provides its own libstagefrighthw:

hardware/XX/media/libstagefrighthw/xxOMXPlugin

How does a vendor instantiate its own decoder component? Take Qualcomm as an example:

void OMXMaster::addPlugin(const char *libname) {

mVendorLibHandle = dlopen(libname, RTLD_NOW);

…………………………….

if (createOMXPlugin) {

addPlugin((*createOMXPlugin)()); // create the OMXPlugin and add it to our list

}

}

hardware/qcom/media/libstagefrighthw/QComOMXPlugin.cpp

OMXPluginBase *createOMXPlugin() {

return new QComOMXPlugin;

}

QComOMXPlugin::QComOMXPlugin()

: mLibHandle(dlopen("libOmxCore.so", RTLD_NOW)), // load the vendor's own OMX API

mInit(NULL),

mDeinit(NULL),

mComponentNameEnum(NULL),

mGetHandle(NULL),

mFreeHandle(NULL),

mGetRolesOfComponentHandle(NULL) {

if (mLibHandle != NULL) {

mInit = (InitFunc)dlsym(mLibHandle, "OMX_Init");

mDeinit = (DeinitFunc)dlsym(mLibHandle, "OMX_DeInit");

mComponentNameEnum =

(ComponentNameEnumFunc)dlsym(mLibHandle, "OMX_ComponentNameEnum");

mGetHandle = (GetHandleFunc)dlsym(mLibHandle, "OMX_GetHandle");

mFreeHandle = (FreeHandleFunc)dlsym(mLibHandle, "OMX_FreeHandle");

mGetRolesOfComponentHandle =

(GetRolesOfComponentFunc)dlsym(

mLibHandle, "OMX_GetRolesOfComponent");

(*mInit)();

}

}

With that in place the Qualcomm decoders are available, and creating a component creates the corresponding Qualcomm component instance:

OMX_ERRORTYPE OMXMaster::makeComponentInstance(

const char *name,

const OMX_CALLBACKTYPE *callbacks,

OMX_PTR appData,

OMX_COMPONENTTYPE **component) {

Mutex::Autolock autoLock(mLock);

*component = NULL;

ssize_t index = mPluginByComponentName.indexOfKey(String8(name)); // look up the codec name from media_codecs.xml in the plugin map

OMXPluginBase *plugin = mPluginByComponentName.valueAt(index); // use the index to get the XXOMXPlugin

OMX_ERRORTYPE err =

plugin->makeComponentInstance(name, callbacks, appData, component);

// create the component

mPluginByInstance.add(*component, plugin);

return err;

}

hardware/qcom/media/libstagefrighthw/QComOMXPlugin.cpp

OMX_ERRORTYPE QComOMXPlugin::makeComponentInstance(

const char *name,

const OMX_CALLBACKTYPE *callbacks,

OMX_PTR appData,

OMX_COMPONENTTYPE **component) {

if (mLibHandle == NULL) {

return OMX_ErrorUndefined;

}

String8 tmp;

RemovePrefix(name, &tmp);

name = tmp.string();

return (*mGetHandle)(

reinterpret_cast<OMX_HANDLETYPE *>(component),

const_cast<char *>(name),

appData, const_cast<OMX_CALLBACKTYPE *>(callbacks));

}
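The name-to-plugin lookup can be sketched with a plain map. Plugin, FakePlugin, and MiniMaster are hypothetical names, components are represented by strings instead of OMX handles, and the rule that the first plugin to claim a name keeps it is an assumption of this sketch.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical plugin interface, loosely modeled on the role of OMXPluginBase.
class Plugin {
public:
    virtual ~Plugin() {}
    virtual std::vector<std::string> componentNames() const = 0;
    virtual std::string makeComponent(const std::string &name) const = 0;
};

// Hypothetical plugin that tags the components it creates.
class FakePlugin : public Plugin {
public:
    FakePlugin(std::string tag, std::vector<std::string> names)
        : mTag(std::move(tag)), mNames(std::move(names)) {}

    std::vector<std::string> componentNames() const override { return mNames; }

    std::string makeComponent(const std::string &name) const override {
        return mTag + ":" + name;
    }

private:
    std::string mTag;
    std::vector<std::string> mNames;
};

// Hypothetical miniature of the master: maps component names to plugins.
class MiniMaster {
public:
    // Registers every component the plugin offers. std::map::insert does
    // not overwrite, so the first plugin to claim a name keeps it.
    void addPlugin(const Plugin *plugin) {
        for (const std::string &name : plugin->componentNames()) {
            mPluginByComponentName.insert(std::make_pair(name, plugin));
        }
    }

    // Returns an empty string when no plugin owns the name.
    std::string makeComponentInstance(const std::string &name) const {
        auto it = mPluginByComponentName.find(name);
        if (it == mPluginByComponentName.end()) {
            return std::string();
        }
        return it->second->makeComponent(name);
    }

private:
    std::map<std::string, const Plugin *> mPluginByComponentName;
};
```

Registering the vendor plugin before the software plugin, as the OMXMaster constructor does, means a name offered by both resolves to the vendor plugin in this sketch.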

We have now traced the whole path from the app to the right decoder!

I wrote a separate article on how ComponentInstance, OMXCodecObserver, OMXCodec, and OMX relate to each other; it is available at:

http://blog.csdn.net/tjy1985/article/details/7397752

OMXCodec is connected to OMX through Binder (IOMX). The decoder reports to OMXNodeInstance through the registered callbacks:

OMX_CALLBACKTYPE OMXNodeInstance::kCallbacks = {

&OnEvent, &OnEmptyBufferDone, &OnFillBufferDone

};

OMX then dispatches these messages to the OMXCodecObserver, which passes them to the OMXCodec registered with it (observer->setCodec(codec);) for final handling.

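This completes the process by which Stagefright connects to the decoder through OpenMAX.

The last step of Create is configureCodec(meta), which mainly sets the output width and height and calls initNativeWindow.

One thing I forgot to mention: the OMXCodec states.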

enum State {

DEAD,

LOADED,

LOADED_TO_IDLE,

IDLE_TO_EXECUTING,

EXECUTING,

EXECUTING_TO_IDLE,

IDLE_TO_LOADED,

RECONFIGURING,

ERROR

};

Right after OMXCodec is instantiated, it is in the LOADED state.

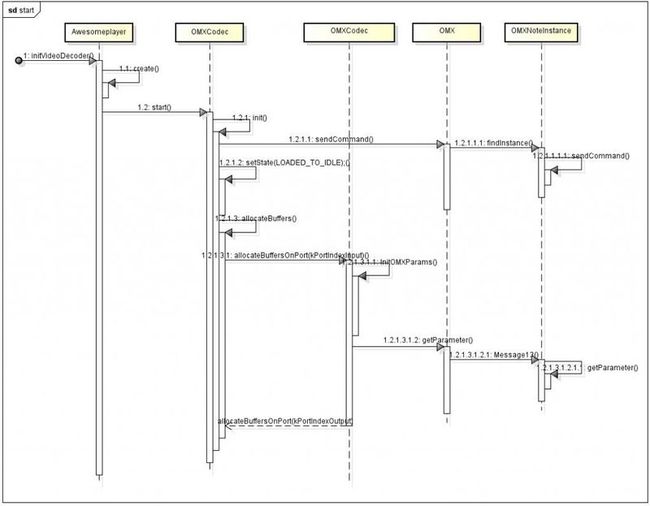

After LOADED comes LOADED_TO_IDLE. When does the codec enter that state? In the start method discussed next:

status_t err = mVideoSource->start();

mVideoSource is the OMXCodec, so let's look into OMXCodec.cpp:

status_t OMXCodec::start(MetaData *meta) {

….

return init();

}

status_t OMXCodec::init() {

……..

err = allocateBuffers();

err = mOMX->sendCommand(mNode, OMX_CommandStateSet, OMX_StateIdle);

setState(LOADED_TO_IDLE);

……………………

}

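So start does three things:

1: allocateBuffers allocates buffers on the input port to hold the data to be decoded, and prepares matching native window buffers on the output port.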

status_t OMXCodec::allocateBuffers() {

status_t err = allocateBuffersOnPort(kPortIndexInput);

if (err != OK) {

return err;

}

return allocateBuffersOnPort(kPortIndexOutput);

}

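2: After allocation, it tells the decoder to enter the idle state. For the sendCommand flow, see http://blog.csdn.net/tjy1985/article/details/7397752 (the emptyBuffer flow).

3: OMXCodec itself moves to LOADED_TO_IDLE while it waits for the component to reach idle.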
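The state progression during start can be sketched as a small state machine. TinyCodec, the State enum class, and ComponentState are hypothetical names; the step from LOADED_TO_IDLE to IDLE_TO_EXECUTING once the component reports idle is an assumption based on the state names, not something shown in the excerpts above.

```cpp
#include <cassert>

// Subset of the OMXCodec states listed earlier (renamed for the sketch).
enum class State { Loaded, LoadedToIdle, IdleToExecuting, Executing, Error };

// Hypothetical stand-in for the states the component reports back.
enum class ComponentState { Idle, Executing };

class TinyCodec {
public:
    State state() const { return mState; }

    // Mirrors init(): after requesting idle, record the transitional state.
    bool init() {
        if (mState != State::Loaded) {
            return false;
        }
        mState = State::LoadedToIdle;
        return true;
    }

    // Called when the component reports that a state change has completed.
    void onStateChange(ComponentState reached) {
        if (reached == ComponentState::Idle && mState == State::LoadedToIdle) {
            mState = State::IdleToExecuting;  // assumed: executing is requested next
        } else if (reached == ComponentState::Executing &&
                   mState == State::IdleToExecuting) {
            mState = State::Executing;
        } else {
            mState = State::Error;  // unexpected transition in this sketch
        }
    }

private:
    State mState = State::Loaded;
};
```

The transitional states exist because the component changes state asynchronously: the codec records what it has asked for and only advances when the component confirms.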

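That completes initVideoDecoder. initAudioDecoder follows much the same flow, so it is not covered here; interested readers can trace it themselves.

The last step of prepare is finishAsyncPrepare_l(), which announces that prepare is complete and resets mAsyncPrepareEvent to NULL (the QueueItem itself was already removed from the TimedEventQueue when the event fired).

It took a lot of words and time, but the prepare process is now complete and all the channels are open. Next comes playback itself.

Please credit the source when reposting: by 太妃糖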