1. Environment

When reposting, please credit the source: http://eksliang.iteye.com/blog/2223784

Prepare three virtual machines, each running 64-bit CentOS.

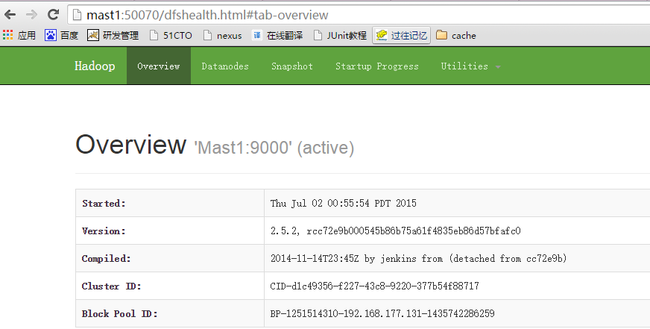

- 192.168.177.131 mast1.com mast1

- 192.168.177.132 mast2.com mast2

- 192.168.177.133 mast3.com mast3

mast1 acts as the NameNode; mast2 and mast3 act as DataNodes.
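For the host names above to resolve, each machine would normally carry the three mappings in its hosts file. The following is a minimal sketch of that step, not part of the original write-up. `HOSTS_FILE` and `add_host` are hypothetical names, and the file defaults to a scratch path so the snippet can be tried safely before pointing it at /etc/hosts as root.

```shell
# Write the three host mappings listed above into a hosts-style file.
# HOSTS_FILE is a hypothetical variable; it defaults to a scratch file.
HOSTS_FILE="${HOSTS_FILE:-./hosts.sample}"

add_host() {
    # $1 = IP address, $2 = fully qualified name, $3 = short name
    # Append the entry only if the short name is not already present.
    if ! grep -qw "$3" "$HOSTS_FILE" 2>/dev/null; then
        printf '%s %s %s\n' "$1" "$2" "$3" >> "$HOSTS_FILE"
    fi
}

add_host 192.168.177.131 mast1.com mast1
add_host 192.168.177.132 mast2.com mast2
add_host 192.168.177.133 mast3.com mast3
```

Running it a second time leaves the file unchanged, which makes it safe to repeat on every node.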

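2. Preparation before installation

- Install the JDK

- Create a hadoop user on every machine and configure passwordless SSH login with a public/private key pair

These steps are omitted here. For configuring passwordless SSH login with a public key, see: http://eksliang.iteye.com/blog/2187265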

3. Deployment

3.1. Download hadoop 2.5.2

Download location: http://mirrors.cnnic.cn/apache/hadoop/common/hadoop-2.5.2/

3.2. Configure hadoop-2.5.2/etc/hadoop

Configure mast1 first. Once it is done, copy the configuration to mast2 and mast3.

3.2.1. core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://mast1:9000</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>4096</value>

</property>

</configuration>

- io.file.buffer.size: the buffer size (in bytes) used when reading and writing files
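To double-check what a configuration file actually contains, a property can be read back from the XML. The sketch below is only an illustration: `get_prop` is a hypothetical helper, not a Hadoop command, and it assumes every `<name>` and `<value>` element sits on a single line, as in the listing above. The demo runs against a scratch copy of the two properties rather than the real file.

```shell
# Print the <value> that follows a given <name> in a Hadoop *-site.xml file.
get_prop() {
    # $1 = path to the XML file, $2 = property name
    awk -v want="$2" '
        /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n) }
        /<value>/ { v = $0; gsub(/.*<value>|<\/value>.*/, "", v)
                    if (n == want) { print v; exit } }
    ' "$1"
}

# Scratch copy of the two properties configured in core-site.xml.
sample=$(mktemp)
cat > "$sample" <<'EOF'
<configuration>
<property>
<name>fs.defaultFS</name>
<value>hdfs://mast1:9000</value>
</property>
<property>
<name>io.file.buffer.size</name>
<value>4096</value>
</property>
</configuration>
EOF

get_prop "$sample" fs.defaultFS          # prints hdfs://mast1:9000
get_prop "$sample" io.file.buffer.size   # prints 4096
```

On a node where Hadoop is installed, `hdfs getconf -confKey fs.defaultFS` reports the effective value instead of the raw file content.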

3.2.2. hdfs-site.xml

<configuration>

<property>

<name>dfs.nameservices</name>

<value>ns</value>

</property>

<property>

<name>dfs.namenode.http-address</name>

<value>mast1:50070</value>

</property>

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>mast1:50090</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///home/hadoop/workspace/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:///home/hadoop/workspace/hdfs/data</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

</configuration>

- dfs.namenode.secondary.http-address: HTTP address of the SecondaryNameNode

- dfs.webhdfs.enabled: enables WebHDFS (the REST API) on the NameNode and the DataNodes
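With WebHDFS enabled, the REST endpoint is served under /webhdfs/v1 on the NameNode HTTP address. As a rough sketch of how a request URL is put together, using the mast1:50070 address configured above (`webhdfs_url` is a hypothetical helper introduced only for this example):

```shell
# Build a WebHDFS REST URL from the NameNode HTTP address.
webhdfs_url() {
    # $1 = NameNode host:port, $2 = absolute HDFS path, $3 = operation name
    printf 'http://%s/webhdfs/v1%s?op=%s\n' "$1" "$2" "$3"
}

url=$(webhdfs_url mast1:50070 / LISTSTATUS)
echo "$url"
# Once HDFS is running, the root listing could be fetched with, for example:
#   curl -s "$url"
```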

3.2.3. mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobtracker.http.address</name>

<value>mast1:50030</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>mast1:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>mast1:19888</value>

</property>

</configuration>

- mapreduce.jobhistory.address: IPC address (host and port) of the MapReduce JobHistory server

- mapreduce.jobhistory.webapp.address: HTTP address (host and port) of the MapReduce JobHistory server

3.2.4. yarn-site.xml

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>mast1:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>mast1:8031</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>mast1:8032</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>mast1:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>mast1:8088</value>

</property>

</configuration>

3.2.5. slaves: the file that lists the DataNode hosts, one per line

mast2

mast3

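3.2.6. Set JAVA_HOME

Add the JAVA_HOME setting to both hadoop-env.sh and yarn-env.sh, commenting out the original line:

#export JAVA_HOME=${JAVA_HOME}

export JAVA_HOME=/usr/local/java/jdk1.7.0_67

Even though JAVA_HOME is already set as an environment variable, Hadoop reports at startup that it cannot find it, so the only option is to give the absolute path here.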
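If the same edit has to be repeated on several files, it can be scripted. This is one possible sketch rather than the procedure used in this post. `set_java_home` and `CONF_DIR` are hypothetical names, and the helper simply comments out any active `export JAVA_HOME=` line and appends the absolute path at the end of the file.

```shell
set_java_home() {
    # $1 = env script to patch, $2 = absolute JDK directory
    tmp="$1.tmp"
    # Comment out any active "export JAVA_HOME=..." line, keeping it for reference.
    sed 's|^export JAVA_HOME=|#&|' "$1" > "$tmp"
    # Append the absolute path as the new setting.
    printf 'export JAVA_HOME=%s\n' "$2" >> "$tmp"
    # Write back through the original file so its permissions are kept.
    cat "$tmp" > "$1" && rm -f "$tmp"
}

# CONF_DIR is an assumed location; adjust it to the real etc/hadoop directory.
CONF_DIR="${CONF_DIR:-$HOME/hadoop-2.5.2/etc/hadoop}"
for f in hadoop-env.sh yarn-env.sh; do
    if [ -f "$CONF_DIR/$f" ]; then
        set_java_home "$CONF_DIR/$f" /usr/local/java/jdk1.7.0_67
    fi
done
```

The helper is not idempotent: a second run comments out the line it added and appends it again, so it is meant to be run once per file.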

3.2.7. Configure the Hadoop environment variables (my configuration, for reference)

[hadoop@Mast1 hadoop]$ vim ~/.bash_profile

export HADOOP_HOME="/home/hadoop/hadoop-2.5.2"

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

Note: the two variables HADOOP_COMMON_LIB_NATIVE_DIR and HADOOP_OPTS have to be added from version 2.5.0 onward; without them, a minor error is reported when the cluster starts.

3.3. Copy the configuration to mast2 and mast3

Note: run the copy as the hadoop user.

scp -r ~/.bash_profile hadoop@mast2:/home/hadoop/

scp -r ~/.bash_profile hadoop@mast3:/home/hadoop/

scp -r $HADOOP_HOME/etc/hadoop hadoop@mast2:/home/hadoop/hadoop-2.5.2/etc/

scp -r $HADOOP_HOME/etc/hadoop hadoop@mast3:/home/hadoop/hadoop-2.5.2/etc/
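With more worker nodes, the copy commands are easier to manage as a loop. The sketch below assumes the same paths as above. `run`, `push_config` and `DRY_RUN` are hypothetical names, and with `DRY_RUN=1` (the default here) the commands are only printed, not executed.

```shell
DRY_RUN="${DRY_RUN:-1}"
HADOOP_HOME="${HADOOP_HOME:-/home/hadoop/hadoop-2.5.2}"

run() {
    # Print the command in dry-run mode, execute it otherwise.
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

push_config() {
    # $@ = worker host names
    for host in "$@"; do
        run scp ~/.bash_profile "hadoop@$host:/home/hadoop/"
        run scp -r "$HADOOP_HOME/etc/hadoop" "hadoop@$host:/home/hadoop/hadoop-2.5.2/etc/"
    done
}

push_config mast2 mast3
```

Setting `DRY_RUN=0` before calling `push_config` performs the real copy, which relies on the passwordless SSH login prepared earlier.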

3.4. Format the file system

Run this on mast1 (the NameNode), from the hadoop-2.5.2 directory:

bin/hdfs namenode -format
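Formatting is meant to happen once; formatting an existing NameNode directory again discards its metadata. A small guard, sketched here under the assumption that the name directory is the one set in dfs.namenode.name.dir, can check for the current/VERSION file that a formatted directory contains. `NAME_DIR` and `is_formatted` are hypothetical names.

```shell
# NAME_DIR mirrors dfs.namenode.name.dir from hdfs-site.xml (without file://).
NAME_DIR="${NAME_DIR:-/home/hadoop/workspace/hdfs/name}"

is_formatted() {
    # A formatted NameNode directory contains current/VERSION.
    [ -f "$1/current/VERSION" ]
}

if is_formatted "$NAME_DIR"; then
    echo "NameNode directory $NAME_DIR is already formatted; skipping"
else
    echo "NameNode directory $NAME_DIR is not formatted yet"
    # On mast1, from the hadoop-2.5.2 directory, the format step would be:
    #   bin/hdfs namenode -format
fi
```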

3.5. Start and stop HDFS (the file system) and YARN (the resource manager)

Start the HDFS distributed file system:

[hadoop@Mast1 hadoop-2.5.2]$ sbin/start-dfs.sh

Stop the HDFS distributed file system:

[hadoop@Mast1 hadoop-2.5.2]$ sbin/stop-dfs.sh

Start the YARN resource manager:

[hadoop@Mast1 hadoop-2.5.2]$ sbin/start-yarn.sh

Stop the YARN resource manager:

[hadoop@Mast1 hadoop-2.5.2]$ sbin/stop-yarn.sh

3.6. Verify with jps that the daemons started

On mast1 (the NameNode), jps should list NameNode and ResourceManager:

[hadoop@Mast1 hadoop-2.5.2]$ jps

3428 NameNode

4057 ResourceManager

4307 Jps

Then switch to mast2 or mast3 (a DataNode) and run jps there:

[hadoop@Mast2 ~]$ jps

2726 DataNode

3154 Jps

3012 NodeManager
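The same check can be automated by scanning the jps output for the expected daemon names. The following is a minimal sketch; `check_daemons` is a hypothetical helper, and the sample text is the mast1 output shown above, so the snippet runs without a cluster.

```shell
check_daemons() {
    # $1 = text output of jps; remaining arguments = daemon names that must appear
    out="$1"; shift
    missing=0
    for d in "$@"; do
        # The daemon name is the second whitespace-separated field of a jps line.
        if ! printf '%s\n' "$out" | awk -v d="$d" '$2 == d { found = 1 } END { exit !found }'; then
            echo "missing: $d"
            missing=1
        fi
    done
    return $missing
}

sample='3428 NameNode
4057 ResourceManager
4307 Jps'

check_daemons "$sample" NameNode ResourceManager && echo "master daemons are up"
```

On a live node the first argument would be `"$(jps)"`, for example `check_daemons "$(jps)" DataNode NodeManager` on mast2 or mast3.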

3.7. Verify in a browser

http://mast1:8088/

http://mast2:50075/

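Notes:

- The official hadoop 2.5.2 documentation ships inside the download package, under ~/hadoop-2.5.2\hadoop-2.5.2\share\doc\hadoop. It lists all the default settings (core-default.xml, hdfs-default.xml, mapred-default.xml and yarn-default.xml) and documents the various operations.

- A well-written Chinese-language blog post on the Hadoop parameters: http://segmentfault.com/a/1190000000709725#articleHeader2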