Oracle 10g Data Pump expdp/impdp Explained

I. Official Documentation

1. From the Oracle 10g documentation:

http://download.oracle.com/docs/cd/B19306_01/server.102/b14215/dp_overview.htm#i1010293

Data Pump Components

Oracle Data Pump is made up of three distinct parts:

(1)The command-line clients, expdp and impdp

(2)The DBMS_DATAPUMP PL/SQL package (also known as the Data Pump API)

(3)The DBMS_METADATA PL/SQL package (also known as the Metadata API)

The Data Pump clients, expdp and impdp, invoke the Data Pump Export utility and Data Pump Import utility, respectively. They provide a user interface that closely resembles the original export (exp) and import (imp) utilities.

The expdp and impdp clients use the procedures provided in the DBMS_DATAPUMP PL/SQL package to execute export and import commands, using the parameters entered at the command-line. These parameters enable the exporting and importing of data and metadata for a complete database or subsets of a database.

Note:

All Data Pump Export and Import processing, including the reading and writing of dump files, is done on the system (server) selected by the specified database connect string. This means that, for nonprivileged users, the database administrator (DBA) must create directory objects for the Data Pump files that are read and written on that server file system. For privileged users, a default directory object is available. See Default Locations for Dump, Log, and SQL Files for more information about directory objects.

When data is moved, Data Pump automatically uses either direct path load (or unload) or the external tables mechanism, or a combination of both. When metadata is moved, Data Pump uses functionality provided by the DBMS_METADATA PL/SQL package. The DBMS_METADATA package provides a centralized facility for the extraction, manipulation, and resubmission of dictionary metadata.

The DBMS_DATAPUMP and DBMS_METADATA PL/SQL packages can be used independently of the Data Pump clients.

See Also:

Oracle Database PL/SQL Packages and Types Reference for descriptions of the DBMS_DATAPUMP and DBMS_METADATA packages

What New Features Do Data Pump Export and Import Provide?

The new Data Pump Export and Import utilities (invoked with the expdp and impdp commands, respectively) have a similar look and feel to the original Export (exp) and Import (imp) utilities, but they are completely separate. Dump files generated by the new Data Pump Export utility are not compatible with dump files generated by the original Export utility. Therefore, files generated by the original Export (exp) utility cannot be imported with the Data Pump Import (impdp) utility.

Oracle recommends that you use the new Data Pump Export and Import utilities because they support all Oracle Database 10g features, except for XML schemas and XML schema-based tables. Original Export and Import support the full set of Oracle database release 9.2 features. Also, the design of Data Pump Export and Import results in greatly enhanced data movement performance over the original Export and Import utilities.

Note:

See Chapter 19, "Original Export and Import" for information about situations in which you should still use the original Export and Import utilities.

The following are the major new features that provide this increased performance, as well as enhanced ease of use:

(1)The ability to specify the maximum number of threads of active execution operating on behalf of the Data Pump job. This enables you to adjust resource consumption versus elapsed time. See PARALLEL for information about using this parameter in export. See PARALLEL for information about using this parameter in import. (This feature is available only in the Enterprise Edition of Oracle Database 10g.)

(2)The ability to restart Data Pump jobs. See START_JOB for information about restarting export jobs. See START_JOB for information about restarting import jobs.

(3)The ability to detach from and reattach to long-running jobs without affecting the job itself. This allows DBAs and other operations personnel to monitor jobs from multiple locations. The Data Pump Export and Import utilities can be attached to only one job at a time; however, you can have multiple clients or jobs running at one time. (If you are using the Data Pump API, the restriction on attaching to only one job at a time does not apply.) You can also have multiple clients attached to the same job. See ATTACH for information about using this parameter in export. See ATTACH for information about using this parameter in import.

(4)Support for export and import operations over the network, in which the source of each operation is a remote instance. See NETWORK_LINK for information about using this parameter in export. See NETWORK_LINK for information about using this parameter in import.

(5)The ability, in an import job, to change the name of the source datafile to a different name in all DDL statements where the source datafile is referenced. See REMAP_DATAFILE.

(6)Enhanced support for remapping tablespaces during an import operation. See REMAP_TABLESPACE.

(7)Support for filtering the metadata that is exported and imported, based upon objects and object types. For information about filtering metadata during an export operation, see INCLUDE and EXCLUDE. For information about filtering metadata during an import operation, see INCLUDE and EXCLUDE.

(8)Support for an interactive-command mode that allows monitoring of and interaction with ongoing jobs. See Commands Available in Export's Interactive-Command Mode and Commands Available in Import's Interactive-Command Mode.

(9)The ability to estimate how much space an export job would consume, without actually performing the export. See ESTIMATE_ONLY.

(10)The ability to specify the version of database objects to be moved. In export jobs, VERSION applies to the version of the database objects to be exported. See VERSION for more information about using this parameter in export.

In import jobs, VERSION applies only to operations over the network. This means that VERSION applies to the version of database objects to be extracted from the source database. See VERSION for more information about using this parameter in import.

For additional information about using different versions, see Moving Data Between Different Database Versions.

(11)Most Data Pump export and import operations occur on the Oracle database server. (This contrasts with original export and import, which were primarily client-based.) See Default Locations for Dump, Log, and SQL Files for information about some of the implications of server-based operations.

The remainder of this chapter discusses Data Pump technology as it is implemented in the Data Pump Export and Import utilities. To make full use of Data Pump technology, you must be a privileged user. Privileged users have the EXP_FULL_DATABASE and IMP_FULL_DATABASE roles. Nonprivileged users have neither.

Privileged users can do the following:

(1)Export and import database objects owned by others

(2)Export and import nonschema-based objects such as tablespace and schema definitions, system privilege grants, resource plans, and so forth

(3)Attach to, monitor, and control Data Pump jobs initiated by others

(4)Perform remapping operations on database datafiles

(5)Perform remapping operations on schemas other than their own

2. From the Oracle 11gR2 documentation:

http://download.oracle.com/docs/cd/E11882_01/server.112/e16508/cncptdba.htm#CNCPT1277

Oracle Data Pump Export and Import

Oracle Data Pump enables high-speed movement of data and metadata from one database to another. This technology is the basis for the following Oracle Database data movement utilities:

(1)Data Pump Export (Export)

Export is a utility for unloading data and metadata into a set of operating system files called a dump file set. The dump file set is made up of one or more binary files that contain table data, database object metadata, and control information.

(2)Data Pump Import (Import)

Import is a utility for loading an export dump file set into a database. You can also use Import to load a destination database directly from a source database with no intervening files, which allows export and import operations to run concurrently, minimizing total elapsed time.

Oracle Data Pump is made up of the following distinct parts:

(1)The command-line clients expdp and impdp

These clients make calls to the DBMS_DATAPUMP package to perform Oracle Data Pump operations (see "PL/SQL Packages").

(2)The DBMS_DATAPUMP PL/SQL package, also known as the Data Pump API. This API provides high-speed import and export functionality.

(3)The DBMS_METADATA PL/SQL package, also known as the Metadata API. This API, which stores object definitions in XML, is used by all processes that load and unload metadata.

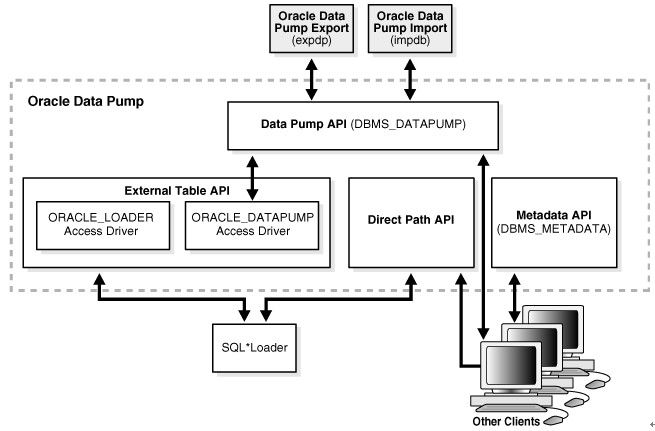

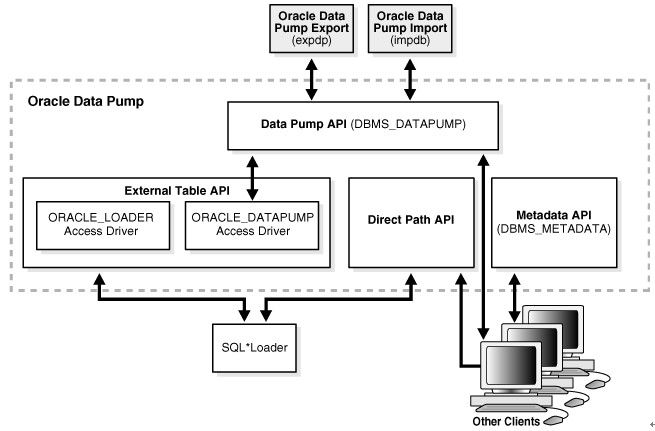

Figure 18-2 shows how Oracle Data Pump integrates with SQL*Loader and external tables. As shown, SQL*Loader is integrated with the External Table API and the Data Pump API to load data into external tables (see "External Tables"). Clients such as Database Control and transportable tablespaces can use the Oracle Data Pump infrastructure.

Figure 18-2 Oracle Data Pump Architecture

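II. Introduction to Data Pump

From the two excerpts of official documentation in Part I, the Data Pump workflow is as follows:

(1)A command is run from the command line.

(2)The expdp/impdp client calls the DBMS_DATAPUMP PL/SQL package. This API provides high-speed export and import functionality.

(3)When data is moved, Data Pump automatically chooses direct path, the external tables mechanism, or a combination of both. When metadata (object definitions) is moved, Data Pump uses the DBMS_METADATA PL/SQL package. The Metadata API stores metadata (object definitions) in XML and is used by all processes that load and unload metadata.

Because Data Pump calls a server-side API, the client can disconnect once a job has been scheduled or started. The job keeps running on the server, and its status can later be checked and changed from any location with the client utilities.

The following article explains how expdp/impdp work in their different modes:

exp/imp versus expdp/impdp: a comparison, and some optimization notes

http://blog.csdn.net/xujinyang/article/details/6831324

As noted above, an expdp/impdp operation is a job that can be stopped and modified. Here is a simple test:

Export command:

expdp system/oracle full=y directory=dump dumpfile=orcl_%U.dmp parallel=2 job_name=davedump

job_name: the name of the export job; by default the name is system-generated (SYS_XXX). As mentioned earlier, every call to the API runs as a job. The job is named here because the name is needed again below.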
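The %U in the DUMPFILE value above is a Data Pump substitution variable: it expands to a two-digit sequence number starting at 01, so each additional dump file gets its own name. The shell sketch below only mimics that naming to show which files to expect on disk. expand_dumpfile_template is a hypothetical helper, not part of Data Pump, and the file count passed to it is an assumption chosen for illustration.

```shell
# Sketch: list the file names a %U template would produce for the first N files.
# expand_dumpfile_template is a hypothetical helper, not an Oracle tool.
expand_dumpfile_template() {
    template=$1   # for example orcl_%U.dmp
    count=$2      # how many file names to list (assumed for illustration)
    i=1
    while [ "$i" -le "$count" ]; do
        seq2=$(printf '%02d' "$i")
        # Replace the first %U with the two-digit sequence number.
        printf '%s\n' "$template" | sed "s/%U/$seq2/"
        i=$((i + 1))
    done
}

expand_dumpfile_template 'orcl_%U.dmp' 2
```

With the template from the test, the first name produced is orcl_01.dmp, which matches the dump file reported by the status command in the transcript that follows.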

C:/Users/Administrator.DavidDai>expdp system/oracle full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

Export: Release 11.2.0.1.0 - Production on Mon Dec 27 15:24:38 2010

Copyright (c) 1982, 2009, Oracle and/or its affiliates. All rights reserved.

Connected to: Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 - Production

With the Partitioning, OLAP, Data Mining and Real Application Testing options

Starting "SYSTEM"."DAVEDUMP": system/******** full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

Estimate in progress using BLOCKS method...

Processing object type DATABASE_EXPORT/SCHEMA/TABLE/TABLE_DATA

Total estimation using BLOCKS method: 132.6 MB

Processing object type DATABASE_EXPORT/TABLESPACE

Processing object type DATABASE_EXPORT/PROFILE

Processing object type DATABASE_EXPORT/SYS_USER/USER

Processing object type DATABASE_EXPORT/SCHEMA/USER

Processing object type DATABASE_EXPORT/ROLE

Processing object type DATABASE_EXPORT/GRANT/SYSTEM_GRANT/PROC_SYSTEM_GRANT

Processing object type DATABASE_EXPORT/SCHEMA/GRANT/SYSTEM_GRANT

Processing object type DATABASE_EXPORT/SCHEMA/ROLE_GRANT

Processing object type DATABASE_EXPORT/SCHEMA/DEFAULT_ROLE

Processing object type DATABASE_EXPORT/SCHEMA/TABLESPACE_QUOTA

Processing object type DATABASE_EXPORT/RESOURCE_COST

Processing object type DATABASE_EXPORT/TRUSTED_DB_LINK

Processing object type DATABASE_EXPORT/SCHEMA/SEQUENCE/SEQUENCE

-- Press Ctrl+C to leave logging mode and enter interactive-command mode

Export>

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: D:/BACKUP/ORCL_01.DMP

bytes written: 4,096

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

Object Name: STORAGE_CONTEXT

Object Type: DATABASE_EXPORT/CONTEXT

Completed Objects: 7

Total Objects: 7

Worker Parallelism: 1

-- Stop the job

Export> stop_job

Are you sure you wish to stop this job ([Y]/N): yes

-- Reattach to the job by its job_name

C:/Users/Administrator.DavidDai>expdp system/oracle attach=davedump

-- ATTACH associates the client session with an existing export job. When ATTACH is used, no option other than the connect string and ATTACH may be given on the command line.

Export: Release 11.2.0.1.0 - Production on Mon Dec 27 15:26:14 2010

Copyright (c) 1982, 2009, Oracle and/or its affiliates. All rights reserved.

Connected to: Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 - Production

With the Partitioning, OLAP, Data Mining and Real Application Testing options

Job: DAVEDUMP

Owner: SYSTEM

Operation: EXPORT

Creator Privs: TRUE

GUID: 454A188F62AA4D578AA0DA4C35259CD8

Start Time: Monday, 27 December, 2010 15:26:16

Mode: FULL

Instance: orcl

Max Parallelism: 1

EXPORT Job Parameters:

Parameter Name Parameter Value:

CLIENT_COMMAND system/******** full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

State: IDLING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 950,272

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: UNDEFINED

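-- Start the job

Export> start_job

-- Check the status

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 954,368

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

While the job runs, its progress can be checked at any time with the status command:

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 954,368

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

Object Schema: SYSMAN

Object Name: AQ$_MGMT_NOTIFY_QTABLE_T

Object Type: DATABASE_EXPORT/SCHEMA/TABLE/TABLE

Completed Objects: 59

Worker Parallelism: 1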

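Export> help

------------------------------------------------------------------------------

The following commands are valid while in interactive mode.

Note: abbreviations are allowed.

ADD_FILE

Add a dump file to the dump file set.

CONTINUE_CLIENT

Return to logging mode. The job is restarted if it is idle.

EXIT_CLIENT

Quit the client session and leave the job running.

FILESIZE

Default file size (bytes) for subsequent ADD_FILE commands.

HELP

Summarize the interactive commands.

KILL_JOB

Detach from and delete the job.

PARALLEL

Change the number of active workers for the current job.

REUSE_DUMPFILES

Overwrite the destination dump file if it exists [N].

START_JOB

Start or resume the current job.

Valid keyword values are: SKIP_CURRENT.

STATUS

Frequency at which job status is monitored, where

the default [0] shows new status as soon as it is available.

STOP_JOB

Shut down job execution in an orderly way and exit the client.

Valid keyword values are: IMMEDIATE.

Export>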

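Note: in my test, the parallel parameter could not be combined with switching expdp into interactive mode. I specified it when I first ran the test, and after stop_job the restart failed with an error saying that the specified job_name could not be found. That is why the run shown above omits parallel.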

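III. EXPDP/IMPDP Command Reference

Data Pump supports four export modes: table, schema, tablespace, and full database.

3.1 EXPDP Parameters

(1). ATTACH

Associates the client session with an existing export job. Syntax:

ATTACH=[schema_name.]job_name

schema_name is the schema name and job_name is the export job name. Note that when ATTACH is used, no option other than the connect string and ATTACH may be given on the command line. For example:

expdp scott/tiger ATTACH=scott.export_job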

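(2). CONTENT

Specifies what to export. The default is ALL.

CONTENT={ALL | DATA_ONLY | METADATA_ONLY}

With ALL, both the object definitions and all of their data are exported. With DATA_ONLY, only the object data is exported. With METADATA_ONLY, only the object definitions are exported.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dump CONTENT=METADATA_ONLY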

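(3). DIRECTORY

Specifies the directory object in which the dump file and log file are placed. DIRECTORY=directory_object

directory_object is the name of a directory object. Note that a directory object is a database object created with the CREATE DIRECTORY statement; it is not an OS directory.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dump

First create the physical folder at the intended location on the server, for example D:/backup.

Then create the directory object (the first statement uses a Unix path, the second a Windows path):

create or replace directory backup as '/opt/oracle/utl_file';

SQL>CREATE DIRECTORY backup as 'd:/backup';

SQL>grant read,write on directory backup to SYSTEM;

List the directory objects that have been created:

SELECT * FROM dba_directories;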

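(4). DUMPFILE

Specifies the name of the dump file. The default name is expdat.dmp.

DUMPFILE=[directory_object:]file_name [, ...]

directory_object is the name of a directory object and file_name is the dump file name. If directory_object is omitted, the export uses the directory object given by the DIRECTORY option. In the following example the dump file is written to dump2, not dump1:

expdp scott/tiger DIRECTORY=dump1 DUMPFILE=dump2:a.dmp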

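(5). ESTIMATE

Specifies the method used to estimate the disk space that the exported tables will occupy. The default is BLOCKS.

ESTIMATE={BLOCKS | STATISTICS}

With BLOCKS, Oracle estimates the space as the number of data blocks used by the target objects multiplied by the data block size. With STATISTICS, the estimate is based on the most recent statistics for each object:

expdp scott/tiger TABLES=emp ESTIMATE=STATISTICS DIRECTORY=dump DUMPFILE=a.dump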
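As a rough worked example of the BLOCKS method, the arithmetic is simply block count times block size. The figures below (1280 blocks and an 8 KB block size) are assumed for illustration and do not come from a real database.

```shell
# Sketch of the BLOCKS estimate: blocks used by the object times the block size.
# Both input values are hypothetical.
blocks=1280        # data blocks occupied by the table (assumed)
block_size=8192    # database block size in bytes (assumed 8 KB)

estimate_bytes=$((blocks * block_size))
estimate_mb=$((estimate_bytes / 1024 / 1024))

echo "$estimate_bytes bytes"   # prints "10485760 bytes"
echo "$estimate_mb MB"         # prints "10 MB"
```

Because the method counts whole blocks, sparsely filled blocks count in full, so the figure can be larger than the data actually written to the dump file.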

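(6). ESTIMATE_ONLY

Specifies whether to only estimate the disk space the export job would use. The default is N.

ESTIMATE_ONLY={Y | N}

With Y, the export job only estimates the disk space used by the objects and does not perform the export. With N, it estimates the space and also performs the export.

expdp scott/tiger ESTIMATE_ONLY=y NOLOGFILE=y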

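(7). EXCLUDE

Specifies object types, or specific objects, to leave out of the operation.

EXCLUDE=object_type[:name_clause] [, ...]

object_type is the object type to exclude and name_clause selects the specific objects to exclude. EXCLUDE and INCLUDE cannot be used together.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp EXCLUDE=VIEW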

(8). FILESIZE

Specifies the maximum size of each dump file. The default is 0, which means the file size is unlimited.

(9). FLASHBACK_SCN

Exports table data as of a specific SCN. FLASHBACK_SCN=scn_value

scn_value is the SCN. FLASHBACK_SCN and FLASHBACK_TIME cannot be used together:

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp FLASHBACK_SCN=358523

(10). FLASHBACK_TIME

Exports table data as of a specific point in time.

FLASHBACK_TIME="TO_TIMESTAMP(time_value)"

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp FLASHBACK_TIME="TO_TIMESTAMP('25-08-2004 14:35:00','DD-MM-YYYY HH24:MI:SS')"

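(11). FULL

Specifies a full database export. The default is N. FULL={Y | N}. With Y, the whole database is exported.

(12). HELP

Specifies whether to display help for the expdp command-line options. The default is N. With Y, help for the export options is displayed:

expdp help=y

(13). INCLUDE

Specifies the object types, and specific objects, to include in the export. INCLUDE=object_type[:name_clause] [, ...]

(14). JOB_NAME

Specifies the name of the export job. The default is a system-generated name of the form SYS_EXPORT_<mode>_NN. JOB_NAME=jobname_string

(15). LOGFILE

Specifies the name of the export log file. The default name is export.log.

LOGFILE=[directory_object:]file_name

directory_object is the name of a directory object and file_name is the log file name. If directory_object is omitted, the export job uses the value of the DIRECTORY option.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp logfile=a.log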

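(16). NETWORK_LINK

Specifies a database link name. This option is required when objects from a remote database are to be exported into a dump file on the local instance.

(17). NOLOGFILE

Suppresses creation of the export log file. The default is N.

(18). PARALLEL

Specifies the number of parallel processes that perform the export. The default is 1.

(19). PARFILE

Specifies the name of the export parameter file. PARFILE=[directory_path]file_name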

(20). QUERY

Specifies a WHERE condition that filters the exported data.

QUERY=[schema.][table_name:]query_clause

schema is the schema name, table_name is the table name, and query_clause is the restricting clause. QUERY cannot be used together with CONTENT=METADATA_ONLY, ESTIMATE_ONLY, or TRANSPORT_TABLESPACES.

expdp scott/tiger directory=dump dumpfile=a.dmp tables=emp query='WHERE deptno=20'
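How the quotes in a QUERY clause must be escaped on the command line depends on the operating system shell, so it is often easier to put the clause in a parameter file and pass it with PARFILE. The following is a minimal sketch under stated assumptions: the file name emp_query.par and the scratch directory are hypothetical, and the expdp call is left as a comment because it needs a reachable database.

```shell
# Sketch: keep QUERY in a parameter file so the OS shell never sees its quotes.
workdir=$(mktemp -d)               # scratch directory for this example
parfile="$workdir/emp_query.par"   # hypothetical parameter file name

# The quoted heredoc delimiter stops the shell from touching the content.
cat > "$parfile" <<'EOF'
DIRECTORY=dump
DUMPFILE=a.dmp
TABLES=emp
QUERY=emp:"WHERE deptno=20"
EOF

# Show what expdp would read.
cat "$parfile"

# On a host with the Data Pump client and a running database:
#   expdp scott/tiger PARFILE="$parfile"
```

The table_name: prefix ties the condition to emp only, which matters once TABLES lists more than one table.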

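(21). SCHEMAS

Specifies a schema-mode export. The default is the current user's schema.

(22). STATUS

Specifies how often, in seconds, the detailed status of the export job is displayed. The default is 0.

(23). TABLES

Specifies a table-mode export.

TABLES=[schema_name.]table_name[:partition_name][, ...]

schema_name is the schema name, table_name is the table to export, and partition_name is the partition to export.

(24). TABLESPACES

Specifies the list of tablespaces to export.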

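(25). TRANSPORT_FULL_CHECK

Specifies how dependencies between the tablespaces being transported and those left behind are checked. The default is N. With Y, the export job checks dependencies in both directions: if only one of the tablespace holding a table and the tablespace holding its index is transported, an error is reported. With N, the export job checks dependencies in one direction only: if the tablespace holding an index is transported but the tablespace holding its table is not, an error is reported; if the tablespace holding the table is transported but the tablespace holding its index is not, no error is reported.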
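The rule above can be sketched as a small decision function. This is a hypothetical model of the check as described in this section, for one table and its index, and not Oracle code; transport_check and its y/n arguments are invented for illustration.

```shell
# transport_check FULL_CHECK TABLE_TS_MOVED INDEX_TS_MOVED   (each y or n)
# Prints "ok" or "error". Hypothetical model, not an Oracle utility.
transport_check() {
    full_check=$1   # value of TRANSPORT_FULL_CHECK
    table_in=$2     # is the table's tablespace in the transport set?
    index_in=$3     # is the index's tablespace in the transport set?

    if [ "$index_in" = y ] && [ "$table_in" = n ]; then
        # An index depends on its table, so this fails with either setting.
        echo error
    elif [ "$table_in" = y ] && [ "$index_in" = n ] && [ "$full_check" = y ]; then
        # Only the two-way check rejects a table moved without its index.
        echo error
    else
        echo ok
    fi
}

transport_check n y n   # table moved, index left behind, one-way check: ok
transport_check y y n   # same set with the full check: error
transport_check n n y   # index moved without its table: error
```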

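(26). TRANSPORT_TABLESPACES

Specifies a transportable-tablespace-mode export.

(27). VERSION

Specifies the database version of the objects to be exported. The default is COMPATIBLE.

VERSION={COMPATIBLE | LATEST | version_string}

With COMPATIBLE, object metadata is generated according to the COMPATIBLE initialization parameter. With LATEST, object metadata is generated according to the actual database version. version_string is an explicit database version string.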

3.2 EXPDP Examples

With expdp, dump files can only be written to the OS directory that a DIRECTORY object points to; the OS directory itself cannot be given directly. A DIRECTORY object must therefore be created first, and the database user must be granted privileges on it.

CREATE DIRECTORY dump_dir AS 'D:/DUMP';

GRANT READ, WRITE ON DIRECTORY dump_dir TO scott;

(1)Export tables

expdp scott/tiger DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=dept,emp logfile=exp.log

(2)Export schemas (a schema corresponds to a user)

-- Exporting a schema owned by another user requires a privileged account, so connect as system here

expdp system/manager DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=system,scott logfile=exp.log

(3)Export tablespaces

expdp system/manager DIRECTORY=dump_dir DUMPFILE=tablespace.dmp TABLESPACES=user01,user02 logfile=exp.log

(4)Export the database

expdp system/manager DIRECTORY=dump_dir DUMPFILE=full.dmp FULL=Y logfile=exp.log

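3.3 IMPDP Parameters

Many impdp command-line options are the same as for expdp. The ones that differ are:

(1)REMAP_DATAFILE

Changes a source datafile name to the target datafile name. It may be needed when moving tablespaces between different platforms.

REMAP_DATAFILE=source_datafile:target_datafile

(2)REMAP_SCHEMA

Loads all objects from the source schema into the target schema.

REMAP_SCHEMA=source_schema:target_schema

(3)REMAP_TABLESPACE

Imports all objects from the source tablespace into the target tablespace.

REMAP_TABLESPACE=source_tablespace:target_tablespace

(4)REUSE_DATAFILES

Specifies whether existing datafiles are overwritten when tablespaces are created. The default is N.

REUSE_DATAFILES={Y | N}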

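(5)SKIP_UNUSABLE_INDEXES

Specifies whether the import skips unusable indexes. The default is N.

(6)SQLFILE

Writes the DDL that the import would have executed to a SQL script instead of executing it.

SQLFILE=[directory_object:]file_name

impdp scott/tiger DIRECTORY=dump DUMPFILE=tab.dmp SQLFILE=a.sql

(7)STREAMS_CONFIGURATION

Specifies whether Streams metadata is imported. The default is Y.

(8)TABLE_EXISTS_ACTION

Specifies what the import job does when a table already exists. The default is SKIP.

TABLE_EXISTS_ACTION={SKIP | APPEND | TRUNCATE | REPLACE}

With SKIP, the import job skips the existing table and moves on to the next object. With APPEND, it appends the data. With TRUNCATE, it truncates the table and then loads the new data. With REPLACE, it drops the existing table, re-creates it, and loads the data. Note that TRUNCATE does not apply to clustered tables or to imports that use NETWORK_LINK.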

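(9)TRANSFORM

Specifies whether the DDL statements that create objects are modified.

TRANSFORM=transform_name:value[:object_type]

transform_name is the name of the transform: SEGMENT_ATTRIBUTES covers segment attributes (physical attributes, storage attributes, tablespace, logging, and so on), and STORAGE covers the segment storage attributes. value specifies whether the segment attributes or storage attributes are included, and object_type is the object type the transform applies to.

impdp scott/tiger directory=dump dumpfile=tab.dmp transform=segment_attributes:n:table

(10)TRANSPORT_DATAFILES

Specifies the datafiles to be imported into the target database when transporting tablespaces.

TRANSPORT_DATAFILES=datafile_name

datafile_name is a datafile that has been copied to the target database.

impdp system/manager DIRECTORY=dump DUMPFILE=tts.dmp TRANSPORT_DATAFILES='/user01/data/tbs1.f'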

3.4 IMPDP Examples

(1)Import tables

impdp scott/tiger DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=dept,emp logfile=exp.log

-- Imports the DEPT and EMP tables into the SCOTT schema

impdp system/manager DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=scott.dept,scott.emp REMAP_SCHEMA=SCOTT:SYSTEM logfile=exp.log

-- Imports the DEPT and EMP tables into the SYSTEM schema

(2)Import a schema

impdp scott/tiger DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=scott logfile=exp.log

impdp system/manager DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=scott REMAP_SCHEMA=scott:system logfile=exp.log

(3)Import a tablespace

impdp system/manager DIRECTORY=dump_dir DUMPFILE=tablespace.dmp TABLESPACES=user01 logfile=exp.log

(4)Import the database

impdp system/manager DIRECTORY=dump_dir DUMPFILE=full.dmp FULL=y logfile=exp.log

1. Oracle 10g文档如下:

http://download.oracle.com/docs/cd/B19306_01/server.102/b14215/dp_overview.htm#i1010293

Data Pump Components

Oracle Data Pump is made up of three distinct parts:

(1)The command-line clients, expdp and impdp

(2)The DBMS_DATAPUMP PL/SQL package (also known as the Data Pump API)

(3)The DBMS_METADATA PL/SQL package (also known as the Metadata API)

The Data Pump clients, expdp and impdp, invoke the Data Pump Export utility and Data Pump Import utility, respectively. They provide a user interface that closely resembles the original export (exp) and import (imp) utilities.

The expdp and impdp clients use the procedures provided in the DBMS_DATAPUMP PL/SQL package to execute export and import commands, using the parameters entered at the command-line. These parameters enable the exporting and importing of data and metadata for a complete database or subsets of a database.

Note:

All Data Pump Export and Import processing, including the reading and writing of dump files, is done on the system (server) selected by the specified database connect string. This means that, for nonprivileged users, the database administrator (DBA) must create directory objects for the Data Pump files that are read and written on that server file system. For privileged users, a default directory object is available. See Default Locations for Dump, Log, and SQL Files for more information about directory objects.

When data is moved, Data Pump automatically uses either direct path load (or unload) or the external tables mechanism, or a combination of both. When metadata is moved, Data Pump uses functionality provided by the DBMS_METADATA PL/SQL package. The DBMS_METADATA package provides a centralized facility for the extraction, manipulation, and resubmission of dictionary metadata.

The DBMS_DATAPUMP and DBMS_METADATA PL/SQL packages can be used independently of the Data Pump clients.

See Also:

Oracle Database PL/SQL Packages and Types Reference for descriptions of the DBMS_DATAPUMP and DBMS_METADATA packages

What New Features Do Data Pump Export and Import Provide?

The new Data Pump Export and Import utilities (invoked with the expdp and impdp commands, respectively) have a similar look and feel to the original Export (exp) and Import (imp) utilities, but they are completely separate. Dump files generated by the new Data Pump Export utility are not compatible with dump files generated by the original Export utility. Therefore, files generated by the original Export (exp) utility cannot be imported with the Data Pump Import (impdp) utility.

Oracle recommends that you use the new Data Pump Export and Import utilities because they support all Oracle Database 10g features, except for XML schemas and XML schema-based tables. Original Export and Import support the full set of Oracle database release 9.2 features. Also, the design of Data Pump Export and Import results in greatly enhanced data movement performance over the original Export and Import utilities.

Note:

See Chapter 19, "Original Export and Import" for information about situations in which you should still use the original Export and Import utilities.

The following are the major new features that provide this increased performance, as well as enhanced ease of use:

(1)The ability to specify the maximum number of threads of active execution operating on behalf of the Data Pump job. This enables you to adjust resource consumption versus elapsed time. See PARALLEL for information about using this parameter in export. See PARALLEL for information about using this parameter in import. (This feature is available only in the Enterprise Edition of Oracle Database 10g.)

(2)The ability to restart Data Pump jobs. See START_JOB for information about restarting export jobs. See START_JOB for information about restarting import jobs.

(3)The ability to detach from and reattach to long-running jobs without affecting the job itself. This allows DBAs and other operations personnel to monitor jobs from multiple locations. The Data Pump Export and Import utilities can be attached to only one job at a time; however, you can have multiple clients or jobs running at one time. (If you are using the Data Pump API, the restriction on attaching to only one job at a time does not apply.) You can also have multiple clients attached to the same job. See ATTACH for information about using this parameter in export. See ATTACH for information about using this parameter in import.

(4)Support for export and import operations over the network, in which the source of each operation is a remote instance. See NETWORK_LINK for information about using this parameter in export. See NETWORK_LINK for information about using this parameter in import.

(5)The ability, in an import job, to change the name of the source datafile to a different name in all DDL statements where the source datafile is referenced.See REMAP_DATAFILE.

(6)Enhanced support for remapping tablespaces during an import operation. See REMAP_TABLESPACE.

(7)Support for filtering the metadata that is exported and imported, based upon objects and object types. For information about filtering metadata during an export operation, see INCLUDE and EXCLUDE. For information about filtering metadata during an import operation, see INCLUDE and EXCLUDE.

(8)Support for an interactive-command mode that allows monitoring of and interaction with ongoing jobs. See Commands Available in Export's Interactive-Command Mode and Commands Available in Import's Interactive-Command Mode.

(9)The ability to estimate how much space an export job would consume, without actually performing the export. See ESTIMATE_ONLY.

(10)The ability to specify the version of database objects to be moved. In export jobs, VERSION applies to the version of the database objects to be exported. See VERSION for more information about using this parameter in export.

In import jobs, VERSION applies only to operations over the network. This means that VERSION applies to the version of database objects to be extracted from the source database. See VERSION for more information about using this parameter in import.

For additional information about using different versions, see Moving Data Between Different Database Versions.

(11)Most Data Pump export and import operations occur on the Oracle database server. (This contrasts with original export and import, which were primarily client-based.) See Default Locations for Dump, Log, and SQL Files for information about some of the implications of server-based operations.

The remainder of this chapter discusses Data Pump technology as it is implemented in the Data Pump Export and Import utilities. To make full use of Data Pump technology, you must be a privileged user. Privileged users have the EXP_FULL_DATABASE and IMP_FULL_DATABASE roles. Nonprivileged users have neither.

Privileged users can do the following:

(1)Export and import database objects owned by others

(2)Export and import nonschema-based objects such as tablespace and schema definitions, system privilege grants, resource plans, and so forth

(3)Attach to, monitor, and control Data Pump jobs initiated by others

(4)Perform remapping operations on database datafiles

(5)Perform remapping operations on schemas other than their own

2. Oracle 11gR2 中文档:

http://download.oracle.com/docs/cd/E11882_01/server.112/e16508/cncptdba.htm#CNCPT1277

Oracle Data Pump Export and Import

Oracle Data Pump enables high-speed movement of data and metadata from one database to another. This technology is the basis for the following Oracle Database data movement utilities:

(1)Data Pump Export (Export)

Export is a utility for unloading data and metadata into a set of operating system files called a dump file set. The dump file set is made up of one or more binary files that contain table data, database object metadata, and control information.

(2)Data Pump Import (Import)

Import is a utility for loading an export dump file set into a database. You can also use Import to load a destination database directly from a source database with no intervening files, which allows export and import operations to run concurrently, minimizing total elapsed time.

Oracle Data Pump is made up of the following distinct parts:

(1)The command-line clients expdp and impdp

These client make calls to the DBMS_DATAPUMP package to perform Oracle Data Pump operations (see "PL/SQL Packages").

(2)The DBMS_DATAPUMP PL/SQL package, also known as the Data Pump API。This API provides high-speed import and export functionality.

(3)The DBMS_METADATA PL/SQL package, also known as the Metadata API。This API, which stores object definitions in XML, is used by all processes that load and unload metadata.

Figure 18-2 shows how Oracle Data Pump integrates with SQL*Loader and external tables. As shown, SQL*Loader is integrated with the External Table API and the Data Pump API to load data into external tables (see "External Tables"). Clients such as Database Control and transportable tablespaces can use the Oracle Data Pump infrastructure.

Figure 18-2 Oracle Data Pump Architecture

[img]

[/img]

二. Data Pump 介绍

在第一部分看了2段官网的说明, 可以看出数据泵的工作流程如下:

(1)在命令行执行命令

(2)expdp/impd 命令调用DBMS_DATAPUMP PL/SQL包。 这个API提供高速的导出导入功能。

(3)当data 移动的时候, Data Pump 会自动选择direct path 或者external table mechanism 或者 两种结合的方式。 当metadata(对象定义) 移动的时候,Data Pump会使用DBMS_METADATA PL/SQL包。 Metadata API 将metadata(对象定义)存储在XML里。 所有的进程都能load 和unload 这些metadata.

因为Data Pump 调用的是服务端的API, 所以当一个任务被调度或执行,客户端就可以退出连接,任务Job 会在server端继续执行,随后通过客户端实用程序从任何地方检查任务的状态和进行修改。

在下面连接文章里对expdp/impdp 不同模式下的原理做了说明:

exp/imp 与 expdp/impdp 对比 及使用中的一些优化事项

http://blog.csdn.net/xujinyang/article/details/6831324

在上面说了expdp/impdp 是JOB,我们可以停止与修改。 因此我们在这里做一个简答的测试:

导出语句:

expdp system/oracle full=y directory=dump dumpfile=orcl_%U.dmp parallel=2 job_name=davedump

job_name:指定要导出Job的名称, 默认为SYS_XXX。 在前面已经说过, 调用的API都是Job。 我们为这个JOB命名一下, 等会还要用这个job name。

C:/Users/Administrator.DavidDai>expdp system/oracle full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

Export: Release 11.2.0.1.0 - Production on 星期一 12月 27 15:24:38 2010

Copyright (c) 1982, 2009, Oracle and/or its affiliates. All rights reserved.

连接到: Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 - Production

With the Partitioning, OLAP, Data Mining and Real Application Testing options

启动 "SYSTEM"."DAVEDUMP": system/******** full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

正在使用 BLOCKS 方法进行估计...

处理对象类型 DATABASE_EXPORT/SCHEMA/TABLE/TABLE_DATA

使用 BLOCKS 方法的总估计: 132.6 MB

处理对象类型 DATABASE_EXPORT/TABLESPACE

处理对象类型 DATABASE_EXPORT/PROFILE

处理对象类型 DATABASE_EXPORT/SYS_USER/USER

处理对象类型 DATABASE_EXPORT/SCHEMA/USER

处理对象类型 DATABASE_EXPORT/ROLE

处理对象类型 DATABASE_EXPORT/GRANT/SYSTEM_GRANT/PROC_SYSTEM_GRANT

处理对象类型 DATABASE_EXPORT/SCHEMA/GRANT/SYSTEM_GRANT

处理对象类型 DATABASE_EXPORT/SCHEMA/ROLE_GRANT

处理对象类型 DATABASE_EXPORT/SCHEMA/DEFAULT_ROLE

处理对象类型 DATABASE_EXPORT/SCHEMA/TABLESPACE_QUOTA

处理对象类型 DATABASE_EXPORT/RESOURCE_COST

处理对象类型 DATABASE_EXPORT/TRUSTED_DB_LINK

处理对象类型 DATABASE_EXPORT/SCHEMA/SEQUENCE/SEQUENCE

--按下CTRL+C 组合,退出交互模式

Export>

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: D:/BACKUP/ORCL_01.DMP

bytes written: 4,096

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

Object Name: STORAGE_CONTEXT

Object Type: DATABASE_EXPORT/CONTEXT

Completed Objects: 7

Total Objects: 7

Worker Parallelism: 1

-- Stop the job

Export> stop_job

Are you sure you wish to stop this job ([yes]/no): yes

-- Reattach to the job by its job_name

C:/Users/Administrator.DavidDai>expdp system/oracle attach=davedump

-- ATTACH associates the client session with an existing export job. When ATTACH is used, no option other than the connect string and ATTACH may be specified on the command line.

Export: Release 11.2.0.1.0 - Production on Mon Dec 27 15:26:14 2010

Copyright (c) 1982, 2009, Oracle and/or its affiliates. All rights reserved.

Connected to: Oracle Database 11g Enterprise Edition Release 11.2.0.1.0 - Production

With the Partitioning, OLAP, Data Mining and Real Application Testing options

Job: DAVEDUMP

Owner: SYSTEM

Operation: EXPORT

Creator Privs: TRUE

GUID: 454A188F62AA4D578AA0DA4C35259CD8

Start Time: Monday, 27 December, 2010 15:26:16

Mode: FULL

Instance: orcl

Max Parallelism: 1

EXPORT Job Parameters:

Parameter Name      Parameter Value:

CLIENT_COMMAND system/******** full=y directory=dump dumpfile=orcl_%U.dmp job_name=davedump

State: IDLING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 950,272

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: UNDEFINED

-- Start the job

Export> start_job

-- Check the status

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 954,368

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

While the export is running, use the status command to check its progress:

Export> status

Job: DAVEDUMP

Operation: EXPORT

Mode: FULL

State: EXECUTING

Bytes Processed: 0

Current Parallelism: 1

Job Error Count: 0

Dump File: d:/Backup/orcl_01.dmp

bytes written: 954,368

Dump File: d:/Backup/orcl_%u.dmp

Worker 1 Status:

Process Name: DW00

State: EXECUTING

Object Schema: SYSMAN

Object Name: AQ$_MGMT_NOTIFY_QTABLE_T

Object Type: DATABASE_EXPORT/SCHEMA/TABLE/TABLE

Completed Objects: 59

Worker Parallelism: 1

Export> help

------------------------------------------------------------------------------

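The following commands are valid while in interactive mode.

Note: abbreviations are allowed.

ADD_FILE

Add dumpfile to dumpfile set.

CONTINUE_CLIENT

Return to logging mode. Job will be restarted if idle.

EXIT_CLIENT

Quit client session and leave job running.

FILESIZE

Default filesize (bytes) for subsequent ADD_FILE commands.

HELP

Summarize interactive commands.

KILL_JOB

Detach and delete job.

PARALLEL

Change the number of active workers for current job.

REUSE_DUMPFILES

Overwrite destination dump file if it exists [N].

START_JOB

Start or resume current job.

Valid keyword values are: SKIP_CURRENT.

STATUS

Frequency (secs) job status is to be monitored where

the default [0] will show new status when available.

STOP_JOB

Orderly shutdown of job execution and exits the client.

Valid keyword values are: IMMEDIATE.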

Export>

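Note: when switching expdp into interactive mode as shown here, do not use the parallel parameter. In my first test I specified it, and after stop_job, restarting the job failed with an error saying that the specified job_name could not be found.

3. EXPDP/IMPDP Command Usage in Detail

Data Pump supports four export modes: table, schema, tablespace, and full database.

3.1 EXPDP Command Parameters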

(1). ATTACH

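Associates the client session with an existing export job. Syntax:

ATTACH=[schema_name.]job_name

schema_name is the schema name and job_name is the export job name. Note that when ATTACH is used, no option other than the connect string and ATTACH may be specified on the command line. For example:

expdp scott/tiger ATTACH=scott.export_job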

(2). CONTENT

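Specifies what to export. The default is ALL.

CONTENT={ALL | DATA_ONLY | METADATA_ONLY}

With ALL, both the object definitions and all their data are exported. With DATA_ONLY, only the data is exported; with METADATA_ONLY, only the object definitions.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dump CONTENT=METADATA_ONLY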

(3) DIRECTORY

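Specifies the directory that holds the dump files and log files. DIRECTORY=directory_object

directory_object is the name of a directory object. Note that a directory object is a database object created with the CREATE DIRECTORY statement, not an OS directory.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dump

First create the physical folder at the corresponding location, for example D:/backup.

Create the directory object (a Unix path and a Windows path are shown):

create or replace directory backup as '/opt/oracle/utl_file';

SQL>CREATE DIRECTORY backup as 'd:/backup';

SQL>grant read,write on directory backup to SYSTEM;

To see which directory objects exist:

SELECT * FROM dba_directories;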

(4). DUMPFILE

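Specifies the names of the dump files. The default name is expdat.dmp.

DUMPFILE=[directory_object:]file_name [, ...]

directory_object is the name of a directory object and file_name is the dump file name. If directory_object is omitted, the export uses the directory object given by the DIRECTORY option. In the following example the dump file is written to dump2 rather than dump1:

expdp scott/tiger DIRECTORY=dump1 DUMPFILE=dump2:a.dmp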

(5). ESTIMATE

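Specifies the method used to estimate the disk space the exported tables will occupy. The default is BLOCKS.

ESTIMATE={BLOCKS | STATISTICS}

With BLOCKS, Oracle estimates the space as the number of data blocks used by the target objects multiplied by the block size. With STATISTICS, the estimate is based on the most recent statistics:

expdp scott/tiger TABLES=emp ESTIMATE=STATISTICS DIRECTORY=dump DUMPFILE=a.dump

(6). ESTIMATE_ONLY

Specifies whether to only estimate the disk space the export job would use. The default is N.

ESTIMATE_ONLY={Y | N}

With Y, the job only estimates the space the objects occupy and does not perform the export. With N, it estimates the space and also performs the export.

expdp scott/tiger ESTIMATE_ONLY=y NOLOGFILE=y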

(7). EXCLUDE

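Specifies the object types or specific objects to exclude from the operation.

EXCLUDE=object_type[:name_clause] [, ...]

object_type is the object type to exclude and name_clause selects specific objects of that type. EXCLUDE and INCLUDE cannot be used together.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp EXCLUDE=VIEW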
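A name_clause contains quotation marks that the operating system shell tends to strip or reinterpret, so a parameter file is often the easier route. The following is a minimal sketch: the file name exp_exclude.par and the TMP% table-name pattern are assumptions made for illustration, and the script prints the expdp command instead of running it.

```shell
#!/bin/sh
# Write a parameter file so the quoted name_clause is not touched by the shell.
# exp_exclude.par and the TMP% pattern are hypothetical choices for this sketch.
cat > exp_exclude.par <<'EOF'
DIRECTORY=dump
DUMPFILE=a.dmp
SCHEMAS=scott
EXCLUDE=TABLE:"LIKE 'TMP%'"
EXCLUDE=VIEW
EOF

# Print the command instead of running it, so the sketch works without a database.
echo "expdp scott/tiger PARFILE=exp_exclude.par"
```

Each EXCLUDE line adds one more filter, here every table whose name starts with TMP plus all views.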

(8). FILESIZE

Specifies the maximum size of each dump file. The default is 0, which means no size limit.

(9). FLASHBACK_SCN

Exports table data as of a specific SCN. FLASHBACK_SCN=scn_value

scn_value is the SCN. FLASHBACK_SCN and FLASHBACK_TIME cannot be used together:

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp FLASHBACK_SCN=358523

(10). FLASHBACK_TIME

Exports table data as of a specific point in time.

FLASHBACK_TIME="TO_TIMESTAMP(time_value)"

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp FLASHBACK_TIME="TO_TIMESTAMP('25-08-2004 14:35:00','DD-MM-YYYY HH24:MI:SS')"
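On a real command line the nested quotation marks in FLASHBACK_TIME usually need escaping, which depends on the shell. One way around that, sketched here under assumptions, is to generate a parameter file that carries the current time. The file name exp_flashback.par is hypothetical, and the timestamp comes from the clock of the machine running the script, which may differ from the database server's clock.

```shell
#!/bin/sh
# Capture the current time in the same DD-MM-YYYY HH24:MI:SS layout used above.
ts=$(date '+%d-%m-%Y %H:%M:%S')

# exp_flashback.par is a hypothetical file name. $ts is expanded by the shell,
# while both kinds of quotation marks are written to the file unchanged.
cat > exp_flashback.par <<EOF
DIRECTORY=dump
DUMPFILE=a.dmp
FLASHBACK_TIME="TO_TIMESTAMP('$ts','DD-MM-YYYY HH24:MI:SS')"
EOF

# Show the generated file and the command that would use it.
cat exp_flashback.par
echo "expdp scott/tiger PARFILE=exp_flashback.par"
```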

(11). FULL

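Specifies a full-database-mode export. The default is N. FULL={Y | N}. With Y, the whole database is exported.

(12). HELP

Specifies whether to display help for the EXPDP command-line options. The default is N. With Y, the help text for the export options is displayed. expdp help=y

(13). INCLUDE

Specifies the object types and specific objects to include in the export. INCLUDE=object_type[:name_clause] [, ...]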

(14). JOB_NAME

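Specifies the name of the export job. The default is a system-generated name of the form SYS_EXPORT_<mode>_NN (for example SYS_EXPORT_SCHEMA_01). JOB_NAME=jobname_string

(15). LOGFILE

Specifies the name of the export log file. The default name is export.log.

LOGFILE=[directory_object:]file_name

directory_object is the name of a directory object and file_name is the log file name. If directory_object is omitted, the export uses the value of the DIRECTORY option.

expdp scott/tiger DIRECTORY=dump DUMPFILE=a.dmp logfile=a.log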

(16). NETWORK_LINK

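Specifies a database link name. This option must be set to export objects from a remote database into a dump file on the local instance.

(17). NOLOGFILE

Suppresses creation of the export log file. The default is N.

(18). PARALLEL

Specifies the number of parallel processes that perform the export. The default is 1.

(19). PARFILE

Specifies the name of the export parameter file. PARFILE=[directory_path]file_name

(20). QUERY

Specifies a WHERE condition that filters the exported data.

QUERY=[schema.][table_name:]query_clause

schema is the schema name, table_name is the table name, and query_clause is the restricting clause. QUERY cannot be used together with CONTENT=METADATA_ONLY, ESTIMATE_ONLY, or TRANSPORT_TABLESPACES.

expdp scott/tiger directory=dump dumpfile=a.dmp tables=emp query='WHERE deptno=20'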
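How the quotation marks around query_clause reach expdp depends on the shell. The sketch below first shows what a POSIX shell does with them, using a small helper (show_args, a hypothetical function written for this illustration), and then writes the same filter to a parameter file where no escaping is needed. The file name exp_query.par is likewise an assumption.

```shell
#!/bin/sh
# Print each argument exactly as a program would receive it, one per line.
show_args() {
    for arg in "$@"; do
        printf '[%s]\n' "$arg"
    done
}

# Unescaped single quotes are consumed by the shell before the program sees them.
show_args query='WHERE deptno=20'

# Wrapping the whole parameter in double quotes keeps the single quotes intact.
show_args "query='WHERE deptno=20'"

# In a parameter file the clause is written as is; exp_query.par is a hypothetical name.
cat > exp_query.par <<'EOF'
DIRECTORY=dump
DUMPFILE=a.dmp
TABLES=emp
QUERY=emp:"WHERE deptno = 20"
EOF

echo "expdp scott/tiger PARFILE=exp_query.par"
```

The first call prints the parameter without its quotation marks and the second prints it with them, which is the difference expdp would see on a Unix-like system.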

(21). SCHEMAS

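Specifies a schema-mode export. The default is the current user's schema.

(22). STATUS

Specifies how often, in seconds, detailed job status is displayed. The default is 0.

(23). TABLES

Specifies a table-mode export.

TABLES=[schema_name.]table_name[:partition_name][, ...]

schema_name is the schema name, table_name is the name of the table to export, and partition_name is the name of the partition to export.

(24). TABLESPACES

Specifies the list of tablespaces to export.

(25). TRANSPORT_FULL_CHECK

Specifies how dependencies between the tablespaces being transported and those not being transported are checked. The default is N. With Y, the export job checks dependencies in both directions: if only one of a table's tablespace and its index's tablespace is being transported, an error is reported. With N, the job checks one-way dependencies only: transporting the index's tablespace without the table's tablespace is reported as an error, but transporting the table's tablespace without the index's tablespace is not.

(26). TRANSPORT_TABLESPACES

Specifies a transportable-tablespace-mode export.

(27). VERSION

Specifies the database version of the objects to be exported. The default is COMPATIBLE.

VERSION={COMPATIBLE | LATEST | version_string}

With COMPATIBLE, object metadata is generated according to the COMPATIBLE initialization parameter. With LATEST, it is generated according to the actual database version. version_string specifies a database version string.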

3.2 EXPDP Usage Examples

With EXPDP, dump files can only be written to the OS directory that a DIRECTORY object points to; the OS directory cannot be specified directly. You must therefore create a DIRECTORY object first and grant the database user privileges on it.

CREATE DIRECTORY dump_dir AS 'D:/DUMP';

GRANT READ, WRITE ON DIRECTORY dump_dir TO scott;

(1) Export tables

expdp scott/tiger DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=dept,emp logfile=exp.log

(2) Export schemas (a schema corresponds to a user)

expdp scott/tiger DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=system,scott logfile=exp.log

(3) Export tablespaces

expdp system/manager DIRECTORY=dump_dir DUMPFILE=tablespace.dmp TABLESPACES=user01,user02 logfile=exp.log

(4) Export the database

expdp system/manager DIRECTORY=dump_dir DUMPFILE=full.dmp FULL=Y logfile=exp.log
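For a larger full export, the parameters described in 3.1 can be combined in a parameter file. The sketch below is one possible arrangement, not a recommended configuration: the file name exp_full.par, the date-stamped naming scheme, PARALLEL=2, and FILESIZE=2G are all assumptions chosen for illustration. The %U in DUMPFILE lets Data Pump number the files it creates, which a parallel export needs in order to write to more than one file.

```shell
#!/bin/sh
# Date stamp for the file names; the full_<date>_%U.dmp scheme is a choice made for this sketch.
stamp=$(date '+%Y%m%d')

# exp_full.par is a hypothetical file name. ${stamp} is expanded by the shell,
# while %U is left for Data Pump to replace with a two-digit file number.
cat > exp_full.par <<EOF
DIRECTORY=dump_dir
DUMPFILE=full_${stamp}_%U.dmp
LOGFILE=full_${stamp}.log
FULL=Y
PARALLEL=2
FILESIZE=2G
EOF

# Show the generated file and the command that would use it.
cat exp_full.par
echo "expdp system/manager PARFILE=exp_full.par"
```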

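3.3 IMPDP Command Parameters

Many IMPDP command-line options are the same as for EXPDP. The ones that differ are:

(1) REMAP_DATAFILE

Changes a source data file name to the target data file name. It may be needed when moving tablespaces between platforms.

REMAP_DATAFILE=source_datafile:target_datafile

(2) REMAP_SCHEMA

Loads all objects from the source schema into the target schema.

REMAP_SCHEMA=source_schema:target_schema

(3) REMAP_TABLESPACE

Imports all objects from the source tablespace into the target tablespace.

REMAP_TABLESPACE=source_tablespace:target_tablespace

(4) REUSE_DATAFILES

Specifies whether existing data files are overwritten when tablespaces are created. The default is N.

REUSE_DATAFILES={Y | N}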

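(5) SKIP_UNUSABLE_INDEXES

Specifies whether the import skips unusable indexes. The default is N.

(6) SQLFILE

Writes the DDL that the import would execute to a SQL script.

SQLFILE=[directory_object:]file_name

impdp scott/tiger DIRECTORY=dump DUMPFILE=tab.dmp SQLFILE=a.sql

(7) STREAMS_CONFIGURATION

Specifies whether Streams metadata is imported. The default is Y.

(8) TABLE_EXISTS_ACTION

Specifies what the import job does when a table already exists. The default is SKIP.

TABLE_EXISTS_ACTION={SKIP | APPEND | TRUNCATE | REPLACE}

With SKIP, the import job skips the existing table and moves on to the next object. With APPEND, it appends the data. With TRUNCATE, it truncates the table and then loads the new data. With REPLACE, it drops the existing table, re-creates it, and loads the data. Note that TRUNCATE cannot be used with cluster tables or with the NETWORK_LINK option.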
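As an illustration of TABLE_EXISTS_ACTION in use, the sketch below checks the chosen action against the four values listed above and then writes an import parameter file. The file name imp_tables.par and the choice of APPEND are assumptions; the remaining values are taken from the import examples in this article. The script prints the impdp command instead of running it.

```shell
#!/bin/sh
# Action to apply when a table already exists; APPEND is an arbitrary choice for this sketch.
action=APPEND

# Reject anything that is not one of the four documented values.
case "$action" in
    SKIP|APPEND|TRUNCATE|REPLACE) ;;
    *) echo "invalid TABLE_EXISTS_ACTION: $action" >&2; exit 1 ;;
esac

# imp_tables.par is a hypothetical file name.
cat > imp_tables.par <<EOF
DIRECTORY=dump_dir
DUMPFILE=tab.dmp
TABLES=scott.dept,scott.emp
REMAP_SCHEMA=scott:system
TABLE_EXISTS_ACTION=$action
EOF

# Print the command rather than running it, so no database is needed.
echo "impdp system/manager PARFILE=imp_tables.par"
```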

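(9) TRANSFORM

Specifies whether to alter the DDL that creates the objects.

TRANSFORM=transform_name:value[:object_type]

transform_name is the name of the transform: SEGMENT_ATTRIBUTES covers segment attributes (physical attributes, storage attributes, tablespace, logging), and STORAGE covers the segment's storage attributes. value specifies whether the segment attributes or storage attributes are included, and object_type specifies the object type.

impdp scott/tiger directory=dump dumpfile=tab.dmp transform=segment_attributes:n:table

(10) TRANSPORT_DATAFILES

Specifies the data files to be imported into the target database when transporting tablespaces.

TRANSPORT_DATAFILES=datafile_name

datafile_name is a data file that has been copied to the target database.

impdp system/manager DIRECTORY=dump DUMPFILE=tts.dmp TRANSPORT_DATAFILES='/user01/data/tbs1.f'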

3.4 IMPDP Examples

(1) Import tables

impdp scott/tiger DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=dept,emp logfile=exp.log

-- Imports the DEPT and EMP tables into the SCOTT schema.

impdp system/manager DIRECTORY=dump_dir DUMPFILE=tab.dmp TABLES=scott.dept,scott.emp REMAP_SCHEMA=SCOTT:SYSTEM logfile=exp.log

-- Imports the DEPT and EMP tables into the SYSTEM schema.

(2) Import a schema

impdp scott/tiger DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=scott logfile=exp.log

impdp system/manager DIRECTORY=dump_dir DUMPFILE=schema.dmp SCHEMAS=scott REMAP_SCHEMA=scott:system logfile=exp.log

(3) Import a tablespace

impdp system/manager DIRECTORY=dump_dir DUMPFILE=tablespace.dmp TABLESPACES=user01 logfile=exp.log

(4) Import the database

impdp system/manager DIRECTORY=dump_dir DUMPFILE=full.dmp FULL=y logfile=exp.log