Integrating Hadoop Hive with HBase

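I. Introduction

Hive is a data warehouse tool built on Hadoop. It maps structured data files to database tables and offers SQL-like querying (HiveQL), translating each query into MapReduce jobs. Its main advantage is a low learning curve: simple MapReduce statistics can be produced quickly with SQL-like statements, without writing a dedicated MapReduce application, which makes it well suited to statistical analysis in a data warehouse.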

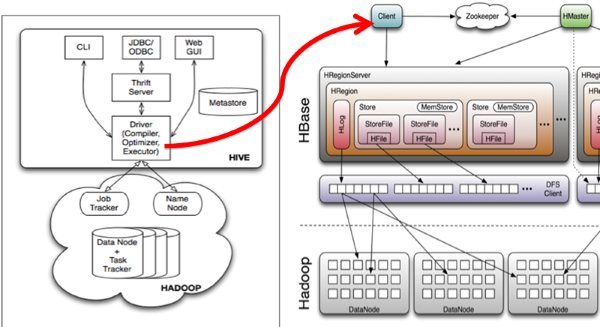

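Hive and HBase are integrated by having the two systems communicate through their public APIs. The communication relies mainly on the utility classes in hive-hbase-handler.jar, roughly as shown in the figure.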

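II. Installation Steps

1. Hadoop and HBase must already be installed and working.

Hadoop cluster configuration: http://blog.csdn.net/hguisu/article/details/723739

HBase installation and configuration: http://blog.csdn.net/hguisu/article/details/7244413

2. Copy hbase-0.90.4.jar and zookeeper-3.3.2.jar into hive/lib.

Note: if hive/lib already contains other versions of these two files (for example, a different zookeeper jar), delete them and use the versions shipped with HBase.

3. Edit hive-site.xml under hive/conf and append the following at the bottom: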

    <!--
    <property>
      <name>hive.exec.scratchdir</name>
      <value>/usr/local/hive/tmp</value>
    </property>
    -->
    <property>
      <name>hive.querylog.location</name>
      <value>/usr/local/hive/logs</value>
    </property>
    <property>
      <name>hive.aux.jars.path</name>
      <value>file:///usr/local/hive/lib/hive-hbase-handler-0.8.0.jar,file:///usr/local/hive/lib/hbase-0.90.4.jar,file:///usr/local/hive/lib/zookeeper-3.3.2.jar</value>
    </property>

Note: if hive-site.xml does not exist, create it yourself, or rename hive-default.xml.template to hive-site.xml and use that.
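Before starting Hive, it can help to confirm that every jar named in hive.aux.jars.path is actually on disk. The following is a minimal sketch, not part of Hive: the function name `check_aux_jars` is hypothetical, and the paths in the example call are simply the ones assumed in the configuration above.

```shell
#!/bin/sh
# check_aux_jars: hypothetical helper, not part of Hive.
# Takes the comma-separated value of hive.aux.jars.path and prints
# every entry that does not exist as a regular file.
# Returns 0 if all jars exist, 1 otherwise.
check_aux_jars() {
    missing=0
    old_ifs=$IFS
    IFS=','
    for uri in $1; do
        path=${uri#file://}      # strip the file:// scheme if present
        if [ ! -f "$path" ]; then
            echo "missing: $path"
            missing=1
        fi
    done
    IFS=$old_ifs
    return $missing
}

# Example call with the jar list from hive-site.xml (left commented out
# so that sourcing this snippet has no side effects):
# check_aux_jars "file:///usr/local/hive/lib/hive-hbase-handler-0.8.0.jar,file:///usr/local/hive/lib/hbase-0.90.4.jar,file:///usr/local/hive/lib/zookeeper-3.3.2.jar"
```

An empty output with exit status 0 means all listed jars were found.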

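4. Copy hbase-0.90.4.jar into hadoop/lib on every Hadoop node (including the master).

5. Copy hbase-site.xml from hbase/conf into hadoop/conf on every Hadoop node (including the master).

Note: if steps 4 and 5 are skipped, Hive is likely to fail at runtime with an error like the following: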

    org.apache.hadoop.hbase.ZooKeeperConnectionException: HBase is able to connect to ZooKeeper but the connection closes immediately.
    This could be a sign that the server has too many connections (30 is the default). Consider inspecting your ZK server logs for that error and
    then make sure you are reusing HBaseConfiguration as often as you can. See HTable's javadoc for more information. at org.apache.hadoop.hbase.zookeeper.ZooKeeperWatcher.
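The two copy operations (the HBase jar and hbase-site.xml to every Hadoop node) can be scripted. The sketch below only prints the scp commands so they can be reviewed first. It rests on several assumptions that are not stated in this article: the install prefixes /usr/local/hbase and /usr/local/hadoop, the jar sitting at the top of the HBase directory, and passwordless SSH to each node. The function name `print_copy_commands` is hypothetical.

```shell
#!/bin/sh
# Assumed install locations; adjust to your environment.
HBASE_HOME=${HBASE_HOME:-/usr/local/hbase}
HADOOP_HOME=${HADOOP_HOME:-/usr/local/hadoop}

# print_copy_commands: hypothetical helper that prints one scp command
# per file and per node instead of running it.
print_copy_commands() {
    for node in "$@"; do
        echo "scp $HBASE_HOME/hbase-0.90.4.jar $node:$HADOOP_HOME/lib/"
        echo "scp $HBASE_HOME/conf/hbase-site.xml $node:$HADOOP_HOME/conf/"
    done
}

# Review the plan first:
#   print_copy_commands master node1 node2 node3
# Then, if it looks right, execute it:
#   print_copy_commands master node1 node2 node3 | sh
```

Printing rather than executing keeps the sketch safe to run on a machine that is not part of the cluster.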

III. Starting Hive

1. Single-node startup:

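    bin/hive -hiveconf hbase.master=master:60000

(60000 is the default HBase master port in this HBase version; substitute the port your master actually listens on.)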

2. Cluster startup:

    bin/hive -hiveconf hbase.zookeeper.quorum=node1,node2,node3

If hive.aux.jars.path is not configured in hive-site.xml, start Hive as follows instead. The jar list must be a single comma-separated argument with no spaces:

    bin/hive --auxpath /usr/local/hive/lib/hive-hbase-handler-0.8.0.jar,/usr/local/hive/lib/hbase-0.90.4.jar,/usr/local/hive/lib/zookeeper-3.3.2.jar -hiveconf hbase.zookeeper.quorum=node1,node2,node3
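Because the --auxpath value has to reach Hive as one shell word, a stray space after a comma splits it into separate arguments. One way to avoid that is to build the value programmatically. This is a minimal sketch: `join_jars` is a hypothetical helper, and the jar paths and quorum hosts are the ones used in this article.

```shell
#!/bin/sh
# join_jars: hypothetical helper that joins its arguments with commas
# (no spaces), giving a value usable as a single --auxpath argument.
join_jars() {
    result=""
    for jar in "$@"; do
        if [ -z "$result" ]; then
            result=$jar
        else
            result="$result,$jar"
        fi
    done
    echo "$result"
}

HIVE_LIB=/usr/local/hive/lib
AUXPATH=$(join_jars \
    "$HIVE_LIB/hive-hbase-handler-0.8.0.jar" \
    "$HIVE_LIB/hbase-0.90.4.jar" \
    "$HIVE_LIB/zookeeper-3.3.2.jar")

# Print the command rather than running it, so it can be inspected first.
echo "bin/hive --auxpath $AUXPATH -hiveconf hbase.zookeeper.quorum=node1,node2,node3"
```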

IV. Testing

1. Create a table that HBase can recognize:

    CREATE TABLE hbase_table_1(key int, value string)
    STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'
    WITH SERDEPROPERTIES ("hbase.columns.mapping" = ":key,cf1:val")
    TBLPROPERTIES ("hbase.table.name" = "xyz");

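hbase.table.name sets the name of the table in HBase.

hbase.columns.mapping maps each Hive column, in order, to either the HBase row key (:key) or an HBase column written as family:qualifier (here cf1:val).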
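The entries in hbase.columns.mapping are matched to the Hive columns by position, so the first Hive column pairs with the first entry and so on. The sketch below just prints that pairing for a given column list and mapping string. It is an illustration only: `show_mapping` is a hypothetical helper and does not talk to Hive or HBase.

```shell
#!/bin/sh
# show_mapping: hypothetical helper that pairs Hive column names with
# hbase.columns.mapping entries by position and prints one pair per line.
show_mapping() {
    hive_cols=$1
    hbase_map=$2
    old_ifs=$IFS
    IFS=','
    set -- $hbase_map          # positional parameters now hold the mapping entries
    for col in $hive_cols; do
        if [ $# -eq 0 ]; then
            echo "$col -> (no mapping entry)"
        else
            echo "$col -> $1"
            shift
        fi
    done
    IFS=$old_ifs
}

# Columns and mapping taken from the hbase_table_1 definition.
show_mapping "key,value" ":key,cf1:val"
# prints:
#   key -> :key
#   value -> cf1:val
```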

2. Load data using SQL

1) Create a Hive table:

    CREATE TABLE pokes (foo INT, bar STRING);

2) Bulk-load data into it:

    hive> LOAD DATA LOCAL INPATH './examples/files/kv1.txt' OVERWRITE INTO TABLE pokes;

3) Use SQL to import into hbase_table_1:

    hive> INSERT OVERWRITE TABLE hbase_table_1 SELECT * FROM pokes WHERE foo=86;

3. View the data

    hive> select * from hbase_table_1;

You can now log in to HBase and inspect the data:

    bin/hbase shell
    hbase(main):001:0> describe 'xyz'
    hbase(main):002:0> scan 'xyz'
    hbase(main):003:0> put 'xyz','100','cf1:val','www.360buy.com'

The row just inserted through HBase is now visible from Hive as well.

4. Accessing an existing HBase table from Hive

Use CREATE EXTERNAL TABLE:

    CREATE EXTERNAL TABLE hbase_table_2(key int, value string)
    STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'
    WITH SERDEPROPERTIES ("hbase.columns.mapping" = ":key,cf1:val")
    TBLPROPERTIES("hbase.table.name" = "some_existing_table");