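You're all asleep while I'm still writing a blog post, haha! I don't know why, but it's late at night and I'm not the least bit sleepy, so I'll ride this burst of energy and finish the post on custom result collection. I've gone two days without an update and can't keep you waiting too long, and I also want to keep up the habit of blogging.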

What is a Collector? Let's look at the source code, which is also the most authoritative explanation.

    /**
     * <p>Expert: Collectors are primarily meant to be used to
     * gather raw results from a search, and implement sorting
     * or custom result filtering, collation, etc. </p>
     *
     * <p>Lucene's core collectors are derived from {@link Collector}
     * and {@link SimpleCollector}. Likely your application can
     * use one of these classes, or subclass {@link TopDocsCollector},
     * instead of implementing Collector directly:
     *
     * <ul>
     *
     *   <li>{@link TopDocsCollector} is an abstract base class
     *   that assumes you will retrieve the top N docs,
     *   according to some criteria, after collection is
     *   done.  </li>
     *
     *   <li>{@link TopScoreDocCollector} is a concrete subclass
     *   {@link TopDocsCollector} and sorts according to score +
     *   docID.  This is used internally by the {@link
     *   IndexSearcher} search methods that do not take an
     *   explicit {@link Sort}. It is likely the most frequently
     *   used collector.</li>
     *
     *   <li>{@link TopFieldCollector} subclasses {@link
     *   TopDocsCollector} and sorts according to a specified
     *   {@link Sort} object (sort by field).  This is used
     *   internally by the {@link IndexSearcher} search methods
     *   that take an explicit {@link Sort}.
     *
     *   <li>{@link TimeLimitingCollector}, which wraps any other
     *   Collector and aborts the search if it's taken too much
     *   time.</li>
     *
     *   <li>{@link PositiveScoresOnlyCollector} wraps any other
     *   Collector and prevents collection of hits whose score
     *   is <= 0.0</li>
     *
     * </ul>
     *
     * @lucene.experimental
     */
    public interface Collector {

      /**
       * Create a new {@link LeafCollector collector} to collect the given context.
       *
       * @param context
       *          next atomic reader context
       */
      LeafCollector getLeafCollector(LeafReaderContext context) throws IOException;

    }

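The Collector family of interfaces gathers search results and implements sorting, custom result filtering, and collation. Collector and LeafCollector are the core of result collection in Lucene.

TopDocsCollector: an abstract base class for collecting the top N results.

TopScoreDocCollector: a concrete subclass of TopDocsCollector that sorts its results by score and then by docID. The IndexSearcher search methods that do not take an explicit Sort use it internally, and it is probably the most frequently used collector.

TopFieldCollector: also a subclass of TopDocsCollector. Where TopScoreDocCollector sorts by score and docID, TopFieldCollector sorts by the fields named in a Sort object. It is used internally by the IndexSearcher.search methods that take an explicit Sort.

TimeLimitingCollector: a wrapper around any other Collector that aborts the search once it has taken too much time.

PositiveScoresOnlyCollector: the name says it all. It wraps another Collector and only passes on hits whose score is greater than zero. Like TimeLimitingCollector, it is a wrapper around another Collector, and both apply the decorator pattern.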
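
The decorator idea behind these wrappers can be shown without any Lucene dependency. The sketch below is only an illustration: `HitCollector`, `ListCollector`, and `PositiveOnlyCollector` are hypothetical names invented for this example, not Lucene types, and the real PositiveScoresOnlyCollector obtains the score from a Scorer rather than from a method parameter.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical stand-in for a per-hit collector; NOT a Lucene type. */
interface HitCollector {
    void collect(int doc, float score);
}

/** Stores the doc number of every hit it receives. */
class ListCollector implements HitCollector {
    final List<Integer> docs = new ArrayList<>();

    @Override
    public void collect(int doc, float score) {
        docs.add(doc);
    }
}

/** Decorator: forwards a hit to the wrapped collector only if its score is positive. */
class PositiveOnlyCollector implements HitCollector {
    private final HitCollector delegate;

    PositiveOnlyCollector(HitCollector delegate) {
        this.delegate = delegate;
    }

    @Override
    public void collect(int doc, float score) {
        if (score > 0.0f) {
            delegate.collect(doc, score);
        }
    }
}

class DecoratorDemo {
    public static void main(String[] args) {
        ListCollector inner = new ListCollector();
        HitCollector wrapped = new PositiveOnlyCollector(inner);
        wrapped.collect(0, 1.5f);
        wrapped.collect(1, 0.0f);   // dropped: score is not positive
        wrapped.collect(2, -0.3f);  // dropped: score is negative
        wrapped.collect(3, 0.2f);
        System.out.println(inner.docs); // prints [0, 3]
    }
}
```

The wrapped collector never learns that filtering happened, which is why such wrappers can be stacked around any other collector.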

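The Collector interface declares a single method:

    LeafCollector getLeafCollector(LeafReaderContext context) throws IOException;

Given the reader context of a segment (a LeafReaderContext), it returns a LeafCollector. A LeafCollector is the collector for one segment, and this method is called once each time the search moves on to a new segment.

LeafCollector is the interface that actually collects results; Collector only produces the LeafCollector for each segment. In the Lucene 4.x days Collector and LeafCollector were not separate. In Lucene 5.0 the interfaces are finer-grained, which makes custom extensions more convenient.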

Next, the source comments for LeafCollector:

    /**
     * <p>Collector decouples the score from the collected doc:
     * the score computation is skipped entirely if it's not
     * needed.  Collectors that do need the score should
     * implement the {@link #setScorer} method, to hold onto the
     * passed {@link Scorer} instance, and call {@link
     * Scorer#score()} within the collect method to compute the
     * current hit's score.  If your collector may request the
     * score for a single hit multiple times, you should use
     * {@link ScoreCachingWrappingScorer}. </p>
     *
     * <p><b>NOTE:</b> The doc that is passed to the collect
     * method is relative to the current reader. If your
     * collector needs to resolve this to the docID space of the
     * Multi*Reader, you must re-base it by recording the
     * docBase from the most recent setNextReader call.  Here's
     * a simple example showing how to collect docIDs into a
     * BitSet:</p>
     *
     * <pre class="prettyprint">
     * IndexSearcher searcher = new IndexSearcher(indexReader);
     * final BitSet bits = new BitSet(indexReader.maxDoc());
     * searcher.search(query, new Collector() {
     *
     *   public LeafCollector getLeafCollector(LeafReaderContext context)
     *       throws IOException {
     *     final int docBase = context.docBase;
     *     return new LeafCollector() {
     *
     *       <em>// ignore scorer</em>
     *       public void setScorer(Scorer scorer) throws IOException {
     *       }
     *
     *       public void collect(int doc) throws IOException {
     *         bits.set(docBase + doc);
     *       }
     *
     *     };
     *   }
     *
     * });
     * </pre>
     *
     * <p>Not all collectors will need to rebase the docID.  For
     * example, a collector that simply counts the total number
     * of hits would skip it.</p>
     *
     * @lucene.experimental
     */
    public interface LeafCollector {

      /**
       * Called before successive calls to {@link #collect(int)}. Implementations
       * that need the score of the current document (passed-in to
       * {@link #collect(int)}), should save the passed-in Scorer and call
       * scorer.score() when needed.
       */
      void setScorer(Scorer scorer) throws IOException;

      /**
       * Called once for every document matching a query, with the unbased document
       * number.
       * <p>Note: The collection of the current segment can be terminated by throwing
       * a {@link CollectionTerminatedException}. In this case, the last docs of the
       * current {@link org.apache.lucene.index.LeafReaderContext} will be skipped and {@link IndexSearcher}
       * will swallow the exception and continue collection with the next leaf.
       * <p>
       * Note: This is called in an inner search loop. For good search performance,
       * implementations of this method should not call {@link IndexSearcher#doc(int)} or
       * {@link org.apache.lucene.index.IndexReader#document(int)} on every hit.
       * Doing so can slow searches by an order of magnitude or more.
       */
      void collect(int doc) throws IOException;

    }

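LeafCollector decouples scoring from document collection: if you don't need scores, score computation is skipped entirely. If you do need scores, implement setScorer to hold on to the Scorer that is passed in, then call Scorer.score() inside collect to compute the score of the current hit. If your LeafCollector may ask for the score of the same hit more than once inside collect, wrap the Scorer in a ScoreCachingWrappingScorer, which caches the score so that it is not computed repeatedly.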
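
To make the caching idea concrete, here is a minimal sketch in plain Java. It assumes a simplified, hypothetical `SimpleScorer` interface rather than Lucene's Scorer class, and it illustrates the technique (remember the score of the current doc and recompute only when the doc changes), not the library's actual implementation.

```java
/** Hypothetical stand-in for a scorer; NOT Lucene's Scorer class. */
interface SimpleScorer {
    int docID();
    float score();
}

/** A scorer that counts how often score() is really computed. */
class CountingScorer implements SimpleScorer {
    int doc;
    int scoreCalls;

    @Override
    public int docID() {
        return doc;
    }

    @Override
    public float score() {
        scoreCalls++;
        return 1.0f + doc; // arbitrary formula, for illustration only
    }
}

/** Wrapper that caches the score of the current doc. */
class CachingScorer implements SimpleScorer {
    private final SimpleScorer in;
    private int cachedDoc = -1;
    private float cachedScore;

    CachingScorer(SimpleScorer in) {
        this.in = in;
    }

    @Override
    public int docID() {
        return in.docID();
    }

    @Override
    public float score() {
        int doc = in.docID();
        if (doc != cachedDoc) { // new doc: compute once and remember
            cachedScore = in.score();
            cachedDoc = doc;
        }
        return cachedScore;
    }
}

class CachingDemo {
    public static void main(String[] args) {
        CountingScorer raw = new CountingScorer();
        SimpleScorer cached = new CachingScorer(raw);
        raw.doc = 4;
        cached.score();
        cached.score();
        cached.score();
        System.out.println(raw.scoreCalls); // prints 1
        raw.doc = 7;
        cached.score();
        System.out.println(raw.scoreCalls); // prints 2
    }
}
```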

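The doc parameter of collect is relative to the current segment reader. To resolve it to an index-wide docID you must re-base it with that segment's docBase. The Javadoc still mentions setNextReader, the Lucene 4.x method; in Lucene 5.0 the value comes from context.docBase inside getLeafCollector, as the Javadoc's own example shows.

Not every Collector needs to re-base docIDs. A Collector that only counts the total number of hits, for example, can skip this step.

To sum up: the Collector interface produces LeafCollectors, and the LeafCollector does the actual result collection and, when needed, asks the Scorer for each hit's score. In other words, the one doing the real work underneath is the LeafCollector.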

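    void collect(int doc) throws IOException;

collect is called once for every matching document. The doc parameter is the document's number within the current segment, not an index-wide ID. To get the index-wide document ID, add the docBase of the current segment's context, i.e. leafReaderContext.docBase + doc.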
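
The arithmetic behind docBase is easy to check by hand. The sketch below assumes, for illustration, an index with three segments holding 3, 5, and 2 documents; `DocBaseDemo` and its methods are hypothetical helpers written for this post, not part of Lucene. Each segment's docBase is the total maxDoc of the segments before it.

```java
import java.util.Arrays;

class DocBaseDemo {
    /** Returns the docBase of each segment: the sum of maxDoc over all earlier segments. */
    static int[] docBases(int[] segmentMaxDocs) {
        int[] bases = new int[segmentMaxDocs.length];
        int next = 0;
        for (int i = 0; i < segmentMaxDocs.length; i++) {
            bases[i] = next;
            next += segmentMaxDocs[i];
        }
        return bases;
    }

    /** Re-bases a segment-relative doc number into the index-wide docID space. */
    static int toIndexWideDoc(int docBase, int segmentDoc) {
        return docBase + segmentDoc;
    }

    public static void main(String[] args) {
        int[] bases = docBases(new int[] {3, 5, 2});
        System.out.println(Arrays.toString(bases));      // prints [0, 3, 8]
        // Doc 1 of the third segment is document 9 of the whole index.
        System.out.println(toIndexWideDoc(bases[2], 1)); // prints 9
    }
}
```

A collector that forgets this step reports doc 1 for a hit in every segment, and looking those IDs up later through the top-level reader returns the wrong documents.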

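If you need a custom scorer, extend Scorer with your own implementation. So when is setScorer called? Following the search methods of IndexSearcher through the source answers that; see the figure: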

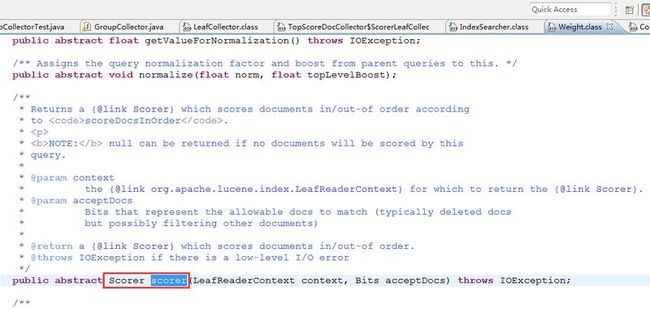

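Internally, the Query is first wrapped together with the Filter (if one was given) into a single Query, and then createNormalizedWeight is called to produce a Weight. Look at the Weight class and you will find that it declares a scorer method that returns a Scorer.

That settles it: our LeafCollector doesn't need to care how the Scorer is created and passed in. If we want custom scoring, we only need to supply our own Scorer. IndexSearcher.search first creates a Weight and uses it to produce a Scorer; since we pass our Collector to search, the Scorer is handed to the LeafCollector through setScorer.

Note that if you implement your own Scorer, you must also implement your own Weight and have it produce that Scorer. To keep things simple, this post does not define a custom Scorer.

Below is a simple custom Collector example. I hope it serves as a starting point and clears up some questions; if you find any bugs or oversights in the code, please let me know.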

    package com.yida.framework.lucene5.collector;

    import java.io.IOException;
    import java.util.ArrayList;
    import java.util.List;

    import org.apache.lucene.index.LeafReaderContext;
    import org.apache.lucene.index.SortedDocValues;
    import org.apache.lucene.search.Collector;
    import org.apache.lucene.search.LeafCollector;
    import org.apache.lucene.search.ScoreDoc;
    import org.apache.lucene.search.Scorer;

    /**
     * A custom Collector (result collector).
     * @author Lanxiaowei
     */
    public class GroupCollector implements Collector, LeafCollector {
        /** Scorer for the segment currently being collected */
        private Scorer scorer;
        /** docID base of the current segment, added to segment-relative doc numbers */
        private int docBase;
        private String fieldName;
        private SortedDocValues sortedDocValues;
        private List<ScoreDoc> scoreDocs = new ArrayList<ScoreDoc>();

        public GroupCollector(String fieldName) {
            super();
            this.fieldName = fieldName;
        }

        public LeafCollector getLeafCollector(LeafReaderContext context)
                throws IOException {
            // Record the docBase of the new segment. Without this, docBase stays 0
            // and hits from later segments get segment-relative IDs.
            this.docBase = context.docBase;
            this.sortedDocValues = context.reader().getSortedDocValues(fieldName);
            return this;
        }

        public void setScorer(Scorer scorer) throws IOException {
            this.scorer = scorer;
        }

        public void collect(int doc) throws IOException {
            // ScoreDoc: index-wide docID plus score
            this.scoreDocs.add(new ScoreDoc(this.docBase + doc, this.scorer.score()));
        }

        public int getDocBase() {
            return docBase;
        }

        public void setDocBase(int docBase) {
            this.docBase = docBase;
        }

        public String getFieldName() {
            return fieldName;
        }

        public void setFieldName(String fieldName) {
            this.fieldName = fieldName;
        }

        public SortedDocValues getSortedDocValues() {
            return sortedDocValues;
        }

        public void setSortedDocValues(SortedDocValues sortedDocValues) {
            this.sortedDocValues = sortedDocValues;
        }

        public List<ScoreDoc> getScoreDocs() {
            return scoreDocs;
        }

        public void setScoreDocs(List<ScoreDoc> scoreDocs) {
            this.scoreDocs = scoreDocs;
        }

        public Scorer getScorer() {
            return scorer;
        }
    }

The test class for the collector:

    package com.yida.framework.lucene5.collector;

    import java.io.IOException;
    import java.nio.file.Paths;
    import java.util.List;

    import org.apache.lucene.document.Document;
    import org.apache.lucene.index.DirectoryReader;
    import org.apache.lucene.index.IndexReader;
    import org.apache.lucene.index.Term;
    import org.apache.lucene.search.IndexSearcher;
    import org.apache.lucene.search.ScoreDoc;
    import org.apache.lucene.search.TermQuery;
    import org.apache.lucene.store.Directory;
    import org.apache.lucene.store.FSDirectory;

    /**
     * Test for the custom Collector.
     * @author Lanxiaowei
     */
    public class GroupCollectorTest {
        public static void main(String[] args) throws IOException {
            String indexDir = "C:/lucenedir";
            Directory directory = FSDirectory.open(Paths.get(indexDir));
            IndexReader reader = DirectoryReader.open(directory);
            IndexSearcher searcher = new IndexSearcher(reader);
            TermQuery termQuery = new TermQuery(new Term("title", "lucene"));
            GroupCollector collector = new GroupCollector("title2");
            // No Filter is used, hence the null second argument
            searcher.search(termQuery, null, collector);
            List<ScoreDoc> docs = collector.getScoreDocs();
            // Stored fields are loaded only after collection has finished,
            // never inside collect()
            for (ScoreDoc scoreDoc : docs) {
                int docID = scoreDoc.doc;
                Document document = searcher.doc(docID);
                String title = document.get("title");
                float score = scoreDoc.score;
                System.out.println(docID + ":" + title + "  " + score);
            }
            reader.close();
            directory.close();
        }
    }

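This is only a simple example. If you need tighter control over which documents are collected, put that logic in the collect method. If you need finer-grained control over how documents are scored, extend the abstract classes Scorer and Weight with your own implementations, and then call the following IndexSearcher method (it is protected, so it has to be called from a subclass of IndexSearcher):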

    protected TopFieldDocs search(Weight weight, FieldDoc after, int nDocs,
                                  Sort sort, boolean fillFields,
                                  boolean doDocScores, boolean doMaxScore)
        throws IOException

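As always, the demo source will be uploaded as an attachment below. To those who asked me not to build my demos with Maven: I am very sorry, I can't meet your requirements. If you don't know Maven, take the time to learn it. OK, it's past one in the morning, time to put down the pen and go to bed!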

My QQ: 7-3-6-0-3-1-3-0-5. You're welcome to join my Java tech group to discuss and learn together.