Using Jumbo Frames with Oracle RAC

First, let's look at what Jumbo Frames are.

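In the TCP/IP protocol suite, the unit of communication at the Ethernet data link layer is the frame, and a standard frame is at most 1,518 bytes. On traditional 10 Mb/s NICs the MTU (Maximum Transmission Unit) is 1,500 bytes, as shown in the example below. Of the 1,518-byte frame, 14 bytes are reserved for the frame header and 4 bytes for the CRC checksum. After subtracting another 40 bytes of TCP/IP headers from the 1,500-byte MTU, 1,460 bytes of payload remain. The later 100 Mb/s and 1000 Mb/s NICs kept the 1,500-byte MTU for compatibility. For gigabit NICs, however, this means more interrupts and more processing time. Gigabit NICs therefore use "Jumbo Frames" to extend the frame (MTU) to 9,000 bytes. Why 9,000 bytes and not more? The 32-bit CRC checksum loses its efficiency advantage above roughly 12,000 bytes. Meanwhile, 9,000 bytes is already enough for applications that work in 8 KB units, such as NFS.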
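The byte accounting in the paragraph above can be checked with a little arithmetic. The sketch below assumes IPv4 and TCP headers without options (20 + 20 bytes). It ignores the Ethernet preamble and inter-frame gap, so it only counts the 14-byte header and 4-byte CRC that the text mentions.

```python
# Header sizes in bytes, as described above (assumes IPv4 + TCP without options).
ETH_HEADER = 14      # destination MAC, source MAC, EtherType
ETH_FCS = 4          # 32-bit CRC frame check sequence
TCP_IP_HEADERS = 40  # 20-byte IPv4 header + 20-byte TCP header


def frame_size(mtu: int) -> int:
    """Size of an Ethernet frame carrying an MTU-sized packet (no preamble/gap)."""
    return mtu + ETH_HEADER + ETH_FCS


def tcp_payload(mtu: int) -> int:
    """Application bytes per frame once the TCP/IP headers are subtracted."""
    return mtu - TCP_IP_HEADERS


def efficiency(mtu: int) -> float:
    """Fraction of each frame that is application payload."""
    return tcp_payload(mtu) / frame_size(mtu)


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: frame {frame_size(mtu)} B, "
              f"payload {tcp_payload(mtu)} B, efficiency {efficiency(mtu):.2%}")
```

With these assumptions an MTU of 1500 gives a 1,518-byte frame with 1,460 bytes of payload. An MTU of 9000 gives a 9,018-byte frame with 8,960 bytes of payload, so the fixed header cost is spread over about six times as much data.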

For example:

```
[root@vm1 ~]# ifconfig
eth0 Link encap:Ethernet HWaddr 08:00:27:37:9C:D0
inet addr:192.168.0.103 Bcast:192.168.0.255 Mask:255.255.255.0
inet6 addr: fe80::a00:27ff:fe37:9cd0/64 Scope:Link
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
RX packets:9093 errors:0 dropped:0 overruns:0 frame:0
TX packets:10011 errors:0 dropped:0 overruns:0 carrier:0
collisions:0 txqueuelen:1000
RX bytes:749067 (731.5 KiB) TX bytes:4042337 (3.8 MiB)
```

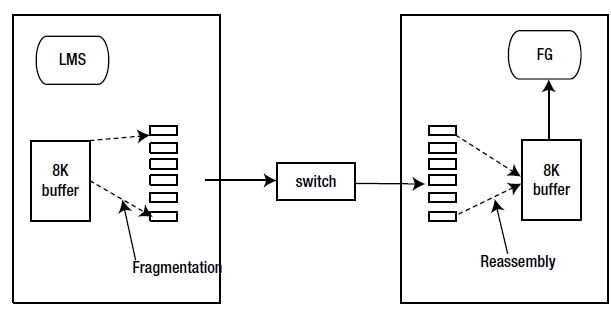

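Suppose a path is configured with an MTU of about 1,500 bytes (1.5K), as in the example above. Sending an 8K data block from one node to another then takes six packets. The 8K buffer is split into six IP packets, which are sent to the receiving side. On the receiving end, the six IP fragments are received and reassembled into the 8K buffer. The reassembled buffer is finally passed to the application for further processing.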
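Where the figure of six packets comes from can be sketched as follows. Assumptions: the block travels as a single UDP datagram (8-byte UDP header) over IPv4 with a 20-byte header and no options, and any Oracle-specific message header is ignored. Every fragment except the last must carry a multiple of 8 bytes of IP payload.

```python
import math

IPV4_HEADER = 20  # bytes, assuming no IP options
UDP_HEADER = 8    # bytes


def fragment_sizes(payload: int, mtu: int) -> list[int]:
    """Return the IP payload carried by each fragment of one UDP datagram."""
    datagram = payload + UDP_HEADER           # UDP header + application data
    if datagram + IPV4_HEADER <= mtu:
        return [datagram]                     # fits in one packet: no fragmentation
    # All fragments but the last must carry a multiple of 8 bytes.
    per_fragment = (mtu - IPV4_HEADER) // 8 * 8
    count = math.ceil(datagram / per_fragment)
    last = datagram - per_fragment * (count - 1)
    return [per_fragment] * (count - 1) + [last]


if __name__ == "__main__":
    for mtu in (1500, 9000):
        sizes = fragment_sizes(8192, mtu)
        print(f"MTU {mtu}: {len(sizes)} packet(s) {sizes}")
```

With an MTU of 1500, the 8,200-byte datagram is split into five 1,480-byte fragments plus one 800-byte fragment, six in total. With an MTU of 9000 it fits in a single packet, so nothing needs to be fragmented or reassembled.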

Figure 1

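Figure 1 shows how a data block is fragmented and reassembled. In this figure, the LMS process sends an 8KB data block to a remote process. During transmission, the 8KB buffer is split into six IP packets, which are sent across the network to the receiving side. On the receiving side, kernel threads reassemble the six IP packets and store the 8KB data block in the socket buffer. The foreground process reads the block from the socket buffer into its PGA and then copies it into the database buffer cache.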

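This process involves fragmentation and reassembly. When that happens excessively, it quietly drives up CPU usage on the database nodes. In this situation, Jumbo Frames are the natural choice.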

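Today's networks commonly run at 1 Gb/s, 10 Gb/s or even faster, so we can enable Jumbo Frames with the following command (provided your environment uses gigabit Ethernet switches and a gigabit Ethernet network):

```
# ifconfig eth0 mtu 9000
```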

To make the change permanent, edit the interface configuration file:

```
# vi /etc/sysconfig/network-scripts/ifcfg-eth0
```

and add:

```
MTU=9000
```
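If you manage many nodes, the same edit can be scripted. Below is a minimal sketch using a hypothetical helper, `set_mtu`, which is not part of any RHEL tool. It assumes the ifcfg file uses plain `KEY=value` lines. It replaces an existing `MTU=` line, or appends one if none is present, so the file ends up with exactly one MTU setting.

```python
import re


def set_mtu(ifcfg_text: str, mtu: int = 9000) -> str:
    """Hypothetical helper: return ifcfg content with exactly one MTU=<mtu> line."""
    out = []
    replaced = False
    for line in ifcfg_text.splitlines():
        if re.match(r"\s*MTU\s*=", line):
            if not replaced:                 # rewrite the first MTU line
                out.append(f"MTU={mtu}")
                replaced = True
            # any further MTU lines are dropped as duplicates
        else:
            out.append(line)
    if not replaced:                         # no MTU line yet: append one
        out.append(f"MTU={mtu}")
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    print(set_mtu("DEVICE=eth0\nBOOTPROTO=static\nONBOOT=yes\n"), end="")
```

Commented-out lines such as `#MTU=1500` are left untouched, because the pattern only matches lines that begin with `MTU`.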

For more details, see http://www.cyberciti.biz/faq/rhel-centos-debian-ubuntu-jumbo-frames-configuration/

The following article tests this setting thoroughly:

https://blogs.oracle.com/XPSONHA/entry/jumbo_frames_for_rac_interconn_1

Its test steps and results are excerpted below:

Theory tells us properly configured Jumbo Frames can eliminate 10% of overhead on UDP traffic.

So how do we test this?

I guess an 'end-to-end' test would be the best way. So my first test is a 30-minute Swingbench run against a two-node RAC, without much stress to begin with.

The MTU of the network bond (and of its slave NICs) will initially be 1500.

After the test, collect the results on the total transactions, the average transactions per second, the maximum transaction rate (results.xml), interconnect traffic (awr) and cpu usage. Then, do exactly the same, but now with an MTU of 9000 bytes. For this we need to make sure the switch settings are also modified to use an MTU of 9000.

By the way: yes, it's possible to measure only the network. But real-life end-to-end testing, with a real Oracle application talking to RAC, feels like the best way to see the impact on metrics such as the average transactions per second.

To make the test as reliable as possible:

- use guaranteed snapshots to flash back the database to its original state.

- stop/start the database (to clear the cache).

By the way: before starting the test with an MTU of 9000 bytes, the setting had to be verified.

One way to do this is to use ping with a packet size (-s) of 8972 and fragmentation prohibited (-M do). The size is 8972 because the 20-byte IP header and 8-byte ICMP header bring the packet to exactly 9000 bytes.

This sends Jumbo Frames and shows whether they can be delivered without fragmentation.
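The relationship between the `-s` value and the packet size that ping prints in parentheses can be written down directly. The sketch assumes an IPv4 header without options (20 bytes) and the 8-byte ICMP echo header.

```python
IPV4_HEADER = 20  # bytes, no IP options
ICMP_HEADER = 8   # bytes, ICMP echo request header


def ip_packet_size(ping_payload: int) -> int:
    """IP packet size for a given `ping -s` value (shown in parentheses by ping)."""
    return ping_payload + ICMP_HEADER + IPV4_HEADER


def max_ping_payload(mtu: int) -> int:
    """Largest `ping -s` value that still fits in one packet of the given MTU."""
    return mtu - IPV4_HEADER - ICMP_HEADER


if __name__ == "__main__":
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: ping -s {max_ping_payload(mtu)} -M do")
```

This matches the output in the transcripts that follow: `8972(9000)`, `8973(9001)` and the default `56(84)`.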

```
[root@node01 rk]# ping -s 8972 -M do node02-ic -c 5
PING node02-ic. (192.168.23.32) 8972(9000) bytes of data.
8980 bytes from node02-ic. (192.168.23.32): icmp_seq=0 ttl=64 time=0.914 ms

--- node02-ic. ping statistics ---
5 packets transmitted, 5 received, 0% packet loss, time 4003ms
rtt min/avg/max/mdev = 0.859/0.955/1.167/0.109 ms, pipe 2
```

As you can see, this is not a problem. For packets larger than 9000 bytes, however, it is:

```
[root@node01 rk]# ping -s 8973 -M do node02-ic -c 5
PING node02-ic. (192.168.23.32) 8973(9001) bytes of data.
From node02-ic. (192.168.23.52) icmp_seq=0 Frag needed and DF set (mtu = 9000)
```

With the MTU set back to 1500, sending 9000-byte packets with fragmentation prohibited should fail as well:

```
[root@node01 rk]# ping -s 8972 -M do node02-ic -c 5
PING node02-ic. (192.168.23.32) 8972(9000) bytes of data.

--- node02-ic. ping statistics ---
5 packets transmitted, 0 received, 100% packet loss, time 3999ms
```

With the MTU back at 1500, sending 'normal' packets should work again:

```
[root@node01 rk]# ping node02-ic -M do -c 5
PING node02-ic. (192.168.23.32) 56(84) bytes of data.
64 bytes from node02-ic. (192.168.23.32): icmp_seq=0 ttl=64 time=0.174 ms

--- node02-ic. ping statistics ---
5 packets transmitted, 5 received, 0% packet loss, time 3999ms
rtt min/avg/max/mdev = 0.174/0.186/0.198/0.008 ms, pipe 2
```

Another way to verify the MTU in use is the command 'netstat -a -i -n'. The MTU column should show 9000 when you are testing with Jumbo Frames:

```
Kernel Interface table
Iface MTU Met RX-OK RX-ERR RX-DRP RX-OVR TX-OK TX-ERR TX-DRP TX-OVR Flg
bond0 1500 0 10371535 0 0 0 15338093 0 0 0 BMmRU
bond0:1 1500 0 - no statistics available - BMmRU
bond1 9000 0 83383378 0 0 0 89645149 0 0 0 BMmRU
eth0 9000 0 36 0 0 0 88805888 0 0 0 BMsRU
eth1 1500 0 8036210 0 0 0 14235498 0 0 0 BMsRU
eth2 9000 0 83383342 0 0 0 839261 0 0 0 BMsRU
eth3 1500 0 2335325 0 0 0 1102595 0 0 0 BMsRU
eth4 1500 0 252075239 0 0 0 252020454 0 0 0 BMRU
eth5 1500 0 0 0 0 0 0 0 0 0 BM
```

As you can see, my interconnect is on bond1, which is built on eth0 and eth2. All three have an MTU of 9000 bytes.
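On clusters with many NICs, this check can be automated by parsing the same output. The sketch below assumes that each data row starts with the interface name followed by a numeric MTU, as in the table above. It only uses those first two columns, so the other columns may differ between netstat versions. The helper names are hypothetical.

```python
def interface_mtus(netstat_output: str) -> dict[str, int]:
    """Map interface name -> MTU from `netstat -i` style output."""
    mtus = {}
    for line in netstat_output.splitlines():
        fields = line.split()
        # Data rows: interface name, then a numeric MTU; header rows are skipped.
        if len(fields) >= 2 and fields[1].isdigit():
            mtus[fields[0]] = int(fields[1])
    return mtus


def not_jumbo(mtus: dict[str, int], interfaces: list[str], expected: int = 9000) -> list[str]:
    """Return the listed interfaces whose MTU is missing or differs from `expected`."""
    return [name for name in interfaces if mtus.get(name) != expected]
```

For the interconnect described here, `not_jumbo(interface_mtus(output), ["bond1", "eth0", "eth2"])` should return an empty list.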

The test is not finished and there are no conclusions yet, but here is my first result.

You will notice the results are not that significant.

| Metric | MTU 1500 | MTU 9000 |
|---|---|---|
| TotalFailedTransactions | 0 | 1 |
| AverageTransactionsPerSecond | 1364 | 1336 |
| MaximumTransactionRate | 107767 | 109775 |
| TotalCompletedTransactions | 4910834 | 4812122 |
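To put "not that significant" into numbers, the sketch below computes the relative change of each metric from MTU 1500 to MTU 9000. It uses only the values reported above.

```python
# Swingbench results from the first (lightly loaded) run, copied from above.
mtu_1500 = {
    "AverageTransactionsPerSecond": 1364,
    "MaximumTransactionRate": 107767,
    "TotalCompletedTransactions": 4910834,
}
mtu_9000 = {
    "AverageTransactionsPerSecond": 1336,
    "MaximumTransactionRate": 109775,
    "TotalCompletedTransactions": 4812122,
}


def relative_change(before: float, after: float) -> float:
    """Change from `before` to `after` as a fraction of `before`."""
    return (after - before) / before


if __name__ == "__main__":
    for metric in mtu_1500:
        change = relative_change(mtu_1500[metric], mtu_9000[metric])
        print(f"{metric}: {change:+.2%}")
```

Every metric moves by only about 2% (roughly -2.05%, +1.86% and -2.01%), in both directions. That is small enough to be run-to-run noise in a lightly loaded test.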

In a chart this will look like this:

As you can see, the difference in the number of transactions between the two tests isn't really significant, but there is less UDP traffic! Still, I expected more from this test, so I have to put more stress on the systems.

I noticed the failed transaction and found "ORA-12155 TNS-received bad datatype in NSWMARKER packet". I verified this and am sure it is not related to the MTU size. I only changed the MTU size for the interconnect, and there is no TNS traffic on that network.

As I said, I will now continue with tests that put much more stress on the systems:

- number of users changed from 80 to 150 per database
- number of databases changed from 1 to 2
- more network traffic:
  - rebuilt the Swingbench indexes without the 'REVERSE' option
  - altered the sequences, lowering the INCREMENT BY value to 1 and the cache size to 3 (instead of 800)
  - full table scans all the time on each instance
- run longer (4 hours instead of half an hour)

Now the results are already improving. In the 4-hour test, about 2.5 to 3 million more UDP packets are sent with an MTU of 1500 than with an MTU of 9000; see this chart:

Imagine the impact this has. Every packet you do not send saves you the network overhead of the packet itself, plus a lot of CPU cycles you no longer need to spend.
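A rough estimate puts the byte side of that saving in perspective. Assumptions: the 2.5 to 3 million figure is the total for the whole 4-hour run, and each extra packet is an additional IPv4 fragment that carries its own Ethernet framing (14-byte header + 4-byte CRC) and 20-byte IP header. Preamble and inter-frame gap are ignored.

```python
# Assumption: each extra packet is one more IPv4 fragment with its own framing.
ETH_OVERHEAD = 18      # 14-byte Ethernet header + 4-byte FCS
IPV4_HEADER = 20       # bytes, no IP options
FOUR_HOURS = 4 * 3600  # test duration in seconds


def header_bytes_saved(extra_packets: int) -> int:
    """Ethernet framing + IP header bytes that no longer have to be sent."""
    return extra_packets * (ETH_OVERHEAD + IPV4_HEADER)


def packets_per_second(extra_packets: int, seconds: int) -> float:
    """Average rate of avoided packets over the test."""
    return extra_packets / seconds


if __name__ == "__main__":
    for extra in (2_500_000, 3_000_000):
        mb = header_bytes_saved(extra) / 1_000_000
        rate = packets_per_second(extra, FOUR_HOURS)
        print(f"{extra:,} packets: ~{mb:.0f} MB of headers, ~{rate:.0f} packets/s")
```

Under these assumptions the saving is only about 95 to 114 MB of headers over four hours, or roughly 170 to 210 packets per second. As the text says, the larger gain is the per-packet CPU work that is avoided.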

The load average on the Linux box also drops from about 16 to 14.

In terms of completed transactions with each MTU size within the same timeframe, the chart looks like this:

To conclude, two very high-load runs were performed, again one with an MTU of 1500 and one with an MTU of 9000.

In the charts below you will see lower CPU consumption with an MTU of 9000 bytes.

Fewer packets are also sent, although I think that number is not that significant compared with the total number of packets sent.

My final thoughts on this test:

1. You will hardly notice the benefits of Jumbo Frames on a system that is not under stress.

2. You will notice the benefits of Jumbo Frames on a stressed system. Such a system will use less CPU and have less network overhead.

This means Jumbo Frames help you scale out better than regular frames.

Of course, results may vary depending on how much your applications use the interconnect. With interconnect-intensive applications you will see the benefits earlier than with applications that have relatively little interconnect activity.

I would use Jumbo Frames to scale better: they save CPU and reduce network traffic, which leaves room for growth.