Analyzing Hadoop Logs with Hive: Detailed Steps, Common Problems, and Their Solutions

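1). Log format

First, look at the format of the Hadoop logs. Each log entry occupies one line, and its fields are, in order: date, time, level, related class, and message. For example: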

2014-01-07 00:31:25,393 INFO org.apache.hadoop.mapred.JobTracker: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down JobTracker at hadoop1/192.168.91.101

************************************************************/

2014-01-07 00:33:42,425 INFO org.apache.hadoop.mapred.JobTracker: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting JobTracker

STARTUP_MSG: host = hadoop1/192.168.91.101

STARTUP_MSG: args = []

STARTUP_MSG: version = 1.1.2

STARTUP_MSG: build = https://svn.apache.org/repos/asf/hadoop/common/branches/branch-1.1 -r 1440782; compiled by 'hortonfo' on Thu Jan 31 02:03:24 UTC 2013

************************************************************/

2014-01-07 00:33:43,305 INFO org.apache.hadoop.metrics2.impl.MetricsConfig: loaded properties from hadoop-metrics2.properties

2014-01-07 00:33:43,358 INFO org.apache.hadoop.metrics2.impl.MetricsSourceAdapter: MBean for source MetricsSystem,sub=Stats registered.

2014-01-07 00:33:43,359 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Scheduled snapshot period at 10 second(s).

2014-01-07 00:33:43,359 INFO org.apache.hadoop.metrics2.impl.MetricsSystemImpl: JobTracker metrics system started

2014-01-07 00:33:43,562 INFO org.apache.hadoop.metrics2.impl.MetricsSourceAdapter: MBean for source QueueMetrics,q=default registered.

2014-01-07 00:33:44,118 INFO org.apache.hadoop.metrics2.impl.MetricsSourceAdapter: MBean for source ugi registered.

2014-01-07 00:33:44,118 INFO org.apache.hadoop.security.token.delegation.AbstractDelegationTokenSecretManager: Updating the current master key for generating delegation tokens

2014-01-07 00:33:44,119 INFO org.apache.hadoop.mapred.JobTracker: Scheduler configured with (memSizeForMapSlotOnJT, memSizeForReduceSlotOnJT, limitMaxMemForMapTasks, limitMaxMemForReduceTasks) (-1, -1, -1, -1)

2014-01-07 00:33:44,120 INFO org.apache.hadoop.util.HostsFileReader: Refreshing hosts (include/exclude) list

2014-01-07 00:33:44,125 INFO org.apache.hadoop.security.token.delegation.AbstractDelegationTokenSecretManager: Starting expired delegation token remover thread, tokenRemoverScanInterval=60 min(s)

2014-01-07 00:33:44,125 INFO org.apache.hadoop.security.token.delegation.AbstractDelegationTokenSecretManager: Updating the current master key for generating delegation tokens

2014-01-07 00:33:44,126 INFO org.apache.hadoop.mapred.JobTracker: Starting jobtracker with owner as root

2014-01-07 00:33:44,187 INFO org.apache.hadoop.metrics2.impl.MetricsSourceAdapter: MBean for source RpcDetailedActivityForPort9001 registered.

2014-01-07 00:33:44,187 INFO org.apache.hadoop.metrics2.impl.MetricsSourceAdapter: MBean for source RpcActivityForPort9001 registered.

2014-01-07 00:33:44,188 INFO org.apache.hadoop.ipc.Server: Starting SocketReader

2014-01-07 00:33:44,490 INFO org.mortbay.log: Logging to org.slf4j.impl.Log4jLoggerAdapter(org.mortbay.log) via org.mortbay.log.Slf4jLog

2014-01-07 00:33:44,805 INFO org.apache.hadoop.http.HttpServer: Added global filtersafety (class=org.apache.hadoop.http.HttpServer$QuotingInputFilter)

2014-01-07 00:33:44,825 INFO org.apache.hadoop.http.HttpServer: Port returned by webServer.getConnectors()[0].getLocalPort() before open() is -1. Opening the listener

This is only an excerpt of the log.
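The five-field layout described above can be checked with a small regular expression. What follows is a minimal sketch and is not part of the original program. The class name `LogLineParser` and its `parse` method are hypothetical. The pattern assumes the exact layout in the excerpt: a `yyyy-MM-dd` date, a `HH:mm:ss,SSS` time, an upper-case level, and a class name followed by a colon. Lines that do not match, such as the `STARTUP_MSG` banner rows, return `null`.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper: splits one Hadoop 1.x log line into its five fields. */
public class LogLineParser {

    // date, time (with milliseconds), level, class (followed by ':'), message
    private static final Pattern LINE = Pattern.compile(
            "^(\\d{4}-\\d{2}-\\d{2}) (\\d{2}:\\d{2}:\\d{2},\\d{3}) ([A-Z]+) ([^ ]+): ?(.*)$");

    /** Returns {date, time, level, class, message}, or null if the line has another layout. */
    public static String[] parse(String line) {
        Matcher m = LINE.matcher(line);
        if (!m.matches()) {
            return null; // e.g. banner rows such as "STARTUP_MSG: Starting JobTracker"
        }
        return new String[] {m.group(1), m.group(2), m.group(3), m.group(4), m.group(5)};
    }

    public static void main(String[] args) {
        String[] fields = parse("2014-01-07 00:33:44,126 INFO org.apache.hadoop.mapred.JobTracker: "
                + "Starting jobtracker with owner as root");
        System.out.println(String.join(" | ", fields));
        System.out.println(parse("STARTUP_MSG: Starting JobTracker") == null);
    }
}
```

Running `main` first prints the five fields of the sample line separated by ` | `. It then prints `true`, because the banner row does not match.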

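2). Program design

The program was developed in Eclipse on a personal machine. It connects to the Hadoop cluster through the Hive server, and the processed results are stored on a MySQL server.

The SQL statement that creates the MySQL table "hadooplog", which stores the results, is as follows: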

drop table if exists hadooplog;
create table hadooplog(
    id int(11) not null auto_increment,
    rdate varchar(50) null,
    time varchar(50) default null,
    type varchar(50) default null,
    relateclass tinytext default null,
    information longtext default null,
    primary key (id)
) engine=innodb default charset=utf8;

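To create the hadooplog table, log in to MySQL, switch to the hive database (the database named in the program's MySQL JDBC URL), and run the statement above.

3). Program code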

package com.wzl.hive;

import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

/**
 * Creates the connections to Hive and MySQL. Each connection is expensive to open,
 * so the class follows the Singleton pattern: each connection is created once and
 * then reused (lazy initialization; not thread-safe).
 */
class DBHelper {

    private static Connection connToHive = null;
    private static Connection connToMySQL = null;

    private DBHelper() {
    }

    // Get the Hive connection; if it has already been created, return it directly
    public static Connection getHiveConn() throws SQLException {
        if (connToHive == null) {
            try {
                // JDBC driver for HiveServer (version 1)
                Class.forName("org.apache.hadoop.hive.jdbc.HiveDriver");
            } catch (ClassNotFoundException err) {
                throw new SQLException("Hive JDBC driver not found on the classpath", err);
            }
            connToHive = DriverManager.getConnection("jdbc:hive://192.168.91.101:10000/default", "hive", "");
        }
        return connToHive;
    }

    // Get the MySQL connection (database "hive" on the same host)
    public static Connection getMySQLConn() throws SQLException {
        if (connToMySQL == null) {
            try {
                Class.forName("com.mysql.jdbc.Driver");
            } catch (ClassNotFoundException err) {
                throw new SQLException("MySQL JDBC driver not found on the classpath", err);
            }
            connToMySQL = DriverManager.getConnection(
                    "jdbc:mysql://192.168.91.101:3306/hive?useUnicode=true&characterEncoding=UTF8",
                    "root", "root"); // write the encoding as UTF8, not UTF-8
        }
        return connToMySQL;
    }

    public static void closeHiveConn() throws SQLException {
        if (connToHive != null) {
            connToHive.close();
            connToHive = null; // lets getHiveConn() open a fresh connection later
        }
    }

    public static void closeMySQLConn() throws SQLException {
        if (connToMySQL != null) {
            connToMySQL.close();
            connToMySQL = null; // lets getMySQLConn() open a fresh connection later
        }
    }

    // Quick check that the MySQL connection can be opened
    public static void main(String[] args) throws SQLException {
        System.out.println(getMySQLConn());
        closeMySQLConn();
    }
}

package com.wzl.hive;

import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

/**
 * Utility methods for Hive.
 */
class HiveUtil {

    // Run a Hive statement that returns no rows (CREATE TABLE, LOAD DATA)
    private static void execute(String sql) throws SQLException {
        Statement stmt = DBHelper.getHiveConn().createStatement();
        try {
            stmt.execute(sql);
        } finally {
            stmt.close();
        }
    }

    // Create a table
    public static void createTable(String sql) throws SQLException {
        execute(sql);
    }

    // Query data by condition; the Statement stays open because the caller
    // still has to read the returned ResultSet
    public static ResultSet queryData(String sql) throws SQLException {
        Statement stmt = DBHelper.getHiveConn().createStatement();
        return stmt.executeQuery(sql);
    }

    // Load data
    public static void loadData(String sql) throws SQLException {
        execute(sql);
    }

    // Store the query results in MySQL
    public static void hiveToMySQL(ResultSet res) throws SQLException {
        Connection conn = DBHelper.getMySQLConn();
        // Placeholders keep the SQL valid when a message contains a single quote;
        // id is omitted so that auto_increment assigns it
        PreparedStatement ps = conn.prepareStatement(
                "insert into hadooplog (rdate, time, type, relateclass, information) values (?, ?, ?, ?, ?)");
        try {
            while (res.next()) {
                // Rejoin the three message fragments with spaces; short messages leave NULL columns
                StringBuilder information = new StringBuilder();
                for (int col = 5; col <= 7; col++) {
                    String part = res.getString(col);
                    if (part != null) {
                        if (information.length() > 0) {
                            information.append(' ');
                        }
                        information.append(part);
                    }
                }
                ps.setString(1, res.getString(1)); // rdate
                ps.setString(2, res.getString(2)); // time
                ps.setString(3, res.getString(3)); // type
                ps.setString(4, res.getString(4)); // relateclass
                ps.setString(5, information.toString());
                ps.executeUpdate();
            }
        } finally {
            ps.close();
        }
    }
}

package com.wzl.hive;

import java.sql.ResultSet;
import java.sql.SQLException;

public class AnalyszeHadoopLog {

    public static void main(String[] args) throws SQLException {
        StringBuffer sql = new StringBuffer();
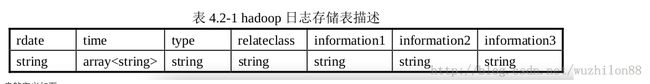

        try {
            // Step 1: create the table in Hive
            sql.append("create table if not exists loginfo( ");
            sql.append("rdate string, ");
            sql.append("time array<string>, ");
            sql.append("type string, ");
            sql.append("relateclass string, ");
            sql.append("information1 string, ");
            sql.append("information2 string, ");
            sql.append("information3 string) ");
            sql.append("row format delimited fields terminated by ' ' ");
            sql.append("collection items terminated by ',' ");
            sql.append("map keys terminated by ':'");
            System.out.println(sql);
            HiveUtil.createTable(sql.toString());

            // Step 2: load the Hadoop log file; with LOCAL, the path is resolved
            // on the machine running the Hive server (hadoop1)
            sql.delete(0, sql.length());
            sql.append("load data local inpath ");
            sql.append("'/usr/local/hadoop/logs/hadoop-root-jobtracker-hadoop1.log'");
            sql.append(" overwrite into table loginfo");
            System.out.println(sql);
            HiveUtil.loadData(sql.toString());

            // Step 3: query the useful rows; time[0] drops the milliseconds after ','
            sql.delete(0, sql.length());
            sql.append("select rdate,time[0],type,relateclass,");
            sql.append("information1,information2,information3 ");
            sql.append("from loginfo where type='INFO'");
            System.out.println(sql);
            ResultSet res = HiveUtil.queryData(sql.toString());

            // Step 4: transform the rows and save them to MySQL
            HiveUtil.hiveToMySQL(res);
        } finally {
            // Steps 5 and 6: close the Hive and MySQL connections, even after an error
            try {
                DBHelper.closeHiveConn();
            } finally {
                DBHelper.closeMySQLConn();
            }
        }
    }
}
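The `loginfo` table does not parse each line with a regular expression. Instead it relies on Hive's delimited row format: each line is split at single spaces, and the `time` field is split again at commas. The sketch below is a simplified, hypothetical model of that mapping. `LoginfoRowModel` is not Hive code. It assumes that missing trailing fields become NULL and that extra fields are ignored, which is how we understand Hive's default text SerDe to behave.

The model makes two consequences of the table definition visible. First, `relateclass` keeps the trailing colon. Second, `information1` to `information3` hold only the first three words of the message.

```java
import java.util.Arrays;

/**
 * Simplified, hypothetical model of how the loginfo row format
 * (fields terminated by ' ', collection items terminated by ',')
 * maps one log line onto the table's seven columns.
 */
public class LoginfoRowModel {

    private static final int COLUMNS = 7;

    /** Seven column values: missing trailing fields are null, extra fields are dropped. */
    public static String[] toColumns(String line) {
        String[] tokens = line.split(" ", -1);
        String[] cols = new String[COLUMNS];
        for (int i = 0; i < COLUMNS; i++) {
            cols[i] = i < tokens.length ? tokens[i] : null;
        }
        return cols;
    }

    /** Models time[0] in the query: the part of the time column before the first ','. */
    public static String timeFirstItem(String timeColumn) {
        return timeColumn == null ? null : timeColumn.split(",", -1)[0];
    }

    public static void main(String[] args) {
        String line = "2014-01-07 00:33:44,126 INFO org.apache.hadoop.mapred.JobTracker: "
                + "Starting jobtracker with owner as root";
        String[] cols = toColumns(line);
        System.out.println(Arrays.toString(cols));
        System.out.println(timeFirstItem(cols[1]));
    }
}
```

For the sample line, `main` prints `[2014-01-07, 00:33:44,126, INFO, org.apache.hadoop.mapred.JobTracker:, Starting, jobtracker, with]` and then `00:33:44`. In this model, the words `owner as root` never reach MySQL.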

4). Results

Things to check before running the program:

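- Before running, make sure the Hive remote service port is open. Start the Hive server with: nohup hive --service hiveserver & . If this has not been done, the following error is common: Could not establish connection to 192.168.91.101:10000/default: java.net.ConnectException: Connection refused: connect

- The hadooplog table has already been created in MySQL.

- MySQL allows the development machine to connect. Run: grant all privileges on *.* to root@'%' identified by 'root'; This lets the root user connect from any IP address. If this has not been done, connecting from Java to MySQL on Linux commonly fails with: Access denied for user 'root'@'192.168.91.1' (using password: YES)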
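You can test the first checklist item from the development machine before running the program. A minimal sketch follows. `PreflightCheck` and `isReachable` are hypothetical names, and the host and ports are the ones used in `DBHelper`.

The sketch only checks that a TCP connection can be opened. A refused connection corresponds to the `Connection refused` error above. The MySQL `Access denied` error, by contrast, comes from missing privileges and will not show up here.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/**
 * Hypothetical pre-flight check: can this machine open a TCP connection to the
 * Hive server (port 10000) and to MySQL (port 3306)?
 */
public class PreflightCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
    public static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // e.g. java.net.ConnectException (Connection refused) or a connect timeout
            return false;
        }
    }

    public static void main(String[] args) {
        String host = "192.168.91.101"; // cluster address used in DBHelper
        System.out.println("Hive server port 10000 open: " + isReachable(host, 10000, 2000));
        System.out.println("MySQL port 3306 open: " + isReachable(host, 3306, 2000));
    }
}
```

If the Hive check prints `false`, start the Hive server as described in the first item before running `AnalyszeHadoopLog`.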

After the program has run, check the results in MySQL:

mysql> use hive;

mysql> show tables;

mysql> select * from hadooplog;

5). Lessons learned

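This example works with both the Hive data warehouse and a MySQL database. Both are accessed through JDBC, but they differ in places. For example, the Hive table is declared with a delimited row format and filled with LOAD DATA, while rows are inserted into MySQL one at a time. The example therefore shows quite clearly where Hive resembles a relational database and where it differs.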

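If we wrote this directly in MapReduce, it would likely run somewhat faster than with Hive, since Hive itself translates queries into MapReduce jobs. However, the program would be considerably more complex and much longer, which is a real obstacle for developers unfamiliar with MapReduce programming. Hive strikes a balance between the two approaches: it processes data reasonably efficiently, it is relatively simple to use, and it is convenient for developers used to relational databases. This is why Hive has been adopted by many commercial organizations.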