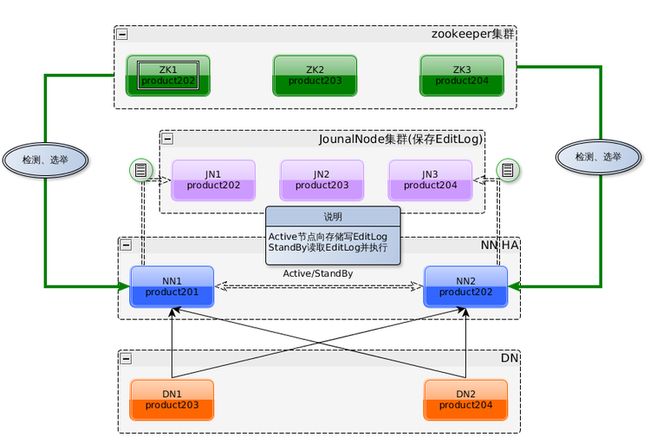

1: Architecture diagram

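A: Of NN1, NN2 (or additional NN nodes; more than two NameNodes requires Hadoop 3.0 or later), only one is in the Active state at any time. This is enforced by the bundled ZKFailoverController component (a ZooKeeper client), which works with the ZooKeeper cluster to monitor every NN node and run the election.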

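B: The Active NN writes its EditLog to the shared JournalNode cluster. The Standby NN reads the EditLog from the JournalNode cluster and replays it locally to keep its metadata in sync with the Active NN.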

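C: Without ZooKeeper, the Active and Standby NN can be switched manually. For automatic failover through ZooKeeper, a fencing method must also be provided, which means setting the dfs.ha.fencing.methods parameter.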
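Both the JournalNode cluster and the ZooKeeper ensemble are quorum-based: an EditLog write or an election only succeeds when a majority of the nodes take part. The arithmetic behind the three-node layout used in this walkthrough can be sketched as follows; the function names `majority` and `tolerated_failures` are illustrative helpers, not Hadoop or ZooKeeper commands.

```shell
# Illustrative helpers (hypothetical names, not part of Hadoop or ZooKeeper).

# majority N: smallest number of nodes that forms a majority of N.
majority() {
    echo $(( $1 / 2 + 1 ))
}

# tolerated_failures N: how many of N nodes may fail while a majority survives.
tolerated_failures() {
    echo $(( ($1 - 1) / 2 ))
}

echo "3 nodes: majority=$(majority 3), tolerated failures=$(tolerated_failures 3)"
```

With three JournalNodes or ZooKeeper servers, one node can be lost; a fourth node adds no extra tolerance, which is why odd counts are the usual choice.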

2: Set up passwordless SSH login for the hadoop user

[root@product201 ~]# su - hadoop

[hadoop@product201 ~]$ cd .ssh

[hadoop@product201 .ssh]$ ssh product201 cat /home/hadoop/.ssh/id_rsa.pub >> authorized_keys

[hadoop@product201 .ssh]$ ssh product202 cat /home/hadoop/.ssh/id_rsa.pub >> authorized_keys

[hadoop@product201 .ssh]$ ssh product203 cat /home/hadoop/.ssh/id_rsa.pub >> authorized_keys

[hadoop@product201 .ssh]$ ssh product204 cat /home/hadoop/.ssh/id_rsa.pub >> authorized_keys

[hadoop@product201 .ssh]$ chmod 600 authorized_keys

[hadoop@product201 .ssh]$ scp authorized_keys product202:/home/hadoop/.ssh/.

[hadoop@product201 .ssh]$ scp authorized_keys product203:/home/hadoop/.ssh/.

[hadoop@product201 .ssh]$ scp authorized_keys product204:/home/hadoop/.ssh/.

[hadoop@product201 .ssh]$ ssh product201 date

[hadoop@product201 .ssh]$ ssh product202 date

[hadoop@product201 .ssh]$ ssh product203 date

[hadoop@product201 .ssh]$ ssh product204 date

[hadoop@product201 .ssh]$ scp known_hosts product202:/home/hadoop/.ssh/.

[hadoop@product201 .ssh]$ scp known_hosts product203:/home/hadoop/.ssh/.

[hadoop@product201 .ssh]$ scp known_hosts product204:/home/hadoop/.ssh/.
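The per-host commands above follow one pattern, so they can be generated from a host list. The sketch below only prints the commands (a dry run) so they can be reviewed before being executed; it assumes each host already has a key pair at /home/hadoop/.ssh/id_rsa.pub, and the function name `print_ssh_setup` is made up for illustration.

```shell
# Hosts from this walkthrough; adjust for your own cluster.
HOSTS="product201 product202 product203 product204"

# print_ssh_setup: print (do not run) the key-distribution commands in order.
# Intended to be run from /home/hadoop/.ssh on product201.
print_ssh_setup() {
    # 1. Collect every host's public key into one authorized_keys file.
    for h in $HOSTS; do
        echo "ssh $h cat /home/hadoop/.ssh/id_rsa.pub >> authorized_keys"
    done
    # 2. Restrict the file's permissions.
    echo "chmod 600 authorized_keys"
    # 3. Push the merged file to every other host.
    for h in $HOSTS; do
        if [ "$h" != "product201" ]; then
            echo "scp authorized_keys $h:/home/hadoop/.ssh/."
        fi
    done
}

print_ssh_setup
```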

***********************************************************************

*************************

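TIPS:

To set up passwordless SSH login again from scratch, delete all files in the .ssh directory, regenerate the key pair with ssh-keygen, and then repeat the procedure above.

Pay particular attention to permissions: the authorized_keys file must be 600 and the .ssh directory must be 700.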

***********************************************************************

*************************
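The permission requirement in the tip can be applied and checked with a small helper. This is a sketch only: `fix_ssh_perms` is a hypothetical name, and the demonstration runs against a throwaway directory rather than the real ~/.ssh.

```shell
# fix_ssh_perms DIR: set DIR to 700 and DIR/authorized_keys (if present) to 600.
fix_ssh_perms() {
    chmod 700 "$1"
    if [ -f "$1/authorized_keys" ]; then
        chmod 600 "$1/authorized_keys"
    fi
}

# Demonstrate on a temporary directory, then clean up.
demo_dir=$(mktemp -d)
touch "$demo_dir/authorized_keys"
fix_ssh_perms "$demo_dir"
ls -ld "$demo_dir" "$demo_dir/authorized_keys"
rm -rf "$demo_dir"
```

On a real node the call would be `fix_ssh_perms /home/hadoop/.ssh`.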

3: ZooKeeper configuration

[hadoop@product201 .ssh]$ cd /app/hadoop/zookeeper345/conf

[hadoop@product201 conf]$ cp zoo_sample.cfg zoo.cfg

[hadoop@product201 conf]$ vi zoo.cfg

[hadoop@product201 conf]$ cat zoo.cfg

dataDir=/app/hadoop/zookeeper345/mydata

dataLogDir=/app/hadoop/zookeeper345/logs

server.1=product202:2888:3888

server.2=product203:2888:3888

server.3=product204:2888:3888

[hadoop@product201 conf]$ cd ..

[hadoop@product201 zookeeper345]$ mkdir mydata

[hadoop@product201 zookeeper345]$ mkdir logs

[hadoop@product201 zookeeper345]$ cd ..

[hadoop@product201 hadoop]$ scp -r zookeeper345 product202:/app/hadoop/

[hadoop@product201 hadoop]$ scp -r zookeeper345 product203:/app/hadoop/

[hadoop@product201 hadoop]$ scp -r zookeeper345 product204:/app/hadoop/

[hadoop@product201 hadoop]$ ssh -t -p 22 hadoop@product202 "echo 1 >/app/hadoop/zookeeper345/mydata/myid"

[hadoop@product201 hadoop]$ ssh -t -p 22 hadoop@product203 "echo 2 >/app/hadoop/zookeeper345/mydata/myid"

[hadoop@product201 hadoop]$ ssh -t -p 22 hadoop@product204 "echo 3 >/app/hadoop/zookeeper345/mydata/myid"
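The number N in each `server.N` line of zoo.cfg must match the content of the myid file on that host, which is what the three `echo N > .../myid` commands establish. A minimal sketch of that mapping, assuming the same ports as above (`zk_server_lines` is an illustrative name):

```shell
# zk_server_lines HOST...: print one "server.N=HOST:2888:3888" line per host,
# numbering from 1 in argument order. N is also the value that host's myid
# file must contain.
zk_server_lines() {
    n=1
    for h in "$@"; do
        echo "server.$n=$h:2888:3888"
        n=$(( n + 1 ))
    done
}

zk_server_lines product202 product203 product204
```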

4: Hadoop configuration (manual NN failover)

A: Edit slaves

[hadoop@product201 hadoop]$ cd hadoop220/etc/hadoop

[hadoop@product201 hadoop]$ vi slaves

[hadoop@product201 hadoop]$ cat slaves

B: Edit hadoop-env.sh

[hadoop@product201 hadoop]$ vi hadoop-env.sh

[hadoop@product201 hadoop]$ cat hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.7.0_21

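C: Edit core-site.xml

Note: fs.defaultFS below points directly at nn1 (product201), so clients keep addressing product201 after a failover. HA deployments normally set it to the logical nameservice (hdfs://cluster1) and also define dfs.client.failover.proxy.provider.cluster1 in hdfs-site.xml.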

[hadoop@product201 hadoop]$ vi core-site.xml

[hadoop@product201 hadoop]$ cat core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://product201:8020</value>

</property>

</configuration>

D: Edit hdfs-site.xml

[hadoop@product201 hadoop]$ vi hdfs-site.xml

[hadoop@product201 hadoop]$ cat hdfs-site.xml

<configuration>

<property>

<name>dfs.nameservices</name>

<value>cluster1</value>

<description>Nameservice names; separate multiple names with commas.</description>

</property>

<property>

<name>dfs.ha.namenodes.cluster1</name>

<value>nn1,nn2</value>

<description>NameNodes that belong to the nameservice defined in dfs.nameservices; separate multiple NameNodes with commas.</description>

</property>

<property>

<name>dfs.namenode.rpc-address.cluster1.nn1</name>

<value>product201:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.cluster1.nn2</name>

<value>product202:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.cluster1.nn1</name>

<value>product201:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.cluster1.nn2</name>

<value>product202:50070</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:///app/hadoop/hadoop220/mydata/name</value>

<description>Directory where the fsimage is stored; multiple comma-separated directories provide redundancy.</description>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://product202:8485;product203:8485;product204:8485/cluster1</value>

<description>Shared directory for the NameNodes, used to store the EditLog for HA. Leave empty on non-HA systems.</description>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:///app/hadoop/hadoop220/mydata/data</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>false</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/app/hadoop/hadoop220/mydata/journal/</value>

<description>Directory in which each JournalNode stores the EditLog in HA mode.</description>

</property>

</configuration>
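The value of dfs.namenode.shared.edits.dir has the shape `qjournal://host1:port;host2:port;.../journalId`: JournalNode addresses separated by semicolons, followed by a journal ID (here the nameservice name cluster1). As a sketch, the value can be assembled from a host list; `qjournal_uri` is a hypothetical helper, and port 8485 is taken from the configuration above.

```shell
# qjournal_uri JOURNAL_ID HOST...: build a dfs.namenode.shared.edits.dir value.
qjournal_uri() {
    id=$1
    shift
    list=""
    for h in "$@"; do
        # Append "host:8485", inserting ";" between entries but not before the first.
        list="${list:+$list;}$h:8485"
    done
    echo "qjournal://$list/$id"
}

qjournal_uri cluster1 product202 product203 product204
```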

E: Edit mapred-site.xml

[hadoop@product201 hadoop]$ vi mapred-site.xml

[hadoop@product201 hadoop]$ cat mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<!-- jobhistory properties -->

<property>

<name>mapreduce.jobhistory.address</name>

<value>product202:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>product202:19888</value>

</property>

</configuration>

F: Edit yarn-site.xml

[hadoop@product201 hadoop]$ vi yarn-site.xml

[hadoop@product201 hadoop]$ cat yarn-site.xml

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>product201</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>${yarn.resourcemanager.hostname}:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>${yarn.resourcemanager.hostname}:8030</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>${yarn.resourcemanager.hostname}:8088</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.https.address</name>

<value>${yarn.resourcemanager.hostname}:8090</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>${yarn.resourcemanager.hostname}:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>${yarn.resourcemanager.hostname}:8033</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

</property>

<property>

<name>yarn.scheduler.fair.allocation.file</name>

<value>${yarn.home.dir}/etc/hadoop/fairscheduler.xml</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/app/hadoop/hadoop220/mydata/yarn</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/tmp/logs</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>16384</value>

</property>

<property>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>12</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

G: Edit fairscheduler.xml

[hadoop@product201 hadoop]$ vi fairscheduler.xml

[hadoop@product201 hadoop]$ cat fairscheduler.xml

<?xml version="1.0"?>

<allocations>

<queue name="news">

<minResources>10240 mb, 10 vcores </minResources>

<maxResources>15360 mb, 12 vcores </maxResources>

<maxRunningApps>20</maxRunningApps>

<minSharePreemptionTimeout>300</minSharePreemptionTimeout>

<weight>1.0</weight>

<aclSubmitApps>root,yarn,search,hdfs</aclSubmitApps>

</queue>

<queue name="crawler">

<minResources>10240 mb, 10 vcores</minResources>

<maxResources>15360 mb, 12 vcores</maxResources>

</queue>

<queue name="map">

<minResources>10240 mb, 10 vcores</minResources>

<maxResources>15360 mb, 12 vcores</maxResources>

</queue>

</allocations>

H: Deploy the Hadoop installation to the other nodes

[hadoop@product201 hadoop]$ cd ..

[hadoop@product201 etc]$ cd ..

[hadoop@product201 hadoop220]$ mkdir logs

[hadoop@product201 hadoop220]$ mkdir -p mydata/name

[hadoop@product201 hadoop220]$ mkdir -p mydata/data

[hadoop@product201 hadoop220]$ mkdir -p mydata/journal

[hadoop@product201 hadoop220]$ mkdir -p mydata/yarn

[hadoop@product201 hadoop220]$ cd ..

[hadoop@product201 hadoop]$ scp -r hadoop220 product202:/app/hadoop/

[hadoop@product201 hadoop]$ scp -r hadoop220 product203:/app/hadoop/

[hadoop@product201 hadoop]$ scp -r hadoop220 product204:/app/hadoop/

5: Start Hadoop

A: Start the JournalNodes on product202, product203, and product204

[hadoop@product202 ~]$ cd /app/hadoop/hadoop220/

[hadoop@product202 hadoop220]$ sbin/hadoop-daemon.sh start journalnode

[hadoop@product203 ~]$ cd /app/hadoop/hadoop220/

[hadoop@product203 hadoop220]$ sbin/hadoop-daemon.sh start journalnode

[hadoop@product204 ~]$ cd /app/hadoop/hadoop220/

[hadoop@product204 hadoop220]$ sbin/hadoop-daemon.sh start journalnode

B: Format the NameNode

[hadoop@product201 hadoop220]$ bin/hdfs namenode -format

C: Start nn1

[hadoop@product201 hadoop220]$ sbin/hadoop-daemon.sh start namenode

D: Start nn2

On nn2, first copy the metadata from nn1:

[hadoop@product202 hadoop220]$ bin/hdfs namenode -bootstrapStandby

Then start nn2:

[hadoop@product202 hadoop220]$ sbin/hadoop-daemon.sh start namenode

E: Switch nn1 to active and start the DataNodes

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -transitionToActive nn1

[hadoop@product201 hadoop220]$ sbin/hadoop-daemons.sh start datanode

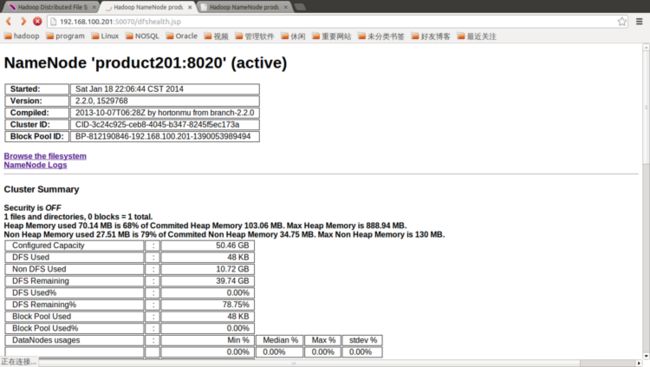

F: nn1:50070

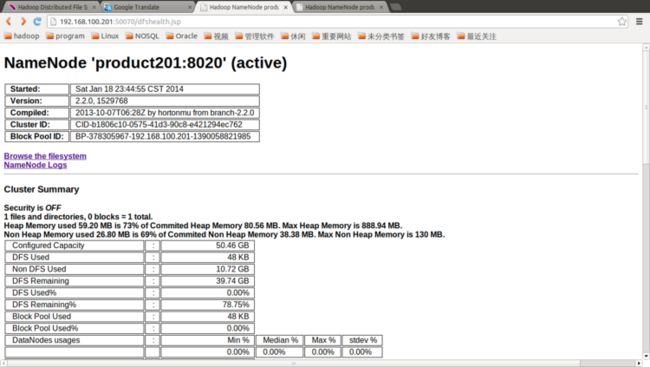

G: nn2:50070

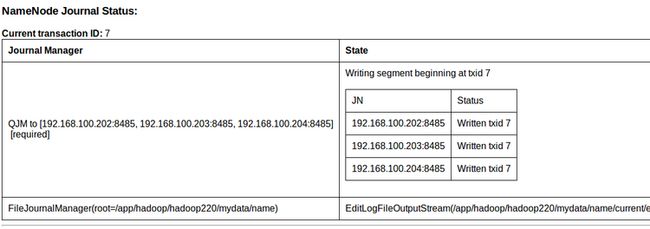

H: JournalNode status

TIPS:

The configuration above relies on switching the NameNode manually. For example, the commands to switch from nn1 to nn2 are:

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -transitionToStandby nn1

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -transitionToActive nn2

In addition, stop-dfs.sh stops the datanode, namenode, and journalnode processes in one go.

***********************************************************************

*************************
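During a manual switch there is a short window, between `-transitionToStandby nn1` and `-transitionToActive nn2`, in which neither NameNode is active. A small check on the reported states makes that visible. The sketch below assumes the state strings `active` and `standby` as printed by `hdfs haadmin -getServiceState`; `count_active` is an illustrative name.

```shell
# count_active STATE...: print how many of the given states equal "active".
count_active() {
    n=0
    for s in "$@"; do
        if [ "$s" = "active" ]; then
            n=$(( n + 1 ))
        fi
    done
    echo "$n"
}

# State of (nn1, nn2) at each step of the manual switch:
count_active active standby    # before the switch: nn1 is active
count_active standby standby   # after -transitionToStandby nn1
count_active standby active    # after -transitionToActive nn2
```

On a live cluster the arguments would come from the CLI, for example `count_active "$(bin/hdfs haadmin -getServiceState nn1)" "$(bin/hdfs haadmin -getServiceState nn2)"`; a healthy steady state reports 1.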

6: Hadoop configuration (automatic NN failover)

[hadoop@product201 hadoop220]$ sbin/stop-dfs.sh

[hadoop@product201 hadoop220]$ cd etc/hadoop

[hadoop@product201 hadoop]$ vi hdfs-site.xml

[hadoop@product201 hadoop]$ cat hdfs-site.xml

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>product202:2181,product203:2181,product204:2181</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/hadoop/.ssh/id_rsa</value>

</property>

[hadoop@product201 hadoop]$ cd ..

[hadoop@product201 etc]$ scp -r hadoop product202:/app/hadoop/hadoop220/etc/

[hadoop@product201 etc]$ scp -r hadoop product203:/app/hadoop/hadoop220/etc/

[hadoop@product201 etc]$ scp -r hadoop product204:/app/hadoop/hadoop220/etc/
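The ha.zookeeper.quorum value must list the same servers that appear in zoo.cfg, each with the ZooKeeper client port (2181, the clientPort value shipped in zoo_sample.cfg) rather than the 2888/3888 peer ports. A sketch that builds the comma-separated list from host names; `join_hostports` is a hypothetical helper.

```shell
# join_hostports PORT HOST...: print "HOST:PORT" entries joined by commas.
join_hostports() {
    port=$1
    shift
    out=""
    for h in "$@"; do
        # Insert "," between entries but not before the first one.
        out="${out:+$out,}$h:$port"
    done
    echo "$out"
}

join_hostports 2181 product202 product203 product204
```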

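7: Start ZooKeeper and Hadoop

For a flowchart of the Hadoop HA startup sequence, see the article "HDFS HA系列实验之经验总结" (lessons learned from the HDFS HA experiment series).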

[hadoop@product202 hadoop220]$ /app/hadoop/zookeeper345/bin/zkServer.sh start

[hadoop@product203 hadoop220]$ /app/hadoop/zookeeper345/bin/zkServer.sh start

[hadoop@product204 hadoop220]$ /app/hadoop/zookeeper345/bin/zkServer.sh start

[hadoop@product201 etc]$ cd ..

[hadoop@product201 hadoop220]$ bin/hdfs zkfc -formatZK

[hadoop@product201 hadoop220]$ sbin/start-dfs.sh

8: Failover test

[hadoop@product201 hadoop220]$ jps

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -getServiceState nn1

[hadoop@product201 hadoop220]$ kill -9 5792

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -getServiceState nn1

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -getServiceState nn2

[hadoop@product201 hadoop220]$ sbin/hadoop-daemon.sh start namenode

[hadoop@product201 hadoop220]$ bin/hdfs haadmin -getServiceState nn1
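The PID passed to `kill -9` in the test above is read off the `jps` output by hand. As a sketch, it can be extracted automatically, since `jps` prints one `PID ClassName` pair per line. The sample output here is invented for illustration: 5792 is assumed to be the NameNode PID from the test, and the other PIDs are made up. `namenode_pid` is a hypothetical helper name.

```shell
# namenode_pid: read jps output on stdin and print the PID of the NameNode.
# The exact match on the second field avoids matching SecondaryNameNode.
namenode_pid() {
    awk '$2 == "NameNode" { print $1 }'
}

# Illustrative jps output (PIDs other than 5792 are invented).
sample='5792 NameNode
6021 DFSZKFailoverController
6390 Jps'

printf '%s\n' "$sample" | namenode_pid
```

On the node itself the kill step could then be written as `kill -9 "$(jps | namenode_pid)"`.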

TIPS:

Download the related Hadoop configuration files (HA+JN)

Download the related Hadoop configuration files (HA+JN+ZooKeeper)