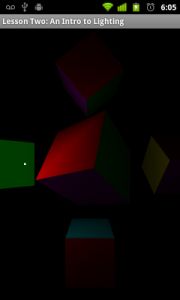

Android Lesson Two: Ambient and Diffuse Lighting

Original article: http://www.learnopengles.com/android-lesson-two-ambient-and-diffuse-lighting/

Welcome to the second tutorial for Android. In this lesson, we’re going to learn how to implement Lambertian reflectance using shaders, otherwise known as your standard diffuse lighting. In OpenGL ES 2, we need to implement our own lighting algorithms, so we will learn how the math works and how we can apply it to our scenes.

Assumptions and prerequisites

Each lesson in this series builds on the lesson before it. Before we begin, please review the first lesson as this lesson will build upon the concepts introduced there.

What is light?

A world without lighting would be a dim one, indeed. Without light, we would not even be able to perceive the world or the objects that lie around us, except via the other senses such as sound and touch. Light shows us how bright or dim something is, how near or far it is, and what angle it lies at.

In the real world, what we perceive as light is really the aggregation of trillions of tiny particles called photons, which fly out of a light source, bounce around thousands or millions of times, and eventually reach our eye where we perceive it as light.

How can we simulate the effects of light via computer graphics? There are two popular ways to do it: ray tracing and rasterisation. Ray tracing works by mathematically tracing actual rays of light and seeing where they end up. This technique gives very accurate and realistic results, but the downside is that simulating all of those rays is very computationally expensive, and usually too slow for real-time rendering. Due to this limitation, most real-time computer graphics use rasterisation instead, which simulates lighting by approximating the result. Given the realism of recent games, rasterisation can also look very nice, and is fast enough for real-time graphics even on mobile phones. OpenGL ES is primarily a rasterisation library, so this is the approach we will focus on.

The different kinds of light

It turns out that we can abstract the way that light works and come up with three basic types of lighting:

Ambient lighting: This is a base level of lighting that seems to pervade an entire scene. It is light that doesn’t appear to come from any light source in particular because it has bounced around so much before reaching you. This type of lighting can be experienced outdoors on an overcast day, or indoors as the cumulative effect of many different light sources. Instead of calculating all of the individual lights, we can just set a base light level for the object or scene.

Diffuse lighting: This is light that reaches your eye after bouncing directly off of an object. The illumination level of the object varies with its angle to the lighting. Something facing the light head on is lit more brightly than something facing the light at an angle. Also, we perceive the object to be the same brightness no matter which angle we are at relative to the object. This is otherwise known as Lambert’s cosine law. Diffuse lighting or Lambertian reflectance is common in everyday life and can be easily seen on a white wall lit up by an indoor light.

Specular lighting: Unlike diffuse lighting, specular lighting changes as we move relative to the object. This gives “shininess” to the object and can be seen on “smoother” surfaces such as glass and other shiny objects.

Simulating light

Just as there are three main types of light in a 3D scene, there are also three main types of light sources: directional, point, and spotlight. These can also be easily seen in everyday life.

Directional lighting: Directional lighting usually comes from a bright source that is so far away that it lights up the entire scene evenly and to the same brightness. This light source is the simplest type as the light is the same strength and direction no matter where you are in the scene.

Point lighting: Point lights can be added to a scene in order to give more varied and realistic lighting. The illumination of a point light falls off with distance, and its light rays travel out in all directions with the point light at the center.

Spot lighting: In addition to the properties of a point light, spot lights also have the direction of light attenuated, usually in the shape of a cone.

The math

In this lesson, we’re going to be looking at ambient lighting and diffuse lighting coming from a point source.

Ambient lighting

Ambient lighting is really indirect diffuse lighting, but it can also be thought of as a low-level light which pervades the entire scene. If we think of it that way, then it becomes very easy to calculate:

final color = material color * ambient light color

For example, let’s say our object is red and our ambient light is a dim white. Let’s assume that we store color as an array of three components: red, green, and blue, using the RGB color model:

final color = {1, 0, 0} * {0.1, 0.1, 0.1} = {0.1, 0.0, 0.0}

The final color of the object will be a dim red, which is what you’d expect if you had a red object illuminated by a dim white light. There is really nothing more to basic ambient lighting than that, unless you want to get into more advanced lighting techniques such as radiosity.
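As a minimal sketch of the multiplication above, the following Java snippet multiplies a material color by an ambient light color, component by component. The class and method names (`AmbientExample`, `modulate`) are hypothetical and are not part of this lesson's source code.

```java
// Hypothetical helper for illustration only; not taken from the lesson's project.
final class AmbientExample {
    /** Multiplies two RGB colors component by component. */
    static float[] modulate(float[] materialColor, float[] lightColor) {
        float[] result = new float[3];
        for (int i = 0; i < 3; i++) {
            result[i] = materialColor[i] * lightColor[i];
        }
        return result;
    }

    public static void main(String[] args) {
        float[] red = {1.0f, 0.0f, 0.0f};
        float[] dimWhite = {0.1f, 0.1f, 0.1f};
        // Red material under a dim white ambient light gives a dim red.
        System.out.println(java.util.Arrays.toString(modulate(red, dimWhite)));
    }
}
```

In a shader the same operation is simply a multiplication of two color vectors, since GLSL multiplies vectors component-wise.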

Diffuse lighting – point light source

For diffuse lighting, we need to add attenuation and a light position. The light position will be used to calculate the angle between the light and the surface, which will affect the surface’s overall level of lighting. It will also be used to calculate the distance between the light and the surface, which determines the strength of the light at that point.

Step 1: Calculate the lambert factor.

The first major calculation we need to make is to figure out the angle between the surface and the light. A surface which is facing the light straight-on should be illuminated at full strength, while a surface which is slanted should get less illumination. The proper way to calculate this is by using Lambert’s cosine law. If we have two vectors, one pointing from a point on the surface to the light, and the second being a surface normal (if the surface is a flat plane, then the surface normal is a vector pointing straight up, or orthogonal to that surface), then we can calculate the cosine of the angle between them by first normalizing each vector so that it has a length of one, and then by calculating the dot product of the two vectors. This is an operation that can easily be done via OpenGL ES 2 shaders.

Let’s call this the lambert factor; it will range between 0 and 1.

light vector = light position - object position

cosine = dot product(object normal, normalize(light vector))

lambert factor = max(cosine, 0)

First we calculate the light vector by subtracting the object position from the light position. We then normalize the light vector, which means changing its length to one while keeping its direction; the object normal should already have a length of one. Next we get the cosine by taking the dot product of the object normal and the normalized light vector, since the dot product of two normalized vectors gives the cosine of the angle between them. Because the dot product can range from -1 to 1, we clamp it to a range of 0 to 1.

Here’s an example with a flat plane at the origin and the surface normal pointing straight up toward the sky. The light is positioned at {0, 10, -10}, or 10 units up and 10 units straight ahead. We want to calculate the light at the origin.

light vector = {0, 10, -10} - {0, 0, 0} = {0, 10, -10}

object normal = {0, 1, 0}

In plain English, if we move from where we are along the light vector, we reach the position of the light. To normalize the vector, we divide each component by the vector length:

light vector length = square root(0*0 + 10*10 + -10*-10) = square root(200) = 14.14

normalized light vector = {0/14.14, 10/14.14, -10/14.14} = {0, 0.707, -0.707}

Then we calculate the dot product:

dot product({0, 1, 0}, {0, 0.707, -0.707}) = (0 * 0) + (1 * 0.707) + (0 * -0.707) = 0 + 0.707 + 0 = 0.707

lambert factor = max(0.707, 0) = 0.707

OpenGL ES 2’s shading language has built-in support for some of these functions so we don’t need to do all of the math by hand, but it can still be useful to understand what is going on.
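For readers who want to check the arithmetic on the CPU, here is a small Java sketch of step 1. The helper names (`LambertExample`, `subtract`, `normalize`, `dot`, `lambertFactor`) are made up for this illustration; it assumes the normal already has a length of one and that the light is not located exactly at the surface point (otherwise the normalization would divide by zero).

```java
// Illustrative CPU-side version of step 1; names are hypothetical.
final class LambertExample {
    static float[] subtract(float[] a, float[] b) {
        return new float[] {a[0] - b[0], a[1] - b[1], a[2] - b[2]};
    }

    static float dot(float[] a, float[] b) {
        return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
    }

    /** Scales v to a length of one. Assumes v is not the zero vector. */
    static float[] normalize(float[] v) {
        float length = (float) Math.sqrt(dot(v, v));
        return new float[] {v[0] / length, v[1] / length, v[2] / length};
    }

    /** Cosine between the unit normal and the direction to the light, clamped to [0, 1]. */
    static float lambertFactor(float[] lightPos, float[] surfacePos, float[] unitNormal) {
        float[] lightVector = normalize(subtract(lightPos, surfacePos));
        return Math.max(dot(unitNormal, lightVector), 0.0f);
    }

    public static void main(String[] args) {
        float factor = lambertFactor(
                new float[] {0, 10, -10},  // light position
                new float[] {0, 0, 0},     // point on the surface
                new float[] {0, 1, 0});    // surface normal
        System.out.println(factor);        // approximately 0.707
    }
}
```

A light placed below the plane, for example at {0, -10, 0}, produces a negative cosine, which the `max` call clamps to zero.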

Step 2: Calculate the attenuation factor.

Next, we need to calculate the attenuation. Real light attenuation from a point light source follows the inverse square law, which can also be stated as:

luminosity = 1 / (distance * distance)

Going back to our example, since we have a distance of 14.14, here is what our final luminosity looks like:

luminosity = 1 / (14.14*14.14) = 1 / 200 = 0.005

As you can see, the inverse square law can lead to a strong attenuation over distance. This is how light from a point light source works in the real world, but since our graphics displays have a limited range, it can be useful to dampen this attenuation factor so we still get realistic lighting without things looking too dark.

Step 3: Calculate the final color.

Now that we have both the cosine and the attenuation, we can calculate our final illumination level:

final color = material color * (light color * lambert factor * luminosity)

Going with our previous example of a red material and a full white light source, here is the final calculation:

final color = {1, 0, 0} * ({1, 1, 1} * 0.707 * 0.005) = {1, 0, 0} * {0.0035, 0.0035, 0.0035} = {0.0035, 0, 0}

To recap, for diffuse lighting we need to use the angle between the surface and the light as well as the distance between the surface and the light in order to calculate the final overall diffuse illumination level. Here are the steps:

//Step one

light vector = light position - object position

cosine = dot product(object normal, normalize(light vector))

lambert factor = max(cosine, 0)

//Step two

luminosity = 1 / (distance * distance)

//Step three

final color = material color * (light color * lambert factor * luminosity)
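The three steps can also be written out as one plain Java method, which is handy for checking numbers before moving the math into a shader. This is only a sketch: the class and method names (`DiffusePointLight`, `shade`) are hypothetical, the normal is assumed to be unit length, and the light is assumed not to sit exactly on the surface point.

```java
// Illustrative CPU-side version of the recap; names are hypothetical.
final class DiffusePointLight {
    /**
     * Returns material color * (light color * lambert factor * luminosity)
     * for a single surface point. Vectors are {x, y, z}; colors are {r, g, b}.
     */
    static float[] shade(float[] materialColor, float[] lightColor,
                         float[] lightPos, float[] surfacePos, float[] unitNormal) {
        // Step one: light vector, cosine, and lambert factor.
        float lx = lightPos[0] - surfacePos[0];
        float ly = lightPos[1] - surfacePos[1];
        float lz = lightPos[2] - surfacePos[2];
        float distance = (float) Math.sqrt(lx * lx + ly * ly + lz * lz);
        // Dividing by the distance normalizes the light vector.
        float cosine = (unitNormal[0] * lx + unitNormal[1] * ly + unitNormal[2] * lz) / distance;
        float lambertFactor = Math.max(cosine, 0.0f);

        // Step two: inverse-square attenuation.
        float luminosity = 1.0f / (distance * distance);

        // Step three: combine with the material and light colors.
        float[] finalColor = new float[3];
        for (int i = 0; i < 3; i++) {
            finalColor[i] = materialColor[i] * (lightColor[i] * lambertFactor * luminosity);
        }
        return finalColor;
    }
}
```

Calling `shade` with the red material, the white light at {0, 10, -10}, the origin as the surface point, and the normal {0, 1, 0} gives a red component of roughly 0.0035, matching the worked example.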

Putting this all into OpenGL ES 2 shaders

The vertex shader

final String vertexShader =

"uniform mat4 u_MVPMatrix; \n" // A constant representing the combined model/view/projection matrix.

+"uniform mat4 u_MVMatrix; \n" // A constant representing the combined model/view matrix.

+"uniform vec3 u_LightPos; \n" // The position of the light in eye space.

+"attribute vec4 a_Position; \n" // Per-vertex position information we will pass in.

+"attribute vec4 a_Color; \n" // Per-vertex color information we will pass in.

+"attribute vec3 a_Normal; \n" // Per-vertex normal information we will pass in.

+"varying vec4 v_Color; \n" // This will be passed into the fragment shader.

+"void main() \n" // The entry point for our vertex shader.

+"{ \n"

// Transform the vertex into eye space.

+" vec3 modelViewVertex = vec3(u_MVMatrix * a_Position); \n"

// Transform the normal's orientation into eye space.

+" vec3 modelViewNormal = vec3(u_MVMatrix * vec4(a_Normal, 0.0)); \n"

// Will be used for attenuation.

+" float distance = length(u_LightPos - modelViewVertex); \n"

// Get a lighting direction vector from the vertex to the light.

+" vec3 lightVector = normalize(u_LightPos - modelViewVertex); \n"

// Calculate the dot product of the light vector and vertex normal. If the normal and light vector are

// pointing in the same direction then it will get max illumination.

+" float diffuse = max(dot(modelViewNormal, lightVector), 0.1); \n"

// Attenuate the light based on distance.

+" diffuse = diffuse * (1.0 / (1.0 + (0.25 * distance * distance))); \n"

// Multiply the color by the illumination level. It will be interpolated across the triangle.

+" v_Color = a_Color * diffuse; \n"

// gl_Position is a special variable used to store the final position.

// Multiply the vertex by the matrix to get the final point in normalized screen coordinates.

+" gl_Position = u_MVPMatrix * a_Position; \n"

+"} \n";

There is quite a bit going on here. We have our combined model/view/projection matrix as in lesson one, but we’ve also added a model/view matrix. Why? We will need this matrix in order to calculate the distance between the position of the light source and the position of the current vertex. For diffuse lighting, it actually doesn’t matter whether you use world space (model matrix) or eye space (model/view matrix) so long as you can calculate the proper distances and angles.

We pass in the vertex color and position information, as well as the surface normal. We will pass the final color to the fragment shader, which will interpolate it between the vertices. This is also known as Gouraud shading.

Let’s look at each part of the shader to see what’s going on:

// Transform the vertex into eye space.

+" vec3 modelViewVertex = vec3(u_MVMatrix * a_Position); \n"

Since we’re passing in the position of the light in eye space, we convert the current vertex position to a coordinate in eye space so we can calculate the proper distances and angles.

// Transform the normal's orientation into eye space.

+" vec3 modelViewNormal = vec3(u_MVMatrix * vec4(a_Normal, 0.0)); \n"

We also need to transform the normal’s orientation. Here we are just doing a regular matrix multiplication like with the position, but if the model or view matrices have been scaled or skewed, this won’t work: we’ll actually have to undo the effect of the skew or scale by multiplying the normal by the transpose of the inverse of the original matrix. This website best explains why we have to do this.
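To make the scaling problem concrete, here is a small plain-Java sketch. It is not how the lesson's code handles normals; it only demonstrates the math. The names (`NormalMatrixExample`, `inverseTranspose`, `multiply`, `dot`) are hypothetical, and for readability the 3x3 matrices are stored row-major, unlike the column-major `float[16]` arrays OpenGL uses. The matrix is assumed to be invertible.

```java
// Illustration of why normals need the inverse transpose; names are hypothetical.
final class NormalMatrixExample {
    /** Inverse transpose of a row-major 3x3 matrix (cofactor matrix / determinant). */
    static float[] inverseTranspose(float[] m) {
        float a = m[0], b = m[1], c = m[2];
        float d = m[3], e = m[4], f = m[5];
        float g = m[6], h = m[7], i = m[8];
        float[] cof = {
            e * i - f * h, f * g - d * i, d * h - e * g,
            c * h - b * i, a * i - c * g, b * g - a * h,
            b * f - c * e, c * d - a * f, a * e - b * d
        };
        float det = a * cof[0] + b * cof[1] + c * cof[2]; // assumed non-zero
        for (int k = 0; k < 9; k++) {
            cof[k] /= det;
        }
        return cof;
    }

    /** Multiplies a row-major 3x3 matrix by a 3-component vector. */
    static float[] multiply(float[] m, float[] v) {
        return new float[] {
            m[0] * v[0] + m[1] * v[1] + m[2] * v[2],
            m[3] * v[0] + m[4] * v[1] + m[5] * v[2],
            m[6] * v[0] + m[7] * v[1] + m[8] * v[2]
        };
    }

    static float dot(float[] a, float[] b) {
        return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
    }

    public static void main(String[] args) {
        float[] scale = {2, 0, 0,  0, 1, 0,  0, 0, 1}; // non-uniform scale along X
        float[] tangent = {1, 1, 0};                   // lies in the surface
        float[] normal = {1, -1, 0};                   // perpendicular to the tangent

        float[] scaledTangent = multiply(scale, tangent);
        float[] naiveNormal = multiply(scale, normal);
        float[] fixedNormal = multiply(inverseTranspose(scale), normal);

        // A correct normal stays perpendicular to the surface, so its dot product is zero.
        System.out.println("naive: " + dot(scaledTangent, naiveNormal));
        System.out.println("fixed: " + dot(scaledTangent, fixedNormal));
    }
}
```

With this non-uniform scale, the naively transformed normal is no longer perpendicular to the transformed surface, while the one transformed by the inverse transpose still is. The result generally needs to be normalized again before it is used in a dot product.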

// Will be used for attenuation.

+" float distance = length(u_LightPos - modelViewVertex); \n"

As shown before in the math section, we need the distance in order to calculate the attenuation factor.

// Get a lighting direction vector from the vertex to the light.

+" vec3 lightVector = normalize(u_LightPos - modelViewVertex); \n"

We also need the light vector to calculate the Lambertian reflectance factor.

// Calculate the dot product of the light vector and vertex normal. If the normal and light vector are

// pointing in the same direction then it will get max illumination.

+" float diffuse = max(dot(modelViewNormal, lightVector), 0.1); \n"

This is the same math as above in the math section, just done in an OpenGL ES 2 shader. The 0.1 at the end is just a really cheap way of doing ambient lighting (the value will be clamped to a minimum of 0.1).

// Attenuate the light based on distance.

+" diffuse = diffuse * (1.0 / (1.0 + (0.25 * distance * distance))); \n"

The attenuation math is a bit different than above in the math section. We scale the square of the distance by 0.25 to dampen the attenuation effect, and we also add 1.0 to the modified distance so that we don’t get oversaturation when the light is very close to an object (otherwise, when the distance is less than one, this equation will actually brighten the light instead of attenuating it).
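To see how the two attenuation formulas differ, the following Java sketch evaluates both at a few distances. The class and method names (`AttenuationExample`, `inverseSquare`, `damped`) are hypothetical; the formulas are the ones from the math section and from the shader line above.

```java
// Compares the physical inverse-square law with the damped form used in the shader.
final class AttenuationExample {
    /** Inverse square law from the math section: 1 / d^2. */
    static float inverseSquare(float distance) {
        return 1.0f / (distance * distance);
    }

    /** Damped form from the vertex shader: 1 / (1 + 0.25 * d^2). */
    static float damped(float distance) {
        return 1.0f / (1.0f + (0.25f * distance * distance));
    }

    public static void main(String[] args) {
        float[] distances = {0.5f, 1.0f, 2.0f, 14.14f};
        for (float d : distances) {
            System.out.println("distance " + d
                    + ": inverse square = " + inverseSquare(d)
                    + ", damped = " + damped(d));
        }
    }
}
```

At a distance of 0.5 the plain inverse square law returns 4, which would brighten the light, while the damped form never exceeds 1. At larger distances the damped form falls off more slowly, so far-away surfaces stay visible.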

// Multiply the color by the illumination level. It will be interpolated across the triangle.

+" v_Color = a_Color * diffuse; \n"

// gl_Position is a special variable used to store the final position.

// Multiply the vertex by the matrix to get the final point in normalized screen coordinates.

+" gl_Position = u_MVPMatrix * a_Position; \n"

Once we have our final light color, we multiply it by the vertex color to get the final output color, and then we project the position of this vertex to the screen.

The pixel shader

final String fragmentShader =

"precision mediump float; \n" // Set the default precision to medium. We don't need as high of a

// precision in the fragment shader.

+ "varying vec4 v_Color; \n" // This is the color from the vertex shader interpolated across the

// triangle per fragment.

+ "void main() \n" // The entry point for our fragment shader.

+ "{ \n"

+ " gl_FragColor = v_Color; \n" // Pass the color directly through the pipeline.

+ "} \n";

Because we are calculating light on a per-vertex basis, our fragment shader looks the same as it did in the first lesson; all we do is pass the color straight through. In the next lesson, we’ll look at per-pixel lighting.

Per-Vertex versus per-pixel lighting

In this lesson we have focused on implementing per-vertex lighting. For diffuse lighting of objects with smooth surfaces, such as terrain, or for objects with many triangles, this will often be good enough. However, when your objects don’t contain many vertices (such as our cubes in this example program) or have sharp corners, vertex lighting can result in artifacts as the light level is linearly interpolated across the polygon; these artifacts also become much more apparent when specular highlights are added to the image. More can be seen at the Wiki article on Gouraud shading.
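The interpolation artifact can be illustrated with a little arithmetic in Java. The sketch below assumes a hypothetical setup that is not part of the lesson's program: a flat floor in the y = 0 plane with its normal pointing up, a wide polygon whose two vertices sit at x = -5 and x = 5, and a light hovering one unit above the polygon's center. It uses the damped attenuation from this lesson's shader but clamps the cosine to 0 rather than 0.1. All names are made up for the example.

```java
// Compares interpolated per-vertex lighting with lighting computed at the pixel itself.
final class VertexVsPixelLighting {
    /** Diffuse level at floor point {x, 0, 0} for a light at {0, lightHeight, 0}. */
    static float diffuseAt(float x, float lightHeight) {
        float distance = (float) Math.sqrt(x * x + lightHeight * lightHeight);
        // dot({0, 1, 0}, normalized light vector) reduces to lightHeight / distance.
        float lambert = Math.max(lightHeight / distance, 0.0f);
        return lambert * (1.0f / (1.0f + (0.25f * distance * distance)));
    }

    /** Per-vertex: light the two end vertices, then interpolate linearly to the midpoint. */
    static float perVertexCenter(float halfWidth, float lightHeight) {
        float left = diffuseAt(-halfWidth, lightHeight);
        float right = diffuseAt(halfWidth, lightHeight);
        return 0.5f * (left + right);
    }

    /** Per-pixel: evaluate the lighting directly at the midpoint. */
    static float perPixelCenter(float lightHeight) {
        return diffuseAt(0.0f, lightHeight);
    }

    public static void main(String[] args) {
        System.out.println("per-vertex center: " + perVertexCenter(5.0f, 1.0f));
        System.out.println("per-pixel center:  " + perPixelCenter(1.0f));
    }
}
```

In this setup the center of the polygon should be the brightest spot, but per-vertex lighting only samples the dim corners, so the bright spot under the light is lost. Adding more vertices narrows the gap.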

An explanation of the changes to the program

Besides the addition of per-vertex lighting, there are other changes to the program. We’ve switched from displaying a few triangles to a few cubes, and we’ve also added utility functions to load in the shader programs. There are also new shaders to display the position of the light as a point, as well as other various small changes.

Construction of the cube

In lesson one, we packed both position and color attributes into the same array, but OpenGL ES 2 also lets us specify these attributes in separate arrays:

// X, Y, Z

final float[] cubePositionData =

{

// In OpenGL counter-clockwise winding is default. This means that when we look at a triangle,

// if the points are counter-clockwise we are looking at the "front". If not we are looking at

// the back. OpenGL has an optimization where all back-facing triangles are culled, since they

// usually represent the backside of an object and aren't visible anyways.

// Front face

-1.0f, 1.0f, 1.0f,

-1.0f, -1.0f, 1.0f,

1.0f, 1.0f, 1.0f,

-1.0f, -1.0f, 1.0f,

1.0f, -1.0f, 1.0f,

1.0f, 1.0f, 1.0f,

...

// R, G, B, A

final float[] cubeColorData =

{

// Front face (red)

1.0f, 0.0f, 0.0f, 1.0f,

1.0f, 0.0f, 0.0f, 1.0f,

1.0f, 0.0f, 0.0f, 1.0f,

1.0f, 0.0f, 0.0f, 1.0f,

1.0f, 0.0f, 0.0f, 1.0f,

1.0f, 0.0f, 0.0f, 1.0f,

...
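On Android, Java arrays like these are typically copied into direct, native-order buffers before they are handed to OpenGL ES. The sketch below shows one common way to do that with `java.nio`; the class and method names (`AttributeBuffers`, `toFloatBuffer`) are hypothetical and this is not necessarily how the lesson's own code is organized.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.FloatBuffer;

// Hypothetical helper that wraps attribute data in a buffer OpenGL ES can read.
final class AttributeBuffers {
    static final int BYTES_PER_FLOAT = 4;

    /** Copies the array into a direct FloatBuffer in the platform's native byte order. */
    static FloatBuffer toFloatBuffer(float[] data) {
        FloatBuffer buffer = ByteBuffer
                .allocateDirect(data.length * BYTES_PER_FLOAT)
                .order(ByteOrder.nativeOrder())
                .asFloatBuffer();
        buffer.put(data);
        buffer.position(0); // rewind so reading starts at the first element
        return buffer;
    }
}
```

With separate arrays, each attribute gets its own buffer, and each buffer would be passed to its own `GLES20.glVertexAttribPointer` call with three floats per vertex for positions and four for colors.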

New OpenGL flags

We have also enabled culling and the depth buffer via glEnable() calls:

// Use culling to remove back faces.

GLES20.glEnable(GLES20.GL_CULL_FACE);

// Enable depth testing

GLES20.glEnable(GLES20.GL_DEPTH_TEST);

As an optimization, you can tell OpenGL to eliminate triangles that are on the back side of an object. When we defined our cube, we also defined the three points of each triangle so that they are counter-clockwise when looking at the “front” side. When we flip the triangle around so we’re looking at the “back” side, the points then appear clockwise. You can only ever see three sides of a cube at the same time so this optimization tells OpenGL to not waste its time drawing the back sides of triangles.
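The winding order of a triangle can be checked with a signed-area test. The Java sketch below is a simplification for illustration only: it assumes the viewer looks straight down the negative Z axis at the front face, so only the X and Y coordinates matter, whereas OpenGL performs the equivalent test on the projected window coordinates. The names (`WindingExample`, `signedArea2`, `isCounterClockwise`) are hypothetical.

```java
// Signed-area winding test on the XY plane; names are hypothetical.
final class WindingExample {
    /** Twice the signed area of triangle a-b-c projected onto the XY plane. */
    static float signedArea2(float[] a, float[] b, float[] c) {
        return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]);
    }

    /** A positive signed area means the points run counter-clockwise. */
    static boolean isCounterClockwise(float[] a, float[] b, float[] c) {
        return signedArea2(a, b, c) > 0.0f;
    }

    public static void main(String[] args) {
        // First triangle of the cube's front face, as listed in cubePositionData.
        float[] p0 = {-1.0f, 1.0f, 1.0f};
        float[] p1 = {-1.0f, -1.0f, 1.0f};
        float[] p2 = {1.0f, 1.0f, 1.0f};
        System.out.println(isCounterClockwise(p0, p1, p2)); // front-facing order
        System.out.println(isCounterClockwise(p0, p2, p1)); // same points, reversed order
    }
}
```

Swapping any two vertices reverses the winding, which is the same effect as viewing the triangle from behind.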

Later when we draw transparent objects we may want to turn culling back off, as then it will be possible to see the back sides of objects.

We’ve also enabled depth testing. If you always draw things in order from back to front, depth testing is not strictly necessary. Enabling it means you no longer need to worry about the draw order (although rendering can be faster if you draw closer objects first), and some graphics cards will also make optimizations that speed up rendering by spending less time on pixels that would be drawn over anyway.

Changes in loading shader programs

Because the steps to loading shader programs in OpenGL are mostly the same, these steps can easily be refactored into a separate method. We’ve also added the following calls to retrieve debug info, in case the compilation/link fails:

GLES20.glGetProgramInfoLog(programHandle);

GLES20.glGetShaderInfoLog(shaderHandle);

Vertex and shader program for the light point

There is a new vertex and shader program specifically for drawing the point on the screen that represents the current position of the light:

// Define a simple shader program for our point.

final String pointVertexShader =

"uniform mat4 u_MVPMatrix; \n"

+"attribute vec4 a_Position; \n"

+"void main() \n"

+"{ \n"

+" gl_Position = u_MVPMatrix \n"

+" * a_Position; \n"

+" gl_PointSize = 5.0; \n"

+"} \n";

final String pointFragmentShader =

"precision mediump float; \n"

+"void main() \n"

+"{ \n"

+" gl_FragColor = vec4(1.0, \n"

+" 1.0, 1.0, 1.0); \n"

+"} \n";

This shader is similar to the simple shader from the first lesson. There’s a new property, gl_PointSize, which we hard-code to 5.0; this is the output point size in pixels. It’s used when we draw the point using GLES20.GL_POINTS as the mode. We’ve also hard-coded the output color to white.

Further Exercises

- Try removing the “oversaturation protection” and see what happens.

- There is a flaw with the way the ambient lighting is done. Can you spot what it is?

- What happens if you add a gl_PointSize to the cube shader and draw it using GL_POINTS?

Further Reading

- Fallout Software: OpenGL Lighting or How Light Sources Work (Long, In-depth Tutorial)

- Clockworkcoders Tutorials: Per Fragment Lighting

- Lighthouse3D.com: The Normal Matrix

- arcsynthesis.org: OpenGL Tutorials: Normal Transformation

- OpenGL Programming Guide: Chapter 5 Lighting

The further reading section above was an invaluable resource to me while writing this tutorial, so I highly recommend reading them for more information and explanations.