corosync+pacemaker构建高可用web集群

一、概述:

1.1 什么是AIS和OpenAIS?

AIS是应用接口规范,是用来定义应用程序接口(API)的开放性规范的集合,这些应用程序作为中间件为应用服务提供一种开放、高移植性的程序接口。是在实现高可用应用过程中是亟需的。服务可用性论坛(SA Forum)是一个开放性论坛,它开发并发布这些免费规范。使用AIS规范的应用程序接口(API),可以减少应用程序的复杂性和缩短应用程序的开发时间,这些规范的主要目的就是为了提高中间组件可移植性和应用程序的高可用性。

OpenAIS是基于SA Forum 标准的集群框架的应用程序接口规范。OpenAIS提供一种集群模式,这个模式包括集群框架,集群成员管理,通信方式,集群监测等,能够为集群软件或工具提供满足 AIS标准的集群接口,但是它没有集群资源管理功能,不能独立形成一个集群。

1.2 corosync简介

corosync最初只是用来演示OpenAIS集群框架接口规范的一个应用,可以说corosync是OpenAIS的一部分,但后面的发展明显超越了官方最初的设想,越来越多的厂商尝试使用corosync作为集群解决方案。如Redhat的RHCS集群套件就是基于corosync实现。

corosync只提供了message layer,而没有直接提供CRM,一般使用Pacemaker进行资源管理。

1.3 CRM中的几个基本概念

1.3.1 资源粘性:

资源粘性表示资源是否倾向于留在当前节点,如果为正整数,表示倾向,负数则会离开,-inf表示正无穷,inf表示正无穷。

1.3.2 资源类型:

primitive(native):基本资源,原始资源

group:资源组

clone:克隆资源(可同时运行在多个节点上),要先定义为primitive后才能进行clone。主要包含STONITH和集群文件系统(cluster filesystem)

master/slave:主从资源,如drdb(下文详细讲解)

1.3.3 RA类型:

Lsb:linux表中库,一般位于/etc/rc.d/init.d/目录下的支持start|stop|status等参数的服务脚本都是lsb

ocf:Open cluster Framework,开放集群架构

heartbeat:heartbaet V1版本

stonith:专为配置stonith设备而用

1.5 集群类型和模型

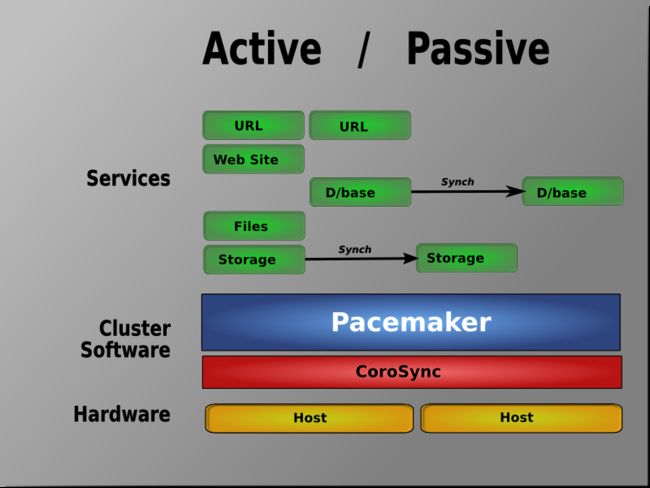

corosync+pacemaker可实现多种集群模型,包括 Active/Active, Active/Passive, N+1, N+M, N-to-1 and N-to-N。

Active/Passive 冗余:

N to N 冗余(多个节点多个服务):

二、在CentOS 6.x上配置基于corosync的Web高可用

2.1 基本前提配置:

两个节点要实现时间同步、ssh互信、hosts名称解析、安装httpd,这里不做详细介绍。

[root@node1 ~]# yum install httpd –y

[root@node1 ~]# echo "node1.toxingwang.com" >/var/www/html/index.html

[root@node2 ~]# yum install httpd –y

[root@node2 ~]# echo "node2.toxingwang.com" >/var/www/html/index.html

记得一定要关闭httpd的自启动:

[root@node1 ~]# chkconfig httpd off

[root@node1 ~]# chkconfig --list httpd

httpd 0:关闭 1:关闭 2:关闭 3:关闭 4:关闭 5:关闭 6:关闭[root@node2 ~]# chkconfig --list httpd

httpd 0:关闭 1:关闭 2:关闭 3:关闭 4:关闭 5:关闭 6:关闭

2.2 安装corosync:

注意,以下所有安装都是需要在所有节点上执行的。

2.2.1 安装依赖:

yum install libibverbs librdmacm lm_sensors libtool-ltdl openhpi-libs openhpi perl-TimeDate

2.2.2 安装集群组件:

RHEL6.x以后的版本中,直接集成了corosync和pacemaker,因此直接使用yum安装即可。

yum install corosync pacemaker –y

查看corosync安装生成文件:

[root@node1 ~]# rpm -ql corosync

/etc/corosync ##配置文件主目录

/etc/corosync/corosync.conf.example ##示例配置文件

/etc/corosync/corosync.conf.example.udpu

/etc/corosync/service.d

/etc/corosync/uidgid.d

/etc/dbus-1/system.d/corosync-signals.conf

/etc/rc.d/init.d/corosync ##服务脚本

/etc/rc.d/init.d/corosync-notifyd

/usr/bin/corosync-blackbox

/usr/libexec/lcrso

/usr/libexec/lcrso/coroparse.lcrso

/usr/libexec/lcrso/objdb.lcrso

/usr/libexec/lcrso/quorum_testquorum.lcrso

/usr/libexec/lcrso/quorum_votequorum.lcrso

/usr/libexec/lcrso/service_cfg.lcrso

/usr/libexec/lcrso/service_confdb.lcrso

/usr/libexec/lcrso/service_cpg.lcrso

/usr/libexec/lcrso/service_evs.lcrso

/usr/libexec/lcrso/service_pload.lcrso

/usr/libexec/lcrso/vsf_quorum.lcrso

/usr/libexec/lcrso/vsf_ykd.lcrso

/usr/sbin/corosync

/usr/sbin/corosync-cfgtool

/usr/sbin/corosync-cpgtool

/usr/sbin/corosync-fplay

/usr/sbin/corosync-keygen ##集群间通讯秘钥生成工具

/usr/sbin/corosync-notifyd

/usr/sbin/corosync-objctl

/usr/sbin/corosync-pload

/usr/sbin/corosync-quorumtool

/usr/share/doc/corosync-1.4.1……下略……

2.2.3 安装crmsh实现资源管理:

从pacemaker 1.1.8开始,crm发展成了一个独立项目,叫crmsh。也就是说,我们安装了pacemaker后,并没有crm这个命令,我们要实现对集群资源管理,还需要独立安装crmsh。crmsh的rpm安装可从如下地址下载:

http://download.opensuse.org/repositories/network:/ha-clustering:/Stable/CentOS_CentOS-6/x86_64/

crmsh依赖于pssh,因此也需要通过上面地址下载pssh.rpm

[root@node1 ~]# wget http://download.opensuse.org/repositories/network:/ha-clustering:/Stable/CentOS_CentOS-6/x86_64/crmsh-1.2.6-5.1.x86_64.rpm

[root@node1 ~]# wget http://download.opensuse.org/repositories/network:/ha-clustering:/Stable/CentOS_CentOS-6/x86_64/pssh-2.3.1-3.2.x86_64.rpm

[root@node1 ~]# yum --nogpgcheck localinstall pssh-2.3.1-3.2.x86_64.rpm crmsh-1.2.6-5.1.x86_64.rpm

特别注意:

使用了crmsh,就不在需要安装heartbeat,之前的版本中都需要安装heartbeat以利用其crm进行资源管理。网上大量的教程中都是基于pacemaker1.1.8以前的版本的。

说明:

如果不用yum安装,则所有的rpm包均可在http://clusterlabs.org/rpm/epel-5/x86_64/下载,具体如下:

cluster-glue

cluster-glue-libs

heartbeat

resource-agents

corosync

heartbeat-libs

pacemaker

corosynclib

libesmtp

pacemaker-libs

2.3 配置corosync:

2.3.1 主配置文件:

[root@node1 ~]# cd /etc/corosync/ <--主配置文件目录

[root@node1 corosync]# ls

corosync.conf.example corosync.conf.example.udpu service.d uidgid.d[root@node1 corosync]# cp corosync.conf.example corosync.conf

[root@node1 corosync]# vi corosync.conf# Please read the corosync.conf.5 manual page

compatibility: whitetanktotem {

version: 2 ##版本号,只能是2,不能修改

secauth: on ##安全认证,当使用aisexec时,会非常消耗CPU

threads: 2 ##线程数,根据CPU个数和核心数确定

interface {

ringnumber: 0 ##冗余环号,节点有多个网卡是可定义对应网卡在一个环内

bindnetaddr: 192.168.8.0 ##绑定心跳网段

mcastaddr: 226.94.8.8 ##心跳组播地址

mcastport: 5405 ##心跳组播使用端口

ttl: 1

}

}logging {

fileline: off ##指定要打印的行

to_stderr: no ##是否发送到标准错误输出

to_logfile: yes ##记录到文件

to_syslog: no ##记录到syslog

logfile: /var/log/cluster/corosync.log

debug: off

timestamp: on ##是否打印时间戳,利于定位错误,但会消耗CPU

logger_subsys {

subsys: AMF

debug: off

}

}service {

ver: 0

name: pacemaker ##定义corosync启动时同时启动pacemaker

# use_mgmtd: yes

}aisexec {

user: root

group: root

}amf {

mode: disabled

}

2.3.2 生成认证key:

使用corosync-keygen生成key时,由于要使用/dev/random生成随机数,因此如果新装的系统操作不多,如果没有足够的熵(关于random使用键盘敲击产生随机数的原理可自行google),可能会出现如下提示:

[root@node1 corosync]# corosync-keygen

Corosync Cluster Engine Authentication key generator.

Gathering 1024 bits for key from /dev/random.

Press keys on your keyboard to generate entropy.

Press keys on your keyboard to generate entropy (bits = 240).

此时只需要在本地登录后狂敲键盘即可!

[root@node1 corosync]# corosync-keygen

Corosync Cluster Engine Authentication key generator.

Gathering 1024 bits for key from /dev/random.

Press keys on your keyboard to generate entropy.

Writing corosync key to /etc/corosync/authkey.

[root@node1 corosync]# ll

总用量 24

-r--------. 1 root root 128 10月 19 19:18 authkey ##注意文件权限,该文件为data类型,因此无法直接查看

-rw-r--r--. 1 root root 546 10月 19 18:30 corosync.conf

-rw-r--r--. 1 root root 445 5月 15 05:09 corosync.conf.example

-rw-r--r--. 1 root root 1084 5月 15 05:09 corosync.conf.example.udpu

drwxr-xr-x. 2 root root 4096 5月 15 05:09 service.d

drwxr-xr-x. 2 root root 4096 5月 15 05:09 uidgid.d

2.3.3 拷贝配置至节点2:

[root@node1 corosync]# scp authkey corosync.conf node2:/etc/corosync/

authkey 100% 128 0.1KB/s 00:00

corosync.conf 100% 546 0.5KB/s 00:00

2.3.4 启动corosync

[root@node1 corosync]# service corosync start

Starting Corosync Cluster Engine (corosync): [确定]

[root@node1 corosync]# ssh node2 'service corosync start'

Starting Corosync Cluster Engine (corosync): [确定]

2.3.5 检查启动情况:

查看corosync引擎是否正常启动:

[root@node1 corosync]# grep -e "Corosync Cluster Engine" -e "configuration file" /var/log/messages

Oct 19 19:21:21 node1 corosync[2360]: [MAIN ] Corosync Cluster Engine ('1.4.1'): started and ready to provide service.

Oct 19 19:21:21 node1 corosync[2360]: [MAIN ] Successfully read main configuration file '/etc/corosync/corosync.conf'.

查看初始化成员节点通知是否正常发出:

[root@node1 corosync]# grep TOTEM /var/log/messages

Oct 19 19:21:21 node1 corosync[2360]: [TOTEM ] Initializing transport (UDP/IP Multicast).

Oct 19 19:21:21 node1 corosync[2360]: [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0).

Oct 19 19:21:22 node1 corosync[2360]: [TOTEM ] The network interface [192.168.8.101] is now up.

Oct 19 19:21:23 node1 corosync[2360]: [TOTEM ] Process pause detected for 1264 ms, flushing membership messages.

Oct 19 19:21:23 node1 corosync[2360]: [TOTEM ] A processor joined or left the membership and a new membership was formed.

检查启动过程中是否有错误产生:

[root@node1 corosync]# grep ERROR: /var/log/messages | grep -v unpack_resources

Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] ERROR: process_ais_conf: You have configured a cluster using the Pacemaker plugin for Corosync. The plugin is not supported in this environment and will be removed very soon.

Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] ERROR: process_ais_conf: Please see Chapter 8 of 'Clusters from Scratch' (http://www.clusterlabs.org/doc) for details on using Pacemaker with CMAN

查看pacemaker是否正常启动:

[root@node1 corosync]# grep pcmk_startup /var/log/messages

Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] info: pcmk_startup: CRM: Initialized

Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] Logging: Initialized pcmk_startup

Oct 19 19:21:22 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Maximum core file size is: 18446744073709551615

Oct 19 19:21:23 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Service: 9

Oct 19 19:21:23 node1 corosync[2360]: [pcmk ] info: pcmk_startup: Local hostname: node1.toxingwang.com

可能存在的问题:iptables没有配置相关策略,导致两个节点无法通信。可关闭iptables或配置节点间的通信策略。

3、集群资源管理

3.1 crmsh基本介绍

[root@node1 ~]# crm <--进入crmsh

crm(live)# help ##查看帮助This is crm shell, a Pacemaker command line interface.

Available commands:

cib manage shadow CIBs ##CIB管理模块

resource resources management ##资源管理模块

configure CRM cluster configuration ##CRM配置,包含资源粘性、资源类型、资源约束等

node nodes management ##节点管理

options user preferences ##用户偏好

history CRM cluster history ##CRM 历史

site Geo-cluster support ##地理集群支持

ra resource agents information center ##资源代理配置

status show cluster status ##查看集群状态

help,? show help (help topics for list of topics) ##查看帮助

end,cd,up go back one level ##返回上一级

quit,bye,exit exit the program ##退出

crm(live)# configure <--进入配置模式crm(live)configure# show ##查看当前配置

node node1.toxingwang.com

node node2.toxingwang.com

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2"crm(live)configure# verify ##检查当前配置语法,由于没有STONITH,所以报错,可关闭

error: unpack_resources: Resource start-up disabled since no STONITH resources have been defined

error: unpack_resources: Either configure some or disable STONITH with the stonith-enabled option

error: unpack_resources: NOTE: Clusters with shared data need STONITH to ensure data integrity

Errors found during check: config not valid

-V may provide more detailscrm(live)configure# property stonith-enabled=false ##禁用stonith后再次检查配置,无报错

crm(live)configure# verifycrm(live)configure# commit ##提交配置

crm(live)configure# cd

crm(live)# ra <--进入RA(资源代理配置)模式

crm(live)ra# helpThis level contains commands which show various information about

the installed resource agents. It is available both at the top

level and at the `configure` level.Available commands:

classes list classes and providers ##查看RA类型

list list RA for a class (and provider) ##查看指定类型(或提供商)的RA

meta,info show meta data for a RA ##查看RA详细信息

providers show providers for a RA and a class ##查看指定资源的提供商和类型

help,? show help (help topics for list of topics)

end,cd,up go back one level

quit,bye,exit exit the program

crm(live)ra# classes

lsb

ocf / heartbeat pacemaker redhat

service

stonithcrm(live)ra# list ocf pacemaker

ClusterMon Dummy HealthCPU HealthSMART Stateful SysInfo SystemHealth controld o2cb ping pingdcrm(live)ra# info ocf:heartbeat:IPaddr

Manages virtual IPv4 addresses (portable version) (ocf:heartbeat:IPaddr)

This script manages IP alias IP addresses

It can add an IP alias, or remove one.Parameters (* denotes required, [] the default):

ip* (string): IPv4 address

The IPv4 address to be configured in dotted quad notation, for example

"192.168.1.1".nic (string, [eth0]): Network interface

The base network interface on which the IP address will be brought

online.……下略……

crm(live)ra# cd

crm(live)# status <--查看集群状态

Last updated: Sun Oct 20 22:06:16 2013

Last change: Sun Oct 20 21:58:46 2013 via cibadmin on node1.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

0 Resources configured.Online: [ node1.toxingwang.com node2.toxingwang.com ]

3.2 法定票数问题:

在双节点集群中,由于票数是偶数,当心跳出现问题(脑裂)时,两个节点都将达不到法定票数,默认quorum策略会关闭集群服务,为了避免这种情况,可以增加票数为奇数(如前文的增加ping节点),或者调整默认quorum策略为【ignore】。

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# show

node node1.toxingwang.com

node node2.toxingwang.com

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

crm(live)configure# commit

3.3 防止资源在节点恢复后移动

故障发生时,资源会迁移到正常节点上,但当故障节点恢复后,资源可能再次回到原来节点,这在有些情况下并非是最好的策略,因为资源的迁移是有停机时间的,特别是一些复杂的应用,如oracle数据库,这个时间会更长。为了避免这种情况,可以根据需要,使用本文1.3.1介绍的资源粘性策略。

crm(live)configure# rsc_defaults resource-stickiness=100 ##设置资源粘性为100

3.4 配置一个web集群

3.4.1 定义IP:

crm(live)configure# primitive WebIP ocf:heartbeat:IPaddr params ip=192.168.8.100

crm(live)configure# commitcrm(live)configure# cd

crm(live)# status

Last updated: Sun Oct 20 22:34:35 2013

Last change: Sun Oct 20 22:34:12 2013 via cibadmin on node1.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.Online: [ node1.toxingwang.com node2.toxingwang.com ]

WebIP (ocf::heartbeat:IPaddr): Started node1.toxingwang.com

注意上述最后一行,定义的资源已经的node1上启动。使用ifconfig命令也可以看到该IP已生效:

[root@node1 ~]# ifconfig

eth0 Link encap:Ethernet HWaddr 00:0C:29:65:6A:A4

inet addr:192.168.8.101 Bcast:192.168.8.255 Mask:255.255.255.0

inet6 addr: fe80::20c:29ff:fe65:6aa4/64 Scope:Link

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

RX packets:28845 errors:0 dropped:0 overruns:0 frame:0

TX packets:38476 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:1000

RX bytes:4593211 (4.3 MiB) TX bytes:5878478 (5.6 MiB)eth0:0 Link encap:Ethernet HWaddr 00:0C:29:65:6A:A4

inet addr:192.168.8.100 Bcast:192.168.8.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

inet6 addr: ::1/128 Scope:Host

UP LOOPBACK RUNNING MTU:16436 Metric:1

RX packets:176 errors:0 dropped:0 overruns:0 frame:0

TX packets:176 errors:0 dropped:0 overruns:0 carrier:0

collisions:0 txqueuelen:0

RX bytes:23900 (23.3 KiB) TX bytes:23900 (23.3 KiB)

此时,我们在node2上停止node1的corosync服务,就会发现该IP自动转移到node2:

[root@node2 ~]# ssh node1 'service corosync stop'

Signaling Corosync Cluster Engine (corosync) to terminate: [确定]

Waiting for corosync services to unload:.......................................[确定][root@node2 ~]# crm status

Last updated: Sun Oct 20 23:00:02 2013

Last change: Sun Oct 20 22:34:12 2013 via cibadmin on node1.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition WITHOUT quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

1 Resources configured.Online: [ node2.toxingwang.com ]

OFFLINE: [ node1.toxingwang.com ]WebIP (ocf::heartbeat:IPaddr): Started node2.toxingwang.com

[root@node2 ~]# ssh node1 'service corosync start' <--重新启动node1

Starting Corosync Cluster Engine (corosync): [确定]

3.4.2 配置httpd资源:

crm(live)configure# primitive WebSite lsb:httpd ##定义资源,资源类型为lsb

crm(live)configure# verify

crm(live)configure# show

node node1.toxingwang.com

node node2.toxingwang.com

primitive WebIP ocf:heartbeat:IPaddr \

params ip="192.168.8.100"

primitive WebSite lsb:httpd

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

crm(live)configure# commitcrm(live)configure# cd

crm(live)# status ##查看集群状态

Last updated: Sun Oct 20 23:12:32 2013

Last change: Sun Oct 20 23:11:39 2013 via cibadmin on node2.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.Online: [ node1.toxingwang.com node2.toxingwang.com ]

WebIP (ocf::heartbeat:IPaddr): Started node1.toxingwang.com

WebSite (lsb:httpd): Started node2.toxingwang.com

从上面的信息中可以看出WebIP和WebSite有可能会分别运行于两个节点上,这对于通过此IP提供Web服务的应用来说是不成立的,即此两者资源必须同时运行在某节点上。

3.4.3 资源约束

由此可见,即便集群拥有所有必需资源,但它可能还无法进行正确处理。资源约束则用以指定在哪些群集节点上运行资源,以何种顺序装载资源,以及特定资源依赖于哪些其它资源。pacemaker共给我们提供了三种资源约束方法:

1)Resource Location(资源位置):定义资源可以、不可以或尽可能在哪些节点上运行;

2)Resource Collocation(资源排列):排列约束用以定义集群资源可以或不可以在某个节点上同时运行;

3)Resource Order(资源顺序):顺序约束定义集群资源在节点上启动的顺序;定义约束时,还需要指定分数。各种分数是集群工作方式的重要组成部分。其实,从迁移资源到决定在已降级集群中停止哪些资源的整个过程是通过以某种方式修改分数来实现的。分数按每个资源来计算,资源分数为负的任何节点都无法运行该资源。在计算出资源分数后,集群选择分数最高的节点。INFINITY(无穷大)目前定义为 1,000,000。加减无穷大遵循以下3个基本规则:

1)任何值 + 无穷大 = 无穷大

2)任何值 - 无穷大 = -无穷大

3)无穷大 - 无穷大 = -无穷大

定义资源约束时,也可以指定每个约束的分数。分数表示指派给此资源约束的值。分数较高的约束先应用,分数较低的约束后应用。通过使用不同的分数为既定资源创建更多位置约束,可以指定资源要故障转移至的目标节点的顺序。

因此,对于前述的WebIP和WebSite可能会运行于不同节点的问题,可以通过以下命令来解决:

crm(live)# configure

crm(live)configure# colocation website-with-ip INFINITY: WebSite WebIPcrm(live)configure# commit

提交后查看状态:

[root@node1 ~]# crm status

Last updated: Sun Oct 20 23:20:48 2013

Last change: Sun Oct 20 23:20:31 2013 via cibadmin on node2.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition with quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.Online: [ node1.toxingwang.com node2.toxingwang.com ]

WebIP (ocf::heartbeat:IPaddr): Started node1.toxingwang.com

WebSite (lsb:httpd): Started node1.toxingwang.com

接着,我们还得确保WebSite在某节点启动之前得先启动WebIP,这可以使用如下命令实现:

crm(live)configure# order httpd-after-ip mandatory: WebIP WebSite

查看效果:

<a href="http://www.toxingwang.com/wp-content/uploads/2013/10/image.png" class="cboxElement" rel="example4" 1660"="" style="text-decoration: none; color: rgb(1, 150, 227);">

此外,由于HA集群本身并不强制每个节点的性能相同或相近,所以,某些时候我们可能希望在正常时服务总能在某个性能较强的节点上运行,这可以通过位置约束来实现:

crm(live)configure# location prefer-node1 WebSite 200: node1.toxingwang.com

crm(live)configure# show

node node1.toxingwang.com

node node2.toxingwang.com

primitive WebIP ocf:heartbeat:IPaddr \

params ip="192.168.8.100"

primitive WebSite lsb:httpd

location prefer-node1 WebSite 200: node1.toxingwang.com

colocation website-with-ip inf: WebSite WebIP

order httpd-after-ip inf: WebIP WebSite

property $id="cib-bootstrap-options" \

dc-version="1.1.8-7.el6-394e906" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

stonith-enabled="false" \

no-quorum-policy="ignore"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

3.4.4 测试:

[root@node2 ~]# ssh node1 'service corosync stop' <--关闭node1测试

Signaling Corosync Cluster Engine (corosync) to terminate: [确定]

Waiting for corosync services to unload:.[确定]

[root@node2 ~]# crm status

Last updated: Sun Oct 20 23:40:37 2013

Last change: Sun Oct 20 23:25:21 2013 via cibadmin on node2.toxingwang.com

Stack: classic openais (with plugin)

Current DC: node2.toxingwang.com - partition WITHOUT quorum

Version: 1.1.8-7.el6-394e906

2 Nodes configured, 2 expected votes

2 Resources configured.Online: [ node2.toxingwang.com ]

OFFLINE: [ node1.toxingwang.com ]WebIP (ocf::heartbeat:IPaddr): Started node2.toxingwang.com

WebSite (lsb:httpd): Started node2.toxingwang.com

<a href="http://www.toxingwang.com/wp-content/uploads/2013/10/image1.png" class="cboxElement" rel="example4" 1660"="" style="text-decoration: none; color: rgb(1, 150, 227); outline: 0px;">

至此,一个基本的HA集群就搭建完成。