2016.4.10 A simple PCA exercise

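This post works with a semiconductor dataset: http://archive.ics.uci.edu/ml/machine-learning-databases/secom

The directory contains three files. One is the raw data file, used here to test dimensionality reduction with PCA.

One is a label file, used for classification.

One is a description document.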

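import numpy as np
from matplotlib import pyplot as plt

# Read the file
delim = " "
with open('/Users/**/Desktop/test') as fr:
    stringArr = [line.strip().split(delim) for line in fr]

# Convert every field to float explicitly; on Python 3, map() returns an
# iterator, so a list of map objects would not form a 2-D table
dataArr = np.array([[float(x) for x in line] for line in stringArr])

numFeat = np.shape(dataArr)[1]
numSample = np.shape(dataArr)[0]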
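As an aside, the same parsing can probably be done with `np.loadtxt`, which splits on whitespace by default and turns `NaN` tokens into `np.nan`. This is only a sketch: the in-memory sample is made up to mimic the space-separated layout assumed above, and `load_matrix` is a hypothetical helper name.

```python
import io
import numpy as np

# Hypothetical 2x3 sample in the same space-separated layout; not real SECOM rows.
sample_text = "1.0 NaN 3.0\n4.0 5.0 NaN\n"

def load_matrix(source):
    """Parse whitespace-separated floats into a 2-D array; 'NaN' becomes np.nan."""
    return np.loadtxt(source, ndmin=2)

demo = load_matrix(io.StringIO(sample_text))
num_sample, num_feat = demo.shape  # rows are samples, columns are features
```

Passing a file path instead of the `StringIO` object should work the same way.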

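# Replace missing values (NaN) with the mean of the valid values in the same column
for i in range(numFeat):
    # Collect the valid (non-NaN) values of feature i
    notnan = []
    for j in range(numSample):
        if not np.isnan(dataArr[j][i]):
            notnan.append(dataArr[j][i])
    # Fall back to 0 when the whole column is missing
    meanVal = 0
    if len(notnan) != 0:
        meanVal = np.mean(notnan)
    # Fill the gaps in feature i
    for j in range(numSample):
        if np.isnan(dataArr[j][i]):
            dataArr[j][i] = meanVal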
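The double loop above can also be written in vectorized form. The following is a minimal sketch, not the code that produced the results in this post; `fill_nan_with_column_mean` is a hypothetical helper name and the demo matrix is made up. Like the loop, it fills a column that is entirely NaN with 0.

```python
import numpy as np

def fill_nan_with_column_mean(data):
    """Return a copy of `data` with each NaN replaced by the mean of the
    valid values in its column; all-NaN columns are filled with 0."""
    data = np.array(data, dtype=float)          # copy, caller's array is untouched
    nan_mask = np.isnan(data)
    valid_counts = (~nan_mask).sum(axis=0)      # number of valid values per column
    col_sums = np.where(nan_mask, 0.0, data).sum(axis=0)
    # Divide only where a column has valid values; other columns keep the 0 default.
    col_means = np.divide(col_sums, valid_counts,
                          out=np.zeros_like(col_sums),
                          where=valid_counts > 0)
    data[nan_mask] = np.broadcast_to(col_means, data.shape)[nan_mask]
    return data

# Made-up demo: column 1 has one gap, column 2 is missing entirely.
demo = np.array([[1.0, np.nan, np.nan],
                 [3.0, 4.0, np.nan]])
filled = fill_nan_with_column_mean(demo)
```

Computing the sums and counts by hand, rather than calling `np.nanmean`, avoids the warning that NumPy emits for an all-NaN column.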

# Compute the eigenvalues and eigenvectors
meanVals = np.mean(dataArr, axis=0)
meanRemoved = dataArr - meanVals
# rowvar=0: each column is a feature, each row is a sample
covMat = np.cov(meanRemoved, rowvar=0)
eigVals, eigVects = np.linalg.eig(covMat)
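One caveat about this step: `np.linalg.eig` does not promise any particular ordering of the eigenvalues, and it can return complex values. Because a covariance matrix is symmetric, a variant with `np.linalg.eigh` plus an explicit descending sort may be safer. The sketch below uses synthetic data rather than the SECOM file; `pca_eig` and `top_k` are names assumed for illustration.

```python
import numpy as np

def pca_eig(data):
    """Eigen-decompose the covariance of `data` (rows = samples),
    returning eigenvalues in descending order with matching eigenvectors."""
    centered = data - data.mean(axis=0)
    cov = np.cov(centered, rowvar=False)
    eig_vals, eig_vects = np.linalg.eigh(cov)   # real-valued, ascending order
    order = np.argsort(eig_vals)[::-1]          # reorder to descending
    return eig_vals[order], eig_vects[:, order], centered

rng = np.random.default_rng(0)
# Synthetic data, not SECOM: 200 samples, 3 features with very different spreads.
demo = rng.normal(size=(200, 3)) * np.array([10.0, 1.0, 0.1])
vals, vects, centered = pca_eig(demo)

top_k = 2                                       # assumed number of components to keep
low_dim = centered @ vects[:, :top_k]           # project onto the top_k directions
```

Each column of `vects` is one principal direction, so `low_dim` has one row per sample and `top_k` columns.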

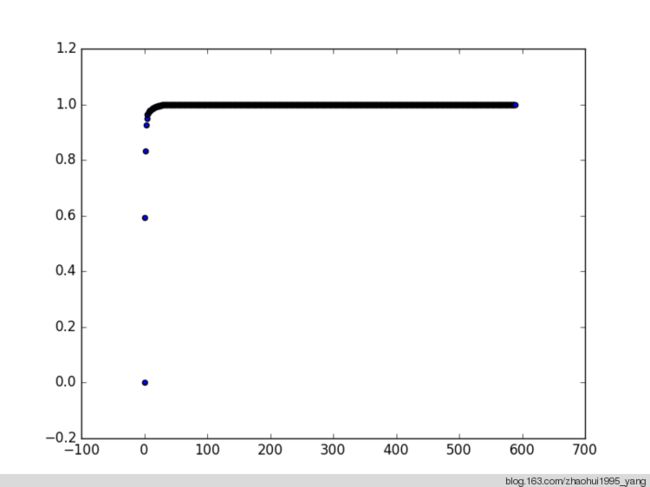

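# Cumulative distribution
# p[i] is the share of the total variance carried by the first i eigenvalues,
# so p[0] is 0 and eigenvalue i itself is not included in p[i]
s = np.sum(eigVals)
p = np.array(eigVals)
for i in range(len(eigVals)):
    p[i] = np.sum(eigVals[:i]) / s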
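The cumulative loop can be sketched with `np.cumsum` instead. This version keeps the same convention as the loop (entry `i` covers only the first `i` eigenvalues, so entry 0 is 0). The eigenvalues in the demo are made up, and `cumulative_fraction` is a hypothetical helper name.

```python
import numpy as np

def cumulative_fraction(eig_vals):
    """p[i] = share of total variance in the first i eigenvalues (p[0] == 0)."""
    eig_vals = np.asarray(eig_vals, dtype=float)
    running = np.cumsum(eig_vals)
    # Shift by one position so that p[i] excludes eigenvalue i itself.
    return np.concatenate(([0.0], running[:-1])) / eig_vals.sum()

demo_vals = np.array([6.0, 3.0, 1.0])   # made-up eigenvalues for illustration
p_demo = cumulative_fraction(demo_vals)
```

Dropping the shift (using `running / eig_vals.sum()`) would give the more common convention in which the first entry is the share of the first component alone.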

# Plot the cumulative distribution
fig = plt.figure()
ax = fig.add_subplot(111)
x = range(len(p))
y = p
ax.scatter(x, y)
plt.show()

>>> p

array([ 0. , 0.59254058, 0.83377877, 0.9252789 , 0.94828469,

0.96287661, 0.96806479, 0.97129137, 0.97443814, 0.97706893,

0.979382 , 0.98155734, 0.98363016, 0.985321 , 0.98657691,

0.98778044, 0.98892136, 0.99003252, 0.9909571 , 0.99186213,

0.99272358, 0.99346245, 0.9941864 , 0.99484841, 0.99542112,

0.99597946, 0.99648382, 0.99697404, 0.997435 , 0.99782913,

0.9981968 , 0.99849341, 0.99865661, 0.99881645, 0.99893773,

0.99905403, 0.99915929, 0.99925188, 0.99933205, 0.99939131,

0.99944629, 0.99949322, 0.99953878, 0.99957663, 0.99961259,

0.99964305, 0.99966912, 0.99969317, 0.99971385, 0.99973346,

0.99975237, 0.99977081, 0.99978788, 0.99980265, 0.99981658,

0.99982939, 0.99984186, 0.99985331, 0.99986451, 0.99987506,

0.99988515, 0.99989438, 0.99990335, 0.99991153, 0.99991916,

0.99992542, 0.99993154, 0.9999375 , 0.99994297, 0.99994756,

0.99995189, 0.99995563, 0.99995927, 0.99996269, 0.99996542,

0.99996796, 0.99997014, 0.99997209, 0.99997393, 0.99997566,

0.99997721, 0.99997864, 0.99997992, 0.99998113, 0.99998235,

0.99998347, 0.99998456, 0.99998548, 0.99998632, 0.99998708,

0.99998782, 0.9999885 , 0.99998909, 0.99998961, 0.99999011,

0.9999906 , 0.99999105, 0.99999149, 0.9999919 , 0.99999229,

0.99999265, 0.99999299, 0.99999331, 0.99999362, 0.9999939 ,

0.99999417, 0.99999443, 0.99999468, 0.99999492, 0.99999516,

0.99999538, 0.99999558, 0.99999579, 0.99999598, 0.99999616,

0.99999633, 0.99999649, 0.99999665, 0.99999681, 0.99999696,

0.99999711, 0.99999725, 0.99999738, 0.99999751, 0.99999764,

0.99999775, 0.99999786, 0.99999797, 0.99999807, 0.99999817,

0.99999827, 0.99999836, 0.99999845, 0.99999853, 0.99999861,

0.99999869, 0.99999877, 0.99999885, 0.99999892, 0.99999898,

0.99999905, 0.99999911, 0.99999916, 0.99999922, 0.99999927,

0.99999932, 0.99999936, 0.99999941, 0.99999945, 0.99999948,

0.99999952, 0.99999955, 0.99999957, 0.9999996 , 0.99999962,

0.99999964, 0.99999966, 0.99999968, 0.9999997 , 0.99999972,

0.99999974, 0.99999975, 0.99999977, 0.99999978, 0.9999998 ,

0.99999981, 0.99999982, 0.99999984, 0.99999985, 0.99999985,

0.99999986, 0.99999987, 0.99999988, 0.99999989, 0.99999989,

0.9999999 , 0.99999991, 0.99999991, 0.99999992, 0.99999992,

0.99999993, 0.99999993, 0.99999993, 0.99999994, 0.99999994,

0.99999994, 0.99999995, 0.99999995, 0.99999995, 0.99999995,

0.99999996, 0.99999996, 0.99999996, 0.99999996, 0.99999997,

0.99999997, 0.99999997, 0.99999997, 0.99999997, 0.99999997,

0.99999998, 0.99999998, 0.99999998, 0.99999998, 0.99999998,

0.99999998, 0.99999998, 0.99999998, 0.99999999, 0.99999999,

0.99999999, 0.99999999, 0.99999999, 0.99999999, 0.99999999,

0.99999999, 0.99999999, 0.99999999, 0.99999999, 0.99999999,

0.99999999, 0.99999999, 0.99999999, 0.99999999, 0.99999999,

0.99999999, 0.99999999, 0.99999999, 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ,

1. , 1. , 1. , 1. , 1. ])
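A cumulative array like this is typically used to decide how many components to keep. The sketch below copies the first few entries of the printed `p` and looks up the smallest index that reaches a threshold. The 0.95 threshold and the helper name `components_needed` are assumptions, and the lookup relies on `p` being non-decreasing.

```python
import numpy as np

# First few entries of the p array printed above; p[i] covers the first i components.
p_head = np.array([0.0, 0.59254058, 0.83377877, 0.9252789,
                   0.94828469, 0.96287661, 0.96806479])

def components_needed(p, threshold):
    """Smallest i with p[i] >= threshold, or None if p never reaches it."""
    idx = int(np.searchsorted(p, threshold, side="left"))
    return idx if idx < len(p) else None

k95 = components_needed(p_head, 0.95)   # assumed threshold of 95% of the variance
```

With these values, 4 components cover about 94.8% of the variance and 5 components cover about 96.3%, so `k95` is 5.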

References:

1. Machine Learning in Action (机器学习实战)

2. http://archive.ics.uci.edu/ml/machine-learning-databases/secom

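P.S.

I read the example program in Machine Learning in Action until I understood the approach, then implemented it myself, because the book's code was not syntax-compatible.

This program has also not been optimized for speed; it does not use vectorized operations.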