Lesson 87: Flume Pushing Data to Spark Streaming, Hands-On Case Study and Source Code Internals -- Flume Installation

1. Download Flume (the package provided by the instructor)

2. Install: vi /etc/profile

export FLUME_HOME=/usr/local/apache-flume-1.6.0-bin

export PATH=.:$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$SCALA_HOME/bin:$SPARK_HOME/bin:$HIVE_HOME/bin:$FLUME_HOME/bin

3. Configuration file

[root@master conf]# pwd

/usr/local/apache-flume-1.6.0-bin/conf

[root@master conf]#

[root@master conf]# cat flume-conf.properties

#agent1

agent1.sources=source1

agent1.sinks=sink1

agent1.channels=channel1

#source1

agent1.sources.source1.type=spooldir

agent1.sources.source1.spoolDir=/usr/local/flume/tmp/TestDir

agent1.sources.source1.channels=channel1

agent1.sources.source1.fileHeader = false

agent1.sources.source1.interceptors = i1

agent1.sources.source1.interceptors.i1.type= timestamp

#sink1

agent1.sinks.sink1.type=hdfs

#agent1.sinks.sink1.hdfs.path=hdfs://master:9000/library/flume

agent1.sinks.sink1.hdfs.path=/usr/local/flume/tmp/SinkDir

agent1.sinks.sink1.hdfs.fileType=DataStream

agent1.sinks.sink1.hdfs.writeFormat=TEXT

agent1.sinks.sink1.hdfs.rollInterval=1

agent1.sinks.sink1.channel=channel1

agent1.sinks.sink1.hdfs.filePrefix=%Y-%m-%d

#channel1

agent1.channels.channel1.type=file

agent1.channels.channel1.checkpointDir=/usr/local/flume/tmp/checkpointDir

agent1.channels.channel1.dataDirs=/usr/local/flume/tmp/dataDirs

[root@master conf]#
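The configuration above points at three local directories: the spooling directory `TestDir`, plus `checkpointDir` and `dataDirs` for the file channel. The spooling directory source expects its directory to exist when the agent starts, so a minimal sketch for preparing the layout could look like the following. The `base` variable and the scratch directory are assumptions made so the snippet can be tried without root; on the real machine `base` would be `/usr/local/flume/tmp`.

```shell
# Hypothetical scratch location; replace with /usr/local/flume/tmp on the real host.
base="$(mktemp -d)"

# Directory names taken from flume-conf.properties:
#   spoolDir      -> TestDir
#   checkpointDir -> checkpointDir
#   dataDirs      -> dataDirs
for d in TestDir checkpointDir dataDirs; do
  mkdir -p "$base/$d"
done

ls "$base"
```

Creating the two channel directories up front is optional in this sketch; it only makes the layout explicit before the first run.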

flume-ng flume-ng.cmd flume-ng.ps1

[root@master bin]# ./flume-ng agent -c . -f /usr/local/apache-flume-1.6.0-bin/conf/flume-conf.properties -n agent1 -Dflume.root.logger=INFO,console

bash: ./flume-ng: Permission denied

[root@master bin]#

[root@master bin]# ls -l

total 36

-rw-r--r--. 1 hadoop hadoop 12845 May 8 2015 flume-ng

-rw-r--r--. 1 hadoop hadoop 936 May 8 2015 flume-ng.cmd

-rw-r--r--. 1 hadoop hadoop 14041 May 8 2015 flume-ng.ps1

[root@master bin]# chmod u+x flume-ng

[root@master bin]# ls -;

ls: cannot access -: No such file or directory

[root@master bin]# ls -l

total 36

-rwxr--r--. 1 hadoop hadoop 12845 May 8 2015 flume-ng

-rw-r--r--. 1 hadoop hadoop 936 May 8 2015 flume-ng.cmd

-rw-r--r--. 1 hadoop hadoop 14041 May 8 2015 flume-ng.ps1

[root@master bin]# chmod u+X flume-ng.cmd

[root@master bin]# chmod u+X flume-ng.ps1

[root@master bin]# ls -l

total 36

-rwxr--r--. 1 hadoop hadoop 12845 May 8 2015 flume-ng

-rw-r--r--. 1 hadoop hadoop 936 May 8 2015 flume-ng.cmd

-rw-r--r--. 1 hadoop hadoop 14041 May 8 2015 flume-ng.ps1

[root@master bin]#
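The listing above shows that `chmod u+X` (capital X) left `flume-ng.cmd` and `flume-ng.ps1` unchanged. Capital `X` only adds execute permission when the target is a directory or already has an execute bit set, while lowercase `x` adds it unconditionally. The difference can be reproduced on a throwaway file; the scratch path and the `mode` helper below are made up for this demo.

```shell
# Scratch file standing in for flume-ng.cmd (hypothetical path).
tmp="$(mktemp -d)"
f="$tmp/flume-ng.cmd"
touch "$f"
chmod 644 "$f"

# Helper: print only the ten permission characters of ls -l.
mode() { ls -l "$1" | cut -c1-10; }

chmod u+X "$f"                 # capital X: no execute bit yet, so nothing changes
after_upper="$(mode "$f")"
echo "after u+X: $after_upper"

chmod u+x "$f"                 # lowercase x: always adds the owner execute bit
after_lower="$(mode "$f")"
echo "after u+x: $after_lower"
```

Only `flume-ng` needs to be executable on Linux; the `.cmd` and `.ps1` files are Windows launchers.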

Run the agent

[root@master bin]# ./flume-ng agent -c . -f /usr/local/apache-flume-1.6.0-bin/conf/flume-conf.properties -n agent1 -Dflume.root.logger=INFO,console

Create a new file

[root@master hadoop]# cd /usr/local/flume/tmp/TestDir

[root@master TestDir]# ls

[root@master TestDir]# echo "hello IMF my flume data test 20160422 40w" > IMF_flume.log

[root@master TestDir]# ls

IMF_flume.log.COMPLETED
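Writing directly into the spooling directory with `echo` worked here because the file is a single short line. The Flume user guide states that a file must not be modified after it has been placed in the spooling directory, so for larger files a common approach is to write the file elsewhere and then move it in. The following is a sketch of that pattern, not part of the original session; `staging` and `spool` are scratch stand-ins, and in the setup above `spool` would be `/usr/local/flume/tmp/TestDir`.

```shell
# Scratch stand-ins (assumptions): spool = the spoolDir, staging = any other directory.
# For mv to be a plain rename, both should live on the same filesystem.
spool="$(mktemp -d)"
staging="$(mktemp -d)"

# 1. Write the complete file outside the spooling directory.
echo "hello IMF my flume data test 20160422 40w" > "$staging/IMF_flume.log"

# 2. Move the finished file into the spooling directory in one step.
mv "$staging/IMF_flume.log" "$spool/IMF_flume.log"

ls "$spool"
```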

Flume collection log

16/04/22 10:27:25 INFO instrumentation.MonitoredCounterGroup: Component type: SOURCE, name: source1 started

16/04/22 10:29:21 INFO avro.ReliableSpoolingFileEventReader: Last read took us just up to a file boundary. Rolling to the next file, if there is one.

16/04/22 10:29:21 INFO avro.ReliableSpoolingFileEventReader: Preparing to move file /usr/local/flume/tmp/TestDir/IMF_flume.log to /usr/local/flume/tmp/TestDir/IMF_flume.log.COMPLETED

16/04/22 10:29:23 INFO file.EventQueueBackingStoreFile: Start checkpoint for /usr/local/flume/tmp/checkpointDir/checkpoint, elements to sync = 1

16/04/22 10:29:23 INFO file.EventQueueBackingStoreFile: Updating checkpoint metadata: logWriteOrderID: 1461335244643, queueSize: 1, queueHead: 999999

16/04/22 10:29:23 INFO file.Log: Updated checkpoint for file: /usr/local/flume/tmp/dataDirs/log-1 position: 149 logWriteOrderID: 1461335244643

16/04/22 10:29:25 INFO hdfs.HDFSDataStream: Serializer = TEXT, UseRawLocalFileSystem = false

16/04/22 10:29:25 INFO hdfs.BucketWriter: Creating hdfs://master:9000/library/flume/2016-04-22.1461335365188.tmp

16/04/22 10:29:25 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

16/04/22 10:29:30 INFO hdfs.BucketWriter: Closing hdfs://master:9000/library/flume/2016-04-22.1461335365188.tmp

16/04/22 10:29:30 INFO hdfs.BucketWriter: Renaming hdfs://master:9000/library/flume/2016-04-22.1461335365188.tmp to hdfs://master:9000/library/flume/2016-04-22.1461335365188

16/04/22 10:29:30 INFO hdfs.HDFSEventSink: Writer callback called.

16/04/22 10:29:53 INFO file.EventQueueBackingStoreFile: Start checkpoint for /usr/local/flume/tmp/checkpointDir/checkpoint, elements to sync = 1

16/04/22 10:29:53 INFO file.EventQueueBackingStoreFile: Updating checkpoint metadata: logWriteOrderID: 1461335244646, queueSize: 0, queueHead: 0

16/04/22 10:29:53 INFO file.Log: Updated checkpoint for file: /usr/local/flume/tmp/dataDirs/log-1 position: 226 logWriteOrderID: 1461335244646

View the result in HDFS

[root@master TestDir]# hadoop dfs -cat /library/flume/2016-04-22.1461335365188

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

SLF4J: Failed to load class "org.slf4j.impl.StaticLoggerBinder".

SLF4J: Defaulting to no-operation (NOP) logger implementation

SLF4J: See http://www.slf4j.org/codes.html#StaticLoggerBinder for further details.

16/04/22 10:30:50 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

hello IMF my flume data test 20160422 40w

[root@master TestDir]#

=======================================================================

huawei test

flume

root@master:/usr/local/apache-flume-1.6.0-bin/conf# hadoop dfs -mkdir hdfs://master:9000/library/flume

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

export FLUME_HOME=/usr/local/apache-flume-1.6.0-bin

export PATH=.:$PATH:$JAVA_HOME/bin:$SCALA_HOME/bin:$HADOOP_HOME/bin:$SPARK_HOME/bin:$HIVE_HOME/bin:$FLUME_HOME/bin

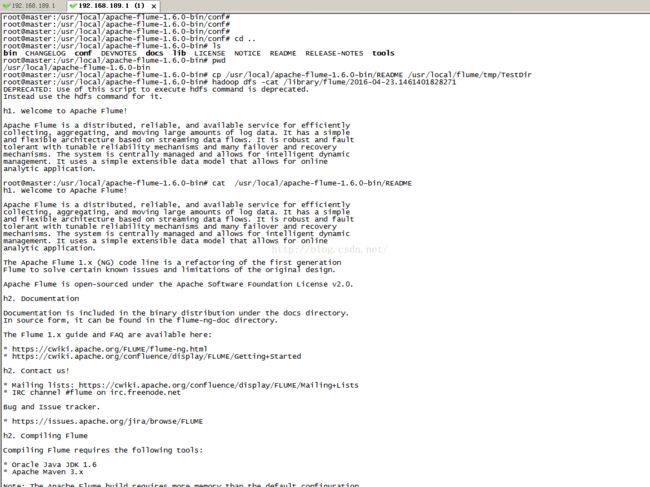

root@master:/usr/local/apache-flume-1.6.0-bin# source /etc/profile

root@master:/usr/local/apache-flume-1.6.0-bin/bin# chmod u+x flume-ng

root@master:/usr/local/apache-flume-1.6.0-bin# flume-ng version

Flume 1.6.0

Source code repository: https://git-wip-us.apache.org/repos/asf/flume.git

Revision: 2561a23240a71ba20bf288c7c2cda88f443c2080

Compiled by hshreedharan on Mon May 11 11:15:44 PDT 2015

From source with checksum b29e416802ce9ece3269d34233baf43f

root@master:/usr/local/apache-flume-1.6.0-bin#

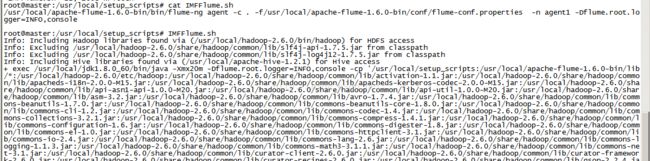

./flume-ng agent -c . -f /usr/local/apache-flume-1.6.0-bin/conf/flume-conf.properties -n agent1 -Dflume.root.logger=INFO,console

vi IMFFlume.sh

./flume-ng agent -c . -f /usr/local/apache-flume-1.6.0-bin/conf/flume-conf.properties -n agent1 -Dflume.root.logger=INFO,console

/usr/local/flume/tmp/TestDir

root@master:/usr/local/setup_scripts# cat IMFFlume.sh

/usr/local/apache-flume-1.6.0-bin/bin/flume-ng agent -c . -f /usr/local/apache-flume-1.6.0-bin/conf/flume-conf.properties -n agent1 -Dflume.root.logger=INFO,console

root@master:/usr/local/setup_scripts# IMFFlume.sh
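`IMFFlume.sh` passes `-c .`, so the configuration directory depends on where the script is launched from. A variant that does not depend on the current directory might look like the sketch below. It uses the long forms of the same `flume-ng` options (`--conf`, `--conf-file`, `--name`). Pointing `--conf` at `$FLUME_HOME/conf` is an assumption rather than what the original script does, and `DRY_RUN` is a hypothetical switch added so the command can be inspected without starting an agent.

```shell
# Install location from these notes; override via the environment if it differs.
FLUME_HOME="${FLUME_HOME:-/usr/local/apache-flume-1.6.0-bin}"

# Hypothetical switch: 1 prints the command, anything else runs it.
DRY_RUN="${DRY_RUN:-1}"

# Build the command as positional parameters so each argument stays one word.
set -- "$FLUME_HOME/bin/flume-ng" agent \
  --conf "$FLUME_HOME/conf" \
  --conf-file "$FLUME_HOME/conf/flume-conf.properties" \
  --name agent1 \
  -Dflume.root.logger=INFO,console

if [ "$DRY_RUN" = "1" ]; then
  echo "$@"
else
  exec "$@"
fi
```

Running it with `DRY_RUN=0` starts the agent in the foreground, as the original script does.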

cp /usr/local/apache-flume-1.6.0-bin/README /usr/local/flume/tmp/TestDir

root@master:/usr/local/apache-flume-1.6.0-bin/conf# cat flume-conf.properties

#agent1 is the agent name

agent1.sources=source1

agent1.sinks=sink1

agent1.channels=channel1

#configure source1

agent1.sources.source1.type=spooldir

agent1.sources.source1.spoolDir=/usr/local/flume/tmp/TestDir

agent1.sources.source1.channels=channel1

agent1.sources.source1.fileHeader = false

agent1.sources.source1.interceptors = i1

agent1.sources.source1.interceptors.i1.type = timestamp

#configure sink1

agent1.sinks.sink1.type=hdfs

agent1.sinks.sink1.hdfs.path=hdfs://master:9000/library/flume

agent1.sinks.sink1.hdfs.fileType=DataStream

agent1.sinks.sink1.hdfs.writeFormat=TEXT

agent1.sinks.sink1.hdfs.rollInterval=1

agent1.sinks.sink1.channel=channel1

agent1.sinks.sink1.hdfs.filePrefix=%Y-%m-%d

#configure channel1

agent1.channels.channel1.type=file

agent1.channels.channel1.checkpointDir=/usr/local/flume/tmp/checkpointDir

agent1.channels.channel1.dataDirs=/usr/local/flume/tmp/dataDirs

root@master:/usr/local/apache-flume-1.6.0-bin/conf# hadoop dfs -mkidr hdfs://master:9000/library/flume

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

-mkidr: Unknown command

root@master:/usr/local/apache-flume-1.6.0-bin/conf# hadoop dfs -mkdir hdfs://master:9000/library/flume

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

root@master:/usr/local/apache-flume-1.6.0-bin/conf# mkidr /usr/local/flume/tmp/TestDir