3D Math Primer for Game Programmers (Matrices)

In this article, I will discuss matrices and operations on matrices. It is assumed that the reader has some experience with Linear Algebra, vectors, operations on vectors, and a basic understanding of matrices.

Table of Contents

- Conventions

- Linear Transformation

- Rotation

- Rotation about the X Axis

- Rotation about the Y Axis

- Rotation about the Z Axis

- Rotation about an arbitrary axis

- Scale

- Affine Transformation

- Determinant of a Matrix

- Inverse of a Matrix

- Orthogonal Matrices

- References

Conventions

Throughout this article, I will use a convention when referring to vectors, scalars, and matrices.

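- Scalars are represented by lower-case italic characters (for example, $a$, $k$, $\theta$).

- Vectors are represented by lower-case bold characters (for example, $\mathbf{v}$, $\mathbf{n}$).

- Matrices are represented by upper-case bold characters (for example, $\mathbf{M}$, $\mathbf{R}$).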

Matrices are considered to be column-major matrices and rotations are expressed using the right-handed coordinate system.

Linear Transformation

A linear transformation is defined as a transformation between two vector spaces $V$ and $W$, denoted $T : V \to W$, that preserves the operations of vector addition and scalar multiplication. That is,

$$T(\mathbf{v}_1 + \mathbf{v}_2) = T(\mathbf{v}_1) + T(\mathbf{v}_2)$$

$$T(\alpha \mathbf{v}) = \alpha T(\mathbf{v})$$
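As a quick numerical illustration of these two properties, the sketch below applies a 3×3 matrix to a few vectors and checks additivity, homogeneity, and that the zero vector maps to itself. The matrix `M`, the vectors `u` and `v`, and the helper names are hypothetical values chosen only for this example.

```python
def apply(m, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-component column vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]

def add(a, b):
    """Component-wise vector addition."""
    return [x + y for x, y in zip(a, b)]

def scale(k, v):
    """Multiply a vector by the scalar k."""
    return [k * x for x in v]

# Hypothetical matrix and vectors, chosen only for illustration.
M = [[2, 0, 1],
     [0, 3, 0],
     [1, 0, 2]]
u = [1, 2, 3]
v = [4, 5, 6]

# Additivity: T(u + v) == T(u) + T(v)
additive = apply(M, add(u, v)) == add(apply(M, u), apply(M, v))
# Homogeneity: T(k u) == k T(u)
homogeneous = apply(M, scale(5, u)) == scale(5, apply(M, u))
# A linear transformation maps the zero vector to the zero vector.
zero_fixed = apply(M, [0, 0, 0]) == [0, 0, 0]

print(additive, homogeneous, zero_fixed)
```

Integer entries are used so that the comparisons are exact; with floating-point values a small tolerance would be needed.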

This property also implies that any linear transformation will transform the zero vector into the zero vector. Since a non-zero translation will transform the zero vector into a non-zero vector, any transformation that translates a vector is not a linear transformation. A few examples of linear transformations of three-dimensional space $\mathbb{R}^3$ are:

Rotation

As stated in the article about coordinate systems (Coordinate Systems), in a right-handed coordinate system a positive rotation rotates a point in a counter-clockwise direction when viewed from the positive end of the axis of rotation, looking back toward the origin.

ROTATION ABOUT THE X AXIS

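A linear transformation that rotates a vector space by an angle $\theta$ about the $X$ axis, applied to a column vector as $\mathbf{v}' = \mathbf{R}_x(\theta)\mathbf{v}$:

$$\mathbf{R}_x(\theta) = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \cos\theta & -\sin\theta \\ 0 & \sin\theta & \cos\theta \end{bmatrix}$$

For example, rotating the point $(0, 1, 0)$ 90 degrees about the $X$ axis would result in the point $(0, 0, 1)$. Let’s confirm that this is true using this rotation:

$$\mathbf{R}_x(90^\circ)\begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & -1 \\ 0 & 1 & 0 \end{bmatrix}\begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix} = \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix}$$

And rotating the point $(0, 0, 1)$ 90 degrees about the $X$ axis would result in the point $(0, -1, 0)$.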

ROTATION ABOUT THE Y AXIS

A linear transformation that rotates a vector space by an angle $\theta$ about the $Y$ axis:

$$\mathbf{R}_y(\theta) = \begin{bmatrix} \cos\theta & 0 & \sin\theta \\ 0 & 1 & 0 \\ -\sin\theta & 0 & \cos\theta \end{bmatrix}$$

For example, rotating the point $(0, 0, 1)$ 90 degrees about the $Y$ axis would result in the point $(1, 0, 0)$.

And rotating the point $(1, 0, 0)$ 90 degrees about the $Y$ axis would result in the point $(0, 0, -1)$.

ROTATION ABOUT THE Z AXIS

A linear transformation that rotates a vector space by an angle $\theta$ about the $Z$ axis:

$$\mathbf{R}_z(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta & 0 \\ \sin\theta & \cos\theta & 0 \\ 0 & 0 & 1 \end{bmatrix}$$

For example, rotating the point $(1, 0, 0)$ 90 degrees about the $Z$ axis would result in the point $(0, 1, 0)$.

And rotating the point $(0, 1, 0)$ 90 degrees about the $Z$ axis would result in the point $(-1, 0, 0)$.
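The three axis rotations can be checked numerically with a short sketch. It assumes the column-vector convention ($\mathbf{v}' = \mathbf{R}\mathbf{v}$) and a right-handed coordinate system; the function names `rotation_x`, `rotation_y`, `rotation_z`, and `transform` are hypothetical helpers introduced for this example.

```python
import math

def rotation_x(theta):
    """Rotation by theta radians about the X axis (column-vector convention)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]

def rotation_y(theta):
    """Rotation by theta radians about the Y axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0, s], [0, 1, 0], [-s, 0, c]]

def rotation_z(theta):
    """Rotation by theta radians about the Z axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def transform(m, v):
    """Return m * v, rounded to hide floating-point noise such as cos(pi/2)."""
    return [round(sum(m[r][c] * v[c] for c in range(3)), 9) + 0.0
            for r in range(3)]

quarter_turn = math.pi / 2  # 90 degrees in radians

print(transform(rotation_x(quarter_turn), [0, 1, 0]))  # Y axis maps onto Z
print(transform(rotation_y(quarter_turn), [0, 0, 1]))  # Z axis maps onto X
print(transform(rotation_z(quarter_turn), [1, 0, 0]))  # X axis maps onto Y
```

Each positive 90 degree rotation carries one basis axis onto the next in the cyclic order X, Y, Z, which is a convenient sanity check for the sign placement of the sine terms.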

ROTATION ABOUT AN ARBITRARY AXIS

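A 3D rotation about an arbitrary axis $\mathbf{n}$ (a unit vector) by an angle $\theta$:

$$\mathbf{R}(\mathbf{n}, \theta) = \begin{bmatrix} n_x^2(1 - \cos\theta) + \cos\theta & n_x n_y(1 - \cos\theta) - n_z\sin\theta & n_x n_z(1 - \cos\theta) + n_y\sin\theta \\ n_x n_y(1 - \cos\theta) + n_z\sin\theta & n_y^2(1 - \cos\theta) + \cos\theta & n_y n_z(1 - \cos\theta) - n_x\sin\theta \\ n_x n_z(1 - \cos\theta) - n_y\sin\theta & n_y n_z(1 - \cos\theta) + n_x\sin\theta & n_z^2(1 - \cos\theta) + \cos\theta \end{bmatrix}$$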
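A minimal sketch of an axis-angle rotation matrix follows, assuming the column-vector convention and the standard Rodrigues form of the matrix. The function name `rotation_axis_angle` is hypothetical, and the axis is normalized inside the function so that callers do not have to pass a unit vector.

```python
import math

def rotation_axis_angle(axis, theta):
    """Rotation by theta radians about an arbitrary non-zero axis."""
    nx, ny, nz = axis
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    nx, ny, nz = nx / length, ny / length, nz / length  # make the axis unit length
    c, s = math.cos(theta), math.sin(theta)
    t = 1.0 - c
    return [
        [nx * nx * t + c,      nx * ny * t - nz * s, nx * nz * t + ny * s],
        [nx * ny * t + nz * s, ny * ny * t + c,      ny * nz * t - nx * s],
        [nx * nz * t - ny * s, ny * nz * t + nx * s, nz * nz * t + c],
    ]

# Rotating 120 degrees about the diagonal (1, 1, 1) cycles the basis axes,
# so the first column of the matrix (the image of the X axis) is the Y axis.
R = rotation_axis_angle((1, 1, 1), 2 * math.pi / 3)
image_of_x = [R[0][0], R[1][0], R[2][0]]
print([round(x, 9) + 0.0 for x in image_of_x])
```

Passing the axis `(0, 0, 1)` reduces the matrix to the rotation about the Z axis, which is a useful way to check the signs of the sine terms.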

Scale

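A linear transformation that scales a vector space by the factors $s_x$, $s_y$, and $s_z$ along the $X$, $Y$, and $Z$ axes:

$$\mathbf{S}(s_x, s_y, s_z) = \begin{bmatrix} s_x & 0 & 0 \\ 0 & s_y & 0 \\ 0 & 0 & s_z \end{bmatrix}$$

A linear transformation that scales by a factor $k$ in an arbitrary direction $\mathbf{n}$ (a unit vector):

$$\mathbf{S}(\mathbf{n}, k) = \begin{bmatrix} 1 + (k - 1)n_x^2 & (k - 1)n_x n_y & (k - 1)n_x n_z \\ (k - 1)n_x n_y & 1 + (k - 1)n_y^2 & (k - 1)n_y n_z \\ (k - 1)n_x n_z & (k - 1)n_y n_z & 1 + (k - 1)n_z^2 \end{bmatrix}$$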
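The directional scale can be sketched in a few lines. The helper names `scale_along` and `apply` are hypothetical, and the sketch assumes that the direction passed in is already a unit vector.

```python
def scale_along(direction, k):
    """Scale by factor k along a unit-length direction, leaving the
    perpendicular directions unchanged."""
    nx, ny, nz = direction
    f = k - 1
    return [
        [1 + f * nx * nx, f * nx * ny,     f * nx * nz],
        [f * nx * ny,     1 + f * ny * ny, f * ny * nz],
        [f * nx * nz,     f * ny * nz,     1 + f * nz * nz],
    ]

def apply(m, v):
    """Multiply a 3x3 matrix (list of rows) by a 3-component column vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]

# Scaling by 3 along the Y axis triples only the Y component.
S = scale_along((0, 1, 0), 3)
print(apply(S, [1, 2, 0]))  # [1, 6, 0]
```

When the direction is one of the coordinate axes, the matrix reduces to an ordinary axis-aligned scale with the other two factors equal to one.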

Affine Transformation

An affine transformation is a linear transformation followed by a translation. Any linear transformation is an affine transformation with a translation of $\mathbf{0}$, but not all affine transformations are linear transformations. The set of affine transformations is a superset of linear transformations. Types of affine transformations are scale, shear, rotation, reflection, and translation. Any combination of affine transformations results in an affine transformation. Any transformation in the form $\mathbf{v}' = \mathbf{M}\mathbf{v} + \mathbf{t}$ is an affine transformation where $\mathbf{v}'$ is the transformed vector, $\mathbf{v}$ is the original vector, $\mathbf{M}$ is a linear transform matrix, and $\mathbf{t}$ is a translation vector.
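To make the "linear part plus translation" form concrete, here is a small sketch that applies an affine transformation and combines two of them into one. The helper names `affine` and `compose` and all numeric values are hypothetical, and column vectors are assumed.

```python
def affine(m, t, v):
    """Return M v + t for a 3x3 matrix m (list of rows) and 3-vectors t, v."""
    return [sum(m[r][c] * v[c] for c in range(3)) + t[r] for r in range(3)]

def compose(m2, t2, m1, t1):
    """Combine 'apply (m1, t1), then (m2, t2)' into a single (M, t) pair."""
    m = [[sum(m2[r][k] * m1[k][c] for k in range(3)) for c in range(3)]
         for r in range(3)]
    t = affine(m2, t2, t1)  # M2 t1 + t2
    return m, t

# Hypothetical example: uniform scale by 2 plus a shift, then a 90 degree
# rotation about Z plus another shift.
M1, t1 = [[2, 0, 0], [0, 2, 0], [0, 0, 2]], [1, 0, 0]
M2, t2 = [[0, -1, 0], [1, 0, 0], [0, 0, 1]], [0, 0, 5]
v = [1, 2, 3]

step_by_step = affine(M2, t2, affine(M1, t1, v))
M, t = compose(M2, t2, M1, t1)
combined = affine(M, t, v)
print(step_by_step, combined)  # [-4, 3, 11] [-4, 3, 11]

# With a non-zero translation the zero vector is not preserved,
# so the transformation is affine but not linear.
print(affine(M1, t1, [0, 0, 0]))  # [1, 0, 0]
```

The combined pair is again a matrix and a translation vector, which illustrates why composing affine transformations always yields another affine transformation.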

Determinant of a Matrix

For every square matrix, you can calculate a special scalar value called the “determinant” of the matrix. If the determinant is not $0$, then the matrix is invertible and we can use the determinant to calculate the inverse of that matrix.

The determinant of a matrix $\mathbf{M}$ is denoted $|\mathbf{M}|$ or $\det(\mathbf{M})$.

Before we discuss how to calculate the determinant of a larger $n \times n$ matrix, let’s first discuss the determinant of a $2 \times 2$ matrix:

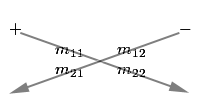

You can use the following diagram to help you remember the order in which the terms should be placed:

In this diagram, the arrows pass through the diagonal terms. We multiply the entries along each diagonal and subtract the product of the back diagonal from the product of the forward diagonal.

The determinant of a $3 \times 3$ matrix is shown below:

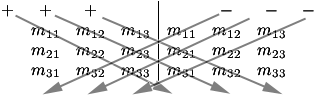

We can use a similar diagram to memorize this equation. For this diagram, we duplicate the matrix and place the copy next to the original. We then multiply the entries along each forward diagonal and each backward diagonal, add the forward-diagonal products, and subtract the backward-diagonal products:

If we imagine the rows of the $3 \times 3$ matrix as the vectors $\mathbf{a}$, $\mathbf{b}$, and $\mathbf{c}$, then the determinant of the matrix is equivalent to the triple product that was introduced in the article on vector operations. If you recall, this is the result of taking the dot product of a cross product. This is shown below:

$$|\mathbf{M}| = \begin{vmatrix} a_x & a_y & a_z \\ b_x & b_y & b_z \\ c_x & c_y & c_z \end{vmatrix} = (\mathbf{a} \times \mathbf{b}) \cdot \mathbf{c}$$
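The equivalence between the $3 \times 3$ determinant and the triple product is easy to verify numerically. In this sketch the matrix values and the helper names `cross`, `dot`, and `det3` are hypothetical choices made for illustration.

```python
def cross(a, b):
    """Cross product of two 3-component vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def dot(a, b):
    """Dot product of two vectors."""
    return sum(x * y for x, y in zip(a, b))

def det3(m):
    """Determinant of a 3x3 matrix: forward diagonals minus backward diagonals."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * e * i + b * f * g + c * d * h - c * e * g - b * d * i - a * f * h

# Hypothetical example matrix; its rows play the role of the three vectors.
M = [[-4, -3, 3],
     [0, 2, -2],
     [1, 4, -1]]

triple = dot(cross(M[0], M[1]), M[2])
print(det3(M), triple)  # -24 -24
```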

We can calculate the determinant of an $n \times n$ matrix $\mathbf{M}$ by choosing an arbitrary row $i$ and applying the general formula:

$$|\mathbf{M}| = \sum_{j=1}^{n} m_{ij} (-1)^{i+j} \left|\mathbf{M}^{\{ij\}}\right|$$

Note that we can also arbitrarily choose a column $j$ that can be used to calculate the determinant, but for our general formula, we see the determinant applied to the $i^{th}$ row.

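Where $\mathbf{M}^{\{ij\}}$ is the matrix obtained by removing the $i^{th}$ row and the $j^{th}$ column from matrix $\mathbf{M}$. This is called the minor of the matrix $\mathbf{M}$ at the $i^{th}$ row and the $j^{th}$ column. For example, for a $3 \times 3$ matrix, removing the first row and the second column gives:

$$\mathbf{M} = \begin{bmatrix} m_{11} & m_{12} & m_{13} \\ m_{21} & m_{22} & m_{23} \\ m_{31} & m_{32} & m_{33} \end{bmatrix} \quad \Rightarrow \quad \mathbf{M}^{\{12\}} = \begin{bmatrix} m_{21} & m_{23} \\ m_{31} & m_{33} \end{bmatrix}$$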

The cofactor of a matrix $\mathbf{M}$ at the $i^{th}$ row and the $j^{th}$ column is denoted $C_{ij}$ and is the signed determinant of the minor of the matrix $\mathbf{M}$ at the $i^{th}$ row and the $j^{th}$ column:

$$C_{ij} = (-1)^{i+j} \left|\mathbf{M}^{\{ij\}}\right|$$

Note that the $(-1)^{i+j}$ term has the effect of negating every cofactor whose row and column indices sum to an odd number:

$$\begin{bmatrix} + & - & + & - \\ - & + & - & + \\ + & - & + & - \\ - & + & - & + \end{bmatrix}$$

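Let’s take an example of using the general form of the equation to calculate the determinant of a $4 \times 4$ matrix, expanding along the first row:

$$\begin{vmatrix} m_{11} & m_{12} & m_{13} & m_{14} \\ m_{21} & m_{22} & m_{23} & m_{24} \\ m_{31} & m_{32} & m_{33} & m_{34} \\ m_{41} & m_{42} & m_{43} & m_{44} \end{vmatrix} = m_{11}\begin{vmatrix} m_{22} & m_{23} & m_{24} \\ m_{32} & m_{33} & m_{34} \\ m_{42} & m_{43} & m_{44} \end{vmatrix} - m_{12}\begin{vmatrix} m_{21} & m_{23} & m_{24} \\ m_{31} & m_{33} & m_{34} \\ m_{41} & m_{43} & m_{44} \end{vmatrix} + m_{13}\begin{vmatrix} m_{21} & m_{22} & m_{24} \\ m_{31} & m_{32} & m_{34} \\ m_{41} & m_{42} & m_{44} \end{vmatrix} - m_{14}\begin{vmatrix} m_{21} & m_{22} & m_{23} \\ m_{31} & m_{32} & m_{33} \\ m_{41} & m_{42} & m_{43} \end{vmatrix}$$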

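And expanding the determinants for the $3 \times 3$ minors of the matrix:

$$\begin{aligned} |\mathbf{M}| = {} & m_{11}\left[ m_{22}(m_{33}m_{44} - m_{34}m_{43}) - m_{23}(m_{32}m_{44} - m_{34}m_{42}) + m_{24}(m_{32}m_{43} - m_{33}m_{42}) \right] \\ {} - {} & m_{12}\left[ m_{21}(m_{33}m_{44} - m_{34}m_{43}) - m_{23}(m_{31}m_{44} - m_{34}m_{41}) + m_{24}(m_{31}m_{43} - m_{33}m_{41}) \right] \\ {} + {} & m_{13}\left[ m_{21}(m_{32}m_{44} - m_{34}m_{42}) - m_{22}(m_{31}m_{44} - m_{34}m_{41}) + m_{24}(m_{31}m_{42} - m_{32}m_{41}) \right] \\ {} - {} & m_{14}\left[ m_{21}(m_{32}m_{43} - m_{33}m_{42}) - m_{22}(m_{31}m_{43} - m_{33}m_{41}) + m_{23}(m_{31}m_{42} - m_{32}m_{41}) \right] \end{aligned}$$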

As you can see, the equations for calculating the determinant grow very quickly as the dimension of the matrix increases: a full cofactor expansion of an $n \times n$ matrix contains $n!$ products.
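The cofactor expansion described in this section translates directly into a short recursive function. This is a minimal sketch rather than a practical implementation (its cost grows factorially); the names `minor`, `cofactor`, and `determinant` are hypothetical, indices are zero-based, and the expansion always uses the first row.

```python
def minor(m, i, j):
    """Matrix obtained by removing row i and column j (zero-based) from m."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]

def cofactor(m, i, j):
    """Signed determinant of the minor at row i, column j."""
    return (-1) ** (i + j) * determinant(minor(m, i, j))

def determinant(m):
    """Determinant by cofactor expansion along the first row."""
    if len(m) == 1:
        return m[0][0]
    return sum(m[0][j] * cofactor(m, 0, j) for j in range(len(m)))

# Hypothetical 4x4 example matrix.
M = [[1, 0, 2, -1],
     [3, 0, 0, 5],
     [2, 1, 4, -3],
     [1, 0, 5, 0]]
print(determinant(M))  # 30
```

Because $(-1)^{i+j}$ depends only on whether $i + j$ is even or odd, the zero-based indices used in the code give the same signs as the one-based indices used in the formulas.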

Inverse of a Matrix

A square matrix has an inverse only if it is invertible, and a matrix is invertible if and only if its determinant is not zero. A matrix that is invertible is also called “non-singular” and a matrix that does not have an inverse is also called “singular”.

The general equation for the inverse of a matrix is:

$$\mathbf{M}^{-1} = \frac{\operatorname{adj}\mathbf{M}}{|\mathbf{M}|}$$

Where $\operatorname{adj}\mathbf{M}$ is the classical adjoint, also known as the adjugate of the matrix, and $|\mathbf{M}|$ is the determinant of the matrix defined in the previous section. The adjugate of a matrix $\mathbf{M}$ is the transpose of the matrix of cofactors. The fact that the denominator of the ratio is the determinant of the matrix explains why only matrices whose determinant is not $0$ have an inverse.

For a $3 \times 3$ matrix $\mathbf{M}$ with cofactors $C_{ij}$, the adjugate is:

$$\operatorname{adj}\mathbf{M} = \begin{bmatrix} C_{11} & C_{12} & C_{13} \\ C_{21} & C_{22} & C_{23} \\ C_{31} & C_{32} & C_{33} \end{bmatrix}^T = \begin{bmatrix} C_{11} & C_{21} & C_{31} \\ C_{12} & C_{22} & C_{32} \\ C_{13} & C_{23} & C_{33} \end{bmatrix}$$

As an example, let us take the matrix $\mathbf{M}$:

Once we have the adjugate of the matrix, we can compute the inverse of the matrix by dividing the adjugate by the determinant:
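The adjugate method can be sketched with exact rational arithmetic so that the result is easy to inspect. The matrix below is a hypothetical stand-in chosen for illustration, not necessarily the one used in the original example, and the helper names are assumptions. The sketch also assumes integer entries, because `Fraction(numerator, denominator)` is given integer arguments here.

```python
from fractions import Fraction

def minor(m, i, j):
    """Matrix obtained by removing row i and column j (zero-based) from m."""
    return [row[:j] + row[j + 1:] for r, row in enumerate(m) if r != i]

def determinant(m):
    """Determinant by cofactor expansion along the first row."""
    if not m:
        return 1  # determinant of the empty matrix, ends the recursion
    return sum((-1) ** j * m[0][j] * determinant(minor(m, 0, j))
               for j in range(len(m)))

def adjugate(m):
    """Transpose of the matrix of cofactors."""
    n = len(m)
    cof = [[(-1) ** (i + j) * determinant(minor(m, i, j)) for j in range(n)]
           for i in range(n)]
    return [[cof[j][i] for j in range(n)] for i in range(n)]

def inverse(m):
    """Inverse as adjugate divided by determinant, using exact fractions."""
    det = determinant(m)
    if det == 0:
        raise ValueError("matrix is singular and has no inverse")
    return [[Fraction(x, det) for x in row] for row in adjugate(m)]

def matmul(a, b):
    """Product of two square matrices of the same size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

# Hypothetical example matrix with integer entries.
M = [[-4, -3, 3],
     [0, 2, -2],
     [1, 4, -1]]

print(determinant(M))  # -24
print(adjugate(M))     # [[6, 9, 0], [-2, 1, -8], [-2, 13, -8]]
identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(matmul(M, inverse(M)) == identity)  # True
```

In practice, game code usually relies on specialized closed-form inverses or on a math library rather than a general adjugate routine, since the cofactor expansion becomes expensive for larger matrices.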

An interesting property of the inverse of a matrix is that a matrix multiplied by its inverse is the identity matrix:

$$\mathbf{M}\mathbf{M}^{-1} = \mathbf{M}^{-1}\mathbf{M} = \mathbf{I}$$

Orthogonal Matrices

A square matrix $\mathbf{M}$ is orthogonal if and only if the product of the matrix and its transpose is the identity matrix:

$$\mathbf{M}\mathbf{M}^T = \mathbf{I}$$

And since we know from the previous section that an invertible matrix $\mathbf{M}$ multiplied by its inverse $\mathbf{M}^{-1}$ is the identity matrix $\mathbf{I}$, we can conclude that the transpose of an orthogonal matrix is equal to its inverse:

$$\mathbf{M}^T = \mathbf{M}^{-1}$$

This is a very powerful property of orthogonal matrices because of the computational benefit that we can achieve if we know in advance that our matrix is orthogonal. Rotation and reflection, and combinations of the two, are the only orthogonal transformations. Translation is not a linear transformation, so it cannot be expressed as an orthogonal matrix, and a matrix that has a non-unit scale applied to it is no longer orthogonal. If we can guarantee the matrix is orthogonal, then we can take advantage of the fact that the transpose of the matrix is its inverse and avoid the cost of calculating the inverse the hard way.
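As a small check of this property, the sketch below multiplies a rotation matrix by its transpose and confirms that the result is the identity matrix to within floating-point tolerance. The 30 degree angle and the helper names are hypothetical choices for illustration.

```python
import math

def transpose(m):
    """Swap the rows and columns of a matrix."""
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    """Product of two square matrices of the same size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def rotation_z(theta):
    """Rotation by theta radians about the Z axis (column-vector convention)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

R = rotation_z(math.radians(30))
product = matmul(R, transpose(R))

# Compare against the identity with a tolerance, since cos^2 + sin^2 is only
# approximately 1 in floating-point arithmetic.
is_identity = all(abs(product[i][j] - (1.0 if i == j else 0.0)) < 1e-12
                  for i in range(3) for j in range(3))
print(is_identity)  # True
```

Transposing a 3×3 matrix only swaps six values, which is far cheaper than computing cofactors and a determinant.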

How can we tell if an arbitrary matrix $\mathbf{M}$ is orthogonal? Let us assume $\mathbf{M}$ is a $3 \times 3$ matrix, then by definition $\mathbf{M}$ is orthogonal if and only if $\mathbf{M}\mathbf{M}^T = \mathbf{I}$.

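If we rewrite the matrix $\mathbf{M}$ as a set of column vectors:

$$\mathbf{M} = \begin{bmatrix} \mathbf{c}_1 & \mathbf{c}_2 & \mathbf{c}_3 \end{bmatrix}$$

Then, because $\mathbf{M}\mathbf{M}^T = \mathbf{I}$ holds for a square matrix exactly when $\mathbf{M}^T\mathbf{M} = \mathbf{I}$, we can write our matrix multiply as a series of dot products:

$$\mathbf{M}^T\mathbf{M} = \begin{bmatrix} \mathbf{c}_1 \cdot \mathbf{c}_1 & \mathbf{c}_1 \cdot \mathbf{c}_2 & \mathbf{c}_1 \cdot \mathbf{c}_3 \\ \mathbf{c}_2 \cdot \mathbf{c}_1 & \mathbf{c}_2 \cdot \mathbf{c}_2 & \mathbf{c}_2 \cdot \mathbf{c}_3 \\ \mathbf{c}_3 \cdot \mathbf{c}_1 & \mathbf{c}_3 \cdot \mathbf{c}_2 & \mathbf{c}_3 \cdot \mathbf{c}_3 \end{bmatrix} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}$$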

From this, we can make some interpretations:

- The dot product of a vector with itself is one if and only if the vector has a length of one; that is, it is a unit vector.

- The dot product of two vectors is zero if and only if they are perpendicular.

So for a matrix to be orthogonal, the following must be true:

- Each column of the matrix must be a unit vector. This fact implies that there can be no scale applied to the matrix.

- The columns of the matrix must be mutually perpendicular.
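The two conditions above can be turned into a simple orthogonality test. This is a sketch under the assumption that a small absolute tolerance is acceptable for floating-point input; the function names `column`, `dot`, and `is_orthogonal` and the default tolerance are hypothetical.

```python
def column(m, j):
    """Extract column j of a matrix stored as a list of rows."""
    return [row[j] for row in m]

def dot(a, b):
    """Dot product of two vectors."""
    return sum(x * y for x, y in zip(a, b))

def is_orthogonal(m, tol=1e-9):
    """True if every column is unit length and the columns are mutually
    perpendicular, to within the tolerance tol."""
    n = len(m)
    for i in range(n):
        for j in range(n):
            expected = 1.0 if i == j else 0.0
            if abs(dot(column(m, i), column(m, j)) - expected) > tol:
                return False
    return True

rotation = [[0, -1, 0], [1, 0, 0], [0, 0, 1]]   # 90 degrees about Z
scaled = [[2, 0, 0], [0, 1, 0], [0, 0, 1]]      # first column is not unit length
sheared = [[1, 1, 0], [0, 1, 0], [0, 0, 1]]     # columns are not perpendicular

print(is_orthogonal(rotation), is_orthogonal(scaled), is_orthogonal(sheared))
# True False False
```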