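Learning the DeepLearningToolBox: NN (neural network)

The classic DeepLearningToolBox pairs well with Andrew Ng's UFLDL tutorial. Reading its models side by side with the tutorial proved very instructive.

Download: DeepLearningToolBox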

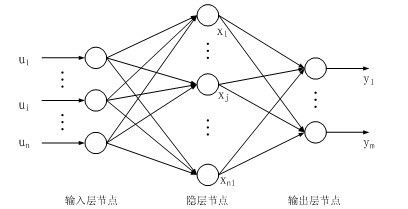

A neural network model in which adjacent layers are fully connected.

1. test_example_NN

    %% ex1 vanilla neural net
    rand('state',0)
    nn = nnsetup([784 100 10]);
    opts.numepochs = 1;   % Number of full sweeps through data
    opts.batchsize = 100; % Take a mean gradient step over this many samples
    [nn, L] = nntrain(nn, train_x, train_y, opts);
    [er, bad] = nntest(nn, test_x, test_y);

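batchsize is the number of samples in each batch. For example, with 60000 images and batchsize = 100, every 100 images are processed together as one batch during training (or testing). That gives 600 batches, and therefore 600 weight updates per epoch. Mini-batches like this are standard in deep learning.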
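To make the batch arithmetic concrete, here is a minimal sketch. It assumes 60000 samples as in the example above; the variable l and the chosen batch number are illustrative only.

```matlab
m = 60000;                    % number of training samples (assumed, as in the text)
batchsize = 100;              % samples per mini-batch
numbatches = m / batchsize;   % 600 weight updates per epoch

% Row indices of the l-th mini-batch after shuffling the sample order
kk = randperm(m);
l = 3;                        % illustrative batch number
idx = kk((l - 1) * batchsize + 1 : l * batchsize);
```

Each index appears in exactly one batch per epoch, because kk is a permutation of 1..m.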

2. nnsetup

Sets up the network structure and initializes its parameters:

    function nn = nnsetup(architecture)
    % NNSETUP creates a Feedforward Backpropagate Neural Network
    % nn = nnsetup(architecture) returns an neural network structure with n=numel(architecture)
    % layers, architecture being a n x 1 vector of layer sizes e.g. [784 100 10]

        nn.size = architecture;
        nn.n = numel(nn.size);

        nn.activation_function = 'tanh_opt'; % Activation functions of hidden layers: 'sigm' (sigmoid) or 'tanh_opt' (optimal tanh).
        nn.learningRate = 2;                 % learning rate Note: typically needs to be lower when using 'sigm' activation function and non-normalized inputs.
        nn.momentum = 0.5;                   % Momentum
        nn.scaling_learningRate = 1;         % Scaling factor for the learning rate (each epoch)
        nn.weightPenaltyL2 = 0;              % L2 regularization
        nn.nonSparsityPenalty = 0;           % Non sparsity penalty
        nn.sparsityTarget = 0.05;            % Sparsity target
        nn.inputZeroMaskedFraction = 0;      % Used for Denoising AutoEncoders
        nn.dropoutFraction = 0;              % Dropout level (http://www.cs.toronto.edu/~hinton/absps/dropout.pdf)
        nn.testing = 0;                      % Internal variable. nntest sets this to one.
        nn.output = 'sigm';                  % output unit 'sigm' (=logistic), 'softmax' and 'linear'

        for i = 2 : nn.n
            % weights and weight momentum
            nn.W{i - 1} = (rand(nn.size(i), nn.size(i - 1)+1) - 0.5) * 2 * 4 * sqrt(6 / (nn.size(i) + nn.size(i - 1)));
            nn.vW{i - 1} = zeros(size(nn.W{i - 1}));

            % average activations (for use with sparsity)
            nn.p{i} = zeros(1, nn.size(i));
        end
    end

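The architecture argument gives the number of nodes in each layer. For [784 100 10], the input layer has 784 nodes, the single hidden layer has 100 nodes, and the output layer has 10 nodes. Each weight matrix nn.W{i - 1} has size nn.size(i) x (nn.size(i - 1) + 1); the extra column holds the bias weights.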
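The shapes that result from this initialization can be checked with a short standalone sketch. It mirrors the loop in nnsetup but uses local names (W, fan_in, fan_out, r) that are illustrative and not part of the toolbox.

```matlab
architecture = [784 100 10];
n = numel(architecture);
W = cell(1, n - 1);
for i = 2 : n
    fan_in  = architecture(i - 1);
    fan_out = architecture(i);
    r = 4 * sqrt(6 / (fan_in + fan_out));                  % half-width of the uniform range
    W{i - 1} = (rand(fan_out, fan_in + 1) - 0.5) * 2 * r;  % +1 column for the bias
end
disp(size(W{1}));   % 100 785
disp(size(W{2}));   % 10 101
```

The weights are drawn uniformly from [-r, r], so layers with more incoming and outgoing connections start with smaller weights.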

3. nntrain

    function [nn, L] = nntrain(nn, train_x, train_y, opts, val_x, val_y)
    %NNTRAIN trains a neural net
    % [nn, L] = nntrain(nn, x, y, opts) trains the neural network nn with input x and
    % output y for opts.numepochs epochs, with minibatches of size
    % opts.batchsize. Returns a neural network nn with updated activations,
    % errors, weights and biases, (nn.a, nn.e, nn.W, nn.b) and L, the sum
    % squared error for each training minibatch.

        assert(isfloat(train_x), 'train_x must be a float');
        assert(nargin == 4 || nargin == 6, 'number of input arguments must be 4 or 6')

        loss.train.e      = [];
        loss.train.e_frac = [];
        loss.val.e        = [];
        loss.val.e_frac   = [];
        opts.validation = 0;
        if nargin == 6
            opts.validation = 1;
        end

        fhandle = [];
        if isfield(opts,'plot') && opts.plot == 1
            fhandle = figure();
        end

        m = size(train_x, 1);

        batchsize = opts.batchsize;
        numepochs = opts.numepochs;

        numbatches = m / batchsize;

        assert(rem(numbatches, 1) == 0, 'numbatches must be a integer');

        L = zeros(numepochs*numbatches,1);
        n = 1;
        for i = 1 : numepochs
            tic;

            kk = randperm(m); % shuffle the sample order
            for l = 1 : numbatches
                batch_x = train_x(kk((l - 1) * batchsize + 1 : l * batchsize), :);

                % Add noise to input (for use in denoising autoencoder):
                % a fraction inputZeroMaskedFraction of the input entries is set to 0
                if(nn.inputZeroMaskedFraction ~= 0)
                    batch_x = batch_x.*(rand(size(batch_x))>nn.inputZeroMaskedFraction);
                end

                batch_y = train_y(kk((l - 1) * batchsize + 1 : l * batchsize), :);

                % nnff: forward pass, nnbp: backward pass, nnapplygrads: gradient descent step
                nn = nnff(nn, batch_x, batch_y);
                nn = nnbp(nn);
                nn = nnapplygrads(nn);

                L(n) = nn.L;

                n = n + 1;
            end

            t = toc;

            if opts.validation == 1
                loss = nneval(nn, loss, train_x, train_y, val_x, val_y);
                str_perf = sprintf('; Full-batch train mse = %f, val mse = %f', loss.train.e(end), loss.val.e(end));
            else
                loss = nneval(nn, loss, train_x, train_y);
                str_perf = sprintf('; Full-batch train err = %f', loss.train.e(end));
            end
            if ishandle(fhandle)
                nnupdatefigures(nn, fhandle, loss, opts, i);
            end

            disp(['epoch ' num2str(i) '/' num2str(opts.numepochs) '. Took ' num2str(t) ' seconds' '. Mini-batch mean squared error on training set is ' num2str(mean(L((n-numbatches):(n-1)))) str_perf]);
            nn.learningRate = nn.learningRate * nn.scaling_learningRate;
        end
    end

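Here m = size(train_x, 1) is the number of training samples, batchsize is the size of each batch, and numbatches is the number of batches per epoch.

L is a column vector of length numepochs*numbatches; L((i - 1) * numbatches + j) is the loss of the j-th batch in the i-th epoch.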
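The indexing into L can be illustrated with a small sketch. The sizes and loss values below are made up for illustration, and epoch_mean is a hypothetical name.

```matlab
numepochs = 3;
numbatches = 4;
L = (1 : numepochs * numbatches)';   % stand-in values for the per-batch losses

i = 2;  j = 3;                       % epoch 2, batch 3
n = (i - 1) * numbatches + j;        % position of that batch in L

% Mean loss per epoch: after reshaping, each column holds one epoch
epoch_mean = mean(reshape(L, numbatches, numepochs), 1);
```

This is the same bookkeeping that the counter n performs inside nntrain, where it is simply incremented after every batch.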

Each iteration trains on one batch and updates both the network parameters and the loss. This work is done by the following three functions:

4. nnff

nnff is the forward-propagation function. It computes the output of every layer and stores it in the nn structure.

    function nn = nnff(nn, x, y)
    %NNFF performs a feedforward pass
    % nn = nnff(nn, x, y) returns an neural network structure with updated
    % layer activations, error and loss (nn.a, nn.e and nn.L)

        n = nn.n;
        m = size(x, 1);

        x = [ones(m,1) x];
        nn.a{1} = x;

        %feedforward pass
        for i = 2 : n-1
            switch nn.activation_function
                case 'sigm'
                    % Calculate the unit's outputs (including the bias term)
                    nn.a{i} = sigm(nn.a{i - 1} * nn.W{i - 1}');
                case 'tanh_opt'
                    nn.a{i} = tanh_opt(nn.a{i - 1} * nn.W{i - 1}');
            end

            %dropout
            if(nn.dropoutFraction > 0)
                if(nn.testing)
                    nn.a{i} = nn.a{i}.*(1 - nn.dropoutFraction);
                else
                    nn.dropOutMask{i} = (rand(size(nn.a{i}))>nn.dropoutFraction);
                    nn.a{i} = nn.a{i}.*nn.dropOutMask{i};
                end
            end

            %calculate running exponential activations for use with sparsity
            %(nonSparsityPenalty weights the penalty on units whose average
            % activation deviates from sparsityTarget)
            if(nn.nonSparsityPenalty>0)
                nn.p{i} = 0.99 * nn.p{i} + 0.01 * mean(nn.a{i}, 1);
            end

            %Add the bias term
            nn.a{i} = [ones(m,1) nn.a{i}];
        end
        switch nn.output
            case 'sigm'
                nn.a{n} = sigm(nn.a{n - 1} * nn.W{n - 1}');
            case 'linear'
                nn.a{n} = nn.a{n - 1} * nn.W{n - 1}';
            case 'softmax'
                nn.a{n} = nn.a{n - 1} * nn.W{n - 1}';
                nn.a{n} = exp(bsxfun(@minus, nn.a{n}, max(nn.a{n},[],2)));
                nn.a{n} = bsxfun(@rdivide, nn.a{n}, sum(nn.a{n}, 2));
        end

        %error and loss
        nn.e = y - nn.a{n};

        switch nn.output
            case {'sigm', 'linear'}
                nn.L = 1/2 * sum(sum(nn.e .^ 2)) / m;
            case 'softmax'
                nn.L = -sum(sum(y .* log(nn.a{n}))) / m;
        end
    end

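The line x = [ones(m,1) x]; prepends a column of ones to the input, so the bias is handled by the same matrix product. With b the column of bias weights stored as the first column of the weight matrix: x*W' + b' = [1, x]*[b, W]'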
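The bias trick can be verified numerically. This is a small sketch with made-up sizes; Wb, W, b, z_aug and z_plain are illustrative names, not toolbox fields.

```matlab
m = 5;  d = 3;  h = 2;        % samples, input size, layer size (made up)
x  = rand(m, d);
Wb = rand(h, d + 1);          % first column plays the role of the bias
b  = Wb(:, 1)';               % 1 x h row of biases
W  = Wb(:, 2:end);            % h x d weights without the bias column

z_aug   = [ones(m, 1) x] * Wb';          % augmented input, single product
z_plain = x * W' + repmat(b, m, 1);      % explicit weights plus bias
max_diff = max(abs(z_aug(:) - z_plain(:)));
```

max_diff should be zero up to floating-point rounding, which is why the toolbox never stores a separate bias vector.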

The nonlinearity applied to the hidden layers is selected by activation_function.

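If dropoutFraction is greater than 0, dropout is applied to the hidden-layer activations to improve generalization. The loop runs over layers 2 to n-1, so the output layer is not affected.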

nn.dropOutMask{i} = (rand(size(nn.a{i}))>nn.dropoutFraction);

nn.a{i} = nn.a{i}.*nn.dropOutMask{i};

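During training, these two statements set each hidden activation to 0 with probability dropoutFraction. During testing, the activations are instead scaled by (1 - dropoutFraction).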
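A minimal sketch of both branches, using a matrix of ones as stand-in activations so the effect is easy to see. The names a_train, a_test and frac_dropped are illustrative.

```matlab
dropoutFraction = 0.5;
a = ones(1000, 50);                        % stand-in hidden activations

mask = rand(size(a)) > dropoutFraction;    % 1 = keep the unit, 0 = drop it
a_train = a .* mask;                       % training: dropped units output 0
a_test  = a .* (1 - dropoutFraction);      % testing: scale instead of masking

frac_dropped = 1 - mean(mask(:));          % close to dropoutFraction
```

Scaling at test time keeps the expected activation the same as during training, since each unit is kept with probability 1 - dropoutFraction.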

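Next comes the sparsity term. nonSparsityPenalty is the coefficient of the penalty applied to hidden units whose average activation deviates from sparsityTarget.

nn.p{i} = 0.99 * nn.p{i} + 0.01 * mean(nn.a{i}, 1); keeps a running, exponentially weighted estimate of each hidden unit's average activation. It plays the role of the average activation in the sparse autoencoder section of UFLDL.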
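Written out, with the notation assumed here to be ρ for sparsityTarget, β for nonSparsityPenalty, and ρ̂_j for the running average stored in nn.p{i}, the update and the derivative of the UFLDL-style KL penalty are:

```latex
\hat\rho_j \leftarrow 0.99\,\hat\rho_j + 0.01\cdot\frac{1}{m}\sum_{k=1}^{m} a_j^{(k)},
\qquad
\frac{\partial}{\partial \hat\rho_j}\,\beta\,\mathrm{KL}\!\left(\rho \,\|\, \hat\rho_j\right)
  = \beta\left(-\frac{\rho}{\hat\rho_j} + \frac{1-\rho}{1-\hat\rho_j}\right)
```

The right-hand expression is the same quantity that nnbp computes as sparsityError. Note that the toolbox uses a running average across batches, whereas UFLDL averages over the whole training set.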

Finally, the error and the loss are computed and stored in nn.
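The softmax branch subtracts the row maximum before exponentiating. The sketch below, with made-up scores z and targets y, shows why: the first row would overflow with a naive exp(z).

```matlab
z = [1000 1001 1002; -5 0 5];                 % raw output scores, one row per sample
y = [0 0 1; 1 0 0];                           % one-hot targets
m = size(z, 1);

p = exp(bsxfun(@minus, z, max(z, [], 2)));    % subtract the row max to avoid overflow
p = bsxfun(@rdivide, p, sum(p, 2));           % normalize so each row sums to 1

loss = -sum(sum(y .* log(p))) / m;            % mean cross-entropy, as in nnff
```

Subtracting a per-row constant does not change the softmax result, because the constant cancels in the ratio.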

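5. nnbp

Backpropagation: starting from the error at the last layer, the deltas are propagated back toward the first layer and the gradient dW of each layer is computed. The biases are stored in the first column of each W, so there is no separate gradient for b.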
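In equation form, the quantities computed by nnbp can be summarized as follows. The notation is an assumption made for this summary: δ^{(i)} is d{i}, s^{(i)} is sparsityError, f' is the activation derivative d_act, ⊙ is the elementwise product, and m is the batch size.

```latex
\begin{aligned}
\delta^{(n)} &=
\begin{cases}
 -(y - a^{(n)}) \odot a^{(n)} \odot (1 - a^{(n)}) & \text{output = sigm} \\
 -(y - a^{(n)}) & \text{output = softmax or linear}
\end{cases} \\
\delta^{(i)} &= \left(\delta^{(i+1)} W^{(i)} + s^{(i)}\right) \odot f'\!\left(a^{(i)}\right),
  \qquad i = n-1, \dots, 2 \\
\nabla W^{(i)} &= \frac{1}{m}\,\bigl(\delta^{(i+1)}\bigr)^{\top} a^{(i)}
\end{aligned}
```

For hidden layers, the bias column of δ^{(i+1)} is dropped before it is used in the last two lines, which is what the (:,2:end) indexing in the code does.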

    function nn = nnbp(nn)
    %NNBP performs backpropagation
    % nn = nnbp(nn) returns an neural network structure with updated weights

        n = nn.n;
        sparsityError = 0;
        switch nn.output
            case 'sigm'
                d{n} = - nn.e .* (nn.a{n} .* (1 - nn.a{n}));
            case {'softmax','linear'}
                d{n} = - nn.e;
        end
        for i = (n - 1) : -1 : 2
            % Derivative of the activation function
            switch nn.activation_function
                case 'sigm'
                    d_act = nn.a{i} .* (1 - nn.a{i});
                case 'tanh_opt'
                    d_act = 1.7159 * 2/3 * (1 - 1/(1.7159)^2 * nn.a{i}.^2);
            end

            if(nn.nonSparsityPenalty>0)
                pi = repmat(nn.p{i}, size(nn.a{i}, 1), 1);
                sparsityError = [zeros(size(nn.a{i},1),1) nn.nonSparsityPenalty * (-nn.sparsityTarget ./ pi + (1 - nn.sparsityTarget) ./ (1 - pi))];
            end

            % Backpropagate first derivatives
            if i+1==n % in this case in d{n} there is not the bias term to be removed
                d{i} = (d{i + 1} * nn.W{i} + sparsityError) .* d_act; % Bishop (5.56)
            else % in this case in d{i} the bias term has to be removed
                d{i} = (d{i + 1}(:,2:end) * nn.W{i} + sparsityError) .* d_act;
            end

            if(nn.dropoutFraction>0)
                d{i} = d{i} .* [ones(size(d{i},1),1) nn.dropOutMask{i}];
            end
        end

        for i = 1 : (n - 1)
            if i+1==n
                nn.dW{i} = (d{i + 1}' * nn.a{i}) / size(d{i + 1}, 1);
            else
                nn.dW{i} = (d{i + 1}(:,2:end)' * nn.a{i}) / size(d{i + 1}, 1);
            end
        end
    end

Note that the dropout and sparsity terms are included here.
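The tanh_opt derivative used in nnbp is written in terms of the activation a rather than the pre-activation z. A finite-difference check makes the connection explicit. This sketch assumes tanh_opt is defined as f(z) = 1.7159 * tanh(2/3 * z), which is what the derivative expression implies.

```matlab
f = @(z) 1.7159 * tanh(2/3 * z);     % assumed definition of tanh_opt
z = linspace(-3, 3, 13);
a = f(z);

% Expression used in nnbp, evaluated on the activations
d_analytic = 1.7159 * 2/3 * (1 - 1/(1.7159)^2 * a.^2);

% Central finite difference on the pre-activations
h = 1e-6;
d_numeric = (f(z + h) - f(z - h)) / (2 * h);

max_err = max(abs(d_analytic - d_numeric));
```

If the two agree to within numerical error, the backward pass can reuse the stored activations nn.a{i} without keeping the pre-activations.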

6. nnapplygrads

Updates the network parameters using the gradients computed by nnbp.

    function nn = nnapplygrads(nn)
    %NNAPPLYGRADS updates weights and biases with calculated gradients
    % nn = nnapplygrads(nn) returns an neural network structure with updated
    % weights and biases

        for i = 1 : (nn.n - 1)
            if(nn.weightPenaltyL2>0)
                dW = nn.dW{i} + nn.weightPenaltyL2 * [zeros(size(nn.W{i},1),1) nn.W{i}(:,2:end)];
            else
                dW = nn.dW{i};
            end

            dW = nn.learningRate * dW;

            if(nn.momentum>0)
                nn.vW{i} = nn.momentum*nn.vW{i} + dW;
                dW = nn.vW{i};
            end

            nn.W{i} = nn.W{i} - dW;
        end
    end

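weightPenaltyL2 is the weight decay term: an L2 penalty on the magnitude of the weights W, not a sparsity penalty. The bias column is excluded from it.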
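How the bias column is excluded can be seen in a small sketch. The weight values are made up, and the data gradient is set to zero so that only the penalty term remains.

```matlab
weightPenaltyL2 = 1e-4;
W  = [0.5 1 -2; -0.3 4 0];     % first column holds the bias weights
dW = zeros(size(W));           % pretend the data gradient is zero

% Penalty gradient: zero for the bias column, lambda * W elsewhere
penalty = weightPenaltyL2 * [zeros(size(W, 1), 1) W(:, 2:end)];
dW = dW + penalty;
```

After this step the first column of dW is still zero, so the biases are never shrunk toward zero by the regularizer.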

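learningRate is the learning rate. momentum is the momentum coefficient, which determines how much of the previous update is carried over into the current one.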
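The momentum update can be tried on a toy problem. This sketch minimizes 0.5 * w^2 for a single scalar w, whose gradient is simply w; the starting point and step count are arbitrary choices.

```matlab
learningRate = 0.1;
momentum = 0.5;
w = 5;                         % arbitrary starting point
v = 0;                         % velocity, like nn.vW{i}

for step = 1 : 50
    dW = learningRate * w;     % scaled gradient of 0.5 * w^2
    v  = momentum * v + dW;    % velocity accumulates past updates
    w  = w - v;                % same update order as nnapplygrads
end
```

With these settings w decays toward the minimum at 0. A larger momentum carries more of the earlier updates forward, which can speed up progress but can also cause oscillation.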

If numepochs = 1, the whole training set is swept once; otherwise it is swept numepochs times.

Once training is done, the model is evaluated on the test set.

7. nntest

    function [er, bad] = nntest(nn, x, y)
        labels = nnpredict(nn, x);
        [dummy, expected] = max(y,[],2);
        bad = find(labels ~= expected);
        er = numel(bad) / size(x, 1);
    end

nntest calls nnpredict to obtain the predicted labels and compares them with the ground truth to get the error rate.
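The comparison works on one-hot targets: the column index of the largest entry in each row is the class label. A small sketch with made-up outputs (out stands in for the network output that nnpredict would produce):

```matlab
y   = [1 0 0; 0 1 0; 0 0 1; 0 1 0];                           % one-hot ground truth
out = [0.9 0.1 0.0; 0.2 0.3 0.5; 0.1 0.2 0.7; 0.1 0.8 0.1];   % stand-in network outputs

[~, labels]   = max(out, [], 2);     % predicted class per sample
[~, expected] = max(y, [], 2);       % true class per sample

bad = find(labels ~= expected);      % indices of misclassified samples
er  = numel(bad) / size(y, 1);       % error rate
```

Here only the second sample is misclassified, so bad is 2 and er is 0.25.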

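nnpredict only runs nnff (forward propagation), so it is not covered again here.

That completes the walkthrough of NN. A traditional neural network is easy to understand and easy to implement.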
