- 浅谈MapReduce

Android路上的人

Hadoop分布式计算mapreduce分布式框架hadoop

从今天开始,本人将会开始对另一项技术的学习,就是当下炙手可热的Hadoop分布式就算技术。目前国内外的诸多公司因为业务发展的需要,都纷纷用了此平台。国内的比如BAT啦,国外的在这方面走的更加的前面,就不一一列举了。但是Hadoop作为Apache的一个开源项目,在下面有非常多的子项目,比如HDFS,HBase,Hive,Pig,等等,要先彻底学习整个Hadoop,仅仅凭借一个的力量,是远远不够的。

- Hadoop

傲雪凌霜,松柏长青

后端大数据hadoop大数据分布式

ApacheHadoop是一个开源的分布式计算框架,主要用于处理海量数据集。它具有高度的可扩展性、容错性和高效的分布式存储与计算能力。Hadoop核心由四个主要模块组成,分别是HDFS(分布式文件系统)、MapReduce(分布式计算框架)、YARN(资源管理)和HadoopCommon(公共工具和库)。1.HDFS(HadoopDistributedFileSystem)HDFS是Hadoop生

- Hadoop架构

henan程序媛

hadoop大数据分布式

一、案列分析1.1案例概述现在已经进入了大数据(BigData)时代,数以万计用户的互联网服务时时刻刻都在产生大量的交互,要处理的数据量实在是太大了,以传统的数据库技术等其他手段根本无法应对数据处理的实时性、有效性的需求。HDFS顺应时代出现,在解决大数据存储和计算方面有很多的优势。1.2案列前置知识点1.什么是大数据大数据是指无法在一定时间范围内用常规软件工具进行捕捉、管理和处理的大量数据集合,

- 分享一个基于python的电子书数据采集与可视化分析 hadoop电子书数据分析与推荐系统 spark大数据毕设项目(源码、调试、LW、开题、PPT)

计算机源码社

Python项目大数据大数据pythonhadoop计算机毕业设计选题计算机毕业设计源码数据分析spark毕设

作者:计算机源码社个人简介:本人八年开发经验,擅长Java、Python、PHP、.NET、Node.js、Android、微信小程序、爬虫、大数据、机器学习等,大家有这一块的问题可以一起交流!学习资料、程序开发、技术解答、文档报告如需要源码,可以扫取文章下方二维码联系咨询Java项目微信小程序项目Android项目Python项目PHP项目ASP.NET项目Node.js项目选题推荐项目实战|p

- hbase介绍

CrazyL-

云计算+大数据hbase

hbase是一个分布式的、多版本的、面向列的开源数据库hbase利用hadoophdfs作为其文件存储系统,提供高可靠性、高性能、列存储、可伸缩、实时读写、适用于非结构化数据存储的数据库系统hbase利用hadoopmapreduce来处理hbase、中的海量数据hbase利用zookeeper作为分布式系统服务特点:数据量大:一个表可以有上亿行,上百万列(列多时,插入变慢)面向列:面向列(族)的

- 大数据毕业设计hadoop+spark+hive知识图谱租房数据分析可视化大屏 租房推荐系统 58同城租房爬虫 房源推荐系统 房价预测系统 计算机毕业设计 机器学习 深度学习 人工智能

2401_84572577

程序员大数据hadoop人工智能

做了那么多年开发,自学了很多门编程语言,我很明白学习资源对于学一门新语言的重要性,这些年也收藏了不少的Python干货,对我来说这些东西确实已经用不到了,但对于准备自学Python的人来说,或许它就是一个宝藏,可以给你省去很多的时间和精力。别在网上瞎学了,我最近也做了一些资源的更新,只要你是我的粉丝,这期福利你都可拿走。我先来介绍一下这些东西怎么用,文末抱走。(1)Python所有方向的学习路线(

- Spark集群的三种模式

MelodyYN

#Sparksparkhadoopbigdata

文章目录1、Spark的由来1.1Hadoop的发展1.2MapReduce与Spark对比2、Spark内置模块3、Spark运行模式3.1Standalone模式部署配置历史服务器配置高可用运行模式3.2Yarn模式安装部署配置历史服务器运行模式4、WordCount案例1、Spark的由来定义:Hadoop主要解决,海量数据的存储和海量数据的分析计算。Spark是一种基于内存的快速、通用、可

- 月度总结 | 2022年03月 | 考研与就业的抉择 | 确定未来走大数据开发路线

「已注销」

个人总结hadoop

一、时间线梳理3月3日,寻找到同专业的就业伙伴3月5日,着手准备Java八股文,决定先走Java后端路线3月8月,申请到了校图书馆的考研专座,决定暂时放弃就业,先准备考研,买了数学和408的资料书3月9日-3月13日,因疫情原因,宿舍区暂封,这段时间在准备考研,发现内容特别多3月13日-3月19日,大部分时间在刷Hadoop、Zookeeper、Kafka的视频,同时在准备实习的项目3月20日,退

- HBase介绍

mingyu1016

数据库

概述HBase是一个分布式的、面向列的开源数据库,源于google的一篇论文《bigtable:一个结构化数据的分布式存储系统》。HBase是GoogleBigtable的开源实现,它利用HadoopHDFS作为其文件存储系统,利用HadoopMapReduce来处理HBase中的海量数据,利用Zookeeper作为协同服务。HBase的表结构HBase以表的形式存储数据。表有行和列组成。列划分为

- Java中的大数据处理框架对比分析

省赚客app开发者

java开发语言

Java中的大数据处理框架对比分析大家好,我是微赚淘客系统3.0的小编,是个冬天不穿秋裤,天冷也要风度的程序猿!今天,我们将深入探讨Java中常用的大数据处理框架,并对它们进行对比分析。大数据处理框架是现代数据驱动应用的核心,它们帮助企业处理和分析海量数据,以提取有价值的信息。本文将重点介绍ApacheHadoop、ApacheSpark、ApacheFlink和ApacheStorm这四种流行的

- Hadoop windows intelij 跑 MR WordCount

piziyang12138

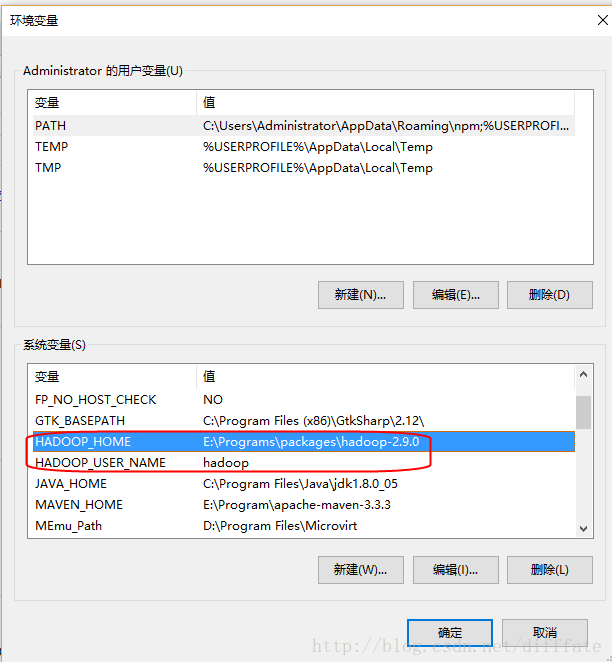

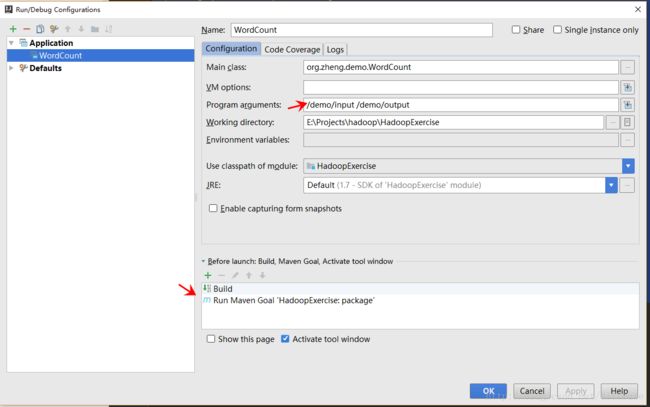

一、软件环境我使用的软件版本如下:IntellijIdea2017.1Maven3.3.9Hadoop分布式环境二、创建maven工程打开Idea,file->new->Project,左侧面板选择maven工程。(如果只跑MapReduce创建java工程即可,不用勾选Creatfromarchetype,如果想创建web工程或者使用骨架可以勾选)image.png设置GroupId和Artif

- Hadoop学习第三课(HDFS架构--读、写流程)

小小程序员呀~

数据库hadoop架构bigdata

1.块概念举例1:一桶水1000ml,瓶子的规格100ml=>需要10个瓶子装完一桶水1010ml,瓶子的规格100ml=>需要11个瓶子装完一桶水1010ml,瓶子的规格200ml=>需要6个瓶子装完块的大小规格,只要是需要存储,哪怕一点点,也是要占用一个块的块大小的参数:dfs.blocksize官方默认的大小为128M官网:https://hadoop.apache.org/docs/r3.

- hadoop启动HDFS命令

m0_67401228

java搜索引擎linux后端

启动命令:/hadoop/sbin/start-dfs.sh停止命令:/hadoop/sbin/stop-dfs.sh

- 【计算机毕设-大数据方向】基于Hadoop的电商交易数据分析可视化系统的设计与实现

程序员-石头山

大数据实战案例大数据hadoop毕业设计毕设

博主介绍:✌全平台粉丝5W+,高级大厂开发程序员,博客之星、掘金/知乎/华为云/阿里云等平台优质作者。【源码获取】关注并且私信我【联系方式】最下边感兴趣的可以先收藏起来,同学门有不懂的毕设选题,项目以及论文编写等相关问题都可以和学长沟通,希望帮助更多同学解决问题前言随着电子商务行业的迅猛发展,电商平台积累了海量的数据资源,这些数据不仅包括用户的基本信息、购物记录,还包括用户的浏览行为、评价反馈等多

- 分布式离线计算—Spark—基础介绍

测试开发abbey

人工智能—大数据

原文作者:饥渴的小苹果原文地址:【Spark】Spark基础教程目录Spark特点Spark相对于Hadoop的优势Spark生态系统Spark基本概念Spark结构设计Spark各种概念之间的关系Executor的优点Spark运行基本流程Spark运行架构的特点Spark的部署模式Spark三种部署方式Hadoop和Spark的统一部署摘要:Spark是基于内存计算的大数据并行计算框架Spar

- spark常用命令

我是浣熊的微笑

spark

查看报错日志:yarnlogsapplicationIDspark2-submit--masteryarn--classcom.hik.ReadHdfstest-1.0-SNAPSHOT.jar进入$SPARK_HOME目录,输入bin/spark-submit--help可以得到该命令的使用帮助。hadoop@wyy:/app/hadoop/spark100$bin/spark-submit--

- spark启动命令

学不会又听不懂

spark大数据分布式

hadoop启动:cd/root/toolssstart-dfs.sh,只需在hadoop01上启动stop-dfs.sh日志查看:cat/root/toolss/hadoop/logs/hadoop-root-datanode-hadoop03.outzookeeper启动:cd/root/toolss/zookeeperbin/zkServer.shstart,三台都要启动bin/zkServ

- 编程常用命令总结

Yellow0523

LinuxBigData大数据

编程命令大全1.软件环境变量的配置JavaScalaSparkHadoopHive2.大数据软件常用命令Spark基本命令Spark-SQL命令Hive命令HDFS命令YARN命令Zookeeper命令kafka命令Hibench命令MySQL命令3.Linux常用命令Git命令conda命令pip命令查看Linux系统的详细信息查看Linux系统架构(X86还是ARM,两种方法都可)端口号命令L

- Hadoop常见面试题整理及解答

叶青舟

Linuxhdfs大数据hadooplinux

Hadoop常见面试题整理及解答一、基础知识篇:1.把数据仓库从传统关系型数据库转到hadoop有什么优势?答:(1)关系型数据库成本高,且存储空间有限。而Hadoop使用较为廉价的机器存储数据,且Hadoop可以将大量机器构建成一个集群,并在集群中使用HDFS文件系统统一管理数据,极大的提高了数据的存储及处理能力。(2)关系型数据库仅支持标准结构化数据格式,Hadoop不仅支持标准结构化数据格式

- 2025毕业设计指南:如何用Hadoop构建超市进货推荐系统?大数据分析助力精准采购

计算机编程指导师

Java实战集Python实战集大数据实战集课程设计hadoop数据分析springbootjava进货python

✍✍计算机编程指导师⭐⭐个人介绍:自己非常喜欢研究技术问题!专业做Java、Python、小程序、安卓、大数据、爬虫、Golang、大屏等实战项目。⛽⛽实战项目:有源码或者技术上的问题欢迎在评论区一起讨论交流!⚡⚡Java实战|SpringBoot/SSMPython实战项目|Django微信小程序/安卓实战项目大数据实战项目⚡⚡文末获取源码文章目录⚡⚡文末获取源码基于hadoop的超市进货推荐系

- Hadoop Common 之序列化机制小解

猫君之上

#ApacheHadoop

1.JavaSerializable序列化该序列化通过ObjectInputStream的readObject实现序列化,ObjectOutputStream的writeObject实现反序列化。这不过此种序列化虽然跨病态兼容性强,但是因为存储过多的信息,但是传输效率比较低,所以hadoop弃用它。(序列化信息包括这个对象的类,类签名,类的所有静态,费静态成员的值,以及他们父类都要被写入)publ

- 深入理解hadoop(一)----Common的实现----Configuration

maoxiao_jsd

深入理解----hadoop

属本人个人原创,转载请注明,希望对大家有帮助!!一,hadoop的配置管理a,hadoop通过独有的Configuration处理配置信息Configurationconf=newConfiguration();conf.addResource("core-default.xml");conf.addResource("core-site.xml");后者会覆盖前者中未final标记的相同配置项b

- hadoop 0.22.0 部署笔记

weixin_33701564

大数据java运维

为什么80%的码农都做不了架构师?>>>因为需要使用hbase,所以开始对hbase进行学习。hbase是部署在hadoop平台上的NOSql数据库,因此在部署hbase之前需要先部署hadoop。环境:redhat5、hadoop-0.22.0.tar.gz、jdk-6u13-linux-i586.zipip192.168.1.128hostname:localhost.localdomain(

- 解决Windows环境下hadoop集群的运行_window运行hadoop,unknown hadoop01(4)

2401_84160087

大数据面试学习

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。需要这份系统化资料的朋友,可以戳这里获取一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!org.apache.hadoophadoop-com

- 解决Windows环境下hadoop集群的运行_window运行hadoop,unknown hadoop01(3)

2401_84160087

大数据面试学习

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。需要这份系统化资料的朋友,可以戳这里获取一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!xmlns:xsi="http://www.w3.or

- 深入解析HDFS:定义、架构、原理、应用场景及常用命令

CloudJourney

hdfs架构hadoop

引言Hadoop分布式文件系统(HDFS,HadoopDistributedFileSystem)是Hadoop框架的核心组件之一,它提供了高可靠性、高可用性和高吞吐量的大规模数据存储和管理能力。本文将从HDFS的定义、架构、工作原理、应用场景以及常用命令等多个方面进行详细探讨,帮助读者全面深入地了解HDFS。1.HDFS的定义1.1什么是HDFSHDFS是Hadoop生态系统中的一个分布式文件系

- Hadoop的搭建流程

lzhlizihang

hadoop大数据分布式

文章目录一、配置IP二、配置主机名三、配置主机映射四、关闭防火墙五、配置免密六、安装jdk1、第一步:2、第二步:3、第三步:4、第四步:5、第五步:七、安装hadoop1、上传2、解压3、重命名4、开始配置环境变量5、刷新配置文件6、验证hadoop命令是否可以识别八、全分布搭建7、修改配置文件core-site.xml8、修改配置文件hdfs-site.xml9、修改配置文件hadoop-en

- hive搭建 -----内嵌模式和本地模式

lzhlizihang

hivehadoop

文章目录一、内嵌模式(使用较少)1、上传、解压、重命名2、配置环境变量3、配置conf下的hive-env.sh4、修改conf下的hive-site.xml5、启动hadoop集群6、给hdfs创建文件夹7、修改hive-site.xml中的非法字符8、初始化元数据9、测试是否成功10、内嵌模式的缺点二、本地模式(最常用)1、检查mysql是否正常2、上传、解压、重命名3、配置环境变量4、修改c

- Hadoop之mapreduce -- WrodCount案例以及各种概念

lzhlizihang

hadoopmapreduce大数据

文章目录一、MapReduce的优缺点二、MapReduce案例--WordCount1、导包2、Mapper方法3、Partitioner方法(自定义分区器)4、reducer方法5、driver(main方法)6、Writable(手机流量统计案例的实体类)三、关于片和块1、什么是片,什么是块?2、mapreduce启动多少个MapTask任务?四、MapReduce的原理五、Shuffle过

- IAAS: IT公司去IOE-Alibaba系统构架解读

wishchin

心理学/职业BigDataMiniSparkPaaS

从Hadoop到自主研发,技术解读阿里去IOE后的系统架构原地址:......................云计算阿里飞天摘要:从IOE时代,到Hadoop与飞天并行,再到飞天单集群5000节点的实现,阿里一直摸索在技术衍变的前沿。这里,我们将从架构、性能、运维等多个方面深入了解阿里基础设施。【导读】互联网的普及,智能终端的增加,大数据时代悄然而至。在这个数据为王的时代,数十倍、数百倍的数据给各

- rust的指针作为函数返回值是直接传递,还是先销毁后创建?

wudixiaotie

返回值

这是我自己想到的问题,结果去知呼提问,还没等别人回答, 我自己就想到方法实验了。。

fn main() {

let mut a = 34;

println!("a's addr:{:p}", &a);

let p = &mut a;

println!("p's addr:{:p}", &a

- java编程思想 -- 数据的初始化

百合不是茶

java数据的初始化

1.使用构造器确保数据初始化

/*

*在ReckInitDemo类中创建Reck的对象

*/

public class ReckInitDemo {

public static void main(String[] args) {

//创建Reck对象

new Reck();

}

}

- [航天与宇宙]为什么发射和回收航天器有档期

comsci

地球的大气层中有一个时空屏蔽层,这个层次会不定时的出现,如果该时空屏蔽层出现,那么将导致外层空间进入的任何物体被摧毁,而从地面发射到太空的飞船也将被摧毁...

所以,航天发射和飞船回收都需要等待这个时空屏蔽层消失之后,再进行

&

- linux下批量替换文件内容

商人shang

linux替换

1、网络上现成的资料

格式: sed -i "s/查找字段/替换字段/g" `grep 查找字段 -rl 路径`

linux sed 批量替换多个文件中的字符串

sed -i "s/oldstring/newstring/g" `grep oldstring -rl yourdir`

例如:替换/home下所有文件中的www.admi

- 网页在线天气预报

oloz

天气预报

网页在线调用天气预报

<%@ page language="java" contentType="text/html; charset=utf-8"

pageEncoding="utf-8"%>

<!DOCTYPE html PUBLIC "-//W3C//DTD HTML 4.01 Transit

- SpringMVC和Struts2比较

杨白白

springMVC

1. 入口

spring mvc的入口是servlet,而struts2是filter(这里要指出,filter和servlet是不同的。以前认为filter是servlet的一种特殊),这样就导致了二者的机制不同,这里就牵涉到servlet和filter的区别了。

参见:http://blog.csdn.net/zs15932616453/article/details/8832343

2

- refuse copy, lazy girl!

小桔子

copy

妹妹坐船头啊啊啊啊!都打算一点点琢磨呢。文字编辑也写了基本功能了。。今天查资料,结果查到了人家写得完完整整的。我清楚的认识到:

1.那是我自己觉得写不出的高度

2.如果直接拿来用,很快就能解决问题

3.然后就是抄咩~~

4.肿么可以这样子,都不想写了今儿个,留着作参考吧!拒绝大抄特抄,慢慢一点点写!

- apache与php整合

aichenglong

php apache web

一 apache web服务器

1 apeche web服务器的安装

1)下载Apache web服务器

2)配置域名(如果需要使用要在DNS上注册)

3)测试安装访问http://localhost/验证是否安装成功

2 apache管理

1)service.msc进行图形化管理

2)命令管理,配

- Maven常用内置变量

AILIKES

maven

Built-in properties

${basedir} represents the directory containing pom.xml

${version} equivalent to ${project.version} (deprecated: ${pom.version})

Pom/Project properties

Al

- java的类和对象

百合不是茶

JAVA面向对象 类 对象

java中的类:

java是面向对象的语言,解决问题的核心就是将问题看成是一个类,使用类来解决

java使用 class 类名 来创建类 ,在Java中类名要求和构造方法,Java的文件名是一样的

创建一个A类:

class A{

}

java中的类:将某两个事物有联系的属性包装在一个类中,再通

- JS控制页面输入框为只读

bijian1013

JavaScript

在WEB应用开发当中,增、删除、改、查功能必不可少,为了减少以后维护的工作量,我们一般都只做一份页面,通过传入的参数控制其是新增、修改或者查看。而修改时需将待修改的信息从后台取到并显示出来,实际上就是查看的过程,唯一的区别是修改时,页面上所有的信息能修改,而查看页面上的信息不能修改。因此完全可以将其合并,但通过前端JS将查看页面的所有信息控制为只读,在信息量非常大时,就比较麻烦。

- AngularJS与服务器交互

bijian1013

JavaScriptAngularJS$http

对于AJAX应用(使用XMLHttpRequests)来说,向服务器发起请求的传统方式是:获取一个XMLHttpRequest对象的引用、发起请求、读取响应、检查状态码,最后处理服务端的响应。整个过程示例如下:

var xmlhttp = new XMLHttpRequest();

xmlhttp.onreadystatechange

- [Maven学习笔记八]Maven常用插件应用

bit1129

maven

常用插件及其用法位于:http://maven.apache.org/plugins/

1. Jetty server plugin

2. Dependency copy plugin

3. Surefire Test plugin

4. Uber jar plugin

1. Jetty Pl

- 【Hive六】Hive用户自定义函数(UDF)

bit1129

自定义函数

1. 什么是Hive UDF

Hive是基于Hadoop中的MapReduce,提供HQL查询的数据仓库。Hive是一个很开放的系统,很多内容都支持用户定制,包括:

文件格式:Text File,Sequence File

内存中的数据格式: Java Integer/String, Hadoop IntWritable/Text

用户提供的 map/reduce 脚本:不管什么

- 杀掉nginx进程后丢失nginx.pid,如何重新启动nginx

ronin47

nginx 重启 pid丢失

nginx进程被意外关闭,使用nginx -s reload重启时报如下错误:nginx: [error] open() “/var/run/nginx.pid” failed (2: No such file or directory)这是因为nginx进程被杀死后pid丢失了,下一次再开启nginx -s reload时无法启动解决办法:nginx -s reload 只是用来告诉运行中的ng

- UI设计中我们为什么需要设计动效

brotherlamp

UIui教程ui视频ui资料ui自学

随着国际大品牌苹果和谷歌的引领,最近越来越多的国内公司开始关注动效设计了,越来越多的团队已经意识到动效在产品用户体验中的重要性了,更多的UI设计师们也开始投身动效设计领域。

但是说到底,我们到底为什么需要动效设计?或者说我们到底需要什么样的动效?做动效设计也有段时间了,于是尝试用一些案例,从产品本身出发来说说我所思考的动效设计。

一、加强体验舒适度

嗯,就是让用户更加爽更加爽的用你的产品。

- Spring中JdbcDaoSupport的DataSource注入问题

bylijinnan

javaspring

参考以下两篇文章:

http://www.mkyong.com/spring/spring-jdbctemplate-jdbcdaosupport-examples/

http://stackoverflow.com/questions/4762229/spring-ldap-invoking-setter-methods-in-beans-configuration

Sprin

- 数据库连接池的工作原理

chicony

数据库连接池

随着信息技术的高速发展与广泛应用,数据库技术在信息技术领域中的位置越来越重要,尤其是网络应用和电子商务的迅速发展,都需要数据库技术支持动 态Web站点的运行,而传统的开发模式是:首先在主程序(如Servlet、Beans)中建立数据库连接;然后进行SQL操作,对数据库中的对象进行查 询、修改和删除等操作;最后断开数据库连接。使用这种开发模式,对

- java 关键字

CrazyMizzz

java

关键字是事先定义的,有特别意义的标识符,有时又叫保留字。对于保留字,用户只能按照系统规定的方式使用,不能自行定义。

Java中的关键字按功能主要可以分为以下几类:

(1)访问修饰符

public,private,protected

p

- Hive中的排序语法

daizj

排序hiveorder byDISTRIBUTE BYsort by

Hive中的排序语法 2014.06.22 ORDER BY

hive中的ORDER BY语句和关系数据库中的sql语法相似。他会对查询结果做全局排序,这意味着所有的数据会传送到一个Reduce任务上,这样会导致在大数量的情况下,花费大量时间。

与数据库中 ORDER BY 的区别在于在hive.mapred.mode = strict模式下,必须指定 limit 否则执行会报错。

- 单态设计模式

dcj3sjt126com

设计模式

单例模式(Singleton)用于为一个类生成一个唯一的对象。最常用的地方是数据库连接。 使用单例模式生成一个对象后,该对象可以被其它众多对象所使用。

<?phpclass Example{ // 保存类实例在此属性中 private static&

- svn locked

dcj3sjt126com

Lock

post-commit hook failed (exit code 1) with output:

svn: E155004: Working copy 'D:\xx\xxx' locked

svn: E200031: sqlite: attempt to write a readonly database

svn: E200031: sqlite: attempt to write a

- ARM寄存器学习

e200702084

数据结构C++cC#F#

无论是学习哪一种处理器,首先需要明确的就是这种处理器的寄存器以及工作模式。

ARM有37个寄存器,其中31个通用寄存器,6个状态寄存器。

1、不分组寄存器(R0-R7)

不分组也就是说说,在所有的处理器模式下指的都时同一物理寄存器。在异常中断造成处理器模式切换时,由于不同的处理器模式使用一个名字相同的物理寄存器,就是

- 常用编码资料

gengzg

编码

List<UserInfo> list=GetUserS.GetUserList(11);

String json=JSON.toJSONString(list);

HashMap<Object,Object> hs=new HashMap<Object, Object>();

for(int i=0;i<10;i++)

{

- 进程 vs. 线程

hongtoushizi

线程linux进程

我们介绍了多进程和多线程,这是实现多任务最常用的两种方式。现在,我们来讨论一下这两种方式的优缺点。

首先,要实现多任务,通常我们会设计Master-Worker模式,Master负责分配任务,Worker负责执行任务,因此,多任务环境下,通常是一个Master,多个Worker。

如果用多进程实现Master-Worker,主进程就是Master,其他进程就是Worker。

如果用多线程实现

- Linux定时Job:crontab -e 与 /etc/crontab 的区别

Josh_Persistence

linuxcrontab

一、linux中的crotab中的指定的时间只有5个部分:* * * * *

分别表示:分钟,小时,日,月,星期,具体说来:

第一段 代表分钟 0—59

第二段 代表小时 0—23

第三段 代表日期 1—31

第四段 代表月份 1—12

第五段 代表星期几,0代表星期日 0—6

如:

*/1 * * * * 每分钟执行一次。

*

- KMP算法详解

hm4123660

数据结构C++算法字符串KMP

字符串模式匹配我们相信大家都有遇过,然而我们也习惯用简单匹配法(即Brute-Force算法),其基本思路就是一个个逐一对比下去,这也是我们大家熟知的方法,然而这种算法的效率并不高,但利于理解。

假设主串s="ababcabcacbab",模式串为t="

- 枚举类型的单例模式

zhb8015

单例模式

E.编写一个包含单个元素的枚举类型[极推荐]。代码如下:

public enum MaYun {himself; //定义一个枚举的元素,就代表MaYun的一个实例private String anotherField;MaYun() {//MaYun诞生要做的事情//这个方法也可以去掉。将构造时候需要做的事情放在instance赋值的时候:/** himself = MaYun() {*

- Kafka+Storm+HDFS

ssydxa219

storm

cd /myhome/usr/stormbin/storm nimbus &bin/storm supervisor &bin/storm ui &Kafka+Storm+HDFS整合实践kafka_2.9.2-0.8.1.1.tgzapache-storm-0.9.2-incubating.tar.gzKafka安装配置我们使用3台机器搭建Kafk

- Java获取本地服务器的IP

中华好儿孙

javaWeb获取服务器ip地址

System.out.println("getRequestURL:"+request.getRequestURL());

System.out.println("getLocalAddr:"+request.getLocalAddr());

System.out.println("getLocalPort:&quo