CDH 6.2.0 / 6.3.0 Installation in Practice, with Links to the Official Documentation

Download CDH 6.2.0 | Detailed CDH 6.2.x Installation Guide | Cloudera Manager 6.2.0 | CDH 6.2.x Download

Download CDH 6.3.0 | Detailed CDH 6.3.x Installation Guide | Cloudera Manager 6.3.0 | CDH 6.3.x Download

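Contents

- 1 Environment preparation
  - 1.1 Cleaning up or reinstalling an existing environment
  - 1.2 Installing the Apache HTTP service
    - Step 1: Check the Apache HTTP service status
    - Step 2: Install the Apache HTTP service
    - Step 3: Edit the Apache HTTP configuration
    - Step 4: Create the resource path
  - 1.3 Host configuration
  - 1.4 NTP
  - 1.5 MySQL
  - 1.6 Remaining prerequisites
  - 1.7 Other references
- 2 Downloading resources
  - 2.1 Minimal download
    - 2.1.1 Download the parcel
    - 2.1.2 Download the required rpm packages
    - 2.1.3 Get the other cloudera-manager resources
      - 2.1.3.1 Get cloudera-manager.repo
      - 2.1.3.2 Get allkeys.asc
      - 2.1.3.3 Initialize repodata
    - 2.1.4 Download the database driver
  - *2.2 Full download
    - 2.2.1 Download the parcel files
    - 2.2.2 Download Cloudera Manager
    - 2.2.3 Download the database driver
  - 2.3 Set up the cloudera-manager yum information on the installation nodes
    - 2.3.1 Download
    - 2.3.2 Edit
    - 2.3.3 Update yum
  - 2.4 Set up the Cloudera Manager driver
- 3 Installation
  - 3.1 Install Cloudera Manager
  - 3.2 Set up the Cloudera Manager database
    - 3.2.1 Create the databases for the Cloudera software
    - 3.2.2 Initialize the database
  - 3.3 Install CDH and other software
    - 3.3.1 Start Cloudera Manager Server
    - 3.3.2 Go to the web browser
    - Cluster installation
    - Set up the cluster using the wizard
- 4 Other issues
  - 4.1 Error starting NodeManager
  - 4.2 Could not open file in log_dir /var/log/catalogd: Permission denied
  - 4.3 Cannot connect to port 2049
  - 4.4 Kafka cannot create a Topic
  - 4.5 Driver not found when installing or starting the Hive component
  - 4.6 Hive component fails to get VERSION at startup
  - 4.7 Impala time zone setting
  - 4.8 Cannot log in as the hdfs user
  - 4.9 NTP problems
  - 4.10 Other exceptions when installing components
- A screenshot of the CDH web page after installation
- Closing remarks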

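Common JDKs include Oracle JDK and OpenJDK. Widely used OpenJDK builds include the one packaged in the Linux yum repositories, Zulu JDK, and GraalVM CE. For a CDH environment it is still advisable to start with oracle-j2sdk1.8-1.8.0+update181-1.x86_64.rpm from the official download list. If your company requires OpenJDK, first install the Oracle JDK from the download package, finish installing CDH and configuring the component services you need, and only then switch to the OpenJDK build your company requires. I strongly recommend doing it in this order.

To upgrade the JDK under CDH, see my other blog post: CDH 5.16: Upgrading the JDK to OpenJDK 1.8

For the versions of the individual components in the package, see CDH 6.2.0 Packaging, or browse the mirror repository: noarch | x86_64. Note: starting with CDH 6.0, Hadoop was upgraded to version 3.0.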

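1. Environment preparation

CDH's environment requirements are listed here: Cloudera Enterprise 6 Requirements and Supported Versions

1.1 Cleaning up or reinstalling an existing environment

If the old environment already has CDH, HDP, or something similar installed, it has to be removed first. Cleanup is somewhat tedious, so delete with care. Overall, it can be done quickly as follows:

- 1 Switch to the root user.

- 2 Uninstall the services that were installed via rpm:

rpm -qa

# or list only specific services

rpm -qa 'cloudera-manager-*'

# remove a service

rpm -e <name found by the command above> --nodeps

# clear the yum cache

sudo yum clean all

- 3 Check the processes with ps aux > ps.txt. Use the first column (USER) and the last column (COMMAND) to decide whether a process belongs to what you are cleaning up; if it does, kill it by the PID in the second column: kill -9 <pid>

- 4 List the system users:

cat /etc/passwd

- 5 Delete leftover users:

userdel <username>

- 6 Search for files related to that user and delete them:

find / -name '<username>*'

# delete the files that were found

rm -rf <file>

- 7 A file may be in use when you try to delete it. Use the lsof command to find the process holding it, stop that process, and then delete the file.

- 8 Even when no process appears to hold the file, it may be mounted. Unmount it first and then delete it:

umount cm-5.16.1/run/cloudera-scm-agent/process

rm -rf cm-5.16.1/

If the files still cannot be deleted after unmounting, run the umount command a few more times and try deleting again.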

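1.2 Installing the Apache HTTP service

Some servers have strictly limited Internet access. In that case you can set up an HTTP service and upload the downloaded resources to it, which makes the later installation easier.

Step 1: Check the Apache HTTP service status

If the status can be queried, you only need to edit the configuration file (see Step 3) and restart the service. If the status query fails, install the Apache HTTP service first (continue with Step 2).

sudo systemctl status httpd

Step 2: Install the Apache HTTP service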

yum -y install httpd

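Step 3: Edit the Apache HTTP configuration

Set the following, then save and exit. The configured document root is /var/www/html. The other settings can be left at their defaults or adjusted as needed.

vim /etc/httpd/conf/httpd.conf

# around line 119

DocumentRoot "/var/www/html"

# around line 131: index-directory style settings, placed inside the enclosing tag

# http://httpd.apache.org/docs/2.4/en/mod/mod_autoindex.html#indexoptions

# file name column up to 100 characters wide, UTF-8 charset, fancy directory indexing, folders listed first

IndexOptions NameWidth=100 Charset=UTF-8 FancyIndexing FoldersFirst

After editing, remember to restart the service.

Step 4: Create the resource path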

sudo mkdir -p /var/www/html/cloudera-repos

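1.3 Host configuration

Add the IP address and domain name of every cluster host to /etc/hosts on each machine.

Note: the hostname must be an FQDN (fully qualified domain name), for example myhost-1.example.com. Otherwise a validation performed when the Agents are started from the web page later will fail.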

# cdh1

sudo hostnamectl set-hostname cdh1.example.com

# cdh2

sudo hostnamectl set-hostname cdh2.example.com

# cdh3

sudo hostnamectl set-hostname cdh3.example.com

# configure /etc/hosts

192.168.33.3 cdh1.example.com cdh1

192.168.33.6 cdh2.example.com cdh2

192.168.33.9 cdh3.example.com cdh3

# configure /etc/sysconfig/network

# cdh1

HOSTNAME=cdh1.example.com

# cdh2

HOSTNAME=cdh2.example.com

# cdh3

HOSTNAME=cdh3.example.com
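Because the Agent validation depends on the hostname being an FQDN, it can help to check the names before starting the wizard. The following is only a minimal sketch: the function name `is_fqdn` is hypothetical, and the pattern is a simplified approximation of hostname rules (at least one dot, labels made of letters, digits, and inner hyphens), not the exact check Cloudera Manager performs.

```shell
# is_fqdn NAME: succeed only if NAME looks like a fully qualified domain name.
# Assumption: "FQDN" here means one or more dot-terminated labels plus a final
# label that starts with a letter and is at least two characters long.
is_fqdn() {
    printf '%s\n' "$1" |
        grep -Eq '^([A-Za-z0-9]([A-Za-z0-9-]*[A-Za-z0-9])?\.)+[A-Za-z][A-Za-z0-9-]*[A-Za-z0-9]$'
}
```

On each node it could be used as `is_fqdn "$(hostname -f)" || echo "hostname is not an FQDN"`.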

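1.4 NTP

NTP is a very important service in a cluster: it keeps the clocks of all nodes in step. If the cluster's internal network already has a time synchronization service, each node only needs an NTP client configuration that syncs with it. If there is none, we have to set up an NTP server ourselves.

The plan is as follows. When an external time service is reachable, sync with it directly, for example with the Asia NTP servers. When it is not reachable, configure cdh1.example.com as the NTP server and have the other cluster nodes synchronize with it.

| Host | Role |
|---|---|
| Asia NTP time server address | |
| cdh1.example.com | ntpd server, using its local clock as the reference |
| cdh2.example.com | ntpd client, synchronizes with the ntpd server |
| cdh3.example.com | ntpd client, synchronizes with the ntpd server |

step1 ntpd service

# check the NTP service; install it first if it is missing

systemctl status ntpd.service

step2 Sync the hardware clock together with the system time

Very important: keep the hardware clock in sync with the system time. Edit the configuration file with vim /etc/sysconfig/ntpd and append SYNC_HWCLOCK=yes at the end.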

# Command line options for ntpd

#OPTIONS="-x -u ntp:ntp -p /var/run/ntpd.pid"

OPTIONS="-g"

SYNC_HWCLOCK=yes

step3 Add the NTP server list

Edit the file with vim /etc/ntp/step-tickers

# List of NTP servers used by the ntpdate service.

#0.centos.pool.ntp.org

cdh1.example.com

step4 ntp.conf on the NTP server

Edit the NTP configuration file with vim /etc/ntp.conf

driftfile /var/lib/ntp/drift

logfile /var/log/ntp.log

pidfile /var/run/ntpd.pid

leapfile /etc/ntp.leapseconds

includefile /etc/ntp/crypto/pw

keys /etc/ntp/keys

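# Allow clients from any IP to sync time, but not to modify the NTP server's parameters; default is similar to 0.0.0.0

restrict default kod nomodify notrap nopeer noquery

restrict -6 default kod nomodify notrap nopeer noquery

#restrict 10.135.3.58 nomodify notrap nopeer noquery

# Allow all access through the local loopback interface

restrict 127.0.0.1

restrict -6 ::1

# Allow other machines on the internal network to sync time (gateway and subnet mask). Some clusters have unusual gateways; check with the commands below

# To look up the gateway: /etc/sysconfig/network-scripts/ifcfg-<interface name>; route -n; ip route show

restrict 192.168.33.2 mask 255.255.255.0 nomodify notrap

# Allow the upstream time server to adjust this machine's time

#server asia.pool.ntp.org minpoll 4 maxpoll 4 prefer

# When the external time server is unavailable, serve time from the local clock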

server 127.127.1.0 # local clock

fudge 127.127.1.0 stratum 10

step5 ntp.conf on the NTP clients

driftfile /var/lib/ntp/drift

logfile /var/log/ntp.log

pidfile /var/run/ntpd.pid

leapfile /etc/ntp.leapseconds

includefile /etc/ntp/crypto/pw

keys /etc/ntp/keys

restrict default kod nomodify notrap nopeer noquery

restrict -6 default kod nomodify notrap nopeer noquery

restrict 127.0.0.1

restrict -6 ::1

server 192.168.33.3 iburst

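step6 Restart the NTP service and synchronize

# restart the service

systemctl restart ntpd.service

# start at boot

chkconfig ntpd on

ntpq -p

#ntpd -q -g

#ss -tunlp | grep -w :123

# trigger a manual sync

#ntpdate -uv cdh1.example.com

ntpdate -u cdh1.example.com

# Check the sync status. It takes a while before the status changes to synchronised

ntpstat

timedatectl

ntptime

step7 Check the NTP service status

If the output looks like the following, synchronization is healthy (the status shows PLL,NANO):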

[root@cdh2 ~]# ntptime

ntp_gettime() returns code 0 (OK)

time e0b2b842.b180f51c Fri, Apr 19 2019 11:09:20.333, (.693374110),

maximum error 27426 us, estimated error 0 us, TAI offset 0

ntp_adjtime() returns code 0 (OK)

modes 0x0 (),

offset 0.000 us, frequency 3.932 ppm, interval 1 s,

maximum error 27426 us, estimated error 0 us,

status 0x2001 (PLL,NANO),

time constant 6, precision 0.001 us, tolerance 500 ppm,

Alternatively, check with the timedatectl command (NTP synchronized: yes means synchronization succeeded):

[root@cdh2 ~]# timedatectl

Local time: Fri 2019-04-19 11:09:20 CST

Universal time: Fri 2019-04-19 11:09:20 UTC

RTC time: Fri 2019-04-19 11:09:20

Time zone: Asia/Shanghai (CST, +0800)

NTP enabled: no

NTP synchronized: yes

RTC in local TZ: no

DST active: n/a
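When several nodes need checking, the timedatectl output shown above can be tested in a script instead of by eye. This is a small sketch under one assumption: the label is exactly `NTP synchronized:` as in the sample output; other systemd versions may word this line differently, in which case the pattern needs adjusting. The function name `ntp_synced` is hypothetical.

```shell
# ntp_synced: read timedatectl-style text on stdin and succeed only if it
# contains a line "NTP synchronized: yes" (the label used in the sample output).
ntp_synced() {
    grep -Eq '^[[:space:]]*NTP synchronized:[[:space:]]*yes[[:space:]]*$'
}
```

Typical use would be `timedatectl | ntp_synced && echo "clock is synchronized"`, run on each node.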

1.5 MySQL

Download MySQL

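step1 Configure environment variables

# add MySQL to PATH

export PATH=$PATH:/usr/local/mysql/bin

step2 Create the user and group

#① create a group named mysql

groupadd mysql

#② create the mysql user and put it in the mysql group

useradd -r -g mysql mysql

#③ optionally set a password for the mysql user (run the command and enter the password when prompted)

passwd mysql

#④ change the owner and group of /usr/local/mysql

chown -R mysql:mysql /usr/local/mysql/

step3 Set up the MySQL configuration file

Edit /etc/my.cnf (vim /etc/my.cnf) and set it as follows: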

[mysqld]

basedir = /usr/local/mysql

datadir = /usr/local/mysql/data

port = 3306

socket=/var/lib/mysql/mysql.sock

character-set-server=utf8

transaction-isolation = READ-COMMITTED

# Disabling symbolic-links is recommended to prevent assorted security risks;

# to do so, uncomment this line:

symbolic-links = 0

server_id=1

max_connections = 550

log_bin=/var/lib/mysql/mysql_binary_log

binlog_format = mixed

read_buffer_size = 2M

read_rnd_buffer_size = 16M

sort_buffer_size = 8M

join_buffer_size = 8M

# InnoDB settings

innodb_file_per_table = 1

innodb_flush_log_at_trx_commit = 2

innodb_log_buffer_size = 64M

innodb_buffer_pool_size = 4G

innodb_thread_concurrency = 8

innodb_flush_method = O_DIRECT

innodb_log_file_size = 512M

[client]

default-character-set=utf8

socket=/var/lib/mysql/mysql.sock

[mysql]

default-character-set=utf8

socket=/var/lib/mysql/mysql.sock

[mysqld_safe]

log-error=/var/log/mysqld.log

pid-file=/var/run/mysqld/mysqld.pid

sql_mode=STRICT_ALL_TABLES

step4 Unpack and set up

# unpack into /usr/local/

tar -zxf mysql-5.7.27-el7-x86_64.tar.gz -C /usr/local/

# rename

mv /usr/local/mysql-5.7.27-el7-x86_64/ /usr/local/mysql

# register the init script so MySQL starts at boot (similar in effect to mysqld -install)

cp /usr/local/mysql/support-files/mysql.server /etc/init.d/mysql

vim /etc/init.d/mysql

# add the following settings

basedir=/usr/local/mysql

datadir=/usr/local/mysql/data

# create the directory that holds the socket file

mkdir -p /var/lib/mysql

chown mysql:mysql /var/lib/mysql

# add the mysql service

chkconfig --add mysql

# enable the mysql service at boot

chkconfig mysql on

step5 Start the installation

# Initialize MySQL. Note down the temporary password printed in the output, for example: ?w=HuL-yV05q

/usr/local/mysql/bin/mysqld --initialize --user=mysql --basedir=/usr/local/mysql --datadir=/usr/local/mysql/data

# generate the SSL/RSA key files for the database

/usr/local/mysql/bin/mysql_ssl_rsa_setup --datadir=/usr/local/mysql/data

# Start the MySQL service. After a while, once the output stops scrolling, press Ctrl + C to return to the prompt

/usr/local/mysql/bin/mysqld_safe --user=mysql &

# restart the MySQL service

/etc/init.d/mysql restart

# check the mysql processes

ps -ef|grep mysql

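step6 Log in to MySQL and finish the remaining setup

# first login to the MySQL database; enter the temporary password from above

/usr/local/mysql/bin/mysql -uroot -p

Enter the temporary password generated in the previous step to reach the MySQL prompt. For the new passwords you can use a random password generator website to produce strong random passwords; production environments usually have strength requirements.

-- the password must be changed first

mysql> set password=password('V&0XkVpHZwkCEdY$');

-- add a user for remote access in mysql

mysql> use mysql;

mysql> select host,user from user;

-- add a remote access user scm and set its password to *YPGT$%GqA

mysql> grant all privileges on *.* to 'scm'@'%' identified by '*YPGT$%GqA' with grant option;

-- reload the privileges

mysql> flush privileges;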

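1.6 Remaining prerequisites

You are probably familiar with these steps already, so complete them on your own first. The rest of this guide installs CDH 6.2.0 on CentOS 7.4 as the example.

- Configure the network names

- Disable the firewall

- Set the SELinux mode

- Install a metadata database such as PostgreSQL, MariaDB, MySQL, or Oracle; MySQL is used here.

Note: for the MySQL configuration file /etc/my.cnf, follow Configuring and Starting the MySQL Server.

- JDK: jdk8-downloads

- Scala

1.7 Other references

For more detail, read the official CDH documentation:

- Ports used by Cloudera Manager and Cloudera Navigator

- CDH cluster hosts and role assignments. Cloudera recommends role assignments for clusters of different sizes.

- Ports used by CDH components

- Service dependencies in Cloudera Manager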

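2. Downloading resources

If the servers cannot download directly, choose either approach 2.1 or 2.2, download the following resources locally, and upload them to the directory /var/www/html/cloudera-repos on the Apache HTTP server.

Two approaches are shared here: a minimal one and a complete one. Either can serve as the repository on the server, so pick just one of 2.1 and 2.2. The first is recommended: it downloads only the packages that are actually needed, so there is less to download, the download is faster, and the installation is quicker. To upgrade a parcel or a CDH component later, download the corresponding packages and configure the repository in the web UI.

2.1 Minimal download

Upload the downloaded resources to the Apache HTTP node you set up; create the folders manually if they do not exist. Make sure the file paths have sufficient permissions:

# mind the file permissions

chmod 555 -R /var/www/html/cloudera-repos

2.1.1 Download the parcel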

wget -b https://archive.cloudera.com/cdh6/6.2.0/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel

wget https://archive.cloudera.com/cdh6/6.2.0/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel.sha1

wget https://archive.cloudera.com/cdh6/6.2.0/parcels/manifest.json

Upload the downloaded files to /var/www/html/cloudera-repos/cdh6/6.2.0/parcels

For CDH 6.3.0, the parcels are available at: https://archive.cloudera.com/cdh6/6.3.0/parcels/
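The parcel is large, so it is worth confirming that the download is intact before uploading it. The sketch below compares the file's SHA-1 with the accompanying `.sha1` file. It assumes the `.sha1` file starts with the hex digest (only the first whitespace-separated field is read) and that GNU `sha1sum` is available; the function name `verify_parcel` is hypothetical.

```shell
# verify_parcel PARCEL: succeed if sha1sum of PARCEL equals the first field
# of PARCEL.sha1. Fails if the .sha1 file is missing or empty.
verify_parcel() {
    vp_expected=$(awk 'NR==1 {print $1}' "$1.sha1" 2>/dev/null)
    vp_actual=$(sha1sum "$1" | awk '{print $1}')
    [ -n "$vp_expected" ] && [ "$vp_expected" = "$vp_actual" ]
}
```

For example: `verify_parcel CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel && echo "parcel OK"`.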

2.1.2 Download the required rpm packages

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/cloudera-manager-agent-6.2.0-968826.el7.x86_64.rpm

wget -b https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/cloudera-manager-daemons-6.2.0-968826.el7.x86_64.rpm

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/cloudera-manager-server-6.2.0-968826.el7.x86_64.rpm

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/cloudera-manager-server-db-2-6.2.0-968826.el7.x86_64.rpm

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/enterprise-debuginfo-6.2.0-968826.el7.x86_64.rpm

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPMS/x86_64/oracle-j2sdk1.8-1.8.0+update181-1.x86_64.rpm

Upload the downloaded packages to /var/www/html/cloudera-repos/cm6/6.2.0/redhat7/yum/RPMS/x86_64

For other versions, browse the Cloudera Manager pages; for CDH 6.3.0, for example: https://archive.cloudera.com/cm6/6.3.0/redhat7/yum/RPMS/x86_64/

2.1.3 Get the other cloudera-manager resources

2.1.3.1 Get cloudera-manager.repo

Upload the files downloaded below to /var/www/html/cloudera-repos/cm6/6.2.0/redhat7/yum

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/RPM-GPG-KEY-cloudera

wget https://archive.cloudera.com/cm6/6.2.0/redhat7/yum/cloudera-manager.repo

2.1.3.2 Get allkeys.asc

Upload the file downloaded below to /var/www/html/cloudera-repos/cm6/6.2.0

wget https://archive.cloudera.com/cm6/6.2.0/allkeys.asc

mv allkeys.asc /var/www/html/cloudera-repos/cm6/6.2.0

2.1.3.3 Initialize repodata

On the Apache HTTP server, go to the directory /var/www/html/cloudera-repos/cm6/6.2.0/redhat7/yum/ and run:

#yum repolist

# install createrepo with yum if it is not installed yet

yum -y install createrepo

cd /var/www/html/cloudera-repos/cm6/6.2.0/redhat7/yum/

# create repodata

createrepo .

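2.1.4 Download the database driver

MySQL is used as the metadata database here, so the MySQL JDBC driver is needed. If you chose a different database, read Install and Configure Databases carefully.

Unpack the downloaded driver archive to obtain mysql-connector-java-5.1.46-bin.jar, and be sure to rename it to mysql-connector-java.jar.

wget https://dev.mysql.com/get/Downloads/Connector-J/mysql-connector-java-5.1.46.tar.gz

# unpack

tar zxvf mysql-connector-java-5.1.46.tar.gz

# rename mysql-connector-java-5.1.46-bin.jar (inside the unpacked directory) to mysql-connector-java.jar and place it under /usr/share/java/

mv mysql-connector-java-5.1.46/mysql-connector-java-5.1.46-bin.jar /usr/share/java/mysql-connector-java.jar

# also copy it to the other nodes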

scp /usr/share/java/mysql-connector-java.jar root@cdh2:/usr/share/java/

scp /usr/share/java/mysql-connector-java.jar root@cdh3:/usr/share/java/

*2.2 Full download

2.2.1 Download the parcel files

cd /var/www/html/cloudera-repos

sudo wget --recursive --no-parent --no-host-directories https://archive.cloudera.com/cdh6/6.2.0/parcels/ -P /var/www/html/cloudera-repos

sudo wget --recursive --no-parent --no-host-directories https://archive.cloudera.com/gplextras6/6.2.0/parcels/ -P /var/www/html/cloudera-repos

sudo chmod -R ugo+rX /var/www/html/cloudera-repos/cdh6

sudo chmod -R ugo+rX /var/www/html/cloudera-repos/gplextras6

2.2.2 Download Cloudera Manager

sudo wget --recursive --no-parent --no-host-directories https://archive.cloudera.com/cm6/6.2.0/redhat7/ -P /var/www/html/cloudera-repos

sudo wget https://archive.cloudera.com/cm6/6.2.0/allkeys.asc -P /var/www/html/cloudera-repos/cm6/6.2.0/

sudo chmod -R ugo+rX /var/www/html/cloudera-repos/cm6

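2.2.3 Download the database driver

See 2.1.4 Download the database driver

2.3 Set up the cloudera-manager yum information on the installation nodes

At this point the required resources are assumed to have been downloaded and uploaded to an HTTP service that the servers can reach.

2.3.1 Download

In the links below, replace ${cloudera-repos.http.host} with the IP of your own Apache HTTP service.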

wget http://${cloudera-repos.http.host}/cloudera-repos/cm6/6.2.0/redhat7/yum/cloudera-manager.repo -P /etc/yum.repos.d/

# import the repository signing GPG key:

sudo rpm --import http://${cloudera-repos.http.host}/cloudera-repos/cm6/6.2.0/redhat7/yum/RPM-GPG-KEY-cloudera

2.3.2 Edit

Edit cloudera-manager.repo: run vim /etc/yum.repos.d/cloudera-manager.repo and change it to the following (note: the original https must be changed to http)

[cloudera-manager]

name=Cloudera Manager 6.2.0

baseurl=http://${cloudera-repos.http.host}/cloudera-repos/cm6/6.2.0/redhat7/yum/

gpgkey=http://${cloudera-repos.http.host}/cloudera-repos/cm6/6.2.0/redhat7/yum/RPM-GPG-KEY-cloudera

gpgcheck=1

enabled=1

autorefresh=0

type=rpm-md

2.3.3 Update yum

# clear the yum cache

sudo yum clean all

# update yum

sudo yum update

3. Installation

With the preparation done, we now move on to the actual installation.

3.1 Install Cloudera Manager

- On the Server node, run:

sudo yum install -y cloudera-manager-daemons cloudera-manager-agent cloudera-manager-server

- On the Agent nodes, run:

sudo yum install -y cloudera-manager-agent cloudera-manager-daemons

- After installation, the following files or folders are created automatically on the server node:

/etc/cloudera-scm-agent/config.ini

/etc/cloudera-scm-server/

/opt/cloudera

……

- To speed up the later installation, put the downloaded CDH parcel here (Server node only):

cd /opt/cloudera/parcel-repo/

wget http://${cloudera-repos.http.host}/cloudera-repos/cdh6/6.2.0/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel

wget http://${cloudera-repos.http.host}/cloudera-repos/cdh6/6.2.0/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel.sha1

wget http://${cloudera-repos.http.host}/cloudera-repos/cdh6/6.2.0/parcels/manifest.json

# Find the hash for the matching version in manifest.json (around line 755) and copy it into the *.sha file

# The content of CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel.sha1 is normally identical to that hash, so renaming the file is enough

echo "e9c8328d8c370517c958111a3db1a085ebace237" > CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel.sha

#echo "d6e1483e47e3f2b1717db8357409865875dc307e" > CDH-6.3.0-1.cdh6.3.0.p0.1279813-el7.parcel.sha

# change the owner and group

chown cloudera-scm:cloudera-scm /opt/cloudera/parcel-repo/*

- Edit the agent configuration file so that the Cloudera Manager Agent points to the Cloudera Manager Server.

This mainly means configuring the config.ini file on the Agent nodes.

vim /etc/cloudera-scm-agent/config.ini

# set the following items

# Hostname of the CM server. (name of the host running Cloudera Manager Server)

server_host=cdh1.example.com

# Port that the CM server is listening on. (port on the host running Cloudera Manager Server)

server_port=7182

# 1 enables TLS encryption for the agent; do not enable TLS if it was not set up earlier

#use_tls=1
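For the manifest.json step in the list above, the hash can be pulled out with a script instead of being located by line number. This is a rough sketch, not a JSON parser: it assumes manifest.json is pretty-printed with one key per line and that each parcel's "hash" line follows its "parcelName" line, as the line-number hint in the text suggests. The function name `manifest_hash` is hypothetical.

```shell
# manifest_hash MANIFEST PARCEL_NAME: print the "hash" value that follows the
# "parcelName" line naming PARCEL_NAME.
# Assumption: one JSON key per line, "hash" after "parcelName" in each object.
manifest_hash() {
    awk -v name="$2" '
        index($0, "\"parcelName\"") { found = (index($0, "\"" name "\"") > 0) }
        found && index($0, "\"hash\"") {
            split($0, parts, "\"")   # parts[2] is the key, parts[4] the value
            print parts[4]
            exit
        }
    ' "$1"
}
```

For example, `manifest_hash manifest.json CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel > CDH-6.2.0-1.cdh6.2.0.p0.967373-el7.parcel.sha` would write the .sha file without copying the value by hand.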

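3.2 Set up the Cloudera Manager database

Cloudera Manager Server ships with a script that prepares the database. It is used mainly to initialize the database-related configuration; it does not create the tables in the metadata database.

3.2.1 Create the databases for the Cloudera software:

This step creates the databases the Cloudera software needs. Without them, the script in the next step fails with the following error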

[ main] DbCommandExecutor INFO Able to connect to db server on host 'localhost' but not able to find or connect to database 'scm'.

[ main] DbCommandExecutor ERROR Error when connecting to database.

com.mysql.jdbc.exceptions.jdbc4.MySQLSyntaxErrorException: Unknown database 'scm'

……

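The databases for the Cloudera software are listed below:

If you are only installing Cloudera Manager Server for now, only the scm database needs to be created, as shown above. If you plan to install other services, create their databases at the same time.

# run the following after logging in to MySQL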

CREATE DATABASE scm DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

# create the other databases as well

CREATE DATABASE amon DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE rman DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE hue DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

# metadata databases for Hive, Impala, and so on

CREATE DATABASE metastore DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE sentry DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE nav DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE navms DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE DATABASE oozie DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;
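Since every statement above differs only in the database name, the list can also be generated. The sketch below prints the statements for whichever names are passed in. Note one deliberate difference from the statements above: `IF NOT EXISTS` is added so the output can be re-run safely. The function name `print_create_db_sql` is hypothetical.

```shell
# print_create_db_sql DB...: print one CREATE DATABASE statement per name,
# using the same character set and collation as the statements above.
# IF NOT EXISTS is an addition so that re-running the output is harmless.
print_create_db_sql() {
    for pcd_db in "$@"; do
        printf 'CREATE DATABASE IF NOT EXISTS %s DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;\n' "$pcd_db"
    done
}
```

The output could be piped into the client, for example `print_create_db_sql scm amon rman hue metastore sentry nav navms oozie | /usr/local/mysql/bin/mysql -uroot -p`.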

3.2.2 Initialize the database

Initialization relies on the scm_prepare_database.sh script, whose syntax is as follows

# run scm_prepare_database.sh --help to list the available options

sudo /opt/cloudera/cm/schema/scm_prepare_database.sh [options] <databaseType> <databaseName> <databaseUser> <password>

Initialize the scm database configuration. This step updates /etc/cloudera-scm-server/db.properties (if the driver cannot be found, check that /usr/share/java contains mysql-connector-java.jar).

[root@cdh1 ~]# sudo /opt/cloudera/cm/schema/scm_prepare_database.sh -h localhost mysql scm scm scm

JAVA_HOME=/usr/java/jdk1.8.0_181-cloudera

Verifying that we can write to /etc/cloudera-scm-server

Creating SCM configuration file in /etc/cloudera-scm-server

Executing: /usr/local/zulu8/bin/java -cp /usr/share/java/mysql-connector-java.jar:/usr/share/java/oracle-connector-java.jar:/usr/share/java/postgresql-connector-java.jar:/opt/cloudera/cm/schema/../lib/* com.cloudera.enterprise.dbutil.DbCommandExecutor /etc/cloudera-scm-server/db.properties com.cloudera.cmf.db.

[ main] DbCommandExecutor INFO Successfully connected to database.

All done, your SCM database is configured correctly!

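Parameters:

- options: optional flags. If the database is not on the local host, use -h or --host to specify the MySQL host; the default is localhost

- databaseType: mysql here; other database types such as oracle are also possible

- databaseName: the database to use, scm here

- databaseUser: the MySQL user name, scm here

- password: the password of that MySQL user, scm here

If you are using a JDK that you configured yourself, this step may fail with the following error: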

[root@cdh1 java]# sudo /opt/cloudera/cm/schema/scm_prepare_database.sh -h cdh1 mysql scm scm scm

JAVA_HOME=/usr/lib/jvm/java-1.8.0-openjdk-1.8.0.212.b04-0.el7_6.x86_64

Verifying that we can write to /etc/cloudera-scm-server

Creating SCM configuration file in /etc/cloudera-scm-server

Executing: /usr/lib/jvm/java-1.8.0-openjdk-1.8.0.212.b04-0.el7_6.x86_64/bin/java -cp /usr/share/java/mysql-connector-java.jar:/usr/share/java/oracle-connector-java.jar:/usr/share/java/postgresql-connector-java.jar:/opt/cloudera/cm/schema/../lib/* com.cloudera.enterprise.dbutil.DbCommandExecutor /etc/cloudera-scm-server/db.properties com.cloudera.cmf.db.

[ main] DbCommandExecutor ERROR Error when connecting to database.

java.sql.SQLException: java.lang.Error: java.io.FileNotFoundException: /usr/lib/jvm/java-1.8.0-openjdk-1.8.0.212.b04-0.el7_6.x86_64/jre/lib/tzdb.dat (No such file or directory)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:964)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:897)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:886)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:860)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:877)

at com.mysql.jdbc.SQLError.createSQLException(SQLError.java:873)

at com.mysql.jdbc.Util.handleNewInstance(Util.java:443)

at com.mysql.jdbc.ConnectionImpl.getInstance(ConnectionImpl.java:389)

at com.mysql.jdbc.NonRegisteringDriver.connect(NonRegisteringDriver.java:330)

at java.sql.DriverManager.getConnection(DriverManager.java:664)

at java.sql.DriverManager.getConnection(DriverManager.java:247)

at com.cloudera.enterprise.dbutil.DbCommandExecutor.testDbConnection(DbCommandExecutor.java:263)

at com.cloudera.enterprise.dbutil.DbCommandExecutor.main(DbCommandExecutor.java:139)

Caused by: java.lang.Error: java.io.FileNotFoundException: /usr/lib/jvm/java-1.8.0-openjdk-1.8.0.212.b04-0.el7_6.x86_64/jre/lib/tzdb.dat (No such file or directory)

at sun.util.calendar.ZoneInfoFile$1.run(ZoneInfoFile.java:261)

at java.security.AccessController.doPrivileged(Native Method)

at sun.util.calendar.ZoneInfoFile.<clinit>(ZoneInfoFile.java:251)

at sun.util.calendar.ZoneInfo.getTimeZone(ZoneInfo.java:589)

at java.util.TimeZone.getTimeZone(TimeZone.java:560)

at java.util.TimeZone.setDefaultZone(TimeZone.java:666)

at java.util.TimeZone.getDefaultRef(TimeZone.java:636)

at java.util.GregorianCalendar.<init>(GregorianCalendar.java:591)

at com.mysql.jdbc.ConnectionImpl.<init>(ConnectionImpl.java:706)

at com.mysql.jdbc.JDBC4Connection.<init>(JDBC4Connection.java:47)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at com.mysql.jdbc.Util.handleNewInstance(Util.java:425)

... 6 more

Caused by: java.io.FileNotFoundException: /usr/lib/jvm/java-1.8.0-openjdk-1.8.0.212.b04-0.el7_6.x86_64/jre/lib/tzdb.dat (No such file or directory)

at java.io.FileInputStream.open0(Native Method)

at java.io.FileInputStream.open(FileInputStream.java:195)

at java.io.FileInputStream.<init>(FileInputStream.java:138)

at sun.util.calendar.ZoneInfoFile$1.run(ZoneInfoFile.java:255)

... 20 more

[ main] DbCommandExecutor ERROR Exiting with exit code 4

--> Error 4, giving up (use --force if you wish to ignore the error)

Fix: open the script /opt/cloudera/cm/schema/scm_prepare_database.sh and, at line 108, add your own JAVA_HOME to the local JAVA8_HOME_CANDIDATES=() array:

local JAVA8_HOME_CANDIDATES=(

'/usr/java/jdk1.8.0_181-cloudera'

'/usr/java/jdk1.8'

'/usr/java/jre1.8'

'/usr/lib/jvm/j2sdk1.8-oracle'

'/usr/lib/jvm/j2sdk1.8-oracle/jre'

'/usr/lib/jvm/java-8-oracle'

)
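Before pointing the script at a different JDK, it may be worth confirming that the candidate JAVA_HOME actually contains the file the stack trace complains about. A minimal sketch: it checks the `jre/lib/tzdb.dat` path taken from the error message, and additionally `lib/tzdb.dat` as an assumption for the case where JAVA_HOME points directly at a JRE. The function name `has_tzdb` is hypothetical.

```shell
# has_tzdb JAVA_HOME: succeed if the time-zone database file exists.
# jre/lib/tzdb.dat is the path from the error above; lib/tzdb.dat is an
# assumed alternative for a JAVA_HOME that is itself a JRE directory.
has_tzdb() {
    [ -f "$1/jre/lib/tzdb.dat" ] || [ -f "$1/lib/tzdb.dat" ]
}
```

For example: `has_tzdb /usr/java/jdk1.8.0_181-cloudera || echo "tzdb.dat is missing from this JDK"`.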

3.3 Install CDH and other software

Only the server needs to be started on the Cloudera Manager Server node; the Agents are started automatically in a later step of the web wizard.

3.3.1 Start Cloudera Manager Server

sudo systemctl start cloudera-scm-server

Check the startup result

sudo systemctl status cloudera-scm-server

To watch the startup, run the following on the Cloudera Manager Server host:

sudo tail -f /var/log/cloudera-scm-server/cloudera-scm-server.log

# When you see this log entry, the Cloudera Manager Admin Console is ready:

# INFO WebServerImpl:com.cloudera.server.cmf.WebServerImpl: Started Jetty server.
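Instead of watching the log by hand, a script can poll it until the "Started Jetty server." entry appears. The following is a simple polling sketch; the function name `wait_for_line` and the timeout handling are illustrative choices, not part of Cloudera Manager.

```shell
# wait_for_line FILE TEXT TIMEOUT_SECONDS: check FILE once per second until a
# line contains the fixed string TEXT; fail once TIMEOUT_SECONDS have elapsed.
wait_for_line() {
    wfl_waited=0
    until grep -qF -- "$2" "$1" 2>/dev/null; do
        [ "$wfl_waited" -ge "$3" ] && return 1
        sleep 1
        wfl_waited=$((wfl_waited + 1))
    done
}
```

For example: `wait_for_line /var/log/cloudera-scm-server/cloudera-scm-server.log 'Started Jetty server.' 600 && echo "Admin Console is ready"`. Because the log may still contain the entry from an earlier run, this is most meaningful right after a fresh start.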

If the log shows problems, resolve them as the messages suggest. For example:

2019-06-13 16:33:19,148 ERROR WebServerImpl:com.cloudera.server.web.cmf.search.components.SearchRepositoryManager: No read permission to the server storage directory [/var/lib/cloudera-scm-server/search]

2019-06-13 16:33:19,148 ERROR WebServerImpl:com.cloudera.server.web.cmf.search.components.SearchRepositoryManager: No write permission to the server storage directory [/var/lib/cloudera-scm-server/search]

……

2019-06-13 16:33:19,637 ERROR WebServerImpl:org.springframework.web.servlet.DispatcherServlet: Context initialization failed

org.springframework.beans.factory.UnsatisfiedDependencyException: Error creating bean with name 'reportsController': Unsatisfied dependency expressed through field 'viewFactory'; nested exception is org.springframework.beans.factory.BeanCreationNotAllowedException: Error creating bean with name 'viewFactory': Singleton bean creation not allowed while singletons of this factory are in destruction (Do not request a bean from a BeanFactory in a destroy method implementation!)

……

Caused by: org.springframework.beans.factory.BeanCreationNotAllowedException: Error creating bean with name 'viewFactory': Singleton bean creation not allowed while singletons of this factory are in destruction (Do not request a bean from a BeanFactory in a destroy method implementation!)

……

================================================================================

Starting SCM Server. JVM Args: [-Dlog4j.configuration=file:/etc/cloudera-scm-server/log4j.properties, -Dfile.encoding=UTF-8, -Duser.timezone=Asia/Shanghai, -Dcmf.root.logger=INFO,LOGFILE, -Dcmf.log.dir=/var/log/cloudera-scm-server, -Dcmf.log.file=cloudera-scm-server.log, -Dcmf.jetty.threshhold=WARN, -Dcmf.schema.dir=/opt/cloudera/cm/schema, -Djava.awt.headless=true, -Djava.net.preferIPv4Stack=true, -Dpython.home=/opt/cloudera/cm/python, -XX:+UseConcMarkSweepGC, -XX:+UseParNewGC, -XX:+HeapDumpOnOutOfMemoryError, -Xmx2G, -XX:MaxPermSize=256m, -XX:+HeapDumpOnOutOfMemoryError, -XX:HeapDumpPath=/tmp, -XX:OnOutOfMemoryError=kill -9 %p], Args: [], Version: 6.2.0 (#968826 built by jenkins on 20190314-1704 git: 16bbe6211555460a860cf22d811680b35755ea81)

Server failed.

java.lang.NoClassDefFoundError: Could not initialize class sun.util.calendar.ZoneInfoFile

at sun.util.calendar.ZoneInfo.getTimeZone(ZoneInfo.java:589)

at java.util.TimeZone.getTimeZone(TimeZone.java:560)

at java.util.TimeZone.setDefaultZone(TimeZone.java:666)

at java.util.TimeZone.getDefaultRef(TimeZone.java:636)

at java.util.Date.normalize(Date.java:1197)

at java.util.Date.toString(Date.java:1030)

at java.lang.String.valueOf(String.java:2994)

at java.lang.StringBuilder.append(StringBuilder.java:131)

at org.springframework.context.support.AbstractApplicationContext.toString(AbstractApplicationContext.java:1367)

at java.lang.String.valueOf(String.java:2994)

at java.lang.StringBuilder.append(StringBuilder.java:131)

at org.springframework.context.support.AbstractApplicationContext.prepareRefresh(AbstractApplicationContext.java:583)

at org.springframework.context.support.AbstractApplicationContext.refresh(AbstractApplicationContext.java:512)

at org.springframework.context.access.ContextSingletonBeanFactoryLocator.initializeDefinition(ContextSingletonBeanFactoryLocator.java:143)

at org.springframework.beans.factory.access.SingletonBeanFactoryLocator.useBeanFactory(SingletonBeanFactoryLocator.java:383)

at com.cloudera.server.cmf.Main.findBeanFactory(Main.java:481)

at com.cloudera.server.cmf.Main.findBootstrapApplicationContext(Main.java:472)

at com.cloudera.server.cmf.Main.bootstrapSpringContext(Main.java:375)

at com.cloudera.server.cmf.Main.(Main.java:260)

at com.cloudera.server.cmf.Main.main(Main.java:233)

================================================================================

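Fix the file permission problem and set the time zone. If the problem persists, switch to Oracle JDK.

The time zone can be set in the file /opt/cloudera/cm/bin/cm-server by adding CMF_OPTS="$CMF_OPTS -Duser.timezone=Asia/Shanghai" at around line 40.

If you see the following error, delete /var/lib/cloudera-scm-agent/cm_guid.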

[15/Jun/2019 13:54:55 +0000] 24821 MainThread agent ERROR Error, CM server guid updated, expected 198b7045-53ce-458a-9c0a-052d0aba8a22, received ea04f769-95c8-471f-8860-3943bfc8ea7b

*(Optional, only if needed) To instantiate a new cloudera-scm-server, run the following and then restart:

uuidgen > /etc/cloudera-scm-server/uuid

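3.3.2 Go to the web browser

In a web browser, enter the http:// address of the Cloudera Manager Server host.

Log in to the Cloudera Manager Admin Console. The default credentials are: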

- Username: admin

- Password: admin

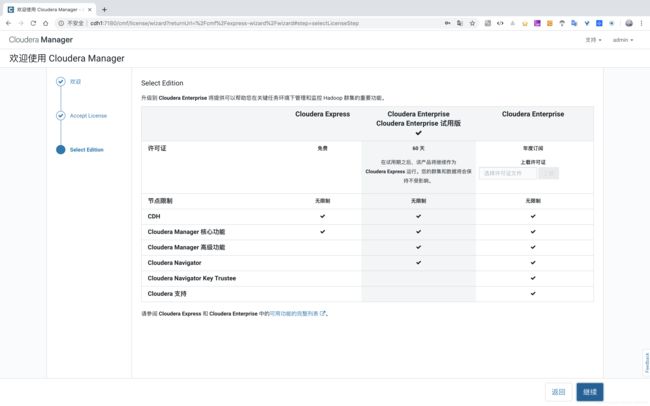

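After logging in, the following page appears. Follow the prompts to install: Welcome -> Accept License -> Select Edition.

This step selects the edition to install, and the main features of each edition are listed. The first column is Cloudera's free Express edition; the second is the Cloudera Enterprise trial (free for 60 days); the third is Cloudera Enterprise, with the most complete features and services, which requires a license and is paid. See the full list of features available in Cloudera Express and Cloudera Enterprise.

The first column, Express, is completely free, and its features and services are generally sufficient when requirements are not particularly special or complex. If they later prove insufficient and you want Cloudera Enterprise, there is no need to worry: in Cloudera Manager, click the Administration menu at the top of the page and click License in the drop-down list. The page then offers Try Cloudera Enterprise for 60 Days and Upgrade to Cloudera Enterprise. For more detailed upgrade instructions, see Upgrading from Cloudera Express to Cloudera Enterprise.

The second column lets you try all Cloudera Enterprise features free for 60 days; the trial can be used only once. For details on license expiry and trial licenses, see Managing Licenses.

The third column, Cloudera Enterprise, requires a license. To obtain a Cloudera Enterprise license, fill in this form or call 866-843-7207. For details on licenses, see Managing Licenses. For features and pricing, see the Features and Pricing page.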

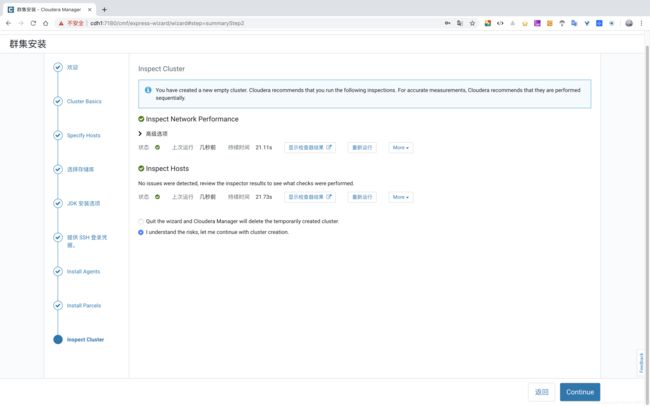

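Cluster installation

- Welcome

- Cluster Basics: give the cluster a name.

- Specify Hosts: enter the cluster host names, one per line, for example:

cdh1.example.com

cdh2.example.com

cdh3.example.com

Pay particular attention here: these addresses must conform to the FQDN (fully qualified domain name) rules, otherwise the validation during Agent installation will fail.

What if the root passwords differ between hosts? Besides asking the administrator to set them all to the same value, you can install as a single node first. When the web wizard reaches the cluster setup step where the component services are chosen, open another page, go to Cloudera Manager, choose Hosts -> All Hosts -> Add Hosts, and follow the prompts to add the other nodes to this cluster one by one, entering each machine's root password to complete the verification.

- Select Repository: you can set a custom repository (that is, the one set up earlier, http://${cloudera-repos.http.host}/cloudera-repos/cm6/6.2.0), and so on.

- JDK installation options: if a JDK is already installed in the environment, leave the box unchecked and continue.

- Provide SSH login credentials: enter the account for the Cloudera Manager hosts; use root and enter its password.

- Install Agents: this step starts the Agent service on the Agent nodes of the cluster.

- Install Parcels:

- Inspect Cluster: click to inspect Network and Hosts, then continue.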

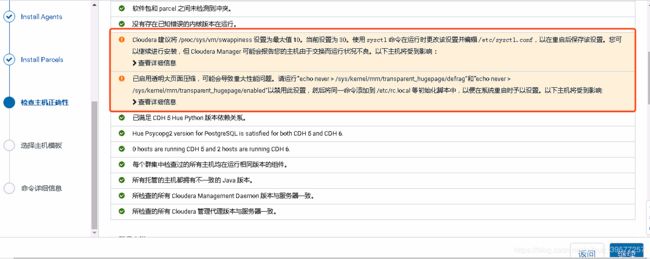

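If this step reports errors like the following (the text here is taken from the CDH 6.3.0 page):

⚠️ Handling warning 1

Cloudera recommends setting /proc/sys/vm/swappiness to a maximum of 10. The current setting is 30. Use the sysctl command to change this setting at run time, and edit /etc/sysctl.conf so that the setting is kept after a reboot.

You can continue with the installation, but Cloudera Manager may report that your hosts are unhealthy because they are swapping. The following hosts are affected:

Fix:

sysctl vm.swappiness=10

# The change above takes effect immediately but reverts to the old value after a reboot, so persist it as well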

echo 'vm.swappiness=10'>> /etc/sysctl.conf
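Appending with `>>` adds another line every time the command is repeated, and any older `vm.swappiness` line stays in the file. The sketch below is one way to make the change idempotent: it drops existing assignments of the key and appends a single new one. The function name `set_sysctl_conf` is hypothetical, and the key is used as a regular expression, so the dot in `vm.swappiness` matches any character, which is a simplification.

```shell
# set_sysctl_conf FILE KEY VALUE: leave FILE with exactly one "KEY=VALUE"
# line for KEY, keeping all other lines unchanged.
set_sysctl_conf() {
    ssc_tmp=$(mktemp) || return 1
    # copy every line except existing assignments of KEY (spaces allowed)
    grep -Ev "^[[:space:]]*$2[[:space:]]*=" "$1" > "$ssc_tmp" 2>/dev/null
    printf '%s=%s\n' "$2" "$3" >> "$ssc_tmp"
    # write back in place so the file keeps its owner and mode
    cat "$ssc_tmp" > "$1" && rm -f "$ssc_tmp"
}
```

As root, this could be called as `set_sysctl_conf /etc/sysctl.conf vm.swappiness 10`.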

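⚠️ Handling warning 2

Transparent Huge Page Compaction is enabled and can cause significant performance problems. Run "echo never > /sys/kernel/mm/transparent_hugepage/defrag" and "echo never > /sys/kernel/mm/transparent_hugepage/enabled" to disable this, and then add the same commands to an init script such as /etc/rc.local so that they are applied when the system reboots. The following hosts are affected:

Fix: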

echo never > /sys/kernel/mm/transparent_hugepage/defrag

echo never > /sys/kernel/mm/transparent_hugepage/enabled

# then add the commands to the init script

vi /etc/rc.local

# add the following

echo never > /sys/kernel/mm/transparent_hugepage/defrag

echo never > /sys/kernel/mm/transparent_hugepage/enabled
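To confirm the setting took effect, and that it survives a reboot, the active value can be read back: the kernel marks it with square brackets, for example `always madvise [never]`. A small sketch, with the hypothetical function name `thp_disabled`:

```shell
# thp_disabled FILE: succeed if the bracketed (currently active) value in a
# transparent_hugepage control file is "never".
thp_disabled() {
    grep -q '\[never\]' "$1"
}
```

For example: `thp_disabled /sys/kernel/mm/transparent_hugepage/enabled && thp_disabled /sys/kernel/mm/transparent_hugepage/defrag && echo "THP is off"`. If the value is back to its old state after a reboot, one thing to check is whether the rc.local script is executable on your system.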

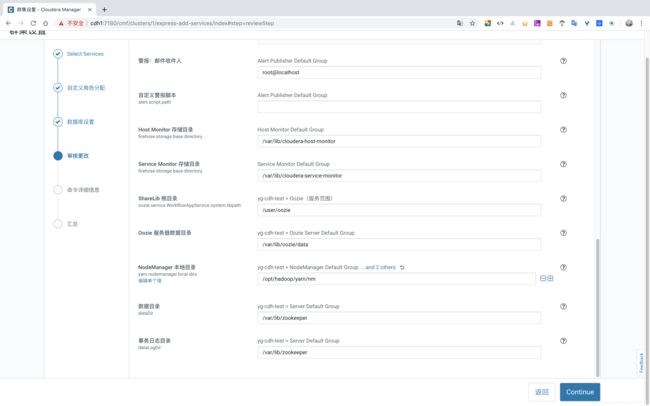

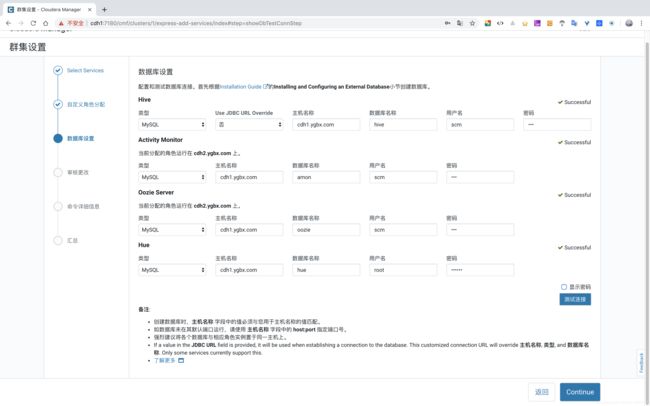

Set up the cluster using the wizard

| Service | Host name | Database | User name | Password |
|---|---|---|---|---|
| Hive | cdh1.example.com | metastore | scm | *YPGT$%GqA |
| Activity Monitor | cdh1.example.com | amon | scm | *YPGT$%GqA |
| Oozie Server | cdh1.example.com | oozie | scm | *YPGT$%GqA |
| Hue | cdh1.example.com | hue | scm | *YPGT$%GqA |

If you have forgotten the database password, you can often recover it by trying likely candidates, or simply look it up in the /etc/cloudera-scm-server/db.properties file.

4. Other issues

4.1 Error starting NodeManager

The following exception occurs:

2019-06-16 12:19:25,932 WARN org.apache.hadoop.service.AbstractService: When stopping the service NodeManager : java.lang.NullPointerException

java.lang.NullPointerException

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.serviceStop(NodeManager.java:483)

at org.apache.hadoop.service.AbstractService.stop(AbstractService.java:222)

at org.apache.hadoop.service.ServiceOperations.stop(ServiceOperations.java:54)

at org.apache.hadoop.service.ServiceOperations.stopQuietly(ServiceOperations.java:104)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:172)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.initAndStartNodeManager(NodeManager.java:869)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.main(NodeManager.java:942)

2019-06-16 12:19:25,932 ERROR org.apache.hadoop.yarn.server.nodemanager.NodeManager: Error starting NodeManager

org.apache.hadoop.service.ServiceStateException: org.fusesource.leveldbjni.internal.NativeDB$DBException: IO error: /var/lib/hadoop-yarn/yarn-nm-recovery/yarn-nm-state/LOCK: Permission denied

at org.apache.hadoop.service.ServiceStateException.convert(ServiceStateException.java:105)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:173)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.initAndStartRecoveryStore(NodeManager.java:281)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.serviceInit(NodeManager.java:354)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.initAndStartNodeManager(NodeManager.java:869)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.main(NodeManager.java:942)

Caused by: org.fusesource.leveldbjni.internal.NativeDB$DBException: IO error: /var/lib/hadoop-yarn/yarn-nm-recovery/yarn-nm-state/LOCK: Permission denied

at org.fusesource.leveldbjni.internal.NativeDB.checkStatus(NativeDB.java:200)

at org.fusesource.leveldbjni.internal.NativeDB.open(NativeDB.java:218)

at org.fusesource.leveldbjni.JniDBFactory.open(JniDBFactory.java:168)

at org.apache.hadoop.yarn.server.nodemanager.recovery.NMLeveldbStateStoreService.openDatabase(NMLeveldbStateStoreService.java:1517)

at org.apache.hadoop.yarn.server.nodemanager.recovery.NMLeveldbStateStoreService.initStorage(NMLeveldbStateStoreService.java:1504)

at org.apache.hadoop.yarn.server.nodemanager.recovery.NMStateStoreService.serviceInit(NMStateStoreService.java:342)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)

... 5 more

Check /var/lib on every NodeManager node:

cd /var/lib

ls -l | grep -i hadoop

One node shows the following (note the wrong owners, for example hadoop-yarn owned by solr):

[root@cdh1 lib]# ls -l | grep -i hadoop

drwxr-xr-x 3 996 992 4096 Apr 25 14:39 hadoop-hdfs

drwxr-xr-x 2 cloudera-scm cloudera-scm 4096 Apr 25 13:50 hadoop-httpfs

drwxr-xr-x 2 sentry sentry 4096 Apr 25 13:50 hadoop-kms

drwxr-xr-x 2 flume flume 4096 Apr 25 13:50 hadoop-mapreduce

drwxr-xr-x 4 solr solr 4096 Apr 25 14:40 hadoop-yarn

The other nodes show:

[root@cdh2 lib]# ls -l | grep -i hadoop

drwxr-xr-x 3 hdfs hdfs 4096 Jun 16 06:04 hadoop-hdfs

drwxr-xr-x 3 httpfs httpfs 4096 Jun 16 06:04 hadoop-httpfs

drwxr-xr-x 2 mapred mapred 4096 Jun 16 05:06 hadoop-mapreduce

drwxr-xr-x 4 yarn yarn 4096 Jun 16 06:07 hadoop-yarn

So run the following on the faulty node to fix the ownership and permissions of these directories, then restart the NodeManager on that node.

chown -R hdfs:hdfs /var/lib/hadoop-hdfs

chown -R httpfs:httpfs /var/lib/hadoop-httpfs

chown -R kms:kms /var/lib/hadoop-kms

chown -R mapred:mapred /var/lib/hadoop-mapreduce

chown -R yarn:yarn /var/lib/hadoop-yarn

chmod -R 755 /var/lib/hadoop-*
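Before changing anything, it can help to list which directories are actually wrong. The sketch below is one way to do that; the function names `expected_owner` and `check_hadoop_dirs` are hypothetical, the directory-to-user mapping simply mirrors the chown commands above, and `stat -c` assumes GNU coreutils.

```shell
#!/usr/bin/env bash
# expected_owner DIR (hypothetical helper): print the service user expected
# to own a /var/lib/hadoop-* directory, mirroring the chown commands above.
expected_owner() {
  case "$(basename "$1")" in
    hadoop-hdfs)      echo hdfs ;;
    hadoop-httpfs)    echo httpfs ;;
    hadoop-kms)       echo kms ;;
    hadoop-mapreduce) echo mapred ;;
    hadoop-yarn)      echo yarn ;;
    *)                return 1 ;;   # unknown directory: no expectation
  esac
}

# check_hadoop_dirs [BASE]: report every hadoop-* directory under BASE
# (default /var/lib) whose owner differs from the expected one.
check_hadoop_dirs() {
  local base="${1:-/var/lib}" dir want have
  for dir in "$base"/hadoop-*; do
    [ -d "$dir" ] || continue
    want=$(expected_owner "$dir") || continue
    have=$(stat -c '%U' "$dir")   # GNU stat; prints UNKNOWN for an orphaned UID
    [ "$have" = "$want" ] || echo "MISMATCH $dir owner=$have expected=$want"
  done
}

# Usage: run on each NodeManager node and fix only what is reported.
#   check_hadoop_dirs /var/lib
```

Running the check on every node makes the faulty one stand out without comparing `ls -l` output by eye.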

4.2 Could not open file in log_dir /var/log/catalogd: Permission denied

The log shows the following exception:

+ exec /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/impala/../../bin/catalogd --flagfile=/var/run/cloudera-scm-agent/process/173-impala-CATALOGSERVER/impala-conf/catalogserver_flags

Could not open file in log_dir /var/log/catalogd: Permission denied

……

+ exec /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/impala/../../bin/statestored --flagfile=/var/run/cloudera-scm-agent/process/175-impala-STATESTORE/impala-conf/state_store_flags

Could not open file in log_dir /var/log/statestore: Permission denied

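So run the following on the faulty node to fix the ownership and permissions of the log directories, then restart the corresponding service on that node.

cd /var/log

ls -l /var/log | grep -i catalogd

# Run on the `Impala Catalog Server` node

chown -R impala:impala /var/log/catalogd

# Run on the `Impala StateStore` node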

chown -R impala:impala /var/log/statestore

4.3 Cannot connect to port 2049

CONF_DIR=/var/run/cloudera-scm-agent/process/137-hdfs-NFSGATEWAY

CMF_CONF_DIR=

unlimited

Cannot connect to port 2049.

using /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/bigtop-utils as JSVC_HOME

Start rpcbind on the NFS Gateway node:

# Check the NFS service status on each node

systemctl status nfs-server.service

# Install it if it is missing

yum -y install nfs-utils

# Check the rpcbind service status

systemctl status rpcbind.service

# If it is not running, start rpcbind

systemctl start rpcbind.service
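To confirm the result without reading the service logs, you can probe the ports directly. This is only a sketch: `port_open` is a hypothetical helper built on bash's `/dev/tcp` pseudo-device and the coreutils `timeout` command, and it assumes rpcbind listens on its standard port 111.

```shell
#!/usr/bin/env bash
# port_open HOST PORT (hypothetical helper): succeed if a TCP connection
# to HOST:PORT can be opened within 2 seconds.
port_open() {
  local host="$1" port="$2"
  # bash opens a TCP socket when redirecting to /dev/tcp/HOST/PORT
  timeout 2 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

# Usage on the NFS Gateway node:
#   port_open localhost 111  || echo "rpcbind is not listening"
#   port_open localhost 2049 || echo "NFS port 2049 is not reachable"
```

If rpcbind should survive a reboot, `systemctl enable rpcbind.service` is the usual companion to the start command.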

4.4 Kafka Cannot Create a Topic

After the Kafka component has been installed successfully, creating a topic fails:

[root@cdh2 lib]# kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic canal

Error while executing topic command : Replication factor: 1 larger than available brokers: 0.

19/06/16 23:27:30 ERROR admin.TopicCommand$: org.apache.kafka.common.errors.InvalidReplicationFactorException: Replication factor: 1 larger than available brokers: 0.

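At this point you can log in with zkCli.sh and inspect Kafka's znodes. Everything looks normal: the broker ids are present, and the topic names created by background programs are there too, yet the command-line tool cannot see them.

First try restarting both ZooKeeper and Kafka. If that does not help, set Kafka's znode directory in ZooKeeper to the root node, restart, and then create and list topics again. Kafka now works normally.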
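A likely explanation for "available brokers: 0" is that the brokers register under one ZooKeeper path (chroot) while the command looks in another. If you prefer to keep a chroot rather than move Kafka to the root node, the `--zookeeper` argument has to carry the same path. The sketch below builds such a connect string; `zk_connect` is a hypothetical helper and `/kafka` is only an example chroot, not a value taken from this cluster.

```shell
#!/usr/bin/env bash
# zk_connect HOSTS [CHROOT] (hypothetical helper): print a ZooKeeper connect
# string, appending the chroot once after the host list.
zk_connect() {
  local hosts="$1" chroot="${2:-}"
  # Drop trailing slashes so that "/" (the root node) means "no chroot"
  while [ "${chroot%/}" != "$chroot" ]; do
    chroot="${chroot%/}"
  done
  # Ensure exactly one leading slash when a chroot remains
  if [ -n "$chroot" ]; then
    chroot="/${chroot#/}"
  fi
  printf '%s%s\n' "$hosts" "$chroot"
}

# Usage (chroot value is an example):
#   kafka-topics.sh --create --zookeeper "$(zk_connect localhost:2181 /kafka)" \
#     --replication-factor 1 --partitions 1 --topic canal
```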

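4.5 Driver Not Found When Installing or Starting Hive

Sometimes, even though the driver jar has clearly been placed under /usr/share/java/, the following error is still reported: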

+ [[ -z /opt/cloudera/cm ]]

+ JDBC_JARS_CLASSPATH='/opt/cloudera/cm/lib/*:/usr/share/java/mysql-connector-java.jar:/opt/cloudera/cm/lib/postgresql-42.1.4.jre7.jar:/usr/share/java/oracle-connector-java.jar'

++ /usr/java/jdk1.8.0_181-cloudera/bin/java -Djava.net.preferIPv4Stack=true -cp '/opt/cloudera/cm/lib/*:/usr/share/java/mysql-connector-java.jar:/opt/cloudera/cm/lib/postgresql-42.1.4.jre7.jar:/usr/share/java/oracle-connector-java.jar' com.cloudera.cmf.service.hive.HiveMetastoreDbUtil /var/run/cloudera-scm-agent/process/32-hive-metastore-create-tables/metastore_db_py.properties unused --printTableCount

Exception in thread "main" java.lang.RuntimeException: java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

at com.cloudera.cmf.service.hive.HiveMetastoreDbUtil.countTables(HiveMetastoreDbUtil.java:203)

at com.cloudera.cmf.service.hive.HiveMetastoreDbUtil.printTableCount(HiveMetastoreDbUtil.java:284)

at com.cloudera.cmf.service.hive.HiveMetastoreDbUtil.main(HiveMetastoreDbUtil.java:334)

Caused by: java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

at java.net.URLClassLoader.findClass(URLClassLoader.java:381)

at java.lang.ClassLoader.loadClass(ClassLoader.java:424)

at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:349)

at java.lang.ClassLoader.loadClass(ClassLoader.java:357)

at java.lang.Class.forName0(Native Method)

at java.lang.Class.forName(Class.java:264)

at com.cloudera.enterprise.dbutil.SqlRunner.open(SqlRunner.java:180)

at com.cloudera.enterprise.dbutil.SqlRunner.getDatabaseName(SqlRunner.java:264)

at com.cloudera.cmf.service.hive.HiveMetastoreDbUtil.countTables(HiveMetastoreDbUtil.java:197)

... 2 more

+ NUM_TABLES='[ main] SqlRunner ERROR Unable to find the MySQL JDBC driver. Please make sure that you have installed it as per instruction in the installation guide.'

+ [[ 1 -ne 0 ]]

+ echo 'Failed to count existing tables.'

+ exit 1

Link the driver into Hive's lib directory:

# Whatever its version, the driver jar must be named mysql-connector-java.jar

# Make sure the driver jar has sufficient permissions

chmod 755 /usr/share/java/mysql-connector-java.jar

#ln -s /usr/share/java/mysql-connector-java.jar /opt/cloudera/parcels/CDH-6.3.0-1.cdh6.3.0.p0.1279813/lib/hive/lib/mysql-connector-java.jar

ln -s /usr/share/java/mysql-connector-java.jar /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hive/lib/mysql-connector-java.jar
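A mistyped link target is easy to miss, so it is worth checking that the link resolves to a readable file. The following is a sketch under that assumption; `check_driver` is a hypothetical helper and relies on GNU `readlink -f`.

```shell
#!/usr/bin/env bash
# check_driver PATH (hypothetical helper): resolve PATH through any symlinks
# and confirm that the final target exists and is readable.
check_driver() {
  local path="$1" target
  target=$(readlink -f "$path") || { echo "cannot resolve: $path"; return 1; }
  [ -r "$target" ] || { echo "missing or unreadable: $path -> $target"; return 1; }
  echo "ok: $path -> $target"
}

# Usage:
#   check_driver /usr/share/java/mysql-connector-java.jar
#   check_driver /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hive/lib/mysql-connector-java.jar
# Optionally, if unzip is installed, confirm the class named in the error is in the jar:
#   unzip -l /usr/share/java/mysql-connector-java.jar | grep com/mysql/jdbc/Driver.class
```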

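4.6 Hive Fails to Get VERSION at Startup

If the log reports that the metastore failed to get VERSION, check whether the metadata tables exist in Hive's metastore database (hive in this example; use the database name you configured for the Hive metastore). If they do not, initialize them manually in MySQL:

# Find the SQL scripts that initialize the Hive metadata; the search returns scripts for several versions

find / -name 'hive-schema*mysql.sql'

# For example: /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hive/scripts/metastore/upgrade/mysql/hive-schema-2.1.1.mysql.sql

# Log in to MySQL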

mysql -u root -p

> use hive;

> source /opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hive/scripts/metastore/upgrade/mysql/hive-schema-2.1.1.mysql.sql

This step initializes the Hive metadata tables. Then restart the Hive service instances.
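Because the search returns scripts for several versions, pick the one that matches your Hive version (2.1.1 in the example path). A small sketch of that selection step, where `schema_script` is a hypothetical helper and the file-name pattern is taken from the path shown in the example:

```shell
#!/usr/bin/env bash
# schema_script DIR VERSION (hypothetical helper): print the MySQL schema
# script for the given Hive version, or fail if DIR does not contain it.
schema_script() {
  local dir="$1" version="$2" file
  file="$dir/hive-schema-$version.mysql.sql"
  if [ -f "$file" ]; then
    echo "$file"
  else
    echo "no schema script for Hive $version in $dir" >&2
    return 1
  fi
}

# Usage:
#   dir=/opt/cloudera/parcels/CDH-6.2.0-1.cdh6.2.0.p0.967373/lib/hive/scripts/metastore/upgrade/mysql
#   schema_script "$dir" 2.1.1
```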

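4.7 Impala Time Zone Setting

Without this setting, date and time values returned by Impala are off by eight hours (on servers in a UTC+8 time zone), so it is best to configure it.

Cloudera Manager web UI > Impala > Configuration > search for: Impala Daemon Command Line Argument Advanced Configuration Snippet (Safety Valve) > add -use_local_tz_for_unix_timestamp_conversions=true

Save the configuration and restart Impala.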

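4.8 Cannot Log In as the hdfs User

When HDFS permission checking is enabled, you sometimes need to switch to the hdfs user to operate on data, but you may see the following: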

[root@cdh1 ~]# su hdfs

This account is currently not available.

In that case, look at the system's user information, change /sbin/nologin to /bin/bash for the hdfs entry, save the file, and log in as hdfs again.

[root@cdh1 ~]# cat /etc/passwd | grep hdfs

hdfs:x:954:961:Hadoop HDFS:/var/lib/hadoop-hdfs:/sbin/nologin

# Change the entry above to the following

hdfs:x:954:961:Hadoop HDFS:/var/lib/hadoop-hdfs:/bin/bash
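The same check can be scripted, and the shell can be changed without editing /etc/passwd by hand. This is a sketch: `login_shell` is a hypothetical helper that reads the seventh colon-separated field of a passwd-format file.

```shell
#!/usr/bin/env bash
# login_shell USER [PASSWD_FILE] (hypothetical helper): print the login shell
# recorded for USER, reading /etc/passwd unless another file is given.
login_shell() {
  local user="$1" passwd="${2:-/etc/passwd}"
  # Field 1 is the user name, field 7 the login shell
  awk -F: -v u="$user" '$1 == u { print $7 }' "$passwd"
}

# Usage (as root):
#   login_shell hdfs             # shows the current shell, e.g. /sbin/nologin
#   usermod -s /bin/bash hdfs    # change it permanently
#   su -s /bin/bash hdfs         # or override the shell for a single session
```

The `su -s` form leaves the account's nologin shell in place, which may be preferable on clusters where service accounts should not be interactive.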

4.9 NTP Issues

The role log details show:

Check failed: _s.ok() Bad status: Runtime error: Cannot initialize clock: failed to wait for clock sync using command '/usr/bin/chronyc waitsync 60 0 0 1': /usr/bin/chronyc: process exited with non-zero status 1

Running ntptime on the server prints the following, which indicates an NTP problem:

[root@cdh3 ~]# ntptime

ntp_gettime() returns code 5 (ERROR)

time e0b2b833.5be28000 Tue, Jun 18 2019 9:09:07.358, (.358925),

maximum error 16000000 us, estimated error 16000000 us, TAI offset 0

ntp_adjtime() returns code 5 (ERROR)

modes 0x0 (),

offset 0.000 us, frequency 9.655 ppm, interval 1 s,

maximum error 16000000 us, estimated error 16000000 us,

status 0x40 (UNSYNC),

time constant 10, precision 1.000 us, tolerance 500 ppm,

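Pay particular attention to these parts of the output (us = microseconds):

maximum error 16000000 us: the time error is 16 s, which already exceeds the maximum error Kudu allows.

status 0x40 (UNSYNC): the synchronization status; the clock is no longer synchronized. A status of 0x2001 (PLL,NANO) indicates a healthy state.

Healthy output looks like this: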

[root@cdh1 ~]# ntptime

ntp_gettime() returns code 0 (OK)

time e0b2b842.b180f51c Tue, Jun 18 2019 9:09:22.693, (.693374110),

maximum error 27426 us, estimated error 0 us, TAI offset 0

ntp_adjtime() returns code 0 (OK)

modes 0x0 (),

offset 0.000 us, frequency 3.932 ppm, interval 1 s,

maximum error 27426 us, estimated error 0 us,

status 0x2001 (PLL,NANO),

time constant 6, precision 0.001 us, tolerance 500 ppm,

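If the status is UNSYNC, check the server's NTP service with systemctl status ntpd.service. If NTP has not been set up, install and configure it as described in section 1.4 NTP. For more background, see the NTP Clock Synchronization document or other documentation.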
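The two fields discussed above can be checked automatically on every node. The sketch below parses ntptime output from standard input; the function names are hypothetical, and the default limit of 10000000 us is an assumption about Kudu's default maximum clock error, so adjust it to your configuration.

```shell
#!/usr/bin/env bash
# ntp_max_error_us (hypothetical helper): read ntptime output on stdin and
# print the first "maximum error N us" value.
ntp_max_error_us() {
  sed -n 's/.*maximum error \([0-9][0-9]*\) us.*/\1/p' | head -n 1
}

# ntp_clock_ok [LIMIT_US]: succeed when the output on stdin is not UNSYNC
# and the maximum error does not exceed LIMIT_US (assumed default: 10 s).
ntp_clock_ok() {
  local limit="${1:-10000000}" out err
  out=$(cat)
  case "$out" in
    *UNSYNC*) return 1 ;;
  esac
  err=$(printf '%s\n' "$out" | ntp_max_error_us)
  [ -n "$err" ] && [ "$err" -le "$limit" ]
}

# Usage on each node:
#   ntptime | ntp_clock_ok && echo "clock healthy" || echo "clock not synchronized"
```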

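4.10 Other Exceptions When Installing Components

If everything above went well, the most common failures when installing components are file ownership and permission problems. Troubleshoot and fix them as described in 4.2. Read the relevant logs and resolve each exception based on what they say.

A screenshot of the CDH web page after the installation completes

Finally

This installation procedure also applies to other CDH 6.x versions. Because of time constraints, the text inevitably contains mistakes. If you find errors in it, or run into other problems during installation, feel free to leave a comment.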