TensorFlow: CIFAR-10 Image Classification

import pickle

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

import random

random.seed(1)

def unpickle(file):
    # Load one pickled CIFAR-10 file; 'latin1' lets Python 3 read the Python 2 pickles.
    with open(file, 'rb') as fo:
        batch = pickle.load(fo, encoding='latin1')
    return batch

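# Image data preprocessing

def clean(data):
    data_0_shape = data.shape[0]
    print(data_0_shape)
    # Each row holds 3072 values: three 32x32 channel planes.
    imgs = data.reshape(data.shape[0], 3, 32, 32)
    # Average over the channel axis to get grayscale images.
    grayscale_imgs = imgs.mean(1)
    # Crop the central 24x24 region.
    cropped_imgs = grayscale_imgs[:, 4:28, 4:28]
    img_data = cropped_imgs.reshape(data.shape[0], -1)
    img_size = np.shape(img_data)[1]
    # Standardize each image: subtract its mean, divide by its standard deviation.
    means = np.mean(img_data, axis=1)
    meansT = means.reshape(len(means), 1)
    stds = np.std(img_data, axis=1)
    stdsT = stds.reshape(len(stds), 1)
    # Floor the standard deviation so near-constant images do not blow up.
    adj_stds = np.maximum(stdsT, 1.0 / np.sqrt(img_size))
    normalized = (img_data - meansT) / adj_stds
    return normalized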
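
To make the preprocessing concrete, here is a minimal sketch that applies the same steps as `clean` to a small random array standing in for CIFAR-10 rows. The random input and the names `clean_sketch` and `fake_batch` are illustrative assumptions, not part of the dataset or the original code. Each output row should hold 576 = 24x24 values with roughly zero mean and unit standard deviation.

```python
import numpy as np

def clean_sketch(data):
    """Grayscale, center-crop to 24x24, and standardize each image (same steps as clean)."""
    n = data.shape[0]
    imgs = data.reshape(n, 3, 32, 32)          # (N, channels, height, width)
    gray = imgs.mean(1)                        # average the three channels
    cropped = gray[:, 4:28, 4:28]              # central 24x24 window
    flat = cropped.reshape(n, -1)              # (N, 576)
    means = flat.mean(axis=1, keepdims=True)
    stds = flat.std(axis=1, keepdims=True)
    # Same floor as in clean: avoids dividing by a near-zero std.
    adj_stds = np.maximum(stds, 1.0 / np.sqrt(flat.shape[1]))
    return (flat - means) / adj_stds

# Synthetic stand-in for five CIFAR-10 rows (assumption for illustration only).
rng = np.random.default_rng(0)
fake_batch = rng.integers(0, 256, size=(5, 3072)).astype(np.uint8)

out = clean_sketch(fake_batch)
print(out.shape)            # number of images, pixels per image
print(out.mean(axis=1))     # per-image means, close to 0
print(out.std(axis=1))      # per-image standard deviations, close to 1
```

Using `keepdims=True` replaces the explicit `reshape(len(means), 1)` calls; the result broadcasts the same way.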

# Read the data

def read_data(directory):
    # label_names: ['airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck']
    names = unpickle('{}/batches.meta'.format(directory))['label_names']
    print('names', names)
    data, labels = [], []
    for i in range(1, 6):
        filename = '{}/data_batch_{}'.format(directory, i)
        batch_data = unpickle(filename)
        if len(data) > 0:
            data = np.vstack((data, batch_data['data']))
            labels = np.hstack((labels, batch_data['labels']))
        else:
            data = batch_data['data']
            labels = batch_data['labels']
    # data has shape (50000, 3072), where 3072 = 32x32x3
    # labels has shape (50000,)
    print("shape = ", np.shape(data), np.shape(labels))
    data = clean(data)
    data = data.astype(np.float32)
    return names, data, labels
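
The reshape to `(N, 3, 32, 32)` in `clean` relies on how the Python version of CIFAR-10 lays out each 3072-value row: the first 1024 values are the red plane, the next 1024 the green plane, and the last 1024 the blue plane, each stored row by row. The sketch below uses a synthetic row (an assumption for illustration, not real data) to show how such a row could be turned into a `(32, 32, 3)` array suitable for `plt.imshow` in color.

```python
import numpy as np

# Synthetic row: full red, half green, no blue (illustrative values only).
row = np.zeros(3072, dtype=np.uint8)
row[:1024] = 255        # red plane
row[1024:2048] = 128    # green plane

planes = row.reshape(3, 32, 32)       # (channel, height, width)
image = planes.transpose(1, 2, 0)     # (height, width, channel)

print(image.shape)
print(image[0, 0])      # RGB value of the top-left pixel
```

The post itself discards color by averaging the three planes, so this step is only needed when viewing the original images.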

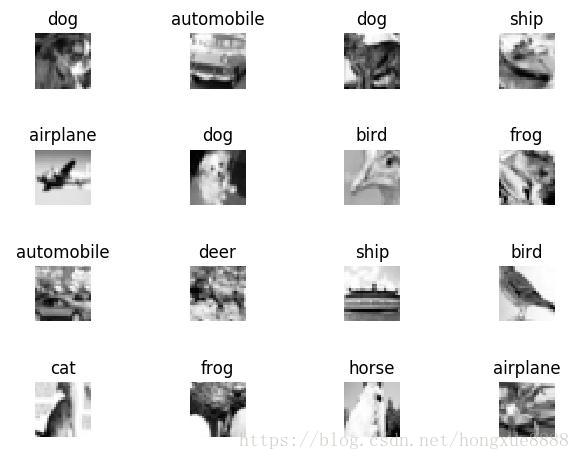

# Show a few images

def show_some_examples(names, data, labels):
    # data shape = (50000, 576), where 576 = 24x24
    print("after data shape= ", data.shape)
    plt.figure()
    rows, cols = 4, 4
    random_idxs = random.sample(range(len(data)), rows * cols)
    for i in range(rows * cols):
        plt.subplot(rows, cols, i + 1)
        j = random_idxs[i]
        plt.title(names[labels[j]])
        img = np.reshape(data[j, :], (24, 24))
        plt.imshow(img, cmap='Greys_r')
        plt.axis('off')
    plt.tight_layout()
    plt.savefig('cifar_examples.png')
    plt.show()

cifar10_dir = 'F:/AI/Python/HXPyhon/PycharmProjects/CIFAR10_dataset/cifar-10-batches-py/'

names, data, labels = read_data(cifar10_dir)

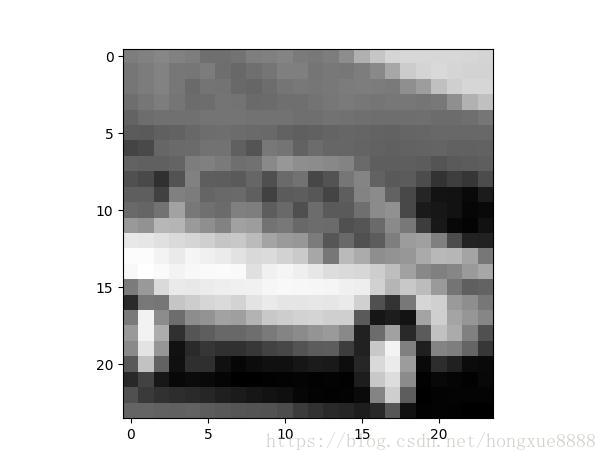

show_some_examples(names, data, labels)

Select one of the images and view the result:

raw_data = data[4, :]

raw_img = np.reshape(raw_data, (24, 24))

plt.figure()

plt.imshow(raw_img, cmap='Greys_r')

plt.show()

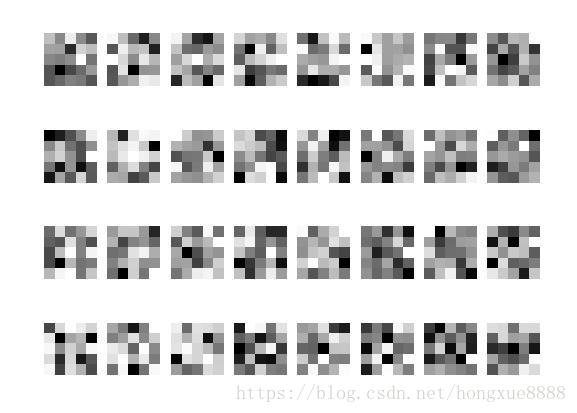

x = tf.reshape(raw_data, shape=[-1, 24, 24, 1])

W = tf.Variable(tf.random_normal([5, 5, 1, 32]))

b = tf.Variable(tf.random_normal([32]))

conv = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

conv_with_b = tf.nn.bias_add(conv, b)

conv_out = tf.nn.relu(conv_with_b)

k = 2

maxpool = tf.nn.max_pool(conv_out, ksize=[1, k, k, 1], strides=[1, k, k, 1], padding='SAME')
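
The session block that follows calls `show_weights` and `show_conv_results`, which this post never defines. A minimal sketch of what they could look like is given here; the grid size (4x8, enough for 32 filters), the optional `filename` parameter, and the shared `_show_grid` helper are assumptions, not the author's original helpers.

```python
import numpy as np
import matplotlib.pyplot as plt

def _show_grid(slices, filename=None, rows=4, cols=8):
    """Plot up to rows*cols 2-D arrays as a grid of grayscale images."""
    fig = plt.figure()
    for i, img in enumerate(slices[:rows * cols]):
        ax = fig.add_subplot(rows, cols, i + 1)
        ax.imshow(img, cmap='Greys_r', interpolation='none')
        ax.axis('off')
    if filename:
        fig.savefig(filename)   # write to disk instead of opening a window
    else:
        plt.show()
    return fig

def show_weights(W, filename=None):
    """W: (height, width, in_channels, out_channels). Plots input channel 0 of each filter."""
    return _show_grid([W[:, :, 0, i] for i in range(W.shape[3])], filename)

def show_conv_results(data, filename=None):
    """data: (batch, height, width, channels). Plots each channel of the first example."""
    return _show_grid([data[0, :, :, i] for i in range(data.shape[3])], filename)
```

With these in place, `show_weights(W_val)` displays the 32 5x5 filters, and `show_conv_results(conv_val)` displays the 32 feature maps for the selected image.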

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())

    W_val = sess.run(W)
    print('weights:')
    show_weights(W_val)

    conv_val = sess.run(conv)
    print('convolution results:')
    print(np.shape(conv_val))
    show_conv_results(conv_val)

    conv_out_val = sess.run(conv_out)
    print('convolution with bias and relu:')
    print(np.shape(conv_out_val))
    show_conv_results(conv_out_val)

    maxpool_val = sess.run(maxpool)
    print('maxpool after all the convolutions:')
    print(np.shape(maxpool_val))
    show_conv_results(maxpool_val)

Output:

convolution results:

(1, 24, 24, 32)

convolution with bias and relu:

(1, 24, 24, 32)

maxpool after all the convolutions:

(1, 12, 12, 32)
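
The shapes above follow from how `padding='SAME'` works: the spatial output size is the input size divided by the stride, rounded up. A stride-1 convolution therefore keeps 24x24, and a stride-2 max pool halves it to 12x12. The short sketch below (the function name `same_output_size` is an illustrative assumption) also applies a second pooling step, as the full model later does, which is where the `6 * 6 * 64` input size of `W3` comes from.

```python
import math

def same_output_size(size, stride):
    """Spatial output size of a conv or pool layer with padding='SAME'."""
    return math.ceil(size / stride)

after_conv1 = same_output_size(24, 1)            # stride-1 convolution
after_pool1 = same_output_size(after_conv1, 2)   # 2x2 max pool, stride 2
after_conv2 = same_output_size(after_pool1, 1)
after_pool2 = same_output_size(after_conv2, 2)

flattened = after_pool2 * after_pool2 * 64       # 64 output channels in the full model
print(after_conv1, after_pool1, after_conv2, after_pool2, flattened)
```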

Building the complete network model:

# Build the complete network model

x = tf.placeholder(tf.float32, [None, 24 * 24])

y = tf.placeholder(tf.float32, [None, len(names)])

W1 = tf.Variable(tf.random_normal([5, 5, 1, 64]))

b1 = tf.Variable(tf.random_normal([64]))

W2 = tf.Variable(tf.random_normal([5, 5, 64, 64]))

b2 = tf.Variable(tf.random_normal([64]))

W3 = tf.Variable(tf.random_normal([6 * 6 * 64, 1024]))

b3 = tf.Variable(tf.random_normal([1024]))

W_out = tf.Variable(tf.random_normal([1024, len(names)]))

b_out = tf.Variable(tf.random_normal([len(names)]))

def conv_layer(x, W, b):
    conv = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')
    conv_with_b = tf.nn.bias_add(conv, b)
    conv_out = tf.nn.relu(conv_with_b)
    return conv_out

def maxpool_layer(conv, k=2):
    return tf.nn.max_pool(conv, ksize=[1, k, k, 1], strides=[1, k, k, 1], padding='SAME')

def model():
    x_reshaped = tf.reshape(x, shape=[-1, 24, 24, 1])
    conv_out1 = conv_layer(x_reshaped, W1, b1)
    maxpool_out1 = maxpool_layer(conv_out1)
    # The LRN (local response normalization) layer creates competition among
    # neighboring neurons: relatively large responses are amplified and neurons
    # with weaker responses are suppressed, which is intended to improve
    # the model's generalization.
    # Recommended reading: http://blog.csdn.net/banana1006034246/article/details/75204013
    norm1 = tf.nn.lrn(maxpool_out1, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75)
    conv_out2 = conv_layer(norm1, W2, b2)
    norm2 = tf.nn.lrn(conv_out2, 4, bias=1.0, alpha=0.001 / 9.0, beta=0.75)
    maxpool_out2 = maxpool_layer(norm2)
    # Flatten the 6x6x64 feature maps into one vector per example.
    maxpool_reshaped = tf.reshape(maxpool_out2, [-1, W3.get_shape().as_list()[0]])
    local = tf.add(tf.matmul(maxpool_reshaped, W3), b3)
    local_out = tf.nn.relu(local)
    out = tf.add(tf.matmul(local_out, W_out), b_out)
    return out
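
To show what the LRN step computes, here is a NumPy sketch of the formula documented for `tf.nn.lrn`: each value is divided by `(bias + alpha * sqr_sum) ** beta`, where `sqr_sum` is the sum of squares over the channels within `depth_radius` of the current one. The function name `lrn_sketch` and the loop-based implementation are assumptions chosen for readability, not how TensorFlow implements it.

```python
import numpy as np

def lrn_sketch(x, depth_radius=4, bias=1.0, alpha=0.001 / 9.0, beta=0.75):
    """Local response normalization over the last (channel) axis of x."""
    x = np.asarray(x, dtype=np.float64)
    channels = x.shape[-1]
    out = np.empty_like(x)
    for d in range(channels):
        # Window of neighboring channels, clipped at the edges.
        lo = max(0, d - depth_radius)
        hi = min(channels, d + depth_radius + 1)
        sqr_sum = np.sum(x[..., lo:hi] ** 2, axis=-1)
        out[..., d] = x[..., d] / (bias + alpha * sqr_sum) ** beta
    return out

# Tiny check with easy numbers: three channels of ones, radius 1.
x = np.ones((1, 1, 1, 3))
result = lrn_sketch(x, depth_radius=1, bias=1.0, alpha=1.0, beta=1.0)
print(result.ravel())   # edge channels see 2 neighbors' squares, the middle one sees 3
```

In the toy input, the middle channel has more active neighbors than the edge channels, so it is scaled down more (1/4 versus 1/3).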

learning_rate = 0.001

model_op = model()

cost = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(logits=model_op, labels=y)
)

train_op = tf.train.AdamOptimizer(learning_rate=learning_rate).minimize(cost)

correct_pred = tf.equal(tf.argmax(model_op, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))
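
The cost and accuracy ops can be mirrored in plain NumPy to see what they compute: the cost is the mean softmax cross-entropy between the logits and the one-hot labels, and the accuracy is the fraction of rows where the largest logit matches the label. This is a sketch under assumed toy inputs; the function names are hypothetical and it is not a replacement for the TensorFlow ops.

```python
import numpy as np

def softmax_cross_entropy(logits, onehot):
    """Per-example cross-entropy between softmax(logits) and one-hot labels."""
    # Subtract the row maximum for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -(onehot * log_probs).sum(axis=1)

def accuracy_from_logits(logits, onehot):
    """Fraction of examples whose arg-max logit equals the labeled class."""
    return np.mean(np.argmax(logits, axis=1) == np.argmax(onehot, axis=1))

# Toy batch: the first example is classified correctly, the second is not.
logits = np.array([[2.0, 1.0, 0.0], [0.0, 3.0, 1.0]])
onehot = np.array([[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]])

losses = softmax_cross_entropy(logits, onehot)
print(losses.mean(), accuracy_from_logits(logits, onehot))
```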

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    # Convert integer labels to one-hot vectors once, outside the training loop.
    onehot_labels = tf.one_hot(labels, len(names), axis=-1)
    onehot_vals = sess.run(onehot_labels)
    batch_size = 64
    print('batch size', batch_size)
    for j in range(0, 1000):
        avg_accuracy_val = 0.
        batch_count = 0.
        data_len = len(data)
        for i in range(0, len(data), batch_size):
            batch_data = data[i:i + batch_size, :]
            batch_onehot_vals = onehot_vals[i:i + batch_size, :]
            _, accuracy_val = sess.run([train_op, accuracy], feed_dict={x: batch_data, y: batch_onehot_vals})
            avg_accuracy_val += accuracy_val
            batch_count += 1.
        # Sum of per-batch accuracies, printed before dividing by the batch count.
        print("avg_accuracy_val=", avg_accuracy_val)
        avg_accuracy_val /= batch_count
        print('Epoch {}. Avg accuracy {}'.format(j, avg_accuracy_val))
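
One detail of the loop above is that 50000 is not a multiple of 64, so the final batch of each epoch is smaller than the rest, yet it counts equally in the plain average of per-batch accuracies. The sketch below enumerates the batch boundaries and contrasts the plain mean with a size-weighted mean. The per-batch accuracy values are random placeholders (an assumption for illustration), not results from training.

```python
import numpy as np

def iterate_batches(n_examples, batch_size):
    """Yield (start, stop) index pairs covering range(n_examples) in order."""
    for start in range(0, n_examples, batch_size):
        yield start, min(start + batch_size, n_examples)

batches = list(iterate_batches(50000, 64))
sizes = [stop - start for start, stop in batches]
print(len(batches), sizes[-1])   # number of batches, size of the last one

# Placeholder per-batch accuracies, only to contrast the two averages.
rng = np.random.default_rng(0)
batch_acc = rng.uniform(0.4, 0.6, size=len(batches))
unweighted = batch_acc.mean()                       # what the loop above reports
weighted = np.average(batch_acc, weights=sizes)     # weights each example equally
print(unweighted, weighted)
```

With 782 batches the difference is small, so the plain mean used in the post is a reasonable approximation.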