Ceph Study Notes: OSD Read/Write Flow and Source Code Analysis (Part 1)

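Requests originate at the client. Earlier installments covered the client-side object storage and block storage libraries and how their API requests are sent (Ceph Study Notes: Librados and Osdc Implementation Source Code Analysis; Ceph Study Notes: Client Read/Write Operation Analysis; Ceph Study Notes: Librbd Block Storage Library and RBD Read/Write Flow Source Code Analysis). Once a request has been packaged, the messaging module (Ceph Study Notes: Ceph Network Communication Mechanism and Source Code Analysis) sends it, together with its related information, to the server side, where the data is actually operated on. The server side is implemented by the OSD and OS modules; this installment covers the OSD module first.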

- Main classes of the OSD module

- The OSD class

- The PrimaryLogPG class

- The PGBackend class

- OSD read/write function call flow

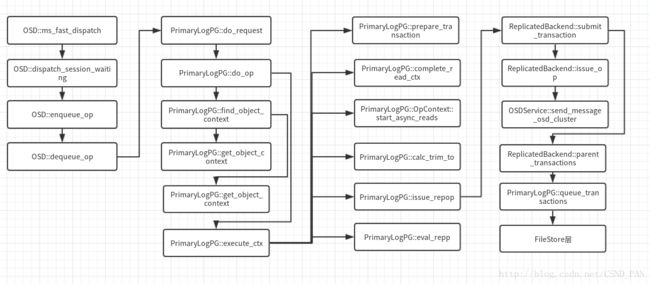

直接上图:

同样当前最新的版本,和之前的版本有所不同,有一些模块简化了,类的名字也改了。先介绍图中涉及的相关的类,然后在对类中具体函数主要调用流程进行分析。

Main classes of the OSD module

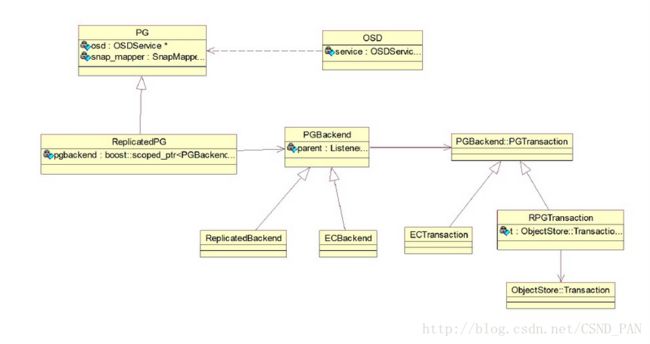

Borrowed figure. Note that ReplicatedPG has been removed in the latest version and replaced by the PrimaryLogPG class.

The OSD class

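OSD and OSDService are the core classes; they sit at the top level and are responsible for the work of one OSD node. A message from a client arrives at the OSD class first, is processed there, and is then handed to the PrimaryLogPG class (formerly ReplicatedPG). In the read/write path, the OSD class mainly wraps the message (Message) in an OpRequest, checks whether the epoch (the OSDMap version) needs updating, obtains the PG handle and performs the PG-related checks, and finally adds the request to a queue.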

The PrimaryLogPG class

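This class inherits from the PG class and from PGBackend::Listener (an abstract class). The PG class maintains the PG's state and implements PG-level functionality; its core is a Boost statechart state machine that drives PG state transitions. PrimaryLogPG itself implements data reads and writes within the PG.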

The PGBackend class

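This class is mainly responsible for synchronizing the requested data, in the form of transactions, to the other (replica) OSDs of a PG. Note that the operation on the primary OSD is carried out by PrimaryLogPG.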

It has two subclasses, ReplicatedBackend and ECBackend, which correspond to the two types of PG implementation (replicated and erasure-coded).
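The relationship between the abstract backend and its two subclasses can be pictured with a small, self-contained sketch. Everything in it (`BackendSketch`, `PoolType`, `make_backend`) is a hypothetical stand-in chosen for illustration and is not Ceph's API; the real PGBackend interface is far larger.

```cpp
#include <cassert>
#include <memory>
#include <string>

// Hypothetical stand-ins, not Ceph's real types.
enum class PoolType { Replicated, Erasure };

// Abstract backend: how a PG pushes a transaction to its other OSDs.
struct BackendSketch {
  virtual ~BackendSketch() = default;
  virtual std::string name() const = 0;
};

// Stand-in for ReplicatedBackend.
struct ReplicatedSketch : BackendSketch {
  std::string name() const override { return "replicated"; }
};

// Stand-in for ECBackend.
struct ErasureSketch : BackendSketch {
  std::string name() const override { return "erasure"; }
};

// Choose the backend implementation from the pool type of the PG.
inline std::unique_ptr<BackendSketch> make_backend(PoolType t) {
  if (t == PoolType::Erasure)
    return std::unique_ptr<BackendSketch>(new ErasureSketch);
  return std::unique_ptr<BackendSketch>(new ReplicatedSketch);
}
```

The point of the design is that the PG code talks only to the abstract interface, so the replication strategy can differ per pool without changing the caller.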

OSD read/write function call flow

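1) OSD::ms_fast_dispatch is the entry point for incoming Message objects; it is called by the receive thread of the network module. It checks whether the service is stopping, wraps the Message in an OpRequest, obtains the session and the latest OSDMap, and finally calls dispatch_session_waiting to move on to the next step.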

void OSD::ms_fast_dispatch(Message *m)

{

FUNCTRACE();

if (service.is_stopping()) { // the service is stopping: drop the message and return

m->put();

return;

}

OpRequestRef op = op_tracker.create_request<OpRequest, Message*>(m); // wrap the Message in an OpRequest

...

...

if (m->get_connection()->has_features(CEPH_FEATUREMASK_RESEND_ON_SPLIT) ||

m->get_type() != CEPH_MSG_OSD_OP) {

// queue it directly by calling enqueue_op

enqueue_op(

static_cast<MOSDFastDispatchOp*>(m)->get_spg(),

op,

static_cast<MOSDFastDispatchOp*>(m)->get_map_epoch());

} else {

Session *session = static_cast<Session*>(m->get_connection()->get_priv()); // get the session, which holds information about the Connection

if (session) {

{

Mutex::Locker l(session->session_dispatch_lock);

op->get();

session->waiting_on_map.push_back(*op); // append the request to the waiting_on_map list

OSDMapRef nextmap = service.get_nextmap_reserved(); // get the latest OSDMap

dispatch_session_waiting(session, nextmap); // process the waiting requests in a loop

service.release_map(nextmap);

}

session->put();

}

}

OID_EVENT_TRACE_WITH_MSG(m, "MS_FAST_DISPATCH_END", false);

}

2) OSD::dispatch_session_waiting loops over the entries in the waiting_on_map queue, compares each against the OSDMap epoch, resolves its pgid, and finally calls enqueue_op.

void OSD::dispatch_session_waiting(Session *session, OSDMapRef osdmap)

{

assert(session->session_dispatch_lock.is_locked());

auto i = session->waiting_on_map.begin();

while (i != session->waiting_on_map.end()) { // loop over the entries in waiting_on_map

OpRequestRef op = &(*i);

assert(ms_can_fast_dispatch(op->get_req()));

const MOSDFastDispatchOp *m = static_cast<const MOSDFastDispatchOp*>(

op->get_req());

if (m->get_min_epoch() > osdmap->get_epoch()) { // our OSDMap is older than the op requires

break;

}

session->waiting_on_map.erase(i++);

op->put();

spg_t pgid;

if (m->get_type() == CEPH_MSG_OSD_OP) {

pg_t actual_pgid = osdmap->raw_pg_to_pg(

static_cast<const MOSDOp*>(m)->get_pg());

// osdmap->get_primary_shard(actual_pgid, &pgid) fills in pgid with the shard on the PG's primary OSD

if (!osdmap->get_primary_shard(actual_pgid, &pgid)) {

continue;

}

} else {

pgid = m->get_spg();

}

enqueue_op(pgid, op, m->get_map_epoch()); // pgid resolved: hand the op to enqueue_op

}

if (session->waiting_on_map.empty()) {

clear_session_waiting_on_map(session);

} else {

register_session_waiting_on_map(session);

}

}

3) OSD::enqueue_op adds the request to the op_shardedwq queue.

void OSD::enqueue_op(spg_t pg, OpRequestRef& op, epoch_t epoch)

{

...

op->osd_trace.event("enqueue op");

op->osd_trace.keyval("priority", op->get_req()->get_priority());

op->osd_trace.keyval("cost", op->get_req()->get_cost());

op->mark_queued_for_pg();

logger->tinc(l_osd_op_before_queue_op_lat, latency);

// add the op to the op_shardedwq queue

op_shardedwq.queue(

OpQueueItem(

unique_ptr<OpQueueItem::OpQueueable>(new PGOpItem(pg, op)),

op->get_req()->get_cost(),

op->get_req()->get_priority(),

op->get_req()->get_recv_stamp(),

op->get_req()->get_source().num(),

epoch));

}

4) OSD::dequeue_op calls maybe_share_map to take care of the OSDMap update, then calls do_request to enter the PG processing path.

void OSD::dequeue_op(

PGRef pg, OpRequestRef op,

ThreadPool::TPHandle &handle)

{

...

...

logger->tinc(l_osd_op_before_dequeue_op_lat, latency);

Session *session = static_cast<Session *>(

op->get_req()->get_connection()->get_priv());

if (session) {

// maybe_share_map takes care of the OSDMap update

maybe_share_map(session, op, pg->get_osdmap());

session->put();

}

// the PG is being deleted: return immediately

if (pg->is_deleting())

return;

op->mark_reached_pg();

op->osd_trace.event("dequeue_op");

// hand the op to the PG's do_request

pg->do_request(op, handle);

// finish

dout(10) << "dequeue_op " << op << " finish" << dendl;

OID_EVENT_TRACE_WITH_MSG(op->get_req(), "DEQUEUE_OP_END", false);

}
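Steps 2) to 4) rest on one idea: an op stays on the session's waiting list until the OSD's map epoch is new enough for it, and only then moves into the work queue, with order preserved. The following is a minimal sketch of that gating under simplified assumptions; `OpSketch`, `SessionSketch`, and `dispatch_waiting` are hypothetical names, and a plain vector stands in for op_shardedwq.

```cpp
#include <cassert>
#include <cstdint>
#include <deque>
#include <vector>

// Hypothetical, simplified stand-ins for OpRequest and the session state.
struct OpSketch {
  int id;
  uint32_t min_epoch;  // oldest map epoch the op can be handled with
};

struct SessionSketch {
  std::deque<OpSketch> waiting_on_map;  // ops waiting for a new enough map
};

// Move every op at the front of the waiting list whose min_epoch is covered
// by the current map epoch into the work queue. Stop at the first op that
// still needs a newer map, so that delivery order is preserved.
inline void dispatch_waiting(SessionSketch &s, uint32_t map_epoch,
                             std::vector<OpSketch> &work_queue) {
  while (!s.waiting_on_map.empty()) {
    const OpSketch &op = s.waiting_on_map.front();
    if (op.min_epoch > map_epoch)
      break;
    work_queue.push_back(op);
    s.waiting_on_map.pop_front();
  }
}
```

Note that an op behind a blocked one also waits, even if its own epoch requirement is already met; this mirrors the `break` in the loop of step 2).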

5) PrimaryLogPG::do_request mainly checks the state of the PG and handles the request according to its message type.
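Before reading the listing, it may help to see the state gating for a client op reduced to its skeleton. This is only a sketch: `PGStateSketch` and `route_client_op` are hypothetical names, and the sketch deliberately ignores the branch where the backend can handle a message while the PG is inactive.

```cpp
#include <cassert>
#include <string>

// Hypothetical, reduced view of the PG state that do_request looks at.
struct PGStateSketch {
  bool peered;
  bool active;
};

// Decide what happens to a client op (CEPH_MSG_OSD_OP in the real code):
// it waits until the PG is peered, then until it is active, and only then
// is it handed to do_op.
inline std::string route_client_op(const PGStateSketch &pg) {
  if (!pg.peered)
    return "waiting_for_peered";
  if (!pg.active)
    return "waiting_for_active";
  return "do_op";
}
```

The returned strings name the queues and the function that appear in the real code below.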

void PrimaryLogPG::do_request(

OpRequestRef& op,

ThreadPool::TPHandle &handle)

{

...

// make sure we have a new enough map

// check the OSDMap

auto p = waiting_for_map.find(op->get_source());

...

// can the request be discarded?

if (can_discard_request(op)) {

return;

}

...

...

// the PG is not peered yet

if (!is_peered()) {

// Delay unless PGBackend says it's ok

// check whether pgbackend can handle this request

if (pgbackend->can_handle_while_inactive(op)) {

bool handled = pgbackend->handle_message(op); // it can, so let it handle the message

assert(handled);

return;

} else {

waiting_for_peered.push_back(op); // it cannot, so queue the op on waiting_for_peered

op->mark_delayed("waiting for peered");

return;

}

}

...

...

// from here on the PG is peered and flushes_in_progress is 0

assert(is_peered() && flushes_in_progress == 0);

if (pgbackend->handle_message(op))

return;

// handle the request according to its message type

switch (op->get_req()->get_type()) {

case CEPH_MSG_OSD_OP:

case CEPH_MSG_OSD_BACKOFF:

if (!is_active()) { // the PG is not active

dout(20) << " peered, not active, waiting for active on " << op << dendl;

waiting_for_active.push_back(op); // queue the op on waiting_for_active

op->mark_delayed("waiting for active");

return;

}

switch (op->get_req()->get_type()) {

case CEPH_MSG_OSD_OP:

// verify client features: on a cache pool, an op without the CEPH_FEATURE_OSD_CACHEPOOL feature gets an error reply

if ((pool.info.has_tiers() || pool.info.is_tier()) &&

!op->has_feature(CEPH_FEATURE_OSD_CACHEPOOL)) {

osd->reply_op_error(op, -EOPNOTSUPP);

return;

}

do_op(op); // handle the op in do_op

break;

case CEPH_MSG_OSD_BACKOFF:

// object-level backoff acks handled in osdop context

handle_backoff(op);

break;

}

break;

...

// other message types

...

default:

assert(0 == "bad message type in do_request");

}

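}

6) PrimaryLogPG::do_op is long and complex, so only the relevant call flow is covered here. It decodes the operation, validates its parameters, checks the state of the target object and of its head, snap, and clone objects, and obtains the object context and the operation context (ObjectContext, OpContext).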

void PrimaryLogPG::do_op(OpRequestRef& op)

{

FUNCTRACE();

// NOTE: take a non-const pointer here; we must be careful not to

// change anything that will break other reads on m (operator<<).

MOSDOp *m = static_cast<MOSDOp*>(op->get_nonconst_req());

assert(m->get_type() == CEPH_MSG_OSD_OP);

// decode the fields from the bufferlist

if (m->finish_decode()) {

op->reset_desc(); // for TrackedOp

m->clear_payload();

}

...

...

if ((m->get_flags() & (CEPH_OSD_FLAG_BALANCE_READS |

CEPH_OSD_FLAG_LOCALIZE_READS)) &&

op->may_read() &&

!(op->may_write() || op->may_cache())) {

// balanced reads; any replica will do (primary or replica OSDs may serve the read)

if (!(is_primary() || is_replica())) {

osd->handle_misdirected_op(this, op);

return;

}

} else {

// normal case; must be primary (only the primary OSD may serve the op)

if (!is_primary()) {

osd->handle_misdirected_op(this, op);

return;

}

}

if (!op_has_sufficient_caps(op)) {

osd->reply_op_error(op, -EPERM);

return;

}

// if the op includes a PG-level operation (includes_pg_op), handle it in do_pg_op(op)

if (op->includes_pg_op()) {

return do_pg_op(op);

}

// object name too long?

if (m->get_oid().name.size() > cct->_conf->osd_max_object_name_len) {

dout(4) << "do_op name is longer than "

<< cct->_conf->osd_max_object_name_len

<< " bytes" << dendl;

osd->reply_op_error(op, -ENAMETOOLONG);

return;

}

...

...

// blacklisted? (the requesting client is on the blacklist)

if (get_osdmap()->is_blacklisted(m->get_source_addr())) {

dout(10) << "do_op " << m->get_source_addr() << " is blacklisted" << dendl;

osd->reply_op_error(op, -EBLACKLISTED);

return;

}

...

...

// missing object? (is the head object unreadable)

if (is_unreadable_object(head)) {

if (!is_primary()) {

osd->reply_op_error(op, -EAGAIN);

return;

}

if (can_backoff &&

(g_conf->osd_backoff_on_degraded ||

(g_conf->osd_backoff_on_unfound && missing_loc.is_unfound(head)))) {

add_backoff(session, head, head);

maybe_kick_recovery(head);

} else {

wait_for_unreadable_object(head, op); // queue the op until recovery completes

}

return;

}

// degraded object? (ordered write while the head object is degraded or backfilling)

if (write_ordered && is_degraded_or_backfilling_object(head)) {

if (can_backoff && g_conf->osd_backoff_on_degraded) {

add_backoff(session, head, head);

maybe_kick_recovery(head);

} else {

wait_for_degraded_object(head, op); // queue the op and wait

}

return;

}

// ordered write while a scrub (data consistency check) is blocking the object

if (write_ordered && scrubber.is_chunky_scrub_active() &&

scrubber.write_blocked_by_scrub(head)) {

dout(20) << __func__ << ": waiting for scrub" << dendl;

waiting_for_scrub.push_back(op);

op->mark_delayed("waiting for scrub");

return;

}

...

...

// ordered write, and the object is in objects_blocked_on_cache_full

if (write_ordered && objects_blocked_on_cache_full.count(head)) {

block_write_on_full_cache(head, op);

return;

}

...

...

// io blocked on obc? (check whether the object is blocked)

if (!m->has_flag(CEPH_OSD_FLAG_FLUSH) &&

maybe_await_blocked_head(oid, op)) {

return;

}

// call find_object_context to obtain the object context

int r = find_object_context(

oid, &obc, can_create,

m->has_flag(CEPH_OSD_FLAG_MAP_SNAP_CLONE),

&missing_oid);

// if hit_set is set, record the access

bool in_hit_set = false;

if (hit_set) {

if (obc.get()) {

if (obc->obs.oi.soid != hobject_t() && hit_set->contains(obc->obs.oi.soid))

in_hit_set = true;

} else {

if (missing_oid != hobject_t() && hit_set->contains(missing_oid))

in_hit_set = true;

}

if (!op->hitset_inserted) {

hit_set->insert(oid);

op->hitset_inserted = true;

if (hit_set->is_full() ||

hit_set_start_stamp + pool.info.hit_set_period <= m->get_recv_stamp()) {

hit_set_persist();

}

}

}

// agent_state is set

if (agent_state) {

if (agent_choose_mode(false, op)) // let the agent choose its mode

return;

}

...

...

...

op->mark_started();

execute_ctx(ctx); // execute the operation

utime_t prepare_latency = ceph_clock_now();

prepare_latency -= op->get_dequeued_time();

osd->logger->tinc(l_osd_op_prepare_lat, prepare_latency);

if (op->may_read() && op->may_write()) {

osd->logger->tinc(l_osd_op_rw_prepare_lat, prepare_latency);

} else if (op->may_read()) {

osd->logger->tinc(l_osd_op_r_prepare_lat, prepare_latency);

} else if (op->may_write() || op->may_cache()) {

osd->logger->tinc(l_osd_op_w_prepare_lat, prepare_latency);

}

// force recovery of the oldest missing object if too many logs

maybe_force_recovery();

}
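The first routing decision in do_op (may this OSD serve the op at all, or is it misdirected?) can be isolated into a few lines. The sketch below assumes hypothetical types `OpFlagsSketch` and `RoleSketch` and a hypothetical function `may_serve`; it mirrors only the condition on the read-balancing flags shown in the listing above.

```cpp
#include <cassert>

// Hypothetical flags mirroring the checks near the top of do_op.
struct OpFlagsSketch {
  bool balance_or_localize_reads;  // BALANCE_READS or LOCALIZE_READS is set
  bool may_read;
  bool may_write;
  bool may_cache;
};

enum class RoleSketch { Primary, Replica, Stray };

// Returns true when this OSD may serve the op, false when the op is
// misdirected: pure reads flagged for balancing may go to the primary or
// any replica, everything else must go to the primary.
inline bool may_serve(const OpFlagsSketch &f, RoleSketch role) {
  bool read_only = f.may_read && !(f.may_write || f.may_cache);
  if (f.balance_or_localize_reads && read_only)
    return role == RoleSketch::Primary || role == RoleSketch::Replica;
  return role == RoleSketch::Primary;
}
```

In the real function a `false` result corresponds to the calls to `osd->handle_misdirected_op`.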

7) PrimaryLogPG::find_object_context obtains the object context by calling PrimaryLogPG::get_object_context, depending on the case at hand.

/*

* If we return an error, and set *pmissing, then promoting that

* object may help.

*

* If we return -EAGAIN, we will always set *pmissing to the missing

* object to wait for.

*

* If we return an error but do not set *pmissing, then we know the

* object does not exist.

*/

// obtain the ObjectContext of an object

int PrimaryLogPG::find_object_context(const hobject_t& oid,

ObjectContextRef *pobc,

bool can_create,

bool map_snapid_to_clone,

hobject_t *pmissing)

{

FUNCTRACE();

assert(oid.pool == static_cast<int64_t>(info.pgid.pool()));

// want the head?

if (oid.snap == CEPH_NOSNAP) {

ObjectContextRef obc = get_object_context(oid, can_create); // the head (original) object is wanted: fetch it directly

if (!obc) {

if (pmissing)

*pmissing = oid;

return -ENOENT;

}

dout(10) << "find_object_context " << oid

<< " @" << oid.snap

<< " oi=" << obc->obs.oi

<< dendl;

*pobc = obc;

return 0;

}

hobject_t head = oid.get_head();

// we want a snap

// not map_snapid_to_clone and the snap has already been removed: return -ENOENT

if (!map_snapid_to_clone && pool.info.is_removed_snap(oid.snap)) {

dout(10) << __func__ << " snap " << oid.snap << " is removed" << dendl;

return -ENOENT;

}

SnapSetContext *ssc = get_snapset_context(oid, can_create); // call get_snapset_context to obtain the SnapSetContext

if (!ssc || !(ssc->exists || can_create)) {

dout(20) << __func__ << " " << oid << " no snapset" << dendl;

if (pmissing)

*pmissing = head; // start by getting the head

if (ssc)

put_snapset_context(ssc);

return -ENOENT;

}

// map_snapid_to_clone is set

if (map_snapid_to_clone) {

dout(10) << "find_object_context " << oid << " @" << oid.snap

<< " snapset " << ssc->snapset

<< " map_snapid_to_clone=true" << dendl;

if (oid.snap > ssc->snapset.seq) { // the snap is newer than the snapset: the OSD has not caught up yet, so the head object's context is returned

// already must be readable

ObjectContextRef obc = get_object_context(head, false); // return the head object's context

dout(10) << "find_object_context " << oid << " @" << oid.snap

<< " snapset " << ssc->snapset

<< " maps to head" << dendl;

*pobc = obc;

put_snapset_context(ssc);

return (obc && obc->obs.exists) ? 0 : -ENOENT;

} else {

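vector ...

8) get_object_context does the actual lookup: it searches the cache first and, on a miss, calls into the backend to fetch the object info. It also calls get_snapset_context to obtain the SnapSetContext.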
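The lookup order described here (cache first, then disk, then optional creation) is a common pattern and can be sketched on its own. In the sketch, `ObcSketch`, `ObcCacheSketch`, and its members are hypothetical; a `std::set` of object names stands in for the object store, and a plain `std::map` stands in for Ceph's object context cache.

```cpp
#include <cassert>
#include <map>
#include <memory>
#include <set>
#include <string>

// Hypothetical stand-in for ObjectContext.
struct ObcSketch {
  std::string oid;
  bool exists;  // whether the object was found on "disk"
};
using ObcRef = std::shared_ptr<ObcSketch>;

struct ObcCacheSketch {
  std::map<std::string, ObcRef> cache;  // in-memory contexts
  std::set<std::string> on_disk;        // pretend object store
  int hits = 0;                         // cache-hit counter

  // Look in the cache first; on a miss consult the "disk"; if the object is
  // not there either, create a fresh context only when can_create is set.
  ObcRef get(const std::string &oid, bool can_create) {
    auto it = cache.find(oid);
    if (it != cache.end()) {
      ++hits;
      return it->second;
    }
    bool found = on_disk.count(oid) > 0;
    if (!found && !can_create)
      return ObcRef();  // corresponds to the -ENOENT path
    ObcRef obc = std::make_shared<ObcSketch>(ObcSketch{oid, found});
    cache[oid] = obc;
    return obc;
  }
};
```

A second lookup of the same object returns the same shared context, which is what lets concurrent operations on one object coordinate through it.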

ObjectContextRef PrimaryLogPG::get_object_context(

const hobject_t& soid,

bool can_create,

const map<string, bufferlist> *attrs)

{

...

// look in the cache first

ObjectContextRef obc = object_contexts.lookup(soid);

osd->logger->inc(l_osd_object_ctx_cache_total);

if (obc) {

osd->logger->inc(l_osd_object_ctx_cache_hit);

dout(10) << __func__ << ": found obc in cache: " << obc

<< dendl;

} else {

dout(10) << __func__ << ": obc NOT found in cache: " << soid << dendl;

// check disk

bufferlist bv;

if (attrs) {

assert(attrs->count(OI_ATTR));

bv = attrs->find(OI_ATTR)->second;

} else {

int r = pgbackend->objects_get_attr(soid, OI_ATTR, &bv); // not cached: read the object info attribute through the backend

if (r < 0) {

if (!can_create) {

dout(10) << __func__ << ": no obc for soid "

<< soid << " and !can_create"

<< dendl;

return ObjectContextRef(); // -ENOENT!

}

dout(10) << __func__ << ": no obc for soid "

<< soid << " but can_create"

<< dendl;

// new object.

object_info_t oi(soid);

// call get_snapset_context to obtain the SnapSetContext

SnapSetContext *ssc = get_snapset_context(

soid, true, 0, false);

assert(ssc);

obc = create_object_context(oi, ssc);

dout(10) << __func__ << ": " << obc << " " << soid

<< " " << obc->rwstate

<< " oi: " << obc->obs.oi

<< " ssc: " << obc->ssc

<< " snapset: " << obc->ssc->snapset << dendl;

return obc;

}

}

...

...

}

}

9) PrimaryLogPG::get_snapset_context obtains the SnapSetContext of an object.

SnapSetContext *PrimaryLogPG::get_snapset_context(

const hobject_t& oid,

bool can_create,

const map<string, bufferlist> *attrs,

bool oid_existed)

{

Mutex::Locker l(snapset_contexts_lock);

SnapSetContext *ssc;

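map ...

10) PrimaryLogPG::execute_ctx is called by do_op. It checks the object state, gathers the context information, and calls prepare_transaction to package the operation into a transaction. For a read it calls the corresponding read path (synchronous or asynchronous). For a write it calls calc_trim_to to decide whether old PG log entries should be trimmed, issue_repop(repop, ctx) to send the replication request to every replica, and eval_repop(repop) to check whether every replica has replied successfully.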
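The write half of this description (send to every replica, then check whether everyone has replied) amounts to simple bookkeeping. Below is a minimal sketch of that bookkeeping; `RepGatherSketch` and its members are hypothetical names for illustration and do not reproduce the fields of Ceph's RepGather.

```cpp
#include <cassert>
#include <set>

// Hypothetical stand-in for the bookkeeping behind a replicated write:
// the primary remembers which OSDs still owe a commit reply.
struct RepGatherSketch {
  std::set<int> waiting_for_commit;

  // Record the OSDs (primary included) the write was sent to.
  void issue(const std::set<int> &acting) { waiting_for_commit = acting; }

  // Called when one OSD reports that the transaction is committed.
  void on_commit(int osd) { waiting_for_commit.erase(osd); }

  // The client can be answered once nobody is left in the set.
  bool all_committed() const { return waiting_for_commit.empty(); }
};
```

In this picture, `issue` plays the role of issue_repop and `all_committed` the role of the check made in eval_repop.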

void PrimaryLogPG::execute_ctx(OpContext *ctx)

{

FUNCTRACE();

dout(10) << __func__ << " " << ctx << dendl;

ctx->reset_obs(ctx->obc);

ctx->update_log_only = false; // reset in case finish_copyfrom() is re-running execute_ctx

OpRequestRef op = ctx->op;

const MOSDOp *m = static_cast<const MOSDOp*>(op->get_req());

ObjectContextRef obc = ctx->obc;

const hobject_t& soid = obc->obs.oi.soid;

// this method must be idempotent since we may call it several times

// before we finally apply the resulting transaction.

ctx->op_t.reset(new PGTransaction);

if (op->may_write() || op->may_cache()) {

// snap

if (!(m->has_flag(CEPH_OSD_FLAG_ENFORCE_SNAPC)) && // pool-wide snapshots

pool.info.is_pool_snaps_mode()) {

// use pool's snapc

ctx->snapc = pool.snapc; // take the snap context from the pool

} else { // client-specified snapshots, e.g. RBD

// client specified snapc

ctx->snapc.seq = m->get_snap_seq(); // take the values carried by the message

ctx->snapc.snaps = m->get_snaps();

filter_snapc(ctx->snapc.snaps);

}

if ((m->has_flag(CEPH_OSD_FLAG_ORDERSNAP)) &&

ctx->snapc.seq < obc->ssc->snapset.seq) { // the client's snap seq is older than the server's: reply with an error

dout(10) << " ORDERSNAP flag set and snapc seq " << ctx->snapc.seq

<< " < snapset seq " << obc->ssc->snapset.seq

<< " on " << obc->obs.oi.soid << dendl;

reply_ctx(ctx, -EOLDSNAPC);

return;

}

...

if (!ctx->user_at_version)

ctx->user_at_version = obc->obs.oi.user_version;

dout(30) << __func__ << " user_at_version " << ctx->user_at_version << dendl;

// for a read, take the ondisk_read_lock on the ObjectContext

if (op->may_read()) {

dout(10) << " taking ondisk_read_lock" << dendl;

obc->ondisk_read_lock();

}

{

#ifdef WITH_LTTNG

osd_reqid_t reqid = ctx->op->get_reqid();

#endif

tracepoint(osd, prepare_tx_enter, reqid.name._type,

reqid.name._num, reqid.tid, reqid.inc);

}

int result = prepare_transaction(ctx); // package the operations into the transaction ctx->op_t

{

#ifdef WITH_LTTNG

osd_reqid_t reqid = ctx->op->get_reqid();

#endif

tracepoint(osd, prepare_tx_exit, reqid.name._type,

reqid.name._num, reqid.tid, reqid.inc);

}

if (op->may_read()) {

dout(10) << " dropping ondisk_read_lock" << dendl;

obc->ondisk_read_unlock();

}

bool pending_async_reads = !ctx->pending_async_reads.empty();

if (result == -EINPROGRESS || pending_async_reads) {

// come back later.

if (pending_async_reads) {

assert(pool.info.is_erasure());

in_progress_async_reads.push_back(make_pair(op, ctx));

ctx->start_async_reads(this); // there are pending async reads: start them

}

return;

}

if (result == -EAGAIN) {

// clean up after the ctx

close_op_ctx(ctx);

return;

}

bool successful_write = !ctx->op_t->empty() && op->may_write() && result >= 0;

// prepare the reply

ctx->reply = new MOSDOpReply(m, 0, get_osdmap()->get_epoch(), 0,

successful_write, op->qos_resp);

// read or error?

if ((ctx->op_t->empty() || result < 0) && !ctx->update_log_only) {

// finish side-effects

if (result >= 0)

do_osd_op_effects(ctx, m->get_connection());

complete_read_ctx(result, ctx); // synchronous read: complete it here

return;

}

ctx->reply->set_reply_versions(ctx->at_version, ctx->user_at_version);

assert(op->may_write() || op->may_cache());

// trim log?

calc_trim_to(); // decide whether old PG log entries should be trimmed

...

...

issue_repop(repop, ctx); // send the replication request to every replica

eval_repop(repop); // check whether every replica has replied successfully

repop->put();

}

11) PrimaryLogPG::issue_repop sends the request to the replica OSDs for processing.

void PrimaryLogPG::issue_repop(RepGather *repop, OpContext *ctx)

{

FUNCTRACE();

const hobject_t& soid = ctx->obs->oi.soid;

dout(7) << "issue_repop rep_tid " << repop->rep_tid

<< " o " << soid

<< dendl;

repop->v = ctx->at_version;

if (ctx->at_version > eversion_t()) {

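for (set ...

12) ReplicatedBackend::submit_transaction finally calls the network interface to send the update to the replica OSDs, and calls queue_transactions to apply the change on the PG's primary OSD.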

void ReplicatedBackend::submit_transaction(

const hobject_t &soid,

const object_stat_sum_t &delta_stats,

const eversion_t &at_version,

PGTransactionUPtr &&_t,

const eversion_t &trim_to,

const eversion_t &roll_forward_to,

const vector<pg_log_entry_t> &_log_entries,

boost::optional<pg_hit_set_history_t> &hset_history,

Context *on_local_applied_sync,

Context *on_all_acked,

Context *on_all_commit,

ceph_tid_t tid,

osd_reqid_t reqid,

OpRequestRef orig_op)

{

parent->apply_stats(

soid,

delta_stats);

vector ...

13) The queue_transactions function called here goes down into the OS (ObjectStore) layer.

The function that is called is located in PrimaryLogPG.h:

void queue_transactions(vector ...

Here osd->store is declared as:

ObjectStore *store;