- Optimizing Apache Spark Performance: A Guide to JVM Parameter Configuration

weixin_30777913

jvm, spark, big data, programming languages, performance optimization

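Apache Spark runs on the JVM, so the JVM's garbage collection (GC), memory management and off-heap memory usage directly affect how efficiently Spark jobs execute. Configuring JVM parameters properly is therefore a key step in optimizing Spark performance. The optimization strategies and configuration recommendations are described in detail below. These optimizations can significantly reduce GC pause times and improve memory utilization, which in turn raises the throughput and data-processing efficiency of Spark jobs. Settings should also be tuned to the specific workload and cluster configuration, and the Spark application should be monitored regularly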
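Monitoring helps confirm whether JVM tuning actually reduces GC overhead. Collector flags such as -XX:+UseG1GC are commonly passed through spark.executor.extraJavaOptions. One way to check the result is to read the JVM's own GC counters, as in the minimal sketch below. It uses only the standard java.lang.management API, so it works in any JVM, including a Spark driver or executor. The class name GcStats is hypothetical, and wiring it into a Spark job (for example, logging from a task) is not shown. Spark's web UI also reports GC time per task and per executor.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;

class GcStats {
    /** Total accumulated GC time in milliseconds across all collectors of this JVM. */
    static long totalGcTimeMillis() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long t = gc.getCollectionTime(); // -1 means "undefined" for this collector
            if (t > 0) {
                total += t;
            }
        }
        return total;
    }

    /** Total number of collections performed so far across all collectors. */
    static long totalGcCount() {
        long total = 0;
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            long c = gc.getCollectionCount(); // -1 means "undefined" for this collector
            if (c > 0) {
                total += c;
            }
        }
        return total;
    }

    public static void main(String[] args) {
        // Per-collector breakdown (names depend on the collector the JVM was started with).
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            System.out.printf("%s: collections=%d, time=%d ms%n",
                    gc.getName(), gc.getCollectionCount(), gc.getCollectionTime());
        }
        System.out.printf("total: collections=%d, time=%d ms%n", totalGcCount(), totalGcTimeMillis());
    }
}
```

Comparing these totals before and after a change in JVM flags, under the same workload, gives a rough measure of GC overhead.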

- GraphCube, Spark and Deep Learning Empower Key Operational Processes in the FMCG Industry

weixin_30777913

programming languages, big data, deep learning, artificial intelligence, spark

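In the fast-moving consumer goods (FMCG) industry, Demand Planning, Inventory Management and Demand Supply Management are key operational processes that affect a company's overall efficiency and profitability. GraphCube (graph multidimensional dataset) technology, Spark big data analytics and deep learning can be combined to give these processes intelligent, dynamic and real-time solutions, significantly improving operational efficiency and corporate profits. I. Technology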

- [New Release] NVIDIA Launches DGX Spark, the World's Smallest Personal AI Supercomputer

segmentfault

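At GTC 2025, NVIDIA officially launched DGX Spark, a personal AI supercomputer built on the NVIDIA Grace Blackwell platform. Zanqi (赞奇) is accepting pre-orders; send a private message to the account to pre-order now! DGX Spark (formerly Project DIGITS) lets AI developers, researchers, data scientists and students prototype, fine-tune and run inference on large models from a desktop computer. Users can run these models locally, or deploy them on NVIDIA DGX Cloud or any other accelerated cloud or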

- Kafka Connect Node.js Connector Guide

丁操余

Kafka Connect Node.js Connector Guide: kafka-connect, the equivalent of kafka-connect for Node.js.

Project URL: https://gitcode.com/gh_mirrors/ka/kafka-connect

Project introduction: Kafka Connect Node.js Conn

- JAVA Learning: Practicing with Java to Implement “Parsing Network Logs in a Large Dataset and Screening for Anomalous Behavior”

守护者170

java learning

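Problem: Write a Spark program that parses network logs in a large dataset and screens them for anomalous behavior. Approach: Below is a simple Spark program that parses network logs and screens them for anomalous behavior. The example assumes the log file has the following format:

timestamp,ip_address,user_id,action,event,extra_info

2023-01-01 12:00:00,192.168.1.1,123,login,success,none

202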
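The article's Spark program is truncated. The plain-Java sketch below shows the per-record logic such a job would apply; in Spark, it would sit inside map/filter/reduce steps. The sketch parses the format above and flags IPs with repeated failed logins. The event value "failure", the threshold rule, the sample lines and the class and method names are all assumptions, not taken from the article.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.Set;
import java.util.TreeSet;

class LogScreening {
    /** One record in the assumed format: timestamp,ip_address,user_id,action,event,extra_info */
    static final class LogEntry {
        final String timestamp, ip, userId, action, event, extraInfo;

        LogEntry(String[] f) {
            timestamp = f[0].trim();
            ip = f[1].trim();
            userId = f[2].trim();
            action = f[3].trim();
            event = f[4].trim();
            extraInfo = f[5].trim();
        }
    }

    /** Parses one line; malformed lines (wrong field count) yield Optional.empty() instead of throwing. */
    static Optional<LogEntry> parse(String line) {
        String[] fields = line.split(",", -1);
        return fields.length == 6 ? Optional.of(new LogEntry(fields)) : Optional.empty();
    }

    /** Hypothetical anomaly rule: flag IPs with at least `threshold` failed logins. */
    static Set<String> suspiciousIps(List<String> lines, int threshold) {
        Map<String, Integer> failures = new HashMap<>();
        for (String line : lines) {
            parse(line)
                    .filter(e -> e.action.equals("login") && e.event.equals("failure"))
                    .ifPresent(e -> failures.merge(e.ip, 1, Integer::sum)); // count failures per IP
        }
        Set<String> flagged = new TreeSet<>();
        failures.forEach((ip, count) -> {
            if (count >= threshold) {
                flagged.add(ip);
            }
        });
        return flagged;
    }

    public static void main(String[] args) {
        List<String> lines = List.of(
                "2023-01-01 12:00:00,192.168.1.1,123,login,success,none",
                "2023-01-01 12:01:00,10.0.0.7,456,login,failure,none",
                "2023-01-01 12:01:05,10.0.0.7,456,login,failure,none",
                "2023-01-01 12:01:09,10.0.0.7,456,login,failure,none",
                "malformed line");
        System.out.println(suspiciousIps(lines, 3)); // [10.0.0.7]
    }
}
```

Malformed lines are skipped rather than failing the job. At large-dataset scale, this matters because one bad line should not abort a whole Spark stage.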

- JAVA Learning: Practicing with Java to Implement “A Spark Application for Sentiment Analysis and Keyword Filtering on Text Data in a Large Dataset”

守护者170

java learning

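Problem: Implement a Spark application that performs sentiment analysis and keyword filtering on text data in a large dataset. Approach: Implementing this application involves the following steps. 1. Environment preparation: make sure Apache Spark is installed in your environment; it can be downloaded from the [Apache Spark website](https://spark.apache.org/downloads.html). 2. Create the Spark application. The following is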
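The article's application code is truncated. The plain-Java sketch below shows one simple per-document logic that such a Spark job could apply: a lexicon-based sentiment score plus a keyword filter. The word lists, the scoring rule and all names are hypothetical. Lexicon scoring is a deliberately simple stand-in for a real model. Tokenization here is ASCII-only, so Chinese text would need a word segmenter.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Locale;
import java.util.Set;
import java.util.stream.Collectors;

class SentimentSketch {
    // Hypothetical toy lexicons; a real application would use a trained model or a curated lexicon.
    static final Set<String> POSITIVE = Set.of("good", "great", "love", "excellent");
    static final Set<String> NEGATIVE = Set.of("bad", "terrible", "hate", "poor");

    /** Lower-cases the text and splits it on runs of non-alphanumeric ASCII characters. */
    static List<String> tokenize(String text) {
        return Arrays.stream(text.toLowerCase(Locale.ROOT).split("[^a-z0-9]+"))
                .filter(t -> !t.isEmpty())
                .collect(Collectors.toList());
    }

    /** Lexicon score: +1 per positive token, -1 per negative token. */
    static int score(String text) {
        int s = 0;
        for (String t : tokenize(text)) {
            if (POSITIVE.contains(t)) s++;
            else if (NEGATIVE.contains(t)) s--;
        }
        return s;
    }

    static String label(String text) {
        int s = score(text);
        return s > 0 ? "positive" : (s < 0 ? "negative" : "neutral");
    }

    /** Keeps texts that contain at least one keyword (keywords must be lower-case). */
    static List<String> filterByKeywords(List<String> texts, Set<String> keywords) {
        return texts.stream()
                .filter(t -> tokenize(t).stream().anyMatch(keywords::contains))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> docs = List.of("I love Spark, it is great", "This job failed, terrible", "Hello world");
        for (String d : docs) {
            System.out.println(label(d) + ": " + d);
        }
        System.out.println(filterByKeywords(docs, Set.of("spark", "job")));
    }
}
```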

- Hive and Spark UDFs: Comparing Two Data-Processing Power Tools in Practice

窝窝和牛牛

hive, spark, hadoop

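Contents: Hive and Spark UDFs: Comparing Two Data-Processing Power Tools in Practice; 1. UDF overview; 2. Hive UDF analysis (implementation principle, code example, business applications); 3. Spark UDF deep dive, JDBC approach (setting up Spark Thrift Server, using UDFs through JDBC, a Java implementation of a Spark UDF for the JDBC approach, connecting with the beeline client, business application scenarios); 4. Comparison of Hive and Spark UDFs in JDBC mode; 5. Real-world deployment and best practices; 6. Summary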

- Atguigu E-commerce Data Warehouse 6.0, Hive on Spark: Spark Fails to Start

新时代赚钱战士

hive, spark, hadoop

When executing a partition insert statement in DataGrip, the following error was reported: [42000][40000] Error while compiling statement: FAILED: SemanticException Failed to get a spark session: org.apache.hadoop.hive.ql.metadata.HiveException: Failed to create Spark client for Spark sessio

- Data Middle Platform (Part 2): The Related Technology Stack

Yuan_CSDF

#data middle platform

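1. Platform setup

1.1. Ambari + HDP

1.2. CM + CDH

2. Related technology stack

Data storage: HDFS, HBase, Kudu, etc.

Data computation: MapReduce, Spark, Flink

Interactive query: Impala, Presto

Online real-time analysis: ClickHouse, Kylin, Doris, Druid, Kudu, etc.

Resource scheduling: YARN, Mesos, Kubernetes

Task scheduling: Oozie, Azkaban, Airflow,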

- Understand Spark, the Big Data Powerhouse, in One Article: It's Truly Impressive!

qq_23519469

big data, spark, distributed

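What is Spark? In today's big data era, data volumes are growing explosively, and traditional data-processing methods can no longer keep up. Take an e-commerce platform: the transaction data, browsing data, review data and other data it produces every day are huge in volume and varied in type. Suppose you want to analyze this data, for example to understand users' purchasing behavior, find the most popular products or forecast future sales trends. With an ordinary single-machine approach, this could take a very long time or might not finish at all. This is where Spark comes in. Spark is an open-source, in-memory computing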

- Flink Reading Data from Kafka and Writing It to HDFS

王知无(import_bigdata)

Flink systematic learning column, hdfs, kafka, flink

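Links to the Hardcore Big Data series: 2021 Guide from Zero to Big Data Expert (Fully Upgraded Edition); 2021 From Zero to Big Data Expert, Interview Series: Hadoop/HDFS/Yarn; 2021 From Zero to Big Data Expert, Interview Series: Spark SQL; 2021 From Zero to Big Data Expert, Interview Series: Message Queues; 2021 From Zero to Big Data Expert, Interview Series: Spark; 2021 From Zero to Big Data Expert, Interview Series: HBase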

- DeepRoute.ai's Latest Strategy RoadAGI: All Mobile Agents Will Be Driven by AI

量子位

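On March 18, 2025 (Beijing time), DeepRoute.ai attended the GTC conference hosted by NVIDIA as a representative of China's AI companies. At the event, CEO Zhou Guang gave a technical keynote presenting the company's latest strategy, RoadAGI. He also released AI Spark (hereinafter “the Spark platform”), a general artificial intelligence platform for roads. RoadAGI is a key step for DeepRoute.ai toward general artificial intelligence in the physical world. It aims to let mobile agents, including intelligent-driving vehicles, drive autonomously on roads and interact deeply with the physical world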

- Spark SQL Programming: RDD, DataFrame, DataSet

早拾碗吧

spark, hadoop, big data, sparksql

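Relationship among the three: In Spark SQL, Spark provides two new abstractions, DataFrame and DataSet. How do they differ from RDD? First, consider when they appeared: RDD (Spark 1.0) → DataFrame (Spark 1.3) → Dataset (Spark 1.6). Given the same data, all three data structures produce the same result; what differs is their execution efficiency and execution model. In later Spark versions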

- How Spark Read Sftp Files from Hadoop SFTP FileSystem

IT•轩辕

Cloudy Computation, spark, hadoop, big data

Gradle dependencies:

implementation('org.apache.spark:spark-sql_2.13:3.5.3') { exclude group: "org.apache.logging.log4j", module: "log4j-slf4j2-impl" }

implementation('org.apache.hadoop:hadoop-common:3.3.4') {exc

- PySpark Encounters **Py4JJavaError** Traceback (most recent call last) ~\AppData\

2pi

spark, python

Py4JJavaError Traceback (most recent call last)

~\AppData\Local\Temp/ipykernel_22732/1401292359.py in

----> 1 feat_df.show(5, vertical=True)

D:\Anaconda3\envs\recall-service-cp4\lib\site-packages\pyspark\sql\data

- 中电金信 Pre-Interview Written Test, 25/3/18 (Requirements Analysis Role + Data Development Role)

苍曦

requirements analysis, front end, javascript

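Questions that repeat in the second test (data development role) are not explained again there. The explanations come from Doubao AI and may not be correct; this article only aims to serve as a quick reference and a supplement of knowledge points. I. Requirements analysis. Question 1 (single choice): The core components of Hadoop include HDFS and which of the following? MapReduce / Spark / Storm / Flink. Explanation: Hadoop's core components are HDFS (the distributed file system) and MapReduce (the distributed computing framework). Spark, Storm and Flink are also big data processing technologies, but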

- Starting and Stopping a Spark Cluster

陈沐

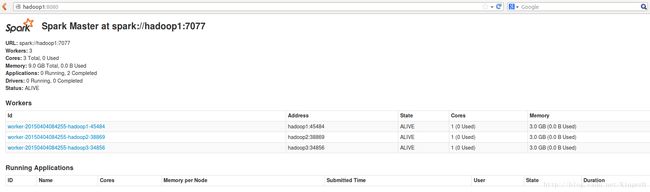

spark, hadoop, bigdata

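Starting and stopping the Hadoop cluster and Spark. Starting the Hadoop cluster:

Start ZooKeeper on all three virtual machines: zkServer.sh start

On master1, start HDFS: start-dfs.sh

On slave1, start YARN: start-yarn.sh

On slave2, start the YARN ResourceManager: yarn-daemon.sh start resourcemanager (if the NodeManager has not started (normally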

- Spark Explained: spark.sparkContext.getConf().getAll()

闯闯桑

spark, big data, distributed

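spark.sparkContext.getConf().getAll() is a piece of Apache Spark code that retrieves all configuration entries of the current Spark application together with their values. A part-by-part explanation follows. Code breakdown: spark: this is a SparkSession object, the entry point of a Spark application, used to interact with the Spark cluster. spark.sparkContext: sparkContext is Spark's core component, responsible for communicating with the cluster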

- Hybrid Pandas and PySpark Computing in Practice: A Smart Data-Processing Solution That Breaks Single-Machine Limits

Eqwaak00

Pandas, pandas learning, python, technology, programming languages

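Introduction: the hybrid computing revolution in the big data era. Once data grows beyond a billion records, traditional single-machine Pandas hits bottlenecks such as out-of-memory errors and slow computation. PySpark can handle petabyte-scale data, but it falls short in development efficiency and in flexibility for local computation. This article shows how to build a Pandas + PySpark hybrid computing pipeline. The pipeline keeps the convenience of Pandas while using Spark's distributed engine to achieve a hundredfold performance improvement. The full workflow is demonstrated with a real e-commerce user-profiling case. I. Principles of the hybrid architecture design. 1.1 Analysis of the technology stack's advantages: Dimension P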

- Customizing Where metastore_db and derby.log Are Generated When Spark Starts

节昊文

spark, big data, distributed

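1. Go to the conf directory of your Spark installation.

2. Copy spark-defaults.conf.template to spark-defaults.conf.

3. Append the following line to the end of spark-defaults.conf: spark.driver.extraJavaOptions -Dderby.system.home=/log (/log is the directory where the generated files will be stored).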

- An Introduction to the Basic Concepts of Apache Spark and Its Applications in Big Data Analytics

佛渡红尘

apache

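Apache Spark is an open-source cluster computing framework for large-scale data processing and analysis, originally developed at the AMPLab of the University of California, Berkeley. Compared with the traditional MapReduce framework, Spark processes data faster and offers more powerful computation. The basic concepts of Apache Spark include: Resilient Distributed Dataset (RDD): Spark's basic data abstraction, a partitioned collection of records that can be operated on in parallel. RDDs can be computed in a distributed fashion across the nodes of a cluster. Transformation (Transform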
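The RDD model described above splits work into transformations, which only describe a computation, and actions, which trigger it. By analogy only, java.util.stream behaves similarly on a single machine: intermediate operations run nothing until a terminal operation is invoked. This is not Spark code. The class name and call counter are hypothetical, added just to make the laziness observable.

```java
import java.util.List;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.stream.Collectors;
import java.util.stream.Stream;

class LazyDemo {
    /** Counts how many times the map function has actually run. */
    static final AtomicInteger calls = new AtomicInteger();

    /** Builds a pipeline of "transformations"; nothing executes yet. */
    static Stream<Integer> pipeline(List<Integer> data) {
        return data.stream()
                .map(x -> { calls.incrementAndGet(); return x * 2; }) // like an RDD map
                .filter(x -> x > 4);                                  // like an RDD filter
    }

    public static void main(String[] args) {
        Stream<Integer> s = pipeline(List.of(1, 2, 3, 4));
        System.out.println("calls before action: " + calls.get()); // 0
        List<Integer> result = s.collect(Collectors.toList());     // terminal op, like an action
        System.out.println("calls after action: " + calls.get());  // 4
        System.out.println(result);                                // [6, 8]
    }
}
```

The analogy has limits. A Java stream can be consumed only once and runs in one JVM. An RDD is partitioned across the cluster, and its lineage lets Spark recompute lost partitions.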

- From “Clumsy Elephant” to “Agile Spark”: The Evolution of Big Data Technology from Hadoop to Spark

Echo_Wish

big data, hadoop, spark

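From “Clumsy Elephant” to “Agile Spark”: The Evolution of Big Data Technology from Hadoop to Spark. When it comes to big data technology, Hadoop and Spark are the two milestones of the field. Hadoop was the pioneering work of big data, while Spark led us into a new era of efficient, flexible big data processing. So what is the deeper meaning of their evolution? And what do the technical trade-offs and choices behind it imply? I. Hadoop: the founder of distributed storage and computing. Hadoop was born in an era of explosive growth in Internet traffic,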

- Hive vs. Spark SQL: Syntax Differences and Performance Comparison

自然术算

Hive, hadoop, big data, spark

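In big data processing, Hive and Spark SQL are both extremely important tools. Both provide efficient solutions for storing, querying and analyzing large-scale data. Both focus on structured data and use SQL-like syntax for ease of use, yet in practice they differ in many syntax details and in performance. Understanding these differences is essential for choosing the right tool for a given business scenario. Syntax differences. Data Definition Language (DDL). Table creation syntax. Hive: when creating a table in Hive, you need to specify in detail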

- A Spark Job That Reads Hive Table Data and Imports It into ES

小小小小小小小小小小码农

hive, elasticsearch, spark, java

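Importing Hive table data into ES with elasticsearch-hadoop is super simple.

1. Add the pom dependency: groupId org.elasticsearch, artifactId elasticsearch-hadoop, version 9.0.0-SNAPSHOT

2. Create the SparkConf:

// Spark parameter settings

SparkConf sparkConf = new SparkConf();

// index to write to

sparkConf.set("es.resource", "");

// ES cluster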

- Using Row in Spark SQL

闯闯桑

sparksql, big data, programming languages

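In Apache Spark, Row is a class that represents one row of data. It is the basic data unit of a DataFrame or Dataset in Spark SQL. Each row of data is represented by a Row object, and each field in a Row corresponds to one column of the data. How Row is used: Row objects typically appear in the following scenarios. Creating data: when you create data manually, you can use a Row object to represent each row. Accessing data: when you extract data from a DataFrame or Dataset, each row is a Row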

- Implementing Simple Spark SQL Validation

小小小小小小小小小小码农

sparksql, java

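Most of the references I found online depend on SparkSession. That requires a Spark environment, which is very inconvenient, and the JDK version is hard to control. Without using SparkSession to obtain a context, this approach uses static methods from Spark and ANTLR to implement simple validation of Spark SQL syntax and built-in functions in Java. 1. Spark version 3.2.0: org.apache.spark spark-sql_2.12 3.2.0; org.antlr antlr4-runtim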

- Installing PySpark and Implementing WordCount (on Ubuntu)

uui1885478445

ubuntu, linux, operations

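Installing PySpark on Ubuntu and implementing WordCount takes the following steps. Install PySpark. Install Java: PySpark needs a Java runtime environment. You can install OpenJDK with the following commands:

sudo apt update

sudo apt install default-jre default-jdk

Install Scala: PySpark is said to also need Scala, which can be installed with the following command. Strictly speaking, the PySpark package already bundles the Scala libraries that Spark needs, so a separate Scala installation is usually optional.

sudo apt install scala

Install Pyth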
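The article's WordCount code is truncated. In PySpark, WordCount is usually written with the RDD operations flatMap, map and reduceByKey. The plain-Java sketch below shows the same split, group and count logic on a single machine with java.util.stream. The class name is hypothetical, and this is not the article's code.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

class WordCount {
    /** Splits lines on whitespace and counts each word; a TreeMap keeps the output sorted by word. */
    static Map<String, Long> count(List<String> lines) {
        return lines.stream()
                .flatMap(line -> Arrays.stream(line.split("\\s+"))) // one element per word, like flatMap
                .filter(w -> !w.isEmpty())                          // a leading space yields an empty token
                .collect(Collectors.groupingBy(w -> w, TreeMap::new, Collectors.counting())); // like reduceByKey
    }

    public static void main(String[] args) {
        System.out.println(count(List.of("hello spark", "hello world"))); // {hello=2, spark=1, world=1}
    }
}
```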

- Big Data Handbook (Spark): Spark Installation and Configuration

WilenWu

Data Analysis, big data, spark, distributed

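This article assumes installation and configuration in a zsh terminal; if you use bash, the environment-variable configuration file changes accordingly. If the installation package downloads slowly, you can copy the link into Xunlei (Thunder), which was extremely fast in my tests. Preparation: Installing Spark is fairly simple. With Hadoop already installed, Spark can be used after a little configuration. Assume Hadoop (pseudo-distributed mode) and Hive are already installed, with the following environment variables:

JAVA_HOME=/usr/opt/jdk

HADOOP_HOME=/usr/local/hadoop

HIVE_HO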

- A Survey of AI Search Products in China and Abroad

Suee2020

artificial intelligence

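| No. | AI search product | Description | Website | Developer |
|---|---|---|---|---|
| 1 | Perplexity | Powerful conversational AI search engine | https://www.perplexity.ai | Perplexity |
| 2 | Genspark | AI agent search engine | https://www.genspark.ai | MainFunc (Jing Kun, Zhu Kaihua) |
| 3 | Kimi.ai | Intelligent assistant | https://kimi.moonshot.cn/ | Moonshot AI (Yang Zhilin) |
| 4 | 秘塔AI搜索 (Metaso AI Search) | AI search engine | http | |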

- HIVE Window Functions

Cciccd

sql, hive

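ETL and SQL interview hot topic: HIVE window functions (basics). Contents: I. Introduction to window functions; II. Windowing functions; III. Categories of analytic functions: 1. Ranking analytic functions (worked examples, comparison, summary); 2. Aggregate analytic functions; 3. Defining HIVE user-defined functions with Spark; more to come. I. Introduction to window functions: window functions, also called OLAP (Online Analytical Processing) functions, can operate on database data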
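The ranking analytic functions listed above differ only in how they treat ties. The sketch below reproduces, in plain Java, what ROW_NUMBER(), RANK() and DENSE_RANK() OVER (ORDER BY score DESC) assign within a single partition. It assumes the input is already sorted in the ORDER BY order. The class and method names are hypothetical; Hive computes this itself.

```java
import java.util.Arrays;

class RankingSketch {
    /**
     * For values already sorted in ORDER BY order, returns {rowNumber, rank, denseRank}.
     * ROW_NUMBER: always 1..n. RANK: ties share a rank and leave gaps. DENSE_RANK: ties share, no gaps.
     */
    static int[][] rankings(int[] sorted) {
        int n = sorted.length;
        int[] rowNumber = new int[n], rank = new int[n], denseRank = new int[n];
        for (int i = 0; i < n; i++) {
            rowNumber[i] = i + 1;
            if (i > 0 && sorted[i] == sorted[i - 1]) { // tie with the previous row
                rank[i] = rank[i - 1];
                denseRank[i] = denseRank[i - 1];
            } else {
                rank[i] = i + 1;                       // jumps past the tied rows
                denseRank[i] = (i == 0) ? 1 : denseRank[i - 1] + 1;
            }
        }
        return new int[][] {rowNumber, rank, denseRank};
    }

    public static void main(String[] args) {
        int[][] r = rankings(new int[] {100, 90, 90, 80});
        System.out.println("ROW_NUMBER: " + Arrays.toString(r[0])); // [1, 2, 3, 4]
        System.out.println("RANK:       " + Arrays.toString(r[1])); // [1, 2, 2, 4]
        System.out.println("DENSE_RANK: " + Arrays.toString(r[2])); // [1, 2, 2, 3]
    }
}
```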

- Pascal's (Yang Hui) Triangle in Java

3213213333332132

java basics

package com.algorithm;

/**

* @Description Pascal's (Yang Hui) triangle

* @author FuJianyong

* 2015-1-22 10:10:59 AM

*/

public class YangHui {

public static void main(String[] args) {

// initialize the length of the 2D array

int[][] y
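The original class is cut off right after the array declaration. Below is a minimal, complete sketch of the usual approach, offered as an illustration rather than the author's code. It uses a jagged 2D array, sets both edges of each row to 1 and makes every inner entry the sum of the two entries above it. The class name is hypothetical.

```java
import java.util.Arrays;

class YangHuiSketch {
    /** Builds the first `rows` rows of Pascal's (Yang Hui) triangle as a jagged 2D array. */
    static int[][] triangle(int rows) {
        int[][] y = new int[rows][];
        for (int i = 0; i < rows; i++) {
            y[i] = new int[i + 1];
            y[i][0] = y[i][i] = 1;                       // both edges of every row are 1
            for (int j = 1; j < i; j++) {
                y[i][j] = y[i - 1][j - 1] + y[i - 1][j]; // sum of the two entries above
            }
        }
        return y;
    }

    public static void main(String[] args) {
        for (int[] row : triangle(5)) {
            System.out.println(Arrays.toString(row));
        }
    }
}
```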

- 《大话重构》 (Talking About Refactoring): The Bitter History of a Big-Layout Refactoring

白糖_

refactoring

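《大话重构》 warns that “you can't afford a big re-layout”: trying to refactor an old, large system carries great risk, and refactoring is not as simple as one might imagine. My current company happened to carry out exactly such a “big-layout refactoring” of its product, so below I share the experience of this “big layout” project.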

Background

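The company specializes in enterprise management software, and enterprises come in large, medium and small sizes. In the early 2000s the company used JSP/Servlet to develop a suite aimed at mid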

- Source Code for Playing eDonkey (ed2k) Links Online

dubinwei

source code, eDonkey, player, video, ed2k

This project is an app for searching eDonkey (ed2k) links. With the help of the Magnet Video Player (official site:

http://loveandroid.duapp.com/ open platform), videos can be played online, or downloaded with Xunlei (Thunder) or other download tools.

Project source code:

http://git.oschina.net/svo/Emule (continuously updated). It can also be downloaded from the attachment.

The project source depends on two library projects. Library project 1:

http://git.oschina.

- The toString() Method of Functions in JavaScript

周凡杨

JavaScript, js, toString, function, object

Overview

The toString() method returns a string representing the source code of the function.

In short: the toString() method of a JavaScript function returns a string representing the function's source code.

Syntax

function.

- Handling Custom Exceptions in Struts

g21121

struts

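We often use custom exceptions to represent specific error conditions. Defining one is fairly simple; you only need to decide whether it is a runtime (unchecked) or a non-runtime (checked) exception. Runtime exceptions extend RuntimeException and do not have to be caught or declared. They are typically raised while the program runs, for example by the JVM, as with NullPointerException.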

Non-runtime exceptions extend Exception and, once thrown, must be caught (or declared with throws), for example FileNotFoundException.

Here we use a non-runtime exception. First, define an exception class, LoginException:

/**

* Class description: login-related
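The article's LoginException definition is truncated. The sketch below shows what a checked custom exception of that kind can look like and how the compiler forces callers to handle it. The username field, the LoginService class and the hard-coded password check are hypothetical. Mapping the exception to a result page in the Struts configuration is not shown.

```java
class LoginService {
    /** Hypothetical credential check: throws the checked LoginException on failure. */
    static void login(String username, String password) throws LoginException {
        if (!"secret".equals(password)) { // hard-coded password, for illustration only
            throw new LoginException(username, "Invalid username or password");
        }
    }

    public static void main(String[] args) {
        try {
            login("alice", "wrong");
        } catch (LoginException e) { // checked: callers must catch it or declare it with throws
            System.out.println(e.getUsername() + ": " + e.getMessage());
        }
    }
}

/** A checked (non-runtime) exception for login failures; extends Exception, not RuntimeException. */
class LoginException extends Exception {
    private static final long serialVersionUID = 1L;
    private final String username; // hypothetical extra context for error reporting

    LoginException(String username, String message) {
        super(message);
        this.username = username;
    }

    String getUsername() {
        return username;
    }
}
```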

- Common Examples of find Usage in Linux

510888780

linux

Common examples of find usage in Linux

find path -option [ -print ] [ -exec -ok command ] {} \;

Parameters of the find command:

- Parameter-Binding Approaches in SpringMVC

Harry642

springMVC, binding, form

1. Primitive data types (using int as an example; the others are similar):

Controller code:

@RequestMapping("saysth.do")

public void test(int count) {

}

Form code:

<form action="saysth.do" method="post&q

- Getting an Oracle ROWID in Java

aijuans

java, oracle

A ROWID is an identification tag unique for each row of an Oracle Database table. The ROWID can be thought of as a virtual column, containing the ID for each row.

The oracle.sql.ROWID class i

- Getting Method Parameter Names in Java

antlove

java, jdk, parameter, method, reflect

reflect.ClassInformationUtil.java

package reflect;

import javassist.ClassPool;

import javassist.CtClass;

import javassist.CtMethod;

import javassist.Modifier;

import javassist.bytecode.CodeAtt
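The article reads parameter names with javassist, and its code is truncated here. A different technique, named plainly, is to use the JDK's own reflection API, available since Java 8: Method.getParameters() and Parameter.getName(). Real names are returned only when the class was compiled with javac -parameters. Otherwise the JVM reports synthetic names such as arg0 and arg1, and Parameter.isNamePresent() returns false. The target method and class name below are hypothetical.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Parameter;
import java.util.ArrayList;
import java.util.List;

class ParamNames {
    /** Hypothetical target method whose parameter names we want to read. */
    public static int add(int first, int second) {
        return first + second;
    }

    /** Parameter names via reflection: real names with `javac -parameters`, else arg0, arg1, ... */
    static List<String> names(Method m) {
        List<String> out = new ArrayList<>();
        for (Parameter p : m.getParameters()) {
            out.add(p.getName());
        }
        return out;
    }

    public static void main(String[] args) throws NoSuchMethodException {
        Method m = ParamNames.class.getMethod("add", int.class, int.class);
        for (Parameter p : m.getParameters()) {
            System.out.println(p.getName() + " (name present in class file: " + p.isNamePresent() + ")");
        }
    }
}
```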

- Java Regular Expressions: Matching, Finding, Replacing and Extracting

百合不是茶

java, regular expressions, replace, extract, find

Splitting with regular expressions: this mainly uses the split() method of the String class;

String str;

str.split() takes the rule (a regular expression) by which to split and returns a String array

Common splitting rules:

str.split("\\.") splits on a literal .

str.
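Splitting covers only one of the operations in the title. The sketch below shows the other three with java.util.regex: Matcher.matches() for whole-string matching, a Matcher.find() loop with group() for finding and extracting, and Matcher.replaceAll() with $n group references for replacing. The date pattern and sample text are hypothetical and chosen only for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class RegexOps {
    // Hypothetical pattern: yyyy-MM-dd with three capturing groups (year, month, day).
    static final Pattern DATE = Pattern.compile("(\\d{4})-(\\d{2})-(\\d{2})");

    /** Match: does the WHOLE string look like a date? */
    static boolean isDate(String s) {
        return DATE.matcher(s).matches();
    }

    /** Find + extract: the year group of every date occurring anywhere in the text. */
    static List<String> years(String text) {
        List<String> out = new ArrayList<>();
        Matcher m = DATE.matcher(text);
        while (m.find()) {
            out.add(m.group(1));
        }
        return out;
    }

    /** Replace: rewrite every yyyy-MM-dd as dd/MM/yyyy using group references. */
    static String reformat(String text) {
        return DATE.matcher(text).replaceAll("$3/$2/$1");
    }

    public static void main(String[] args) {
        String text = "Released 2023-05-01, patched 2024-06-30.";
        System.out.println(isDate("2024-06-30")); // true
        System.out.println(years(text));          // [2023, 2024]
        System.out.println(reformat(text));       // Released 01/05/2023, patched 30/06/2024.
    }
}
```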

- A Detailed Look at equals() and hashCode() in Java

bijian1013

java, set, equals(), hashCode()

I. equals() in detail

The equals() method is defined in the Object class as follows:

public boolean equals(Object obj) {

return (this == obj);

}

Clearly, this compares the addresses of the two objects (that is, whether the two references are the same). But we know that String, Math, I
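Overriding equals() alone is not enough for hash-based collections. Objects that are equal must also return the same hashCode(), or a HashSet may keep both. The sketch below uses a hypothetical value class, Point, that overrides both methods consistently, so two distinct instances with the same coordinates count as one set element.

```java
import java.util.HashSet;
import java.util.Objects;
import java.util.Set;

final class Point {
    private final int x, y;

    Point(int x, int y) {
        this.x = x;
        this.y = y;
    }

    @Override
    public boolean equals(Object o) {
        if (this == o) return true;                // same reference: trivially equal
        if (!(o instanceof Point)) return false;   // also handles o == null
        Point p = (Point) o;
        return x == p.x && y == p.y;               // compare state, not addresses
    }

    @Override
    public int hashCode() {
        return Objects.hash(x, y);                 // equal points produce equal hash codes
    }

    public static void main(String[] args) {
        Set<Point> set = new HashSet<>();
        set.add(new Point(1, 2));
        set.add(new Point(1, 2));                  // a different object, but equal
        System.out.println(set.size());            // 1
    }
}
```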

- Mastering Oracle 10 SQL Programming (4): Using SQL Statements

bijian1013

oracle, database, plsql

-- salary grade table

create table SALGRADE

(

GRADE NUMBER(10),

LOSAL NUMBER(10,2),

HISAL NUMBER(10,2)

);

insert into SALGRADE values(1,0,100);

insert into SALGRADE values(2,100,200);

inser

- [Nginx Part 2] Nginx as a Static File HTTP Server

bit1129

HTTP server

Nginx as a static file HTTP server

Create the /data/www directory on the local system to hold the HTML files (including index.html)

Create the /data/images directory to hold the images

Add an http directive to the main configuration file

http {

server {

listen 80;

server_name

- Getting the Latest Partition Offset in Kafka

blackproof

kafka, partition, offset, latest

Getting a partition's offset in Kafka requires Kafka's SimpleConsumer

import java.util.ArrayList;

import java.util.Collections;

import java.util.Date;

import java.util.HashMap;

import java.util.List;

import java.

- Two Ways to Install Docker on CentOS 7

ronin47

The first way uses yum

yum install -y docker

- java-60: Deleting a Linked List Node in O(1) Time

bylijinnan

java

public class DeleteNode_O1_Time {

/**

* Q 60 Delete a linked list node in O(1) time

* Given the head pointer of a linked list and a pointer to one of its nodes (!!), delete that node in O(1) time

*

* Assume the list is:

* head->...->nodeToDelete->mNode->nNode->..
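The article's solution is truncated here. Below is a minimal sketch of the standard trick, not the author's code. When the node to delete has a successor, copy the successor's value into it and unlink the successor, which takes O(1). Only the tail needs an O(n) walk to find its predecessor. Averaged over all n nodes, this is still O(1). The sketch assumes the target node really is in the list; the class and helper names are hypothetical.

```java
class DeleteNodeSketch {
    static final class Node {
        int value;
        Node next;

        Node(int value, Node next) {
            this.value = value;
            this.next = next;
        }
    }

    /** Deletes `target` (assumed to be in the list) and returns the possibly new head. */
    static Node delete(Node head, Node target) {
        if (head == null || target == null) return head;
        if (target.next != null) {                 // O(1): overwrite target with its successor
            Node succ = target.next;
            target.value = succ.value;
            target.next = succ.next;
            return head;
        }
        if (head == target) return null;           // single-node list
        Node prev = head;                          // O(n): tail has no successor to copy from
        while (prev.next != null && prev.next != target) prev = prev.next;
        if (prev.next == target) prev.next = null;
        return head;
    }

    /** Builds a list from the given values, in order. */
    static Node of(int... values) {
        Node head = null;
        for (int i = values.length - 1; i >= 0; i--) head = new Node(values[i], head);
        return head;
    }

    /** Renders a list as "1->2->3". */
    static String render(Node head) {
        StringBuilder sb = new StringBuilder();
        for (Node n = head; n != null; n = n.next) {
            if (sb.length() > 0) sb.append("->");
            sb.append(n.value);
        }
        return sb.toString();
    }

    public static void main(String[] args) {
        Node head = of(1, 2, 3, 4);
        head = delete(head, head.next);            // delete the node holding 2
        System.out.println(render(head));          // 1->3->4
    }
}
```

One trade-off of the copy trick: any outside reference to the successor node now points to a node that is no longer in the list.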

- Caching Files with nginx proxy_cache

cfyme

cache

user zhangy users;

worker_processes 10;

error_log /var/vlogs/nginx_error.log crit;

pid /var/vlogs/nginx.pid;

#Specifies the value for ma

- [JWFD Open-Source Workflow] Using the Minus Sign in the JWFD Embedded Parser

comsci

embedded

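To define an equation with a minus sign using JWFD's parsing module, simply adding a minus sign in front of the equation is incorrect; instead, you must do this: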

string str01 = "a=3.14;b=2.71;c=0;c-((a*a)+(b*b))"

Define an integer c equal to 0, then use this integer c to

- How to Integrate Alipay Using the Official Documentation

dai_lm

android

Official documentation download link

https://b.alipay.com/order/productDetail.htm?productId=2012120700377310&tabId=4#ps-tabinfo-hash

Prerequisites for integration

1. You need your own server to receive messages from Alipay

2. You must first build the app and submit it to Alipay for review; integration is possible only after it is approved

Debugging may actually charge real money, so be careful

- When Should You Use Hadoop

datamachine

hadoop

Original post: http://blog.chinaunix.net/uid-301743-id-3925358.html

Archived here because some of its views match mine: over-engineering is unwise, and Hadoop is not a cure-all.

-------------------------------------------- the all-purpose divider --------------------------------

Someone asked me: “In big data and Hado

- Searching and Sorting Foreign-Key Fields in GridView Using Related Models

dcj3sjt126com

yii

Searching and sorting with related models in GridView

First, we have two models that are directly related:

class Author extends CActiveRecord {

...

}

class Post extends CActiveRecord {

...

function relations() {

return array(

'

- A Complete Guide to NSString Formatting

dcj3sjt126com

Objective-C

Format specifiers: The format specifiers supported by the NSString formatting methods and CFString formatting functions follow the IEEE printf specification; the specifiers are summarized in Table 1. Note that you c

- Ghosting When Scrolling ActiveX Plugin object Elements

蕃薯耀

activeX, plugin, scrolling, ghosting

Ghosting when scrolling with an ActiveX plugin object element: <object style="width:0;" id="abc" classid="CLSID:D3E3970F-2927-9680-BBB4-5D0889909DF6" codebase="activex/OAX339.CAB#

- Zero-Configuration SpringMVC 4

hanqunfeng

springmvc4

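Based on the Servlet 3.0 specification and SpringMVC 4's annotation-driven configuration, I built a small demo with zero XML configuration, shared here for discussion.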

Project notes:

1. db.sql contains the tables used in the project; the database is Oracle 11g

2. The project is managed with Maven (mvn), using a self-hosted Nexus as the private repository; the only third-party jar is the Oracle driver;

3. By default the project starts with zero configuration; if you need to change the startup mode, please

- “The Ins and Outs of Open-Source Frameworks 16”: The Evolution of Caching Code

j2eetop

open-source frameworks

Introducing the problem

Some time ago I took part in optimizing a large project. The system required fairly high TPS, so caching was unavoidable.

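The project used many caches: Memcached and Redis. Some parts also needed a second-level cache, meaning caches could also be set up at the application-server layer.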

When I looked at the corresponding implementation code, it looked roughly like this:

public vo
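The article's caching code is truncated. The sketch below is a generic cache-aside lookup through the two layers described: a local in-process cache (L1), then a shared cache (L2), then the data source. It is not the article's code. The shared cache is a plain Map standing in for Memcached or Redis, whose client APIs are not shown. The class name and loader function are hypothetical. Expiry and invalidation are deliberately omitted.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Function;

class TwoLevelCache<K, V> {
    private final Map<K, V> local = new ConcurrentHashMap<>(); // L1: in-process cache
    private final Map<K, V> shared;                            // L2: stand-in for Memcached/Redis
    private final Function<K, V> loader;                       // source of truth, e.g. a DB query

    TwoLevelCache(Map<K, V> shared, Function<K, V> loader) {
        this.shared = shared;
        this.loader = loader;
    }

    /** Cache-aside read: try L1, then L2, then the loader; fill each level that missed. */
    V get(K key) {
        V value = local.get(key);
        if (value != null) return value;
        value = shared.get(key);
        if (value == null) {
            value = loader.apply(key);
            if (value == null) return null; // misses are not cached in this sketch
            shared.put(key, value);
        }
        local.put(key, value);
        return value;
    }

    public static void main(String[] args) {
        Map<String, String> shared = new HashMap<>();
        TwoLevelCache<String, String> cache =
                new TwoLevelCache<>(shared, k -> { System.out.println("load " + k); return "value-" + k; });
        System.out.println(cache.get("a")); // loads from the source, then caches in L2 and L1
        System.out.println(cache.get("a")); // L1 hit: no load
    }
}
```

Centralizing the lookup order in one place like this is one reason caching code tends to evolve toward a shared helper instead of repeating get-check-put logic at every call site.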

- A Brief Analysis of AngularJS

kvhur

JavaScript

Concept

AngularJS is a structural framework for dynamic web apps.

For more details, see the original article: http://www.gbtags.com/gb/share/5726.htm

Directive

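Directives extend HTML by adding declarative statements to it, so that you can implement your own requirements. In the ng-prefixed attribute names on the page's HTML elements, ng is Angular's namespace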

- JDK Bug Troubleshooting for Architects (Part 1): The Dot Trap in split

nannan408

split

1. Preface.

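The split method in the lang package of JDK 1.6 has a bug: it cannot correctly handle strings like A.b.c, so the resulting length is always 0, while other characters do not have this problem. I don't know whether this bug has been fixed officially. (In fact, this is not a JDK bug. split() takes a regular expression, and an unescaped . matches any character. Every character therefore becomes a delimiter, and the trailing empty strings are discarded. Splitting on "\\." gives the expected result.)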

2. Code

String[] paths = "object.object2.prop11".split(".");

System.ou
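The sketch below reproduces the behavior described above and shows two ways to split on a literal dot: escaping it as "\\." or quoting it with Pattern.quote(). The class and helper names are hypothetical.

```java
import java.util.Arrays;
import java.util.regex.Pattern;

class SplitDotTrap {
    /** Splits on a literal separator by quoting it, so regex metacharacters lose their meaning. */
    static String[] splitLiteral(String s, String separator) {
        return s.split(Pattern.quote(separator));
    }

    public static void main(String[] args) {
        String s = "object.object2.prop11";
        // Unescaped "." is a regex matching ANY character: every character is a delimiter,
        // all tokens are empty, and split() drops trailing empty strings, leaving length 0.
        System.out.println(s.split(".").length);                   // 0
        // Escaping the dot splits on a literal '.'.
        System.out.println(Arrays.toString(s.split("\\.")));       // [object, object2, prop11]
        System.out.println(Arrays.toString(splitLiteral(s, "."))); // [object, object2, prop11]
    }
}
```

The same care applies to other regex metacharacters passed to split(), such as |, * and +.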

- How to Efficiently Perform a Full Table Scan on a Billion-Record MongoDB

quentinXXZ

mongodb

Link to this article:

http://quentinXXZ.iteye.com/blog/2149440

I. Normally, this requirement should not arise

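First, understand that the question in the title is, in most cases, a false premise and should not come up at all. For a database holding a fairly large amount of data, a full table query should generally never happen. In normal queries, a range query should at least include a limit.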

Let me explain,

- C Algorithms: Narcissistic Numbers

qiufeihu

c, algorithm

/**

* Narcissistic numbers

*/

#include <stdio.h>

#define N 10

int main()

{

int x,y,z;

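for(x=1;x<N;x++) /* hundreds digit 1..9; with <=N, x=10,y=0,z=0 would wrongly match 1000 */

for(y=0;y<N;y++) /* tens digit 0..9 */

for(z=0;z<N;z++) /* units digit 0..9 */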

if(x*100+y*10+z == x*x*x
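For comparison, here is a Java port of the same brute-force idea, offered as a sketch under the assumption that only three-digit numbers are wanted. Each digit loop is bounded to a real digit range (1..9 for the hundreds digit, 0..9 for the others). The class name is hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

class Narcissistic {
    /** Three-digit numbers equal to the sum of the cubes of their digits. */
    static List<Integer> threeDigit() {
        List<Integer> out = new ArrayList<>();
        for (int x = 1; x <= 9; x++)           // hundreds digit cannot be 0
            for (int y = 0; y <= 9; y++)       // tens digit
                for (int z = 0; z <= 9; z++)   // units digit
                    if (x * 100 + y * 10 + z == x * x * x + y * y * y + z * z * z)
                        out.add(x * 100 + y * 10 + z);
        return out;
    }

    public static void main(String[] args) {
        System.out.println(threeDigit()); // [153, 370, 371, 407]
    }
}
```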

- JSP Directives

wyzuomumu

jsp

General syntax of a JSP directive: <%@ directive-name attribute="value" %>

The three commonly used directives: page, include, taglib

Syntax of the page directive: <%@ page attribute1="value1" attribute2="value2" %>

Syntax of the include directive: <%@ include file="relative url" %> (JSP can, via include