CUDA Study Notes (3): Shared Memory and Synchronization

I. Introduction

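This article covers shared memory and synchronization in CUDA programming. A shared-memory variable is declared inside a kernel with __shared__. For every thread block launched on the GPU, the compiler creates a separate copy of that variable, so launching N thread blocks yields N copies, each private to its block. Synchronization makes every thread in a block finish a given set of statements before any of them continues with the statements that follow.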

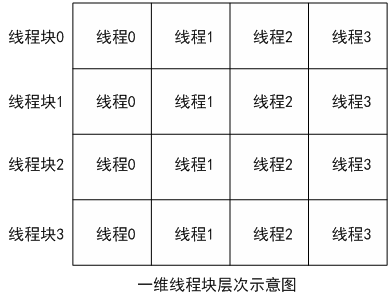

II. Thread Blocks and Thread Indices

The figure above shows how thread blocks and threads are organized into a hierarchy.

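Every thread executes the kernel independently. If the kernel declares a shared variable, each thread block gets its own copy of it, and that copy is shared by all threads in that block.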
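To make the block and thread indexing concrete, here is a minimal host-side sketch in plain C++ (not CUDA) that emulates how a one-dimensional launch maps blocks and threads onto array elements. It uses the same arithmetic as the kernels in this article: a global index of threadIdx.x + blockIdx.x * blockDim.x, advanced by gridDim.x * blockDim.x when the array is longer than the number of threads. The function names gridStrideVisitCounts and allVisitedOnce are hypothetical helpers introduced only for this illustration.

```cpp
#include <cassert>
#include <vector>

// Host-side emulation of the index arithmetic of a 1-D kernel launch.
// Returns how many times each array element in [0, n) is visited.
std::vector<int> gridStrideVisitCounts(int n, int blocks, int threadsPerBlock) {
    assert(n >= 0 && blocks > 0 && threadsPerBlock > 0);
    std::vector<int> visits(n, 0);
    const int stride = blocks * threadsPerBlock;  // gridDim.x * blockDim.x
    for (int blockIdx = 0; blockIdx < blocks; ++blockIdx) {
        for (int threadIdx = 0; threadIdx < threadsPerBlock; ++threadIdx) {
            // Same mapping as threadIdx.x + blockIdx.x * blockDim.x.
            int index = threadIdx + blockIdx * threadsPerBlock;
            while (index < n) {
                ++visits[index];
                index += stride;  // jump ahead by the total thread count
            }
        }
    }
    return visits;
}

// True when every element is visited exactly once.
bool allVisitedOnce(int n, int blocks, int threadsPerBlock) {
    for (int count : gridStrideVisitCounts(n, blocks, threadsPerBlock)) {
        if (count != 1) return false;
    }
    return true;
}
```

Calling allVisitedOnce(33 * 1024, 64, 64) checks the launch configuration used in the first example below: 4096 threads cover 33792 elements, and each element is handled by exactly one thread.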

III. Shared Variable and Synchronization Examples

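(1) The following program computes a dot product. Because a[i] = b[i] = i, the result is f(N) = 0*0 + 1*1 + 2*2 + … + (N-1)*(N-1). A shared variable holds the partial sums of the threads in each thread block, and these are then reduced to one sum per block.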

#include <iostream>

#include <cuda_runtime.h>

//main1.cu

#include "book.h"

using namespace std;

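#define N (33 * 1024) // array length

const int threadsPerBlock = 64; // threads per block; must be a power of two for the reduction in the kernel

const int blocksPerGrid = 64; // number of thread blocks in the grid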

__global__ void add(float *a, float *b, float *c)

{

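__shared__ float cache[threadsPerBlock]; // __shared__ declares a shared variable: each block has its own copy, shared by all of its threads;

// here it holds the partial dot-product sum computed by each thread of the block

int index = threadIdx.x + blockIdx.x * blockDim.x; // map the block and thread indices to an array index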

int cacheIdx = threadIdx.x;

float temp = 0;

while (index < N){

temp += a[index] * b[index];

index += gridDim.x * blockDim.x;

}

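cache[cacheIdx] = temp; // store this thread's partial sum in its block's cache

__syncthreads(); // CUDA built-in barrier: every thread in the block must reach this point before any of them continues,

// so each block's cache is fully written here; with 64 blocks there are 64 copies of cache

// Reduction: sum the entries of each block's cache, leaving the result in cache[0]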

int i = blockDim.x / 2;

while (i != 0){

if (cacheIdx < i){

cache[cacheIdx] += cache[i + cacheIdx];

}

__syncthreads(); // every thread must finish this reduction step before the next one starts

i /= 2;

}

if (cacheIdx == 0) // a single thread per block is enough to write the result

c[blockIdx.x] = cache[0]; // store each block's cache[0] in array c

}

int main()

{

float a[N], b[N];

float *c = new float[blocksPerGrid];

float *dev_a, *dev_b, *dev_c;

// allocate memory on the GPU

HANDLE_ERROR(cudaMalloc((void**)&dev_a, N*sizeof(float)));

HANDLE_ERROR(cudaMalloc((void**)&dev_b, N*sizeof(float)));

HANDLE_ERROR(cudaMalloc((void**)&dev_c, blocksPerGrid*sizeof(float))); // one partial sum per block

// initialize arrays a and b

for (int i = 0; i < N; ++i){

a[i] = i;

b[i] = i;

}

// copy arrays a and b to the GPU

HANDLE_ERROR(cudaMemcpy(dev_a, a, N*sizeof(float), cudaMemcpyHostToDevice));

HANDLE_ERROR(cudaMemcpy(dev_b, b, N*sizeof(float), cudaMemcpyHostToDevice));

add<<<blocksPerGrid, threadsPerBlock>>>(dev_a, dev_b, dev_c);

// copy dev_c back to the CPU

HANDLE_ERROR(cudaMemcpy(c, dev_c, blocksPerGrid*sizeof(float), cudaMemcpyDeviceToHost));

// add up the per-block partial sums on the CPU

double sums = 0.0;

for (int i = 0; i < blocksPerGrid; ++i){

sums += c[i];

}

// print the results

cout << "gpu dot compute result:" << sums << "\n";

sums = 0.0;

for (int i = 0; i < N; ++i){

sums += i*i;

}

cout << "cpu dot compute result:" << sums << "\n";

// free the memory allocated on the GPU

cudaFree( dev_a);

cudaFree(dev_b);

cudaFree(dev_c);

delete[] c; // c was allocated with new[]

return 0;

}

Output:

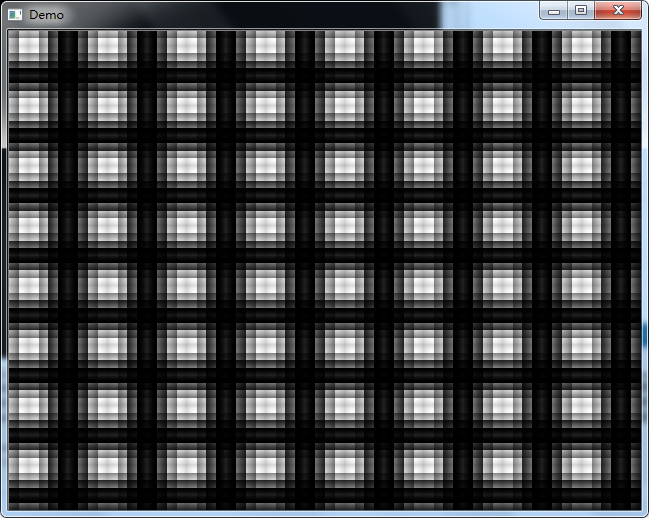
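Two details of the program above can be checked on the host without a GPU: the in-block tree reduction and the expected value of the dot product. The following is a rough sketch in plain C++ under stated assumptions, not the kernel itself. blockReduce and sumOfSquaresBelow are hypothetical helper names; the std::vector stands in for one block's cache array, and the inner loop stands in for the threads that run in parallel between two __syncthreads() calls.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Host-side emulation of the in-block tree reduction.
// `cache` plays the role of one block's shared array; it is passed by
// value, so the caller's data is left untouched.
float blockReduce(std::vector<float> cache) {
    const std::size_t n = cache.size();
    // The halving loop only reaches every element when n is a power of two.
    assert(n > 0 && (n & (n - 1)) == 0);
    for (std::size_t i = n / 2; i != 0; i /= 2) {
        // On the GPU these additions run in parallel, one per thread with
        // cacheIdx < i. Finishing this inner loop before halving i is what
        // the __syncthreads() between passes guarantees.
        for (std::size_t cacheIdx = 0; cacheIdx < i; ++cacheIdx) {
            cache[cacheIdx] += cache[cacheIdx + i];
        }
    }
    return cache[0];
}

// Exact value of 0*0 + 1*1 + ... + (n-1)*(n-1), using the closed form
// (n-1) * n * (2n-1) / 6.
std::uint64_t sumOfSquaresBelow(std::uint64_t n) {
    if (n == 0) return 0;
    return (n - 1) * n * (2 * n - 1) / 6;
}
```

For N = 33 * 1024 the closed form gives 12861782365696, about 1.286 × 10^13. The kernel accumulates in float, which carries roughly seven significant decimal digits, so the GPU result can be expected to agree with this value only in its leading digits. The assertion in blockReduce also shows why threadsPerBlock should be a power of two: with any other size, the halving loop skips some elements.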

(2) The following program uses two-dimensional thread blocks with a shared variable to compute image data and generate an image.

//main2.cu

#include <iostream>

#include <cuda_runtime.h>

#include "book.h"

#include <opencv2/opencv.hpp>

using namespace cv;

using namespace std;

#define PI 3.1415926

#define DIM 1024 // width and height of the grayscale image

__global__ void kernel(uchar * _ptr )

{

int x = threadIdx.x + blockIdx.x * blockDim.x;

int y = threadIdx.y + blockIdx.y * blockDim.y;

int idx = x + y *gridDim.x *blockDim.x;

__shared__ float shared[16][16]; // shared-memory buffer of the block, one element per thread

const float period = 128.0f;

shared[threadIdx.x][threadIdx.y] = 255 * (sinf(x*2.0f*PI / period) + 1.0f)*(sinf(y*2.0f*PI / period) + 1.0f) / 4.0f;

__syncthreads(); // wait until every thread in the block has written its element of shared

_ptr[idx] = shared[15 - threadIdx.x][15 - threadIdx.y];

}

int main()

{

Mat src(DIM,DIM , CV_8UC1 , Scalar::all(0));

uchar *ptr_dev;

HANDLE_ERROR(cudaMalloc((void**)&ptr_dev, DIM * DIM*sizeof(uchar)));

dim3 blocks(DIM / 16, DIM / 16);

dim3 threads(16 ,16);

kernel<<<blocks, threads>>>(ptr_dev);

HANDLE_ERROR(cudaMemcpy(src.data, ptr_dev, DIM * DIM*sizeof(uchar), cudaMemcpyDeviceToHost));

cudaFree(ptr_dev);

namedWindow("Demo", 0);

imshow("Demo" , src);

waitKey(0);

return 0;

}
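The barrier in this kernel matters because each thread reads an element of shared that a different thread wrote: thread (tx, ty) writes shared[tx][ty] but reads shared[15 - tx][15 - ty]. The sketch below emulates the two phases for a single block in plain C++, as an illustration only. fillTile, readMirrored, Tile and kTile are hypothetical names, and running the two phases as two separate functions stands in for the __syncthreads() call.

```cpp
#include <array>
#include <cassert>
#include <cmath>

constexpr int kTile = 16;  // matches the 16x16 thread block
using Tile = std::array<std::array<float, kTile>, kTile>;

// Phase 1 (before the barrier): thread (tx, ty) of block (bx, by)
// writes only its own element of the block's shared buffer.
Tile fillTile(int bx, int by) {
    assert(bx >= 0 && by >= 0);
    const float pi = 3.1415926f;
    const float period = 128.0f;
    Tile shared{};
    for (int tx = 0; tx < kTile; ++tx) {
        for (int ty = 0; ty < kTile; ++ty) {
            const int x = tx + bx * kTile;
            const int y = ty + by * kTile;
            shared[tx][ty] = 255 * (std::sin(x * 2.0f * pi / period) + 1.0f) *
                             (std::sin(y * 2.0f * pi / period) + 1.0f) / 4.0f;
        }
    }
    return shared;
}

// Phase 2 (after the barrier): thread (tx, ty) reads the element that
// the thread at (15 - tx, 15 - ty) wrote during phase 1.
Tile readMirrored(const Tile& shared) {
    Tile out{};
    for (int tx = 0; tx < kTile; ++tx) {
        for (int ty = 0; ty < kTile; ++ty) {
            out[tx][ty] = shared[kTile - 1 - tx][kTile - 1 - ty];
        }
    }
    return out;
}
```

On the host, phase 2 cannot start before phase 1 has returned. On the GPU there is no such ordering between threads unless the kernel asks for it, so without __syncthreads() a thread could read an element of shared that has not been written yet.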