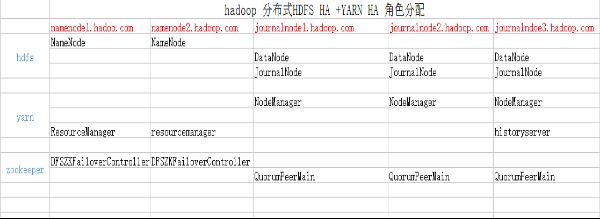

- 1: Required installation packages

- 2: CentOS 6.4 x64 hostname configuration

- 3: ZooKeeper installation on the journalnode hosts

- 4: Deploying Hadoop 2.5.2 on the namenode nodes

- 5: Testing the Hadoop cluster

1: Required installation packages

System: CentOS 6.4 x64

Software: hadoop-2.5.2.tar.gz

native-2.5.2.tar.gz

zookeeper-3.4.6.tar.gz

jdk-7u67-linux-x64.tar.gz

Upload all of the packages to /home/hadoop/yangyang/.

2: CentOS 6.4 x64 hostname configuration

vim /etc/hosts (configure this on all five virtual machines)

192.168.3.1 namenode1.hadoop.com

192.168.3.2 namenode2.hadoop.com

192.168.3.3 journalnode1.hadoop.com

192.168.3.4 journalnode2.hadoop.com

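192.168.3.5 journalnode3.hadoop.com

2.1: Configure passwordless SSH authentication

Perform these steps on every server.

ssh-keygen (press Enter at every prompt until it finishes)

Each machine generates an id_rsa.pub file.

Append the public keys from all machines to a single authorized_keys file:

cat id_rsa.pub >> authorized_keys

Then redistribute that file to the .ssh/ directory on every server.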

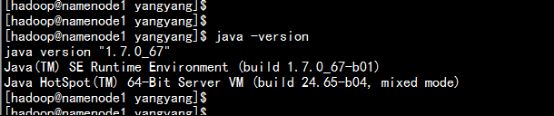
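The key-collection step above can be sketched as a small shell function. This is only a sketch under stated assumptions: the name `merge_pubkeys` is hypothetical, and it assumes the id_rsa.pub files from every host have already been copied into one place under distinct names.

```shell
#!/bin/sh
# merge_pubkeys OUT KEY...  (hypothetical helper, not part of OpenSSH)
# Appends each public key file to OUT, drops duplicate lines, and
# restricts the file permissions to the owner.
merge_pubkeys() {
    out=$1
    shift
    touch "$out"
    for key in "$@"; do
        cat "$key" >> "$out"
    done
    # sort -u removes repeated keys if the function is run more than once
    sort -u -o "$out" "$out"
    chmod 600 "$out"
}
```

It could be called as `merge_pubkeys ~/.ssh/authorized_keys namenode1.pub namenode2.pub` (the .pub file names are illustrative), after which the merged file is copied to the .ssh/ directory of each server.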

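Finally, test that the servers can log in to each other without a password.

2.2: Install JDK 7u67

Perform these steps on every server.

Install the JDK: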

tar -zxvf jdk-7u67-linux-x64.tar.gz

mv jdk1.7.0_67 jdk

Configure the environment variables:

vim .bash_profile

Append the following at the end:

export JAVA_HOME=/home/hadoop/yangyang/jdk

export CLASSPATH=.:$JAVA_HOME/jre/lib:$JAVA_HOME/lib:$JAVA_HOME/lib/tools.jar

export HADOOP_HOME=/home/hadoop/yangyang/hadoop

PATH=$PATH:$HOME/bin:$JAVA_HOME/bin:${HADOOP_HOME}/bin

Once all of the software has been installed and deployed, run:

source .bash_profile
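Because this profile is edited on every server, it can help to add the exports in a way that is safe to repeat. The following is a minimal sketch, not part of the original procedure: `append_once` is a hypothetical helper, and `PROFILE` defaults to a scratch file so that trying the sketch does not touch the real .bash_profile.

```shell
#!/bin/sh
# append_once FILE LINE  (hypothetical helper)
# Adds LINE to FILE only if an identical line is not already present,
# so re-running the setup does not duplicate the exports.
append_once() {
    file=$1
    line=$2
    touch "$file"
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Assumption: a scratch file by default; set PROFILE=~/.bash_profile to apply.
PROFILE=${PROFILE:-$(mktemp)}
append_once "$PROFILE" 'export JAVA_HOME=/home/hadoop/yangyang/jdk'
append_once "$PROFILE" 'export CLASSPATH=.:$JAVA_HOME/jre/lib:$JAVA_HOME/lib:$JAVA_HOME/lib/tools.jar'
append_once "$PROFILE" 'export HADOOP_HOME=/home/hadoop/yangyang/hadoop'
append_once "$PROFILE" 'PATH=$PATH:$HOME/bin:$JAVA_HOME/bin:${HADOOP_HOME}/bin'
```

The single quotes keep `$JAVA_HOME` and the other variables unexpanded, so they are resolved when the profile is sourced rather than when the lines are written.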

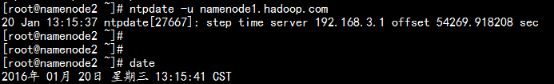

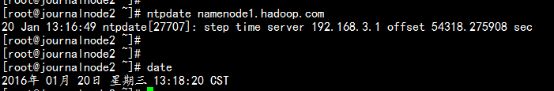

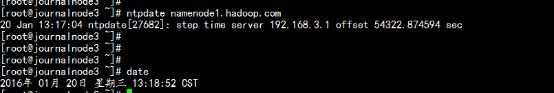

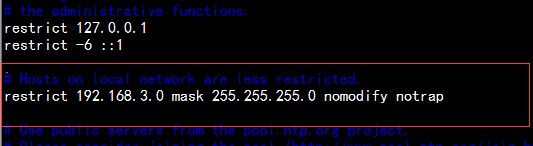

java -version

2.3 Configure the NTP server for time synchronization

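Use namenode1.hadoop.com as the NTP server; the other nodes synchronize their time from it.

namenode1.hadoop.com synchronizes its own time from the internet:

echo "ntpdate -u 202.112.10.36" >> /etc/rc.d/rc.local

(Appending the command to rc.local makes it run at every boot.)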

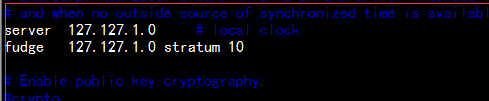

vim /etc/ntp.conf

Uncomment the following two lines (remove the leading #):

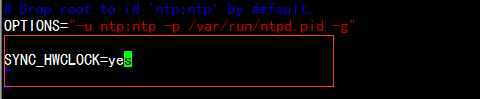

vim /etc/sysconfig/ntpd

Add:

Then restart ntpd and enable it at boot:

service ntpd restart

chkconfig ntpd on

On the other nodes, configure a cron job that synchronizes the time from namenode1.hadoop.com:

crontab -e

*/10 * * * * /usr/sbin/ntpdate namenode1.hadoop.com

Configure this on namenode2.hadoop.com, journalnode1.hadoop.com, journalnode2.hadoop.com, and journalnode3.hadoop.com.

3: Install ZooKeeper on the journalnode hosts

3.1 Install the ZooKeeper software

tar -zxvf zookeeper-3.4.6.tar.gz

mv zookeeper-3.4.6 /home/hadoop/yangyang/zookeeper

cd /home/hadoop/yangyang/zookeeper/conf

cp -p zoo_sample.cfg zoo.cfg

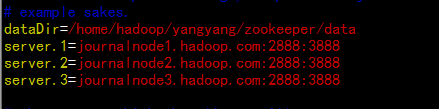

vim zoo.cfg

Change the dataDir directory:

dataDir=/home/hadoop/yangyang/zookeeper/data

Add a server entry for each journalnode host:

server.1=journalnode1.hadoop.com:2888:3888

server.2=journalnode2.hadoop.com:2888:3888

server.3=journalnode3.hadoop.com:2888:3888

3.2 Create the ID file

mkdir /home/hadoop/yangyang/zookeeper/data

echo "1" > /home/hadoop/yangyang/zookeeper/data/myid

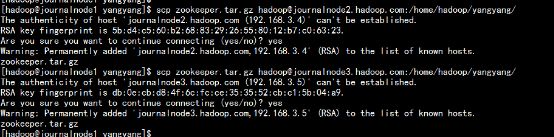
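The zoo.cfg and myid steps can be combined into one function, which makes the relationship between dataDir, the server.N entries, and myid easier to see. This is a sketch under assumptions: `write_zk_config` is a hypothetical helper, and the first four settings are the defaults carried over from zoo_sample.cfg rather than values stated in this guide.

```shell
#!/bin/sh
# write_zk_config ZK_HOME MYID  (hypothetical helper)
# Writes conf/zoo.cfg and data/myid under ZK_HOME.
write_zk_config() {
    zk_home=$1
    myid=$2
    mkdir -p "$zk_home/conf" "$zk_home/data"
    # tickTime, initLimit, syncLimit and clientPort are the
    # zoo_sample.cfg defaults (assumed unchanged here).
    cat > "$zk_home/conf/zoo.cfg" <<EOF
tickTime=2000
initLimit=10
syncLimit=5
clientPort=2181
dataDir=$zk_home/data
server.1=journalnode1.hadoop.com:2888:3888
server.2=journalnode2.hadoop.com:2888:3888
server.3=journalnode3.hadoop.com:2888:3888
EOF
    # myid lives inside dataDir and must match this host's server.N number
    echo "$myid" > "$zk_home/data/myid"
}
```

On journalnode1 this would be called as `write_zk_config /home/hadoop/yangyang/zookeeper 1`, with 2 and 3 on the other two hosts.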

cd /home/hadoop/yangyang/

scp -r zookeeper [email protected]:/home/hadoop/yangyang/

scp -r zookeeper [email protected]:/home/hadoop/yangyang/

3.3 Update myid on journalnode2 and journalnode3

On journalnode2.hadoop.com:

echo "2" > /home/hadoop/yangyang/zookeeper/data/myid

On journalnode3.hadoop.com:

echo "3" > /home/hadoop/yangyang/zookeeper/data/myid

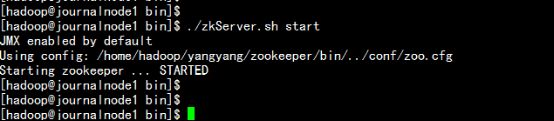

3.4 Start ZooKeeper on all journalnode nodes

cd /home/hadoop/yangyang/zookeeper/bin

./zkServer.sh start
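Once all three servers are started, `./zkServer.sh status` reports each node's role on a `Mode:` line. The sketch below extracts that value; `zk_mode` is a hypothetical helper, and it assumes the status text is piped in on stdin.

```shell
#!/bin/sh
# zk_mode reads the text printed by "zkServer.sh status" on stdin and
# prints the value of its "Mode:" line (for example leader or follower).
# It prints nothing and returns non-zero when no Mode line is present.
zk_mode() {
    mode=$(sed -n 's/^Mode: *//p')
    [ -n "$mode" ] && printf '%s\n' "$mode"
}
```

A typical call would be `./zkServer.sh status 2>&1 | zk_mode`. In a healthy three-node ensemble, one host should report leader and the other two follower.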

Output confirming a normal start is displayed.

4: Deploy Hadoop 2.5.2 on the namenode nodes

tar -zxvf hadoop-2.5.2.tar.gz

mv hadoop-2.5.2 /home/hadoop/yangyang/hadoop/

4.1 Modify hadoop-env.sh

cd /home/hadoop/yangyang/hadoop/

vim etc/hadoop/hadoop-env.sh

Add the JDK environment variable and the PID directories:

export JAVA_HOME=/home/hadoop/yangyang/jdk

export HADOOP_PID_DIR=/home/hadoop/yangyang/hadoop/data/tmp

export HADOOP_SECURE_DN_PID_DIR=/home/hadoop/yangyang/hadoop/data/tmp

vim etc/hadoop/mapred-env.sh

Add the JDK environment variable and the PID directory:

export JAVA_HOME=/home/hadoop/yangyang/jdk

export HADOOP_MAPRED_PID_DIR=/home/hadoop/yangyang/hadoop/data/tmp

vim etc/hadoop/yarn-env.sh

export JAVA_HOME=/home/hadoop/yangyang/jdk

4.2 Modify core-site.xml

vim etc/hadoop/core-site.xml

Set the following properties; each property name is followed by its value on the next line:

fs.defaultFS

hdfs://mycluster

hadoop.tmp.dir

/home/hadoop/yangyang/hadoop/data/tmp

ha.zookeeper.quorum

journalnode1.hadoop.com:2181,journalnode2.hadoop.com:2181,journalnode3.hadoop.com:2181
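In the file itself, each of these name and value pairs goes inside a `<property>` element under `<configuration>`. The following sketch writes the three core-site.xml properties in that form; `write_core_site` is a hypothetical helper, and the target path is passed in by the caller.

```shell
#!/bin/sh
# write_core_site FILE  (hypothetical helper)
# Writes the three core-site.xml properties from this section in
# Hadoop's <configuration>/<property> layout.
write_core_site() {
    cat > "$1" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://mycluster</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/hadoop/yangyang/hadoop/data/tmp</value>
  </property>
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>journalnode1.hadoop.com:2181,journalnode2.hadoop.com:2181,journalnode3.hadoop.com:2181</value>
  </property>
</configuration>
EOF
}
```

From the Hadoop directory it would be called as `write_core_site etc/hadoop/core-site.xml`.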

4.3 Modify hdfs-site.xml

vim etc/hadoop/hdfs-site.xml

Set the following properties; each property name is followed by its value on the next line:

dfs.replication

3

dfs.nameservices

mycluster

dfs.ha.namenodes.mycluster

nn1,nn2

dfs.namenode.rpc-address.mycluster.nn1

namenode1.hadoop.com:8020

dfs.namenode.http-address.mycluster.nn1

namenode1.hadoop.com:50070

dfs.namenode.rpc-address.mycluster.nn2

namenode2.hadoop.com:8020

dfs.namenode.http-address.mycluster.nn2

namenode2.hadoop.com:50070

dfs.namenode.shared.edits.dir

qjournal://journalnode1.hadoop.com:8485;journalnode2.hadoop.com:8485;journalnode3.hadoop.com:8485/mycluster

dfs.journalnode.edits.dir

/home/hadoop/yangyang/hadoop/data/jn

dfs.ha.automatic-failover.enabled

true

dfs.client.failover.proxy.provider.mycluster

org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider

dfs.ha.fencing.methods

sshfence

shell(/bin/true)

dfs.ha.fencing.ssh.private-key-files

/home/hadoop/.ssh/id_rsa

dfs.ha.fencing.ssh.connect-timeout

30000
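With this many properties, generating the XML from a plain list can be less error-prone than typing each element by hand. The sketch below is one possible approach, not the author's method: `props_to_xml` and `hdfs_site_ha` are hypothetical helpers, and only a subset of the properties is shown. Hadoop's HA documentation names the failover provider key `dfs.client.failover.proxy.provider.[nameservice ID]`, so the sketch uses the `mycluster` suffix.

```shell
#!/bin/sh
# props_to_xml reads "name value" pairs on stdin (one per line, split at
# the first space) and prints a Hadoop <configuration> document.
# Hypothetical helper; values must not contain XML special characters.
props_to_xml() {
    echo '<?xml version="1.0" encoding="UTF-8"?>'
    echo '<configuration>'
    while read -r name value; do
        [ -n "$name" ] || continue
        printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' \
            "$name" "$value"
    done
    echo '</configuration>'
}

# A subset of the HA-related hdfs-site.xml properties from this section.
hdfs_site_ha() {
    props_to_xml <<'EOF'
dfs.nameservices mycluster
dfs.ha.namenodes.mycluster nn1,nn2
dfs.namenode.rpc-address.mycluster.nn1 namenode1.hadoop.com:8020
dfs.namenode.rpc-address.mycluster.nn2 namenode2.hadoop.com:8020
dfs.namenode.shared.edits.dir qjournal://journalnode1.hadoop.com:8485;journalnode2.hadoop.com:8485;journalnode3.hadoop.com:8485/mycluster
dfs.ha.automatic-failover.enabled true
dfs.client.failover.proxy.provider.mycluster org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider
EOF
}
```

Running `hdfs_site_ha > hdfs-site.xml` writes the result to a file. The same `props_to_xml` function could be fed the mapred-site.xml and yarn-site.xml pairs from the following sections.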

4.4 Modify mapred-site.xml

vim etc/hadoop/mapred-site.xml

Set the following properties; each property name is followed by its value on the next line:

mapreduce.framework.name

yarn

mapreduce.jobhistory.address

journalnode3.hadoop.com:10020

mapreduce.jobhistory.webapp.address

journalnode3.hadoop.com:19888

4.5 Modify yarn-site.xml

vim etc/hadoop/yarn-site.xml

Set the following properties; each property name is followed by its value on the next line:

yarn.resourcemanager.ha.enabled

true

yarn.resourcemanager.cluster-id

RM_HA_ID

yarn.resourcemanager.ha.rm-ids

rm1,rm2

yarn.resourcemanager.hostname.rm1

namenode1.hadoop.com

yarn.resourcemanager.hostname.rm2

namenode2.hadoop.com

yarn.resourcemanager.recovery.enabled

true

yarn.resourcemanager.store.class

org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore

yarn.resourcemanager.zk-address

journalnode1.hadoop.com:2181,journalnode2.hadoop.com:2181,journalnode3.hadoop.com:2181

yarn.nodemanager.aux-services

mapreduce_shuffle

4.6更换native 文件

rm -rf lib/native/*

tar –zxvf hadoop-native-2.5.2.tar.gz –C hadoop/lib/native

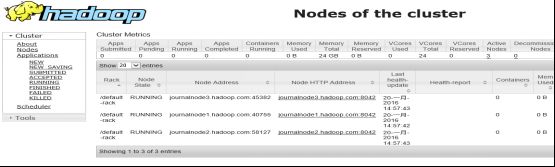

cd hadoop/lib/native/4.7 修改slaves 文件

vim etc/hadoop/slaves

journalnode1.hadoop.com

journalnode2.hadoop.com

journalnode3.hadoop.com

4.8 Synchronize to all nodes:

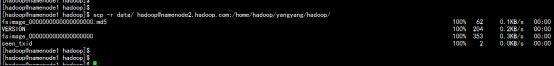

cd /home/hadoop/yangyang/

scp -r hadoop [email protected]:/home/hadoop/yangyang/

scp -r hadoop [email protected]:/home/hadoop/yangyang/

scp -r hadoop [email protected]:/home/hadoop/yangyang/

scp -r hadoop [email protected]:/home/hadoop/yangyang/

4.9 Start the journalnode service on all journalnode nodes

cd /home/hadoop/yangyang/hadoop/sbin

./hadoop-daemon.sh start journalnode

4.10 Start HDFS on the namenode nodes

cd /home/hadoop/yangyang/hadoop/bin

./hdfs namenode -format


将namenode1上生成的data文件夹复制到namenode2的相同目录下

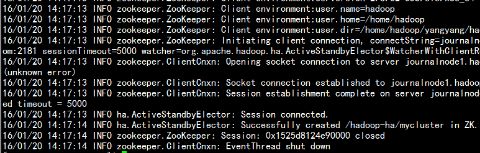

scp -r hadoop/data/ [email protected]:/home/hadoop/yangyang/hadoop4.11格式化ZK 在namenode1 上面执行

cd /home/hadoop/yangyang/hadoop/bin

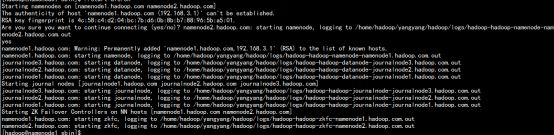

./hdfs zkfc -formatZK

4.12 Start HDFS and YARN

cd /home/hadoop/yangyang/hadoop/sbin

./start-dfs.sh

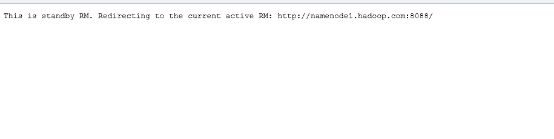

./start-yarn.sh

4.13 The standby ResourceManager on namenode2 must be started manually

cd /home/hadoop/yangyang/hadoop/sbin

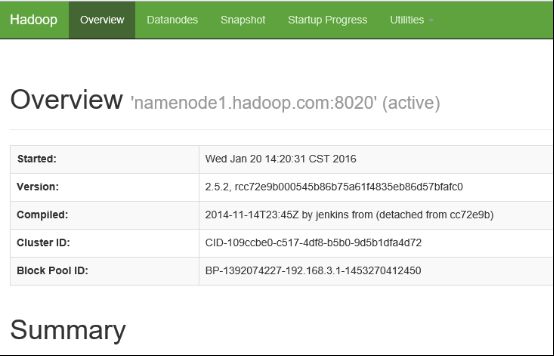

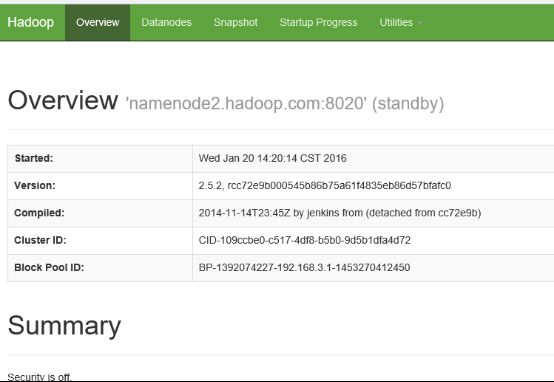

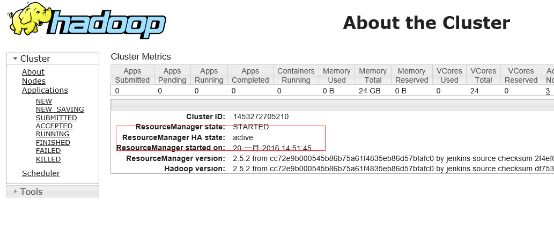

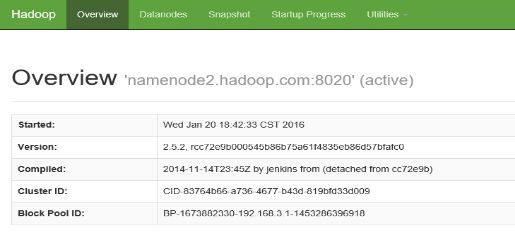

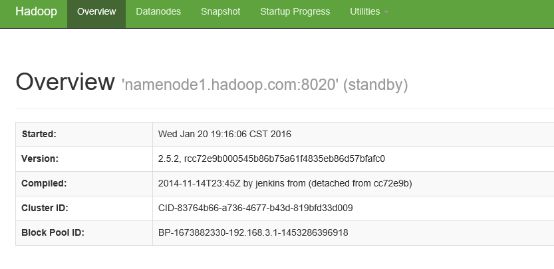

./yarn-daemon.sh start resourcemanager

4.14 Check the cluster status through the web UI

View the NameNodes:

http://namenode1.hadoop.com:50070/

http://namenode2.hadoop.com:50070/

View the ResourceManagers:

http://namenode1.hadoop.com:8088/

http://namenode2.hadoop.com:8088/

4.15 Start the jobhistory server on journalnode3.hadoop.com:

cd /home/hadoop/yangyang/hadoop/sbin/

./mr-jobhistory-daemon.sh start historyserver

5: Testing the Hadoop cluster

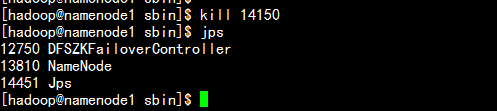

5.1 HDFS failover

Kill the NameNode process on namenode1.hadoop.com. The standby NameNode on namenode2.hadoop.com then switches to the active state.

Start the NameNode on namenode1.hadoop.com again:

cd /home/hadoop/yangyang/hadoop/sbin/

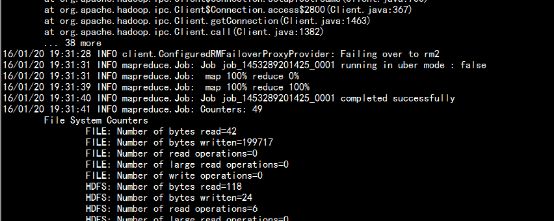

./hadoop-daemon.sh start namenode

Open the namenode1.hadoop.com web page in a browser.

5.2 YARN failover:

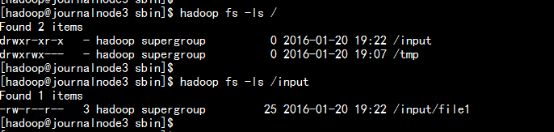

hadoop fs -mkdir /input

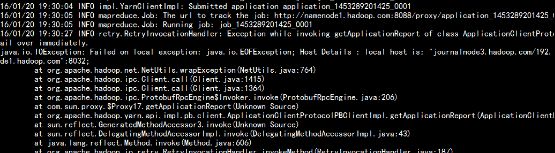

hadoop fs –put file1 /input/在运行wordcount 时 杀掉 namenode1.hadoop.com 的resourcemanager

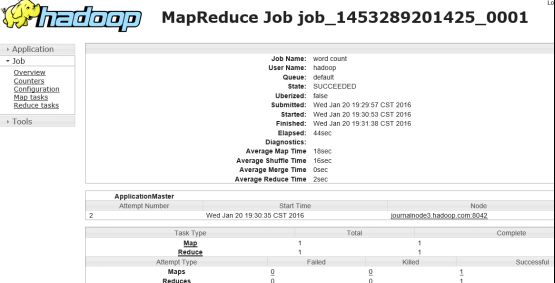

运行wordcount

cd /home/hadoop/yangyang/hadoop/share/hadoop/mapreduce

yarn jar hadoop-mapreduce-examples-2.5.2.jar wordcount /input/file1 /output

Kill the ResourceManager on namenode1.hadoop.com.

YARN on namenode2.hadoop.com switches to active.

After the wordcount run finishes, view the jobhistory page.
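Besides the web pages, both failover tests can be checked from the command line with `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState`. The sketch below wraps those two commands; `ha_states` is a hypothetical helper, and it assumes the nn1/nn2 and rm1/rm2 IDs configured in hdfs-site.xml and yarn-site.xml above.

```shell
#!/bin/sh
# ha_states prints the HA state reported for both NameNodes and both
# ResourceManagers. A daemon that is down yields an empty state.
ha_states() {
    for nn in nn1 nn2; do
        printf '%s: %s\n' "$nn" "$(hdfs haadmin -getServiceState "$nn" 2>/dev/null)"
    done
    for rmid in rm1 rm2; do
        printf '%s: %s\n' "$rmid" "$(yarn rmadmin -getServiceState "$rmid" 2>/dev/null)"
    done
}
```

Running `ha_states` before and after killing a daemon shows which node of each pair currently reports active.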