Udacity Deep Learning Course Assignment (4)

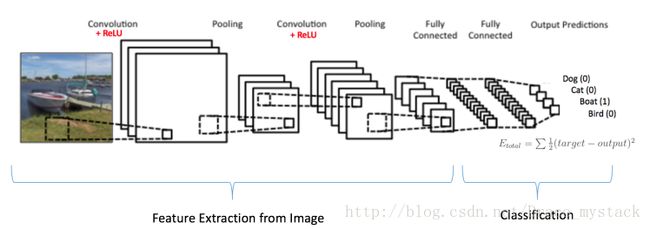

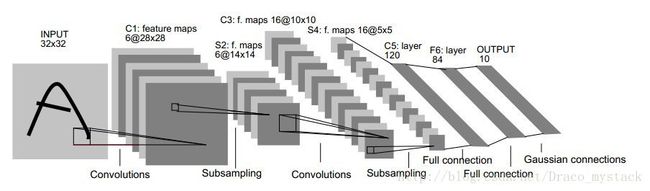

The fourth assignment is mainly practice with convolutional neural networks, using APIs such as tf.nn.conv2d and tf.nn.max_pool.

Usage of the relevant APIs

tf.nn.conv2d

tf.nn.conv2d(input, filter, strides, padding, use_cudnn_on_gpu=None, name=None):

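- input and filter are both 4-D tensors, and the op computes their 2-D convolution. input has shape [batch, in_height, in_width, in_channels]; filter (the kernel) has shape [filter_height, filter_width, in_channels, out_channels].

- strides: a list of length 4 giving the stride of the sliding window along each dimension of input.

- padding: the padding algorithm to use, "SAME" or "VALID". With VALID no padding is added, so the output is smaller than the input whenever the filter is larger than 1x1. With SAME the input is zero-padded so that the output size is ceil(input size / stride), which equals the input size when the stride is 1.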

- Steps:

  - Flattens the filter to a 2-D matrix with shape [filter_height * filter_width * in_channels, output_channels].
  - Extracts image patches from the input tensor to form a virtual tensor of shape [batch, out_height, out_width, filter_height * filter_width * in_channels].
  - For each patch, right-multiplies the filter matrix and the image patch vector.

Each output element is therefore:

    output[b, i, j, k] =
        sum_{di, dj, q} input[b, strides[1] * i + di, strides[2] * j + dj, q] *
                        filter[di, dj, q, k]

Test code:
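Before going through TensorFlow, the formula can be checked with a plain-Python sketch. This is only an illustration and not how TensorFlow implements the op: the helper name `conv2d_valid` is made up for this post, it handles VALID padding only, and it works on nested lists instead of tensors. The input and filter shapes mirror the TensorFlow snippet that follows.

```python
def conv2d_valid(inp, filt, strides):
    """Naive 2-D convolution with VALID padding, written directly from the formula.

    inp:     nested lists shaped [batch][in_height][in_width][in_channels]
    filt:    nested lists shaped [filter_height][filter_width][in_channels][out_channels]
    strides: [1, stride_h, stride_w, 1], the same layout tf.nn.conv2d expects
    """
    batch, in_h, in_w = len(inp), len(inp[0]), len(inp[0][0])
    f_h, f_w = len(filt), len(filt[0])
    in_c, out_c = len(filt[0][0]), len(filt[0][0][0])
    # VALID padding: only positions where the filter fits entirely inside the input.
    out_h = (in_h - f_h) // strides[1] + 1
    out_w = (in_w - f_w) // strides[2] + 1
    output = [[[[0.0] * out_c for _ in range(out_w)]
               for _ in range(out_h)]
              for _ in range(batch)]
    for b in range(batch):
        for i in range(out_h):
            for j in range(out_w):
                for k in range(out_c):
                    total = 0.0
                    for di in range(f_h):
                        for dj in range(f_w):
                            for q in range(in_c):
                                total += (inp[b][strides[1] * i + di][strides[2] * j + dj][q]
                                          * filt[di][dj][q][k])
                    output[b][i][j][k] = total
    return output


# Same shapes as the TensorFlow test, but filled with ones instead of random values.
image = [[[[1.0] * 3 for _ in range(12)] for _ in range(12)] for _ in range(10)]
kernel = [[[[1.0] * 7 for _ in range(3)] for _ in range(3)] for _ in range(3)]
result = conv2d_valid(image, kernel, [1, 5, 5, 1])
output_shape = (len(result), len(result[0]), len(result[0][0]), len(result[0][0][0]))
print(output_shape)        # (10, 2, 2, 7)
print(result[0][0][0][0])  # 27.0, i.e. 3 * 3 * 3 ones summed per output element
```

The same shapes with the real op: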

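    import tensorflow as tf

    # A batch of 10 random 12x12 images with 3 channels.
    image = tf.Variable(tf.random_normal([10, 12, 12, 3]))
    # A 3x3 filter mapping 3 input channels to 7 output channels.
    conv = tf.Variable(tf.random_normal([3, 3, 3, 7]))

    with tf.Session() as sess:
        sess.run(tf.global_variables_initializer())
        # Stride 5 with VALID padding gives an output of shape [10, 2, 2, 7].
        print(sess.run(tf.nn.conv2d(image, conv, strides=[1, 5, 5, 1], padding="VALID")))

tf.nn.max_pool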

max_pool(value, ksize, strides, padding, data_format='NHWC', name=None):

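- value: a 4-D tensor with shape [batch, height, width, channels]

- ksize: a list of length >= 4 giving the window size along each dimension of the input tensor

- strides: a list of length >= 4 giving the stride of the sliding window along each dimension of the input tensor

- padding: 'VALID' or 'SAME'

- data_format: 'NHWC' or 'NCHW'

- Returns a tensor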
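To make ksize, strides and padding concrete, here is a small pure-Python sketch of max pooling over a single [height][width] plane. It is an illustration under my own assumptions and not TensorFlow's implementation: the names `pool_output_size` and `max_pool_2d` are made up, the window is square, and SAME padding is modelled by clipping each window to the input, which gives the same maximum as ignoring padded cells.

```python
def pool_output_size(in_size, window, stride, padding):
    """Output length along one dimension for 'SAME' or 'VALID' padding."""
    if padding == "SAME":
        return -(-in_size // stride)  # ceil(in_size / stride)
    return (in_size - window) // stride + 1


def max_pool_2d(plane, ksize, stride, padding="VALID"):
    """Max-pool one [height][width] plane with a ksize x ksize window."""
    in_h, in_w = len(plane), len(plane[0])
    out_h = pool_output_size(in_h, ksize, stride, padding)
    out_w = pool_output_size(in_w, ksize, stride, padding)
    if padding == "SAME":
        # Total padding needed; any odd leftover cell goes to the bottom/right.
        pad_h = max((out_h - 1) * stride + ksize - in_h, 0)
        pad_w = max((out_w - 1) * stride + ksize - in_w, 0)
    else:
        pad_h = pad_w = 0
    top, left = pad_h // 2, pad_w // 2
    pooled = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            r0, c0 = i * stride - top, j * stride - left
            # Clip the window to the input so padded cells never win the max.
            window = [plane[r][c]
                      for r in range(max(r0, 0), min(r0 + ksize, in_h))
                      for c in range(max(c0, 0), min(c0 + ksize, in_w))]
            row.append(max(window))
        pooled.append(row)
    return pooled


# A 5x5 plane holding the values 1..25 row by row.
plane = [[5 * r + c + 1 for c in range(5)] for r in range(5)]
valid_result = max_pool_2d(plane, ksize=2, stride=2, padding="VALID")
same_result = max_pool_2d(plane, ksize=2, stride=2, padding="SAME")
print(valid_result)  # [[7, 9], [17, 19]]
print(same_result)   # [[7, 9, 10], [17, 19, 20], [22, 24, 25]]
```

With VALID the last row and column are dropped because a full 2x2 window no longer fits there; with SAME they are kept and pooled over the cells that do exist.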

Problem 1

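In the original basic CNN model, the convolutional layers use a stride of 2. Here the model is changed so that the convolutions use a stride of 1 and the downsampling is done by max pooling with a stride of 2 and a kernel size of 2.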
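A quick way to sanity-check this change is to trace the spatial size through the layers. The sketch below is only an illustration: it assumes image_size = 28 (the notMNIST images used in the course; the value is not shown in this post), relies on the SAME rule that the output size is ceil(input size / stride), and uses a helper name, `same_output_size`, made up for this example.

```python
import math


def same_output_size(in_size, stride):
    """Output length along one dimension under SAME padding."""
    return math.ceil(in_size / stride)


image_size = 28  # assumption: notMNIST image side length, not stated in this post
depth = 16       # same value as in the model below

size = image_size
trace = [size]
# Strides of conv1, pool1, conv2, pool2 in the modified model (all SAME padding).
for layer_stride in (1, 2, 1, 2):
    size = same_output_size(size, layer_stride)
    trace.append(size)

flattened = size * size * depth
print(trace)      # [28, 28, 14, 14, 7]
print(flattened)  # 784
```

Under these assumptions the two poolings shrink each side by a factor of 4, just as the two stride-2 convolutions did in the original model, so the fully connected layer in the model below can keep its input size of (image_size // 4) * (image_size // 4) * depth.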

    # image_size, num_channels, num_labels and the *_dataset / *_labels arrays
    # are assumed to be defined earlier (they are not shown in this post).
    batch_size = 16
    patch_size = 5
    depth = 16
    num_hidden = 64

    graph = tf.Graph()

    with graph.as_default():

        # Input data.
        tf_train_dataset = tf.placeholder(
            tf.float32, shape=(batch_size, image_size, image_size, num_channels))
        tf_train_labels = tf.placeholder(tf.float32, shape=(batch_size, num_labels))
        tf_valid_dataset = tf.constant(valid_dataset)
        tf_test_dataset = tf.constant(test_dataset)

        # Variables.
        layer1_weights = tf.Variable(tf.truncated_normal(
            [patch_size, patch_size, num_channels, depth], stddev=0.1))
        layer1_biases = tf.Variable(tf.zeros([depth]))
        layer2_weights = tf.Variable(tf.truncated_normal(
            [patch_size, patch_size, depth, depth], stddev=0.1))
        layer2_biases = tf.Variable(tf.constant(1.0, shape=[depth]))
        # Parenthesized so the intent (two halvings per side) is explicit.
        layer3_weights = tf.Variable(tf.truncated_normal(
            [(image_size // 4) * (image_size // 4) * depth, num_hidden], stddev=0.1))
        layer3_biases = tf.Variable(tf.constant(1.0, shape=[num_hidden]))
        layer4_weights = tf.Variable(tf.truncated_normal(
            [num_hidden, num_labels], stddev=0.1))
        layer4_biases = tf.Variable(tf.constant(1.0, shape=[num_labels]))

        # Model with Max Pooling
        def model(data):
            conv = tf.nn.conv2d(data, layer1_weights, [1, 1, 1, 1], padding='SAME')  # update stride as 1
            hidden = tf.nn.relu(conv + layer1_biases)
            pool = tf.nn.max_pool(hidden, [1, 2, 2, 1], [1, 2, 2, 1], padding='SAME')  # add max pooling
            conv = tf.nn.conv2d(pool, layer2_weights, [1, 1, 1, 1], padding='SAME')  # update stride as 1
            hidden = tf.nn.relu(conv + layer2_biases)
            pool = tf.nn.max_pool(hidden, [1, 2, 2, 1], [1, 2, 2, 1], padding='SAME')  # add max pooling
            shape = pool.get_shape().as_list()
            reshape = tf.reshape(pool, [shape[0], shape[1] * shape[2] * shape[3]])
            hidden = tf.nn.relu(tf.matmul(reshape, layer3_weights) + layer3_biases)
            return tf.matmul(hidden, layer4_weights) + layer4_biases

        # Training computation.
        logits = model(tf_train_dataset)
        loss = tf.reduce_mean(
            tf.nn.softmax_cross_entropy_with_logits(labels=tf_train_labels, logits=logits))

        # Optimizer.
        optimizer = tf.train.GradientDescentOptimizer(0.05).minimize(loss)

        # Predictions for the training, validation, and test data.
        train_prediction = tf.nn.softmax(logits)
        valid_prediction = tf.nn.softmax(model(tf_valid_dataset))
        test_prediction = tf.nn.softmax(model(tf_test_dataset))

    num_steps = 3001

    with tf.Session(graph=graph) as session:
        tf.global_variables_initializer().run()
        print('Initialized')
        for step in range(num_steps):
            # Pick the next minibatch, wrapping around the training set.
            offset = (step * batch_size) % (train_labels.shape[0] - batch_size)
            batch_data = train_dataset[offset:(offset + batch_size), :, :, :]
            batch_labels = train_labels[offset:(offset + batch_size), :]
            feed_dict = {tf_train_dataset : batch_data, tf_train_labels : batch_labels}
            _, l, predictions = session.run(
                [optimizer, loss, train_prediction], feed_dict=feed_dict)
            if (step % 500 == 0):
                print('Minibatch loss at step %d: %f' % (step, l))
                print('Minibatch accuracy: %.1f%%' % accuracy(predictions, batch_labels))
                print('Validation accuracy: %.1f%%' % accuracy(
                    valid_prediction.eval(), valid_labels))
        print('Test accuracy: %.1f%%' % accuracy(test_prediction.eval(), test_labels))

The results are as follows:

    Initialized
    Minibatch loss at step 0: 3.217397
    Minibatch accuracy: 6.2%
    Validation accuracy: 10.2%
    Minibatch loss at step 500: 0.567970
    Minibatch accuracy: 75.0%
    Validation accuracy: 81.4%
    Minibatch loss at step 1000: 0.269530
    Minibatch accuracy: 87.5%
    Validation accuracy: 84.0%
    Minibatch loss at step 1500: 0.404251
    Minibatch accuracy: 81.2%
    Validation accuracy: 85.3%
    Minibatch loss at step 2000: 0.741879
    Minibatch accuracy: 81.2%
    Validation accuracy: 85.9%
    Minibatch loss at step 2500: 0.048788
    Minibatch accuracy: 100.0%
    Validation accuracy: 86.0%
    Minibatch loss at step 3000: 0.787112
    Minibatch accuracy: 75.0%
    Validation accuracy: 86.7%
    Test accuracy: 93.1%

Problem 2

Er, I haven't had time to finish this part yet...

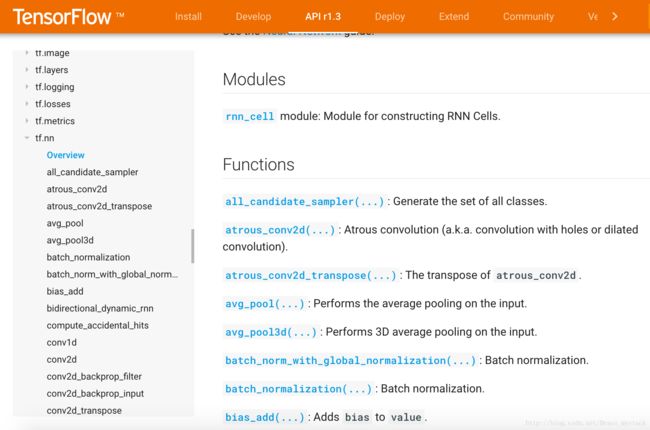

The TensorFlow Python API documentation (https://www.tensorflow.org/api_docs/python/) is a great resource: commonly used functions such as tf.nn.conv2d, tf.nn.max_pool and tf.nn.relu are all described there in detail.