统计学习(李航)提升方法(AdaBoost)

Boost

“装袋”(bagging)和“提升”(boost)是构建组合模型的两种最主要的方法,所谓的组合模型是由多个基本模型构成的模型,组合模型的预测效果往往比任意一个基本模型的效果都要好。

-

装袋:每个基本模型由从总体样本中随机抽样得到的不同数据集进行训练得到,通过重抽样得到不同训练数据集的过程称为装袋。

-

提升:每个基本模型训练时的数据集采用不同权重,针对上一个基本模型分类错误的样本增加权重,使得新的模型重点关注误分类样本

AdaBoost

AdaBoost是AdaptiveBoost的缩写,表明该算法是具有适应性的提升算法。

算法的步骤如下:

1)给每个训练样本( x 1 , x 2 , … . , x N x_{1},x_{2},….,x_{N} x1,x2,….,xN)分配权重,初始权重 w 1 w_{1} w1均为1/N。

2)针对带有权值的样本进行训练,得到模型 G m G_m Gm(初始模型为G1)。

3)计算模型 G m G_m Gm的误分率 e m = ∑ i = 1 N w i I ( y i ̸ = G m ( x i ) ) e_m=\sum_{i=1}^Nw_iI(y_i\not= G_m(x_i)) em=∑i=1NwiI(yi̸=Gm(xi))

4)计算模型 G m G_m Gm的系数 α m = 0.5 log [ ( 1 − e m ) / e m ] \alpha_m=0.5\log[(1-e_m)/e_m] αm=0.5log[(1−em)/em]

5)根据误分率e和当前权重向量 w m w_m wm更新权重向量 w m + 1 w_{m+1} wm+1。

6)计算组合模型 f ( x ) = ∑ m = 1 M α m G m ( x i ) f(x)=\sum_{m=1}^M\alpha_mG_m(x_i) f(x)=∑m=1MαmGm(xi)的误分率。

7)当组合模型的误分率或迭代次数低于一定阈值,停止迭代;否则,回到步骤2)

import numpy as np

import pandas as pd

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

import matplotlib.pyplot as plt

%matplotlib inline

# data

def create_data():

iris = load_iris()

df = pd.DataFrame(iris.data, columns=iris.feature_names)

df['label'] = iris.target

df.columns = ['sepal length', 'sepal width', 'petal length', 'petal width', 'label']

data = np.array(df.iloc[:100, [0, 1, -1]])

for i in range(len(data)):

if data[i,-1] == 0:

data[i,-1] = -1

#print(data)

return data[:,:2], data[:,-1]

X, y = create_data()

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)

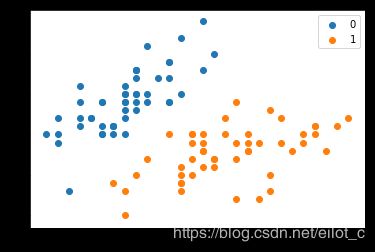

plt.scatter(X[:50,0],X[:50,1], label='0')

plt.scatter(X[50:,0],X[50:,1], label='1')

plt.legend()

class AdaBoost:

def __init__(self, n_estimators=50, learning_rate=1.0):

self.clf_num = n_estimators

self.learning_rate = learning_rate

def init_args(self, datasets, labels):

self.X = datasets

self.Y = labels

self.M, self.N = datasets.shape

# 弱分类器数目和集合

self.clf_sets = []

# 初始化weights

self.weights = [1.0/self.M]*self.M

# G(x)系数 alpha

self.alpha = []

def _G(self, features, labels, weights):

m = len(features)

error = 100000.0 # 无穷大

best_v = 0.0

# 单维features

features_min = min(features)

features_max = max(features)

n_step = (features_max - features_min + self.learning_rate) // self.learning_rate

# print('n_step:{}'.format(n_step))

direct, compare_array = None, None

for i in range(1, int(n_step)):

v = features_min + self.learning_rate * i

if v not in features:

# 误分类计算

compare_array_positive = np.array([1 if features[k] > v else -1 for k in range(m)])

weight_error_positive = sum([weights[k] for k in range(m) if compare_array_positive[k] != labels[k]])

compare_array_nagetive = np.array([-1 if features[k] > v else 1 for k in range(m)])

weight_error_nagetive = sum([weights[k] for k in range(m) if compare_array_nagetive[k] != labels[k]])

if weight_error_positive < weight_error_nagetive:

weight_error = weight_error_positive

_compare_array = compare_array_positive

direct = 'positive'

else:

weight_error = weight_error_nagetive

_compare_array = compare_array_nagetive

direct = 'nagetive'

# print('v:{} error:{}'.format(v, weight_error))

if weight_error < error:

error = weight_error

compare_array = _compare_array

best_v = v

return best_v, direct, error, compare_array

# 计算alpha

def _alpha(self, error):

return 0.5 * np.log((1-error)/error)

# 规范化因子

def _Z(self, weights, a, clf):

return sum([weights[i]*np.exp(-1*a*self.Y[i]*clf[i]) for i in range(self.M)])

# 权值更新

def _w(self, a, clf, Z):

for i in range(self.M):

self.weights[i] = self.weights[i]*np.exp(-1*a*self.Y[i]*clf[i])/ Z

# G(x)的线性组合

def _f(self, alpha, clf_sets):

pass

def G(self, x, v, direct):

if direct == 'positive':

return 1 if x > v else -1

else:

return -1 if x > v else 1

def fit(self, X, y):

self.init_args(X, y)

for epoch in range(self.clf_num):

best_clf_error, best_v, clf_result = 100000, None, None

# 根据特征维度, 选择误差最小的

for j in range(self.N):

features = self.X[:, j]

# 分类阈值,分类误差,分类结果

v, direct, error, compare_array = self._G(features, self.Y, self.weights)

if error < best_clf_error:

best_clf_error = error

best_v = v

final_direct = direct

clf_result = compare_array

axis = j

# print('epoch:{}/{} feature:{} error:{} v:{}'.format(epoch, self.clf_num, j, error, best_v))

if best_clf_error == 0:

break

# 计算G(x)系数a

a = self._alpha(best_clf_error)

self.alpha.append(a)

# 记录分类器

self.clf_sets.append((axis, best_v, final_direct))

# 规范化因子

Z = self._Z(self.weights, a, clf_result)

# 权值更新

self._w(a, clf_result, Z)

# print('classifier:{}/{} error:{:.3f} v:{} direct:{} a:{:.5f}'.format(epoch+1, self.clf_num, error, best_v, final_direct, a))

# print('weight:{}'.format(self.weights))

# print('\n')

def predict(self, feature):

result = 0.0

for i in range(len(self.clf_sets)):

axis, clf_v, direct = self.clf_sets[i]

f_input = feature[axis]

result += self.alpha[i] * self.G(f_input, clf_v, direct)

# sign

return 1 if result > 0 else -1

def score(self, X_test, y_test):

right_count = 0

for i in range(len(X_test)):

feature = X_test[i]

if self.predict(feature) == y_test[i]:

right_count += 1

return right_count / len(X_test)

X = np.arange(10).reshape(10, 1)

y = np.array([1, 1, 1, -1, -1, -1, 1, 1, 1, -1])

clf = AdaBoost(n_estimators=3, learning_rate=0.5)

clf.fit(X, y)

X, y = create_data()

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.33)

clf = AdaBoost(n_estimators=100, learning_rate=0.1)

clf.fit(X_train, y_train)

clf.score(X_test, y_test)

out: 0.8484848484848485

# 100次结果

result = []

for i in range(1, 101):

X, y = create_data()

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.33)

clf = AdaBoost(n_estimators=1000, learning_rate=0.01)

clf.fit(X_train, y_train)

r = clf.score(X_test, y_test)

# print('{}/100 score:{}'.format(i, r))

result.append(r)

print('average score:{:.3f}%'.format(sum(result)))

sklearn.ensemble.AdaBoostClassifier

-

algorithm:这个参数只有AdaBoostClassifier有。主要原因是scikit-learn实现了两种Adaboost分类算法,SAMME和SAMME.R。两者的主要区别是弱学习器权重的度量,SAMME使用了和我们的原理篇里二元分类Adaboost算法的扩展,即用对样本集分类效果作为弱学习器权重,而SAMME.R使用了对样本集分类的预测概率大小来作为弱学习器权重。由于SAMME.R使用了概率度量的连续值,迭代一般比SAMME快,因此AdaBoostClassifier的默认算法algorithm的值也是SAMME.R。我们一般使用默认的SAMME.R就够了,但是要注意的是使用了SAMME.R, 则弱分类学习器参数base_estimator必须限制使用支持概率预测的分类器。SAMME算法则没有这个限制。

-

n_estimators: AdaBoostClassifier和AdaBoostRegressor都有,就是我们的弱学习器的最大迭代次数,或者说最大的弱学习器的个数。一般来说n_estimators太小,容易欠拟合,n_estimators太大,又容易过拟合,一般选择一个适中的数值。默认是50。在实际调参的过程中,我们常常将n_estimators和下面介绍的参数learning_rate一起考虑。

-

learning_rate: AdaBoostClassifier和AdaBoostRegressor都有,即每个弱学习器的权重缩减系数ν

-

base_estimator:AdaBoostClassifier和AdaBoostRegressor都有,即我们的弱分类学习器或者弱回归学习器。理论上可以选择任何一个分类或者回归学习器,不过需要支持样本权重。我们常用的一般是CART决策树或者神经网络MLP。

from sklearn.ensemble import AdaBoostClassifier

clf = AdaBoostClassifier(n_estimators=100, learning_rate=0.5)

clf.fit(X_train, y_train)

clf.score(X_test, y_test)

来自 https://github.com/wzyonggege/statistical-learning-method