This post demonstrates and explains a number of Elasticsearch APIs, as a quick reference for day-to-day work.

I. Index API

Create an index

curl -XPUT 'http://127.0.0.1:9200/test_index/' -d '{

"settings" : {

"index" : {

"number_of_shards" : 3,

"number_of_replicas" : 1

}

},

"mappings" : {

"type_test_01" : {

"properties" : {

"field1" : { "type" : "string"},

"field2" : { "type" : "string"}

}

},

"type_test_02" : {

"properties" : {

"field1" : { "type" : "string"},

"field2" : { "type" : "string"}

}

}

}

}'
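The same request body can also be assembled in code before it is sent. The following is a minimal Python sketch; the helper name `build_index_body` is hypothetical and not part of any client library, and the code only builds and serializes the JSON without contacting a cluster. Note that the `string` field type and several mapping types per index belong to older Elasticsearch releases, which this post appears to use; later versions replaced `string` with `text` and `keyword`.

```python
import json


def build_index_body(shards, replicas, type_names, field_names):
    """Build a create-index body with one mapping per type (hypothetical helper)."""
    properties = {name: {"type": "string"} for name in field_names}
    return {
        "settings": {
            "index": {
                "number_of_shards": shards,
                "number_of_replicas": replicas,
            }
        },
        # dict(properties) gives each type its own copy of the field definitions
        "mappings": {t: {"properties": dict(properties)} for t in type_names},
    }


body = build_index_body(3, 1, ["type_test_01", "type_test_02"], ["field1", "field2"])
print(json.dumps(body, indent=2))
```

The printed JSON can be passed to curl with -d, exactly like the hand-written body above.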

Check whether the index exists

curl -XHEAD -i 'http://127.0.0.1:9200/test_index?pretty'

Note: the ?pretty parameter pretty-prints the JSON response so that it is easier to read.

View the index's mapping

curl -XGET -i 'http://127.0.0.1:9200/test_index/_mapping?pretty'

Check whether the type article exists in the index

curl -XHEAD -i 'http://127.0.0.1:9200/test_index/article'

View the mapping of the type type_test_01 in the test_index index

curl -XGET -i 'http://127.0.0.1:9200/test_index/_mapping/type_test_01/?pretty'

Test an analyzer

curl -XGET 'http://127.0.0.1:9200/_analyze?pretty' -d '

{

"analyzer" : "standard",

"text" : "this is a test"

}'
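To give a feel for what the _analyze call returns, here is a very rough Python stand-in for the tokenization step. It is only an illustration under simplifying assumptions: the function name `rough_standard_tokens` is made up, and the real standard analyzer follows Unicode text segmentation rules and also reports offsets and positions for each token.

```python
import re


def rough_standard_tokens(text):
    """Rough stand-in for the standard analyzer: lowercase, then split on non-word characters."""
    return re.findall(r"\w+", text.lower())


print(rough_standard_tokens("this is a test"))
```

For simple English input like the request above, the tokens should correspond to the individual lowercase words.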

Show the index's statistics

curl 'http://127.0.0.1:9200/test_index/_stats?pretty'

Show the index's segment information (listed per shard)

curl -XGET 'http://127.0.0.1:9200/test_index/_segments?pretty'

Delete an index

curl -XDELETE http://127.0.0.1:9200/logstash-nginxacclog-2016.09.20/

II. Count API

Basic syntax

curl -XGET 'http://elasticsearch_server:port/<index name>/<type (optional, may be omitted)>/_count'

Examples:

1. Count the documents in the logstash-nginxacclog-2016.10.09 index

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_count'

2. Count the documents in the logstash-nginxacclog-2016.10.09 index whose status is 200

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_count?q=status:200'

The same count written in the Query DSL

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_count' -d '

{ "query":

{ "term":{"status":"200"}}

}'
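The URL pattern for the Count API can be sketched as a small Python helper. The function name `count_url` and its parameters are hypothetical; the code only builds the URL string and sends nothing.

```python
from urllib.parse import quote, urlencode


def count_url(base, index, doc_type=None, q=None):
    """Build a _count URL; doc_type and q are optional (hypothetical helper)."""
    parts = [base.rstrip("/"), quote(index)]
    if doc_type:
        parts.append(quote(doc_type))
    parts.append("_count")
    url = "/".join(parts)
    if q:
        # urlencode percent-encodes the colon in "status:200"
        url += "?" + urlencode({"q": q})
    return url


print(count_url("http://127.0.0.1:9200", "logstash-nginxacclog-2016.10.09", q="status:200"))
```

Leaving out doc_type counts across the whole index, which matches the "optional" type segment in the syntax above.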

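III. Aggregations API (data analysis and statistics)

Note: the metric aggregations in this section (avg, max, min, sum, stats, percentiles) compute over numeric fields; date fields are used with the date-based aggregations shown later.

1. Average

Use case: compute the average response time in the access log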

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"avg_num" : { "avg" : { "field" : "responsetime" } }

},"size":0

}'

# "size":0 suppresses the matching documents, so only the aggregation result is returned.

{

"took" : 598,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"avg_num" : {

"value" : 0.0472613558675975

}

}

}

# The average response time is 0.0472613558675975 seconds

2. Maximum

Use case: find the longest response time in the access log

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"max_num" : { "max" : { "field" : "responsetime" } }

},"size":0

}'

{

"took" : 29813,

"timed_out" : false,

"_shards" : {

"total" : 431,

"successful" : 431,

"failed" : 0

},

"hits" : {

"total" : 476952009,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"max_num" : {

"value" : 65.576

}

}

}

# The maximum response time is 65.576 seconds

3. Minimum

Use case: find the fastest response time in the access log

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"min_num" : { "min" : { "field" : "responsetime" } }

},"size":0

}'

{

"took" : 2145,

"timed_out" : false,

"_shards" : {

"total" : 431,

"successful" : 431,

"failed" : 0

},

"hits" : {

"total" : 477156773,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"min_num" : {

"value" : 0.0

}

}

}

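# Surprisingly, the fastest response time is 0. Checking the logs, I found that the requests with a response time of 0 were ones that nginx had rejected.

4. Sum

Use case: total up how much traffic was sent in responses over one day of access logs.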

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"sim_num" : { "sum" : { "field" : "size" } }

},"size":0

}'

{

"took" : 1226,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"sim_num" : {

"value" : 6.9285945505E10

}

}

}

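# The value is large: the E10 suffix means 6.9285945505 x 10^10. By my estimate that is roughly 70 GB of traffic (assuming the size field is recorded in bytes).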
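The conversion can be checked with a few lines of Python. This sketch assumes the size field is recorded in bytes, which the post does not state explicitly.

```python
total_bytes = 6.9285945505E10  # "sim_num" value from the response above

gb = total_bytes / 10**9    # decimal gigabytes
gib = total_bytes / 2**30   # binary gibibytes

print(f"{gb:.1f} GB, {gib:.1f} GiB")
```

Whether the figure reads as about 69 GB or about 65 GiB depends on which unit convention is used.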

5. Common summary statistics

These include the maximum, minimum, average, sum and count.

Use case: compute the maximum, minimum, average, sum and count of responsetime in the access log

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"like_stats" : { "stats" : { "field" : "responsetime" } }

},"size":0

}'

{

"took" : 2868,

"timed_out" : false,

"_shards" : {

"total" : 431,

"successful" : 431,

"failed" : 0

},

"hits" : {

"total" : 477797577,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"like_stats" : {

"count" : 469345191,

"min" : 0.0,

"max" : 65.576,

"avg" : 0.06088492952649428,

"sum" : 2.8576048877634E7

}

}

}

This is an extended version of the stats aggregation above; it returns several additional statistics

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"like_stats" : { "extended_stats" : { "field" : "responsetime" } }

},"size":0

}'

{

"took" : 2830,

"timed_out" : false,

"_shards" : {

"total" : 431,

"successful" : 431,

"failed" : 0

},

"hits" : {

"total" : 478145456,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"like_stats" : {

"count" : 469687072,

"min" : 0.0,

"max" : 65.576,

"avg" : 0.06087745173159307,

"sum" : 2.859335205463328E7,

"sum_of_squares" : 1.3162790273264633E7,

"variance" : 0.02431853151732958,

"std_deviation" : 0.1559440012226491,

"std_deviation_bounds" : {

"upper" : 0.3727654541768913,

"lower" : -0.2510105507137051

}

}

}

}

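# The additional fields in the response are:

# sum_of_squares: sum of squares

# variance: variance

# std_deviation: standard deviation

# std_deviation_bounds: the mean plus and minus two standard deviations (upper and lower)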
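The relationship between these fields can be reproduced from the numbers in the response. A minimal Python sketch, assuming the variance is the population variance (mean of squares minus square of the mean) and the bounds are two standard deviations from the mean, which is consistent with the values shown:

```python
import math

# Values copied from the extended_stats response above
count = 469687072
avg = 0.06087745173159307
sum_of_squares = 1.3162790273264633E7

variance = sum_of_squares / count - avg ** 2   # population variance
std_deviation = math.sqrt(variance)
upper = avg + 2 * std_deviation
lower = avg - 2 * std_deviation

print(variance, std_deviation, upper, lower)
```

The lower bound is negative here simply because the standard deviation is larger than the mean; response times themselves cannot be negative.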

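6. Percentiles: the value at or below which a given percentage of the data falls

Use case: find the response-time value at each percentile of the access log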

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"outlier" : { "percentiles" : { "field" : "responsetime" } }

},"size":0

}'

{

"took" : 60737,

"timed_out" : false,

"_shards" : {

"total" : 431,

"successful" : 431,

"failed" : 0

},

"hits" : {

"total" : 478287997,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"outlier" : {

"values" : {

"1.0" : 0.0,

"5.0" : 0.0,

"25.0" : 0.02,

"50.0" : 0.038999979789136247,

"75.0" : 0.06247223731250421,

"95.0" : 0.16479760590682113,

"99.0" : 0.520510492464275

}

}

}

}

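# The keys under values are the percentiles; the numbers on the right are the corresponding field values. That is:

# 1% of requests have a response time less than or equal to 0

# 5% of requests have a response time less than or equal to 0

# 25% of requests have a response time less than or equal to 0.02

# 50% of requests have a response time less than or equal to 0.038999979789136247

# 75% of requests have a response time less than or equal to 0.06247223731250421

# 95% of requests have a response time less than or equal to 0.16479760590682113

# 99% of requests have a response time less than or equal to 0.520510492464275

# The percents parameter lets you request custom percentiles, for example 10%, 30%, 60% and 90%.

# Note: in my tests this aggregation works only on numeric fields and cannot be applied to string fields.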

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"outlier" : { "percentiles" : {

"field" : "status",

"percents":[5, 10, 20, 50, 99.9]

}

}

},"size":0

}'
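To make the meaning of a percentile concrete, here is a small Python sketch that uses the simple nearest-rank definition on made-up sample data. The function name `percentile` and the sample values are illustrative assumptions; Elasticsearch's percentiles aggregation is approximate on large data sets, so its results can differ slightly from an exact computation like this one.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile: smallest value with at least p% of the data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical response times in seconds
sample = [0.0, 0.01, 0.02, 0.02, 0.03, 0.04, 0.05, 0.06, 0.16, 0.52]
for p in (25, 50, 75, 99):
    print(p, percentile(sample, p))
```

Each printed pair reads the same way as a line in the values object of the response: p percent of the samples are at or below the printed value.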

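7. Percentile ranks: the percentage of values at or below given thresholds

Use case: find what percentage of the requests in the log have a response time at or below 0, 0.01, 0.1 and 0.2.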

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"outlier" : { "percentile_ranks" : {

"field" : "responsetime",

"values":[0, 0.01, 0.1, 0.2]

}

}

},"size":0

}'

{

"took" : 6950,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"outlier" : {

"values" : {

"0.0" : 8.79897648675993,

"0.01" : 17.90331319256336,

"0.1" : 91.18297638776373,

"0.2" : 98.22564774611764

}

}

}

}

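# 8.8% of requests have a response time less than or equal to 0

# 17.9% of requests have a response time less than or equal to 0.01

# 91.2% of requests have a response time less than or equal to 0.1

# 98.2% of requests have a response time less than or equal to 0.2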
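A percentile rank is the inverse question of a percentile: given a threshold, what share of the values lies at or below it. A minimal Python sketch on made-up sample data (the function name `percentile_rank` and the sample are hypothetical, and the computation here is exact rather than approximate):

```python
def percentile_rank(values, threshold):
    """Percentage of values that are less than or equal to threshold."""
    at_or_below = sum(1 for v in values if v <= threshold)
    return 100.0 * at_or_below / len(values)


# Hypothetical response times in seconds
sample = [0.0, 0.005, 0.01, 0.03, 0.05, 0.08, 0.09, 0.1, 0.15, 0.3]
for threshold in (0, 0.01, 0.1, 0.2):
    print(threshold, percentile_rank(sample, threshold))
```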

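8. Count the documents that fall in each numeric range

Use case: count the access-log documents whose response time falls in each of three ranges: below 0.02, from 0.02 to 0.1, and 0.1 or above.

"ranges":[{"to": 0.02}, {"from":0.02,"to":0.1},{"from":0.1}]

{"to": 0.02} counts the documents with a response time below 0.02

{"from":0.02,"to":0.1} counts the documents with a response time from 0.02 up to, but not including, 0.1

{"from":0.1} counts the documents with a response time of 0.1 or more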

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"range_info" : { "range" : {

"field" : "responsetime",

"ranges":[{"to": 0.02}, {"from":0.02,"to":0.1},{"from":0.1}]

}

}

},"size":0

}'

{

"took" : 474,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"range_info" : {

"buckets" : [ {

"key" : "*-0.02",

"to" : 0.02,

"to_as_string" : "0.02",

"doc_count" : 9093600

}, {

"key" : "0.02-0.1",

"from" : 0.02,

"from_as_string" : "0.02",

"to" : 0.1,

"to_as_string" : "0.1",

"doc_count" : 20547128

}, {

"key" : "0.1-*",

"from" : 0.1,

"from_as_string" : "0.1",

"doc_count" : 2879418

} ]

}

}

}

# Reading the buckets in this response:

# "*-0.02": 9093600 documents have a response time below 0.02

# "0.02-0.1": 20547128 documents have a response time from 0.02 up to 0.1

# "0.1-*": 2879418 documents have a response time of 0.1 or more
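The bucketing rule of the range aggregation can be sketched in Python: the from bound is inclusive and the to bound is exclusive, so each value lands in exactly one of the adjacent buckets. The function name `range_bucket_counts` and the sample values are hypothetical.

```python
def range_bucket_counts(values, ranges):
    """Count values per range; "from" is inclusive and "to" is exclusive."""
    counts = []
    for r in ranges:
        low = r.get("from", float("-inf"))
        high = r.get("to", float("inf"))
        counts.append(sum(1 for v in values if low <= v < high))
    return counts


ranges = [{"to": 0.02}, {"from": 0.02, "to": 0.1}, {"from": 0.1}]
sample = [0.0, 0.019, 0.02, 0.05, 0.1, 0.3]  # hypothetical response times
print(range_bucket_counts(sample, ranges))
```

Note how the boundary values 0.02 and 0.1 are counted in the bucket that starts at them, not in the one that ends at them.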

9. Count the documents that fall in each time range

Use case: count the access-log documents dated before 2016-10-09T01:00:00, and those dated from 2016-10-09T02:00:00 onward.

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "match_all" : {} },

"aggs" : {

"range_info" : { "date_range" : {

"field" : "@timestamp",

"ranges":[{"to": "2016-10-09T01:00:00"},{"from":"2016-10-09T02:00:00"}]

}

}

},"size":0

}'

{

"took" : 432,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"range_info" : {

"buckets" : [ {

"key" : "*-2016-10-09T01:00:00.000Z",

"to" : 1.4759748E12,

"to_as_string" : "2016-10-09T01:00:00.000Z",

"doc_count" : 613460

}, # 613460 documents are dated before 2016-10-09T01:00:00

{

"key" : "2016-10-09T02:00:00.000Z-*",

"from" : 1.4759784E12,

"from_as_string" : "2016-10-09T02:00:00.000Z",

"doc_count" : 31264881

} # 31264881 documents are dated 2016-10-09T02:00:00 or later

]

}

}

}
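The numeric to and from values in the response are epoch timestamps in milliseconds. A short Python sketch (the helper name `millis_to_iso` is hypothetical) converts them back to readable UTC times and confirms that they agree with the to_as_string and from_as_string fields:

```python
from datetime import datetime, timezone


def millis_to_iso(ms):
    """Convert epoch milliseconds (as in "to" / "from") to an ISO-8601 UTC string."""
    return datetime.fromtimestamp(int(ms) / 1000, tz=timezone.utc).isoformat()


print(millis_to_iso(1.4759748E12))
print(millis_to_iso(1.4759784E12))
```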

10. Making an aggregation independent of the query results: "global":{}

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "term" : { "status" : "200" } },

"aggs" :{

"all_articles":{

"global":{},

"aggs":{

"sum_like": {"sum":{"field": "responsetime"}}

}

}

},"size":0

}'

{

"took" : 1519,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 26686196,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"all_articles" : {

"doc_count" : 32523067,

"sum_like" : {

"value" : 1536946.1929722272

}

}

}

}

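# The hits total shows that the query matched only 26686196 documents, whereas the aggregation ran over 32523067 documents, more than the query matched.

# The aggregated sum is 1536946.1929722272

# Now compare with the same request without "global":{}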

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :

{ "term" : { "status" : "200" } },

"aggs":{

"sum_like": {"sum":{"field": "responsetime"}}

},"size":0

}'

{

"took" : 1326,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 26686196,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"sum_like" : {

"value" : 1526710.3929916811

}

}

}

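# This sum is smaller than the one above, which shows that without "global":{} the aggregation covers only the documents matched by the query.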
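The difference between the two scopes can be imitated in memory with a handful of made-up documents. This Python sketch is only an analogy for how the aggregation scope changes; the documents and values are hypothetical.

```python
# Hypothetical documents standing in for access-log entries
docs = [
    {"status": 200, "responsetime": 0.04},
    {"status": 200, "responsetime": 0.06},
    {"status": 404, "responsetime": 0.01},
    {"status": 500, "responsetime": 1.50},
]

matched = [d for d in docs if d["status"] == 200]   # plays the role of the term query

# Default scope: the aggregation only sees the documents matched by the query
sum_matched = sum(d["responsetime"] for d in matched)

# Global scope: the aggregation sees every document, regardless of the query
sum_global = sum(d["responsetime"] for d in docs)

print(len(matched), sum_matched)
print(len(docs), sum_global)
```

As in the two responses above, the hit count stays at the number of matched documents in both cases; only the set of documents feeding the sum changes.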

11. Histogram aggregation

Counts the documents that fall into each fixed-width interval of a field's values.

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"aggs" :{

"like_histogram":{

"histogram":{"field": "status", "interval": 200,

"min_doc_count": 1}

}

},"size":0

}'

# This runs on the status field with an interval of 200. To avoid empty buckets for intervals that match nothing, the minimum document count is set to 1: "histogram":{"field": "status", "interval": 200, "min_doc_count": 1}
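The bucket key of a histogram is the value rounded down to a multiple of the interval. A minimal Python sketch on made-up status codes (the function name `histogram` and the sample list are hypothetical):

```python
import math
from collections import Counter


def histogram(values, interval, min_doc_count=1):
    """Group values into fixed-width buckets keyed by the bucket's lower bound."""
    counts = Counter(math.floor(v / interval) * interval for v in values)
    return {key: n for key, n in sorted(counts.items()) if n >= min_doc_count}


statuses = [200, 200, 301, 304, 404, 499, 502]  # hypothetical status codes
print(histogram(statuses, 200))
```

With an interval of 200, status codes 200 to 399 share the bucket keyed 200 and codes 400 to 599 share the bucket keyed 400, so a smaller interval such as 100 may be more useful for separating 2xx, 3xx, 4xx and 5xx responses.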

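12. Histogram aggregation grouped by time

"date_histogram":{"field": "@timestamp", "interval": "1d","format": "yyyy-MM-dd"}

"field": "@timestamp" names the field that holds the timestamp

"interval": "1d" groups by day; use 1M for month, 1H for hour, 1m for minute

"format": "yyyy-MM-dd" sets the output format of the bucket keys

Count the log entries produced each day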

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-*/_search?pretty' -d '{

"aggs" :{

"date_histogram_info":{

"date_histogram":{"field": "@timestamp", "interval": "1d","format": "yyyy-MM-dd",

"min_doc_count": 1}

}

}

}'

"aggregations" : {

"date_histogram_info" : {

"buckets" : [ {

"key_as_string" : "2016-09-27",

"key" : 1474934400000,

"doc_count" : 6895375

}, {

"key_as_string" : "2016-09-28",

"key" : 1475020800000,

"doc_count" : 1255775

}, {

"key_as_string" : "2016-09-29",

"key" : 1475107200000,

"doc_count" : 38512862

}, {

"key_as_string" : "2016-09-30",

"key" : 1475193600000,

"doc_count" : 35314225

}, {

"key_as_string" : "2016-10-01",

"key" : 1475280000000,

"doc_count" : 45358162

}, {

"key_as_string" : "2016-10-02",

"key" : 1475366400000,

"doc_count" : 42058056

}, {

"key_as_string" : "2016-10-03",

"key" : 1475452800000,

"doc_count" : 39945587

}, {

"key_as_string" : "2016-10-04",

"key" : 1475539200000,

"doc_count" : 39509128

}, {

"key_as_string" : "2016-10-05",

"key" : 1475625600000,

"doc_count" : 40506342

}, {

"key_as_string" : "2016-10-06",

"key" : 1475712000000,

"doc_count" : 43303499

}, {

"key_as_string" : "2016-10-07",

"key" : 1475798400000,

"doc_count" : 44234780

}, {

"key_as_string" : "2016-10-08",

"key" : 1475884800000,

"doc_count" : 32880600

}, {

"key_as_string" : "2016-10-09",

"key" : 1475971200000,

"doc_count" : 32523067

}, {

"key_as_string" : "2016-10-10",

"key" : 1476057600000,

"doc_count" : 31454044

}, {

"key_as_string" : "2016-10-11",

"key" : 1476144000000,

"doc_count" : 2018401

} ]

}

}

}

# Grouping by hour

# Count the log entries produced in each hour of one day

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"aggs" :{

"date_histogram_info":{

"date_histogram":{"field": "@timestamp", "interval": "1H","format": "yyyy-MM-dd-H",

"min_doc_count": 1}

}

},"size":0

}'

{

"took" : 530,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 32523067,

"max_score" : 0.0,

"hits" : [ ]

},

"aggregations" : {

"date_histogram_info" : {

"buckets" : [ {

"key_as_string" : "2016-10-09-0",

"key" : 1475971200000,

"doc_count" : 613460

}, {

"key_as_string" : "2016-10-09-1",

"key" : 1475974800000,

"doc_count" : 644726

}, {

"key_as_string" : "2016-10-09-2",

"key" : 1475978400000,

"doc_count" : 687196

}, {

"key_as_string" : "2016-10-09-3",

"key" : 1475982000000,

"doc_count" : 730831

}, {

"key_as_string" : "2016-10-09-4",

"key" : 1475985600000,

"doc_count" : 1460320

}, {

"key_as_string" : "2016-10-09-5",

"key" : 1475989200000,

"doc_count" : 1469098

}, {

"key_as_string" : "2016-10-09-6",

"key" : 1475992800000,

"doc_count" : 1004399

}, {

"key_as_string" : "2016-10-09-7",

"key" : 1475996400000,

"doc_count" : 962843

}, {

"key_as_string" : "2016-10-09-8",

"key" : 1476000000000,

"doc_count" : 1232560

}, {

"key_as_string" : "2016-10-09-9",

"key" : 1476003600000,

"doc_count" : 1809741

}, {

"key_as_string" : "2016-10-09-10",

"key" : 1476007200000,

"doc_count" : 2802804

}, {

"key_as_string" : "2016-10-09-11",

"key" : 1476010800000,

"doc_count" : 3941192

}, {

"key_as_string" : "2016-10-09-12",

"key" : 1476014400000,

"doc_count" : 4631032

}, {

"key_as_string" : "2016-10-09-13",

"key" : 1476018000000,

"doc_count" : 3651968

}, {

"key_as_string" : "2016-10-09-14",

"key" : 1476021600000,

"doc_count" : 2079933

}, {

"key_as_string" : "2016-10-09-15",

"key" : 1476025200000,

"doc_count" : 973578

}, {

"key_as_string" : "2016-10-09-16",

"key" : 1476028800000,

"doc_count" : 517435

}, {

"key_as_string" : "2016-10-09-17",

"key" : 1476032400000,

"doc_count" : 388382

}, {

"key_as_string" : "2016-10-09-18",

"key" : 1476036000000,

"doc_count" : 361296

}, {

"key_as_string" : "2016-10-09-19",

"key" : 1476039600000,

"doc_count" : 345926

}, {

"key_as_string" : "2016-10-09-20",

"key" : 1476043200000,

"doc_count" : 342214

}, {

"key_as_string" : "2016-10-09-21",

"key" : 1476046800000,

"doc_count" : 360897

}, {

"key_as_string" : "2016-10-09-22",

"key" : 1476050400000,

"doc_count" : 714336

}, {

"key_as_string" : "2016-10-09-23",

"key" : 1476054000000,

"doc_count" : 796900

} ]

}

}

}

# This shows how many log entries were produced in each hour from 0 to 23 of that day. With this data it is easy to identify the peak hours of the service.
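Picking the peak hour out of the buckets is a one-line maximum. The Python sketch below uses a few buckets copied from the response; keep in mind that the bucket keys are in UTC unless a time zone is configured on the aggregation, so the local peak hour may be shifted.

```python
# A few buckets copied from the response above (the full list has 24 entries)
buckets = [
    {"key_as_string": "2016-10-09-10", "doc_count": 2802804},
    {"key_as_string": "2016-10-09-11", "doc_count": 3941192},
    {"key_as_string": "2016-10-09-12", "doc_count": 4631032},
    {"key_as_string": "2016-10-09-13", "doc_count": 3651968},
    {"key_as_string": "2016-10-09-14", "doc_count": 2079933},
]

peak = max(buckets, key=lambda b: b["doc_count"])
print(peak["key_as_string"], peak["doc_count"])
```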

IV. Query DSL

curl -XGET 'http://127.0.0.1:9200/search_test/article/_count?pretty' -d '{

"query" :

{ "term" : { "title" : "article" } }

}'

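The Query DSL has two kinds of clauses:

1. Leaf query clauses

2. Compound query clauses

Query context & Filter context

In a query context, what matters is how well a document matches the query clause, and a relevance score is computed. In a filter context, what matters is only whether the document matches the clause; no relevance score is computed.

{"match_all":{}} matches all documents

{"match_all":{"boost":1.2}} sets the _score returned for every document (here 1.2)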

Term level queries

Returns the documents whose inverted index for the user field contains the term "kitty" (exact match)

{

"term":{"user":"kitty"}

}

Example:

curl -XGET 'http://169.254.135.217:9200/search_test/article/_count?pretty' -d '{

"query" :

{ "term" : { "user" : "kitty" } }

}'

Term level Range query

Example:

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '

{

"query" :

{ "range" :{

"status" :{ "gt" : 200, "lte" : 500, "boost" : 2.0 }

}

}

,"size":1

}'

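# "size":1 returns just one document, similar to LIMIT in SQL. By default the maximum is 10000.

# To retrieve more data, add the scroll parameter, for example /_search?scroll=1m, where 1m (1 minute) is how long the scroll context is kept alive.

# For the time-unit syntax, see: https://www.elastic.co/guide/en/elasticsearch/reference/current/common-options.html#time-units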
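The 10000 limit applies to the sum of from and size, so it also bounds how deep ordinary pagination can go. A minimal Python sketch, assuming the default result window of 10000; the helper name `page_params` is hypothetical:

```python
MAX_RESULT_WINDOW = 10000  # assumed default limit on from + size


def page_params(page, per_page):
    """Return from/size for a 1-based page, or raise if it exceeds the result window."""
    start = (page - 1) * per_page
    if start + per_page > MAX_RESULT_WINDOW:
        raise ValueError("from + size exceeds the result window; use scroll instead")
    return {"from": start, "size": per_page}


print(page_params(1, 10))
print(page_params(100, 100))
```

Requests beyond that window are the case where the scroll parameter described above becomes necessary.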

Term level Exists query

Example:

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '

{

"query":

{ "exists":{ "field":"status" }

}

}'

Term level Prefix and Wildcard

Prefix query example:

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :{

"prefix" :{"agent": "io" }

}

}'

Wildcard query example:

curl -XGET 'http://127.0.0.1:9200/logstash-nginxacclog-2016.10.09/_search?pretty' -d '{

"query" :{

"wildcard" :{"agent": "io*" }

}

}'

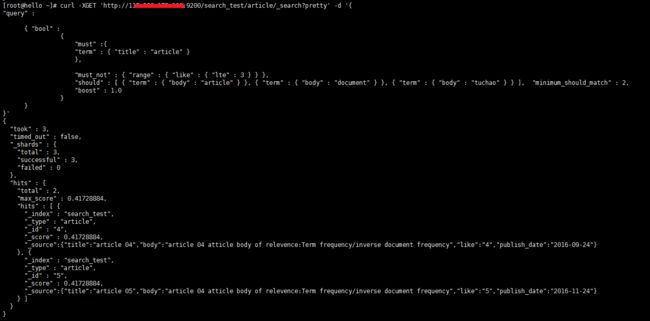

Compound query : Bool Query

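Bool Query has three commonly used branches:

1. must: clauses that a document must match

2. must_not: clauses that exclude a document when they match

3. should: works like an OR condition; "minimum_should_match" sets the minimum number of should clauses that must match for a document to pass.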

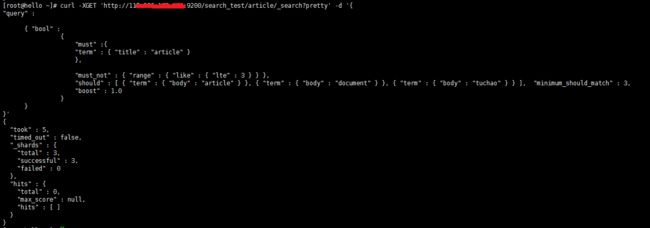

Suppose should contains three clauses and minimum_should_match is set to 2: a document passes only if at least two of the three clauses match. If minimum_should_match is changed to 3, all three clauses must match.

"should" : [ { "term" : { "body" : "article" } }, { "term" : { "body" : "document" } }, { "term" : { "body" : "tuchao" } } ], "minimum_should_match" : 3,

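Example:

Here I added to should a clause that cannot possibly match, body:'tuchao', because that string does not appear in any document, and set minimum_should_match to 2 so that two matching clauses are enough. As expected, the query returned results.

Next I changed minimum_should_match to 3 so that at least three clauses must match. That is clearly impossible, so the query returns nothing.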

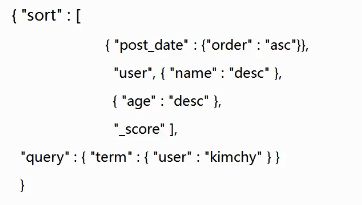
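The effect of minimum_should_match in the experiment above can be imitated with a small Python sketch. The function name `should_matches` and the sample document are hypothetical, and the sketch ignores scoring and analysis; it only counts how many should terms occur in the document.

```python
def should_matches(doc_terms, should_terms, minimum_should_match):
    """True if at least minimum_should_match of the should terms occur in the document."""
    matched = sum(1 for term in should_terms if term in doc_terms)
    return matched >= minimum_should_match


# Hypothetical document body, already split into terms
doc_terms = {"this", "article", "is", "a", "document"}
should_terms = ["article", "document", "tuchao"]

print(should_matches(doc_terms, should_terms, 2))
print(should_matches(doc_terms, should_terms, 3))
```

With a threshold of 2 the document passes because two of the three terms are present; with a threshold of 3 it fails because "tuchao" never occurs.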

Request body search : Sort