Choosing a Python version with conda

Changing the Python version with Anaconda - jcdiv_'s blog - CSDN Blog

To change the Python version with Anaconda:

conda create --name python36 python=3.6

activate python36 # for Windows

conda activate python36 # for Linux & Mac

python --version

deactivate # for Windows

conda deactivate # for Linux & Mac

conda remove --name python36 --all
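As a complement to `python --version`, the active interpreter can also be checked from inside Python. The snippet below is a minimal sketch; `matches_version` is a hypothetical helper name, not part of conda or the standard library.

```python
import sys


def matches_version(expected, actual=sys.version_info):
    """Return True when `actual` starts with the (major, minor, ...) tuple `expected`."""
    return tuple(actual[:len(expected)]) == tuple(expected)


# Print the version of the interpreter that is currently running.
print(sys.version.split()[0])
# True only when the activated environment really provides Python 3.6.
print(matches_version((3, 6)))
```

Running this inside the activated `python36` environment should print a 3.6.x version string on the first line.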

Using Scrapy

Scrapy Tutorial — Scrapy 1.6.0 documentation

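Read the tutorial up to the section "XPath: a brief intro". That is enough to download an HTML page and select parts of its content.

Run the following commands.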

pip install scrapy

scrapy startproject tutorial

tutorial/tutorial/otcbtc_spider.py

import scrapy

from tutorial.OtcbtcTraderItem import OtcbtcTraderItem


class OtcbtcSpider(scrapy.Spider):
    name = "otcbtc"
    start_urls = [
        'https://otcbtc.com/buy_offers?currency=eos&fiat_currency=cny&payment_type=all',
    ]

    def parse(self, response):
        traders = response.xpath('//div[@class="long-solution-list"]/ul[@class="list-content"]')
        # Page-level values shared by every trader row.
        recent_average_price = response.xpath('//p[@class="recent-average-price"]/text()').get().strip()
        market_price = response.xpath('//p[@class="price"]/text()').get().strip()
        for trader in traders:
            # Create a fresh item per row so that yielded items do not share state.
            item = OtcbtcTraderItem()
            item['recent_average_price'] = recent_average_price
            item['market_price'] = market_price
            item['good_rate'] = trader.xpath(
                'li[@class="user-trust"]/span[@class="second-line"]/text()').get().strip()
            item['order_type'] = 'buy'
            item['price'] = trader.xpath(
                'li[@class="price"]/span[@class="mobile-head"]/following::text()[1]').get().strip()
            item['trader_name'] = trader.xpath('li[@class="user-name"]/a[@href]/text()').get().strip()
            item['trader_online_status'] = trader.xpath(
                'li[@class="user-name"]/span[@class="user-last-online"]/text()').get().strip()
            item['trader_pair'] = trader.xpath('li[@class="price"]/span[@class="second-line"]/text()').get().strip()
            item['trading_history'] = trader.xpath(
                'li[@class="user-trust"]/span[@class="mobile-head"]/following::text()[1]').get().strip()
            item['trading_limit'] = trader.xpath(
                'li[@class="minimum-amount"]/span[@class="mobile-head"]/following::text()[1]').get().strip()
            yield item
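Every `.get().strip()` call in the spider raises `AttributeError` when its XPath matches nothing, because `.get()` returns `None` in that case. A minimal sketch of a guard follows; `clean_text` is a hypothetical helper (not part of Scrapy), and falling back to an empty string is an assumption about what the database should receive.

```python
from typing import Optional


def clean_text(value: Optional[str], default: str = "") -> str:
    """Strip surrounding whitespace, falling back to `default` when value is None."""
    if value is None:
        return default
    return value.strip()


# Inside parse() this could be used as, for example:
#   item['trader_name'] = clean_text(
#       trader.xpath('li[@class="user-name"]/a[@href]/text()').get())
print(clean_text("  alice \n"))  # alice
print(repr(clean_text(None)))    # ''
```

With such a guard, a missing element yields an empty field instead of aborting the whole page.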

tutorial/OtcbtcTraderItem.py

# -*- coding: utf-8 -*-

# Define here the models for your scraped items
#
# See documentation in:
# http://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class OtcbtcTraderItem(scrapy.Item):
    # define the fields for your item here like:
    good_rate = scrapy.Field()
    order_type = scrapy.Field()
    price = scrapy.Field()
    trader_name = scrapy.Field()
    trader_online_status = scrapy.Field()
    trader_pair = scrapy.Field()
    trading_history = scrapy.Field()
    trading_limit = scrapy.Field()
    recent_average_price = scrapy.Field()
    market_price = scrapy.Field()
    created_at = scrapy.Field()

tutorial/pipelines.py

# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

from tutorial.db.dbhelper import DBHelper


class TutorialPipeline(object):
    def __init__(self):
        self.db = DBHelper()

    def process_item(self, item, spider):
        self.db.insert(item)
        return item

tutorial/settings.py

MYSQL_HOST = 'localhost'

MYSQL_DBNAME = 'dbname'

MYSQL_USER = 'user'

MYSQL_PASSWD = '12345678'

MYSQL_PORT = 3306

ITEM_PIPELINES = {
    'tutorial.pipelines.TutorialPipeline': 300,
}

XPath syntax

trader = response.xpath('//div[@class="long-solution-list"]/ul[@class="list-content"]')[0]

# Wrong ❌
trader.xpath('//li[@class="user-name"]/a[@href]/text()').get().strip()

# Wrong ❌
trader.xpath('/li[@class="user-name"]/a[@href]/text()').get().strip()

# Correct ✔️
trader.xpath('li[@class="user-name"]/a[@href]/text()').get().strip()

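"/": a leading slash selects from the root node of the document, not from the current node.

"//": selects matching nodes anywhere in the document, regardless of the current node.

"@": selects an attribute.

A path with no leading slash, such as li[@class="user-name"], is evaluated relative to the current node, which is why only the last call above returns the name of this particular trader.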
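The difference between a relative path and a document-wide search can be reproduced without Scrapy. The sketch below uses the standard library's `xml.etree.ElementTree` as a stand-in; it supports only a subset of XPath and rejects absolute paths on elements, so the document-wide case is emulated by searching from the root with `.//`. The markup and trader names are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical markup with two trader rows, loosely modelled on the page structure.
DOC = """
<div class="long-solution-list">
  <ul class="list-content">
    <li class="user-name"><a href="/u/1">alice</a></li>
  </ul>
  <ul class="list-content">
    <li class="user-name"><a href="/u/2">bob</a></li>
  </ul>
</div>
"""

root = ET.fromstring(DOC)
rows = root.findall("ul[@class='list-content']")

# Relative path: evaluated from each row, so each row yields its own trader.
relative = [row.find("li[@class='user-name']/a[@href]").text for row in rows]

# Document-wide search: always returns the first match in the whole tree,
# which mirrors what '//li[...]' does when called on a row selector in Scrapy.
document_wide = [root.find(".//li[@class='user-name']/a[@href]").text for _ in rows]

print(relative)       # ['alice', 'bob']
print(document_wide)  # ['alice', 'alice']
```

In Scrapy itself, a path relative to the current selector can also be written with an explicit leading dot, for example `.//li[@class="user-name"]`.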

# Correct
def parse(self, response):
    traders = response.xpath('//div[@class="long-solution-list"]/ul[@class="list-content"]')
    for index, trader in enumerate(traders):
        item = OtcbtcTraderItem()
        item['trader_name'] = trader.xpath('li[@class="user-name"]/a[@href]/text()').get().strip()
        yield item

# Wrong: '//' searches the whole document, so every item gets the first trader's name
def parse(self, response):
    traders = response.xpath('//div[@class="long-solution-list"]/ul[@class="list-content"]')
    for index, trader in enumerate(traders):
        item = OtcbtcTraderItem()
        item['trader_name'] = trader.xpath('//li[@class="user-name"]/a[@href]/text()').get().strip()
        yield item

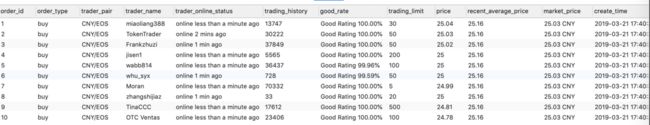

Creating the table

create table otc_trader_price
(
    order_id int not null AUTO_INCREMENT,
    order_type varchar(55) not null,
    trader_pair varchar(55) not null,
    trader_name varchar(55) not null,
    trader_online_status varchar(55) not null,
    trading_history int,
    good_rate varchar(55),
    trading_limit varchar(55),
    price double,
    recent_average_price varchar(55),
    market_price varchar(55),
    create_time varchar(55),
    PRIMARY KEY (order_id)
);

Writing to MySQL

tutorial/tutorial/db/dbhelper.py

# -*- coding: utf-8 -*-
import copy
import time

import pymysql
from twisted.enterprise import adbapi
from scrapy.utils.project import get_project_settings  # load the project settings


class DBHelper:
    '''Reads the connection parameters from settings; adapt the code to your needs.'''

    def __init__(self):
        settings = get_project_settings()  # fetch the settings that hold the connection details
        dbparams = dict(
            host=settings['MYSQL_HOST'],  # read from settings
            port=settings['MYSQL_PORT'],  # defined in settings.py, so pass it along as well
            db=settings['MYSQL_DBNAME'],
            user=settings['MYSQL_USER'],
            passwd=settings['MYSQL_PASSWD'],
            charset='utf8',  # set the encoding, otherwise Chinese text may come out garbled
            cursorclass=pymysql.cursors.DictCursor,
            use_unicode=False,
        )
        # ** expands the dict into keyword arguments, i.e. host=xxx, db=yyy, ...
        self.dbpool = adbapi.ConnectionPool('pymysql', **dbparams)

    def connect(self):
        return self.dbpool

    # Queue an insert for one item
    def insert(self, item):
        # Copy the item so that later changes by the spider cannot affect the pending write.
        asynitem = copy.deepcopy(item)
        sql = ("INSERT INTO otc_trader_price("
               "good_rate, order_type, price, trader_name, trader_online_status, trader_pair, trading_history, "
               "trading_limit, recent_average_price, market_price, create_time"
               ") VALUES (%s, %s, %s, %s, %s, %s, %s, %s, %s, %s, %s)")
        # Run the insert on the connection pool
        query = self.dbpool.runInteraction(self._conditional_insert, sql, asynitem)
        # Attach the error handler
        query.addErrback(self._handle_error)
        return asynitem

    # Write the item to the database
    def _conditional_insert(self, tx, sql, item):
        item['created_at'] = time.strftime('%Y-%m-%d %H:%M:%S', time.localtime(time.time()))
        # The order of the values must match the column list in the SQL statement.
        params = (item['good_rate'], item['order_type'], item['price'],
                  item['trader_name'], item['trader_online_status'],
                  item['trader_pair'], item['trading_history'],
                  item['trading_limit'], item['recent_average_price'],
                  item['market_price'], item['created_at'])
        tx.execute(sql, params)

    # Error handler
    def _handle_error(self, failure):
        print('--------------database operation exception!!-----------------')
        print(failure)
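The column list and the value tuple in the helper have to stay in the same order, which is easy to get wrong with eleven placeholders. The sketch below shows one way to check the statement without a MySQL server. It uses the standard library's `sqlite3` purely as a stand-in (an assumption, since the original writes to MySQL through `pymysql`), and `build_insert_sql` and the sample values are hypothetical.

```python
import sqlite3

# Column order must match the order of the values passed to execute().
COLUMNS = (
    "good_rate", "order_type", "price", "trader_name", "trader_online_status",
    "trader_pair", "trading_history", "trading_limit", "recent_average_price",
    "market_price", "create_time",
)


def build_insert_sql(table, columns, placeholder="%s"):
    """Return a parameterized INSERT statement for the given columns."""
    column_list = ", ".join(columns)
    marks = ", ".join([placeholder] * len(columns))
    return f"INSERT INTO {table}({column_list}) VALUES ({marks})"


# Made-up sample values, for illustration only.
sample = {
    "good_rate": "99%", "order_type": "buy", "price": "6.50",
    "trader_name": "alice", "trader_online_status": "online",
    "trader_pair": "EOS/CNY", "trading_history": "120",
    "trading_limit": "100-5000", "recent_average_price": "6.48",
    "market_price": "6.52", "create_time": "2019-01-01 00:00:00",
}

conn = sqlite3.connect(":memory:")
# Simplified schema: every column is TEXT, unlike the MySQL table above.
conn.execute(
    "CREATE TABLE otc_trader_price (order_id INTEGER PRIMARY KEY AUTOINCREMENT, "
    + ", ".join(f"{name} TEXT" for name in COLUMNS) + ")"
)
# sqlite3 uses '?' placeholders, whereas pymysql uses '%s'.
conn.execute(build_insert_sql("otc_trader_price", COLUMNS, "?"),
             tuple(sample[name] for name in COLUMNS))
row = conn.execute("SELECT trader_name, price FROM otc_trader_price").fetchone()
print(row)  # ('alice', '6.50')
```

Deriving both the column list and the value tuple from a single `COLUMNS` sequence keeps the two from drifting apart when a field is added.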