Hadoop Cluster Setup, Step 3: The Three Hadoop Architectures (standAlone, Pseudo-Distributed, Fully Distributed) and Environment Setup

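Preface: Hadoop comes in several distributions; the popular ones are Apache Hadoop, CDH Hadoop, HDP Hadoop, and MapR Hadoop. Each distribution supports three deployment architectures, which this article names as follows. Installing Hadoop on a single machine is the StandAlone environment. Installing it on multiple machines (1 NN with multiple DNs, 1 RM with multiple NMs) is the pseudo-distributed environment. Adding HA for the NN and RM on top of the second setup is the fully distributed environment. (The official Hadoop documentation uses these names differently: there, standalone is a single local Java process with no daemons, pseudo-distributed runs all daemons on one host, and fully distributed is a multi-node cluster.)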

Software versions used:

jdk:jdk1.8.0_141

hadoop:Apache Hadoop_2.7.5

zookeeper:zookeeper_3.4.9

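The rest of this article uses Apache Hadoop to show how to set up each of the three modes. Apache software download archive: http://archive.apache.org/dist/

Official Hadoop documentation: http://hadoop.apache.org/docs/

Note:

Hadoop's configuration files live in the hadoop/etc/hadoop directory. The main purpose of each file is:

- core-site: the core configuration file; mainly defines the file system access URI, e.g. hdfs://ip:8020/…

- hadoop-env: mainly sets the Java installation path

- hdfs-site: HDFS settings

- mapred-site: MapReduce settings

- yarn-site: RM (ResourceManager) resource scheduling settings

- slaves: the worker nodes, i.e. the hosts that run a DN and an NM

Reference documentation for the configuration files: https://hadoop.apache.org/docs/r2.6.0/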
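Before editing, it can help to confirm which of these files are actually present. The following is a minimal sketch, not part of Hadoop: the function name `missing_conf_files` is made up for illustration, and the file list simply mirrors the list above.

```shell
# Sketch: print the expected config files that are missing from a conf dir.
# missing_conf_files is a hypothetical helper, not a Hadoop command.
missing_conf_files() {
  local conf_dir="$1" f
  for f in core-site.xml hadoop-env.sh hdfs-site.xml mapred-site.xml yarn-site.xml slaves; do
    # Print the file name only when it does not exist
    [ -f "$conf_dir/$f" ] || echo "$f"
  done
}

# Example call (path taken from this article):
# missing_conf_files /export/servers/hadoop-2.7.5/etc/hadoop
```

On a freshly unpacked Hadoop 2.x tarball this would typically report mapred-site.xml, because that release line ships only mapred-site.xml.template.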

Option 1: standAlone (for background only; rarely used in practice)

Service layout:

| Service | Server IP |
|---|---|
| NameNode | 192.168.52.100 |
| SecondaryNameNode | 192.168.52.100 |
| DataNode | 192.168.52.100 |
| ResourceManager | 192.168.52.100 |
| NodeManager | 192.168.52.100 |

1.下载apache hadoop并上传到服务器

下载链接:

http://archive.apache.org/dist/hadoop/common/hadoop-2.7.5/hadoop-2.7.5.tar.gz

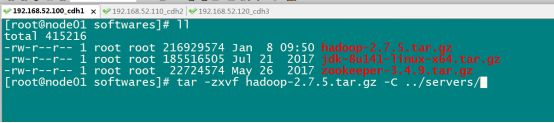

解压:

cd /export/softwares

tar -zxvf hadoop-2.7.5.tar.gz -C ../servers/

2. Modify the configuration files

A. Edit core-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim core-site.xml

<configuration>
  <!-- fs.default.name is the deprecated alias of fs.defaultFS; Hadoop 2.7.5 accepts both -->
  <property>
    <name>fs.default.name</name>
    <value>hdfs://192.168.52.100:8020</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/export/servers/hadoop-2.7.5/hadoopDatas/tempDatas</value>
  </property>
  <!-- I/O buffer size in bytes -->
  <property>
    <name>io.file.buffer.size</name>
    <value>4096</value>
  </property>
  <!-- Trash retention time in minutes -->
  <property>
    <name>fs.trash.interval</name>
    <value>10080</value>
  </property>
</configuration>

B. Edit hdfs-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim hdfs-site.xml

<configuration>
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>node01:50090</value>
  </property>
  <property>
    <name>dfs.namenode.http-address</name>
    <value>node01:50070</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas,file:///export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas2</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas,file:///export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas2</value>
  </property>
  <property>
    <name>dfs.namenode.edits.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/hadoopDatas/nn/edits</value>
  </property>
  <property>
    <name>dfs.namenode.checkpoint.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/hadoopDatas/snn/name</value>
  </property>
  <property>
    <name>dfs.namenode.checkpoint.edits.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/hadoopDatas/dfs/snn/edits</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
  <property>
    <name>dfs.permissions</name>
    <value>false</value>
  </property>
  <!-- Block size in bytes -->
  <property>
    <name>dfs.blocksize</name>
    <value>134217728</value>
  </property>
</configuration>
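Several values in these files are raw numbers whose units are easy to misread: fs.trash.interval is in minutes, dfs.blocksize is in bytes, and yarn.log-aggregation.retain-seconds (used in yarn-site.xml) is in seconds. The helper functions below are illustrative names, not Hadoop tools; they only do the unit arithmetic.

```shell
# Sketch: convert the raw config values used in this article to readable units.
# The three function names are hypothetical helpers.
minutes_to_days() { echo $(( $1 / 60 / 24 )); }      # fs.trash.interval
bytes_to_mib()    { echo $(( $1 / 1024 / 1024 )); }  # dfs.blocksize
seconds_to_days() { echo $(( $1 / 86400 )); }        # yarn.log-aggregation.retain-seconds

# Example calls with the values from this article:
# minutes_to_days 10080      # trash retention in days
# bytes_to_mib 134217728     # block size in MiB
# seconds_to_days 604800     # aggregated log retention in days
```

With the values above, the trash is kept for 7 days, the block size is 128 MiB, and aggregated logs are kept for 7 days.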

C. Edit hadoop-env.sh

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim hadoop-env.sh

export JAVA_HOME=/export/servers/jdk1.8.0_141

D. Edit mapred-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

Hadoop 2.7.5 ships only mapred-site.xml.template, so create the file from the template first:

cp mapred-site.xml.template mapred-site.xml

vim mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.job.ubertask.enable</name>
    <value>true</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>node01:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node01:19888</value>
  </property>
</configuration>

E. Edit yarn-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim yarn-site.xml

<configuration>
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>node01</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>604800</value>
  </property>
</configuration>

F. Edit mapred-env.sh

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim mapred-env.sh

Add the following:

export JAVA_HOME=/export/servers/jdk1.8.0_141

G. Edit slaves

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim slaves

Add:

localhost

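3. Start the cluster

Note: the first time you start HDFS you must format it. Formatting is essentially cleanup and preparation work, because at this point HDFS does not yet physically exist. Format only once: re-formatting an existing NameNode discards its metadata.

hdfs namenode -format

or the older, deprecated form:

hadoop namenode -format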
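One way to avoid formatting twice by accident is to check whether the NameNode directory already holds metadata. A formatted NameNode directory contains a current/VERSION file, so the sketch below tests for it. The function name `is_formatted` is a hypothetical helper, and the directory in the example is the dfs.namenode.name.dir value used in this article.

```shell
# Sketch: succeed if a NameNode metadata directory has already been formatted.
# is_formatted is a hypothetical helper, not a Hadoop command.
is_formatted() {
  # A formatted NameNode directory contains current/VERSION
  [ -f "$1/current/VERSION" ]
}

# Example: format only when no metadata exists yet
# NAME_DIR=/export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas
# is_formatted "$NAME_DIR" || bin/hdfs namenode -format
```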

A. Create the data directories, to keep the data easy to manage:

cd /export/servers/hadoop-2.7.5

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/tempDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas2

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas2

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/nn/edits

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/snn/name

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/dfs/snn/edits
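The eight mkdir commands above can also be written as a loop, which makes it easier to recreate the same tree later. This is a small sketch; `make_hadoop_dirs` is an illustrative name, and the subdirectory list is copied from the commands above.

```shell
# Sketch: create the data directory tree used in this article under a base dir.
# make_hadoop_dirs is a hypothetical helper.
make_hadoop_dirs() {
  local base="$1" sub
  for sub in tempDatas namenodeDatas namenodeDatas2 \
             datanodeDatas datanodeDatas2 \
             nn/edits snn/name dfs/snn/edits; do
    mkdir -p "$base/$sub" || return 1
  done
}

# Example call:
# make_hadoop_dirs /export/servers/hadoop-2.7.5/hadoopDatas
```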

B. Start:

cd /export/servers/hadoop-2.7.5/

bin/hdfs namenode -format

sbin/start-dfs.sh

sbin/start-yarn.sh

sbin/mr-jobhistory-daemon.sh start historyserver

C. Check the three web UIs

http://node01:50070/explorer.html#/ (HDFS)

http://node01:8088/cluster (YARN cluster)

http://node01:19888/jobhistory (history of completed jobs)
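Besides the web UIs, the JDK's `jps` tool lists the running Java processes, which gives a quick check that every daemon came up. The sketch below reads `jps` output on standard input and prints the expected daemons that are absent. `missing_daemons` is a hypothetical helper name; the daemon names are the Hadoop process names that `jps` reports.

```shell
# Sketch: print the expected daemons that do not appear in `jps` output.
# missing_daemons is a hypothetical helper; it reads jps output from stdin.
missing_daemons() {
  local jps_out name
  jps_out="$(cat)"
  for name in "$@"; do
    # jps prints "<pid> <name>"; compare the second column exactly so that
    # NameNode does not match SecondaryNameNode
    printf '%s\n' "$jps_out" \
      | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }' \
      || echo "$name"
  done
}

# Example call on the single-node setup:
# jps | missing_daemons NameNode SecondaryNameNode DataNode \
#                       ResourceManager NodeManager JobHistoryServer
```

Empty output means all listed daemons are running.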

Option 2: pseudo-distributed environment (suitable for learning, testing, and development clusters)

Service layout:

| Server IP | 192.168.52.100 | 192.168.52.110 | 192.168.52.120 |
|---|---|---|---|
| Hostname | node01.hadoop.com | node02.hadoop.com | node03.hadoop.com |
| NameNode | Yes | No | No |
| SecondaryNameNode | Yes | No | No |
| DataNode | Yes | Yes | Yes |
| ResourceManager | Yes | No | No |
| NodeManager | Yes | Yes | Yes |

1. Build on top of the standAlone setup

A. Stop the single-node cluster:

cd /export/servers/hadoop-2.7.5

sbin/stop-dfs.sh

sbin/stop-yarn.sh

sbin/mr-jobhistory-daemon.sh stop historyserver

B. Delete the hadoopDatas folder:

rm -rf /export/servers/hadoop-2.7.5/hadoopDatas

C. Recreate the folders:

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/tempDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/namenodeDatas2

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/datanodeDatas2

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/nn/edits

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/snn/name

mkdir -p /export/servers/hadoop-2.7.5/hadoopDatas/dfs/snn/edits

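2. Edit the slaves file, send the installation directory to the other machines, and restart the cluster

A. Edit the slaves file:

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim slaves

Change it to:

node01

node02

node03

B. Distribute the installation directory: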

cd /export/servers/

scp -r hadoop-2.7.5 node02:$PWD

scp -r hadoop-2.7.5 node03:$PWD
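With more than a couple of workers, the copy commands can be derived from the slaves file instead of being typed by hand. The sketch below only prints the `scp` commands (a dry run) so they can be reviewed first. `print_scp_commands` is a hypothetical helper, and it assumes the slaves file holds one host name per line.

```shell
# Sketch: print one scp command per host in a slaves file, skipping this host.
# print_scp_commands is a hypothetical helper; nothing is copied, only printed.
# $1: slaves file, $2: local host name to skip, $3: installation directory
print_scp_commands() {
  local slaves_file="$1" self="$2" dir="$3" host
  while IFS= read -r host || [ -n "$host" ]; do
    [ -z "$host" ] && continue          # skip blank lines
    [ "$host" = "$self" ] && continue   # do not copy to ourselves
    echo "scp -r $dir $host:$(dirname "$dir")/"
  done < "$slaves_file"
}

# Example call on node01:
# print_scp_commands /export/servers/hadoop-2.7.5/etc/hadoop/slaves node01 \
#                    /export/servers/hadoop-2.7.5
```

Piping the output to `sh` would run the copies once the printed commands look right.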

C. Start the cluster (run the commands on the first machine only; they start the daemons on every node):

cd /export/servers/hadoop-2.7.5

bin/hdfs namenode -format

sbin/start-dfs.sh

sbin/start-yarn.sh

sbin/mr-jobhistory-daemon.sh start historyserver

Option 3: fully distributed environment (suitable for production environments at work)

This setup is fully distributed, with high availability for both the NameNode and the ResourceManager.

Service layout:

| Component | 192.168.1.100 | 192.168.1.110 | 192.168.1.120 |
|---|---|---|---|
| zookeeper | zk | zk | zk |
| HDFS | JournalNode | JournalNode | JournalNode |
| | NameNode | NameNode | |
| | ZKFC | ZKFC | |
| | DataNode | DataNode | DataNode |
| YARN | | ResourceManager | ResourceManager |
| | NodeManager | NodeManager | NodeManager |
| MapReduce | | | JobHistoryServer |
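ZooKeeper and the JournalNodes both rely on a majority of their members being alive, which is why three of each are deployed here. The arithmetic can be sketched as follows; `tolerated_failures` is an illustrative helper name.

```shell
# Sketch: how many members of an N-node majority quorum may fail.
# tolerated_failures is a hypothetical helper; $1 is the ensemble size.
tolerated_failures() {
  echo $(( ($1 - 1) / 2 ))
}

# Example calls:
# tolerated_failures 3   # the three zk / JournalNode hosts in the table above
# tolerated_failures 4   # an even-sized ensemble gains nothing over size 3
```

With three nodes one failure is tolerated; a fourth node does not raise that number, which is why odd ensemble sizes are the usual choice.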

1. Extract the installation package

cd /export/softwares

tar -zxvf hadoop-2.7.5.tar.gz -C ../servers/

2. Modify the configuration files

A. Edit core-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim core-site.xml

<configuration>
  <!-- ZooKeeper ensemble used for automatic failover -->
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>node01:2181,node02:2181,node03:2181</value>
  </property>
  <!-- Logical nameservice; must match dfs.nameservices in hdfs-site.xml -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://ns</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/export/servers/hadoop-2.7.5/data/tmp</value>
  </property>
  <!-- Trash retention time in minutes -->
  <property>
    <name>fs.trash.interval</name>
    <value>10080</value>
  </property>
</configuration>

B. Edit hdfs-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim hdfs-site.xml

<configuration>
  <!-- Logical nameservice and its two NameNodes -->
  <property>
    <name>dfs.nameservices</name>
    <value>ns</value>
  </property>
  <property>
    <name>dfs.ha.namenodes.ns</name>
    <value>nn1,nn2</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.ns.nn1</name>
    <value>node01:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.ns.nn2</name>
    <value>node02:8020</value>
  </property>
  <property>
    <name>dfs.namenode.servicerpc-address.ns.nn1</name>
    <value>node01:8022</value>
  </property>
  <property>
    <name>dfs.namenode.servicerpc-address.ns.nn2</name>
    <value>node02:8022</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.ns.nn1</name>
    <value>node01:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.ns.nn2</name>
    <value>node02:50070</value>
  </property>
  <!-- JournalNodes that store the shared edit log -->
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://node01:8485;node02:8485;node03:8485/ns1</value>
  </property>
  <property>
    <name>dfs.client.failover.proxy.provider.ns</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <!-- Fencing over SSH; requires passwordless SSH between the NameNode hosts -->
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>sshfence</value>
  </property>
  <property>
    <name>dfs.ha.fencing.ssh.private-key-files</name>
    <value>/root/.ssh/id_rsa</value>
  </property>
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/export/servers/hadoop-2.7.5/data/dfs/jn</value>
  </property>
  <property>
    <name>dfs.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/data/dfs/nn/name</value>
  </property>
  <property>
    <name>dfs.namenode.edits.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/data/dfs/nn/edits</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:///export/servers/hadoop-2.7.5/data/dfs/dn</value>
  </property>
  <property>
    <name>dfs.permissions</name>
    <value>false</value>
  </property>
  <!-- Block size in bytes -->
  <property>
    <name>dfs.blocksize</name>
    <value>134217728</value>
  </property>
</configuration>
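The dfs.namenode.shared.edits.dir value has a fixed shape: the qjournal scheme, the JournalNode hosts separated by semicolons (each with port 8485), and a journal ID at the end. The sketch below assembles such a URI from a host list so the separators are not mistyped. `qjournal_uri` is a hypothetical helper, and the port 8485 is taken from the configuration above.

```shell
# Sketch: build a qjournal:// URI from a journal ID and a list of hosts.
# qjournal_uri is a hypothetical helper; port 8485 is the one used above.
qjournal_uri() {
  local journal_id="$1" hosts="" h
  shift
  for h in "$@"; do
    # Join hosts with ';' (not ',' as in the ZooKeeper quorum string)
    hosts="${hosts:+$hosts;}$h:8485"
  done
  echo "qjournal://$hosts/$journal_id"
}

# Example call reproducing the value used in this article:
# qjournal_uri ns1 node01 node02 node03
```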

C. Edit yarn-site.xml

Note: node03 and node02 need different values for yarn.resourcemanager.ha.id (rm1 on node03, rm2 on node02; see step 3.C).

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim yarn-site.xml

<configuration>
  <property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
  </property>
  <!-- ResourceManager HA: rm1 runs on node03, rm2 on node02 -->
  <property>
    <name>yarn.resourcemanager.ha.enabled</name>
    <value>true</value>
  </property>
  <property>
    <name>yarn.resourcemanager.cluster-id</name>
    <value>mycluster</value>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.rm-ids</name>
    <value>rm1,rm2</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm1</name>
    <value>node03</value>
  </property>
  <property>
    <name>yarn.resourcemanager.hostname.rm2</name>
    <value>node02</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address.rm1</name>
    <value>node03:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm1</name>
    <value>node03:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm1</name>
    <value>node03:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address.rm1</name>
    <value>node03:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address.rm1</name>
    <value>node03:8088</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address.rm2</name>
    <value>node02:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address.rm2</name>
    <value>node02:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address.rm2</name>
    <value>node02:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address.rm2</name>
    <value>node02:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address.rm2</name>
    <value>node02:8088</value>
  </property>
  <property>
    <name>yarn.resourcemanager.recovery.enabled</name>
    <value>true</value>
  </property>
  <!-- Host-specific: rm1 on node03, rm2 on node02 -->
  <property>
    <name>yarn.resourcemanager.ha.id</name>
    <value>rm1</value>
    <description>If we want to launch more than one RM in single node, we need this configuration</description>
  </property>
  <property>
    <name>yarn.resourcemanager.store.class</name>
    <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
  </property>
  <property>
    <name>yarn.resourcemanager.zk-address</name>
    <value>node02:2181,node03:2181,node01:2181</value>
    <description>For multiple zk services, separate them with comma</description>
  </property>
  <property>
    <name>yarn.resourcemanager.ha.automatic-failover.enabled</name>
    <value>true</value>
    <description>Enable automatic failover; By default, it is enabled only when HA is enabled.</description>
  </property>
  <property>
    <name>yarn.client.failover-proxy-provider</name>
    <value>org.apache.hadoop.yarn.client.ConfiguredRMFailoverProxyProvider</value>
  </property>
  <property>
    <name>yarn.nodemanager.resource.cpu-vcores</name>
    <value>4</value>
  </property>
  <!-- Caution: mapred-site.xml in this guide requests 1024 MB per map/reduce task and
       1536 MB for the ApplicationMaster. YARN rejects requests larger than
       yarn.scheduler.maximum-allocation-mb, so raise yarn.nodemanager.resource.memory-mb
       and yarn.scheduler.maximum-allocation-mb to fit them before running MapReduce jobs. -->
  <property>
    <name>yarn.nodemanager.resource.memory-mb</name>
    <value>512</value>
  </property>
  <property>
    <name>yarn.scheduler.minimum-allocation-mb</name>
    <value>512</value>
  </property>
  <property>
    <name>yarn.scheduler.maximum-allocation-mb</name>
    <value>512</value>
  </property>
  <property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>2592000</value>
  </property>
  <property>
    <name>yarn.nodemanager.log.retain-seconds</name>
    <value>604800</value>
  </property>
  <property>
    <name>yarn.nodemanager.log-aggregation.compression-type</name>
    <value>gz</value>
  </property>
  <property>
    <name>yarn.nodemanager.local-dirs</name>
    <value>/export/servers/hadoop-2.7.5/yarn/local</value>
  </property>
  <property>
    <name>yarn.resourcemanager.max-completed-applications</name>
    <value>1000</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.resourcemanager.connect.retry-interval.ms</name>
    <value>2000</value>
  </property>
</configuration>

D. Edit mapred-site.xml

cd /export/servers/hadoop-2.7.5/etc/hadoop

If mapred-site.xml does not exist yet (Hadoop 2.7.5 ships only mapred-site.xml.template), create it from the template first:

cp mapred-site.xml.template mapred-site.xml

vim mapred-site.xml

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>node03:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node03:19888</value>
  </property>
  <property>
    <name>mapreduce.jobtracker.system.dir</name>
    <value>/export/servers/hadoop-2.7.5/data/system/jobtracker</value>
  </property>
  <!-- Container memory requested per map and reduce task, in MB -->
  <property>
    <name>mapreduce.map.memory.mb</name>
    <value>1024</value>
  </property>
  <property>
    <name>mapreduce.reduce.memory.mb</name>
    <value>1024</value>
  </property>
  <property>
    <name>mapreduce.task.io.sort.mb</name>
    <value>100</value>
  </property>
  <property>
    <name>mapreduce.task.io.sort.factor</name>
    <value>10</value>
  </property>
  <property>
    <name>mapreduce.reduce.shuffle.parallelcopies</name>
    <value>25</value>
  </property>
  <!-- ApplicationMaster JVM heap and container size -->
  <property>
    <name>yarn.app.mapreduce.am.command-opts</name>
    <value>-Xmx1024m</value>
  </property>
  <property>
    <name>yarn.app.mapreduce.am.resource.mb</name>
    <value>1536</value>
  </property>
  <property>
    <name>mapreduce.cluster.local.dir</name>
    <value>/export/servers/hadoop-2.7.5/data/system/local</value>
  </property>
</configuration>
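The memory values in mapred-site.xml have to fit within the limits set in yarn-site.xml, because YARN rejects a container request larger than yarn.scheduler.maximum-allocation-mb. The sketch below compares the two sets of numbers. `oversized_requests` is a hypothetical helper that takes the limit followed by name=MB pairs.

```shell
# Sketch: list the memory requests that exceed the scheduler's maximum.
# oversized_requests is a hypothetical helper.
# $1: yarn.scheduler.maximum-allocation-mb; remaining args: name=MB pairs
oversized_requests() {
  local max="$1" pair
  shift
  for pair in "$@"; do
    # ${pair#*=} is the MB value, ${pair%%=*} is the property name
    if [ "${pair#*=}" -gt "$max" ]; then
      echo "${pair%%=*}"
    fi
  done
}

# Example call with the values from this article (limit 512 MB):
# oversized_requests 512 \
#   mapreduce.map.memory.mb=1024 \
#   mapreduce.reduce.memory.mb=1024 \
#   yarn.app.mapreduce.am.resource.mb=1536
```

With the values in this article all three properties are reported, so either the YARN limits need to be raised or the MapReduce requests lowered before jobs can run.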

E. Edit slaves

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim slaves

Add:

node01

node02

node03

F. Edit hadoop-env.sh

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim hadoop-env.sh

Add:

export JAVA_HOME=/export/servers/jdk1.8.0_141

3. Cluster startup procedure

A. Send the installation directory from the first machine to the other machines

Run on the first machine:

cd /export/servers

scp -r hadoop-2.7.5/ node02:$PWD

scp -r hadoop-2.7.5/ node03:$PWD

B. Create the directories on all three machines

Run the following commands on all three machines:

mkdir -p /export/servers/hadoop-2.7.5/data/dfs/nn/name

mkdir -p /export/servers/hadoop-2.7.5/data/dfs/nn/edits

C. Set the RM id on node02 to rm2

Run on the second machine:

cd /export/servers/hadoop-2.7.5/etc/hadoop

vim yarn-site.xml

<property>
  <name>yarn.resourcemanager.ha.id</name>
  <value>rm2</value>
  <description>If we want to launch more than one RM in single node, we need this configuration</description>
</property>
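This per-host change can also be scripted instead of edited by hand in vim. The sketch below uses `sed` to rewrite only the value that belongs to yarn.resourcemanager.ha.id and prints the result to standard output. `set_rm_ha_id` is a hypothetical helper, and it assumes well-formed XML with the name and value elements on separate lines.

```shell
# Sketch: print a yarn-site.xml with yarn.resourcemanager.ha.id set to a new id.
# set_rm_ha_id is a hypothetical helper; it assumes <name> and <value> are on
# separate lines inside a well-formed <property> element.
# $1: path to yarn-site.xml, $2: new id (for example rm2)
set_rm_ha_id() {
  local file="$1" id="$2"
  # Restrict the substitution to the lines from the matching <name> up to the
  # closing </property>, so that other properties are left untouched
  sed "/<name>yarn\.resourcemanager\.ha\.id<\/name>/,/<\/property>/ s#<value>[^<]*</value>#<value>${id}</value>#" "$file"
}

# Example call on node02:
# cd /export/servers/hadoop-2.7.5/etc/hadoop
# set_rm_ha_id yarn-site.xml rm2 > yarn-site.xml.new && mv yarn-site.xml.new yarn-site.xml
```

Writing to a new file and renaming it avoids depending on the in-place flag of `sed`, which differs between implementations.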

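4. Start HDFS

Run the following commands on node01 (ZooKeeper must already be running on all three nodes, because zkfc -formatZK writes to it):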

cd /export/servers/hadoop-2.7.5

bin/hdfs zkfc -formatZK

sbin/hadoop-daemons.sh start journalnode

bin/hdfs namenode -format

bin/hdfs namenode -initializeSharedEdits -force

sbin/start-dfs.sh

Run on node02:

cd /export/servers/hadoop-2.7.5

bin/hdfs namenode -bootstrapStandby

sbin/hadoop-daemon.sh start namenode
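After both NameNodes are up, `hdfs haadmin -getServiceState` reports whether each one is active or standby, and a healthy HA pair has exactly one active. The sketch below checks that condition on the reported states. `exactly_one_active` is a hypothetical helper; nn1 and nn2 are the NameNode ids from hdfs-site.xml.

```shell
# Sketch: succeed only if exactly one of the given HA states is "active".
# exactly_one_active is a hypothetical helper.
exactly_one_active() {
  local active=0 s
  for s in "$@"; do
    if [ "$s" = "active" ]; then
      active=$((active + 1))
    fi
  done
  [ "$active" -eq 1 ]
}

# Example call on node01:
# cd /export/servers/hadoop-2.7.5
# exactly_one_active "$(bin/hdfs haadmin -getServiceState nn1)" \
#                    "$(bin/hdfs haadmin -getServiceState nn2)" \
#   && echo "HA state looks healthy"
```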

5. Start YARN

Run on node03:

cd /export/servers/hadoop-2.7.5

sbin/start-yarn.sh

Run on node02:

cd /export/servers/hadoop-2.7.5

sbin/start-yarn.sh

6. Check the ResourceManager state

Run on node03:

cd /export/servers/hadoop-2.7.5

bin/yarn rmadmin -getServiceState rm1

Run on node02:

cd /export/servers/hadoop-2.7.5

bin/yarn rmadmin -getServiceState rm2

7. Start the JobHistory server on node03

Run the following commands on node03 to start the JobHistory server:

cd /export/servers/hadoop-2.7.5

sbin/mr-jobhistory-daemon.sh start historyserver

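The distributed cluster is now fully set up!

Conclusion: in production, plain Apache Hadoop is inconvenient to maintain, upgrade, and manage, so in practice teams generally use the CDH distribution rather than the Apache one. However, the Hadoop packages that CDH provides do not include the interface for access from C programs, so problems arise when using the native library (which is used for compression, C program support, and so on). You therefore need to recompile Hadoop yourself so that it supports the native library.

For the build steps, see my blog post: Recompiling the CDH version of Hadoop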