Kubernetes kube-proxy: source code analysis of the ipvs, userspace, and iptables modes

Kubernetes version

# kubectl version

Client Version: version.Info{Major:"1", Minor:"11+", GitVersion:"v1.11.0-168+f47446a730ca03", GitCommit:"f47446a730ca037473fb3bf0c5abeea648c1ac12", GitTreeState:"clean", BuildDate:"2018-08-25T21:05:52Z", GoVersion:"go1.10.3", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"11+", GitVersion:"v1.11.0-168+f47446a730ca03", GitCommit:"f47446a730ca037473fb3bf0c5abeea648c1ac12", GitTreeState:"clean", BuildDate:"2018-08-25T21:05:52Z", GoVersion:"go1.10.3", Compiler:"gc", Platform:"linux/amd64"}

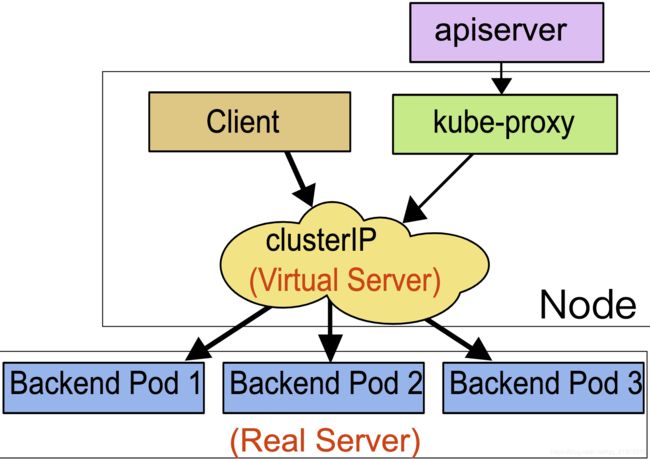

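kube-proxy is an important Kubernetes component. Its job is to expose a virtual IP (VIP) for each Service and to keep that VIP stable no matter how the backends (Pods, Endpoints) change, so it effectively acts as a load balancer (LB).

kube-proxy provides three LB modes:

userspace mode (proxying done in user space), iptables mode, and ipvs mode.

To follow the details you need a working knowledge of iptables and ipvs. That background is easy to find elsewhere, so I will not cover it here.

With that out of the way, let's step into the source code.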

The entry point is in $GOPATH/src/k8s.io/kubernetes/cmd/kube-proxy/proxy.go.

Next, look at the function it calls: command := app.NewProxyCommand()

// NewProxyCommand creates a *cobra.Command object with default parameters

func NewProxyCommand() *cobra.Command {

opts := NewOptions() // builds the options; the KubeProxyConfiguration struct inside receives the parameters passed at startup

cmd := &cobra.Command{

Use: "kube-proxy",

Long: `The Kubernetes network proxy runs on each node. This

reflects services as defined in the Kubernetes API on each node and can do simple

TCP and UDP stream forwarding or round robin TCP and UDP forwarding across a set of backends.

Service cluster IPs and ports are currently found through Docker-links-compatible

environment variables specifying ports opened by the service proxy. There is an optional

addon that provides cluster DNS for these cluster IPs. The user must create a service

with the apiserver API to configure the proxy.`,

Run: func(cmd *cobra.Command, args []string) {

verflag.PrintAndExitIfRequested()

utilflag.PrintFlags(cmd.Flags())

// on non-Windows platforms this does nothing (it only matters when running as a Windows service)

if err := initForOS(opts.WindowsService); err != nil {

glog.Fatalf("failed OS init: %v", err)

}

// load the config file and pick up the parameters that were passed in

if err := opts.Complete(); err != nil {

glog.Fatalf("failed complete: %v", err)

}

// validate the kube-proxy arguments

if err := opts.Validate(args); err != nil {

glog.Fatalf("failed validate: %v", err)

}

glog.Fatal(opts.Run())

},

}

var err error

// apply default values to the kube-proxy configuration

opts.config, err = opts.ApplyDefaults(opts.config)

if err != nil {

glog.Fatalf("unable to create flag defaults: %v", err)

}

opts.AddFlags(cmd.Flags())

// file types accepted for the kube-proxy config file

cmd.MarkFlagFilename("config", "yaml", "yml", "json")

return cmd

}
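The Run callback above boils down to three phases executed in order: Complete, Validate, Run. Here is a minimal sketch of that shape without cobra. The options struct, its fields, and the default mode are hypothetical stand-ins for illustration, not the real kube-proxy Options.

```go
package main

import (
	"errors"
	"fmt"
)

// options is a hypothetical, stripped-down stand-in for kube-proxy's Options.
type options struct {
	mode string // proxy mode: "userspace", "iptables" or "ipvs"
}

// complete fills in anything the caller left empty (assumed default for this sketch).
func (o *options) complete() error {
	if o.mode == "" {
		o.mode = "iptables"
	}
	return nil
}

// validate rejects positional arguments and unknown modes.
func (o *options) validate(args []string) error {
	if len(args) != 0 {
		return errors.New("no arguments are supported")
	}
	switch o.mode {
	case "userspace", "iptables", "ipvs":
		return nil
	}
	return fmt.Errorf("unknown proxy mode %q", o.mode)
}

// run executes the phases in the same order as the cobra Run callback.
func run(o *options, args []string) error {
	if err := o.complete(); err != nil {
		return fmt.Errorf("failed complete: %v", err)
	}
	if err := o.validate(args); err != nil {
		return fmt.Errorf("failed validate: %v", err)
	}
	fmt.Printf("would start the proxy in %s mode\n", o.mode)
	return nil
}

func main() {
	if err := run(&options{}, nil); err != nil {
		fmt.Println("error:", err)
	}
}
```

Keeping the phases separate means a bad flag is reported before any side effect (iptables changes, sysctl writes) can happen.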

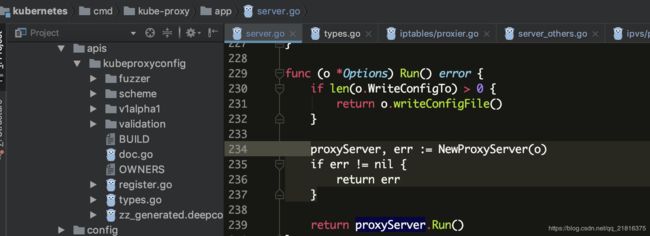

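Next, look at how opts.Run() is implemented.

opts.Run() lives in $GOPATH/src/k8s.io/kubernetes/cmd/kube-proxy/app/server.go.

writeConfigFile() in detail: despite what the name might suggest at first glance, it does not load a config file. It encodes the current configuration (o.config) as YAML and writes it to the path in o.WriteConfigTo.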

func (o *Options) writeConfigFile() error {

var encoder runtime.Encoder

mediaTypes := o.codecs.SupportedMediaTypes()

for _, info := range mediaTypes {

if info.MediaType == "application/yaml" {

encoder = info.Serializer

break

}

}

if encoder == nil {

return errors.New("unable to locate yaml encoder")

}

encoder = json.NewYAMLSerializer(json.DefaultMetaFactory, o.scheme, o.scheme)

encoder = o.codecs.EncoderForVersion(encoder, v1alpha1.SchemeGroupVersion)

configFile, err := os.Create(o.WriteConfigTo)

if err != nil {

return err

}

defer configFile.Close()

if err := encoder.Encode(o.config, configFile); err != nil {

return err

}

glog.Infof("Wrote configuration to: %s\n", o.WriteConfigTo)

return nil

}

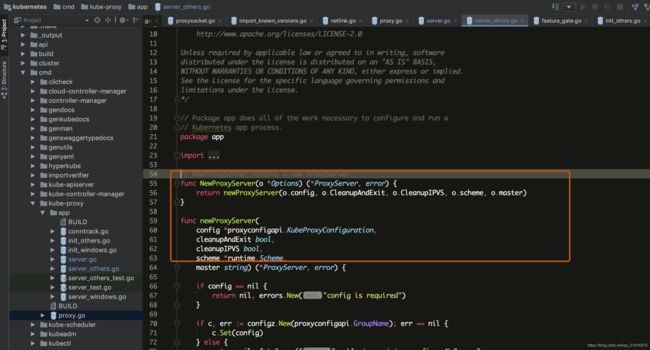

Now look at NewProxyServer. The real work is done by newProxyServer(o.config, o.CleanupAndExit, o.CleanupIPVS, o.scheme, o.master).

newProxyServer sets up, according to the proxy mode (proxyMode), the fields and helper tools that each mode needs.

$GOPATH/src/k8s.io/kubernetes/cmd/kube-proxy/app/server_others.go

The details are as follows

func newProxyServer(

config *proxyconfigapi.KubeProxyConfiguration,

cleanupAndExit bool,

cleanupIPVS bool,

scheme *runtime.Scheme,

master string) (*ProxyServer, error) {

if config == nil {

return nil, errors.New("config is required")

}

if c, err := configz.New(proxyconfigapi.GroupName); err == nil {

c.Set(config)

} else {

return nil, fmt.Errorf("unable to register configz: %s", err)

}

protocol := utiliptables.ProtocolIpv4

if net.ParseIP(config.BindAddress).To4() == nil {

glog.V(0).Infof("IPv6 bind address (%s), assume IPv6 operation", config.BindAddress)

protocol = utiliptables.ProtocolIpv6

}

var iptInterface utiliptables.Interface

var ipvsInterface utilipvs.Interface

var kernelHandler ipvs.KernelHandler

var ipsetInterface utilipset.Interface

var dbus utildbus.Interface

// Create a iptables utils.

execer := exec.New()

dbus = utildbus.New()

iptInterface = utiliptables.New(execer, dbus, protocol)

kernelHandler = ipvs.NewLinuxKernelHandler()

ipsetInterface = utilipset.New(execer)

if canUse, _ := ipvs.CanUseIPVSProxier(kernelHandler, ipsetInterface); canUse {

ipvsInterface = utilipvs.New(execer)

}

// We omit creation of pretty much everything if we run in cleanup mode

if cleanupAndExit {

return &ProxyServer{

execer: execer,

IptInterface: iptInterface,

IpvsInterface: ipvsInterface,

IpsetInterface: ipsetInterface,

CleanupAndExit: cleanupAndExit,

}, nil

}

client, eventClient, err := createClients(config.ClientConnection, master)

if err != nil {

return nil, err

}

// Create event recorder

hostname := utilnode.GetHostname(config.HostnameOverride)

eventBroadcaster := record.NewBroadcaster()

recorder := eventBroadcaster.NewRecorder(scheme, v1.EventSource{Component: "kube-proxy", Host: hostname})

nodeRef := &v1.ObjectReference{

Kind: "Node",

Name: hostname,

UID: types.UID(hostname),

Namespace: "",

}

var healthzServer *healthcheck.HealthzServer

var healthzUpdater healthcheck.HealthzUpdater

// create the healthz server (it starts listening later, in Run())

if len(config.HealthzBindAddress) > 0 {

healthzServer = healthcheck.NewDefaultHealthzServer(config.HealthzBindAddress, 2*config.IPTables.SyncPeriod.Duration, recorder, nodeRef)

healthzUpdater = healthzServer

}

var proxier proxy.ProxyProvider

// handlers for Service and Endpoints events (create, update, delete)

var serviceEventHandler proxyconfig.ServiceHandler

var endpointsEventHandler proxyconfig.EndpointsHandler

proxyMode := getProxyMode(string(config.Mode), iptInterface, kernelHandler, ipsetInterface, iptables.LinuxKernelCompatTester{})

// iptables mode

if proxyMode == proxyModeIPTables {

glog.V(0).Info("Using iptables Proxier.")

nodeIP := net.ParseIP(config.BindAddress)

if nodeIP.Equal(net.IPv4zero) || nodeIP.Equal(net.IPv6zero) {

nodeIP = getNodeIP(client, hostname)

}

if config.IPTables.MasqueradeBit == nil {

// MasqueradeBit must be specified or defaulted.

return nil, fmt.Errorf("unable to read IPTables MasqueradeBit from config")

}

// TODO this has side effects that should only happen when Run() is invoked.

proxierIPTables, err := iptables.NewProxier(

iptInterface,

utilsysctl.New(),

execer,

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.IPTables.MasqueradeAll,

int(*config.IPTables.MasqueradeBit),

config.ClusterCIDR,

hostname,

nodeIP,

recorder,

healthzUpdater,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

metrics.RegisterMetrics()

proxier = proxierIPTables

serviceEventHandler = proxierIPTables

endpointsEventHandler = proxierIPTables

// No turning back. Remove artifacts that might still exist from the userspace Proxier.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

userspace.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

// IPVS mode

} else if proxyMode == proxyModeIPVS {

glog.V(0).Info("Using ipvs Proxier.")

proxierIPVS, err := ipvs.NewProxier(

iptInterface,

ipvsInterface,

ipsetInterface,

utilsysctl.New(),

execer,

config.IPVS.SyncPeriod.Duration,

config.IPVS.MinSyncPeriod.Duration,

config.IPVS.ExcludeCIDRs,

config.IPTables.MasqueradeAll,

int(*config.IPTables.MasqueradeBit),

config.ClusterCIDR,

hostname,

getNodeIP(client, hostname),

recorder,

healthzServer,

config.IPVS.Scheduler,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

metrics.RegisterMetrics()

proxier = proxierIPVS

serviceEventHandler = proxierIPVS

endpointsEventHandler = proxierIPVS

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

userspace.CleanupLeftovers(iptInterface)

iptables.CleanupLeftovers(iptInterface)

// userspace mode

} else {

glog.V(0).Info("Using userspace Proxier.")

// This is a proxy.LoadBalancer which NewProxier needs but has methods we don't need for

// our config.EndpointsConfigHandler.

loadBalancer := userspace.NewLoadBalancerRR()

// set EndpointsConfigHandler to our loadBalancer

endpointsEventHandler = loadBalancer

// TODO this has side effects that should only happen when Run() is invoked.

proxierUserspace, err := userspace.NewProxier(

loadBalancer,

net.ParseIP(config.BindAddress),

iptInterface,

execer,

*utilnet.ParsePortRangeOrDie(config.PortRange),

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.UDPIdleTimeout.Duration,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

serviceEventHandler = proxierUserspace

proxier = proxierUserspace

// Remove artifacts from the iptables and ipvs Proxier, if not on Windows.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

iptables.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

}

iptInterface.AddReloadFunc(proxier.Sync)

return &ProxyServer{

Client: client,

EventClient: eventClient,

IptInterface: iptInterface,

IpvsInterface: ipvsInterface,

IpsetInterface: ipsetInterface,

execer: execer,

Proxier: proxier,

Broadcaster: eventBroadcaster,

Recorder: recorder,

ConntrackConfiguration: config.Conntrack,

Conntracker: &realConntracker{},

ProxyMode: proxyMode,

NodeRef: nodeRef,

MetricsBindAddress: config.MetricsBindAddress,

EnableProfiling: config.EnableProfiling,

OOMScoreAdj: config.OOMScoreAdj,

ResourceContainer: config.ResourceContainer,

ConfigSyncPeriod: config.ConfigSyncPeriod.Duration,

ServiceEventHandler: serviceEventHandler,

EndpointsEventHandler: endpointsEventHandler,

HealthzServer: healthzServer,

}, nil

}

The function is too long to analyze in one go, so let's take it piece by piece. First, the fields of the return type *ProxyServer:

// ProxyServer represents all the parameters required to start the Kubernetes proxy server. All

// fields are required.

type ProxyServer struct {

Client clientset.Interface // client used to talk to kube-apiserver

EventClient v1core.EventsGetter // client for Events

IptInterface utiliptables.Interface // interface for iptables operations (CRUD)

IpvsInterface utilipvs.Interface // interface for ipvs operations (CRUD)

IpsetInterface utilipset.Interface // interface for ipset operations (CRUD)

execer exec.Interface // command execution interface

Proxier proxy.ProxyProvider // the proxier that syncs the rules

Broadcaster record.EventBroadcaster // event broadcaster

Recorder record.EventRecorder // event recorder

ConntrackConfiguration kubeproxyconfig.KubeProxyConntrackConfiguration // conntrack (connection tracking) configuration

Conntracker Conntracker // if nil, ignored

ProxyMode string // proxy mode

NodeRef *v1.ObjectReference

CleanupAndExit bool

CleanupIPVS bool

MetricsBindAddress string

EnableProfiling bool

OOMScoreAdj *int32

ResourceContainer string

ConfigSyncPeriod time.Duration

ServiceEventHandler config.ServiceHandler

EndpointsEventHandler config.EndpointsHandler

HealthzServer *healthcheck.HealthzServer

}

// Create a iptables utils.

execer := exec.New() // creates an executor that implements Interface, $GOPATH/src/k8s.io/kubernetes/vendor/k8s.io/utils/exec/exec.go

dbus = utildbus.New() // dbusImpl{} implements Interface, $GOPATH/src/k8s.io/kubernetes/pkg/util/dbus/dbus.go

iptInterface = utiliptables.New(execer, dbus, protocol) // the runner struct implements Interface, $GOPATH/src/k8s.io/kubernetes/pkg/util/iptables/iptables.go

kernelHandler = ipvs.NewLinuxKernelHandler() // mainly used to inspect kernel modules and related kernel parameters, $GOPATH/src/k8s.io/kubernetes/pkg/proxy/ipvs/proxier.go

ipsetInterface = utilipset.New(execer) // the runner struct implements Interface, $GOPATH/src/k8s.io/kubernetes/pkg/util/ipset/ipset.go

// check the required kernel modules and the ipset version

if canUse, _ := ipvs.CanUseIPVSProxier(kernelHandler, ipsetInterface); canUse {

ipvsInterface = utilipvs.New(execer) // interface for managing ipvs virtual servers and real servers

}

CanUseIPVSProxier is implemented in $GOPATH/src/k8s.io/kubernetes/pkg/proxy/ipvs/proxier.go

func CanUseIPVSProxier(handle KernelHandler, ipsetver IPSetVersioner) (bool, error) {

mods, err := handle.GetModules() // get the loaded kernel modules

if err != nil {

return false, fmt.Errorf("error getting installed ipvs required kernel modules: %v", err)

}

// string sets (the Go stand-in for a set type)

wantModules := sets.NewString()

loadModules := sets.NewString()

wantModules.Insert(ipvsModules...)

loadModules.Insert(mods...)

modules := wantModules.Difference(loadModules).UnsortedList()

if len(modules) != 0 {

return false, fmt.Errorf("IPVS proxier will not be used because the following required kernel modules are not loaded: %v", modules)

}

// Check ipset version

versionString, err := ipsetver.GetVersion()

if err != nil {

return false, fmt.Errorf("error getting ipset version, error: %v", err)

}

if !checkMinVersion(versionString) {

return false, fmt.Errorf("ipset version: %s is less than min required version: %s", versionString, MinIPSetCheckVersion)

}

return true, nil

}
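The core of CanUseIPVSProxier is a set difference: required modules minus loaded modules. A minimal sketch of just that step with plain maps follows. The module names in main are example values I picked for illustration, not necessarily the exact ipvsModules list.

```go
package main

import (
	"fmt"
	"sort"
)

// missingModules returns the entries of want that do not appear in loaded,
// sorted so the result is stable.
func missingModules(want, loaded []string) []string {
	have := make(map[string]struct{}, len(loaded))
	for _, m := range loaded {
		have[m] = struct{}{}
	}
	var missing []string
	for _, m := range want {
		if _, ok := have[m]; !ok {
			missing = append(missing, m)
		}
	}
	sort.Strings(missing)
	return missing
}

func main() {
	// Example values only.
	want := []string{"ip_vs", "ip_vs_rr", "ip_vs_wrr", "ip_vs_sh"}
	loaded := []string{"ip_vs", "ip_vs_rr", "overlay"}
	if missing := missingModules(want, loaded); len(missing) != 0 {
		fmt.Println("ipvs proxier cannot be used, modules not loaded:", missing)
		return
	}
	fmt.Println("all required modules are loaded")
}
```

An empty result means the module check passes and only the ipset version check remains.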

Create the client objects used to connect to kube-apiserver

...

client, eventClient, err := createClients(config.ClientConnection, master)

if err != nil {

return nil, err

}

...

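Create the event broadcaster, the health check server, the event recorder, and the handlers for Service and Endpoints events (create, update, delete)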

// Create event recorder

hostname := utilnode.GetHostname(config.HostnameOverride)

eventBroadcaster := record.NewBroadcaster()

recorder := eventBroadcaster.NewRecorder(scheme, v1.EventSource{Component: "kube-proxy", Host: hostname})

nodeRef := &v1.ObjectReference{

Kind: "Node",

Name: hostname,

UID: types.UID(hostname),

Namespace: "",

}

var healthzServer *healthcheck.HealthzServer

var healthzUpdater healthcheck.HealthzUpdater

// create the healthz server (it starts listening later, in Run())

if len(config.HealthzBindAddress) > 0 {

healthzServer = healthcheck.NewDefaultHealthzServer(config.HealthzBindAddress, 2*config.IPTables.SyncPeriod.Duration, recorder, nodeRef)

healthzUpdater = healthzServer

}

var proxier proxy.ProxyProvider

// handlers for Service and Endpoints events (create, update, delete)

var serviceEventHandler proxyconfig.ServiceHandler

var endpointsEventHandler proxyconfig.EndpointsHandler

Determine the proxy mode, build the proxier for that mode, and clean up rules left behind by the other modes

proxyMode := getProxyMode(string(config.Mode), iptInterface, kernelHandler, ipsetInterface, iptables.LinuxKernelCompatTester{})

// iptables mode

if proxyMode == proxyModeIPTables {

glog.V(0).Info("Using iptables Proxier.")

nodeIP := net.ParseIP(config.BindAddress)

if nodeIP.Equal(net.IPv4zero) || nodeIP.Equal(net.IPv6zero) {

nodeIP = getNodeIP(client, hostname)

}

if config.IPTables.MasqueradeBit == nil {

// MasqueradeBit must be specified or defaulted.

return nil, fmt.Errorf("unable to read IPTables MasqueradeBit from config")

}

// TODO this has side effects that should only happen when Run() is invoked.

proxierIPTables, err := iptables.NewProxier(

iptInterface,

utilsysctl.New(),

execer,

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.IPTables.MasqueradeAll,

int(*config.IPTables.MasqueradeBit),

config.ClusterCIDR,

hostname,

nodeIP,

recorder,

healthzUpdater,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

metrics.RegisterMetrics()

proxier = proxierIPTables

serviceEventHandler = proxierIPTables

endpointsEventHandler = proxierIPTables

// No turning back. Remove artifacts that might still exist from the userspace Proxier.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

userspace.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

// IPVS mode

} else if proxyMode == proxyModeIPVS {

glog.V(0).Info("Using ipvs Proxier.")

proxierIPVS, err := ipvs.NewProxier(

iptInterface,

ipvsInterface,

ipsetInterface,

utilsysctl.New(),

execer,

config.IPVS.SyncPeriod.Duration,

config.IPVS.MinSyncPeriod.Duration,

config.IPVS.ExcludeCIDRs,

config.IPTables.MasqueradeAll,

int(*config.IPTables.MasqueradeBit),

config.ClusterCIDR,

hostname,

getNodeIP(client, hostname),

recorder,

healthzServer,

config.IPVS.Scheduler,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

metrics.RegisterMetrics()

proxier = proxierIPVS

serviceEventHandler = proxierIPVS

endpointsEventHandler = proxierIPVS

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

userspace.CleanupLeftovers(iptInterface)

iptables.CleanupLeftovers(iptInterface)

// userspace mode

} else {

glog.V(0).Info("Using userspace Proxier.")

// This is a proxy.LoadBalancer which NewProxier needs but has methods we don't need for

// our config.EndpointsConfigHandler.

loadBalancer := userspace.NewLoadBalancerRR()

// set EndpointsConfigHandler to our loadBalancer

endpointsEventHandler = loadBalancer

// TODO this has side effects that should only happen when Run() is invoked.

proxierUserspace, err := userspace.NewProxier(

loadBalancer,

net.ParseIP(config.BindAddress),

iptInterface,

execer,

*utilnet.ParsePortRangeOrDie(config.PortRange),

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.UDPIdleTimeout.Duration,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

serviceEventHandler = proxierUserspace

proxier = proxierUserspace

// Remove artifacts from the iptables and ipvs Proxier, if not on Windows.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

iptables.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

}

iptInterface.AddReloadFunc(proxier.Sync)

return &ProxyServer{

Client: client,

EventClient: eventClient,

IptInterface: iptInterface,

IpvsInterface: ipvsInterface,

IpsetInterface: ipsetInterface,

execer: execer,

Proxier: proxier,

Broadcaster: eventBroadcaster,

Recorder: recorder,

ConntrackConfiguration: config.Conntrack,

Conntracker: &realConntracker{},

ProxyMode: proxyMode,

NodeRef: nodeRef,

MetricsBindAddress: config.MetricsBindAddress,

EnableProfiling: config.EnableProfiling,

OOMScoreAdj: config.OOMScoreAdj,

ResourceContainer: config.ResourceContainer,

ConfigSyncPeriod: config.ConfigSyncPeriod.Duration,

ServiceEventHandler: serviceEventHandler,

EndpointsEventHandler: endpointsEventHandler,

HealthzServer: healthzServer,

}, nil
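getProxyMode itself is not shown in this article. As far as I understand it, the requested mode is only a wish: ipvs falls back to iptables when the kernel checks fail, and iptables falls back to userspace when iptables is too old. A rough sketch of that fallback chain follows. pickMode and its boolean parameters are hypothetical names standing in for the real compatibility checks.

```go
package main

import "fmt"

const (
	modeUserspace = "userspace"
	modeIPTables  = "iptables"
	modeIPVS      = "ipvs"
)

// pickMode is a hypothetical simplification of the mode selection:
// ipvsOK and iptablesOK stand in for the kernel and version checks.
func pickMode(requested string, ipvsOK, iptablesOK bool) string {
	switch requested {
	case modeUserspace:
		return modeUserspace
	case modeIPVS:
		if ipvsOK {
			return modeIPVS
		}
		// ipvs is not usable: continue with the iptables check below.
	}
	if iptablesOK {
		return modeIPTables
	}
	return modeUserspace
}

func main() {
	fmt.Println(pickMode("ipvs", true, true))
	fmt.Println(pickMode("ipvs", false, true))
	fmt.Println(pickMode("", false, false))
}
```

This is why a node configured for ipvs can silently end up running the iptables proxier; the "Using ... Proxier." log line is the place to confirm which branch was taken.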

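proxyServer.Run() applies the OOM score adjustment (OOMAdjuster), starts the healthz listener, serves /metrics and the proxy-mode endpoint /proxyMode, tunes connection tracking, creates the informerFactory, starts the watches on Services and Endpoints, and finally enters the SyncLoop.

The code is as follows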

// Run runs the specified ProxyServer. This should never exit (unless CleanupAndExit is set).

func (s *ProxyServer) Run() error {

// To help debugging, immediately log version

glog.Infof("Version: %+v", version.Get())

// remove iptables rules and exit

if s.CleanupAndExit {

encounteredError := userspace.CleanupLeftovers(s.IptInterface)

encounteredError = iptables.CleanupLeftovers(s.IptInterface) || encounteredError

encounteredError = ipvs.CleanupLeftovers(s.IpvsInterface, s.IptInterface, s.IpsetInterface, s.CleanupIPVS) || encounteredError

if encounteredError {

return errors.New("encountered an error while tearing down rules.")

}

return nil

}

// TODO(vmarmol): Use container config for this.

var oomAdjuster *oom.OOMAdjuster

if s.OOMScoreAdj != nil {

oomAdjuster = oom.NewOOMAdjuster()

if err := oomAdjuster.ApplyOOMScoreAdj(0, int(*s.OOMScoreAdj)); err != nil {

glog.V(2).Info(err)

}

}

// run inside a resource-only container if one is configured

if len(s.ResourceContainer) != 0 {

// Run in its own container.

if err := resourcecontainer.RunInResourceContainer(s.ResourceContainer); err != nil {

glog.Warningf("Failed to start in resource-only container %q: %v", s.ResourceContainer, err)

} else {

glog.V(2).Infof("Running in resource-only container %q", s.ResourceContainer)

}

}

if s.Broadcaster != nil && s.EventClient != nil {

s.Broadcaster.StartRecordingToSink(&v1core.EventSinkImpl{Interface: s.EventClient.Events("")})

}

// Start up a healthz server if requested

if s.HealthzServer != nil {

s.HealthzServer.Run()

}

// Start up a metrics server if requested

if len(s.MetricsBindAddress) > 0 {

mux := mux.NewPathRecorderMux("kube-proxy")

healthz.InstallHandler(mux)

mux.HandleFunc("/proxyMode", func(w http.ResponseWriter, r *http.Request) {

fmt.Fprintf(w, "%s", s.ProxyMode)

})

mux.Handle("/metrics", prometheus.Handler())

if s.EnableProfiling {

routes.Profiling{}.Install(mux)

}

configz.InstallHandler(mux)

go wait.Until(func() {

err := http.ListenAndServe(s.MetricsBindAddress, mux)

if err != nil {

utilruntime.HandleError(fmt.Errorf("starting metrics server failed: %v", err))

}

}, 5*time.Second, wait.NeverStop)

}

// Tune connection tracking (conntrack)

// Tune conntrack, if requested

// Conntracker is always nil for windows

// e.g. sets sysctl 'net/netfilter/nf_conntrack_max' to 524288

// e.g. sets sysctl 'net/netfilter/nf_conntrack_tcp_timeout_established' to 86400

// e.g. sets sysctl 'net/netfilter/nf_conntrack_tcp_timeout_close_wait' to 3600

if s.Conntracker != nil {

max, err := getConntrackMax(s.ConntrackConfiguration)

if err != nil {

return err

}

if max > 0 {

err := s.Conntracker.SetMax(max)

if err != nil {

if err != readOnlySysFSError {

return err

}

// readOnlySysFSError is caused by a known docker issue (https://github.com/docker/docker/issues/24000),

// the only remediation we know is to restart the docker daemon.

// Here we'll send an node event with specific reason and message, the

// administrator should decide whether and how to handle this issue,

// whether to drain the node and restart docker.

// TODO(random-liu): Remove this when the docker bug is fixed.

const message = "DOCKER RESTART NEEDED (docker issue #24000): /sys is read-only: " +

"cannot modify conntrack limits, problems may arise later."

s.Recorder.Eventf(s.NodeRef, api.EventTypeWarning, err.Error(), message)

}

}

if s.ConntrackConfiguration.TCPEstablishedTimeout != nil && s.ConntrackConfiguration.TCPEstablishedTimeout.Duration > 0 {

timeout := int(s.ConntrackConfiguration.TCPEstablishedTimeout.Duration / time.Second)

if err := s.Conntracker.SetTCPEstablishedTimeout(timeout); err != nil {

return err

}

}

if s.ConntrackConfiguration.TCPCloseWaitTimeout != nil && s.ConntrackConfiguration.TCPCloseWaitTimeout.Duration > 0 {

timeout := int(s.ConntrackConfiguration.TCPCloseWaitTimeout.Duration / time.Second)

if err := s.Conntracker.SetTCPCloseWaitTimeout(timeout); err != nil {

return err

}

}

}

informerFactory := informers.NewSharedInformerFactory(s.Client, s.ConfigSyncPeriod)

// Create configs (i.e. Watches for Services and Endpoints)

// Note: RegisterHandler() calls need to happen before creation of Sources because sources

// only notify on changes, and the initial update (on process start) may be lost if no handlers

// are registered yet.

serviceConfig := config.NewServiceConfig(informerFactory.Core().InternalVersion().Services(), s.ConfigSyncPeriod)

serviceConfig.RegisterEventHandler(s.ServiceEventHandler)

go serviceConfig.Run(wait.NeverStop)

endpointsConfig := config.NewEndpointsConfig(informerFactory.Core().InternalVersion().Endpoints(), s.ConfigSyncPeriod)

endpointsConfig.RegisterEventHandler(s.EndpointsEventHandler)

go endpointsConfig.Run(wait.NeverStop)

// This has to start after the calls to NewServiceConfig and NewEndpointsConfig because those

// functions must configure their shared informer event handlers first.

go informerFactory.Start(wait.NeverStop)

// Birth Cry after the birth is successful

s.birthCry()

// every proxy mode implements the ProxyProvider interface

// Just loop forever for now...

s.Proxier.SyncLoop()

return nil

}

The iptables, userspace, and ipvs proxiers all implement the ProxyProvider interface

// ProxyProvider is the interface provided by proxier implementations.

type ProxyProvider interface {

// Sync immediately synchronizes the ProxyProvider's current state to proxy rules.

Sync()

// SyncLoop runs periodic work.

// This is expected to run as a goroutine or as the main loop of the app.

// It does not return.

SyncLoop()

}
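To make the contract concrete, here is a toy implementation of the same two-method interface. countingProxier is a hypothetical type, and unlike a real proxier its SyncLoop returns after a fixed number of rounds so that the example terminates.

```go
package main

import (
	"fmt"
	"time"
)

// ProxyProvider mirrors the two-method interface shown above.
type ProxyProvider interface {
	Sync()
	SyncLoop()
}

// countingProxier is a hypothetical toy proxier that only counts syncs.
type countingProxier struct {
	period time.Duration // time between periodic syncs
	rounds int           // periodic rounds to run before returning (real proxiers never return)
	syncs  int           // number of syncs performed so far
}

// Sync does the "work" immediately.
func (p *countingProxier) Sync() {
	p.syncs++
	fmt.Printf("sync #%d\n", p.syncs)
}

// SyncLoop runs Sync once per period.
func (p *countingProxier) SyncLoop() {
	ticker := time.NewTicker(p.period)
	defer ticker.Stop()
	for i := 0; i < p.rounds; i++ {
		<-ticker.C
		p.Sync()
	}
}

func main() {
	var provider ProxyProvider = &countingProxier{period: 10 * time.Millisecond, rounds: 3}
	provider.Sync()     // one immediate sync, like the iptables reload hook
	provider.SyncLoop() // then the periodic loop
}
```

Because ProxyServer only holds a ProxyProvider, Run() can call s.Proxier.SyncLoop() without knowing which of the three modes was selected.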

That completes the overall kube-proxy startup flow. Next we look in detail at how ipvs, userspace, and iptables are each implemented.

The way in is the NewProxyServer function

$GOPATH/src/k8s.io/kubernetes/cmd/kube-proxy/app/server.go

ipvs

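I will not go into how ipvs itself works here. For a deeper look, see these two articles: "Linux负载均衡–LVS(IPVS)" (Linux load balancing: LVS/IPVS) and "ipvs负载均衡模块的内核实现" (the kernel implementation of the ipvs load-balancing module).

Load-balancing schedulers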

rr: round-robin

lc: least connection

dh: destination hashing

sh: source hashing

sed: shortest expected delay

nq: never queue
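In ipvs mode these schedulers run inside the kernel, not in kube-proxy. Purely to illustrate what the first two names mean, here is a user-space sketch of rr and lc. The backend type and both functions are hypothetical and are not kube-proxy or kernel code.

```go
package main

import "fmt"

// backend is a hypothetical real server with a connection counter.
type backend struct {
	addr        string
	activeConns int
}

// roundRobin returns a picker that cycles through the backends in order (rr).
func roundRobin(backends []backend) func() backend {
	next := 0
	return func() backend {
		b := backends[next]
		next = (next + 1) % len(backends)
		return b
	}
}

// leastConn returns the backend with the fewest active connections (lc).
// The first backend wins ties.
func leastConn(backends []backend) backend {
	best := backends[0]
	for _, b := range backends[1:] {
		if b.activeConns < best.activeConns {
			best = b
		}
	}
	return best
}

func main() {
	backends := []backend{
		{addr: "10.0.0.1:80", activeConns: 3},
		{addr: "10.0.0.2:80", activeConns: 1},
		{addr: "10.0.0.3:80", activeConns: 2},
	}
	pick := roundRobin(backends)
	for i := 0; i < 4; i++ {
		fmt.Println("rr ->", pick().addr)
	}
	fmt.Println("lc ->", leastConn(backends).addr)
}
```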

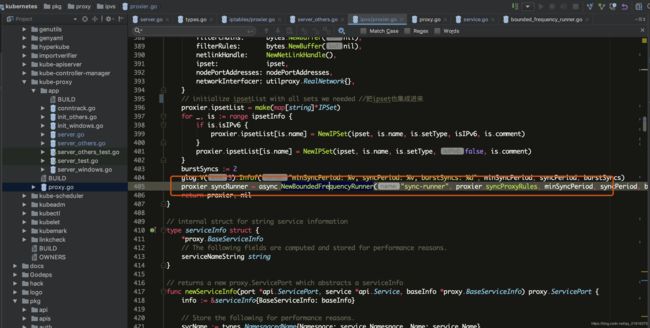

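First, look at NewProxier (see the figure). It wires up the handles for operating iptables, ipset, and ipvs, initializes the scheduling algorithm, records the cluster CIDR, and so on.

Now for the actual implementation of NewProxier.

NewProxier sets route_localnet, checks bridge-nf-call-iptables, enables connection tracking (conntrack) for ipvs, turns on kernel IP forwarding (/proc/sys/net/ipv4/ip_forward), handles IPv6 specially, checks whether a cluster CIDR was specified, creates the healthChecker, initializes the ipsets, sets the rule sync periods, registers syncProxyRules, and so on. The most important piece is syncProxyRules. The code is as follows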

func NewProxier(ipt utiliptables.Interface,

ipvs utilipvs.Interface,

ipset utilipset.Interface,

sysctl utilsysctl.Interface,

exec utilexec.Interface,

syncPeriod time.Duration,

minSyncPeriod time.Duration,

excludeCIDRs []string,

masqueradeAll bool,

masqueradeBit int,

clusterCIDR string,

hostname string,

nodeIP net.IP,

recorder record.EventRecorder,

healthzServer healthcheck.HealthzUpdater,

scheduler string,

nodePortAddresses []string,

) (*Proxier, error) {

// Set the route_localnet sysctl we need for

if err := sysctl.SetSysctl(sysctlRouteLocalnet, 1); err != nil {

return nil, fmt.Errorf("can't set sysctl %s: %v", sysctlRouteLocalnet, err)

}

// Proxy needs br_netfilter and bridge-nf-call-iptables=1 when containers

// are connected to a Linux bridge (but not SDN bridges). Until most

// plugins handle this, log when config is missing

if val, err := sysctl.GetSysctl(sysctlBridgeCallIPTables); err == nil && val != 1 {

glog.Infof("missing br-netfilter module or unset sysctl br-nf-call-iptables; proxy may not work as intended")

}

// Set the conntrack sysctl we need for

if err := sysctl.SetSysctl(sysctlVSConnTrack, 1); err != nil {

return nil, fmt.Errorf("can't set sysctl %s: %v", sysctlVSConnTrack, err)

}

// enable kernel IP forwarding

// Set the ip_forward sysctl we need for

if err := sysctl.SetSysctl(sysctlForward, 1); err != nil {

return nil, fmt.Errorf("can't set sysctl %s: %v", sysctlForward, err)

}

// Generate the masquerade mark to use for SNAT rules.

masqueradeValue := 1 << uint(masqueradeBit)

masqueradeMark := fmt.Sprintf("%#08x/%#08x", masqueradeValue, masqueradeValue)

if nodeIP == nil {

glog.Warningf("invalid nodeIP, initializing kube-proxy with 127.0.0.1 as nodeIP")

nodeIP = net.ParseIP("127.0.0.1")

}

isIPv6 := utilnet.IsIPv6(nodeIP)

glog.V(2).Infof("nodeIP: %v, isIPv6: %v", nodeIP, isIPv6)

if len(clusterCIDR) == 0 {

glog.Warningf("clusterCIDR not specified, unable to distinguish between internal and external traffic")

} else if utilnet.IsIPv6CIDR(clusterCIDR) != isIPv6 {

return nil, fmt.Errorf("clusterCIDR %s has incorrect IP version: expect isIPv6=%t", clusterCIDR, isIPv6)

}

if len(scheduler) == 0 {

glog.Warningf("IPVS scheduler not specified, use %s by default", DefaultScheduler)

scheduler = DefaultScheduler

}

healthChecker := healthcheck.NewServer(hostname, recorder, nil, nil) // use default implementations of deps

proxier := &Proxier{

portsMap: make(map[utilproxy.LocalPort]utilproxy.Closeable),

serviceMap: make(proxy.ServiceMap),

serviceChanges: proxy.NewServiceChangeTracker(newServiceInfo, &isIPv6, recorder),

endpointsMap: make(proxy.EndpointsMap),

endpointsChanges: proxy.NewEndpointChangeTracker(hostname, nil, &isIPv6, recorder),

syncPeriod: syncPeriod,

minSyncPeriod: minSyncPeriod,

excludeCIDRs: excludeCIDRs,

iptables: ipt,

masqueradeAll: masqueradeAll,

masqueradeMark: masqueradeMark,

exec: exec,

clusterCIDR: clusterCIDR,

hostname: hostname,

nodeIP: nodeIP,

portMapper: &listenPortOpener{},

recorder: recorder,

healthChecker: healthChecker,

healthzServer: healthzServer,

ipvs: ipvs,

ipvsScheduler: scheduler,

ipGetter: &realIPGetter{nl: NewNetLinkHandle()},

iptablesData: bytes.NewBuffer(nil),

natChains: bytes.NewBuffer(nil),

natRules: bytes.NewBuffer(nil),

filterChains: bytes.NewBuffer(nil),

filterRules: bytes.NewBuffer(nil),

netlinkHandle: NewNetLinkHandle(),

ipset: ipset,

nodePortAddresses: nodePortAddresses,

networkInterfacer: utilproxy.RealNetwork{},

}

// initialize ipsetList with all sets we needed (this is where ipset is wired in as well)

proxier.ipsetList = make(map[string]*IPSet)

for _, is := range ipsetInfo {

if is.isIPv6 {

proxier.ipsetList[is.name] = NewIPSet(ipset, is.name, is.setType, isIPv6, is.comment)

}

proxier.ipsetList[is.name] = NewIPSet(ipset, is.name, is.setType, false, is.comment)

}

burstSyncs := 2

glog.V(3).Infof("minSyncPeriod: %v, syncPeriod: %v, burstSyncs: %d", minSyncPeriod, syncPeriod, burstSyncs)

proxier.syncRunner = async.NewBoundedFrequencyRunner("sync-runner", proxier.syncProxyRules, minSyncPeriod, syncPeriod, burstSyncs)

return proxier, nil

}
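The masquerade mark computed in NewProxier is easy to skim past, so here is the same arithmetic in isolation. The format string is the one from the code above; the helper names and the bit value 14 used in main are my own example choices.

```go
package main

import "fmt"

// masqueradeValue turns a bit index into the packet-mark value.
func masqueradeValue(bit int) int {
	return 1 << uint(bit)
}

// masqueradeMark renders the "value/mask" string with the same format
// string as NewProxier; value and mask are identical.
func masqueradeMark(bit int) string {
	v := masqueradeValue(bit)
	return fmt.Sprintf("%#08x/%#08x", v, v)
}

// isMarked reports whether a packet mark has the masquerade bit set.
func isMarked(fwmark, bit int) bool {
	return fwmark&masqueradeValue(bit) != 0
}

func main() {
	const bit = 14 // example value
	fmt.Println("value:", masqueradeValue(bit))
	fmt.Println("mark: ", masqueradeMark(bit))
	fmt.Println("0x4001 marked?", isMarked(0x4001, bit))
	fmt.Println("0x0001 marked?", isMarked(0x0001, bit))
}
```

Using a single bit as both value and mask lets the SNAT rules test for the mark without disturbing other bits that other components may have set on the packet.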

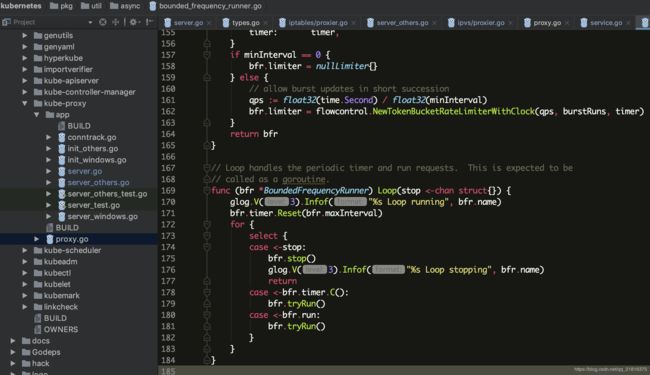

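Once the proxier has been created, control returns to s.Proxier.SyncLoop(), the loop that syncs the rules.

The tryRun function

Inside tryRun, bfr.fn() is the call that actually invokes proxier.syncProxyRules. A sync is also performed right at startup.

And so the rules are synced over and over.

Next, what proxier.syncRunner does: proxier.syncRunner is the bounded-frequency runner created in NewProxier, and it refreshes the rules according to the configured sync periods (minSyncPeriod and syncPeriod).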
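The idea behind a bounded-frequency runner can be reduced to one decision: run when a change was requested and the minimum period has elapsed, or when the maximum period has elapsed regardless. The sketch below captures only that decision. shouldSync is a hypothetical helper, and it leaves out the burst allowance (burstSyncs) that the real runner also has.

```go
package main

import (
	"fmt"
	"time"
)

// shouldSync is a simplified model of the bounded-frequency decision.
// sinceLast is the time elapsed since the previous sync.
func shouldSync(sinceLast time.Duration, requested bool, minPeriod, maxPeriod time.Duration) bool {
	if sinceLast >= maxPeriod {
		return true // periodic resync, even if nothing changed
	}
	return requested && sinceLast >= minPeriod // change-driven, but rate limited
}

func main() {
	minPeriod, maxPeriod := 1*time.Second, 30*time.Second
	cases := []struct {
		sinceLast time.Duration
		requested bool
	}{
		{500 * time.Millisecond, true}, // too soon after the last sync
		{2 * time.Second, true},        // a change arrived and minPeriod has passed
		{2 * time.Second, false},       // nothing changed
		{30 * time.Second, false},      // periodic resync
	}
	for _, c := range cases {
		fmt.Printf("sinceLast=%v requested=%v -> sync=%v\n",
			c.sinceLast, c.requested, shouldSync(c.sinceLast, c.requested, minPeriod, maxPeriod))
	}
}
```

The lower bound protects the node from rewriting rules on every single Endpoints update, and the upper bound repairs rules that were changed behind kube-proxy's back.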

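In effect, the purpose of this loop is synchronization: it handles the stop signal, and it handles sync requests triggered by changes.

The sync logic itself lives in proxier.syncProxyRules, so let's go through that function step by step.

It takes a lock, so on each node only one sync can run at any given moment

It records sync metrics

It updates the health check state

It applies the Services that changed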

for _, svcPortName := range endpointUpdateResult.StaleServiceNames {

if svcInfo, ok := proxier.serviceMap[svcPortName]; ok && svcInfo != nil && svcInfo.GetProtocol() == api.ProtocolUDP {

glog.V(2).Infof("Stale udp service %v -> %s", svcPortName, svcInfo.ClusterIPString())

staleServices.Insert(svcInfo.ClusterIPString())

}

}

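Make sure the default dummy interface kube-ipvs0 exists; ipvs service addresses are bound to this interface

Make sure the required ipsets exist

Bind access rules for each service. The ports of each service are stored in the form type ServiceMap map[ServicePortName]ServicePort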

ServicePortName

type ServicePortName struct {

types.NamespacedName

Port string

}

ServicePort

// ServicePort is an interface which abstracts information about a service.

type ServicePort interface {

// String returns service string. An example format can be: `IP:Port/Protocol`.

String() string

// ClusterIPString returns service cluster IP in string format.

ClusterIPString() string

// GetProtocol returns service protocol.

GetProtocol() api.Protocol

// GetHealthCheckNodePort returns service health check node port if present. If return 0, it means not present.

GetHealthCheckNodePort() int

}
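How the two types above fit together as a map can be illustrated with a minimal, self-contained sketch. The local `NamespacedName` type only mirrors the shape of types.NamespacedName, the interface is trimmed to two methods, and `fakeServicePort` is a hypothetical implementation that exists only for this example.

```go
package main

import "fmt"

// NamespacedName mirrors the shape of types.NamespacedName for this sketch.
type NamespacedName struct {
	Namespace string
	Name      string
}

// ServicePortName identifies one named port of one service.
type ServicePortName struct {
	NamespacedName
	Port string
}

// ServicePort is trimmed to the two methods used below.
type ServicePort interface {
	String() string
	ClusterIPString() string
}

// fakeServicePort is a hypothetical implementation used only for illustration.
type fakeServicePort struct {
	clusterIP string
	port      int
	protocol  string
}

func (p fakeServicePort) String() string {
	return fmt.Sprintf("%s:%d/%s", p.clusterIP, p.port, p.protocol)
}

func (p fakeServicePort) ClusterIPString() string { return p.clusterIP }

// ServiceMap has the same shape as the type quoted in the article.
type ServiceMap map[ServicePortName]ServicePort

func main() {
	key := ServicePortName{NamespacedName{"default", "web"}, "http"}
	m := ServiceMap{key: fakeServicePort{"10.96.0.10", 80, "TCP"}}

	// A struct made of comparable fields is itself comparable,
	// so an equal key built elsewhere finds the same entry.
	lookup := ServicePortName{NamespacedName{"default", "web"}, "http"}
	if sp, ok := m[lookup]; ok {
		fmt.Println(sp.String())
	}
}
```

Using a struct as the key means each (namespace, name, port name) triple gets its own entry, so a service with several ports appears several times in the map.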

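The next step is to bind each service and set up its access policy.

Concretely, the code iterates over all services, looks up the endpoints of each one, and then binds them to the corresponding virtual server (VS) according to the protocol, the access method (externalIP, nodePort) and the LB ingress. There are many details here; readers can study the code at $GOPATH/src/k8s.io/kubernetes/pkg/proxy/ipvs/proxier.go

The ipset set types supported by kube-proxy are as follows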

// HashIPPort represents the `hash:ip,port` type ipset. The hash:ip,port is similar to hash:ip but

// you can store IP address and protocol-port pairs in it. TCP, SCTP, UDP, UDPLITE, ICMP and ICMPv6 are supported

// with port numbers/ICMP(v6) types and other protocol numbers without port information.

HashIPPort Type = "hash:ip,port"

// HashIPPortIP represents the `hash:ip,port,ip` type ipset. The hash:ip,port,ip set type uses a hash to store

// IP address, port number and a second IP address triples. The port number is interpreted together with a

// protocol (default TCP) and zero protocol number cannot be used.

HashIPPortIP Type = "hash:ip,port,ip"

// HashIPPortNet represents the `hash:ip,port,net` type ipset. The hash:ip,port,net set type uses a hash to store IP address, port number and IP network address triples. The port

// number is interpreted together with a protocol (default TCP) and zero protocol number cannot be used. Network address

// with zero prefix size cannot be stored either.

HashIPPortNet Type = "hash:ip,port,net"

// BitmapPort represents the `bitmap:port` type ipset. The bitmap:port set type uses a memory range, where each bit

// represents one TCP/UDP port. A bitmap:port type of set can store up to 65535 ports.

BitmapPort Type = "bitmap:port"
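To make the four set types more concrete, the sketch below formats one entry for each of them in the syntax that the `ipset add` command accepts (for example `10.0.0.1,tcp:80` for hash:ip,port). The `entry` struct and the `format` function are hypothetical helpers written for this illustration, not the kube-proxy API.

```go
package main

import "fmt"

// Set type names copied from the constants listed above.
const (
	HashIPPort    = "hash:ip,port"
	HashIPPortIP  = "hash:ip,port,ip"
	HashIPPortNet = "hash:ip,port,net"
	BitmapPort    = "bitmap:port"
)

// entry is a hypothetical helper type; only the fields relevant
// to a given set type are used when formatting.
type entry struct {
	IP       string
	Protocol string
	Port     int
	IP2      string // second address, for hash:ip,port,ip
	Net      string // network in CIDR form, for hash:ip,port,net
}

// format renders an entry in the element syntax of the given set type.
// It returns an empty string for an unknown set type.
func format(setType string, e entry) string {
	switch setType {
	case HashIPPort:
		return fmt.Sprintf("%s,%s:%d", e.IP, e.Protocol, e.Port)
	case HashIPPortIP:
		return fmt.Sprintf("%s,%s:%d,%s", e.IP, e.Protocol, e.Port, e.IP2)
	case HashIPPortNet:
		return fmt.Sprintf("%s,%s:%d,%s", e.IP, e.Protocol, e.Port, e.Net)
	case BitmapPort:
		return fmt.Sprintf("%d", e.Port)
	}
	return ""
}

func main() {
	e := entry{IP: "10.96.0.10", Protocol: "tcp", Port: 80, IP2: "10.244.1.5", Net: "192.168.0.0/16"}
	for _, t := range []string{HashIPPort, HashIPPortIP, HashIPPortNet, BitmapPort} {
		fmt.Printf("%-18s %s\n", t, format(t, e))
	}
}
```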

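That concludes the analysis of the ipvs implementation. Corrections are welcome.

Userspace

Schematic diagram

The userspace mode implements the proxy with sockets. As the diagram shows, the biggest problem with this mode is that a request to a service first goes from user space into the kernel's iptables and then comes back to user space, where kube-proxy selects a backend endpoint and proxies the traffic. The overhead of moving traffic in and out of the kernel this way is unacceptable. This was the most criticized aspect of kube-proxy in Kubernetes v1.0 and earlier, which is why the community started working on the iptables mode.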
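The endpoint selection that kube-proxy performs in user space can be pictured with a small round-robin picker. This is only a sketch of the idea suggested by the name NewLoadBalancerRR; the `roundRobin` type is hypothetical, and session affinity and the actual socket proxying are left out.

```go
package main

import (
	"fmt"
	"sync"
)

// roundRobin is a hypothetical, minimal picker that cycles through
// the endpoints of one service port.
type roundRobin struct {
	mu        sync.Mutex
	endpoints []string
	next      int
}

// pick returns the next endpoint in rotation, or false if there are none.
func (r *roundRobin) pick() (string, bool) {
	r.mu.Lock()
	defer r.mu.Unlock()
	if len(r.endpoints) == 0 {
		return "", false
	}
	ep := r.endpoints[r.next%len(r.endpoints)]
	r.next = (r.next + 1) % len(r.endpoints)
	return ep, true
}

func main() {
	rr := &roundRobin{endpoints: []string{"10.244.1.5:8080", "10.244.2.7:8080"}}
	for i := 0; i < 3; i++ {
		ep, _ := rr.pick()
		fmt.Println("connection", i, "->", ep)
	}
}
```

In the userspace mode every accepted connection would go through a choice like this and then be copied byte by byte to the chosen backend, which is where the extra kernel crossings come from.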

The relevant code is in the newProxyServer function

glog.V(0).Info("Using userspace Proxier.")

// This is a proxy.LoadBalancer which NewProxier needs but has methods we don't need for

// our config.EndpointsConfigHandler.

loadBalancer := userspace.NewLoadBalancerRR()

// set EndpointsConfigHandler to our loadBalancer

endpointsEventHandler = loadBalancer

// TODO this has side effects that should only happen when Run() is invoked.

proxierUserspace, err := userspace.NewProxier(

loadBalancer,

net.ParseIP(config.BindAddress),

iptInterface,

execer,

*utilnet.ParsePortRangeOrDie(config.PortRange),

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.UDPIdleTimeout.Duration,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

serviceEventHandler = proxierUserspace

proxier = proxierUserspace

// Remove artifacts from the iptables and ipvs Proxier, if not on Windows.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

iptables.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

The implementation is in the file $GOPATH/src/k8s.io/kubernetes/pkg/proxy/userspace/proxier.go

func NewCustomProxier(loadBalancer LoadBalancer, listenIP net.IP, iptables iptables.Interface, exec utilexec.Interface, pr utilnet.PortRange, syncPeriod, minSyncPeriod, udpIdleTimeout time.Duration, nodePortAddresses []string, makeProxySocket ProxySocketFunc) (*Proxier, error) {

if listenIP.Equal(localhostIPv4) || listenIP.Equal(localhostIPv6) {

return nil, ErrProxyOnLocalhost

}

// If listenIP is given, assume that is the intended host IP. Otherwise

// try to find a suitable host IP address from network interfaces.

var err error

hostIP := listenIP

if hostIP.Equal(net.IPv4zero) || hostIP.Equal(net.IPv6zero) {

hostIP, err = utilnet.ChooseHostInterface()

if err != nil {

return nil, fmt.Errorf("failed to select a host interface: %v", err)

}

}

err = setRLimit(64 * 1000)

if err != nil {

return nil, fmt.Errorf("failed to set open file handler limit: %v", err)

}

proxyPorts := newPortAllocator(pr)

glog.V(2).Infof("Setting proxy IP to %v and initializing iptables", hostIP)

return createProxier(loadBalancer, listenIP, iptables, exec, hostIP, proxyPorts, syncPeriod, minSyncPeriod, udpIdleTimeout, makeProxySocket)

}

The two main functions are the following

// Sync is called to immediately synchronize the proxier state to iptables

func (proxier *Proxier) Sync() {

if err := iptablesInit(proxier.iptables); err != nil {

glog.Errorf("Failed to ensure iptables: %v", err)

}

proxier.ensurePortals()

proxier.cleanupStaleStickySessions()

}

// SyncLoop runs periodic work. This is expected to run as a goroutine or as the main loop of the app. It does not return.

func (proxier *Proxier) SyncLoop() {

t := time.NewTicker(proxier.syncPeriod)

defer t.Stop()

for {

<-t.C

glog.V(6).Infof("Periodic sync")

proxier.Sync()

}

}

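The logic follows the same pattern as ipvs, so it is not explained in detail here.

iptables

Schematic diagram

Because the iptables mode relies on iptables NAT for forwarding, it also incurs a non-negligible performance cost. In addition, if the cluster has tens of thousands of Services/Endpoints, the iptables rule set on each node becomes very large and performance degrades further.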
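A rough count shows how quickly the rule set grows. The sketch below follows the structure of the syncProxyRules listing later in this article for a ClusterIP-only service: one jump (plus an optional masquerade rule) in KUBE-SERVICES, one balancing rule per endpoint in the per-service chain, and two rules in each per-endpoint chain. The function `clusterIPRuleCount` is a hypothetical model; externalIPs, load balancer ingress and nodePorts would add more rules.

```go
package main

import "fmt"

// clusterIPRuleCount estimates the iptables rules written for one
// ClusterIP-only service port. It is a rough, hypothetical model.
func clusterIPRuleCount(endpoints int, masquerade, clientIPAffinity bool) int {
	if endpoints == 0 {
		return 1 // a single REJECT rule in the filter table
	}
	rules := 1 // jump from KUBE-SERVICES to the per-service chain
	if masquerade {
		rules++ // extra KUBE-MARK-MASQ rule in KUBE-SERVICES
	}
	rules += endpoints     // one balancing rule per endpoint in the service chain
	rules += 2 * endpoints // per-endpoint chain: hairpin masquerade + DNAT
	if clientIPAffinity {
		rules += endpoints // one "recent --rcheck" rule per endpoint
	}
	return rules
}

func main() {
	perService := clusterIPRuleCount(3, true, false)
	fmt.Println("rules for one service with 3 endpoints:", perService)
	fmt.Println("rules for 10000 such services:", 10000*perService)
}
```

Under these assumptions a service with three endpoints needs 11 rules, so ten thousand such services already produce over a hundred thousand rules that are rewritten on every sync.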

The iptables-related code in newProxyServer is as follows

if proxyMode == proxyModeIPTables {

glog.V(0).Info("Using iptables Proxier.")

nodeIP := net.ParseIP(config.BindAddress)

if nodeIP.Equal(net.IPv4zero) || nodeIP.Equal(net.IPv6zero) {

nodeIP = getNodeIP(client, hostname)

}

if config.IPTables.MasqueradeBit == nil {

// MasqueradeBit must be specified or defaulted.

return nil, fmt.Errorf("unable to read IPTables MasqueradeBit from config")

}

// TODO this has side effects that should only happen when Run() is invoked.

proxierIPTables, err := iptables.NewProxier(

iptInterface,

utilsysctl.New(),

execer,

config.IPTables.SyncPeriod.Duration,

config.IPTables.MinSyncPeriod.Duration,

config.IPTables.MasqueradeAll,

int(*config.IPTables.MasqueradeBit),

config.ClusterCIDR,

hostname,

nodeIP,

recorder,

healthzUpdater,

config.NodePortAddresses,

)

if err != nil {

return nil, fmt.Errorf("unable to create proxier: %v", err)

}

metrics.RegisterMetrics()

proxier = proxierIPTables

serviceEventHandler = proxierIPTables

endpointsEventHandler = proxierIPTables

// No turning back. Remove artifacts that might still exist from the userspace Proxier.

glog.V(0).Info("Tearing down inactive rules.")

// TODO this has side effects that should only happen when Run() is invoked.

userspace.CleanupLeftovers(iptInterface)

// IPVS Proxier will generate some iptables rules, need to clean them before switching to other proxy mode.

// Besides, ipvs proxier will create some ipvs rules as well. Because there is no way to tell if a given

// ipvs rule is created by IPVS proxier or not. Users should explicitly specify `--clean-ipvs=true` to flush

// all ipvs rules when kube-proxy start up. Users do this operation should be with caution.

ipvs.CleanupLeftovers(ipvsInterface, iptInterface, ipsetInterface, cleanupIPVS)

// IPVS mode

}
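The MasqueradeBit passed to iptables.NewProxier above ends up as the mark used with `-m mark --mark`; the rule comments later in this article show 0x4000/0x4000, which corresponds to bit 14. The sketch below shows that arithmetic under the assumption that the mark is simply `1 << bit` used as both value and mask. The helper name `masqueradeMark` is hypothetical, and the exact string formatting used upstream may differ (for example in zero padding).

```go
package main

import "fmt"

// masqueradeMark builds a "value/mask" string for iptables --mark
// from a bit index. Hypothetical helper for illustration only.
func masqueradeMark(bit int) (string, error) {
	if bit < 0 || bit > 31 {
		return "", fmt.Errorf("masquerade bit %d out of range [0, 31]", bit)
	}
	v := uint32(1) << uint(bit)
	return fmt.Sprintf("%#x/%#x", v, v), nil
}

func main() {
	mark, err := masqueradeMark(14)
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println(mark) // prints 0x4000/0x4000
}
```

Using a single bit as both value and mask lets kube-proxy test and set its own flag without disturbing other bits of the packet mark that other tools may use.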

The implementation follows exactly the same code style as the ipvs one

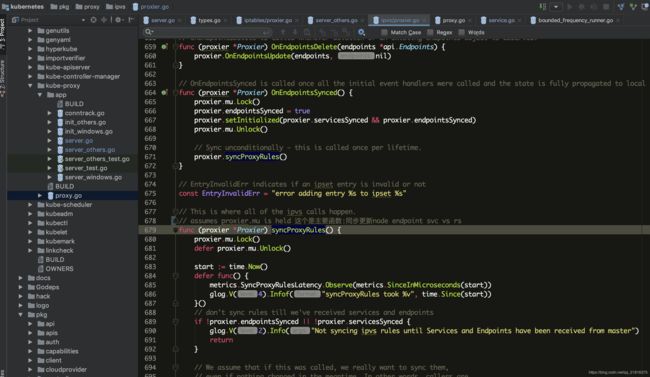
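One detail of the syncProxyRules listing that follows is worth working through: load balancing across n endpoints is done with `-m statistic --mode random --probability` rules, where rule i receives proxier.probability(n-i) and the last rule is an unconditional jump. Assuming that this helper yields 1/(n-i) (an assumption, since its body is not shown in this article), the rules are evaluated in order and every endpoint ends up with the same overall share of 1/n. The functions below are hypothetical and only check that arithmetic.

```go
package main

import "fmt"

// ruleProbabilities returns the match probability of each of the n
// balancing rules: 1/(n-i) for rule i, so the last one is 1.
func ruleProbabilities(n int) []float64 {
	probs := make([]float64, n)
	for i := range probs {
		probs[i] = 1.0 / float64(n-i)
	}
	return probs
}

// overallShare returns, for each rule, the chance that it is the rule
// that finally matches when the rules are evaluated in order.
func overallShare(probs []float64) []float64 {
	out := make([]float64, len(probs))
	remaining := 1.0 // probability that no earlier rule matched
	for i, p := range probs {
		out[i] = remaining * p
		remaining *= 1 - p
	}
	return out
}

func main() {
	probs := ruleProbabilities(3)
	for i, share := range overallShare(probs) {
		if i < len(probs)-1 {
			fmt.Printf("rule %d: --probability %0.5f, overall share %0.5f\n", i, probs[i], share)
		} else {
			fmt.Printf("rule %d: unconditional jump, overall share %0.5f\n", i, share)
		}
	}
}
```

For three endpoints the per-rule probabilities are 1/3, 1/2 and 1, yet each endpoint receives one third of new connections, because a later rule is only reached when all earlier ones did not match.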

syncProxyRules is implemented as follows

func (proxier *Proxier) syncProxyRules() {

proxier.mu.Lock()

defer proxier.mu.Unlock()

start := time.Now()

defer func() {

metrics.SyncProxyRulesLatency.Observe(metrics.SinceInMicroseconds(start))

glog.V(4).Infof("syncProxyRules took %v", time.Since(start))

}()

// don't sync rules till we've received services and endpoints

if !proxier.endpointsSynced || !proxier.servicesSynced {

glog.V(2).Info("Not syncing iptables until Services and Endpoints have been received from master")

return

}

// Update the service and endpoints maps

// We assume that if this was called, we really want to sync them,

// even if nothing changed in the meantime. In other words, callers are

// responsible for detecting no-op changes and not calling this function.

serviceUpdateResult := proxy.UpdateServiceMap(proxier.serviceMap, proxier.serviceChanges)

endpointUpdateResult := proxy.UpdateEndpointsMap(proxier.endpointsMap, proxier.endpointsChanges)

staleServices := serviceUpdateResult.UDPStaleClusterIP

// merge stale services gathered from updateEndpointsMap

for _, svcPortName := range endpointUpdateResult.StaleServiceNames {

if svcInfo, ok := proxier.serviceMap[svcPortName]; ok && svcInfo != nil && svcInfo.GetProtocol() == api.ProtocolUDP {

glog.V(2).Infof("Stale udp service %v -> %s", svcPortName, svcInfo.ClusterIPString())

staleServices.Insert(svcInfo.ClusterIPString())

}

}

glog.V(3).Infof("Syncing iptables rules")

// filter table, INPUT chain: jump to the custom KUBE-EXTERNAL-SERVICES chain: -A INPUT -m conntrack --ctstate NEW -m comment --comment "kubernetes externally-visible service portals" -j KUBE-EXTERNAL-SERVICES

// filter table, OUTPUT chain: jump to the custom KUBE-SERVICES chain: -A OUTPUT -m conntrack --ctstate NEW -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

// nat table, OUTPUT chain: jump to the custom KUBE-SERVICES chain: -A OUTPUT -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

// nat table, PREROUTING chain: jump to the custom KUBE-SERVICES chain: -A PREROUTING -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

// nat table, POSTROUTING chain: jump to the custom KUBE-POSTROUTING chain: -A POSTROUTING -m comment --comment "kubernetes postrouting rules" -j KUBE-POSTROUTING

// filter table, KUBE-FORWARD chain: -A KUBE-FORWARD -m comment --comment "kubernetes forwarding rules" -m mark --mark 0x4000/0x4000 -j ACCEPT

// Note: the rules above are shown in iptables-save form (-A); the EnsureRule call below uses utiliptables.Prepend, so each jump is inserted at the head of its source chain.

//---------------------

//

// Create and link the kube chains.

for _, chain := range iptablesJumpChains {

if _, err := proxier.iptables.EnsureChain(chain.table, chain.chain); err != nil {

glog.Errorf("Failed to ensure that %s chain %s exists: %v", chain.table, kubeServicesChain, err)

return

}

args := append(chain.extraArgs,

"-m", "comment", "--comment", chain.comment,

"-j", string(chain.chain),

)

if _, err := proxier.iptables.EnsureRule(utiliptables.Prepend, chain.table, chain.sourceChain, args...); err != nil {

glog.Errorf("Failed to ensure that %s chain %s jumps to %s: %v", chain.table, chain.sourceChain, chain.chain, err)

return

}

}

//

// Below this point we will not return until we try to write the iptables rules.

//

// Get iptables-save output so we can check for existing chains and rules.

// This will be a map of chain name to chain with rules as stored in iptables-save/iptables-restore

existingFilterChains := make(map[utiliptables.Chain]string)

proxier.iptablesData.Reset()

err := proxier.iptables.SaveInto(utiliptables.TableFilter, proxier.iptablesData)

if err != nil { // if we failed to get any rules

glog.Errorf("Failed to execute iptables-save, syncing all rules: %v", err)

} else { // otherwise parse the output

existingFilterChains = utiliptables.GetChainLines(utiliptables.TableFilter, proxier.iptablesData.Bytes())

}

existingNATChains := make(map[utiliptables.Chain]string)

proxier.iptablesData.Reset()

err = proxier.iptables.SaveInto(utiliptables.TableNAT, proxier.iptablesData)

if err != nil { // if we failed to get any rules

glog.Errorf("Failed to execute iptables-save, syncing all rules: %v", err)

} else { // otherwise parse the output

existingNATChains = utiliptables.GetChainLines(utiliptables.TableNAT, proxier.iptablesData.Bytes())

}

// Reset all buffers used later.

// This is to avoid memory reallocations and thus improve performance.

proxier.filterChains.Reset()

proxier.filterRules.Reset()

proxier.natChains.Reset()

proxier.natRules.Reset()

// Write table headers.

writeLine(proxier.filterChains, "*filter")

writeLine(proxier.natChains, "*nat")

// Make sure we keep stats for the top-level chains, if they existed

// (which most should have because we created them above).

for _, chainName := range []utiliptables.Chain{kubeServicesChain, kubeExternalServicesChain, kubeForwardChain} {

if chain, ok := existingFilterChains[chainName]; ok {

writeLine(proxier.filterChains, chain)

} else {

writeLine(proxier.filterChains, utiliptables.MakeChainLine(chainName))

}

}

for _, chainName := range []utiliptables.Chain{kubeServicesChain, kubeNodePortsChain, kubePostroutingChain, KubeMarkMasqChain} {

if chain, ok := existingNATChains[chainName]; ok {

writeLine(proxier.natChains, chain)

} else {

writeLine(proxier.natChains, utiliptables.MakeChainLine(chainName))

}

}

// Install the kubernetes-specific postrouting rules. We use a whole chain for

// this so that it is easier to flush and change, for example if the mark

// value should ever change.

//-A KUBE-POSTROUTING -m comment --comment "kubernetes service traffic requiring SNAT" -m mark --mark 0x4000/0x4000 -j MASQUERADE

writeLine(proxier.natRules, []string{

"-A", string(kubePostroutingChain),

"-m", "comment", "--comment", `"kubernetes service traffic requiring SNAT"`,

"-m", "mark", "--mark", proxier.masqueradeMark,

"-j", "MASQUERADE",

}...)

// Install the kubernetes-specific masquerade mark rule. We use a whole chain for

// this so that it is easier to flush and change, for example if the mark

// value should ever change.

writeLine(proxier.natRules, []string{

"-A", string(KubeMarkMasqChain),

"-j", "MARK", "--set-xmark", proxier.masqueradeMark,

}...)

// Accumulate NAT chains to keep.

activeNATChains := map[utiliptables.Chain]bool{} // use a map as a set

// Accumulate the set of local ports that we will be holding open once this update is complete

replacementPortsMap := map[utilproxy.LocalPort]utilproxy.Closeable{}

// We are creating those slices ones here to avoid memory reallocations

// in every loop. Note that reuse the memory, instead of doing:

// slice =

// you should always do one of the below:

// slice = slice[:0] // and then append to it

// slice = append(slice[:0], ...)

endpoints := make([]*endpointsInfo, 0)

endpointChains := make([]utiliptables.Chain, 0)

// To avoid growing this slice, we arbitrarily set its size to 64,

// there is never more than that many arguments for a single line.

// Note that even if we go over 64, it will still be correct - it

// is just for efficiency, not correctness.

args := make([]string, 64)

// Build rules for each service.

for svcName, svc := range proxier.serviceMap {

svcInfo, ok := svc.(*serviceInfo)

if !ok {

glog.Errorf("Failed to cast serviceInfo %q", svcName.String())

continue

}

isIPv6 := utilnet.IsIPv6(svcInfo.ClusterIP)

protocol := strings.ToLower(string(svcInfo.Protocol))

svcNameString := svcInfo.serviceNameString

hasEndpoints := len(proxier.endpointsMap[svcName]) > 0

svcChain := svcInfo.servicePortChainName

if hasEndpoints {

// Create the per-service chain, retaining counters if possible.

if chain, ok := existingNATChains[svcChain]; ok {

writeLine(proxier.natChains, chain)

} else {

writeLine(proxier.natChains, utiliptables.MakeChainLine(svcChain))

}

activeNATChains[svcChain] = true

}

svcXlbChain := svcInfo.serviceLBChainName

if svcInfo.OnlyNodeLocalEndpoints {

// Only for services request OnlyLocal traffic

// create the per-service LB chain, retaining counters if possible.

if lbChain, ok := existingNATChains[svcXlbChain]; ok {

writeLine(proxier.natChains, lbChain)

} else {

writeLine(proxier.natChains, utiliptables.MakeChainLine(svcXlbChain))

}

activeNATChains[svcXlbChain] = true

}

// Capture the clusterIP.

if hasEndpoints {

args = append(args[:0],

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s cluster IP"`, svcNameString),

"-m", protocol, "-p", protocol,

"-d", utilproxy.ToCIDR(svcInfo.ClusterIP),

"--dport", strconv.Itoa(svcInfo.Port),

)

if proxier.masqueradeAll {

writeLine(proxier.natRules, append(args, "-j", string(KubeMarkMasqChain))...)

} else if len(proxier.clusterCIDR) > 0 {

// This masquerades off-cluster traffic to a service VIP. The idea

// is that you can establish a static route for your Service range,

// routing to any node, and that node will bridge into the Service

// for you. Since that might bounce off-node, we masquerade here.

// If/when we support "Local" policy for VIPs, we should update this.

writeLine(proxier.natRules, append(args, "! -s", proxier.clusterCIDR, "-j", string(KubeMarkMasqChain))...)

}

writeLine(proxier.natRules, append(args, "-j", string(svcChain))...)

} else {

writeLine(proxier.filterRules,

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s has no endpoints"`, svcNameString),

"-m", protocol, "-p", protocol,

"-d", utilproxy.ToCIDR(svcInfo.ClusterIP),

"--dport", strconv.Itoa(svcInfo.Port),

"-j", "REJECT",

)

}

// Capture externalIPs.

for _, externalIP := range svcInfo.ExternalIPs {

// If the "external" IP happens to be an IP that is local to this

// machine, hold the local port open so no other process can open it

// (because the socket might open but it would never work).

if local, err := utilproxy.IsLocalIP(externalIP); err != nil {

glog.Errorf("can't determine if IP is local, assuming not: %v", err)

} else if local {

lp := utilproxy.LocalPort{

Description: "externalIP for " + svcNameString,

IP: externalIP,

Port: svcInfo.Port,

Protocol: protocol,

}

if proxier.portsMap[lp] != nil {

glog.V(4).Infof("Port %s was open before and is still needed", lp.String())

replacementPortsMap[lp] = proxier.portsMap[lp]

} else {

socket, err := proxier.portMapper.OpenLocalPort(&lp)

if err != nil {

msg := fmt.Sprintf("can't open %s, skipping this externalIP: %v", lp.String(), err)

proxier.recorder.Eventf(

&v1.ObjectReference{

Kind: "Node",

Name: proxier.hostname,

UID: types.UID(proxier.hostname),

Namespace: "",

}, api.EventTypeWarning, err.Error(), msg)

glog.Error(msg)

continue

}

replacementPortsMap[lp] = socket

}

}

if hasEndpoints {

args = append(args[:0],

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s external IP"`, svcNameString),

"-m", protocol, "-p", protocol,

"-d", utilproxy.ToCIDR(net.ParseIP(externalIP)),

"--dport", strconv.Itoa(svcInfo.Port),

)

// We have to SNAT packets to external IPs.

writeLine(proxier.natRules, append(args, "-j", string(KubeMarkMasqChain))...)

// Allow traffic for external IPs that does not come from a bridge (i.e. not from a container)

// nor from a local process to be forwarded to the service.

// This rule roughly translates to "all traffic from off-machine".

// This is imperfect in the face of network plugins that might not use a bridge, but we can revisit that later.

externalTrafficOnlyArgs := append(args,

"-m", "physdev", "!", "--physdev-is-in",

"-m", "addrtype", "!", "--src-type", "LOCAL")

writeLine(proxier.natRules, append(externalTrafficOnlyArgs, "-j", string(svcChain))...)

dstLocalOnlyArgs := append(args, "-m", "addrtype", "--dst-type", "LOCAL")

// Allow traffic bound for external IPs that happen to be recognized as local IPs to stay local.

// This covers cases like GCE load-balancers which get added to the local routing table.

writeLine(proxier.natRules, append(dstLocalOnlyArgs, "-j", string(svcChain))...)

} else {

writeLine(proxier.filterRules,

"-A", string(kubeExternalServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s has no endpoints"`, svcNameString),

"-m", protocol, "-p", protocol,

"-d", utilproxy.ToCIDR(net.ParseIP(externalIP)),

"--dport", strconv.Itoa(svcInfo.Port),

"-j", "REJECT",

)

}

}

// Capture load-balancer ingress.

if hasEndpoints {

fwChain := svcInfo.serviceFirewallChainName

for _, ingress := range svcInfo.LoadBalancerStatus.Ingress {

if ingress.IP != "" {

// create service firewall chain

if chain, ok := existingNATChains[fwChain]; ok {

writeLine(proxier.natChains, chain)

} else {

writeLine(proxier.natChains, utiliptables.MakeChainLine(fwChain))

}

activeNATChains[fwChain] = true

// The service firewall rules are created based on ServiceSpec.loadBalancerSourceRanges field.

// This currently works for loadbalancers that preserves source ips.

// For loadbalancers which direct traffic to service NodePort, the firewall rules will not apply.

args = append(args[:0],

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s loadbalancer IP"`, svcNameString),

"-m", protocol, "-p", protocol,

"-d", utilproxy.ToCIDR(net.ParseIP(ingress.IP)),

"--dport", strconv.Itoa(svcInfo.Port),

)

// jump to service firewall chain

writeLine(proxier.natRules, append(args, "-j", string(fwChain))...)

args = append(args[:0],

"-A", string(fwChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s loadbalancer IP"`, svcNameString),

)

// Each source match rule in the FW chain may jump to either the SVC or the XLB chain

chosenChain := svcXlbChain

// If we are proxying globally, we need to masquerade in case we cross nodes.

// If we are proxying only locally, we can retain the source IP.

if !svcInfo.OnlyNodeLocalEndpoints {

writeLine(proxier.natRules, append(args, "-j", string(KubeMarkMasqChain))...)

chosenChain = svcChain

}

if len(svcInfo.LoadBalancerSourceRanges) == 0 {

// allow all sources, so jump directly to the KUBE-SVC or KUBE-XLB chain

writeLine(proxier.natRules, append(args, "-j", string(chosenChain))...)

} else {

// firewall filter based on each source range

allowFromNode := false

for _, src := range svcInfo.LoadBalancerSourceRanges {

writeLine(proxier.natRules, append(args, "-s", src, "-j", string(chosenChain))...)

// ignore error because it has been validated

_, cidr, _ := net.ParseCIDR(src)

if cidr.Contains(proxier.nodeIP) {

allowFromNode = true

}

}

// generally, ip route rule was added to intercept request to loadbalancer vip from the

// loadbalancer's backend hosts. In this case, request will not hit the loadbalancer but loop back directly.

// Need to add the following rule to allow request on host.

if allowFromNode {

writeLine(proxier.natRules, append(args, "-s", utilproxy.ToCIDR(net.ParseIP(ingress.IP)), "-j", string(chosenChain))...)

}

}

// If the packet was able to reach the end of firewall chain, then it did not get DNATed.

// It means the packet cannot go thru the firewall, then mark it for DROP

writeLine(proxier.natRules, append(args, "-j", string(KubeMarkDropChain))...)

}

}

}

// FIXME: do we need REJECT rules for load-balancer ingress if !hasEndpoints?

// Capture nodeports. If we had more than 2 rules it might be

// worthwhile to make a new per-service chain for nodeport rules, but

// with just 2 rules it ends up being a waste and a cognitive burden.

if svcInfo.NodePort != 0 {

// Hold the local port open so no other process can open it

// (because the socket might open but it would never work).

addresses, err := utilproxy.GetNodeAddresses(proxier.nodePortAddresses, proxier.networkInterfacer)

if err != nil {

glog.Errorf("Failed to get node ip address matching nodeport cidr: %v", err)

continue

}

lps := make([]utilproxy.LocalPort, 0)

for address := range addresses {

lp := utilproxy.LocalPort{

Description: "nodePort for " + svcNameString,

IP: address,

Port: svcInfo.NodePort,

Protocol: protocol,

}

if utilproxy.IsZeroCIDR(address) {

// Empty IP address means all

lp.IP = ""

lps = append(lps, lp)

// If we encounter a zero CIDR, then there is no point in processing the rest of the addresses.

break

}

lps = append(lps, lp)

}

// For ports on node IPs, open the actual port and hold it.

for _, lp := range lps {

if proxier.portsMap[lp] != nil {

glog.V(4).Infof("Port %s was open before and is still needed", lp.String())

replacementPortsMap[lp] = proxier.portsMap[lp]

} else {

socket, err := proxier.portMapper.OpenLocalPort(&lp)

if err != nil {

glog.Errorf("can't open %s, skipping this nodePort: %v", lp.String(), err)

continue

}

if lp.Protocol == "udp" {

// TODO: We might have multiple services using the same port, and this will clear conntrack for all of them.

// This is very low impact. The NodePort range is intentionally obscure, and unlikely to actually collide with real Services.

// This only affects UDP connections, which are not common.

// See issue: https://github.com/kubernetes/kubernetes/issues/49881

err := conntrack.ClearEntriesForPort(proxier.exec, lp.Port, isIPv6, v1.ProtocolUDP)

if err != nil {

glog.Errorf("Failed to clear udp conntrack for port %d, error: %v", lp.Port, err)

}

}

replacementPortsMap[lp] = socket

}

}

if hasEndpoints {

args = append(args[:0],

"-A", string(kubeNodePortsChain),

"-m", "comment", "--comment", svcNameString,

"-m", protocol, "-p", protocol,

"--dport", strconv.Itoa(svcInfo.NodePort),

)

if !svcInfo.OnlyNodeLocalEndpoints {

// Nodeports need SNAT, unless they're local.

writeLine(proxier.natRules, append(args, "-j", string(KubeMarkMasqChain))...)

// Jump to the service chain.

writeLine(proxier.natRules, append(args, "-j", string(svcChain))...)

} else {

// TODO: Make all nodePorts jump to the firewall chain.

// Currently we only create it for loadbalancers (#33586).

// Fix localhost martian source error

loopback := "127.0.0.0/8"

if isIPv6 {

loopback = "::1/128"

}

writeLine(proxier.natRules, append(args, "-s", loopback, "-j", string(KubeMarkMasqChain))...)

writeLine(proxier.natRules, append(args, "-j", string(svcXlbChain))...)

}

} else {

writeLine(proxier.filterRules,

"-A", string(kubeExternalServicesChain),

"-m", "comment", "--comment", fmt.Sprintf(`"%s has no endpoints"`, svcNameString),

"-m", "addrtype", "--dst-type", "LOCAL",

"-m", protocol, "-p", protocol,

"--dport", strconv.Itoa(svcInfo.NodePort),

"-j", "REJECT",

)

}

}

if !hasEndpoints {

continue

}

// Generate the per-endpoint chains. We do this in multiple passes so we

// can group rules together.

// These two slices parallel each other - keep in sync

endpoints = endpoints[:0]

endpointChains = endpointChains[:0]

var endpointChain utiliptables.Chain

for _, ep := range proxier.endpointsMap[svcName] {

epInfo, ok := ep.(*endpointsInfo)

if !ok {

glog.Errorf("Failed to cast endpointsInfo %q", ep.String())

continue

}

endpoints = append(endpoints, epInfo)

endpointChain = epInfo.endpointChain(svcNameString, protocol)

endpointChains = append(endpointChains, endpointChain)

// Create the endpoint chain, retaining counters if possible.

if chain, ok := existingNATChains[utiliptables.Chain(endpointChain)]; ok {

writeLine(proxier.natChains, chain)

} else {

writeLine(proxier.natChains, utiliptables.MakeChainLine(endpointChain))

}

activeNATChains[endpointChain] = true

}

// First write session affinity rules, if applicable.

if svcInfo.SessionAffinityType == api.ServiceAffinityClientIP {

for _, endpointChain := range endpointChains {

writeLine(proxier.natRules,

"-A", string(svcChain),

"-m", "comment", "--comment", svcNameString,

"-m", "recent", "--name", string(endpointChain),

"--rcheck", "--seconds", strconv.Itoa(svcInfo.StickyMaxAgeSeconds), "--reap",

"-j", string(endpointChain))

}

}

// Now write loadbalancing & DNAT rules.

n := len(endpointChains)

for i, endpointChain := range endpointChains {

epIP := endpoints[i].IP()

if epIP == "" {

// Error parsing this endpoint has been logged. Skip to next endpoint.

continue

}

// Balancing rules in the per-service chain.

args = append(args[:0], []string{

"-A", string(svcChain),

"-m", "comment", "--comment", svcNameString,

}...)

if i < (n - 1) {

// Each rule is a probabilistic match.

args = append(args,

"-m", "statistic",

"--mode", "random",

"--probability", proxier.probability(n-i))

}

// The final (or only if n == 1) rule is a guaranteed match.

args = append(args, "-j", string(endpointChain))

writeLine(proxier.natRules, args...)

// Rules in the per-endpoint chain.

args = append(args[:0],

"-A", string(endpointChain),

"-m", "comment", "--comment", svcNameString,

)

// Handle traffic that loops back to the originator with SNAT.

writeLine(proxier.natRules, append(args,

"-s", utilproxy.ToCIDR(net.ParseIP(epIP)),

"-j", string(KubeMarkMasqChain))...)

// Update client-affinity lists.

if svcInfo.SessionAffinityType == api.ServiceAffinityClientIP {

args = append(args, "-m", "recent", "--name", string(endpointChain), "--set")

}

// DNAT to final destination.

args = append(args, "-m", protocol, "-p", protocol, "-j", "DNAT", "--to-destination", endpoints[i].Endpoint)

writeLine(proxier.natRules, args...)

}

// The logic below this applies only if this service is marked as OnlyLocal

if !svcInfo.OnlyNodeLocalEndpoints {

continue

}

// Now write ingress loadbalancing & DNAT rules only for services that request OnlyLocal traffic.

// TODO - This logic may be combinable with the block above that creates the svc balancer chain

localEndpoints := make([]*endpointsInfo, 0)

localEndpointChains := make([]utiliptables.Chain, 0)

for i := range endpointChains {

if endpoints[i].IsLocal {

// These slices parallel each other; must be kept in sync

localEndpoints = append(localEndpoints, endpoints[i])

localEndpointChains = append(localEndpointChains, endpointChains[i])

}

}

// First rule in the chain redirects all pod -> external VIP traffic to the

// Service's ClusterIP instead. This happens whether or not we have local

// endpoints; only if clusterCIDR is specified

if len(proxier.clusterCIDR) > 0 {

args = append(args[:0],

"-A", string(svcXlbChain),

"-m", "comment", "--comment",

`"Redirect pods trying to reach external loadbalancer VIP to clusterIP"`,

"-s", proxier.clusterCIDR,

"-j", string(svcChain),

)

writeLine(proxier.natRules, args...)

}

numLocalEndpoints := len(localEndpointChains)

if numLocalEndpoints == 0 {

// Blackhole all traffic since there are no local endpoints

args = append(args[:0],

"-A", string(svcXlbChain),

"-m", "comment", "--comment",

fmt.Sprintf(`"%s has no local endpoints"`, svcNameString),

"-j",

string(KubeMarkDropChain),

)

writeLine(proxier.natRules, args...)

} else {

// First write session affinity rules only over local endpoints, if applicable.

if svcInfo.SessionAffinityType == api.ServiceAffinityClientIP {

for _, endpointChain := range localEndpointChains {

writeLine(proxier.natRules,

"-A", string(svcXlbChain),

"-m", "comment", "--comment", svcNameString,

"-m", "recent", "--name", string(endpointChain),

"--rcheck", "--seconds", strconv.Itoa(svcInfo.StickyMaxAgeSeconds), "--reap",

"-j", string(endpointChain))

}

}

// Setup probability filter rules only over local endpoints

for i, endpointChain := range localEndpointChains {

// Balancing rules in the per-service chain.

args = append(args[:0],

"-A", string(svcXlbChain),

"-m", "comment", "--comment",

fmt.Sprintf(`"Balancing rule %d for %s"`, i, svcNameString),

)

if i < (numLocalEndpoints - 1) {

// Each rule is a probabilistic match.

args = append(args,

"-m", "statistic",

"--mode", "random",

"--probability", proxier.probability(numLocalEndpoints-i))

}

// The final (or only if n == 1) rule is a guaranteed match.

args = append(args, "-j", string(endpointChain))

writeLine(proxier.natRules, args...)

}

}

}

// Delete chains no longer in use.

for chain := range existingNATChains {

if !activeNATChains[chain] {

chainString := string(chain)

if !strings.HasPrefix(chainString, "KUBE-SVC-") && !strings.HasPrefix(chainString, "KUBE-SEP-") && !strings.HasPrefix(chainString, "KUBE-FW-") && !strings.HasPrefix(chainString, "KUBE-XLB-") {

// Ignore chains that aren't ours.

continue

}

// We must (as per iptables) write a chain-line for it, which has

// the nice effect of flushing the chain. Then we can remove the

// chain.

writeLine(proxier.natChains, existingNATChains[chain])

writeLine(proxier.natRules, "-X", chainString)

}

}

// Finally, tail-call to the nodeports chain. This needs to be after all

// other service portal rules.

addresses, err := utilproxy.GetNodeAddresses(proxier.nodePortAddresses, proxier.networkInterfacer)

if err != nil {

glog.Errorf("Failed to get node ip address matching nodeport cidr")

} else {

isIPv6 := proxier.iptables.IsIpv6()

for address := range addresses {

// TODO(thockin, m1093782566): If/when we have dual-stack support we will want to distinguish v4 from v6 zero-CIDRs.

if utilproxy.IsZeroCIDR(address) {

args = append(args[:0],

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", `"kubernetes service nodeports; NOTE: this must be the last rule in this chain"`,

"-m", "addrtype", "--dst-type", "LOCAL",

"-j", string(kubeNodePortsChain))

writeLine(proxier.natRules, args...)

// Nothing else matters after the zero CIDR.

break

}

// Ignore IP addresses with incorrect version

if isIPv6 && !utilnet.IsIPv6String(address) || !isIPv6 && utilnet.IsIPv6String(address) {

glog.Errorf("IP address %s has incorrect IP version", address)

continue

}

// create nodeport rules for each IP one by one

args = append(args[:0],

"-A", string(kubeServicesChain),

"-m", "comment", "--comment", `"kubernetes service nodeports; NOTE: this must be the last rule in this chain"`,

"-d", address,

"-j", string(kubeNodePortsChain))

writeLine(proxier.natRules, args...)

}

}

// If the masqueradeMark has been added then we want to forward that same

// traffic, this allows NodePort traffic to be forwarded even if the default

// FORWARD policy is not accept.

writeLine(proxier.filterRules,

"-A", string(kubeForwardChain),

"-m", "comment", "--comment", `"kubernetes forwarding rules"`,

"-m", "mark", "--mark", proxier.masqueradeMark,

"-j", "ACCEPT",

)

// The following rules can only be set if clusterCIDR has been defined.

if len(proxier.clusterCIDR) != 0 {

// The following two rules ensure the traffic after the initial packet

// accepted by the "kubernetes forwarding rules" rule above will be

// accepted, to be as specific as possible the traffic must be sourced

// or destined to the clusterCIDR (to/from a pod).

writeLine(proxier.filterRules,

"-A", string(kubeForwardChain),

"-s", proxier.clusterCIDR,

"-m", "comment", "--comment", `"kubernetes forwarding conntrack pod source rule"`,

"-m", "conntrack",

"--ctstate", "RELATED,ESTABLISHED",

"-j", "ACCEPT",

)

writeLine(proxier.filterRules,

"-A", string(kubeForwardChain),

"-m", "comment", "--comment", `"kubernetes forwarding conntrack pod destination rule"`,

"-d", proxier.clusterCIDR,

"-m", "conntrack",

"--ctstate", "RELATED,ESTABLISHED",

"-j", "ACCEPT",

)

}

// Write the end-of-table markers.

writeLine(proxier.filterRules, "COMMIT")

writeLine(proxier.natRules, "COMMIT")

// Sync rules.

// NOTE: NoFlushTables is used so we don't flush non-kubernetes chains in the table

proxier.iptablesData.Reset()

proxier.iptablesData.Write(proxier.filterChains.Bytes())

proxier.iptablesData.Write(proxier.filterRules.Bytes())

proxier.iptablesData.Write(proxier.natChains.Bytes())

proxier.iptablesData.Write(proxier.natRules.Bytes())

glog.V(5).Infof("Restoring iptables rules: %s", proxier.iptablesData.Bytes())

err = proxier.iptables.RestoreAll(proxier.iptablesData.Bytes(), utiliptables.NoFlushTables, utiliptables.RestoreCounters)

if err != nil {

glog.Errorf("Failed to execute iptables-restore: %v", err)

// Revert new local ports.

glog.V(2).Infof("Closing local ports after iptables-restore failure")

utilproxy.RevertPorts(replacementPortsMap, proxier.portsMap)

return

}

// Close old local ports and save new ones.

for k, v := range proxier.portsMap {

if replacementPortsMap[k] == nil {

v.Close()

}

}

proxier.portsMap = replacementPortsMap

// Update healthz timestamp.

if proxier.healthzServer != nil {

proxier.healthzServer.UpdateTimestamp()

}

// Update healthchecks. The endpoints list might include services that are

// not "OnlyLocal", but the services list will not, and the healthChecker

// will just drop those endpoints.

if err := proxier.healthChecker.SyncServices(serviceUpdateResult.HCServiceNodePorts); err != nil {

glog.Errorf("Error syncing healtcheck services: %v", err)

}

if err := proxier.healthChecker.SyncEndpoints(endpointUpdateResult.HCEndpointsLocalIPSize); err != nil {

glog.Errorf("Error syncing healthcheck endoints: %v", err)

}

// Finish housekeeping.

// TODO: these could be made more consistent.

for _, svcIP := range staleServices.UnsortedList() {

if err := conntrack.ClearEntriesForIP(proxier.exec, svcIP, v1.ProtocolUDP); err != nil {

glog.Errorf("Failed to delete stale service IP %s connections, error: %v", svcIP, err)

}

}

proxier.deleteEndpointConnections(endpointUpdateResult.StaleEndpoints)

}
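The balancing rules written in the function above rely on the iptables `statistic` match: rule i of n matches with probability 1/(n-i), and the last rule matches unconditionally, so every endpoint ends up with an equal share of new connections. The following is a minimal sketch of that arithmetic, not the upstream implementation. It assumes `proxier.probability(n)` formats 1/n with five decimal places, and the chain names (`KUBE-XLB-EXAMPLE`, `KUBE-SEP-A`, ...) and the helpers `balancingRules` and `effectiveShare` are hypothetical names used only for illustration.

```go
package main

import "fmt"

// computeProbability formats 1/n with five decimals (assumed format of
// the value passed to --probability).
func computeProbability(n int) string {
	return fmt.Sprintf("%0.5f", 1.0/float64(n))
}

// balancingRules builds one rule per endpoint chain. Rule i (0-based) of n
// carries a probabilistic match of 1/(n-i); the final rule has no match
// and therefore always fires.
func balancingRules(svcChain string, endpointChains []string) []string {
	n := len(endpointChains)
	rules := make([]string, 0, n)
	for i, ep := range endpointChains {
		rule := "-A " + svcChain
		if i < n-1 {
			rule += " -m statistic --mode random --probability " + computeProbability(n-i)
		}
		rule += " -j " + ep
		rules = append(rules, rule)
	}
	return rules
}

// effectiveShare returns the overall chance that a packet is handled by
// rule i: it must fall through every earlier rule and then match rule i.
func effectiveShare(n, i int) float64 {
	reach := 1.0
	for j := 0; j < i; j++ {
		reach *= 1.0 - 1.0/float64(n-j)
	}
	return reach / float64(n-i)
}

func main() {
	chains := []string{"KUBE-SEP-A", "KUBE-SEP-B", "KUBE-SEP-C"} // hypothetical names
	for i, r := range balancingRules("KUBE-XLB-EXAMPLE", chains) {
		fmt.Printf("%s   (effective share %.4f)\n", r, effectiveShare(len(chains), i))
	}
}
```

With three endpoints the per-rule probabilities are 1/3, 1/2 and "always", which works out to an effective share of 1/3 for each endpoint.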

The implementation is fairly intricate, and the details only become clear on a careful read, so it is not analyzed line by line here.
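One part that is easy to isolate is the final step of the function: the four buffers are concatenated in the order filter chains, filter rules, NAT chains, NAT rules, and the result is handed to iptables-restore in a single call. The sketch below reproduces only that assembly. It assumes each chain buffer starts with its table header (`*filter`, `*nat`), which is written earlier in the function and is not part of the excerpt; the `writeLine` helper is a re-implementation for illustration, and the mark value `0x4000/0x4000` and the function names `buildRestorePayload` and `examplePayload` are hypothetical.

```go
package main

import (
	"bytes"
	"fmt"
)

// writeLine joins the words with single spaces and ends the line with '\n'.
func writeLine(buf *bytes.Buffer, words ...string) {
	for i, w := range words {
		buf.WriteString(w)
		if i < len(words)-1 {
			buf.WriteByte(' ')
		} else {
			buf.WriteByte('\n')
		}
	}
}

// buildRestorePayload concatenates the buffers in the same order as the
// function above: filter chains, filter rules, NAT chains, NAT rules.
func buildRestorePayload(filterChains, filterRules, natChains, natRules *bytes.Buffer) []byte {
	var data bytes.Buffer
	data.Write(filterChains.Bytes())
	data.Write(filterRules.Bytes())
	data.Write(natChains.Bytes())
	data.Write(natRules.Bytes())
	return data.Bytes()
}

// examplePayload builds a tiny two-table payload with illustrative rules.
func examplePayload() string {
	var filterChains, filterRules, natChains, natRules bytes.Buffer

	writeLine(&filterChains, "*filter")
	writeLine(&filterChains, ":KUBE-FORWARD", "-", "[0:0]")
	writeLine(&filterRules, "-A", "KUBE-FORWARD", "-m", "mark", "--mark", "0x4000/0x4000", "-j", "ACCEPT")
	writeLine(&filterRules, "COMMIT") // end-of-table marker for *filter

	writeLine(&natChains, "*nat")
	writeLine(&natChains, ":KUBE-SERVICES", "-", "[0:0]")
	writeLine(&natRules, "-A", "KUBE-SERVICES", "-m", "addrtype", "--dst-type", "LOCAL", "-j", "KUBE-NODEPORTS")
	writeLine(&natRules, "COMMIT") // end-of-table marker for *nat

	return string(buildRestorePayload(&filterChains, &filterRules, &natChains, &natRules))
}

func main() {
	fmt.Print(examplePayload())
}
```

Because each table's `COMMIT` line lives at the end of its rules buffer, the concatenation order is what keeps every table section self-contained in the final payload.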

Tracing the code against the following iptables chains is enough to work out its logic:

const (

// the minimum iptables version required by this proxier

iptablesMinVersion = utiliptables.MinCheckVersion

// the services chain

kubeServicesChain utiliptables.Chain = "KUBE-SERVICES"

// the external services chain

kubeExternalServicesChain utiliptables.Chain = "KUBE-EXTERNAL-SERVICES"

// the nodeports chain

kubeNodePortsChain utiliptables.Chain = "KUBE-NODEPORTS"

// the kubernetes postrouting chain

kubePostroutingChain utiliptables.Chain = "KUBE-POSTROUTING"

// the mark-for-masquerade chain

KubeMarkMasqChain utiliptables.Chain = "KUBE-MARK-MASQ"

// the mark-for-drop chain

KubeMarkDropChain utiliptables.Chain = "KUBE-MARK-DROP"

// the kubernetes forward chain

kubeForwardChain utiliptables.Chain = "KUBE-FORWARD"

)
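Besides these fixed chains, the cleanup loop in `syncProxyRules` also recognizes dynamically named chains by the prefixes `KUBE-SVC-`, `KUBE-SEP-`, `KUBE-FW-` and `KUBE-XLB-`. The sketch below shows one way such per-service names can be derived and recognized. It assumes the suffix is the first 16 characters of the base32-encoded SHA-256 of the service port name plus protocol; that detail is not shown in the code above, so treat it as an assumption. The function names and the service name `default/nginx:http` are hypothetical.

```go
package main

import (
	"crypto/sha256"
	"encoding/base32"
	"fmt"
	"strings"
)

// portProtoHash returns a short, stable identifier for a service port
// (assumed scheme: SHA-256, base32-encoded, truncated to 16 characters).
func portProtoHash(servicePortName, protocol string) string {
	hash := sha256.Sum256([]byte(servicePortName + protocol))
	encoded := base32.StdEncoding.EncodeToString(hash[:])
	return encoded[:16]
}

// serviceChainName prepends one of the per-service prefixes to the hash.
func serviceChainName(prefix, servicePortName, protocol string) string {
	return prefix + portProtoHash(servicePortName, protocol)
}

// isKubeManagedChain applies the same prefix test as the cleanup loop:
// only chains with these prefixes are candidates for deletion.
func isKubeManagedChain(chain string) bool {
	for _, p := range []string{"KUBE-SVC-", "KUBE-SEP-", "KUBE-FW-", "KUBE-XLB-"} {
		if strings.HasPrefix(chain, p) {
			return true
		}
	}
	return false
}

func main() {
	svc := serviceChainName("KUBE-SVC-", "default/nginx:http", "tcp")
	xlb := serviceChainName("KUBE-XLB-", "default/nginx:http", "tcp")
	for _, chain := range []string{svc, xlb, "KUBE-SERVICES", "DOCKER"} {
		fmt.Printf("%-28s managed per-service chain: %v\n", chain, isKubeManagedChain(chain))
	}
}
```

A fixed-length hash keeps the full name at 25 characters, which stays under the chain-name length limit that iptables enforces, while remaining stable across sync runs for the same service port.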

end

References:

kube-proxy (official)

ipvs

kube-proxy source code analysis