[DL Notes] Introduction to Convolutional Neural Networks

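1. Preface

This post is a brief summary of what I learned from 《TensorFlow实战》 by Huang Wenjian (黄文坚) and Tang Yuan (唐源). The book gave me a much better understanding of deep learning, and I have organized some of its material here to share. If you spot any mistakes, please point them out in the comments so that I can correct them. Thanks!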

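2. Introduction to Convolutional Neural Networks

The idea behind convolutional neural networks traces back to the receptive field, a concept from neuroscience: each visual neuron responds only to a small region of the visual field, much as a convolution kernel in a CNN processes only a small patch of the image. The neocognitron was proposed later. It contains two kinds of neurons: S-cells, which extract features and correspond to the filtering performed by convolution kernels in today's CNNs, and C-cells, which tolerate deformation and correspond to activation functions, pooling, and similar operations in today's CNNs.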

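A typical CNN is built from several convolutional layers, and each convolutional layer performs the following operations:

1) The image is filtered by a set of different convolution kernels and a bias is added, which extracts local features.

2) The filter output is passed through an activation function, most commonly ReLU.

3) The activation output is pooled. Two kinds of pooling are common: max pooling and average pooling.

Other operations, such as LRN (local response normalization), can be added as well.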
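The three steps above can be sketched in plain NumPy for a single-channel image and a single kernel. This is only an illustration under stated assumptions: the helper names `conv2d_valid`, `relu`, and `max_pool_2x2`, the toy 6x6 image, and the 3x3 kernel are all made up for this example, and the convolution uses no padding, unlike the "SAME" padding in the TensorFlow code later in this post.

```python
import numpy as np

def conv2d_valid(image, kernel, bias=0.0):
    """Slide `kernel` over a 2-D `image` (stride 1, no padding) and add `bias`."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Element-wise product of the patch and the kernel, summed, plus bias
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel) + bias
    return out

def relu(x):
    """Step 2: keep positive values, set negative values to zero."""
    return np.maximum(x, 0.0)

def max_pool_2x2(x):
    """Step 3: non-overlapping 2x2 max pooling; odd trailing rows/columns are dropped."""
    h, w = x.shape[0] // 2 * 2, x.shape[1] // 2 * 2
    return x[:h, :w].reshape(h // 2, 2, w // 2, 2).max(axis=(1, 3))

image = np.arange(36, dtype=float).reshape(6, 6)   # toy 6x6 "image" (hypothetical)
kernel = np.array([[-1.0, 0.0, 1.0]] * 3)          # hypothetical horizontal-gradient filter
feature_map = conv2d_valid(image, kernel, bias=0.5)
activated = relu(feature_map)
pooled = max_pool_2x2(activated)
print(feature_map.shape, pooled.shape)
```

A 3x3 kernel without padding shrinks the 6x6 input to 4x4, and 2x2 pooling then halves each side. A real convolutional layer repeats this for many kernels and sums over the input channels.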

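Fully connected layers introduce a very large number of weights, so a CNN has to keep the number of trainable weights down, which it does through local connectivity and weight sharing in the convolutional layers. There are two goals: 1. lowering the computational cost; 2. avoiding overfitting, since too many connections tend to overfit and fewer connections help the model generalize. The dropout that usually follows a fully connected layer serves the same purpose: during training it randomly sets a fraction of the activations to zero, which temporarily removes the corresponding connections.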
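To make the dropout step concrete, here is a minimal NumPy sketch of "inverted" dropout, the variant in which surviving activations are scaled by 1 / keep_prob so that their expected value is unchanged (this matches the documented behavior of `tf.nn.dropout` with a keep probability). The function name `dropout`, the array sizes, and the fixed seed are assumptions made for illustration only.

```python
import numpy as np

def dropout(x, keep_prob, rng):
    """Inverted dropout: keep each element with probability `keep_prob`,
    zero the rest, and scale the survivors by 1 / keep_prob."""
    mask = rng.random(x.shape) < keep_prob
    return np.where(mask, x / keep_prob, 0.0)

rng = np.random.default_rng(0)        # fixed seed, only for reproducibility
activations = np.ones((1000, 100))    # hypothetical layer output
dropped = dropout(activations, keep_prob=0.5, rng=rng)

kept_fraction = np.mean(dropped != 0)
print(kept_fraction)      # close to 0.5
print(dropped.mean())     # close to 1.0, because survivors are scaled up
```

With `keep_prob=1.0` the mask keeps everything and the input passes through unchanged, which is why a keep probability of 1.0 is fed at test time.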

3. Popular CNN Architectures

This post only lists these architectures. Later posts will cover each one, together with its TensorFlow implementation, in detail.

LeNet

AlexNet

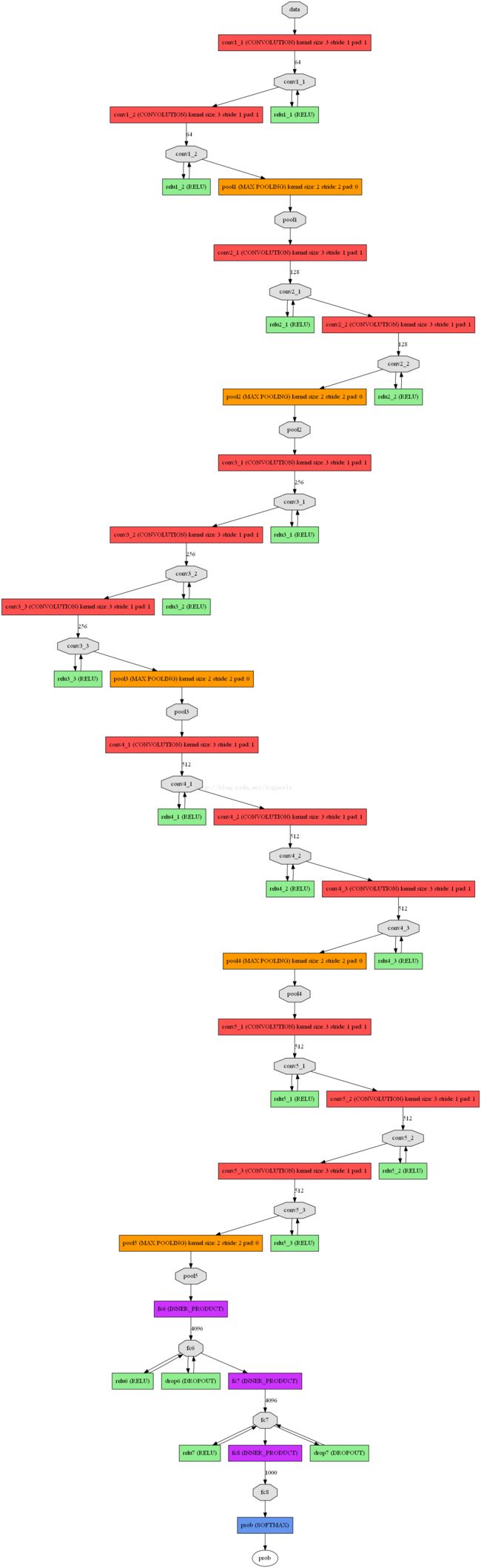

VGG

GoogLeNet

ResNet

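4. A Basic Convolutional Neural Network in TensorFlow

Below, a hand-designed network classifies images from the MNIST dataset. The network consists of three convolutional layers (Conv) and two fully connected layers. The code is as follows: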

Import the required libraries and build the graph:

    import tensorflow as tf
    import numpy as np
    from tensorflow.examples.tutorials.mnist import input_data

    # Load MNIST with one-hot labels
    mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)
    trX, trY, teX, teY = (mnist.train.images, mnist.train.labels,
                          mnist.test.images, mnist.test.labels)
    # Reshape the flat 784-vectors to [batch, height, width, channels]
    trX = trX.reshape(-1, 28, 28, 1)
    teX = teX.reshape(-1, 28, 28, 1)

    X = tf.placeholder("float", [None, 28, 28, 1])
    Y = tf.placeholder("float", [None, 10])

    def init_weights(shape):
        return tf.Variable(tf.random_normal(shape, stddev=0.1))

    # Note: this simple model uses weights only, without bias terms
    w = init_weights([3, 3, 1, 32])          # conv1: 3x3 kernels, 1 -> 32 channels
    w2 = init_weights([3, 3, 32, 64])        # conv2: 3x3 kernels, 32 -> 64 channels
    w3 = init_weights([3, 3, 64, 128])       # conv3: 3x3 kernels, 64 -> 128 channels
    w4 = init_weights([128 * 4 * 4, 625])    # fully connected: 2048 -> 625
    w_o = init_weights([625, 10])            # output layer: 625 -> 10 classes

    def model(X, w, w2, w3, w4, w_o, p_keep_conv, p_keep_hidden):
        # Conv block 1: conv -> ReLU -> 2x2 max pool -> dropout (28x28 -> 14x14)
        l1a = tf.nn.relu(tf.nn.conv2d(X, w, strides=[1, 1, 1, 1], padding="SAME"))
        l1 = tf.nn.max_pool(l1a, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")
        l1 = tf.nn.dropout(l1, p_keep_conv)

        # Conv block 2 (14x14 -> 7x7)
        l2a = tf.nn.relu(tf.nn.conv2d(l1, w2, strides=[1, 1, 1, 1], padding="SAME"))
        l2 = tf.nn.max_pool(l2a, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")
        l2 = tf.nn.dropout(l2, p_keep_conv)

        # Conv block 3 (7x7 -> 4x4), then flatten to [batch, 128 * 4 * 4]
        l3a = tf.nn.relu(tf.nn.conv2d(l2, w3, strides=[1, 1, 1, 1], padding="SAME"))
        l3 = tf.nn.max_pool(l3a, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")
        l3 = tf.reshape(l3, [-1, w4.get_shape().as_list()[0]])
        l3 = tf.nn.dropout(l3, p_keep_conv)

        # Fully connected hidden layer
        l4 = tf.nn.relu(tf.matmul(l3, w4))
        l4 = tf.nn.dropout(l4, p_keep_hidden)

        # Output layer: returns logits; softmax is applied inside the loss
        pyx = tf.matmul(l4, w_o)
        return pyx

    # Keep probabilities are fed at run time (below 1.0 for training, 1.0 for testing)
    p_keep_conv = tf.placeholder("float")
    p_keep_hidden = tf.placeholder("float")

    py_x = model(X, w, w2, w3, w4, w_o, p_keep_conv, p_keep_hidden)
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=py_x, labels=Y))
    train_op = tf.train.RMSPropOptimizer(0.001, 0.9).minimize(cost)
    predict_op = tf.argmax(py_x, 1)
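The `128*4*4` in the shape of `w4` can be checked by hand. A stride-1 convolution with "SAME" padding keeps the spatial size, and a stride-2 pooling layer with "SAME" padding outputs ceil(size / 2). The small pure-Python sketch below traces those sizes and counts the weights per part of the network. The helper name `same_pool_out` is made up for this example, and the counts assume the weight shapes listed above, with no bias terms.

```python
import math

def same_pool_out(size, stride=2):
    """Output size of a "SAME"-padded pooling layer: ceil(size / stride)."""
    return math.ceil(size / stride)

size = 28                      # MNIST images are 28x28
channels = [1, 32, 64, 128]    # input channels, then the outputs of the three conv layers
conv_params = 0
for c_in, c_out in zip(channels, channels[1:]):
    conv_params += 3 * 3 * c_in * c_out   # 3x3 kernels, no bias in this model
    size = same_pool_out(size)            # the conv keeps the size, the pooling halves it
    print(c_out, "channels,", size, "x", size)

flat = channels[-1] * size * size         # input width of the first fully connected layer
fc_params = flat * 625 + 625 * 10         # w4 plus w_o
print(flat, conv_params, fc_params)
```

The spatial size goes 28, 14, 7, 4, so the flattened vector has 128 * 4 * 4 = 2048 entries. The two fully connected layers hold far more weights than the three convolutional layers combined, which is the imbalance that the weight-reduction argument in section 2 is about.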

Set up the session and run:

    batch_size = 128
    test_size = 256

    with tf.Session() as sess:
        tf.global_variables_initializer().run()

        for i in range(1000):
            # (start, end) index pairs of the full mini-batches in one epoch
            training_batch = zip(range(0, len(trX), batch_size),
                                 range(batch_size, len(trX) + 1, batch_size))
            for start, end in training_batch:
                sess.run(train_op, feed_dict={X: trX[start:end], Y: trY[start:end],
                                              p_keep_conv: 0.8, p_keep_hidden: 0.5})

            # After each epoch, measure accuracy on a random subset of the
            # test set with dropout disabled (keep probability 1.0)
            test_indices = np.arange(len(teX))
            np.random.shuffle(test_indices)
            test_indices = test_indices[0:test_size]
            print(i, np.mean(np.argmax(teY[test_indices], axis=1) ==
                             sess.run(predict_op, feed_dict={X: teX[test_indices],
                                                             p_keep_conv: 1.0,
                                                             p_keep_hidden: 1.0})))
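The zip-of-ranges idiom used for `training_batch` is easy to misread, so here is a small pure-Python sketch of the index pairs it produces. The function name `batch_ranges` is made up for this example, and the sample count of 55000 assumes the default training split returned by `input_data.read_data_sets`.

```python
def batch_ranges(n_samples, batch_size):
    """Return the (start, end) index pairs produced by the zip-of-ranges idiom."""
    return list(zip(range(0, n_samples, batch_size),
                    range(batch_size, n_samples + 1, batch_size)))

batches = batch_ranges(55000, 128)   # 55000 assumes the default MNIST training split
print(len(batches))                  # number of full batches per epoch
print(batches[0], batches[-1])       # first and last (start, end) pair
print(55000 - batches[-1][1])        # samples left over at the end of each epoch
```

Because `zip` stops at the shorter of the two ranges, only full batches are produced, and the trailing samples that do not fill a batch are skipped in every epoch. That is harmless here, but it is worth knowing when the dataset is small relative to the batch size.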

Environment: Python 3.6, TensorFlow 1.1.0