Python Web Crawler: Hands-On Code

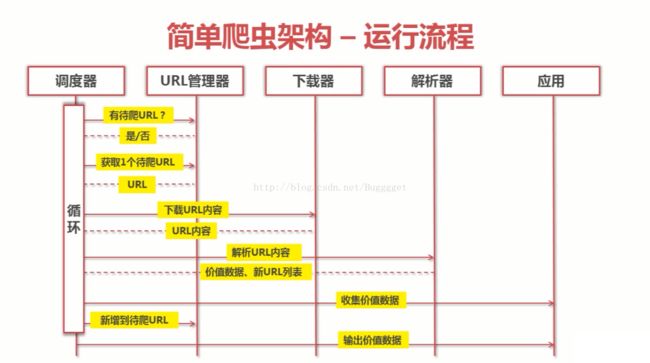

Crawler execution flow

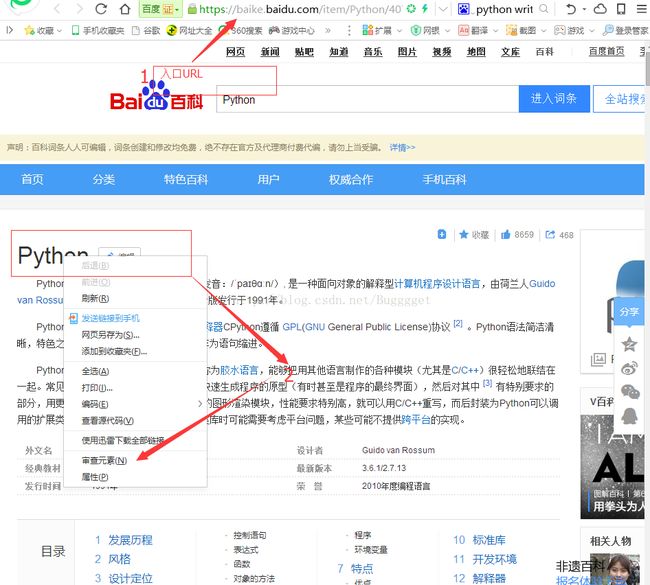

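This walkthrough crawls the Baidu Baike entry you get by searching for "python". The URL and the page markup can change over time, so if the crawl fails, the likely cause is that the URL or the attributes being scraped have changed.

The first step in crawling a website is to analyze the site itself: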

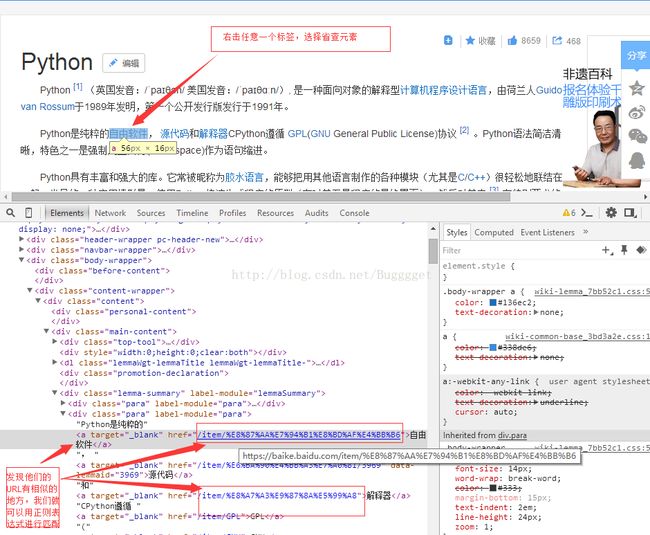

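To crawl every usable link on a page, you need two things:

First, the site's entry URL (the address of the site to crawl)

Second, the URL attributes of the content you want to crawl

Below is an example that crawls Baidu Baike.

1. Search Baidu for Python and open the entry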

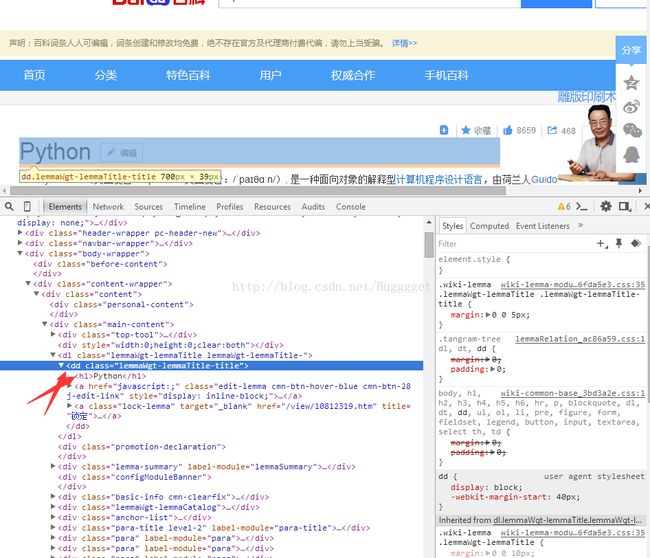

2. Find the attributes of the title

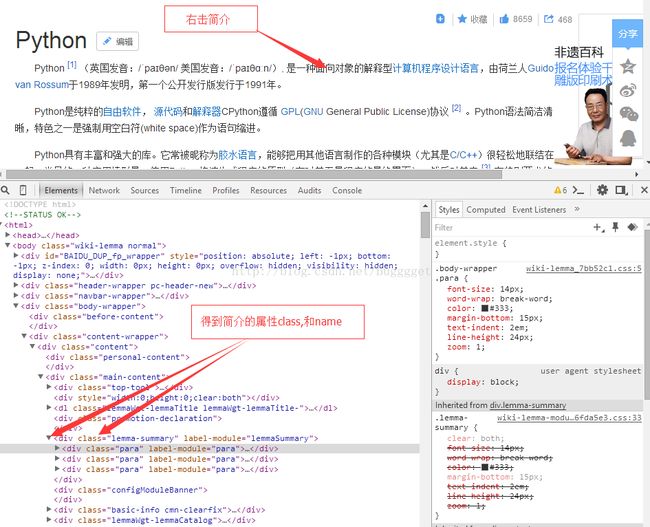

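3. Find the attributes of the summary

4. Find the attributes of any link tag on the page

For example, the links above can be matched with the regular expression: "/item/%(.*)%.."

That completes the analysis of a Baidu Baike entry.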
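As a quick check of the link pattern, here is a minimal sketch using only the standard library. The hrefs and the page URL are hypothetical values made up for illustration, and the code is Python 3 (`urllib.parse`), unlike the Python 2 style used in the crawler modules.

```python
import re
from urllib.parse import urljoin

# Pattern from the analysis above: "/item/" followed by a percent-encoded name.
ITEM_LINK = re.compile(r"/item/%(.*)%..")

# Hypothetical hrefs, made up for illustration.
page_url = "https://baike.baidu.com/item/Python/407313"
hrefs = [
    "/item/%E8%AE%A1%E7%AE%97%E6%9C%BA",  # percent-encoded entry name
    "/item/Python/407313",                # no percent-encoding, so no match
    "/help/about",                        # not an entry link
]

# Keep the matching hrefs and resolve them against the page URL.
entry_links = [urljoin(page_url, h) for h in hrefs if ITEM_LINK.search(h)]
print(entry_links)
```

Note that this pattern only matches hrefs whose entry name is percent-encoded, so a plain ASCII entry such as `/item/Python/407313` is skipped. Whether that is acceptable depends on which entries you want to follow.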

Following the flowchart, the crawler is split into 5 modules.
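Before looking at each module, the overall flow can be sketched as one loop. This is only an illustration in Python 3: `FAKE_PAGES`, `crawl`, and `max_pages` are hypothetical names, and the "download" and "parse" steps are stubbed with an in-memory dictionary instead of real HTTP requests.

```python
# Hypothetical in-memory "site": each URL maps to (title, outgoing links).
FAKE_PAGES = {
    "/item/a": ("Page A", ["/item/b", "/item/c"]),
    "/item/b": ("Page B", ["/item/a"]),
    "/item/c": ("Page C", []),
}


def crawl(root_url, max_pages=10):
    new_urls = {root_url}   # URL manager: URLs waiting to be crawled
    old_urls = set()        # URL manager: URLs already crawled
    collected = []          # outputer: data gathered so far
    while new_urls and len(collected) < max_pages:   # scheduler loop
        url = new_urls.pop()
        old_urls.add(url)
        page = FAKE_PAGES.get(url)                   # downloader (stub)
        if page is None:
            continue
        title, links = page                          # parser (stub)
        for link in links:
            # Only queue URLs that have not been seen before.
            if link not in new_urls and link not in old_urls:
                new_urls.add(link)
        collected.append({"url": url, "title": title})
    return collected


results = crawl("/item/a")
print(sorted(r["title"] for r in results))
```

The two sets play the role of the URL manager: a URL moves from `new_urls` to `old_urls` when it is crawled, which keeps the loop from visiting the same page twice even though Page B links back to Page A.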

1. Scheduler module:

# coding: utf8
import url_manage
import html_download
import html_outputer
import html_parser


class SpiderMain(object):
    def __init__(self):
        self.urls = url_manage.UrlManager()
        self.downloader = html_download.HtmlDownloader()
        self.parser = html_parser.HtmlParser()
        self.outputer = html_outputer.HtmlOutputer()

    def craw(self, root_url):
        count = 1
        # Add the entry URL to the URL manager
        self.urls.add_new_url(root_url)
        # Start the crawl loop
        while self.urls.has_new_url():
            try:
                new_url = self.urls.get_new_url()
                print('craw %d : %s' % (count, new_url))
                # Download the page
                html_cont = self.downloader.download(new_url)
                # Parse the page into new URLs and page data
                new_urls, new_data = self.parser.parse(new_url, html_cont)
                self.urls.add_new_urls(new_urls)
                # Collect the valuable data
                self.outputer.collect_data(new_data)
                # Stop after 10 pages
                if count == 10:
                    break
                count += 1
            except Exception as e:
                # Report the error instead of hiding it, then move on
                print('crawl failed: %s' % e)
        self.outputer.output_html()


if __name__ == "__main__":
    root_url = "https://baike.baidu.com/item/Python/407313?fr=aladdin"
    obj_spider = SpiderMain()
    obj_spider.craw(root_url)

2. URL manager module:

# coding: utf8


class UrlManager(object):
    def __init__(self):
        self.new_urls = set()  # URLs waiting to be crawled
        self.old_urls = set()  # URLs already crawled

    def add_new_url(self, url):
        if url is None:
            return
        # Skip URLs that are already queued or already crawled
        if url not in self.new_urls and url not in self.old_urls:
            self.new_urls.add(url)

    def add_new_urls(self, urls):
        if urls is None or len(urls) == 0:
            return
        for url in urls:
            self.add_new_url(url)

    def get_new_url(self):
        # Take one pending URL and mark it as crawled
        new_url = self.new_urls.pop()
        self.old_urls.add(new_url)
        return new_url

    def has_new_url(self):
        return len(self.new_urls) != 0

3. Downloader module:

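try:
    from urllib2 import urlopen  # Python 2
except ImportError:
    from urllib.request import urlopen  # Python 3


class HtmlDownloader(object):
    def download(self, url):
        if url is None:
            return None
        response = urlopen(url)
        # Treat anything other than HTTP 200 as a failed download
        if response.getcode() != 200:
            return None
        return response.read()

4. Parser module: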

from bs4 import BeautifulSoup
import re
try:
    from urlparse import urljoin  # Python 2
except ImportError:
    from urllib.parse import urljoin  # Python 3


class HtmlParser(object):
    def _get_new_urls(self, page_url, soup):
        new_urls = set()
        # Keep only the links that match the /item/%...% pattern found earlier
        links = soup.find_all('a', href=re.compile(r"/item/%(.*)%.."))
        for link in links:
            new_url = link['href']
            # Resolve the relative href against the current page URL
            new_full_url = urljoin(page_url, new_url)
            new_urls.add(new_full_url)
        return new_urls

    def _get_new_data(self, page_url, soup):
        res_data = {}
        # Record the page URL so the output step can list it
        res_data['url'] = page_url
        # <dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1>
        title_node = soup.find('dd', class_="lemmaWgt-lemmaTitle-title").find('h1')
        res_data['title'] = title_node.get_text()
        # Summary node: the div with class "lemma-summary"
        summary_node = soup.find('div', class_="lemma-summary")
        res_data['summary'] = summary_node.get_text()
        return res_data

    def parse(self, page_url, html_cont):
        if page_url is None or html_cont is None:
            # Return an empty pair so the caller can still unpack the result
            return set(), None
        soup = BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')
        new_urls = self._get_new_urls(page_url, soup)
        new_data = self._get_new_data(page_url, soup)
        return new_urls, new_data
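To try the same idea without installing BeautifulSoup, here is a rough Python 3 sketch built on the standard library's `html.parser` instead. It is a different, much simpler technique than the parser module above: the class name `TitleAndLinks` and the sample markup are hypothetical, and it only picks up the first `<h1>` and every `<a href>` rather than filtering by CSS class.

```python
from html.parser import HTMLParser


class TitleAndLinks(HTMLParser):
    """Collects the text of the first <h1> and every <a href=...> value."""

    def __init__(self):
        super().__init__()
        self.title = None
        self.links = []
        self._in_h1 = False

    def handle_starttag(self, tag, attrs):
        if tag == "h1" and self.title is None:
            # Start capturing text for the first <h1> only
            self._in_h1 = True
            self.title = ""
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False

    def handle_data(self, data):
        if self._in_h1:
            self.title += data


# Hypothetical page fragment, shaped like the markup described earlier.
sample = (
    '<dd class="lemmaWgt-lemmaTitle-title"><h1>Python</h1></dd>'
    '<div class="lemma-summary">A language. '
    '<a href="/item/%E8%AF%AD%E8%A8%80">link</a></div>'
)
page = TitleAndLinks()
page.feed(sample)
print(page.title, page.links)
```

For real pages BeautifulSoup is far more convenient, since selecting by tag and class takes a single call. The sketch is mainly useful for seeing what the parser module has to extract.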

5. Application module (collects the valuable data and writes it out inside HTML tags):

import io


class HtmlOutputer(object):
    def __init__(self):
        self.datas = []

    def collect_data(self, data):
        if data is None:
            return
        self.datas.append(data)

    def output_html(self):
        # io.open writes unicode text as UTF-8 on both Python 2 and Python 3
        with io.open('output.html', 'w', encoding='utf-8') as fout:
            fout.write(u"<html>")
            fout.write(u"<body>")
            fout.write(u"<table>")
            # One table row per crawled page
            for data in self.datas:
                fout.write(u"<tr>")
                fout.write(u"<td>%s</td>" % data.get('url', ''))
                fout.write(u"<td>%s</td>" % data['title'])
                fout.write(u"<td>%s</td>" % data['summary'])
                fout.write(u"</tr>")
            fout.write(u"</table>")
            fout.write(u"</body>")
            fout.write(u"</html>")

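# The final output step gave me trouble: I could not get the data written into the HTML tags. If anyone knows why, please let me know. The crawling itself works correctly.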
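For the output step, one possible way to write the rows is sketched below in Python 3. It may or may not address the problem described above. The function name `write_table`, the temporary file path, and the sample record are all hypothetical, and the sketch assumes each record is a dict with `url`, `title`, and `summary` keys holding text.

```python
import html
import os
import tempfile


def write_table(records, path):
    """Write records (dicts with url/title/summary) as rows of an HTML table."""
    with open(path, "w", encoding="utf-8") as fout:
        fout.write("<html><body><table>\n")
        for rec in records:
            cells = (rec.get("url", ""), rec.get("title", ""), rec.get("summary", ""))
            # Escape each cell so characters like < and & cannot break the markup
            row = "".join("<td>%s</td>" % html.escape(c) for c in cells)
            fout.write("<tr>%s</tr>\n" % row)
        fout.write("</table></body></html>\n")


# Hypothetical record, made up for illustration.
records = [{"url": "https://baike.baidu.com/item/Python",
            "title": "Python", "summary": "1 < 2 & 人生苦短"}]
out_path = os.path.join(tempfile.mkdtemp(), "output.html")
write_table(records, out_path)
with open(out_path, encoding="utf-8") as f:
    content = f.read()
print(content)
```

Two choices here are worth noting: the file is opened with an explicit `encoding="utf-8"`, so text is written directly without calling `.encode()`, and every cell goes through `html.escape`, so scraped text containing `<` or `&` shows up as text instead of being read as markup.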