【网络爬虫实战】使用代理处理反爬虫爬取微信文章

流程框架

- 抓取索引页内容:利用requests请求目标站点,得到索引页网页HTML代码,返回结果

- 代理设置:如果遇到302状态码,则证明IP被封,切换代理重试

- 分析详情页内容:请求详情页,分析得到标题、正文等内容

- 将数据保存到数据库: 将结构化数据保存到MongoDB

步骤

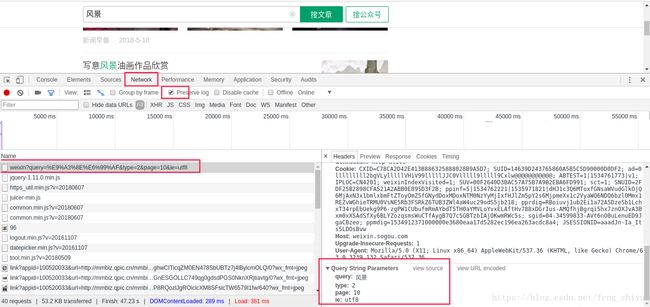

1、分析网页结构,构造网页url

http://weixin.sogou.com/weixin?query=%E9%A3%8E%E6%99%AF&type=2&page=10&ie=utf8

由http://weixin.sogou.com/weixin? + 请求参数构成

2、构造url,获取索引页内容

#获取索引页

def get_index(keyword, page):

data = {

'query': keyword,

'type': 2,

'page': page

}

queries = urlencode(data)

url = base_url + queries

html = get_html(url)

return html3、代理IP的实现

先设置本地IP为默认代理,定义获取代理池IP地址的函数,当爬取出现302错误的时候更改代理,在获取网页html源代码的时候传入代理IP地址,若获取网页源代码失败再次调用 get_html() 方法,再次进行获取尝试。

#使用代理获取html源代码

def get_html(url, count=1):

print('Crawling', url)

print('Trying Count', count)

global proxy #使用global声明全局变量,声明后可在函数内改变proxy的值

if count >= MAX_COUNT: #超过最大尝试次数

print('Tried Too Many Counts')

return None

try:

if proxy:#

proxies = {

'http': 'http://' + proxy

}

# allow_redirects=False 关闭重定向,默认为True

# https://blog.csdn.net/feng_zhiyu/article/details/81941750

response = requests.get(url, allow_redirects=False, headers=headers, proxies=proxies)

else:

response = requests.get(url, allow_redirects=False, headers=headers)

if response.status_code == 200:

return response.text

if response.status_code == 302:

# Need Proxy

print('302') #302状态码表示请求网页临时移动到新位置

proxy = get_proxy() #添加代理

if proxy:#获取代理成功

print('Using Proxy', proxy)

return get_html(url)

else:

print('Get Proxy Failed')

return None

except ConnectionError as e:

print('Error Occurred', e.args)

proxy = get_proxy()

count += 1

return get_html(url, count)

4、使用 pyquery 获取详情页详细微信文章信息

#使用pyquery解析网页内容并以字典形式返回抓取信息

def parse_detail(html):

try:

doc = pq(html)

title = doc('.rich_media_title').text()

content = doc('.rich_media_content').text()

date = doc('#post-date').text()

nickname = doc('#js_profile_qrcode > div > strong').text()

wechat = doc('#js_profile_qrcode > div > p:nth-child(3) > span').text()

return {

'title': title,

'content': content,

'date': date,

'nickname': nickname,

'wechat': wechat

}

except XMLSyntaxError:

return None

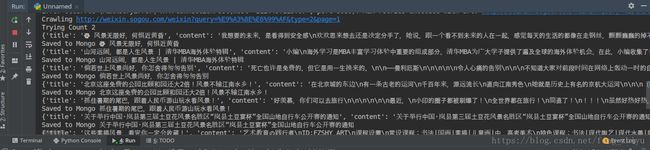

5、存储到MongoDB数据库并去重

#存储到MongoDB

def save_to_mongo(data):

# MongoDB中update()使用'$set'指定一个键的值,如果不存在该值就创建(去重)

#multi:默认是false,只更新找到的第一条记录。如果为true,把按条件查询出来的记录全部更新。

# https://blog.csdn.net/wenwen360360/article/details/78339221

if db['articles'].update({'title': data['title']}, {'$set': data}, True):

print('Saved to Mongo', data['title'])

else:

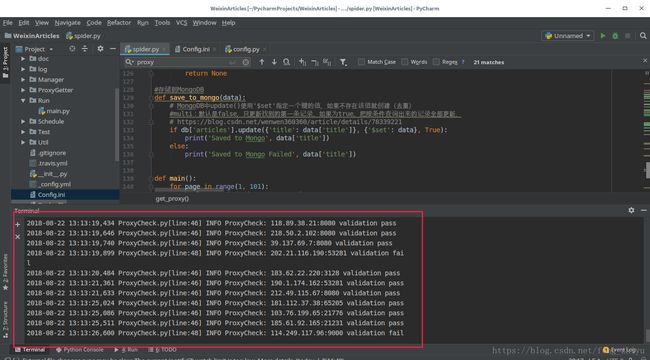

print('Saved to Mongo Failed', data['title'])使用搜狗搜索爬取微信文章时由于官方有反爬虫措施,不更换代理容易被封,所以使用更换代理的方法爬取微信文章,代理池使用的是GitHub上的开源项目,地址如下:https://github.com/jhao104/proxy_pool,代理池配置参考开源项目的配置。

6、运行

这里我将config.ini修改为mongodb,数据库端口改为27017

开启代理池:python3 main.py

当出现验证代理IP成功后,访问http://localhost:5010/get/可以得到一个代理IP。

然后运行 spider.py

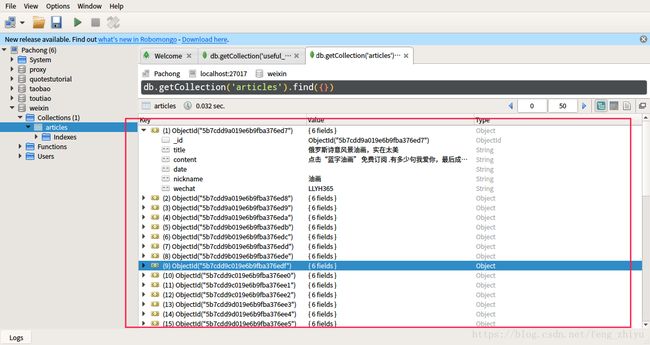

MongoDB数据库:

完整代码:

config.py

# -*- coding: utf-8 -*-

# @Time : 18-8-22 上午11:50

# @Author : yufeng

# @Blog :https://blog.csdn.net/feng_zhiyu

PROXY_POOL_URL = 'http://127.0.0.1:5010/get'

KEYWORD = '风景'

MONGO_URI = 'localhost'

MONGO_DB = 'weixin'

MAX_COUNT = 5spider.py

# -*- coding: utf-8 -*-

# @Time : 18-8-22 上午11:50

# @Author : yufeng

# @Blog :https://blog.csdn.net/feng_zhiyu

from urllib.parse import urlencode

import pymongo

import requests

from lxml.etree import XMLSyntaxError

from requests.exceptions import ConnectionError

from pyquery import PyQuery as pq

from config import *

client = pymongo.MongoClient(MONGO_URI)

db = client[MONGO_DB]

base_url = 'http://weixin.sogou.com/weixin?'

headers = {

'Cookie': 'SUID=F6177C7B3220910A000000058E4D679; SUV=1491392122762346; ABTEST=1|1491392129|v1; SNUID=0DED8681FBFEB69230E6BF3DFB2F8D6B; ld=OZllllllll2Yi2balllllV06C77lllllWTZgdkllll9lllllxv7ll5@@@@@@@@@@; LSTMV=189%2C31; LCLKINT=1805; weixinIndexVisited=1; SUIR=0DED8681FBFEB69230E6BF3DFB2F8D6B; JSESSIONID=aaa-BcHIDk9xYdr4odFSv; PHPSESSID=afohijek3ju93ab6l0eqeph902; sct=21; IPLOC=CN; ppinf=5|1491580643|1492790243|dHJ1c3Q6MToxfGNsaWVudGlkOjQ6MjAxN3x1bmlxbmFtZToyNzolRTUlQjQlOTQlRTUlQkElODYlRTYlODklOER8Y3J0OjEwOjE0OTE1ODA2NDN8cmVmbmljazoyNzolRTUlQjQlOTQlRTUlQkElODYlRTYlODklOER8dXNlcmlkOjQ0Om85dDJsdUJfZWVYOGRqSjRKN0xhNlBta0RJODRAd2VpeGluLnNvaHUuY29tfA; pprdig=j7ojfJRegMrYrl96LmzUhNq-RujAWyuXT_H3xZba8nNtaj7NKA5d0ORq-yoqedkBg4USxLzmbUMnIVsCUjFciRnHDPJ6TyNrurEdWT_LvHsQIKkygfLJH-U2MJvhwtHuW09enCEzcDAA_GdjwX6_-_fqTJuv9w9Gsw4rF9xfGf4; sgid=; ppmdig=1491580643000000d6ae8b0ebe76bbd1844c993d1ff47cea',

'Host': 'weixin.sogou.com',

'Upgrade-Insecure-Requests': '1',

'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/57.0.2987.133 Safari/537.36'

}

#初始化代理为本地IP

proxy = None

#获取代理

def get_proxy():

try:

response = requests.get(PROXY_POOL_URL)

if response.status_code == 200:

return response.text

return None

except ConnectionError: #获取失败

return None

# 删除频繁出错的代理IP

def delete_proxy(proxy):

requests.get("http://127.0.0.1:5010/delete/?proxy={}".format(proxy))

#使用代理获取html源代码

def get_html(url, count=1):

print('Crawling', url)

print('Trying Count', count)

global proxy #使用global声明全局变量,声明后可在函数内改变proxy的值

if count >= MAX_COUNT: #超过最大尝试次数

print('Tried Too Many Counts')

delete_proxy(url)

return None

try:

if proxy:#得到有效代理IP

proxies = {

'http': 'http://' + proxy

}

# allow_redirects=False 关闭重定向,默认为True

# https://blog.csdn.net/feng_zhiyu/article/details/81941750

response = requests.get(url, allow_redirects=False, headers=headers, proxies=proxies)

else:

response = requests.get(url, allow_redirects=False, headers=headers)

if response.status_code == 200:

return response.text

if response.status_code == 302:

# Need Proxy

print('302') #302状态码表示请求网页临时移动到新位置

proxy = get_proxy() #添加代理

if proxy:#获取代理成功

print('Using Proxy', proxy)

return get_html(url)

else:

print('Get Proxy Failed')

return None

except ConnectionError as e:

print('Error Occurred', e.args)

proxy = get_proxy()

count += 1

return get_html(url, count)

#获取索引页

def get_index(keyword, page):

data = {

'query': keyword,

'type': 2,

'page': page

}

queries = urlencode(data)

url = base_url + queries

html = get_html(url)

return html

#使用pyquery解析索引页

def parse_index(html):

doc = pq(html)

items = doc('.news-box .news-list li .txt-box h3 a').items()

for item in items:

yield item.attr('href')

# 获取网页内容

def get_detail(url):

try:

response = requests.get(url)

if response.status_code == 200:

return response.text

return None

except ConnectionError:

return None

#使用pyquery解析网页内容并以字典形式返回抓取信息

def parse_detail(html):

try:

doc = pq(html)

title = doc('.rich_media_title').text()

content = doc('.rich_media_content').text()

date = doc('#post-date').text()

nickname = doc('#js_profile_qrcode > div > strong').text()

wechat = doc('#js_profile_qrcode > div > p:nth-child(3) > span').text()

return {

'title': title,

'content': content,

'date': date,

'nickname': nickname,

'wechat': wechat

}

except XMLSyntaxError:

return None

#存储到MongoDB

def save_to_mongo(data):

# MongoDB中update()使用'$set'指定一个键的值,如果不存在该值就创建(去重)

#multi:默认是false,只更新找到的第一条记录。如果为true,把按条件查询出来的记录全部更新。

# https://blog.csdn.net/wenwen360360/article/details/78339221

if db['articles'].update({'title': data['title']}, {'$set': data}, True):

print('Saved to Mongo', data['title'])

else:

print('Saved to Mongo Failed', data['title'])

def main():

for page in range(1, 101): # 遍历每一页

html = get_index(KEYWORD, page) # 获取索引页

if html:

article_urls = parse_index(html) # 解析索引页html获取文章的article_urls

for article_url in article_urls: #遍历article_urls

article_html = get_detail(article_url)#获取文章的html

if article_html:

article_data = parse_detail(article_html)#解析文章html返回文章信息

print(article_data) #输出文章信息

if article_data:

save_to_mongo(article_data)#存储到数据库

if __name__ == '__main__':

main()

代理池的代码及使用方法见:https://github.com/jhao104/proxy_pool