Transfer Learning with PyTorch 1.0

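- Introduction

- Loading the data

- Visualizing a few images

- Training the model

- Visualizing the model predictions

- Fine-tuning the ConvNet

- Training and evaluation

- ConvNet as a fixed feature extractor

- Training and evaluation, again

- References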

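Introduction

In this article we discuss and practice how to apply transfer learning when training a network. To learn more about transfer learning, see the cs231n notes.

Quoting the cs231n notes:

In practice, very few people train an entire convolutional network from scratch (with random initialization), because it is relatively rare to have a dataset large enough for that. Instead, it is common to pretrain a convolutional network on a very large dataset (for example ImageNet, which contains 1.2 million images in 1000 categories), and then either use the pretrained weights to initialize the network we want to train, or use the pretrained network as a fixed feature extractor.

In short, there are two major transfer learning scenarios:

- Fine-tuning the ConvNet: initialize the network with the weights of a pretrained network instead of random initialization. The rest of the training proceeds as usual.

- ConvNet as a fixed feature extractor: freeze every layer except the final fully connected layer, replace that final layer with a new one suited to our task and initialized with random weights, and train only this layer.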

from __future__ import print_function, division

import torch
import torch.nn as nn
import torch.optim as optim
from torch.optim import lr_scheduler
import numpy as np
import torchvision
from torchvision import datasets, models, transforms
import matplotlib.pyplot as plt
import time
import os
import copy

plt.ion()  # interactive mode

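Loading the data

We will use the torchvision and torch.utils.data packages to load the data.

Our task is to train a model to classify ants and bees. Each class has about 120 training images and 75 validation images. That is usually far too small a dataset to generalize well if we trained from scratch, so we use transfer learning, which should let the network generalize considerably better.

The dataset is a very small subset of ImageNet. Download it and extract it to data/hymenoptera_data, the directory the loading code reads from.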

# Data augmentation and normalization for training
# Just normalization for validation
data_transforms = {
    'train': transforms.Compose([
        transforms.RandomResizedCrop(224),
        transforms.RandomHorizontalFlip(),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
    'val': transforms.Compose([
        transforms.Resize(256),
        transforms.CenterCrop(224),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}

data_dir = 'data/hymenoptera_data'
image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x),
                                          data_transforms[x])
                  for x in ['train', 'val']}
dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                              shuffle=True, num_workers=4)
               for x in ['train', 'val']}
dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
class_names = image_datasets['train'].classes

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
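
ImageFolder takes its class labels from the names of the subdirectories, so the loading code assumes one folder per class under both train and val. The sketch below builds such a layout in a temporary directory and lists the classes in sorted order, which is how ImageFolder assigns label indices. The folder names ants and bees and the helpers make_layout, list_classes and class_to_idx are assumptions for illustration only; check the names against the extracted dataset.

```python
import os
import tempfile


def make_layout(root, splits=("train", "val"), classes=("ants", "bees")):
    """Create root/<split>/<class>/ directories (class names are assumed)."""
    for split in splits:
        for cls in classes:
            os.makedirs(os.path.join(root, split, cls), exist_ok=True)


def list_classes(split_dir):
    """Return subdirectory names in sorted order, one per class."""
    return sorted(e.name for e in os.scandir(split_dir) if e.is_dir())


def class_to_idx(split_dir):
    """Map each class name to its integer label (position in sorted order)."""
    return {name: i for i, name in enumerate(list_classes(split_dir))}


with tempfile.TemporaryDirectory() as root:
    make_layout(root)
    train_classes = list_classes(os.path.join(root, "train"))
    train_mapping = class_to_idx(os.path.join(root, "train"))
    print(train_classes)
    print(train_mapping)
```

If the printed class list differs between train and val, the labels of the two splits would not line up, so it is worth checking both.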

Visualizing a few images

Let's go straight to the code, which displays one batch of training images.

def imshow(inp, title=None):
    """Imshow for Tensor."""
    # Convert from (C, H, W) to (H, W, C) for matplotlib
    inp = inp.numpy().transpose((1, 2, 0))
    # Undo the normalization applied in data_transforms
    mean = np.array([0.485, 0.456, 0.406])
    std = np.array([0.229, 0.224, 0.225])
    inp = std * inp + mean
    inp = np.clip(inp, 0, 1)
    plt.imshow(inp)
    if title is not None:
        plt.title(title)
    plt.pause(0.001)  # pause a bit so that plots are updated


# Get a batch of training data
inputs, classes = next(iter(dataloaders['train']))

# Make a grid from batch
out = torchvision.utils.make_grid(inputs)

imshow(out, title=[class_names[x] for x in classes])
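
The imshow function has to undo the normalization before plotting: Normalize computes (x - mean) / std per channel, and imshow applies std * x + mean followed by a clip to [0, 1]. The following is a minimal pure-Python sketch of that round trip on a single RGB pixel. The helper names normalize and denormalize and the sample pixel are made up for illustration.

```python
# Per-channel statistics used by transforms.Normalize in this article
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]


def normalize(pixel):
    """Apply (x - mean) / std to each channel of one RGB pixel."""
    return [(v - m) / s for v, m, s in zip(pixel, MEAN, STD)]


def denormalize(pixel):
    """Invert the normalization and clip to the displayable range [0, 1]."""
    return [min(max(s * v + m, 0.0), 1.0) for v, m, s in zip(pixel, MEAN, STD)]


pixel = [0.2, 0.5, 0.9]  # hypothetical pixel with values in [0, 1]
restored = denormalize(normalize(pixel))
print(restored)
```

The restored values match the original pixel up to floating-point error. The clip only matters for values that rounding pushes slightly outside [0, 1].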

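Training the model

Now let's write a general function to train a model. It also illustrates:

- Scheduling the learning rate

- Saving the best model

The scheduler parameter is an LR scheduler object from torch.optim.lr_scheduler.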

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):
    since = time.time()

    best_model_wts = copy.deepcopy(model.state_dict())
    best_acc = 0.0

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs - 1))
        print('-' * 10)

        # Each epoch has a training and validation phase
        for phase in ['train', 'val']:
            if phase == 'train':
                # PyTorch 1.0 ordering; from PyTorch 1.1 on, call
                # scheduler.step() after the epoch's optimizer.step() calls
                scheduler.step()
                model.train()  # Set model to training mode
            else:
                model.eval()  # Set model to evaluate mode

            running_loss = 0.0
            running_corrects = 0

            # Iterate over data.
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # zero the parameter gradients
                optimizer.zero_grad()

                # forward
                # track history only in the training phase
                with torch.set_grad_enabled(phase == 'train'):
                    outputs = model(inputs)
                    _, preds = torch.max(outputs, 1)
                    loss = criterion(outputs, labels)

                    # backward + optimize only if in training phase
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()

                # statistics: weight the batch-mean loss by the batch size
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            epoch_loss = running_loss / dataset_sizes[phase]
            epoch_acc = running_corrects.double() / dataset_sizes[phase]

            print('{} Loss: {:.4f} Acc: {:.4f}'.format(
                phase, epoch_loss, epoch_acc))

            # deep copy the model when validation accuracy improves
            if phase == 'val' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())

        print()

    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s'.format(
        time_elapsed // 60, time_elapsed % 60))
    print('Best val Acc: {:4f}'.format(best_acc))

    # load best model weights
    model.load_state_dict(best_model_wts)
    return model
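
The statistics step in train_model multiplies each batch loss by the batch size because nn.CrossEntropyLoss returns the mean over the batch by default. Weighting by batch size and dividing by the dataset size gives the true per-sample average even when the last batch is smaller. Here is a small pure-Python sketch of that bookkeeping. The function epoch_metrics and the batch values are invented for illustration.

```python
def epoch_metrics(batches):
    """Aggregate (mean_loss, num_correct, batch_size) tuples into epoch stats."""
    running_loss = 0.0
    running_corrects = 0
    total = 0
    for mean_loss, num_correct, batch_size in batches:
        running_loss += mean_loss * batch_size  # undo the per-batch mean
        running_corrects += num_correct
        total += batch_size
    return running_loss / total, running_corrects / total


# Two full batches of 4 and a final partial batch of 1 (hypothetical values)
batches = [(0.5, 3, 4), (0.3, 4, 4), (1.0, 0, 1)]
loss, acc = epoch_metrics(batches)

# Averaging the batch means directly over-weights the small last batch
naive_loss = sum(b[0] for b in batches) / len(batches)

print(loss, acc, naive_loss)
```

In this made-up case the weighted loss is 4.2 / 9, while the naive average of batch means is 0.6, so the two disagree whenever batch sizes differ.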

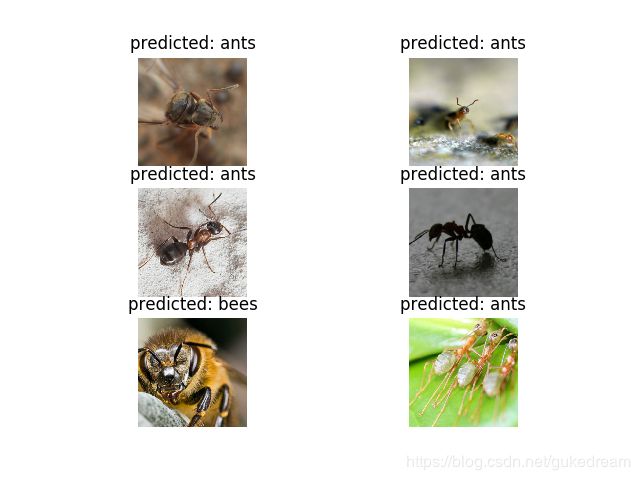

Visualizing the model predictions

Next, a general function to display predictions for a few validation images.

def visualize_model(model, num_images=6):
    was_training = model.training
    model.eval()
    images_so_far = 0
    fig = plt.figure()

    with torch.no_grad():
        for i, (inputs, labels) in enumerate(dataloaders['val']):
            inputs = inputs.to(device)
            labels = labels.to(device)

            outputs = model(inputs)
            _, preds = torch.max(outputs, 1)

            for j in range(inputs.size()[0]):
                images_so_far += 1
                ax = plt.subplot(num_images//2, 2, images_so_far)
                ax.axis('off')
                ax.set_title('predicted: {}'.format(class_names[preds[j]]))
                imshow(inputs.cpu().data[j])

                if images_so_far == num_images:
                    # Restore the mode the model was in before returning
                    model.train(mode=was_training)
                    return
        model.train(mode=was_training)
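
Both train_model and visualize_model turn raw network outputs into class predictions with torch.max(outputs, 1), which returns the maximum value and its index along dimension 1 for each row. A minimal sketch with hand-written scores follows. The score values are invented and the class names ants and bees are assumed here rather than read from the dataset.

```python
import torch

class_names = ['ants', 'bees']  # assumed class order, for illustration

# Hypothetical raw scores for a batch of 3 images and 2 classes
outputs = torch.tensor([[2.0, -1.0],
                        [0.1, 0.3],
                        [-0.5, 1.5]])

# values holds the row-wise maxima; preds holds the index of each maximum
values, preds = torch.max(outputs, 1)

predicted = [class_names[i] for i in preds.tolist()]
print(predicted)
```

Only the indices are needed for classification, which is why the code in this article discards the first return value with an underscore.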

Fine-tuning the ConvNet

Load a pretrained model and reset its final fully connected layer.

model_ft = models.resnet18(pretrained=True)
num_ftrs = model_ft.fc.in_features
# Replace the 1000-class ImageNet head with a 2-class head (ants, bees)
model_ft.fc = nn.Linear(num_ftrs, 2)

model_ft = model_ft.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that all parameters are being optimized
optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)
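
To make "decay by a factor of 0.1 every 7 epochs" concrete, the schedule can be written in closed form as base_lr * gamma ** (epoch // step_size). The sketch below evaluates that formula for the 25 epochs used here. The function step_lr is a hypothetical helper, not part of PyTorch, and the exact epoch at which each drop lands in a real run depends on where scheduler.step() is called.

```python
def step_lr(base_lr, step_size, gamma, epoch):
    """Closed-form step decay: multiply by gamma once per step_size epochs."""
    return base_lr * gamma ** (epoch // step_size)


# Same hyperparameters as the optimizer and scheduler in this article
schedule = [step_lr(0.001, 7, 0.1, epoch) for epoch in range(25)]

for epoch in (0, 6, 7, 14, 21, 24):
    print('epoch {:2d}: lr = {:.0e}'.format(epoch, schedule[epoch]))
```

Under this formula the learning rate is 1e-3 for epochs 0 to 6, 1e-4 for epochs 7 to 13, 1e-5 for epochs 14 to 20, and 1e-6 from epoch 21 on.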

Training and evaluation

Training this model takes about 15-25 minutes on CPU, but only about a minute on GPU.

model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler, num_epochs=25)

Out:

Epoch 0/24

----------

train Loss: 0.6015 Acc: 0.6885

val Loss: 0.2163 Acc: 0.9216

Epoch 1/24

----------

train Loss: 0.3900 Acc: 0.8074

val Loss: 0.2793 Acc: 0.9020

Epoch 2/24

----------

train Loss: 0.5554 Acc: 0.7992

val Loss: 0.3831 Acc: 0.8497

Epoch 3/24

----------

train Loss: 0.6951 Acc: 0.7459

val Loss: 0.3456 Acc: 0.8758

...... (n lines omitted) ......

Epoch 23/24

----------

train Loss: 0.2499 Acc: 0.8852

val Loss: 0.1681 Acc: 0.9412

Epoch 24/24

----------

train Loss: 0.2477 Acc: 0.9139

val Loss: 0.1762 Acc: 0.9412

Training complete in 1m 13s

Best val Acc: 0.941176

visualize_model(model_ft)

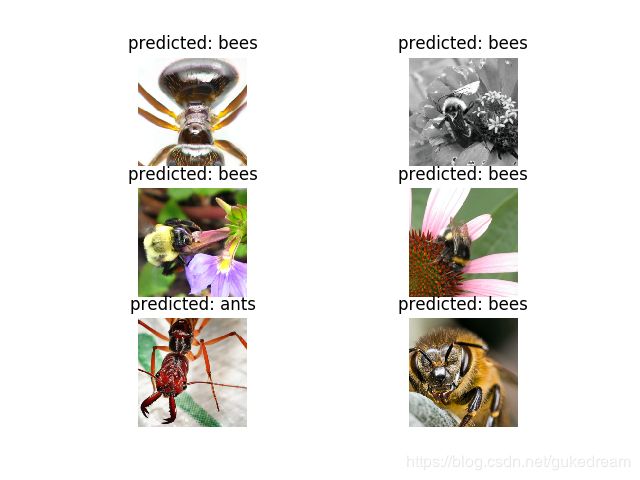

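ConvNet as a fixed feature extractor

The approach above initializes the network with pretrained weights, replaces the final fully connected layer, and then trains the whole network as usual. Next we try a different approach: freeze every layer except the final fully connected layer, so that their weights are not updated during training. To do this we set requires_grad = False on those parameters, so that their gradients are not computed in backward(). See the PyTorch autograd documentation for more on backward().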

model_conv = torchvision.models.resnet18(pretrained=True)
for param in model_conv.parameters():
    param.requires_grad = False

# Parameters of newly constructed modules have requires_grad=True by default
num_ftrs = model_conv.fc.in_features
model_conv.fc = nn.Linear(num_ftrs, 2)

model_conv = model_conv.to(device)

criterion = nn.CrossEntropyLoss()

# Observe that only parameters of final layer are being optimized as
# opposed to before.
optimizer_conv = optim.SGD(model_conv.fc.parameters(), lr=0.001, momentum=0.9)

# Decay LR by a factor of 0.1 every 7 epochs
exp_lr_scheduler = lr_scheduler.StepLR(optimizer_conv, step_size=7, gamma=0.1)
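
A quick way to confirm that the freeze worked is to count parameters by their requires_grad flag. The sketch below does this on a tiny stand-in network, so it runs without downloading pretrained weights. The nn.Sequential model and its layer sizes are assumptions for illustration and are not resnet18. The same two counting lines can be applied to model_conv.

```python
import torch.nn as nn

# Toy stand-in for a pretrained backbone plus a classifier head (made-up sizes)
model = nn.Sequential(
    nn.Linear(8, 16),   # plays the role of the frozen feature layers
    nn.ReLU(),
    nn.Linear(16, 4),   # plays the role of the original head
)

# Freeze everything, as done for model_conv
for param in model.parameters():
    param.requires_grad = False

# A newly constructed layer has requires_grad=True by default
model[2] = nn.Linear(16, 2)

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)
print('trainable: {}, frozen: {}'.format(trainable, frozen))
```

Here only the new head is trainable: 16 * 2 weights plus 2 biases, while the 8 * 16 weights and 16 biases of the first layer stay frozen.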

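Training and evaluation, again

On CPU this takes about half the time of the previous approach, because gradients do not need to be computed or applied for most of the network. The forward pass, however, still has to be computed in full.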

model_conv = train_model(model_conv, criterion, optimizer_conv, exp_lr_scheduler, num_epochs=25)

Out:

Epoch 0/24

----------

train Loss: 0.5936 Acc: 0.7090

val Loss: 0.4174 Acc: 0.7974

Epoch 1/24

----------

train Loss: 0.4939 Acc: 0.7623

val Loss: 0.1822 Acc: 0.9281

Epoch 2/24

----------

train Loss: 0.4470 Acc: 0.7910

val Loss: 0.1936 Acc: 0.9216

...... (N lines omitted) ......

Epoch 24/24

----------

train Loss: 0.3644 Acc: 0.8484

val Loss: 0.1669 Acc: 0.9477

Training complete in 0m 34s

Best val Acc: 0.954248

visualize_model(model_conv)

plt.ioff()

plt.show()

References

PyTorch official website